NFL Schedule

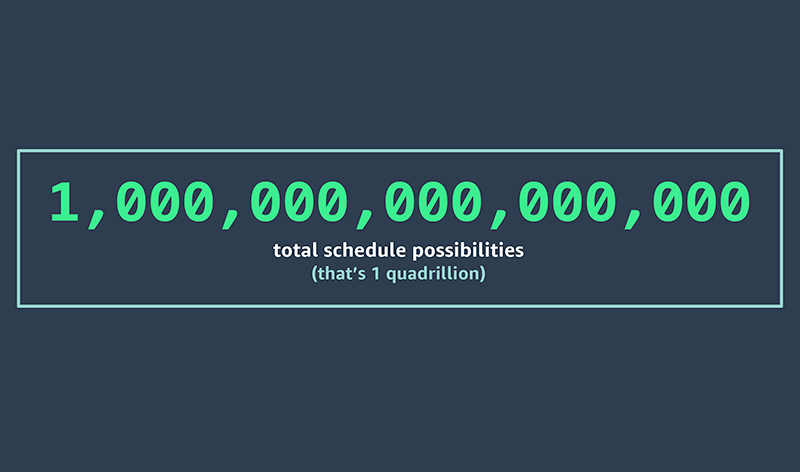

With 1,000,000,000,000,000 (yes, one quadrillion) total schedule possibilities, how does the NFL create a fair and balanced schedule each year?

The NFL just released its season schedule, but how was it built?


2023 NFL Schedule Blogs

A LOOK INSIDE THE MAKING OF AN NFL FOOTBALL SCHEDULE

Predicting now what fans will want to watch in December: what is the NFL doing with AWS, and how does it work?

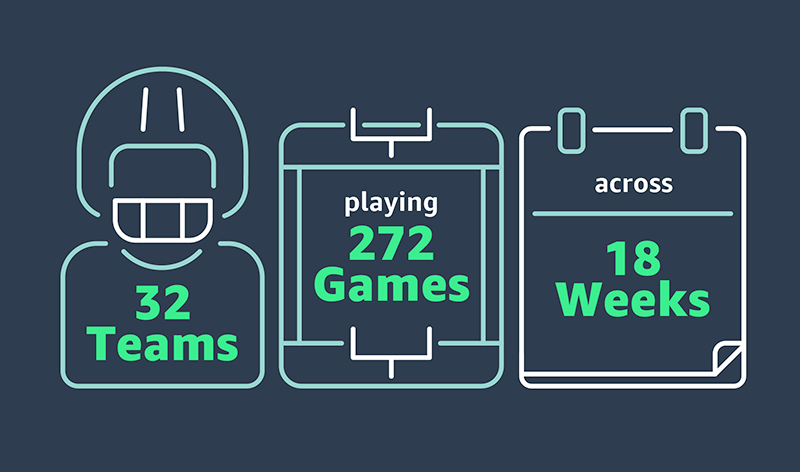

In just three months, NFL schedule makers methodically build an exciting 18-week, 272-game schedule spanning 576 possible game windows. How do they do it? We caught up with the NFL’s director of broadcasting, Charlotte Carey, while she and her team were building this year's schedule, to get an inside look at their detailed process and at how they use AWS to help them find the right one.

THE SCHEDULING SWEET SPOT: A SEASON WITHIN THE SEASON

“When it comes to creating a schedule, there’s definitely a mix of art and science that is involved,” Carey says. Howard Katz, the NFL’s senior vice president of broadcasting and media operations, has led the art side for the past 15 years. “[He’s] built the schedules for many years, trusting his gut and instincts, and it has worked out very well.” And the science side? That’s where Carey and Mike North, VP of NFL broadcasting, sit. “We’re on the backend trying to figure out exactly what everyone is saying and how the computers are reacting to everyone’s gut and instincts.”

The now-automated operation was once done entirely by hand, on a cork board in the main office. That’s where schedule makers like North would hunker down for weeks on end, manually churning through hundreds of potential NFL schedules game by game, sometimes producing only a few finished schedule options per season.

“I wasn’t here during the cork board era, but I was here when we only had 100 computers at our disposal. That was really all we had from a schedule creation standpoint,” Carey recalls. “[Back then,] when we set off to search at night, it was very common that we’d come back in the morning with nothing. Or one schedule. To be honest, having thousands of AWS spot instances available each night has completely changed our process.”

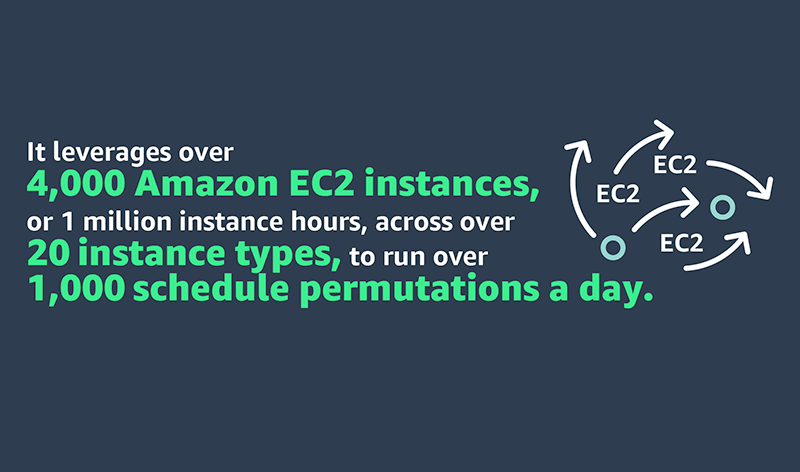

Since then, the complexity of assembling the schedule has grown. Carey’s team uses more data than ever before, its 100 computers have grown to 4,000, and with the help of AWS the team can produce dozens of schedule options each night. “The hardware at this point is really helping the number of seeds that we can run through. We’re able to travel through a lot more of the solution space every night with each run. We’re getting a lot smarter about how we actually look at and analyze [all of] these schedules thanks to what AWS is able to provide us in terms of firepower.”

Today, the team relies on Amazon Elastic Compute Cloud (Amazon EC2) instances to run thousands of schedule scenarios, in the hope of discovering what Carey calls the mythical, magical, perfect schedule. “We have so much more computing power at our disposal and AWS has been great with telling us which boxes we should use, how we should use them and which spot instances we should be looking for. Those different pieces have really helped us from a solve perspective. And it’s gotten us to a much better place where we are seeing a lot of high-quality schedules every day.”

WHERE IT ALL BEGINS

As soon as the Super Bowl ends, the NFL’s small but nimble broadcast planning team heads back into the office to run simulations with a complex algorithm that analyzes millions of potential schedules. “As soon as we finish Week 18 of the regular season, we know the standings-based matchups. The final piece of the puzzle comes with who wins the Super Bowl. That’s where we start,” Carey explains. “For instance, this year, Kansas City won the Super Bowl. So, what are the options for the kickoff game? You could look at division games, some interesting NFC North matchups, or you could look at some pretty epic playoff rematches with Cincinnati or Buffalo. And you could certainly consider a Super Bowl rematch with Philly. There’s so many different options.”

The team begins whittling down the pool by defining key variables and writing rules that must be taken into account, such as travel requirements, primetime games, free agency, division rivalries, stadium blocks, and health and safety concerns, among many others. “To be honest, we have north of 20,000 rules in the system,” Carey admits. “It’s a crazy, crazy management process to say the least.” The rules are entered into the system, and each night thousands of Amazon EC2 instances run different scenarios, communicating with one another as they search through different branches of the search tree for a schedule that satisfies every rule. Without AWS, it is a job that would take thousands of person-hours.
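The nightly search described above can be pictured with a toy example. The sketch below is not the NFL's solver: it assumes a single team, 17 games with no bye week, and only one hard rule (no three straight road games), and the function name `find_home_away_pattern` is hypothetical. It shows how a depth-first search prunes branches that break a rule, and how a random seed changes which branch is explored first, which is one plausible reading of running many seeds in parallel.

```python
import random
from typing import List, Optional


def find_home_away_pattern(games: int, home_games: int, seed: int) -> Optional[List[str]]:
    """Toy depth-first search: build one team's home ('H') / away ('A') sequence
    with exactly `home_games` home dates and never three road games in a row.
    The seed only changes the order in which branches are explored."""
    rng = random.Random(seed)
    away_games = games - home_games

    def extend(seq: List[str]) -> Optional[List[str]]:
        if len(seq) == games:
            return seq  # every prefix was already checked, so the full sequence is valid
        choices = ["H", "A"]
        rng.shuffle(choices)  # seed-dependent branch order
        for choice in choices:
            candidate = seq + [choice]
            if candidate.count("H") > home_games or candidate.count("A") > away_games:
                continue  # prune: exceeds the allowed home or road dates
            if candidate[-3:] == ["A", "A", "A"]:
                continue  # prune: violates the "no three straight road games" rule
            result = extend(candidate)
            if result is not None:
                return result
        return None  # dead end: backtrack

    return extend([])


# Different seeds let independent workers start in different parts of the tree.
for seed in range(3):
    pattern = find_home_away_pattern(games=17, home_games=9, seed=seed)
    print(seed, "".join(pattern) if pattern else "no pattern found")
```

A real scheduler works with all 32 teams, shared game windows, and thousands of rules at once, so this only illustrates the prune-and-backtrack idea.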

The ideal schedule minimizes competitive inequities while maximizing television viewership. Carey admits that finding an option that equally satisfies 32 NFL teams, 7 network partners and millions of die-hard NFL fans is a genuine challenge. “You could ask all coaches what their least favorite thing about any schedule would be and I think you [would] get all different answers. There are some things that everyone hates: nobody likes three straight road games or a road game after a road Monday game,” explains Carey. “We definitely pay a lot of attention to competitive inequity and try to make sure we balance everything out. There may never be such a thing as that mythical, magical, perfect schedule with no team pain or all the networks getting everything they want,” she confesses. “I'm not sure we're ever going to get to perfect but we're going to darn well try to get as close as we can.”
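Rules like the two Carey mentions can be expressed as simple checks over one team's list of games. This is a minimal sketch under assumed conventions: the `Game` record and `rule_violations` function are hypothetical, games are ordered by week, and bye weeks are simply absent from the list, so a road streak continues across a bye. The NFL's actual rule encoding is not described in this post.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Game:
    week: int
    home: bool
    monday_night: bool = False


def rule_violations(games: List[Game]) -> List[str]:
    """Return the names of the (hypothetical) rules one team's schedule breaks."""
    ordered = sorted(games, key=lambda g: g.week)  # bye weeks are absent, so streaks span them
    violations = []

    # Rule 1: no three consecutive road games.
    road_streak = 0
    for game in ordered:
        road_streak = 0 if game.home else road_streak + 1
        if road_streak == 3:
            violations.append("three straight road games")
            break

    # Rule 2: no road game immediately after a road Monday night game.
    for prev, nxt in zip(ordered, ordered[1:]):
        if prev.monday_night and not prev.home and not nxt.home:
            violations.append("road game after a road Monday game")
            break

    return violations


print(rule_violations([Game(1, False, monday_night=True), Game(2, False), Game(3, False)]))
# ['three straight road games', 'road game after a road Monday game']
```

In a full system, checks like these would run for all 32 teams, and soft rules would typically add a penalty score rather than reject a schedule outright.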

PREDICTING THE UNPREDICTABLE

So, how does the NFL account for variables it can’t anticipate, like free agency, trades or unexpected retirements? The short answer is by staying flexible. One example that comes to mind for Carey is 2020, when Tom Brady famously signed with the Tampa Bay Buccaneers. The sudden move had a massive ripple effect on the league’s schedule models.

“In the midst of COVID Tom Brady decided that he was going to become a Buccaneer. [Tampa Bay] is now a team with the G.O.A.T. It’s a completely different calculus. How do you then rethink about different brands and franchises and everything else when these players have such a big impact on one team? That’s where we use data to inform decisions.”

The move greatly changed the landscape of the schedule models, catapulting the Buccaneers into the national window and primetime television territory. With the AWS Cloud, however, Carey and her team accounted for the changes and adapted quickly, freely leveraging baseline models and rewriting team weightings in the rules engine.

Big moves are still being made, such as the official announcement of Aaron Rodgers’ trade to the New York Jets. “I think the Jets are going to be fun this year. It should be an interesting team, and the calculus definitely shifts now that Rodgers is their quarterback.” The move is sure to pique network interest and influence the upcoming schedule.

WHAT’S NEXT: LOOKING TO THE 2023 SEASON

The 2023 season is fast approaching and schedule makers are back in the office running simulations. With technological advancements and more data at their disposal each day, the team plans to further leverage AWS cloud computing and potentially predictive analytics to help them better optimize for things like TV ratings, available television windows, delivery methods and alternative dates and times.

And with the Draft behind them, Carey and her team are now able to make final touches and last-minute schedule updates to an already highly anticipated 2023 football season. “Now that the schedule comes out after Draft, it’s great because we can factor in interesting things that happened during the draft—particularly rookie quarterbacks that may actually start at the beginning of the season. Those are great opportunities to get good games on schedule that are interesting early on.” So, what should we expect to see on our televisions this year? That’s something we’ll just have to wait to find out.

NEW - Best practices to optimize your Amazon EC2 Spot Instances usage

The NFL saves $2 million each season by using Amazon EC2 Spot Instances. Check out best practices for using Spot Instances.

Amazon EC2 Spot Instances are a powerful tool that thousands of customers use to optimize their compute costs. The National Football League (NFL) is one example: it uses 4,000 EC2 Spot Instances across more than 20 instance types to build its season schedule, saving $2 million every season. Virtually any organization – small or big – can benefit from Spot Instances by following best practices.

Overview of Spot Instances

Spot Instances let you take advantage of unused EC2 capacity in the AWS cloud and are available at up to a 90% discount compared to On-Demand prices. Through Spot Instances, you can take advantage of the massive operating scale of AWS and run large workloads at a significant cost saving. In exchange for these discounts, AWS has the option to reclaim Spot Instances when EC2 requires the capacity. AWS provides a two-minute notification before reclaiming Spot Instances, allowing workloads running on those instances to be gracefully shut down.
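On the instance side, the two-minute notice is exposed through the EC2 instance metadata service at `spot/instance-action`, which returns HTTP 404 until an interruption is scheduled. The sketch below assumes IMDSv2 is enabled and uses only the Python standard library. The helper names are our own, and how your application checkpoints or drains work once a notice arrives is left to you.

```python
import json
import urllib.error
import urllib.request
from datetime import datetime, timezone
from typing import Optional

IMDS = "http://169.254.169.254/latest"


def fetch_instance_action(timeout: float = 1.0) -> Optional[str]:
    """Return the raw spot/instance-action document, or None if no interruption
    is scheduled. Uses IMDSv2: fetch a session token first, then the metadata."""
    token_request = urllib.request.Request(
        f"{IMDS}/api/token",
        method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": "60"},
    )
    with urllib.request.urlopen(token_request, timeout=timeout) as resp:
        token = resp.read().decode()
    action_request = urllib.request.Request(
        f"{IMDS}/meta-data/spot/instance-action",
        headers={"X-aws-ec2-metadata-token": token},
    )
    try:
        with urllib.request.urlopen(action_request, timeout=timeout) as resp:
            return resp.read().decode()
    except urllib.error.HTTPError as err:
        if err.code == 404:  # 404 means no stop/terminate action is pending
            return None
        raise


def seconds_until_action(document: str, now: datetime) -> float:
    """Parse {"action": ..., "time": "YYYY-MM-DDTHH:MM:SSZ"} and return seconds left."""
    data = json.loads(document)
    when = datetime.strptime(data["time"], "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)
    return (when - now).total_seconds()
```

A watcher process could call `fetch_instance_action()` every few seconds and, once it returns a document, pass it to `seconds_until_action(doc, datetime.now(timezone.utc))` to decide how much cleanup time remains.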

In this blog post, we explore four best practices that can help you optimize your Spot Instances usage and minimize the impact of Spot Instances interruptions: diversifying your instances, considering attribute-based instance type selection, leveraging Spot placement scores, and using the price-capacity-optimized allocation strategy. By applying these best practices, you’ll be able to use Spot Instances for appropriate workloads and ultimately reduce your compute costs. Note that, for the purposes of this post, we focus on the integration of Spot Instances with Amazon EC2 Auto Scaling groups.

Pre-requisites

Spot Instances can be used for various stateless, fault-tolerant, or flexible applications such as big data, containerized workloads, CI/CD, web servers, high-performance computing (HPC), and AI/ML workloads. However, as previously mentioned, AWS can interrupt Spot Instances with a two-minute notification, so it is best not to use Spot Instances for workloads that cannot handle individual instance interruption — that is, workloads that are inflexible, stateful, fault-intolerant, or tightly coupled.

Best practices

1. Diversify your instances

The fundamental best practice when using Spot Instances is to be flexible. A Spot capacity pool is a set of unused EC2 instances of the same instance type (for example, m6i.large) within the same AWS Region and Availability Zone (for example, us-east-1a). When you request Spot Instances, you are requesting instances from a specific Spot capacity pool. Because Spot Instances are spare EC2 capacity, you want to base your request on as many spare capacity pools as possible to increase your likelihood of getting Spot Instances. You should diversify across instance sizes, generations, instance types, and Availability Zones to maximize your savings with Spot Instances. For example, if you are currently using c5a.large in us-east-1a, consider including c6a instances (a newer generation), c5a.xlarge (a larger size), or us-east-1b (a different Availability Zone) to increase your overall flexibility. Instance diversification benefits not only Spot Instance selection, but also scaling, resilience, and cost optimization.
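To make the pool arithmetic concrete: each instance type in each Availability Zone is its own capacity pool, so the example above (assuming c6a.large as the newer-generation option) grows from one pool to six. The sketch below counts pools and formats instance types as entries for an Auto Scaling group's `MixedInstancesPolicy` overrides list. The helper names are hypothetical, and in a real group the Availability Zones come from the subnets you list in `VPCZoneIdentifier`.

```python
from itertools import product
from typing import Dict, List, Tuple


def spot_pools(instance_types: List[str], zones: List[str]) -> List[Tuple[str, str]]:
    """Every (instance type, Availability Zone) pair is a separate Spot capacity pool."""
    return list(product(instance_types, zones))


def overrides(instance_types: List[str]) -> List[Dict[str, str]]:
    """Instance types in the shape used by an Auto Scaling group's
    MixedInstancesPolicy.LaunchTemplate.Overrides list."""
    return [{"InstanceType": t} for t in instance_types]


narrow = spot_pools(["c5a.large"], ["us-east-1a"])
diverse = spot_pools(["c5a.large", "c6a.large", "c5a.xlarge"], ["us-east-1a", "us-east-1b"])
print(len(narrow), "pool vs", len(diverse), "pools")  # 1 pool vs 6 pools
print(overrides(["c5a.large", "c6a.large", "c5a.xlarge"]))
```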

To get hands-on experience with Spot Instances and to practice instance diversification, check out Amazon EC2 Spot Instances workshops. And once you’ve diversified your instances, you can leverage AWS Fault Injection Simulator (AWS FIS) to test your applications’ resilience to Spot Instance interruptions to ensure that they can maintain target capacity while still benefiting from the cost savings offered by Spot Instances. To learn more about stress testing your applications, check out the Back to Basics: Chaos Engineering with AWS Fault Injection Simulator video and AWS FIS documentation.
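For the resilience-testing step, an AWS FIS experiment template can target Spot Instances with the `aws:ec2:send-spot-instance-interruptions` action. The dictionary below is a sketch of such a template as we understand the FIS schema. The role ARN, tag, and target and action names are placeholders, and the field names should be checked against the AWS FIS documentation before use.

```python
import json

# Placeholders throughout: role ARN, tag key/value, and the target/action names.
experiment_template = {
    "description": "Interrupt one tagged Spot Instance with two minutes' notice",
    "roleArn": "arn:aws:iam::123456789012:role/fis-spot-experiment",
    "stopConditions": [{"source": "none"}],
    "targets": {
        "TaggedSpotInstances": {
            "resourceType": "aws:ec2:spot-instance",
            "resourceTags": {"workload": "schedule-solver"},  # hypothetical tag
            "filters": [{"path": "State.Name", "values": ["running"]}],
            "selectionMode": "COUNT(1)",  # interrupt a single matching instance
        }
    },
    "actions": {
        "InterruptSpot": {
            "actionId": "aws:ec2:send-spot-instance-interruptions",
            "parameters": {"durationBeforeInterruption": "PT2M"},  # ISO 8601 duration
            "targets": {"SpotInstances": "TaggedSpotInstances"},
        }
    },
}

print(json.dumps(experiment_template, indent=2))
# With boto3, these fields could be passed as keyword arguments, e.g.:
#   boto3.client("fis").create_experiment_template(**experiment_template)
```

Running the experiment while watching whether the group restores its target capacity is the part that actually tests resilience; the template only defines what gets interrupted.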

2. Consider attribute-based instance type selection

We have established that flexibility is key to getting the most out of Spot Instances, and that to access your desired Spot capacity you should select multiple instance types. While building and maintaining flexible instance type configurations may seem daunting or time-consuming, it doesn’t have to be if you use attribute-based instance type selection. With attribute-based instance type selection, you specify instance attributes (for example, CPU, memory, and storage), and EC2 Auto Scaling automatically identifies and launches instances that meet those attributes. This removes the manual work of configuring and updating instance types. Moreover, this selection method lets you automatically use newly released instance types as they become available, so you continuously have access to an increasingly broad range of Spot capacity. Attribute-based instance type selection is ideal for instance-agnostic workloads and frameworks, such as HPC and big data workloads, and can reduce the work involved in selecting specific instance types to meet specific requirements.
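As an illustration, an attribute-based override replaces a list of instance types with an `InstanceRequirements` block. This sketch assumes a launch template named `schedule-solver` (hypothetical) and arbitrary vCPU and memory ranges. `VCpuCount` and `MemoryMiB` are the required attributes; `InstanceGenerations` is included only to show that previous-generation types can be allowed too.

```python
from typing import Dict, List, Optional


def instance_requirements(min_vcpus: int, max_vcpus: int, min_memory_mib: int,
                          max_memory_mib: Optional[int] = None,
                          generations: Optional[List[str]] = None) -> Dict:
    """Build an InstanceRequirements block for an Auto Scaling group override.
    VCpuCount.Min and MemoryMiB.Min are required; the rest is optional."""
    memory: Dict[str, int] = {"Min": min_memory_mib}
    if max_memory_mib is not None:
        memory["Max"] = max_memory_mib
    requirements: Dict = {
        "VCpuCount": {"Min": min_vcpus, "Max": max_vcpus},
        "MemoryMiB": memory,
    }
    if generations:
        requirements["InstanceGenerations"] = generations  # "current" and/or "previous"
    return requirements


launch_template_section = {
    "LaunchTemplateSpecification": {
        "LaunchTemplateName": "schedule-solver",  # hypothetical launch template
        "Version": "$Latest",
    },
    # One attribute-based override instead of a hand-maintained list of types.
    "Overrides": [{"InstanceRequirements": instance_requirements(
        2, 8, 4096, 32768, generations=["current", "previous"])}],
}
print(launch_template_section)
```

This section would sit under `MixedInstancesPolicy["LaunchTemplate"]` in a `create_auto_scaling_group` request.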

For more information on how to configure attribute-based instance selection for your EC2 Auto Scaling group, refer to Create an Auto Scaling Group Using Attribute-Based Instance Type Selection documentation. To learn more about attribute-based instance type selection, read the Attribute-Based Instance Type Selection for EC2 Auto Scaling and EC2 Fleet news blog or check out the Using Attribute-Based Instance Type Selection and Mixed Instance Groups section of the Launching Spot Instances workshop.

3. Leverage Spot placement scores

Now that we’ve stressed the importance of flexibility and covered the best way to select instances, let’s look at how to find preferred times and locations to launch Spot Instances. Because Spot Instances are unused EC2 capacity, Spot capacity fluctuates, so you won’t always be able to get exactly the capacity you need at a specific time. Spot placement scores are a feature of Spot Instances that indicate how likely you are to get the Spot capacity you require in a specific Region or Availability Zone. Your Spot placement score can help you reduce Spot Instance interruptions, acquire greater capacity, and identify optimal configurations to run workloads on Spot Instances. However, Spot placement scores serve only as point-in-time recommendations (scores can vary with current capacity) and do not guarantee available capacity or a particular risk of interruption. To learn more about how Spot placement scores work and to get started with them, see the Identifying Optimal Locations for Flexible Workloads With Spot Placement Score blog and Spot placement scores documentation.
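Spot placement scores can be requested programmatically through the EC2 `GetSpotPlacementScores` API (`get_spot_placement_scores` in boto3). The sketch below shows a request with illustrative instance types, capacity, and Regions, plus a hypothetical helper that ranks a response. The sample response is made up purely to show its shape and is not real score data.

```python
from typing import Dict, List

# Request shape for EC2 GetSpotPlacementScores (boto3: ec2.get_spot_placement_scores).
request = {
    "InstanceTypes": ["c5a.large", "c6a.large", "c5a.xlarge"],
    "TargetCapacity": 100,
    "TargetCapacityUnitType": "units",
    "SingleAvailabilityZone": True,  # score individual AZs instead of whole Regions
    "RegionNames": ["us-east-1", "us-west-2", "eu-west-1"],
}


def best_locations(response: Dict, min_score: int = 7) -> List[Dict]:
    """Return scored locations (1 to 10, higher is better) at or above min_score, best first."""
    scores = response.get("SpotPlacementScores", [])
    good = [s for s in scores if s["Score"] >= min_score]
    return sorted(good, key=lambda s: s["Score"], reverse=True)


# Made-up response, only to show the shape; real scores change over time.
sample_response = {
    "SpotPlacementScores": [
        {"Region": "us-east-1", "AvailabilityZoneId": "use1-az1", "Score": 9},
        {"Region": "us-west-2", "AvailabilityZoneId": "usw2-az2", "Score": 6},
        {"Region": "eu-west-1", "AvailabilityZoneId": "euw1-az3", "Score": 8},
    ]
}
for loc in best_locations(sample_response):
    print(loc["Region"], loc["AvailabilityZoneId"], loc["Score"])
```

With credentials configured, `boto3.client("ec2").get_spot_placement_scores(**request)` would return a live response of the same shape. Because scores are point-in-time, re-querying shortly before a large launch is sensible.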

As a near real-time tool, Spot placement scores are often integrated into deployment automation. However, because of their logging and graphing capabilities, you may find them valuable even before you launch a workload in the cloud. If you want to understand historical Spot placement scores for your workload, check out the Spot placement score tracker, a tool that automates the capture of Spot placement scores and stores them as metrics in Amazon CloudWatch. The tracker is available through AWS Labs, a GitHub organization that hosts open-source tools. Learn more about the tracker in the Optimizing Amazon EC2 Spot Instances with Spot Placement Scores blog.

When considering ideal times to launch Spot Instances and exploring options with Spot placement scores, consider running Spot Instances at off-peak hours, when there is less demand for EC2 instances. As you might expect, less unused capacity (and therefore fewer Spot Instances) is available during typical business hours than after them. To use as much Spot capacity as you can, explore running your workload at hours of reduced EC2 demand and thus greater Spot availability. Similarly, consider running your Spot Instances in “off-peak Regions”, that is, Regions that are outside business hours at that time.
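A simple way to act on the off-peak advice is to check which Regions are currently outside local business hours. This is a rough sketch under stated assumptions: the Region-to-offset table is hand-written and approximate, daylight saving time is ignored, and "business hours" is assumed to mean 09:00 to 17:00 on weekdays. It says nothing about actual Spot availability, which placement scores measure more directly.

```python
from datetime import datetime, timedelta, timezone
from typing import List

# Assumed, approximate standard-time UTC offsets (daylight saving time is ignored).
REGION_UTC_OFFSET_HOURS = {
    "us-east-1": -5,       # N. Virginia
    "us-west-2": -8,       # Oregon
    "eu-west-1": 0,        # Ireland
    "ap-northeast-1": 9,   # Tokyo
    "ap-southeast-2": 10,  # Sydney
}


def in_business_hours(region: str, now_utc: datetime, start: int = 9, end: int = 17) -> bool:
    """True if it is a weekday between `start` and `end` o'clock local time in the Region."""
    local = now_utc.astimezone(timezone(timedelta(hours=REGION_UTC_OFFSET_HOURS[region])))
    return local.weekday() < 5 and start <= local.hour < end


def off_peak_regions(now_utc: datetime) -> List[str]:
    """Regions from the table that are currently outside assumed business hours."""
    return [r for r in REGION_UTC_OFFSET_HOURS if not in_business_hours(r, now_utc)]


# Wednesday 15:00 UTC: mid-morning on the US East Coast, night in Tokyo and Sydney.
print(off_peak_regions(datetime(2023, 5, 10, 15, 0, tzinfo=timezone.utc)))
# ['us-west-2', 'ap-northeast-1', 'ap-southeast-2']
```

A list like this could narrow the Regions you score or launch in, with placement scores making the final call.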

On a related note, to maximize your use of Spot Instances, consider previous-generation instances if they meet your workload needs. As with off-peak versus peak hours, there is typically more spare capacity for previous-generation instances than for current-generation instances, because most customers run their compute needs on current-generation instances.

4. Use the price-capacity-optimized allocation strategy

Once you’ve selected a diversified and flexible set of instances, choose your allocation strategy. When launching instances, your Auto Scaling group uses the allocation strategy that you specify to pick specific Spot pools from all your possible pools. Auto Scaling groups offer four Spot allocation strategies: price-capacity-optimized, capacity-optimized, capacity-optimized-prioritized, and lowest-price. Each selects Spot Instances from pools based on price, capacity, a prioritized list of instances, or a combination of these factors.

The price-capacity-optimized strategy launched in November 2022. This strategy makes Spot Instance allocation decisions based on the most capacity at the lowest price. It essentially enables Auto Scaling groups to identify the Spot pools with the highest capacity availability for the number of instances that are launching. In other words, if you select this allocation strategy, we will find the Spot capacity pools that we believe have the lowest chance of interruption in the near term. Your Auto Scaling groups then request Spot Instances from the lowest priced of these pools.
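The difference between the strategies can be illustrated with a deliberately simplified model. AWS does not publish the exact selection logic, so the sketch below is only a toy: the pool prices and capacities are invented, and `price_capacity_optimized` approximates the described behavior by keeping the deepest pools that can absorb the request and then choosing the cheapest of them.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Pool:
    name: str
    price: float   # invented $/hour
    capacity: int  # invented number of spare instances


def lowest_price(pools: List[Pool]) -> Pool:
    return min(pools, key=lambda p: p.price)


def capacity_optimized(pools: List[Pool]) -> Pool:
    return max(pools, key=lambda p: p.capacity)


def price_capacity_optimized(pools: List[Pool], requested: int, keep: int = 2) -> Pool:
    # Toy approximation: keep the `keep` deepest pools that can absorb the whole
    # request, then pick the cheapest of those. Falls back to all pools if none can.
    deep = [p for p in pools if p.capacity >= requested]
    deep = sorted(deep, key=lambda p: p.capacity, reverse=True)[:keep]
    return lowest_price(deep or pools)


pools = [
    Pool("c5a.large/us-east-1a", price=0.030, capacity=5),
    Pool("c6a.large/us-east-1a", price=0.035, capacity=200),
    Pool("c5a.xlarge/us-east-1b", price=0.060, capacity=500),
]
for strategy in (lowest_price, capacity_optimized):
    print(strategy.__name__, "->", strategy(pools).name)
print("price_capacity_optimized ->", price_capacity_optimized(pools, requested=10).name)
```

With these invented numbers, lowest-price picks the cheap but shallow c5a.large pool, capacity-optimized picks the deepest but priciest pool, and the toy price-capacity-optimized lands in between on c6a.large.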

We recommend you leverage the price-capacity-optimized allocation strategy for the majority of your workloads that run on Spot Instances. To see how the price-capacity-optimized allocation strategy selects Spot Instances in comparison with lowest-price and capacity-optimized allocation strategies, read the Introducing the Price-Capacity-Optimized Allocation Strategy for EC2 Spot Instances blog post.
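For an Auto Scaling group, the strategy is set in the `InstancesDistribution` section of a `MixedInstancesPolicy`. The parameters below sketch a `create_auto_scaling_group` request. The group name, launch template, subnet IDs, and sizes are placeholders, and an all-Spot configuration (0% On-Demand above a base of 0) is assumed for illustration.

```python
import json

# Placeholder names and subnet IDs; replace with your own.
create_asg_kwargs = {
    "AutoScalingGroupName": "schedule-solver-spot",
    "MinSize": 0,
    "MaxSize": 100,
    "DesiredCapacity": 10,
    "VPCZoneIdentifier": "subnet-aaaa1111,subnet-bbbb2222",  # one subnet per AZ
    "MixedInstancesPolicy": {
        "LaunchTemplate": {
            "LaunchTemplateSpecification": {
                "LaunchTemplateName": "schedule-solver",
                "Version": "$Latest",
            },
            "Overrides": [
                {"InstanceType": "c5a.large"},
                {"InstanceType": "c6a.large"},
                {"InstanceType": "c5a.xlarge"},
            ],
        },
        "InstancesDistribution": {
            "OnDemandBaseCapacity": 0,
            "OnDemandPercentageAboveBaseCapacity": 0,  # everything above the base runs on Spot
            "SpotAllocationStrategy": "price-capacity-optimized",
        },
    },
}

print(json.dumps(create_asg_kwargs, indent=2))
# boto3.client("autoscaling").create_auto_scaling_group(**create_asg_kwargs)
```

Because no `WeightedCapacity` is set on the overrides, each instance counts as one unit of capacity regardless of its size.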

Clean-up

If you’ve explored the different Spot Instances workshops we recommended throughout this blog post and spun up resources, please remember to delete resources that you are no longer using to avoid incurring future costs.
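If the workshops left Auto Scaling groups running, they can be removed with the Auto Scaling `DeleteAutoScalingGroup` API. The helper below is a hypothetical sketch that takes any client exposing `delete_auto_scaling_group` (for example, a boto3 `autoscaling` client). `ForceDelete=True` deletes each group along with its instances without waiting for them to terminate first. Other resources, such as launch templates, still need to be deleted separately.

```python
from typing import Any, List


def clean_up(autoscaling_client: Any, group_names: List[str]) -> None:
    """Delete each named Auto Scaling group together with its instances."""
    for name in group_names:
        autoscaling_client.delete_auto_scaling_group(
            AutoScalingGroupName=name,
            ForceDelete=True,  # also delete the group's instances
        )


# Usage with boto3 (group name is hypothetical):
#   import boto3
#   clean_up(boto3.client("autoscaling"), ["schedule-solver-spot"])
```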

Conclusion

Spot Instances can be leveraged to reduce costs across a wide variety of use cases, including containers, big data, machine learning, HPC, and CI/CD workloads. In this blog, we discussed four Spot Instances best practices that can help you optimize your Spot Instance usage to maximize savings: diversifying your instances, considering attribute-based instance type selection, leveraging Spot placement scores, and using the price-capacity-optimized allocation strategy.

To learn more about Spot Instances, check out Spot Instances getting started resources. Or to learn of other ways of reducing costs and improving performance, including leveraging other flexible purchase models such as AWS Savings Plans, read the Increase Your Application Performance at Lower Costs eBook or watch the Seven Steps to Lower Costs While Improving Application Performance webinar.

Read this post on the AWS Blog

NFL Schedule Creation Statistics

Behind the Scenes

As soon as the Super Bowl ends, the NFL starts running an algorithm that analyzes over 100,000 potential season schedules until it finds the optimal one, using over 4,000 Amazon EC2 Spot Instances to do so.

2022 Q&A with NFL VP for Broadcasting Planning

Read an exclusive behind-the-scenes look at how AWS helped the NFL sort through a quadrillion possible options to build a winning schedule for the 2022-2023 season
