AWS Solutions Library

Guidance for a Cell-Based Architecture for Amazon EKS

Improve resiliency and reduce your data transfer costs between Availability Zones

Overview

Benefits

Deploy independent application cells using the bulkhead architecture pattern. An issue in one cell stays contained in that cell instead of cascading across your container environment, so the rest of the service remains stable.

Keep communication between container workloads within Availability Zone boundaries. This avoids inter-AZ data transfer charges for chatty microservices while maintaining high availability.

Deploy and manage containerized workloads separately within each Availability Zone. Respond to changing business needs without complex infrastructure coordination.
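To make the cost benefit concrete, here is a back-of-the-envelope Python sketch. It assumes that replicas are spread evenly across N Availability Zones and that, without zone awareness, a caller picks a callee replica uniformly at random, so roughly (N - 1)/N of the calls cross an AZ boundary. The per-GB rate (0.01 USD charged in each direction) and the function names are illustrative assumptions, not values taken from this Guidance; check current Amazon EC2 data transfer pricing for your Region.

```python
def cross_az_fraction(num_azs: int) -> float:
    """Fraction of calls that leave the caller's AZ when the callee replica is
    picked uniformly at random from replicas spread evenly over num_azs AZs."""
    if num_azs < 1:
        raise ValueError("num_azs must be >= 1")
    return (num_azs - 1) / num_azs


def monthly_cross_az_cost(gb_per_month: float, num_azs: int,
                          usd_per_gb_each_way: float = 0.01,
                          zone_local: bool = False) -> float:
    """Estimate monthly inter-AZ charges for service-to-service traffic.

    ASSUMPTION: usd_per_gb_each_way is an illustrative rate, and each GB that
    crosses an AZ boundary is billed once when sent and once when received.
    """
    if zone_local:
        # Cell-style routing: every call stays inside the caller's AZ.
        return 0.0
    crossing_gb = gb_per_month * cross_az_fraction(num_azs)
    return crossing_gb * usd_per_gb_each_way * 2


# 30,000 GB/month of chatty microservice traffic spread over three AZs.
print(round(monthly_cross_az_cost(30_000, 3), 2))          # → 400.0
print(monthly_cross_az_cost(30_000, 3, zone_local=True))   # → 0.0
```

Under these assumptions about 20,000 of the 30,000 GB cross an AZ boundary each month; keeping calls zone-local removes that term entirely, which is the saving the cell design targets.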

How it works

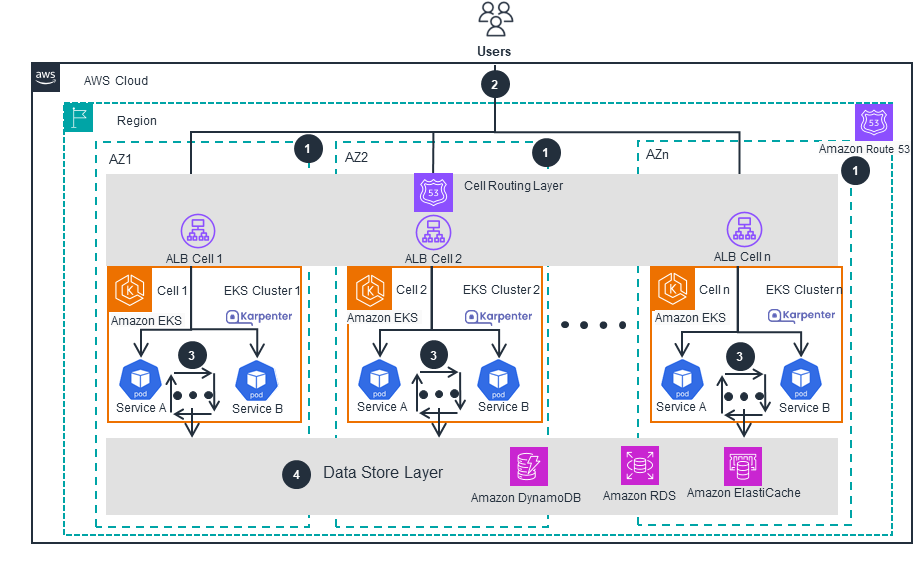

Cell-Based EKS Architecture

This architecture diagram shows how you can use a cell-based architecture to improve resiliency and reduce data transfer costs for Amazon EKS workloads. It shows what a cell consists of and how traffic is routed to the cells. For details about supercells, see the Regional Cell Aggregation diagram.
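As a conceptual aid, the following Python sketch models the two properties a cell provides: a thin routing layer pins each partition key (for example, a customer ID) to exactly one cell, and service-to-service calls resolve only to endpoints inside that cell's Availability Zone. The `Cell` and `CellRouter` classes, the AZ labels, and the endpoint names are all hypothetical; this is not the routing mechanism used by the Guidance's sample code.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Cell:
    """One cell: a self-contained copy of the workload pinned to a single AZ."""
    name: str
    az: str
    healthy: bool = True
    # Service name -> address of that service's replicas inside this cell.
    endpoints: dict = field(default_factory=dict)


class CellRouter:
    """Hypothetical thin routing layer that pins each partition key to one cell."""

    def __init__(self, cells):
        self.cells = list(cells)

    def cell_for(self, partition_key: str) -> Cell:
        # A stable hash (not Python's per-process randomized hash()) keeps a
        # key on the same cell across router restarts and replicas.
        digest = hashlib.sha256(partition_key.encode("utf-8")).digest()
        return self.cells[int.from_bytes(digest[:8], "big") % len(self.cells)]

    def resolve(self, partition_key: str, service: str) -> str:
        cell = self.cell_for(partition_key)
        if not cell.healthy:
            # Bulkhead: only keys pinned to this cell are affected; they are
            # deliberately not spilled onto the other cells.
            raise RuntimeError(f"{cell.name} is unavailable")
        # Service-to-service calls resolve only to endpoints in the same
        # cell, so the traffic never leaves that cell's AZ.
        return cell.endpoints[service]


# Example: one cell per (hypothetical) AZ, each with its own "orders" service.
router = CellRouter(
    Cell(f"cell-{az}", az, endpoints={"orders": f"orders.{az}.internal"})
    for az in ("az-a", "az-b", "az-c")
)
print(router.resolve("customer-42", "orders"))
```

Taking one cell offline makes only the keys pinned to it fail, while every other key keeps resolving inside its own AZ. Plain modulo hashing remaps most keys whenever the number of cells changes, so a real router would more likely keep an explicit key-to-cell mapping table.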

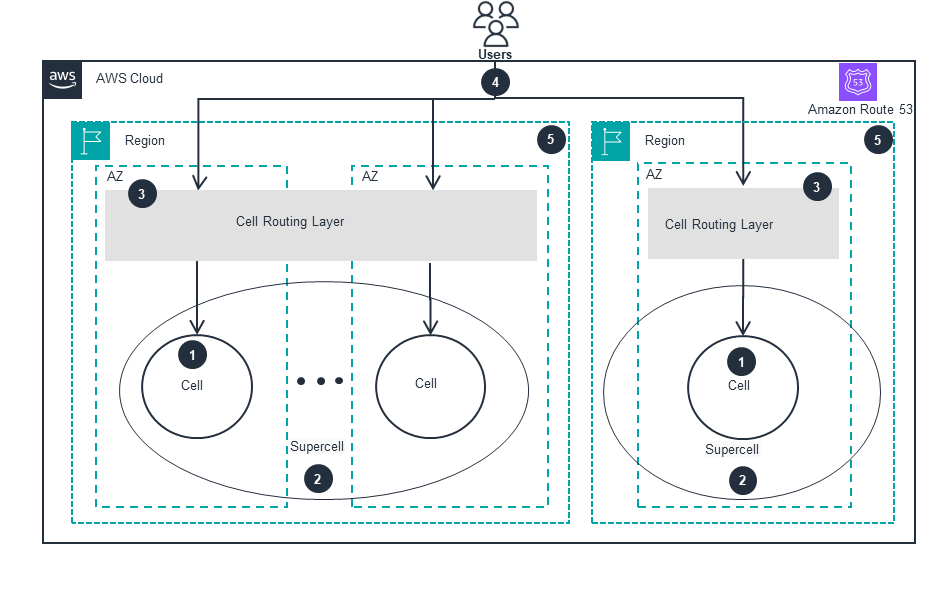

Regional Cell Aggregation

This architecture diagram shows how multiple cells are aggregated to create a supercell and how traffic is routed to those supercells. For details about the individual cells, see the Cell-Based EKS Architecture diagram.
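One way to picture aggregation is as two-level routing: the top tier only chooses a supercell, and each supercell then chooses one of its own member cells. The Python sketch below assumes a hypothetical layout in which every supercell groups one cell per Availability Zone. It also assumes, as a design choice the source does not specify, that a key whose preferred cell is unhealthy falls back to another cell in the same supercell and never to a different supercell. All names are illustrative.

```python
import hashlib


def stable_index(key: str, salt: str, n: int) -> int:
    """Deterministic bucket in [0, n); the salt decorrelates the two routing levels."""
    digest = hashlib.sha256(f"{salt}:{key}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % n


class SupercellRouter:
    """Hypothetical two-level router: partition key -> supercell -> cell.

    The top level only needs the list of supercells; each supercell knows
    its own member cells (here, one cell per AZ).
    """

    def __init__(self, supercells, unhealthy=None):
        self.supercells = {name: list(cells) for name, cells in supercells.items()}
        self.order = sorted(self.supercells)  # fixed order keeps hashing stable
        self.unhealthy = set(unhealthy or ())

    def route(self, key: str):
        supercell = self.order[stable_index(key, "supercell", len(self.order))]
        cells = self.supercells[supercell]
        start = stable_index(key, supercell, len(cells))
        # ASSUMPTION: if the preferred cell is unhealthy, fall back to the next
        # healthy cell of the SAME supercell; traffic never crosses supercells.
        for offset in range(len(cells)):
            cell = cells[(start + offset) % len(cells)]
            if cell not in self.unhealthy:
                return supercell, cell
        raise RuntimeError(f"no healthy cell left in {supercell}")


router = SupercellRouter({
    "supercell-1": ["sc1-az-a", "sc1-az-b", "sc1-az-c"],
    "supercell-2": ["sc2-az-a", "sc2-az-b", "sc2-az-c"],
})
print(router.route("customer-42"))
```

Because the top tier tracks only supercells, adding cells inside a supercell does not change how keys are assigned to supercells, and a failure stays within a single supercell.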

Deploy with confidence

Everything you need to launch this Guidance in your account is right here

We'll walk you through it

Read the implementation guide for additional customization options and service configurations that you can tailor to your specific needs.

Let's make it happen

Ready to deploy? Review the sample code on GitHub for detailed deployment instructions, then deploy it as-is or customize it to fit your needs.

Disclaimer

The sample code; software libraries; command line tools; proofs of concept; templates; or other related technology (including any of the foregoing that are provided by our personnel) is provided to you as AWS Content under the AWS Customer Agreement, or the relevant written agreement between you and AWS (whichever applies). You should not use this AWS Content in your production accounts, or on production or other critical data. You are responsible for testing, securing, and optimizing the AWS Content, such as sample code, as appropriate for production grade use based on your specific quality control practices and standards. Deploying AWS Content may incur AWS charges for creating or using AWS chargeable resources, such as running Amazon EC2 instances or using Amazon S3 storage.

References to third-party services or organizations in this Guidance do not imply an endorsement, sponsorship, or affiliation between Amazon or AWS and the third party. Guidance from AWS is a technical starting point, and you can customize your integration with third-party services when you deploy the architecture.
