Guidance for Improving Workforce Health & Safety on AWS

Overview

This Guidance shows how to use AWS services to address workforce health and safety concerns in high-risk industrial environments. It enables virtual training of new and existing employees on standard operating procedures, reducing onboarding risks and associated hazards. It can also help prevent accidents through real-time monitoring, breach detection, and instant alerting. Computer vision and artificial intelligence models can be configured to ensure adherence to environmental, health, and safety protocols by identifying violations like improper protective gear usage or access to restricted areas. Interactive dashboards and 3D visualizations provide insights into risk patterns, historical trends, and compliance metrics. Additionally, natural language processing capabilities can summarize relevant information and recommend training based on the identified hazards.

How it works

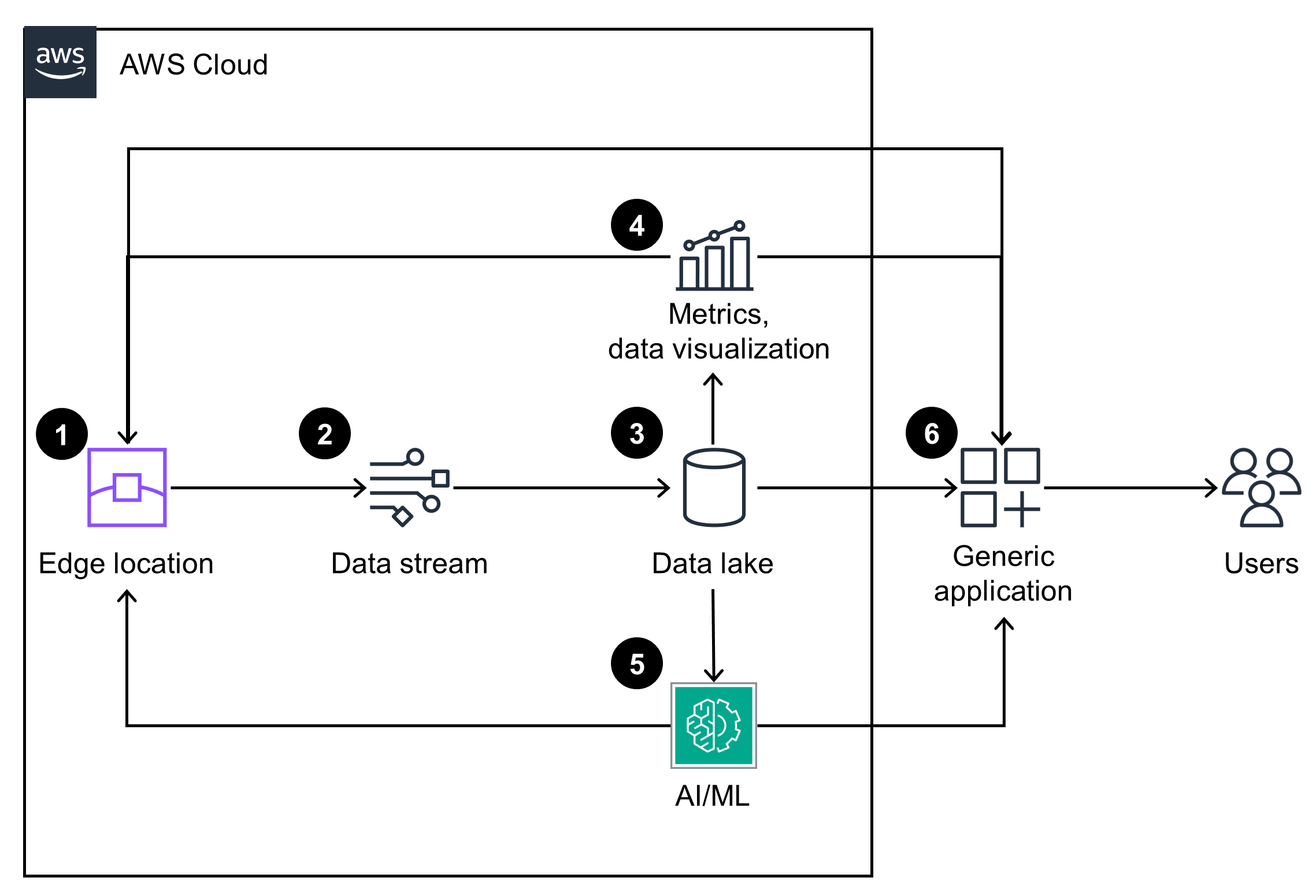

Overview

This architecture consists of three integrated modules that address key stages of workforce safety and compliance. The diagram provides a conceptual overview of each module and its interdependencies.

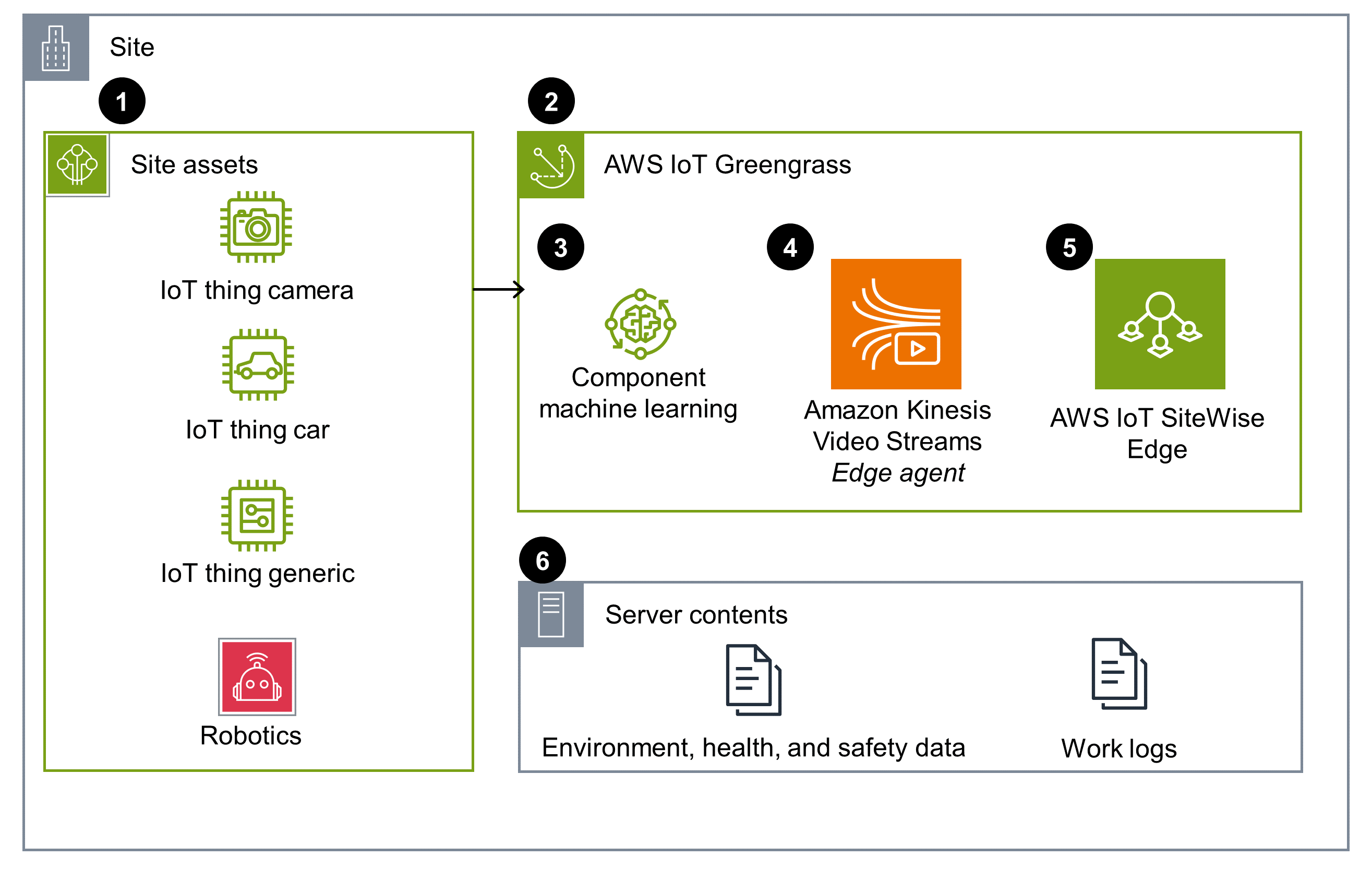

Data ingestion

This architecture diagram displays an edge location component that represents the on-site operational environment. It enables data ingestion from IoT devices, video streams, PLCs, and documents.

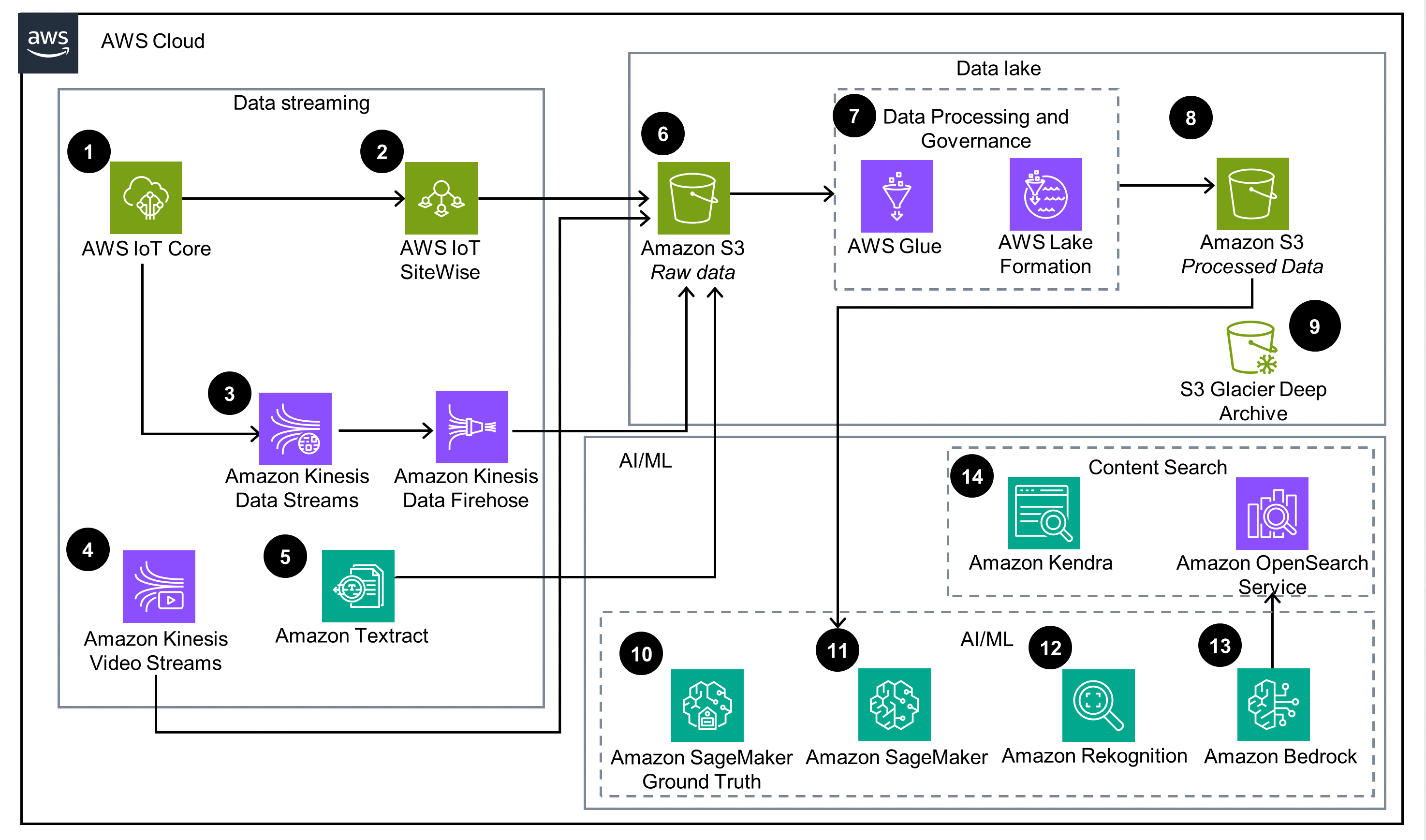

Data streaming & processing

This architecture diagram displays how data from the edge location is processed and ingested into a data lake.

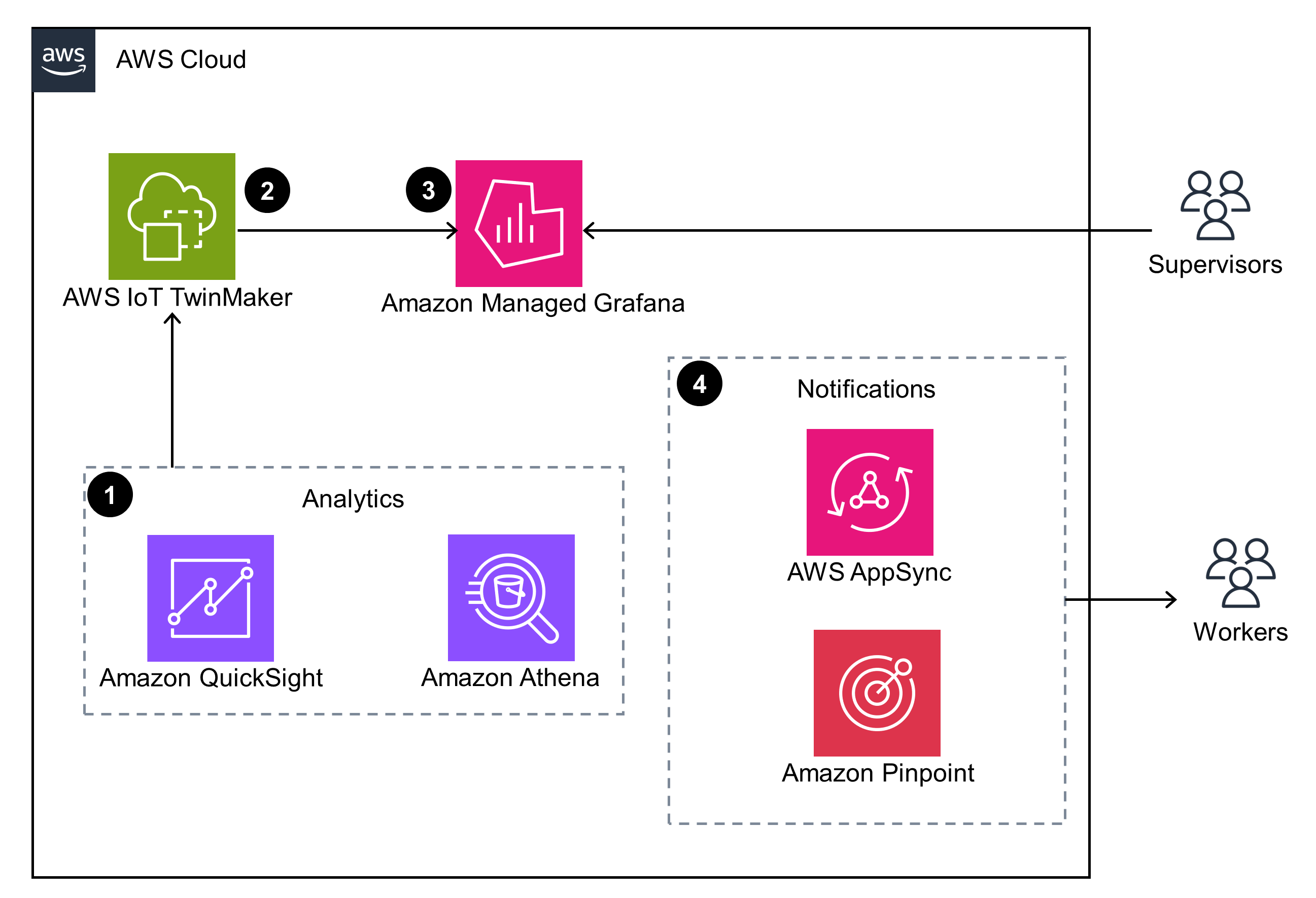

Data visualization & notifications

This architecture diagram shows how data is ingested and used for dashboards, 3D visualizations, and risk mapping.

Well-Architected Pillars

The architecture diagram above is an example of a Solution created with Well-Architected best practices in mind. To be fully Well-Architected, you should follow as many Well-Architected best practices as possible.

AWS IoT Core enables onboarding of devices through just-in-time provisioning, registration, and multi-account support. This service helps you make changes efficiently, forecast their impact before implementation, and maintain operational excellence throughout the workforce health and safety lifecycle.

Devices can be associated with metadata using the device registry and the AWS IoT Device Shadow service.
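As a minimal sketch of the Device Shadow association described above, the following Python builds a shadow update document and computes which desired settings a device has not yet applied. The thing name `gas-sensor-01` and the attribute names are hypothetical, and the delta calculation is a simplified, top-level comparison written for illustration, not the service's own implementation.

```python
import json


def shadow_update_topic(thing_name: str) -> str:
    """Return the reserved MQTT topic for updating a thing's classic (unnamed) shadow."""
    return f"$aws/things/{thing_name}/shadow/update"


def build_reported_update(reported: dict) -> str:
    """Wrap device-reported values in the shadow document's state.reported section."""
    return json.dumps({"state": {"reported": reported}})


def shadow_delta(desired: dict, reported: dict) -> dict:
    """Return the desired keys that are missing from, or differ in, the reported state.

    Simplification: only top-level keys are compared.
    """
    return {
        key: value
        for key, value in desired.items()
        if key not in reported or reported[key] != value
    }


if __name__ == "__main__":
    # Hypothetical thing name and attributes, for illustration only.
    print(shadow_update_topic("gas-sensor-01"))
    print(build_reported_update({"zone": "A", "interval_s": 30}))
    print(shadow_delta({"zone": "A", "interval_s": 10}, {"zone": "A", "interval_s": 30}))
```

A device would publish the JSON document to the update topic over MQTT. The delta helper shows the idea behind reconciling desired and reported state.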

Integration with Amazon CloudWatch allows for monitoring of incoming data streams and alerting on potential issues. By understanding service metrics, you can optimize event workflows and plan for scale.

By using AWS Identity and Access Management (IAM) and AWS IoT Core policies, this Guidance prioritizes data protection, system security, and asset integrity, aligning with best practices and improving your overall security posture. These policies enable granular access control, limiting permissions to only the necessary level. Specifically, AWS IoT Core policies regulate which devices can send data to specific MQTT topics and how they can interact with the cloud. This approach prevents unauthorized access and mitigates security risks such as unintended charges or compromised devices sending malicious commands.
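To illustrate the kind of least-privilege AWS IoT Core policy described above, here is a hedged sketch that builds a policy document in Python. The topic layout (`<prefix>/<thing name>/telemetry`), the account ID, and the function name are assumptions for this example. The `${iot:Connection.Thing.ThingName}` policy variable is used on the assumption that each device connects with a client ID matching its registered thing name.

```python
import json


def build_device_policy(region: str, account_id: str, topic_prefix: str) -> dict:
    """Build an AWS IoT Core policy that lets a device connect and publish
    only to its own telemetry topic (hypothetical topic layout)."""
    thing_var = "${iot:Connection.Thing.ThingName}"
    base = f"arn:aws:iot:{region}:{account_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # The device may only connect using its own thing name as client ID.
                "Effect": "Allow",
                "Action": "iot:Connect",
                "Resource": f"{base}:client/{thing_var}",
            },
            {
                # The device may only publish to its own telemetry topic.
                "Effect": "Allow",
                "Action": "iot:Publish",
                "Resource": f"{base}:topic/{topic_prefix}/{thing_var}/telemetry",
            },
        ],
    }


if __name__ == "__main__":
    # Placeholder Region, account ID, and prefix, for illustration only.
    print(json.dumps(build_device_policy("us-east-1", "123456789012", "site"), indent=2))
```

Because the policy contains no wildcards, a compromised device could publish only to its own topic, which limits both the security and the cost impact.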

We recommend enabling encryption at rest for all data destinations in the cloud, a feature supported by both Amazon S3 and AWS IoT SiteWise, to further safeguard sensitive information.
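As one possible way to apply the encryption-at-rest recommendation to an Amazon S3 destination, the sketch below builds the default-encryption configuration accepted by the S3 `PutBucketEncryption` operation. The helper name and the example key ARN are hypothetical. The boto3 call is shown only in a comment so that the block runs without AWS credentials.

```python
from typing import Optional


def build_default_encryption(kms_key_arn: Optional[str] = None) -> dict:
    """Build an S3 ServerSideEncryptionConfiguration.

    With a KMS key ARN, objects are encrypted with SSE-KMS and S3 Bucket Keys
    are enabled; without one, S3 managed keys (SSE-S3, AES256) are used.
    """
    if kms_key_arn:
        rule = {
            "ApplyServerSideEncryptionByDefault": {
                "SSEAlgorithm": "aws:kms",
                "KMSMasterKeyID": kms_key_arn,
            },
            "BucketKeyEnabled": True,
        }
    else:
        rule = {"ApplyServerSideEncryptionByDefault": {"SSEAlgorithm": "AES256"}}
    return {"Rules": [rule]}


if __name__ == "__main__":
    config = build_default_encryption()
    print(config)
    # Applying it with boto3 (requires credentials; bucket name is a placeholder):
    # boto3.client("s3").put_bucket_encryption(
    #     Bucket="my-safety-data-lake",
    #     ServerSideEncryptionConfiguration=config,
    # )
```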

Through multi-Availability Zone (multi-AZ) deployments, throttling limits, and managed services like Amazon Managed Grafana, this Guidance helps to ensure continuous operation and minimal downtime for critical workloads. Specifically, AWS IoT SiteWise and AWS IoT TwinMaker implement throttling limits for data ingress and egress, ensuring continued operation even during periods of high traffic or load.

Furthermore, the Amazon Managed Grafana console provides a reliable workspace for visualizing and analyzing metrics, logs, and traces without the need for hardware or infrastructure management. It automatically provisions, configures, and manages the workspace while handling automatic version upgrades and auto-scaling to meet dynamic usage demands. This auto-scaling capability is crucial for handling peak usage during site operations or shift changes in industrial environments.

By utilizing the capabilities of AWS IoT SiteWise to manage throttling, as well as the automatic scaling of both AWS IoT SiteWise and Amazon S3, this Guidance can ingest, process, and store data efficiently, even during periods of high data influx. This automatic scaling eliminates the need for manual capacity planning and resource provisioning, enabling optimal performance while minimizing operational overhead.
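When a data producer exceeds the service throttling limits mentioned above, a common client-side response is to retry with exponential backoff and jitter. The following is a minimal sketch of that pattern, not code from this Guidance: `ThrottledError`, `send_with_retry`, and the `send` callable are hypothetical stand-ins for whatever ingestion client and throttling exception your application uses.

```python
import random
import time
from typing import Any, Callable, Iterator


class ThrottledError(Exception):
    """Hypothetical error raised by `send` when the service throttles a request."""


def backoff_delays(max_retries: int, base: float = 0.5, cap: float = 30.0,
                   rng: Callable[[], float] = random.random) -> Iterator[float]:
    """Yield one 'full jitter' delay per retry: rng() * min(cap, base * 2**attempt)."""
    for attempt in range(max_retries):
        yield rng() * min(cap, base * 2 ** attempt)


def send_with_retry(send: Callable[[Any], Any], payload: Any, max_retries: int = 5,
                    sleep: Callable[[float], None] = time.sleep,
                    rng: Callable[[], float] = random.random) -> Any:
    """Call send(payload), backing off and retrying while it raises ThrottledError."""
    for delay in backoff_delays(max_retries, rng=rng):
        try:
            return send(payload)
        except ThrottledError:
            sleep(delay)
    # Final attempt after the last backoff; any error now propagates to the caller.
    return send(payload)
```

Injecting `sleep` and `rng` keeps the function easy to test. In production the defaults apply, and the jitter spreads retries from many devices over time so they do not hit the service in lockstep.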

Cost savings for this Guidance are primarily realized through reduced on-site operational efforts, regulatory compliance costs, and human resource expenses. AWS IoT SiteWise and AWS IoT TwinMaker are cost-optimized, managed services that provide digital twin capabilities at a low price point. Their pay-as-you-go pricing model ensures you are only charged for the data ingested, stored, and queried.

AWS IoT SiteWise also offers optimized storage settings, enabling data to be moved from a hot tier to a cold tier in Amazon S3, further reducing storage costs.
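The hot-to-cold tiering described above is controlled by the AWS IoT SiteWise storage configuration. As a sketch, the helper below assembles the arguments for the `PutStorageConfiguration` operation. The parameter names reflect our understanding of the boto3 `iotsitewise` client and should be checked against the current API reference. The bucket ARN, role ARN, and retention value are placeholders.

```python
def build_storage_configuration(s3_resource_arn: str, role_arn: str,
                                hot_tier_days: int) -> dict:
    """Build keyword arguments for multi-layer (hot + cold) SiteWise storage.

    Data older than `hot_tier_days` is retained only in the cold tier on Amazon S3.
    """
    if hot_tier_days <= 0:
        raise ValueError("hot_tier_days must be a positive number of days")
    return {
        "storageType": "MULTI_LAYER_STORAGE",
        "multiLayerStorage": {
            "customerManagedS3Storage": {
                "s3ResourceArn": s3_resource_arn,
                "roleArn": role_arn,
            }
        },
        "retentionPeriod": {"numberOfDays": hot_tier_days, "unlimited": False},
    }


if __name__ == "__main__":
    kwargs = build_storage_configuration(
        "arn:aws:s3:::my-safety-data-lake/sitewise/",        # placeholder
        "arn:aws:iam::123456789012:role/SiteWiseColdTier",   # placeholder
        hot_tier_days=30,
    )
    print(kwargs)
    # With credentials configured, this could be applied as:
    # boto3.client("iotsitewise").put_storage_configuration(**kwargs)
```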

The services in this Guidance use the elastic and scalable infrastructure of AWS, which scales compute resources up and down based on usage demands. This prevents overprovisioning and minimizes excess compute capacity, reducing unintended carbon emissions. You can monitor your CO2 emissions using the Customer Carbon Footprint Tool. Additionally, the agility provided by technologies like digital twins (AWS IoT TwinMaker), event-based automation, and AI/ML-based insights empowers engineering teams to optimize on-site operations, increasing efficiency and minimizing emissions from industrial processes.

This Guidance also promotes efficient data storage by using the Apache Parquet format in the AWS IoT SiteWise cold tier on Amazon S3. Parquet is an open-source, column-oriented data file format designed for efficient data storage and retrieval, which reduces storage overhead and associated emissions. Furthermore, by using data visualization and generative AI with natural language processing through Amazon Bedrock, this Guidance can identify unknown areas of risk based on collected historical data, allowing you to assess the effectiveness of interventions and further optimize on-site operations for increased efficiency and reduced emissions.

Disclaimer

The sample code; software libraries; command line tools; proofs of concept; templates; or other related technology (including any of the foregoing that are provided by our personnel) is provided to you as AWS Content under the AWS Customer Agreement, or the relevant written agreement between you and AWS (whichever applies). You should not use this AWS Content in your production accounts, or on production or other critical data. You are responsible for testing, securing, and optimizing the AWS Content, such as sample code, as appropriate for production grade use based on your specific quality control practices and standards. Deploying AWS Content may incur AWS charges for creating or using AWS chargeable resources, such as running Amazon EC2 instances or using Amazon S3 storage.