AWS Database Blog

Use default encryption at rest for new Amazon Aurora clusters

In this post, you learn how Amazon Aurora now provides encryption at rest by default for all new database clusters using AWS owned keys. You’ll see how to verify encryption status using the new StorageEncryptionType field, understand the impact on new and existing clusters, and explore migration options for unencrypted databases.

Streamline Amazon RDS management with consolidation techniques – Part 2

This post is the second in a series of two dedicated to Amazon RDS consolidation. In the first post, we discussed the challenges, opportunities, and solution patterns for Amazon RDS consolidation. In this post, we introduce RDS Consolidator, a practical tool designed to optimize Amazon RDS database consolidation.

Streamline Amazon RDS management with consolidation techniques – Part 1

In this first post of a two-part series, we share proven strategies for Amazon RDS database consolidation that can help you reduce operational overhead and optimize resource utilization. In the second post, we will introduce an open source tool that we developed called RDS Consolidator, which helps you visualize your current database landscape and plan consolidation scenarios.

Accelerate your database migration journey with AI

When running database migrations with AWS DMS, you may encounter opportunities to streamline your workflow: interpreting error messages, understanding configuration parameter relationships, and navigating between the console, documentation, and community forums during troubleshooting. What if you could have an AI-powered assistant that understands your migration context, diagnoses issues in real time, and provides actionable guidance, all within your workflow? In this post, we show you how Amazon Q integration with AWS DMS can transform your database migration experience.

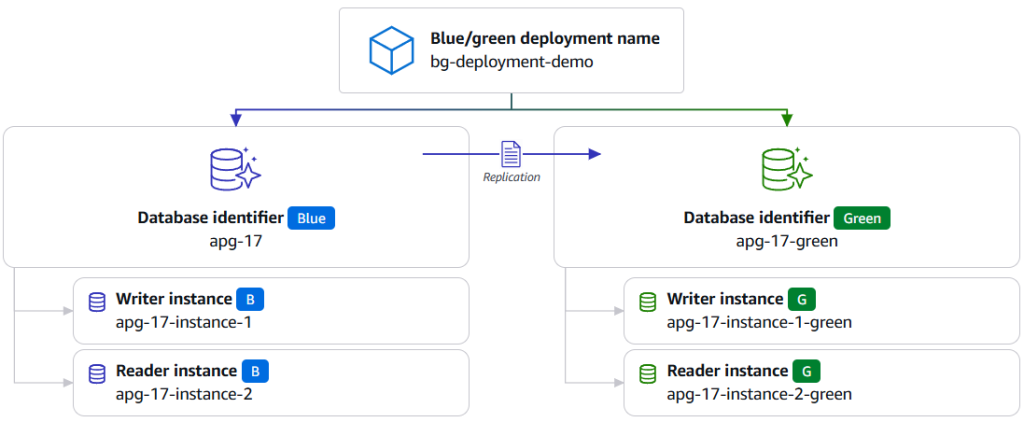

Achieve near-zero downtime database maintenance by using blue/green deployments with AWS JDBC Driver

In this post, we introduce the blue/green deployment plugin for the AWS JDBC Driver, a built-in plugin that automatically handles connection routing, traffic management, and switchover detection during blue/green deployment switchovers. We show you how to configure and use the plugin to minimize downtime during database maintenance operations.

Essential tools for monitoring and optimizing Amazon RDS for SQL Server

In this post, we demonstrate how you can implement a comprehensive monitoring strategy for Amazon RDS for SQL Server by combining AWS native tools with SQL Server diagnostic utilities. We explore AWS services including AWS Trusted Advisor, Amazon CloudWatch Database Insights, Enhanced Monitoring, and Amazon RDS events, alongside native SQL Server tools such as Query Store, Dynamic Management Views (DMVs), and Extended Events. By implementing these monitoring capabilities, you can identify potential bottlenecks before they impact your applications, optimize resource utilization, and maintain consistent database performance as your business scales.

Migrate relational-style data from NoSQL to Amazon Aurora DSQL

In this post, we demonstrate how to efficiently migrate relational-style data from NoSQL to Aurora DSQL, using Kiro CLI as our generative AI tool to optimize schema design and streamline the migration process.

Replication instance sizing for optimal database migrations with AWS DMS

In this post, I show you how to use the new AWS DMS instance estimator tool for initial sizing recommendations, review monitoring strategies to collect accurate benchmark data, and present considerations on how to optimize your database migrations.

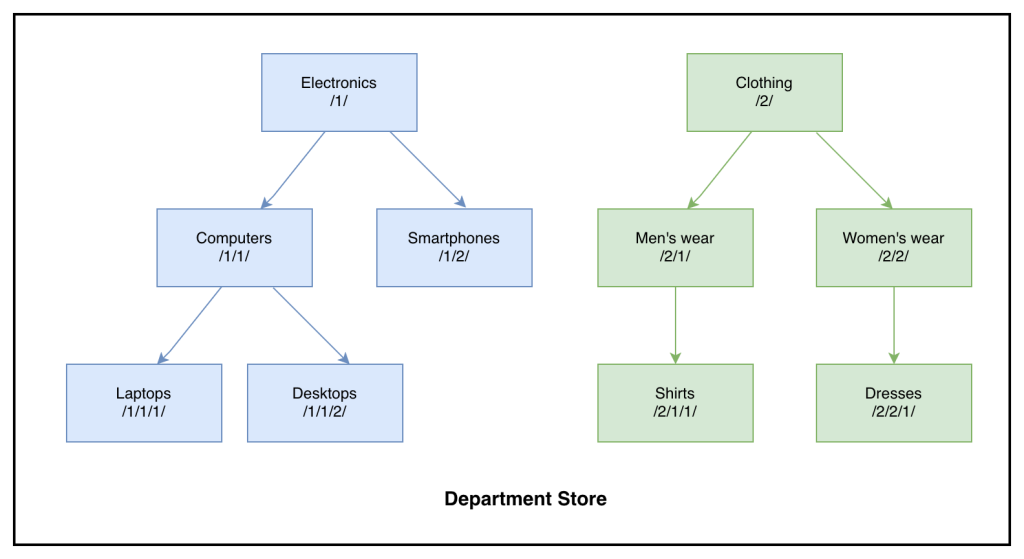

Build a custom solution to migrate SQL Server HierarchyID to PostgreSQL LTREE with AWS DMS

In this post, we discuss configuring AWS DMS tasks to migrate HierarchyID columns from SQL Server to Aurora PostgreSQL-Compatible efficiently.

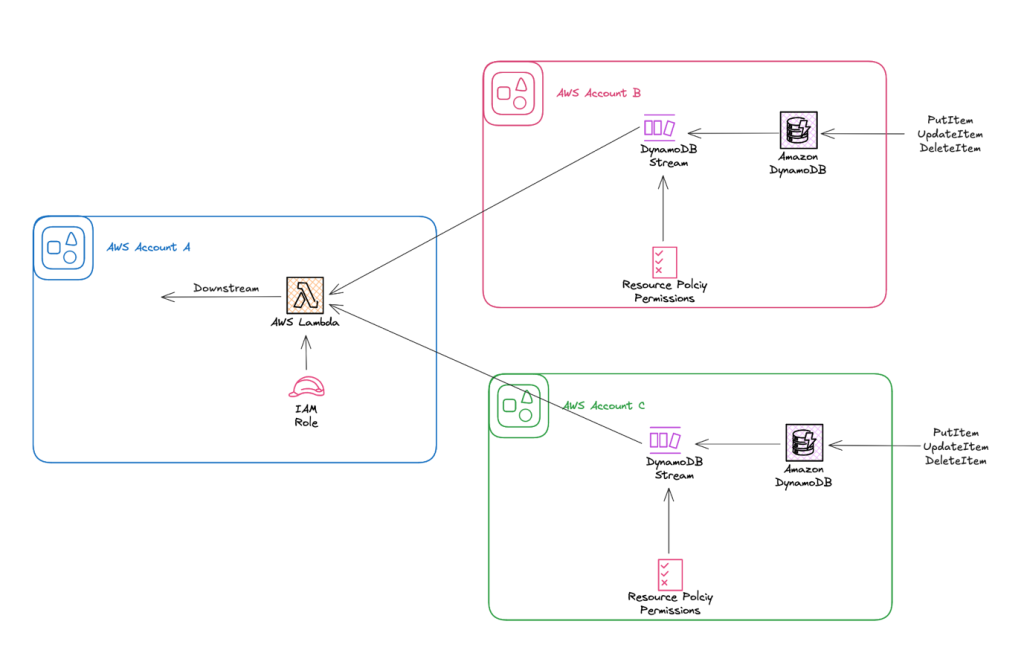

Simplify cross-account stream processing with AWS Lambda and Amazon DynamoDB

In this post, we explore how to use resource-based policies with DynamoDB Streams to enable cross-account Lambda consumption. We focus on a common pattern where application workloads live in isolated accounts, and stream processing happens in a centralized or analytics account.