
Zendesk’s Global Mesh Network: How we lowered operational overhead and cost by migrating to AWS Transit Gateway

This post is presented by our guest Vicente De Luca, Principal Engineer at Zendesk and a contributor to the AWS Community Builders program, who focuses on architecting scalable and reliable networks for Zendesk’s global footprint, and by Tom Adamski, AWS Networking Solutions Architect.

Zendesk is a global CRM company that builds software designed to improve customer relationships. Our customers span 160 countries and territories, and our network architecture needs to match that footprint. Last year I published a blog post describing part of our hybrid journey from data centers to the AWS Cloud, and how we developed an in-house network technology for interconnecting many VPCs across multiple Regions and our on-premises networks. Because of the large number of dynamic VPN tunnels this setup created, the project was aptly named Medusa.

With the data centers decommissioned, Medusa continued as the routing solution to move packets across VPCs, until we learned about the launch of AWS Transit Gateway as a potential Medusa replacement. We had to wait until AWS Transit Gateway supported peering across AWS Regions; once that was available, we could start planning the switchover. In this blog post, I describe how Zendesk migrated from Medusa to an AWS Transit Gateway based architecture.

Medusa Architecture

Before the migration, our architecture relied upon EC2 instance routers with a custom-built Ubuntu AMI. This image included open-source network daemons to build a DMVPN network on top of IKE, IPsec, OpenNHRP, and BIRD for BGP.

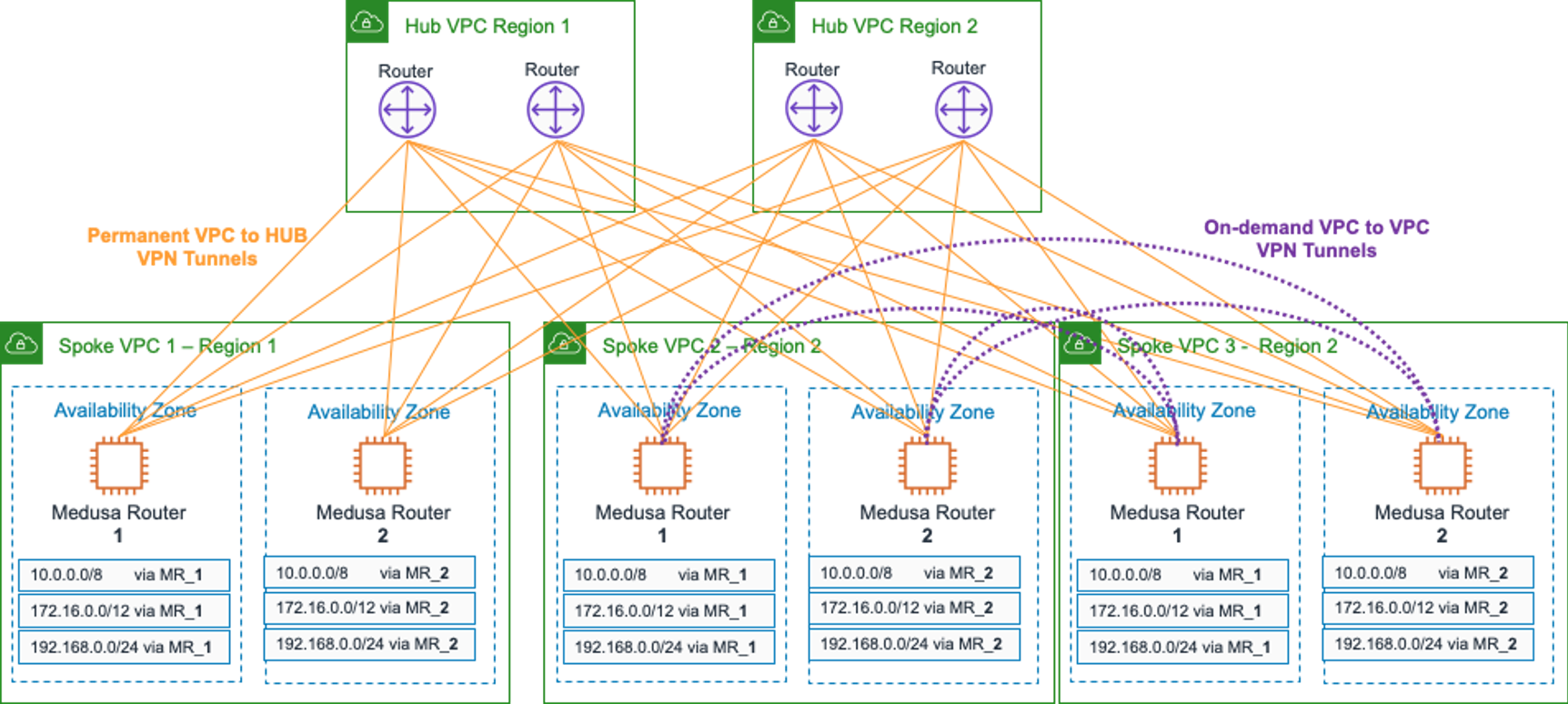

The diagram that follows shows a simplified view of the setup.

Figure 1: Original Medusa network architecture

Each of the spoke VPCs had EC2-based routers deployed in one Auto Scaling group per Availability Zone. To manage the route tables in the VPCs, we wrote medusa-vpc-route-table-injector, a Python daemon for Linux that uses the boto3 AWS SDK.

At runtime, a Medusa router (MR) in the same Availability Zone automatically updated the VPC route tables to forward private traffic (RFC1918 prefixes) to its own ENI. If a particular Medusa router failed, a distributed lock mechanism triggered another available router to repoint the VPC route tables at the ENI of the new healthy Medusa router, providing automatic failover.

The diagram shows IPsec tunnels between Medusa routers and the DMVPN Hubs, as well as on-demand dynamic tunnels between Medusa routers in all Spoke VPCs. This means traffic between Spoke VPCs flows directly, without the latency penalty of being forwarded via the Hubs. To boost performance and take advantage of the multiple tunnels available, we enabled ECMP and BGP Multipath to provide per-flow load balancing.
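To illustrate what per-flow load balancing means in practice, here is a minimal Python sketch. It is not how the Linux kernel or BIRD actually selects a path; the `Flow` type, the `pick_next_hop` function, and the tunnel names are hypothetical and exist only for this example. The idea it shows is that hashing the flow's 5-tuple keeps every packet of one flow on the same tunnel, while different flows spread across the available tunnels.

```python
import hashlib
from typing import NamedTuple, Sequence


class Flow(NamedTuple):
    """A flow identified by its 5-tuple (hypothetical type for this sketch)."""
    src_ip: str
    dst_ip: str
    protocol: int
    src_port: int
    dst_port: int


def pick_next_hop(flow: Flow, next_hops: Sequence[str]) -> str:
    """Hash the 5-tuple so all packets of one flow use the same next hop."""
    if not next_hops:
        raise ValueError("no next hops available")
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(next_hops)
    return next_hops[index]


if __name__ == "__main__":
    tunnels = ["tunnel-a", "tunnel-b", "tunnel-c"]  # placeholder names
    flow = Flow("10.1.0.5", "10.2.0.9", 6, 44321, 443)
    # The same flow always maps to the same tunnel.
    print(pick_next_hop(flow, tunnels) == pick_next_hop(flow, tunnels))
```

Keeping a flow pinned to one path avoids packet reordering inside a TCP connection, which is why load balancing is usually done per flow rather than per packet.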

This architecture allowed for flexible connectivity between VPCs, as well as our on-premises networks for about three years. It could scale up or out to a certain point, self-heal, and the deployment of new infrastructure was effortless compared to previous days, as it was heavily automated.

That said, there were some drawbacks. Managing all the EC2 Medusa Routers turned out to be a bit more daunting than expected. We had to stay on top of software vulnerabilities and rotate the fleet of immutable routers frequently. It also required us to set up different monitoring profiles depending on the EC2 instance type used as a router, as each has unique network capacity, limits, and quirks.

Migration

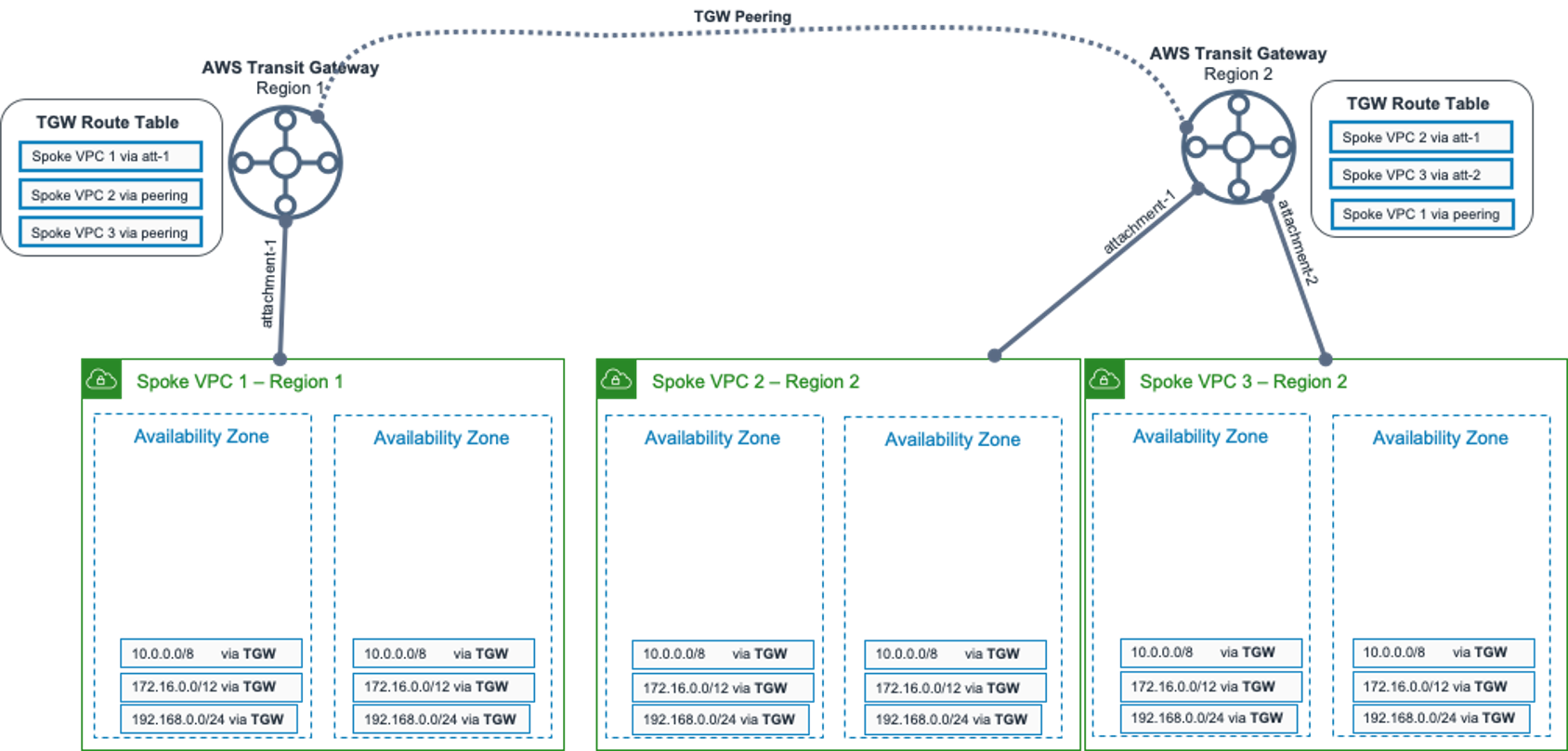

When AWS Transit Gateway launched at AWS re:Invent in 2018, we knew it would be the natural next step in our network evolution. It allows the creation of regional hub-and-spoke topologies and, more recently, peering them across AWS Regions. It also supports IPv4/IPv6 dual-stack and multicast.

Our target architecture would have a transit gateway in each AWS Region connecting all the local region VPCs. The transit gateways would peer across AWS Regions, replicating the full-mesh topology we achieved with Medusa.

We knew where we were going, but we needed to figure out a few things first. The most important were:

- Migration from Medusa to transit gateway with zero downtime

- Automatic VPC route tables injection of transit gateway aggregated routes

- Automatic transit gateway route propagation across peered AWS Regions

After drafting an initial plan and exhausting test iterations in our staging environment, we came up with a functional approach to migrate from Medusa to AWS Transit Gateway, one VPC at a time, and with zero downtime.

Step 1 – Build out the transit gateway connectivity and update Medusa routing policy

In this step, we created one transit gateway for each of the 8 AWS Regions our platform spanned. We further connected each VPC to its regional transit gateway and propagated all VPC routes into the default transit gateway route-table.

We then modified our medusa-vpc-route-table-injector code to inject more specific prefixes (full routes) into the VPC route tables, instead of the previous aggregated RFC1918 routes. This step prepared us for the future cutover by ensuring that traffic would keep preferring the Medusa routers (MR) even while the transit gateway architecture coexisted with them.

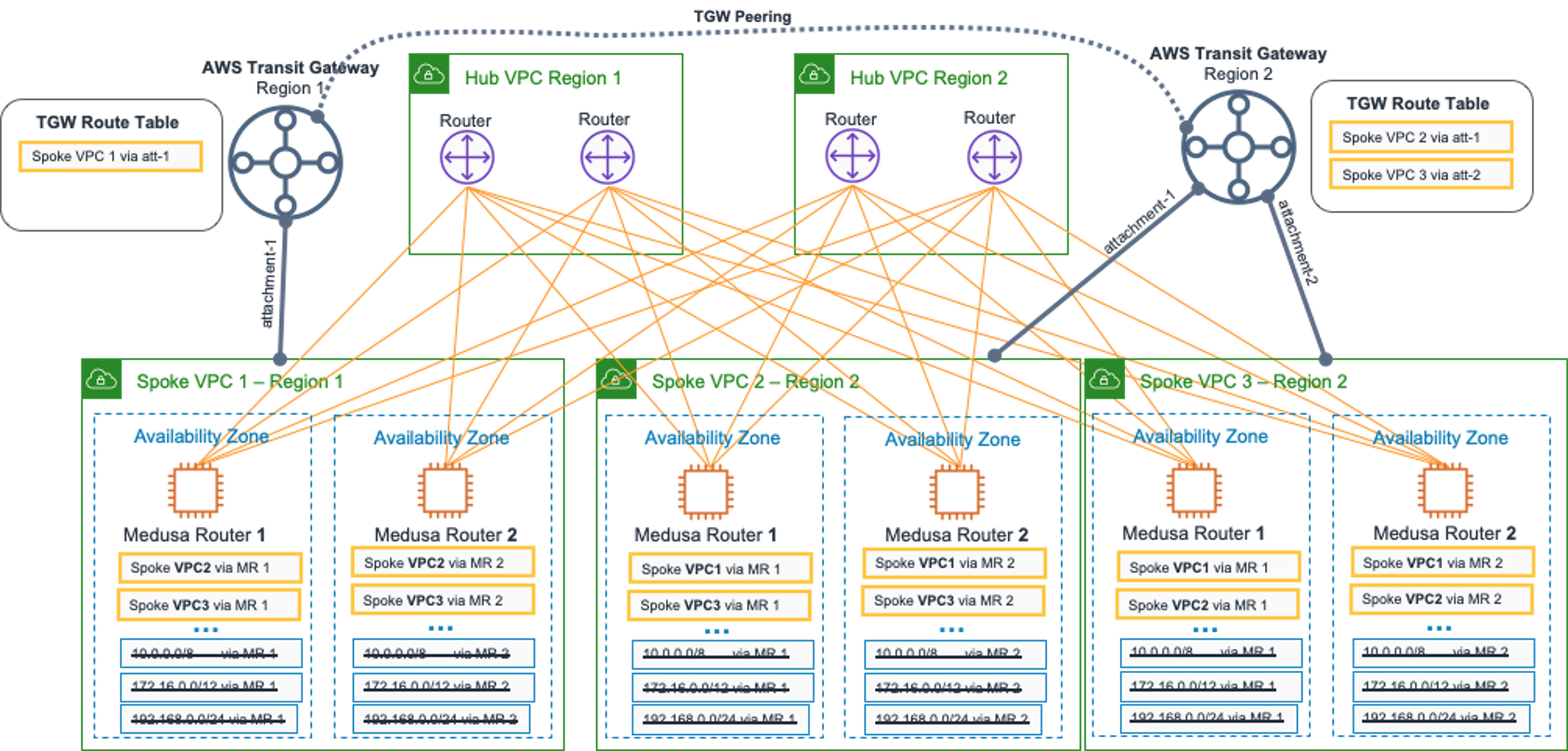

The following diagram shows the network after completing step 1. To simplify the drawings, we focus on two AWS Regions.

Figure 2: Network topology after completing step 1

At this point, we had built the transit gateway network “plumbing” for all our VPCs in 8 AWS Regions, but traffic was still using the Medusa network for communication across VPCs.

Step 2 – VPC Route tables injection

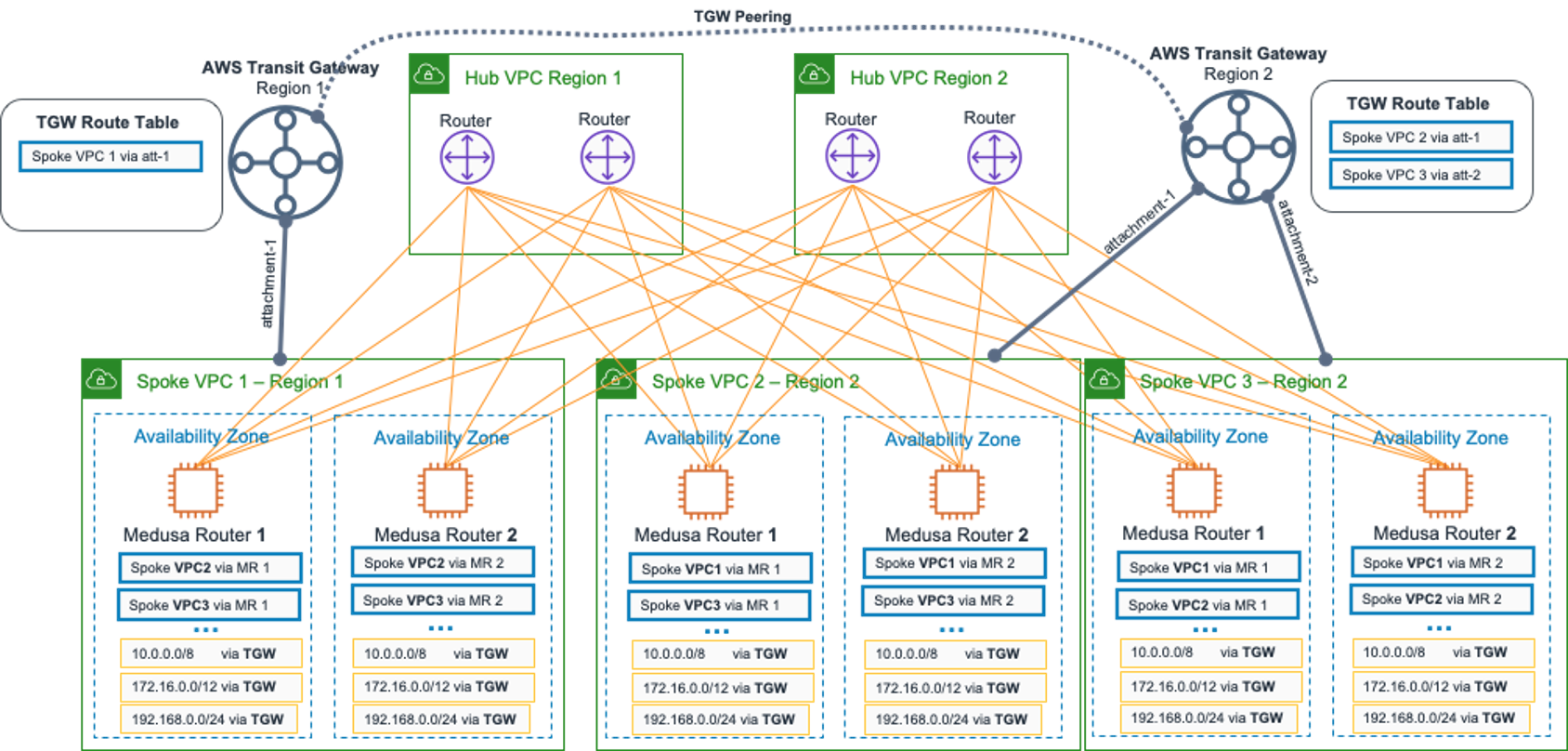

As with Medusa, using transit gateways required us to create static routes in each VPC route table that send the private RFC1918 prefixes to the regional transit gateway ID. This is where vpc-route-tables-injector comes in: a Lambda function that automates the creation of these route entries.

Figure 3: Network topology after completing step 2
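The route injection described in step 2 could look roughly like the following Python sketch. This is not the actual Lambda function. The function names `missing_routes` and `inject_routes` are made up for this example, and `ec2` is assumed to be a boto3 EC2 client passed in by the caller. The sketch ignores pagination and does not handle a prefix that already exists with a different target, in which case `create_route` would fail.

```python
from typing import Any, Dict, List

# The three private address ranges defined by RFC 1918.
RFC1918_PREFIXES = ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")


def missing_routes(route_table: Dict[str, Any], tgw_id: str) -> List[str]:
    """Return the RFC1918 prefixes not yet routed to tgw_id in one route table.

    route_table is one entry of the "RouteTables" list returned by
    describe_route_tables.
    """
    present = {
        route.get("DestinationCidrBlock")
        for route in route_table.get("Routes", [])
        if route.get("TransitGatewayId") == tgw_id
    }
    return [prefix for prefix in RFC1918_PREFIXES if prefix not in present]


def inject_routes(ec2: Any, vpc_id: str, tgw_id: str) -> int:
    """Create the missing aggregate routes in every route table of a VPC.

    Assumption: ec2 is a boto3 EC2 client, for example boto3.client("ec2").
    Returns the number of routes created.
    """
    created = 0
    response = ec2.describe_route_tables(
        Filters=[{"Name": "vpc-id", "Values": [vpc_id]}]
    )
    for table in response["RouteTables"]:
        for prefix in missing_routes(table, tgw_id):
            ec2.create_route(
                RouteTableId=table["RouteTableId"],
                DestinationCidrBlock=prefix,
                TransitGatewayId=tgw_id,
            )
            created += 1
    return created
```

Separating the planning step (`missing_routes`) from the API calls makes the function idempotent: running it again on a VPC that already has the routes creates nothing.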

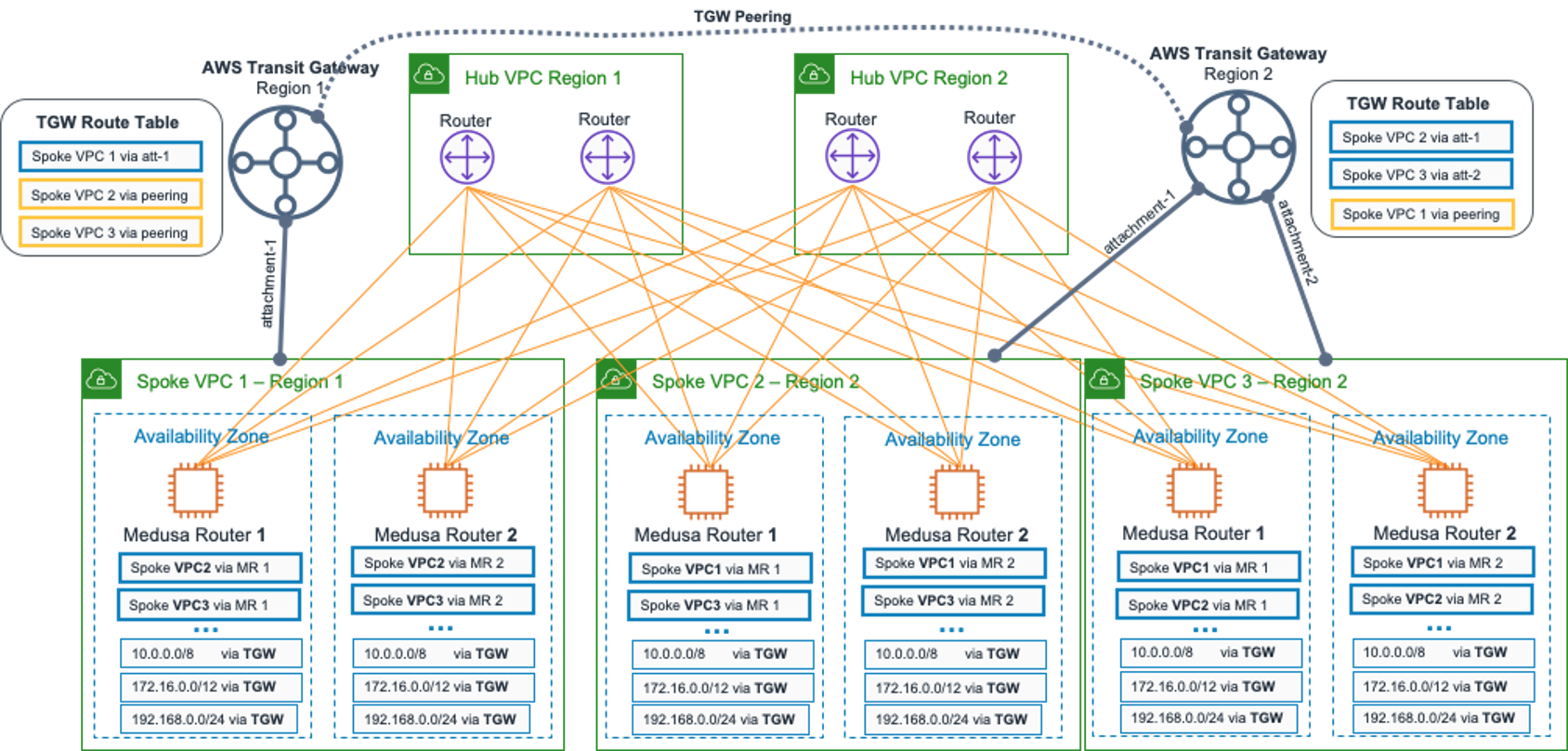

Step 3 – Transit gateway peering routes propagation

At the moment, there is no native support for route propagation across peered transit gateways, so we had to write another Lambda function to provide this functionality. It works by mapping each transit gateway’s route table entries and creating static routes for them in the default route table of each peered transit gateway. Our internal nickname for this function is L-BGP – AWS Lambda-Based Gateway Protocol 🙂

Figure 4: Network topology after completing step 3
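As a rough illustration of the idea behind step 3 (not the real L-BGP code), the planning part can be expressed as a pure function. The `StaticRoute` type, the `plan_peering_routes` function, the prefixes, and the attachment ID below are all hypothetical. The sketch assumes we already know which prefixes are local to each Region and which peering attachment connects each pair of Regions.

```python
from typing import Dict, List, NamedTuple, Set


class StaticRoute(NamedTuple):
    region: str         # Region whose transit gateway route table gets the route
    cidr: str           # prefix that lives in the peered Region
    attachment_id: str  # peering attachment that leads to the peered Region


def plan_peering_routes(
    local_prefixes: Dict[str, Set[str]],
    peering_attachments: Dict[str, Dict[str, str]],
) -> List[StaticRoute]:
    """For every Region, plan one static route per prefix of each peered Region.

    local_prefixes maps a Region to the VPC prefixes attached to its gateway.
    peering_attachments maps a Region to {peer Region: peering attachment ID}.
    """
    plan: List[StaticRoute] = []
    for region, peers in sorted(peering_attachments.items()):
        for peer_region, attachment_id in sorted(peers.items()):
            for cidr in sorted(local_prefixes.get(peer_region, set())):
                plan.append(StaticRoute(region, cidr, attachment_id))
    return plan


if __name__ == "__main__":
    prefixes = {
        "us-east-1": {"10.1.0.0/16", "10.2.0.0/16"},
        "eu-west-1": {"10.3.0.0/16"},
    }
    peerings = {
        "us-east-1": {"eu-west-1": "tgw-attach-example"},  # placeholder ID
        "eu-west-1": {"us-east-1": "tgw-attach-example"},
    }
    for route in plan_peering_routes(prefixes, peerings):
        print(route)
```

Each planned entry could then be applied with the boto3 EC2 client call `create_transit_gateway_route`, which takes a destination CIDR block, a transit gateway route table ID, and an attachment ID.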

Pre-flight check!

After completing the previous steps, from a routing point of view, all traffic between spokes still uses the Medusa network. That’s because the more specific routes injected by the Medusa router daemon take precedence over the aggregate RFC1918 routes injected by the transit gateway Lambda function. We need to remove the Medusa routes before traffic can flow via the transit gateway.
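The precedence described above is ordinary longest-prefix matching, which a short Python sketch can make concrete. The route table contents, the `lookup` function, and the target names are invented for this example and only model the selection rule, not a real VPC route table.

```python
import ipaddress
from typing import Dict, Optional


def lookup(route_table: Dict[str, str], destination: str) -> Optional[str]:
    """Return the target of the most specific route that covers destination."""
    address = ipaddress.ip_address(destination)
    best = None  # (network, target) of the longest matching prefix so far
    for prefix, target in route_table.items():
        network = ipaddress.ip_network(prefix)
        if address in network and (
            best is None or network.prefixlen > best[0].prefixlen
        ):
            best = (network, target)
    return best[1] if best else None


# Hypothetical route table of a VPC while both networks coexist.
route_table = {
    "10.0.0.0/8": "tgw-example",          # aggregate route via transit gateway
    "172.16.0.0/12": "tgw-example",
    "192.168.0.0/16": "tgw-example",
    "10.20.0.0/16": "eni-medusa-example",  # more specific route via Medusa
}

if __name__ == "__main__":
    print(lookup(route_table, "10.20.1.5"))  # the /16 via Medusa wins
    del route_table["10.20.0.0/16"]          # Medusa route removed
    print(lookup(route_table, "10.20.1.5"))  # falls back to the /8 aggregate
```

Removing the more specific entry is all it takes for the same destination to start matching the aggregate route, which is what makes the one-VPC-at-a-time cutover possible.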

Step 4 – Shift traffic from Medusa Routers to the transit gateways

At this stage, we developed the capability to cut over traffic from Medusa to transit gateway, one VPC at a time, with no downtime to the upper layers of nodes and services.

To perform the migration, all we had to do was disable the medusa-vpc-route-table-injector daemon and reconfigure BIRD BGP on all the Medusa Routers in the target VPC so that they withdrew their own VPC prefix announcements.

The following steps were performed with automation, resulting in a sequence of events that ultimately converged egress and ingress traffic to flow via transit gateways.

1. Disable medusa-vpc-route-table-injector daemon

Stopping this process prevented the Medusa routers in the target VPC from injecting their routes into the VPC route table, and also cleaned up all the existing more specific routes via the Medusa Routers.

Now, egress traffic from our target VPC matches the aggregate routes via the transit gateway! However, ingress traffic still comes in through the Medusa routers. This asymmetric routing condition existed only for a brief period, because this step and the next were executed in sequence by automation.

Figure 5: VPC 1 Migration – Medusa route injection disabled

2. Withdraw the VPC CIDR announcements from BGP

We reconfigured BIRD BGP to no longer advertise the target VPC prefixes. The Medusa routers in all the other VPCs react by removing these networks from their Linux routing tables, which also triggers the medusa-vpc-route-table-injector daemon to delete them from their VPC route tables.

Now both egress and ingress traffic is routed via transit gateways on our target VPC!

Figure 6: VPC 1 Migration – BGP route withdrawal

After ironing out and validating the change process in our staging environment, we began rolling it out to production. We started with a single VPC and slowly ramped up, one VPC at a time, until we had migrated all of them.

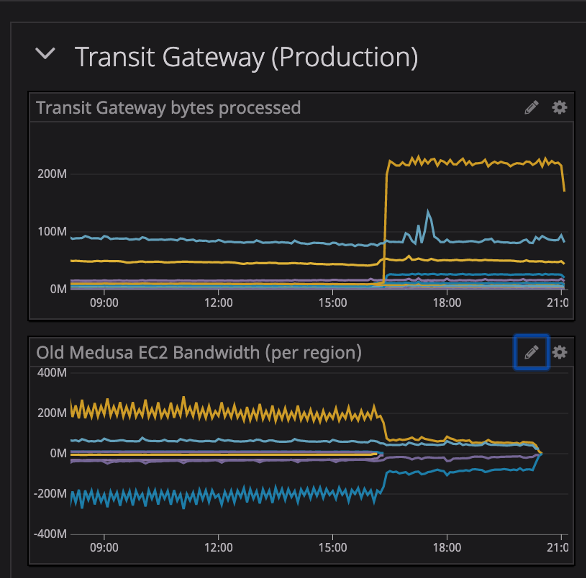

Throughout the migration, we monitored a variety of metrics to observe the overall platform’s correct functionality. These included bandwidth utilization, cross-Region IP packet loss, TCP retransmissions, internal DNS resolution failures, HTTP error rates, and connection timeouts.

The following graph shows how traffic shifted via transit gateways.

Figure 7: Bandwidth utilization on transit gateway and Medusa Routers during migration

Step 5 – Decommission of the Medusa Routers

We froze all the Medusa Routers in this standby mode for a couple of days, in case unforeseen events forced us to roll back. With the confidence gained during the migration process and after a cooldown period, we proceeded to delete the Medusa router stacks, one VPC at a time, repeating our tests at each iteration.

Figure 8: Migration completed for all 3 VPCs

Results

The migration from our in-house networking solution to AWS Transit Gateway removed a lot of moving parts in the Zendesk network architecture. It also translated to less overhead and cognitive load on our Network team. Each transit gateway attachment can achieve throughput up to 50 Gbps, more than enough for our current cross-Region traffic demand. Replacing our EC2 infrastructure with this managed service also helped reduce our overall AWS cross-Region network spend by close to 50%.

The following diagram represents the global network architecture after we completed the entire migration process across all AWS Regions.

Figure 9: Final global architecture

The amount of toil that was eliminated by the migration released cycles back to our team, enabling us to deep dive into other challenges up in the network stack. This helps keep us ahead of demand as the global Zendesk customer base continues to grow rapidly.

We face the familiar complexity that comes with running a service-oriented architecture, and to that end, we are now rolling out Istio to all of our Kubernetes workloads. Building a service mesh will help us provide advanced traffic management features, like canary deployments, locality load balancing, mutual TLS, and many more. Exciting times!