AWS Compute Blog

Amazon S3 Adds Prefix and Suffix Filters for Lambda Function Triggering


Tim Wagner, AWS Lambda General Manager

Today Amazon S3 added some great new features for event handling:

- Prefix filters – Send events only for objects in a given path

- Suffix filters – Send events only for certain types of objects (.png, for example)

- Deletion events

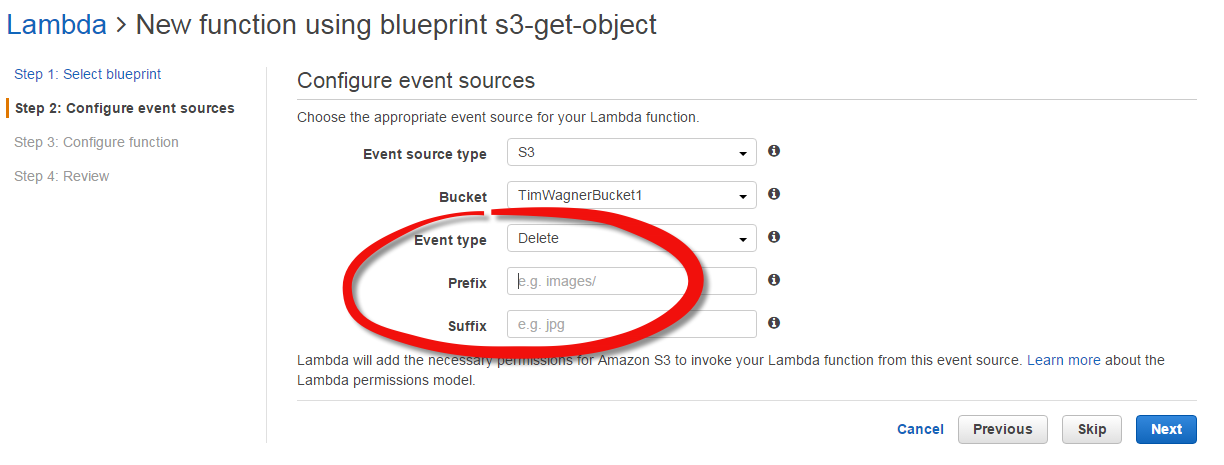

You can see some images of the S3 console’s experience on the AWS Blog; here’s what it looks like in Lambda’s console:  .

.

Let’s take a look at how these new features apply to Lambda event processing for S3 objects.

Processing Deletions

Previously, you could get S3 bucket notification events (aka “S3 triggers”) when objects were created but not when they were deleted. With the new event type, you can now use Lambda to automatically apply cleanup code when an object goes away, or to help keep metadata or indices up to date as S3 objects come and go.

You can request notification when an object is deleted by using the s3:ObjectRemoved:Delete event type. You can request notification when a delete marker is created for a versioned object by using s3:ObjectRemoved:DeleteMarkerCreated. You can also use a wildcard expression like s3:ObjectRemoved:* to request notification any time an object is deleted, regardless of whether it’s been versioned.
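As an illustration, here is a minimal sketch of a Python Lambda handler that tells creations apart from deletions and keeps a toy index in step with the bucket. It assumes the standard S3 event record fields (`eventName`, `s3.bucket.name`, `s3.object.key`); the `INDEX` dictionary is a hypothetical stand-in for whatever metadata store you actually maintain.

```python
from urllib.parse import unquote_plus

# Hypothetical stand-in for an index or metadata store kept in sync with the
# bucket; replace with your own database calls.
INDEX = {}


def lambda_handler(event, context):
    """Route S3 notification records by event type (illustrative sketch)."""
    for record in event.get("Records", []):
        # Record eventName values omit the "s3:" prefix, e.g. "ObjectRemoved:Delete".
        event_name = record["eventName"]
        bucket = record["s3"]["bucket"]["name"]
        # Keys arrive URL-encoded (a space becomes "+"), so decode before use.
        key = unquote_plus(record["s3"]["object"]["key"])

        if event_name.startswith("ObjectCreated:"):
            # .get() because not every event type is assumed to carry a size.
            INDEX[(bucket, key)] = record["s3"]["object"].get("size")
        elif event_name.startswith("ObjectRemoved:"):
            # Covers both Delete and DeleteMarkerCreated; cleanup code goes here.
            INDEX.pop((bucket, key), None)
    return {"indexed": len(INDEX)}
```

Matching on the `ObjectRemoved:` prefix in the handler mirrors subscribing with the `s3:ObjectRemoved:*` wildcard, so the same code works whether or not the bucket is versioned.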

S3 events aren’t guaranteed to be sent in order, but S3 does offer help with keeping the event sequence organized: the S3 event structure includes a new field, sequencer, a hexadecimal value that establishes a relative ordering among PUTs and DELETEs for the same object. (The values can’t be meaningfully compared across different objects.) If you’re trying to keep another data structure, like an index, in sync, this is critical information to save and compare, as a PUT followed by a DELETE is very different from a DELETE followed by a PUT. Check out the S3 event format for a detailed description of the event format and options.
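To make the ordering logic concrete, here is a small sketch of how you might use sequencer values to ignore events that arrive late. It assumes sequencers are hexadecimal strings that can differ in length; the `state` dictionary and `apply_event` helper are hypothetical, and the sample sequencer values in the usage are made up.

```python
def is_later(seq_a: str, seq_b: str) -> bool:
    """Return True if sequencer seq_a is later than seq_b (same object key only).

    Sequencer strings can differ in length. Comparing them as hexadecimal
    integers is equivalent to left-padding the shorter one with zeros and
    comparing lexicographically.
    """
    return int(seq_a, 16) > int(seq_b, 16)


# Hypothetical index: object key -> (latest sequencer seen, object exists?)
state = {}


def apply_event(key: str, event_name: str, sequencer: str) -> bool:
    """Apply one PUT/DELETE event; return False if it was stale and ignored."""
    last = state.get(key)
    if last is not None and not is_later(sequencer, last[0]):
        return False  # an older or duplicate event arrived late; keep newer state
    # Deletes are recorded too (as a tombstone) so a late PUT can't resurrect the key.
    state[key] = (sequencer, event_name.startswith("ObjectCreated:"))
    return True


# DELETE delivered before the PUT that preceded it:
apply_event("a.png", "ObjectRemoved:Delete", "0055AED6DCD90281E6")  # applied
apply_event("a.png", "ObjectCreated:Put", "0055AED6DCD90281E5")     # ignored
```

Keeping the sequencer of the delete, rather than just dropping the key, is what lets the late PUT be recognized as stale.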

Prefix Filters

Prefix filters can be used to pick the “directory” in which to send events. For example, let’s say you have two paths in use in your S3 bucket:

- Incoming/

- Thumbnails/

Your client app uploads images to the Incoming path and, for each image, you create a matching thumbnail in the Thumbnails path.

Previously, to use Lambda for this you’d need to send all events from the bucket to Lambda – including the thumbnail creation events. That has a couple of downsides: You have to send more events than you’d like, and you have to explicitly code your Lambda function to ignore the “extra” ones in order to avoid recursively creating thumbnails of thumbnails (of thumbnails of …).

Now, it’s easier: You set a prefix filter of “Incoming/”, and only the incoming images are sent to Lambda. The Lambda code also gets simpler because the recursion check is no longer needed, since you never write images to the path in your prefix filter. (Of course it doesn’t hurt to retain it for safety if you prefer.)
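Here’s a sketch of what the thumbnail setup might look like in code. The dictionary follows the shape S3’s PutBucketNotificationConfiguration expects for a Lambda target with a prefix filter rule; the function ARN and account ID are placeholders, and `thumbnail_key` is a hypothetical naming helper.

```python
PREFIX = "Incoming/"
THUMBNAIL_PREFIX = "Thumbnails/"

# Notification configuration with a prefix filter. The ARN is a placeholder,
# not a real function.
NOTIFICATION_CONFIG = {
    "LambdaFunctionConfigurations": [
        {
            "LambdaFunctionArn": "arn:aws:lambda:us-east-1:123456789012:function:make-thumbnail",
            "Events": ["s3:ObjectCreated:*"],
            "Filter": {
                "Key": {"FilterRules": [{"Name": "prefix", "Value": PREFIX}]}
            },
        }
    ]
}


def thumbnail_key(source_key: str) -> str:
    """Map Incoming/<name> to Thumbnails/<name> (hypothetical naming scheme)."""
    if not source_key.startswith(PREFIX):
        raise ValueError(f"key outside {PREFIX}: {source_key}")
    return THUMBNAIL_PREFIX + source_key[len(PREFIX):]
```

Because every key the function writes starts with `Thumbnails/`, none of them can match the `Incoming/` prefix rule, which is why the recursion check becomes optional. With boto3, a dictionary of this shape is what you would pass as the `NotificationConfiguration` argument of `put_bucket_notification_configuration`; S3 also needs permission to invoke the function.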

You can also use prefix filters on the object name (the “path” is just a string in reality). So if you name files like “IMAGE001” and “DOC002” and you only want to send documents to Lambda, you can set a prefix of “DOC”.
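Since the key is just a string, a quick way to reason about which objects a prefix selects is to model the filter as a literal string-prefix test. This is a local sketch under that assumption, with made-up keys, not a call to S3.

```python
keys = ["IMAGE001", "DOC002", "DOC003", "Incoming/a.png", "IncomingOld/b.png"]


def matching(all_keys, prefix):
    """Keys a prefix filter would select, modeled as a literal string-prefix test."""
    return [k for k in all_keys if k.startswith(prefix)]


print(matching(keys, "DOC"))        # the two document keys
print(matching(keys, "Incoming"))   # also catches IncomingOld/b.png
print(matching(keys, "Incoming/"))  # only Incoming/a.png
```

The same string semantics explain why the trailing slash in “Incoming/” matters: without it, any key that merely begins with the word “Incoming” would also be sent.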

Suffix Filters

Suffix filters work similarly, although they’re conventionally used to pick the “type” of file to send to Lambda by choosing a suffix, such as “.doc” or “.java”. You can combine prefix and suffix filters.
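For instance, to send only PNG uploads from the incoming path, you might combine both rules in one filter. The sketch below builds a filter in the same `FilterRules` shape used in bucket notification configurations and evaluates it locally; the helpers `filter_rules` and `key_matches` are hypothetical, and the assumption is that a key must satisfy every rule present.

```python
def filter_rules(prefix="", suffix=""):
    """Build a notification Filter dict with optional prefix and suffix rules."""
    rules = []
    if prefix:
        rules.append({"Name": "prefix", "Value": prefix})
    if suffix:
        rules.append({"Name": "suffix", "Value": suffix})
    return {"Key": {"FilterRules": rules}}


def key_matches(filter_, key):
    """Local model: a key matches only if it satisfies every rule in the filter."""
    for rule in filter_["Key"]["FilterRules"]:
        if rule["Name"] == "prefix" and not key.startswith(rule["Value"]):
            return False
        if rule["Name"] == "suffix" and not key.endswith(rule["Value"]):
            return False
    return True


png_uploads = filter_rules(prefix="Incoming/", suffix=".png")
```

With this filter, `Incoming/cat.png` is sent, while `Incoming/notes.txt` (wrong suffix) and `Thumbnails/cat.png` (wrong prefix) are not.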

In general, you can’t define overlapping prefix or suffix filters, as it would make the delivery ambiguous; the partitioning of events must be unique. The exception to this is that you can have overlapping prefix filters if the suffix filters disambiguate. If you want to send one event to multiple recipients, check out the post on S3 event fanout to see some suggested architectures for doing just that.
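If you manage several notifications, a pre-flight check can catch ambiguous filters before S3 rejects the configuration. The sketch below is a hypothetical local model, not S3’s own validation logic: it assumes both notifications cover the same event type and asks only whether some object key could match both filters.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class KeyFilter:
    """Hypothetical local model of one notification's prefix/suffix filter."""
    prefix: str = ""
    suffix: str = ""


def can_overlap(a: KeyFilter, b: KeyFilter) -> bool:
    """True if at least one object key could match both filters."""
    # Two prefixes can both match a key only if one extends the other;
    # likewise for suffixes. If both hold, longer_prefix + longer_suffix matches both.
    prefixes_compatible = a.prefix.startswith(b.prefix) or b.prefix.startswith(a.prefix)
    suffixes_compatible = a.suffix.endswith(b.suffix) or b.suffix.endswith(a.suffix)
    return prefixes_compatible and suffixes_compatible


def find_conflicts(filters):
    """Return every pair of filters that could claim the same event."""
    return [(a, b) for a, b in combinations(filters, 2) if can_overlap(a, b)]
```

Under this model, `images/` + `.jpg` and `images/` + `.png` can coexist because the suffixes disambiguate, whereas a bare `images/` filter conflicts with either of them, since an empty suffix matches every key.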

You can read all the details on Amazon S3 event notification settings in the docs, which also cover using the command line and programmatic/REST access.

Until next time, happy Lambda (and S3 event) coding!

-Tim