
Cloud Native CI/CD with Tekton and ArgoCD on AWS

Introduction

With the ongoing popularity and adoption of container orchestrators such as Kubernetes, more and more cloud-native applications are built on top of it. Besides business applications, companies are migrating their infrastructure-related components such as CI/CD systems as well.

But are those systems ready for such modern platforms? The answer depends. Clearly, most of the existing CI/CD systems weren’t built directly for Kubernetes and the cloud-native world.

This blog post gives a quick introduction to the Kubernetes-native CI/CD system Tekton, how to run it on top of Amazon Elastic Kubernetes Service (Amazon EKS), and how it integrates with various developer-related AWS services.

We start with a quick introduction to Tekton and an explanation of some key concepts. The second part contains a practical example that walks through building and deploying a Spring Boot-based web application with a Tekton-native CI/CD Pipeline. The sample code provided should enable you to build more sophisticated Pipelines for your unique requirements.

Tekton Overview

Tekton is an open-source project that allows you to build cloud-native CI/CD systems on top of Kubernetes. A good definition of what makes an application cloud native has been published by the Cloud Native Computing Foundation.

Compared to other CI/CD solutions, Tekton runs natively on top of Kubernetes. It makes use of custom resource definitions (CRDs) in order to register common CI/CD building blocks as native Kubernetes resources. Since Kubernetes 1.7 (with CRDs reaching general availability in 1.16), it has been possible to introduce custom resources that extend the Kubernetes API. Similar to existing resources such as Pods or Deployments, you can register your own objects with the help of CRDs. Registering these new resources is not enough, however: you also have to develop your own business logic in order to process them. These applications are deployed into Kubernetes as custom controllers.

In a nutshell, Tekton is just a set of custom controllers developed with cloud-native properties in mind and a few custom resources. Together these custom resources define the building blocks for your CI/CD Pipelines.

Tekton is designed as a modular system that consists of multiple components. This allows you to choose only the components that are required for your specific use case. The basic one is called Tekton Pipelines, which contains the main custom resources and controllers in order to build Pipelines. We will cover the most important custom resources out of this component in the subsequent section.

Further, there needs to be a way that Tekton can trigger a Pipeline based on code changes made to a version control system. This capability is covered within another component named Tekton Triggers. The CRDs of this project allow you to set up an event listener that listens for incoming Git events, such as pull requests or commits made to your codebase. After extraction of the necessary fields of the payload provided by the webhook, a Pipeline run can be automatically instantiated.

Finally, there is the Tekton Dashboard project which provides a web UI in order to visualize all of your Tekton resources and keep track of current and recent Pipeline executions.

The deployment of these projects to a Kubernetes cluster installs multiple custom resources. This blog post shows only a subset of the available resources. Therefore the following section covers only the most basic ones that are part of the Tekton Pipelines project:

- Task

This custom resource defines one or more CI/CD steps that run in sequential order. In Kubernetes terminology, a Task executes as a Pod, and the steps are the containers that are part of this Pod. Besides specifying the container image, the Task CRD allows you to specify context in the form of volume mounts or environment variables. Further, a Task can contain parameters in order to make it reusable and customizable. Possible examples for Tasks are a clone Task that fetches source code from a repository or a test Task that runs unit tests for an application.

- TaskRun

In order to execute a previously defined Task, an additional custom resource named TaskRun is required. This resource contains a reference to the Task that should be run and the input parameters.

- Pipeline

A Pipeline contains references to one or more Tasks and therefore represents the different stages of your CI/CD Pipeline. It can also define the input parameters required by the different Tasks. Tasks within a Pipeline can be executed either in parallel or in sequential order.

- PipelineRun

This final piece instantiates an already defined Pipeline resource and defines the input parameters for it. PipelineRun objects can either be applied manually or created by an automation extension such as the TriggerTemplate provided by the previously mentioned Tekton Triggers project.

- Workspace

A Workspace defines a storage location that allows Tasks running as part of a Pipeline to exchange build artifacts such as JAR files. Workspaces can be backed by Kubernetes volumes such as Persistent Volumes (PV) or ConfigMaps (CM).
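To make these building blocks more concrete, here is a minimal sketch of how a Task, a Pipeline, and a PipelineRun relate to each other. It is not taken from the demo repository: the resource names, the container image, and the message parameter are hypothetical, and the sketch assumes a Tekton Pipelines release that serves the tekton.dev/v1beta1 API (newer releases may expect tekton.dev/v1 instead).

```shell
# Sketch only: all names, the image, and the parameter are hypothetical.

# Print a reusable Task and a Pipeline that runs it twice in sequence.
render_definitions() {
  cat <<'EOF'
apiVersion: tekton.dev/v1beta1
kind: Task
metadata:
  name: echo-message
spec:
  params:
    - name: message
      type: string
      default: "hello"
  steps:
    - name: print
      image: alpine:3
      script: |
        #!/bin/sh
        echo "$(params.message)"
---
apiVersion: tekton.dev/v1beta1
kind: Pipeline
metadata:
  name: echo-twice
spec:
  params:
    - name: message
      type: string
  tasks:
    - name: first
      taskRef:
        name: echo-message
      params:
        - name: message
          value: "$(params.message)"
    - name: second
      runAfter:
        - first
      taskRef:
        name: echo-message
      params:
        - name: message
          value: "done"
EOF
}

# Print a PipelineRun that instantiates the Pipeline with a concrete parameter.
render_run() {
  cat <<'EOF'
apiVersion: tekton.dev/v1beta1
kind: PipelineRun
metadata:
  generateName: echo-twice-run-
spec:
  pipelineRef:
    name: echo-twice
  params:
    - name: message
      value: "hello from Tekton"
EOF
}
```

On a cluster with Tekton Pipelines installed, the definitions could be applied with render_definitions | kubectl apply -f - and a run started with render_run | kubectl create -f - (create rather than apply, because the run uses generateName). Removing the runAfter entry would allow both Tasks to run in parallel.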

Pipeline Architecture

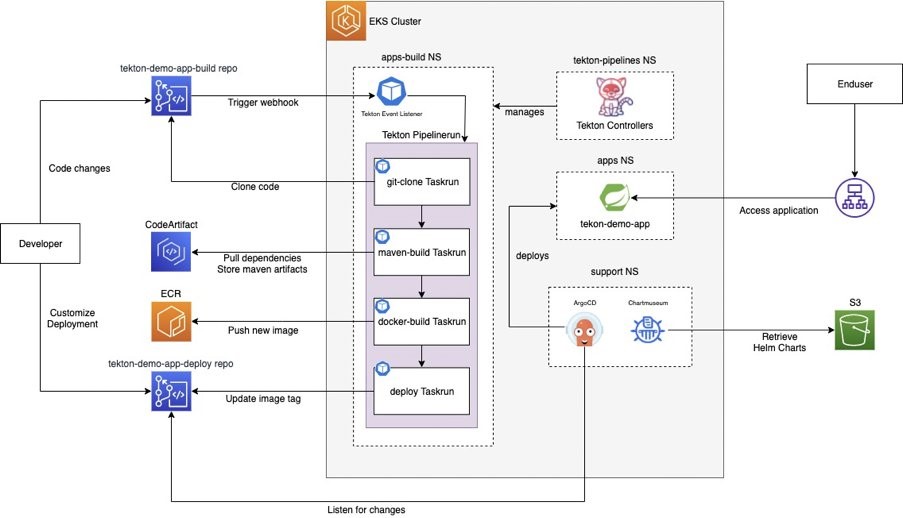

This section contains an example of how you can use Tekton to build and deploy a Spring Boot-based web application. The architecture diagram shows the overall Pipeline, and each Pipeline Task is explained below.

The Pipeline is named tekton-demo-spring-boot and contains the following stages:

- Git Clone Task

This is the initial Task, which contains a step that clones the source code from the AWS CodeCommit repository. In addition, it computes the commit SHA, which is used as the container image tag and build artifact identifier in later Tasks. Further, it is responsible for making the source code available through a Workspace for subsequent Tasks. Workspaces allow Tekton to share artifacts between Tasks. This is required because Tasks can be executed on arbitrary nodes within the EKS cluster. Workspaces are linked to Pipelines or Tasks and make use of PV or CM, which act as Kubernetes storage backends. An example of such a Task can be found in the related GitHub repository.

- Maven Build Task

Apache Maven is a widely used build tool for Java projects. It manages the dependencies and plugins that define how Java source code is transformed into deployable build artifacts such as Java Archives (JAR). Most software projects nowadays rely on various third-party dependencies. For projects built with Maven, the upstream repository Maven Central provides a central place to retrieve such dependencies. However, most companies prefer to store internal and external dependencies in company-managed repositories. Although many solutions exist, the management and maintenance of such repositories can be challenging and time-consuming. Therefore we rely on AWS CodeArtifact, which is a fully managed artifact repository. It allows the storage and management of various kinds of software artifacts, including Maven packages.

Our Pipeline Task contains two steps. The first one authenticates against our AWS CodeArtifact repository, retrieves the required auth token, and makes it available to the next step. The second step executes the mvn package command in order to build a Java archive file. It makes use of the previously cloned source code files, which can be found in the Workspace. Maven fetches the required dependencies directly from AWS CodeArtifact, which is configured as a proxy to upstream Maven Central. After successfully building the JAR artifact, the step uploads it to the private AWS CodeArtifact repository and stores it on the Workspace for subsequent Tasks.

- Docker Build Task

The deployment target for our application is EKS. Therefore the Pipeline needs to build a container image that contains the JAR artifact. This Task is responsible for building the container image and pushing it to the Amazon Elastic Container Registry (Amazon ECR), which is a fully managed container registry provided by AWS. In order to build the image from within a container running in the cluster, without requiring access to a Docker daemon, the Task uses an open-source tool called Kaniko.

- Deploy Task

Finally, the Pipeline needs to deploy the new revision of the application to our cluster. Although Tekton could deploy directly to EKS, the approach taken here is slightly different. The Pipeline uses another open-source project called ArgoCD. In a nutshell, ArgoCD connects to one or more Git repositories and watches them for changes. If a change is detected, ArgoCD pulls the repository and applies its content to the cluster. This allows for a GitOps-like workflow. If you want to learn more about ArgoCD, the official website is a good starting point.

For this solution, there is a dedicated AWS CodeCommit repository that contains a Helm chart. This Helm chart has a dependency on a master Helm chart that contains all the Kubernetes manifests required to deploy a general-purpose web application. Through this dependency, it is possible to define an interface and expose only the subset of parameters that are allowed to change. Further, this AWS CodeCommit repository is connected to an ArgoCD instance within the cluster. Whenever the Helm chart changes, ArgoCD triggers a new deployment of the application. In order to achieve that, our deploy Task clones the current state of the tekton-demo-app-deploy repository, updates the image tag within the Helm chart, commits, and pushes the changes back to the repository. Subsequently, ArgoCD recognizes the change and deploys the new application version with the new image version pulled directly from ECR.
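The core of the deploy Task, updating the image tag in the Helm chart and pushing the change, can be sketched in a few lines of shell. This is an illustration under stated assumptions rather than the script used in the demo repository: the file layout (a values file with a tag: key) and the function names are hypothetical.

```shell
# Sketch only: the values file layout and the "tag:" key are assumptions,
# not taken from the demo repository.

# Rewrite the value of every "tag:" key in a Helm values file,
# keeping the original indentation.
update_image_tag() {
  values_file=$1
  new_tag=$2
  tmp_file=$(mktemp) || return 1
  # Write to a temporary file first, then copy back, so that a failing
  # sed call never leaves a half-written values file behind.
  sed "s|^\([[:space:]]*tag:\).*|\1 \"${new_tag}\"|" "$values_file" > "$tmp_file" &&
    cat "$tmp_file" > "$values_file"
  status=$?
  rm -f "$tmp_file"
  return "$status"
}

# Commit and push the change so that ArgoCD can detect it.
# Must be called from inside the cloned deploy repository.
publish_change() {
  values_file=$1
  new_tag=$2
  git add "$values_file" &&
    git commit -m "Deploy image tag ${new_tag}" &&
    git push
}
```

Inside a clone of the deploy repository, a call could look like update_image_tag values.yaml "$(git rev-parse HEAD)" followed by publish_change with the same arguments. Because the cluster state is only changed through Git, every deployment remains traceable to a commit.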

Pipeline Demo

This tutorial demonstrates a Pipeline run that covers an application build and deployment to EKS.

Prerequisites

For this demo, we need to set up an EKS cluster and various AWS services. We suggest creating a dedicated AWS account for this demo.

Before you continue with the demo steps, please make sure that you have completed all prerequisites outlined within the README.md of the following repository:

https://github.com/aws-samples/aws-pipeline-demo-with-tekton

Please make sure that you have installed all of the required tools and created a dedicated EKS cluster. We recommend creating a fresh EKS cluster with the command line tool eksctl. In order to help you with the cluster provisioning, we have provided a sample cluster config in the repository (eks-cluster-template.yaml).

Once you have created the cluster and installed the required tooling, please execute the install script that you can find in the repository (install.sh).

After successful installation with the provided script, you should see output that contains various parameters, which are referenced throughout the demo.
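Before creating the cluster, it can help to check that the required command line tools are on your PATH. The following is a small sketch of such a check. The function name is hypothetical, and the authoritative list of tools is the one in the repository README, not the example list used below.

```shell
# Sketch only: pass the tool names that the README lists as prerequisites.

# Return non-zero and report every tool that cannot be found on the PATH.
require_tools() {
  missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "missing required tool: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

A possible use, assuming that aws, eksctl, kubectl, and git are among the prerequisites, is require_tools aws eksctl kubectl git && eksctl create cluster -f eks-cluster-template.yaml, which only starts provisioning once all tools are available.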

Repository Structure

The provided code repository contains various files required to set up the demo environment. Please find below a short summary of the outlined folders:

- cloudformation

This folder contains CloudFormation templates that deploy the AWS services required for our CI/CD Pipeline.

The services created by these templates are AWS CodeCommit, AWS CodeArtifact, Amazon S3, Amazon ECR, and various additional resources such as security groups and IAM roles.

- docker

This folder contains the Dockerfile to build the container images that our Pipeline relies on in order to produce the build artifact.

- helm-springboot

This folder contains a Helm chart with the Kubernetes manifests required to deploy a simple application. The Helm chart is used by ArgoCD to deploy the application to the cluster.

- tekton-demo-app-build

This folder contains the source code of our Spring Boot application. The content of this folder is uploaded to the provisioned tekton-demo-app-build repository.

- tekton-demo-app-deploy

This folder contains the content of the tekton-demo-app-deploy repository, which is connected to ArgoCD in order to deploy the initial application and subsequent updates to the cluster.

- tekton-pipeline-demo-k8s-artifacts

This folder contains the Tekton-based CI/CD Pipeline and supporting resources such as Persistent Volumes (PV) for Workspaces.

- tekton-webhook-middleware

This folder contains a small helper application that runs inside the cluster in order to extract data from incoming Git events.

Execution of the Demo

1. Show the current state of the web application

Locate the load balancer URL (DEMO APP) that points to the demo application and enter it into a web browser of your choice. Your browser should display a simple web application.

In order to simulate a small change to the application, we are going to change the text color to match the color of the Tekton logo.

2. Make a simple change to the application

Within the output of the install script, locate the URL of the source repo and the required Git credentials. Clone the repo with the following commands:

$ git clone "SOURCE REPO URL" # Enter the Git username and password when prompted

$ cd tekton-demo-app-build

After successfully cloning the repo, open the file greeting.html located under src/main/resources/templates and comment out the existing h1 tag (line 13). Finally, add the h1 tag with the inline CSS gradient. The comments within the file provide further guidance.

Save your changes and commit them with the following commands:

$ git commit -am "Change text color to match color of Tekton logo"

$ git push

3. Verify the current pipeline run

Locate the load balancer URL (TEKTON DASHBOARD) that points to the Tekton Dashboard and enter it in a browser of your choice. From the left menu of the Dashboard, select PipelineRuns to see all your running jobs. You should be able to see that Tekton has started a build after our recent commit. Tekton executes the Tasks in sequential order. The running Task is highlighted with a blue indicator. Feel free to explore the build logs on the right side of the screen.

In addition, you can verify the ongoing activity within the EKS cluster. Use the following command to monitor the pods created by Tekton:

$ kubectl --namespace tekton-pipelines get pods --watch

After a few minutes, the build should complete successfully with a green status indicator.

4. Verify the new version of the JAR artifact within AWS CodeArtifact

Switch to your AWS account and select the AWS CodeArtifact service. Select the com.amazon:tekton-demo package within the tekton-demo-repository repository. Now you should see a new version of the package with the recent git commit SHA.

5. Verify the new container image version within Amazon ECR

Switch to your AWS account and select the Amazon ECR service. Open tekton-demo-app from the list of private repositories. Now you should see a new image tag that contains the recent git commit SHA as its identifier.

6. Verify the new application version

Locate the load balancer URL that points to the demo application and enter it in a web browser of your choice. You should now see the same application as in step 1, but this time with the matching text color.
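The checks in steps 4 and 5 can also be done from the command line instead of the AWS console. The following sketch wraps the corresponding AWS CLI calls in small functions. The function names are hypothetical, the CodeArtifact domain is a placeholder that you need to take from your own setup, and whether the Pipeline uses the full or a shortened commit SHA as the tag is an assumption to verify against your build.

```shell
# Sketch only: account ID, Region, and CodeArtifact domain are placeholders.

# Build the fully qualified ECR image reference for a given tag.
image_ref() {
  account_id=$1
  region=$2
  repository=$3
  tag=$4
  printf '%s.dkr.ecr.%s.amazonaws.com/%s:%s\n' \
    "$account_id" "$region" "$repository" "$tag"
}

# Step 5: ask ECR for the image that carries the given tag.
# Arguments: repository name, image tag.
check_image_tag() {
  aws ecr describe-images --repository-name "$1" --image-ids "imageTag=$2"
}

# Step 4: list the versions of a Maven package stored in CodeArtifact.
# Arguments: domain, repository, Maven group ID, Maven artifact ID.
list_package_versions() {
  aws codeartifact list-package-versions \
    --domain "$1" --repository "$2" \
    --format maven --namespace "$3" --package "$4"
}
```

From inside the cloned source repository, check_image_tag tekton-demo-app "$(git rev-parse HEAD)" would look up the image for the latest commit, and list_package_versions followed by your domain name, tekton-demo-repository, com.amazon, and tekton-demo would list the published JAR versions.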

Congratulations! You have successfully completed a CI/CD Pipeline execution with Tekton. Feel free to have a look at the various Kubernetes manifests that were used to build this Pipeline. You can start your exploration within the tekton-pipeline-demo-k8s-artifacts/templates folder of the cloned repository.

Cleanup

Please follow the instructions provided in the README file within the GitHub repository in order to clean up any provisioned resources.

Wrapping up

We started this blog post with a quick overview of Tekton and finished it with a practical example in which we built and deployed a simple web application. Although we have a functional Pipeline that is integrated with a couple of AWS services, the topics covered here are only the tip of the iceberg. Tekton gives you solid building blocks to build complex and comprehensive Pipelines for a cloud-native world. I encourage you to visit the official documentation to learn more about these building blocks and the official GitHub repository to discover new and upcoming projects within the Tekton ecosystem.

Feel free to extend the Pipeline and add additional build stages. I would be happy to see what you have built with Tekton on top of EKS.