
Preparing for Kubernetes API deprecations when going from 1.15 to 1.16

Note: The contents of this blog are no longer up to date as the referenced Amazon EKS Kubernetes version is no longer supported. Refer to the Amazon EKS Kubernetes versions AWS documentation for up to date information on supported Amazon EKS Kubernetes versions.

The way that Kubernetes evolves and introduces new features is via its APIs. These APIs are versioned, and features go through three phases: alpha, beta, and general availability (also known as stable). For users of Kubernetes, this usually takes the form of the apiVersion you put at the top of your YAML spec documents, such as apiVersion: extensions/v1beta1 or apiVersion: apps/v1. As features evolve, the parameters that you can specify may change, and with them the schema of these YAML files. The API versions also change to indicate that features are no longer beta and have become stable.

To help facilitate cluster and workload updates, there is a period of time when upstream Kubernetes will provide both the old and the new API. During this period, the old API is called ‘deprecated’. Eventually, Kubernetes stops including the old versions, and when you upgrade your clusters past that point, your workloads will break if their YAML spec files have not been updated to the new API version.

There are many such deprecated API removals in 1.16

The oldest Kubernetes version supported and available in Amazon Elastic Kubernetes Service (Amazon EKS) is 1.15. This version will no longer be supported starting May 3rd, 2021, and customers will need to upgrade these clusters to 1.16. This upgrade involves major removals of deprecated APIs, as described here. These include the older versions of many common APIs such as NetworkPolicy, PodSecurityPolicy, DaemonSet, Deployment, StatefulSet, and ReplicaSet, and thus will impact many customer workloads.
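To make the scope of these removals concrete, here is a minimal Python sketch of a lookup table for the kinds named above. The mapping reflects the upstream 1.16 removals as I understand them and is not exhaustive, so check it against the upstream deprecation guide before relying on it. The names REMOVED_IN_1_16 and replacement_for are hypothetical helpers made up for this illustration.

```python
# Old API versions no longer served in Kubernetes 1.16, mapped to the
# version to migrate to. Illustrative and not exhaustive; verify against
# the upstream deprecation guide.
REMOVED_IN_1_16 = {
    "NetworkPolicy": {"extensions/v1beta1": "networking.k8s.io/v1"},
    "PodSecurityPolicy": {"extensions/v1beta1": "policy/v1beta1"},
    "DaemonSet": {"extensions/v1beta1": "apps/v1", "apps/v1beta2": "apps/v1"},
    "Deployment": {
        "extensions/v1beta1": "apps/v1",
        "apps/v1beta1": "apps/v1",
        "apps/v1beta2": "apps/v1",
    },
    "StatefulSet": {"apps/v1beta1": "apps/v1", "apps/v1beta2": "apps/v1"},
    "ReplicaSet": {"extensions/v1beta1": "apps/v1", "apps/v1beta2": "apps/v1"},
}


def replacement_for(kind, api_version):
    """Return the API version to migrate to, or None if this
    kind/apiVersion pair is not in the table above."""
    return REMOVED_IN_1_16.get(kind, {}).get(api_version)
```

For example, replacement_for("StatefulSet", "apps/v1beta1") returns "apps/v1", while a spec that is already on apps/v1 returns None.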

Tools to help check for things that will break post-upgrade to 1.16

While I am sure there are many tools that can help prepare for the 1.16 upgrade, there are two that I have seen customers use successfully:

kube-no-trouble

The first tool that I have seen customers use to prepare for this is kube-no-trouble. This tool will scan a particular cluster’s YAML specs for API versions that will be removed in future versions, and tell you which things are going to break and what you’ll need to update before starting a cluster upgrade to 1.16. You can run this tool iteratively, fixing each finding until it shows that you are good to proceed.

Deprek8ion / OPA conftest

If you’d prefer to scan your Kubernetes spec files within the pipelines that are building and deploying your applications, or do it manually on an ad-hoc basis against the YAML that you might have in your git repo, there is Deprek8ion. Under the hood, this uses the Open Policy Agent (via a tool called Conftest that allows you to do one-off local scans against the policies) to compare your YAML to a set of policies to alert you to these to-be-removed API versions. The error messages it returns will tell you the current API version you should be targeting as well.

This has been nicely packaged up in a Docker container, so all you need to do is pass or pipe your YAML object in and it’ll tell you if you need to update some things before the upgrade. This can be added as a stage of a CI/CD pipeline to throw either a warning or an error so that going forward you’ll know you need to make these changes early and as part of your normal development processes.
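To illustrate what a pipeline stage like this does in principle, here is a much-simplified Python stand-in. It is not Deprek8ion or Conftest and does not use Open Policy Agent; it only reads the top-level apiVersion and kind of each YAML document with a regular expression. The function names find_removed and exit_code, and the caller-supplied removed set, are assumptions made for this sketch.

```python
import re


def find_removed(manifest_text, removed):
    """Return (kind, apiVersion) for each YAML document that uses a removed API.

    `removed` is a set of (kind, apiVersion) pairs supplied by the caller.
    Only top-level keys are inspected, so items nested inside a `kind: List`
    wrapper are not checked.
    """
    hits = []
    # Split a multi-document YAML stream on its `---` separator lines.
    for doc in re.split(r"^---[ \t]*$", manifest_text, flags=re.MULTILINE):
        api = re.search(r"^apiVersion:[ \t]*(\S+)", doc, flags=re.MULTILINE)
        kind = re.search(r"^kind:[ \t]*(\S+)", doc, flags=re.MULTILINE)
        if not (api and kind):
            continue
        pair = (kind.group(1).strip("\"'"), api.group(1).strip("\"'"))
        if pair in removed:
            hits.append(pair)
    return hits


def exit_code(hits, fail_on_findings=True):
    """Return 1 to fail the stage on findings, or 0 to only warn."""
    return 1 if hits and fail_on_findings else 0
```

A stage could print the findings and exit with exit_code(hits, fail_on_findings=False) while a team is still migrating, then switch to failing the build once the specs are clean.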

Example of finding and fixing an issue

We’ll start by scanning our 1.15 cluster for potential upgrade troubles with kube-no-trouble:

As we can see, we have a StatefulSet called web that we need to update from apps/v1beta1 to apps/v1. This isn’t as simple as just changing the version at the top of the document, though: the schema has changed as well, as described in this blog post. In this case, spec.selector is now required and spec.updateStrategy.type now defaults to RollingUpdate, so we would need to set it explicitly to OnDelete to maintain the older behavior we’ve become used to. We’ll take the easy path and explicitly ask for the existing behavior until we can properly test the new RollingUpdate default.

So we’ll change this original spec file from this:

To this (changed and added lines are marked with comments):

    apiVersion: apps/v1          # changed
    kind: StatefulSet
    metadata:
      name: web
    spec:
      serviceName: "nginx"
      replicas: 2
      selector:                  # added
        matchLabels:             # added
          app: nginx             # added
      updateStrategy:            # added
        type: OnDelete           # added
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: registry.k8s.io/nginx-slim:0.8
            ports:
            - containerPort: 80
              name: web
            volumeMounts:
            - name: www
              mountPath: /usr/share/nginx/html
      volumeClaimTemplates:
      - metadata:
          name: www
        spec:
          accessModes: [ "ReadWriteOnce" ]
          resources:
            requests:
              storage: 1Gi

And then apply it and rerun kube-no-trouble to confirm we are now good to go!

One thing to note is that Kubernetes 1.15 has actually been converting these old APIs to the new API for us. We can see this if we apply the original deprecated API spec file to the cluster and then run kubectl edit statefulset web: the object we get back already has the new API version. It seems that kube-no-trouble scans the last-applied-configuration annotation to determine whether the older APIs were what you deployed pre-conversion. This means:

- The things you have already deployed to your 1.15 cluster will likely continue to work after the upgrade. The upgraded cluster will just refuse any future attempts by your deployment pipelines to apply spec YAML files that use the deprecated APIs.

- If you dump the items from the 1.15 cluster with a command like kubectl get statefulset web -o yaml, you should also get a spec file that will still work in 1.16, which helps if you are unsure how to write one.
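The difference between what the cluster serves and what was originally applied can be sketched in Python as follows. This is not kube-no-trouble's code, only an illustration of the idea described above. It assumes the object was created with kubectl apply, which records the submitted spec as JSON in the kubectl.kubernetes.io/last-applied-configuration annotation, and that the object was exported with kubectl get statefulset web -o json. The function name applied_vs_served is hypothetical.

```python
import json

# Annotation written by `kubectl apply` with the spec as it was submitted.
LAST_APPLIED = "kubectl.kubernetes.io/last-applied-configuration"


def applied_vs_served(obj):
    """Return (served apiVersion, last-applied apiVersion or None).

    `obj` is a parsed Kubernetes object, for example the result of
    json.load() on the output of `kubectl get ... -o json`.
    """
    served = obj.get("apiVersion")
    annotations = obj.get("metadata", {}).get("annotations") or {}
    raw = annotations.get(LAST_APPLIED)
    if raw is None:
        # Object was not created with `kubectl apply`; nothing to compare.
        return served, None
    return served, json.loads(raw).get("apiVersion")
```

If the two values differ, the cluster converted the object on your behalf, and the spec file in your repository is the thing that still needs updating.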

There is a light at the end of the tunnel coming with version 1.19+

There are no deprecated API removals in 1.17, 1.18, or 1.19, so once you get through the 1.15 to 1.16 upgrade, you won’t need to worry about this for a few Kubernetes versions. However, Kubernetes is a fast-paced project, and these practices should be put in place to help prepare for future versions that remove deprecated API versions and require your attention.

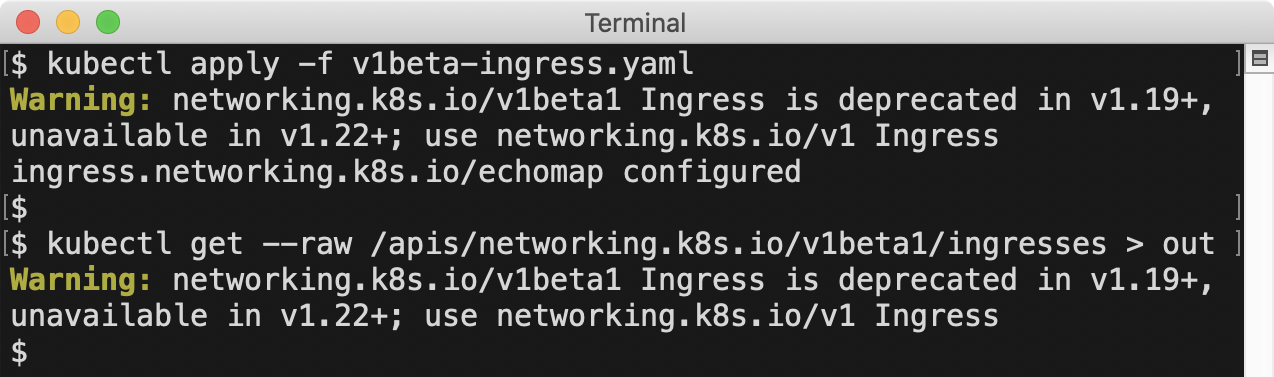

Also, starting with Kubernetes version 1.19, you’ll see that the Kubernetes API as well as the kubectl CLI tool will now throw warnings to alert you that you are using deprecated API versions and in what future version they’ll be removed.

What this means is that rather than needing to use the above tools in the future, you’ll just need to monitor your deployment logs for these warnings. You can either take action as they come up or search for them before doing an upgrade to see if there are any in the logs before proceeding with the upgrade.
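Searching deployment logs for these warnings could look something like the Python sketch below. It assumes the warnings appear in the log as lines containing "Warning:" followed by text that includes "is deprecated"; treat that wording as an assumption and adjust the match to whatever your own logs contain. The function name deprecation_warnings is hypothetical.

```python
def deprecation_warnings(log_lines):
    """Return the distinct deprecation warnings found in the log, in order.

    Assumes each warning is on one line, contains "Warning:" and
    "is deprecated"; any prefix such as a timestamp is dropped.
    """
    seen = []
    for line in log_lines:
        text = line.strip()
        if "Warning:" in text and "is deprecated" in text:
            message = text[text.index("Warning:"):]
            if message not in seen:
                seen.append(message)
    return seen
```

Running this over the logs of recent deployments before an upgrade gives a short list of specs to fix, and an empty list suggests the pipelines are no longer applying deprecated API versions.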