AWS Compute Blog

Understanding AWS Lambda’s invoke throttling limits

This post is written by Archana Srikanta, Principal Engineer, AWS Lambda.

When you call AWS Lambda’s Invoke API, a series of throttle limits is evaluated to decide whether your call is let through or throttled with a 429 “Too Many Requests” exception. This blog post explains the most common invoke throttle limits and the relationship between them, so you can better understand how workloads scale on Lambda.

Overview

The throttle limits exist to protect the following components of Lambda’s internal service architecture, and your workload, from noisy neighbors:

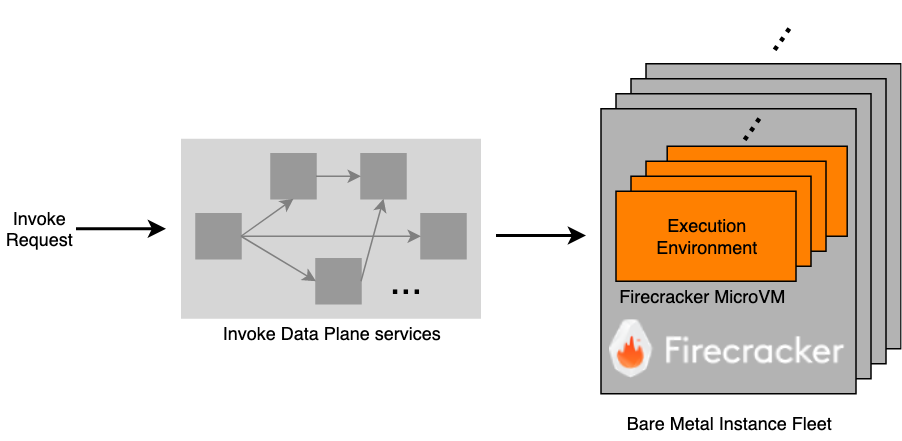

- Execution environment: An execution environment is a Firecracker microVM where your function code runs. A given execution environment only hosts one invocation at a time, but it can be reused for subsequent invocations of the same function version.

- Invoke data plane: This is a series of internal web services that, on an invoke, select (or create) an execution environment and route your request to it. The data plane is also responsible for enforcing the throttle limits.

When you make an Invoke API call, it transits through some or all of the Invoke Data Plane services before reaching an execution environment, where your function code is downloaded and executed.

There are three distinct but related throttle limits which together decide if your invoke request is accepted by the data plane or throttled.

Concurrency

Concurrent means “existing, happening, or done at the same time”. Accordingly, the Lambda concurrency limit caps the number of invocations that can be in flight at any given time. It is not a rate or transactions per second (TPS) limit in and of itself. The Lambda documentation explains the concept of concurrency visually.

Under the hood, the concurrency limit roughly translates to a limit on the maximum number of execution environments (and thus Firecracker microVMs) that your account can claim at any given point in time. Lambda runs a fleet of multi-tenant bare metal instances, on which Firecracker microVMs are carved out to serve as execution environments for your functions. AWS constantly monitors and scales this fleet based on incoming demand and shares the available capacity fairly among customers.

The concurrency limit helps protect Lambda from a single customer exhausting all the available capacity and causing a denial of service to other customers.

Transactions per second (TPS)

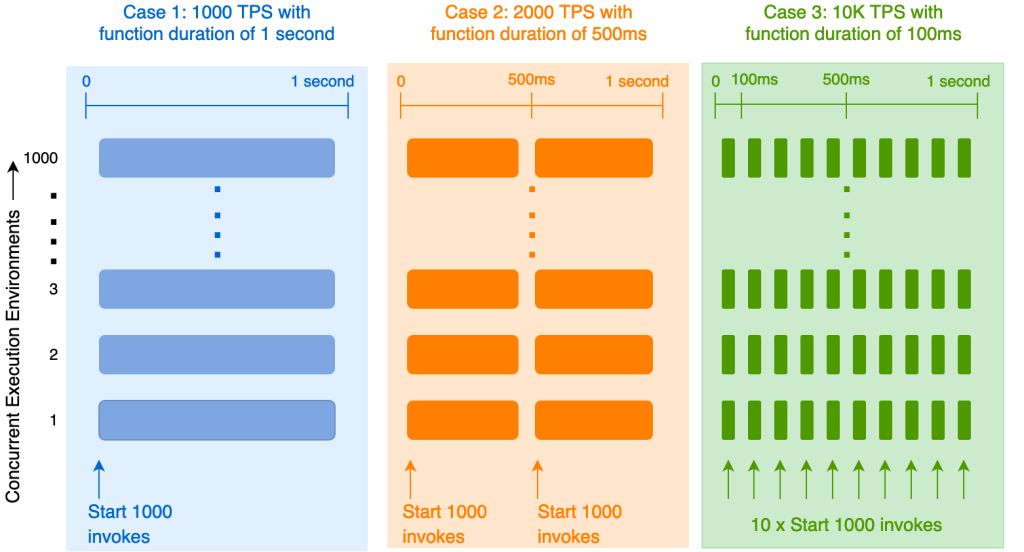

Customers often ask how their concurrency limit translates to TPS. The answer depends on how long your function invocations last.

The diagram above considers three cases, each with a different function invocation duration, but a fixed concurrency limit of 1000. In the first case, invocations have a constant duration of 1 second. This means you can initiate 1000 invokes and claim all 1000 execution environments permitted by your concurrency limit. These execution environments remain busy for the entire second, and you cannot start any more invokes in that second because your concurrency limit prevents you from claiming any more execution environments. So, the TPS you can achieve with a concurrency limit of 1000 and a function duration of 1 second is 1000 TPS.

In case 2, the invocation duration is halved to 500ms, with the same concurrency limit of 1000. You can initiate 1000 concurrent invokes at the start of the second as before. These invokes keep the execution environments busy for the first half of the second. Once finished, you can start an additional 1000 invokes against the same execution environments while still being within your concurrency limit. So, by halving the function duration, you doubled your TPS to 2000.

Similarly, in case 3, if your function duration is 100ms, you can initiate 10 rounds of 1000 invokes each in a second, achieving a TPS of 10K.

Codifying this as an equation, the TPS you can achieve given a concurrency limit is:

TPS = concurrency / function duration in seconds
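As a quick check of the equation, the short Python sketch below reproduces the three cases from the diagram, plus the 1ms extreme. The function name `max_tps` is hypothetical and chosen only for illustration. It models the concurrency limit alone and ignores every other limit.

```python
def max_tps(concurrency: int, duration_seconds: float) -> float:
    """TPS achievable when only the concurrency limit applies.

    Hypothetical helper: it mirrors TPS = concurrency / function duration.
    """
    if duration_seconds <= 0:
        raise ValueError("duration_seconds must be positive")
    return concurrency / duration_seconds


if __name__ == "__main__":
    # Fixed concurrency limit of 1000, varying function duration.
    for duration in (1.0, 0.5, 0.1, 0.001):
        tps = max_tps(1000, duration)
        print(f"duration {duration * 1000:g} ms -> about {tps:,.0f} TPS")
```

Each time the duration is halved, the same execution environments can be reused twice as often within a second, which is why TPS scales inversely with duration.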

Taken to an extreme, for a function duration of only 1ms and a concurrency limit of 1000 (the default limit), an account can drive an invoke TPS of one million. At that duration, every additional unit of concurrency granted via a limit increase implicitly grants an additional 1000 TPS. The high TPS doesn’t require any additional execution environments (Firecracker microVMs), so it’s not problematic from a fleet capacity perspective. However, driving over a million TPS from a single account puts stress on the Invoke Data Plane services. They must also be protected from noisy neighbor impact so that all customers have a fair share of the services’ bandwidth. A concurrency limit alone isn’t sufficient to protect against this. The TPS limit provides this protection.

As of this writing, the invoke TPS is capped at 10 times your concurrency. Added to the previous equation:

TPS = min(10 x concurrency, concurrency / function duration in seconds)

The concurrency factor is common across both terms in the min function, so the key comparison is:

min(10, 1 / function duration in seconds)

If the function duration is exactly 100ms (or 1/10th of a second), both terms in the min function are equal. If the function duration is over 100ms, the second term is lower and TPS is limited as per concurrency/function duration. If the function duration is under 100ms, the first term is lower and TPS is limited as per 10 x concurrency.
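The comparison can be sketched in a few lines of Python. The names `effective_tps`, `limiting_factor`, and `TPS_MULTIPLIER` are hypothetical. The multiplier of 10 is the value quoted in this post "as of this writing" and is kept as a parameter because it may change.

```python
TPS_MULTIPLIER = 10  # assumption: the 10 x cap described in this post


def effective_tps(concurrency: int, duration_seconds: float,
                  multiplier: int = TPS_MULTIPLIER) -> float:
    """min(multiplier x concurrency, concurrency / function duration)."""
    if duration_seconds <= 0:
        raise ValueError("duration_seconds must be positive")
    return min(multiplier * concurrency, concurrency / duration_seconds)


def limiting_factor(duration_seconds: float,
                    multiplier: int = TPS_MULTIPLIER) -> str:
    """Report which term of the min() is the smaller one.

    At exactly 1/multiplier seconds both terms are equal; this sketch
    reports "concurrency" in that case.
    """
    return "tps" if duration_seconds < 1 / multiplier else "concurrency"


if __name__ == "__main__":
    for duration in (0.001, 0.05, 0.5, 1.0):
        print(duration, effective_tps(1000, duration), limiting_factor(duration))
```

With a concurrency limit of 1000, a 1ms function is held to 10,000 TPS by the first term, while a 500ms function is held to 2000 TPS by the second.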

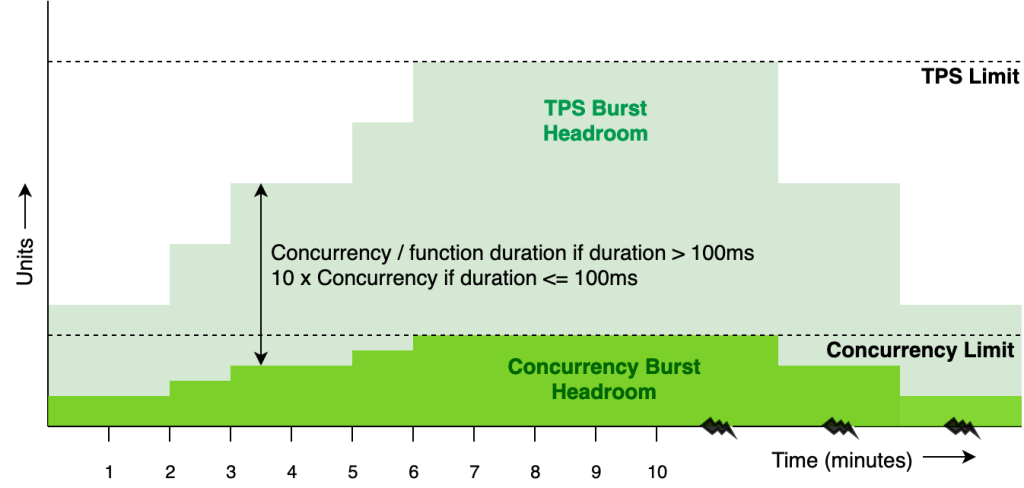

To summarize, the TPS limit exists to protect the Invoke Data Plane from the high churn of short-lived invocations, for which the concurrency limit alone affords too high a TPS. If you drive short invocations of under 100ms, your throughput is capped as though the function duration is 100ms (at 10 x concurrency), as shown in the diagram above. This implies that short-lived invocations may be TPS limited rather than concurrency limited. However, if your function duration is over 100ms, you can effectively ignore the 10 x concurrency TPS limit and calculate your available TPS as concurrency/function duration.

Burst

The third throttle limit is the burst limit. Lambda does not keep execution environments provisioned for your entire concurrency limit at all times. That would be wasteful, especially if usage peaks are transient, as is the case with many workloads. Instead, the service spins up execution environments just-in-time as the invoke arrives, if one doesn’t already exist. Once an execution environment is spun up, it remains “warm” for some period of time and is available to host subsequent invocations of the same function version.

However, if an invoke doesn’t find a warm execution environment, it experiences a “cold start” while we provision a new execution environment. Cold starts involve certain additional operations over and above the warm invoke path, such as downloading your code or container and initializing your application within the execution environment. These initialization operations are typically computationally heavy and so have a lower throughput compared to the warm invoke path. If there are sudden and steep spikes in the number of cold starts, it can put pressure on the invoke services that handle these cold start operations, and also cause undesirable side effects for your application such as increased latencies, reduced cache efficiency and increased fan out on downstream dependencies. The burst limit exists to protect against such surges of cold starts, especially for accounts that have a high concurrency limit. It ensures that the climb up to a high concurrency limit is gradual so as to smooth out the number of cold starts in a burst.

The algorithm used to enforce the burst limit is the Token Bucket rate-limiting algorithm. Consider a bucket that holds tokens. The bucket has a maximum capacity of B tokens (burst). The bucket starts full. Each time you send an invoke request that requires an additional unit of concurrency, it costs a token from the bucket. If the token exists, you are granted the additional concurrency and the token is removed from the bucket. The bucket is refilled at a constant rate of r tokens per minute (rate) until it reaches its maximum capacity.

What this means is that the rate of climb of concurrency is limited to r tokens per minute. Even though the algorithm allows you to collect up to B tokens and burst, you must wait for the bucket to refill before you can burst again, effectively limiting your average rate to r per minute.
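The token bucket described above can be sketched as a small Python class. This is an illustrative model only, not Lambda's actual implementation. The class and method names are hypothetical, and time is advanced explicitly through `refill` so the behavior is easy to follow.

```python
class TokenBucket:
    """Minimal token bucket: capacity B, refilled at r tokens per minute."""

    def __init__(self, capacity: float, refill_per_minute: float):
        self.capacity = capacity                    # B (burst)
        self.refill_per_minute = refill_per_minute  # r (rate)
        self.tokens = float(capacity)               # the bucket starts full

    def refill(self, minutes: float) -> None:
        """Add r tokens per elapsed minute, never exceeding capacity."""
        added = self.refill_per_minute * minutes
        self.tokens = min(self.capacity, self.tokens + added)

    def try_acquire(self, count: int = 1) -> bool:
        """Spend one token per additional unit of concurrency requested.

        Returns False (a burst throttle in this model) if too few tokens remain.
        """
        if count <= self.tokens:
            self.tokens -= count
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket(capacity=1000, refill_per_minute=500)
    print(bucket.try_acquire(1000))  # the initial burst empties the bucket
    print(bucket.try_acquire(1))     # no tokens left, so this is throttled
    bucket.refill(minutes=1)
    print(bucket.tokens)             # one minute of refill at r = 500
```

Because `refill` never exceeds the capacity, idle time lets you save up at most B tokens, and sustained growth in concurrency is bounded by r per minute.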

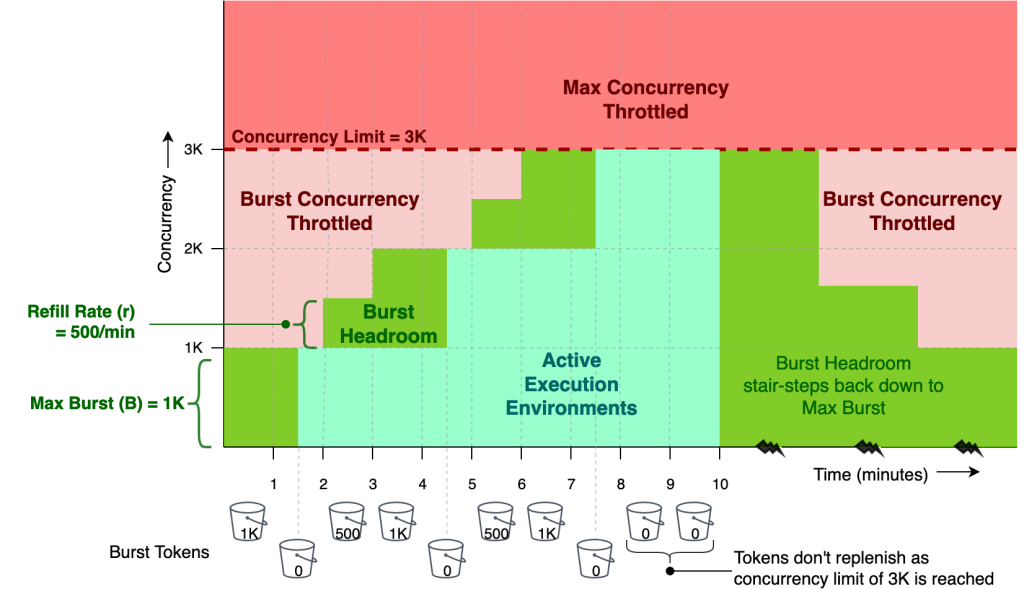

The chart above shows the burst limit in action with a maximum concurrency limit of 3000, a maximum burst (B) of 1000 and a refill rate (r) of 500/minute. The token bucket starts full with 1000 tokens, so the available burst headroom also starts at 1000.

There is a burst of activity between minutes one and two, which consumes all tokens in the bucket and claims all 1000 concurrent execution environments allowed by the burst limit. At this point the bucket is empty and any attempt to claim additional concurrent execution environments is burst throttled, even though the maximum concurrency has not yet been reached.

The token bucket and the burst headroom are replenished at minutes two and three with 500 tokens each minute, bringing the bucket back up to its maximum capacity of 1000. At minute four, there is no additional refill because the bucket is at maximum capacity. Between minutes four and five, there is a second burst of activity, which empties the bucket again and claims an additional 1000 execution environments, bringing the total number of active execution environments to 2000.

The bucket continues to replenish at a rate of 500/minute at minutes five and six. At this point, sufficient tokens have accumulated to cover the entire concurrency limit of 3000, so the bucket isn’t refilled any further, even after the third burst of activity at minute seven. At minute ten, when all the usage ramps down, the available burst headroom gradually stair-steps back down to the initial maximum burst of 1000.
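The walk-through above can be reproduced, up to the third burst, with a small simulation. This is a sketch under stated assumptions rather than Lambda's real algorithm: the function name `simulate_burst` is hypothetical, each burst is modeled as happening right after the refill at its minute mark, and the refill is capped so that active environments plus tokens never exceed the concurrency limit. The ramp-down after minute ten is not modeled.

```python
def simulate_burst(limit, burst, refill_per_minute, requests, minutes):
    """Return (minute, active, tokens, headroom) for minutes 0..minutes.

    requests maps a minute to the extra concurrency requested at that minute.
    Headroom is the concurrency reachable right now: active + tokens.
    """
    active = 0
    tokens = min(burst, limit)  # the bucket starts full
    timeline = []
    for minute in range(minutes + 1):
        if minute > 0:
            # Refill by r, capped by the bucket size B and (modeling
            # assumption) by the concurrency not yet claimed.
            tokens = min(burst, tokens + refill_per_minute, limit - active)
        wanted = requests.get(minute, 0)
        granted = min(wanted, tokens)  # anything beyond this is burst throttled
        active += granted
        tokens -= granted
        timeline.append((minute, active, tokens, active + tokens))
    return timeline


if __name__ == "__main__":
    # Numbers from the chart: limit 3000, B = 1000, r = 500/minute,
    # with bursts of 1000 starting at minutes 1, 4 and 7.
    bursts = {1: 1000, 4: 1000, 7: 1000}
    for row in simulate_burst(3000, 1000, 500, bursts, minutes=8):
        print("minute %d: active=%d tokens=%d headroom=%d" % row)
```

Under these assumptions the headroom climbs in steps of 500 between bursts and stops at 3000, matching the stair-step shape described for the chart.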

The actual numbers for maximum burst and refill rate vary by Region and are subject to change. Please visit the Lambda burst limits page for specific values.

It is important to note that the burst limit isn’t a rate limit on the invoke itself, but a rate limit on how quickly concurrency can rise. However, since invoke TPS is a function of concurrency, it also clamps how quickly TPS can rise (a rate limit for a rate limit). The following chart shows how the TPS burst headroom follows a stair-step pattern similar to that of the concurrency burst headroom, only with a multiplier.

Conclusion

This blog post explains three key throttle limits applied to Lambda invokes: the concurrency limit, the TPS limit, and the burst limit. It outlines the relationship between these limits and how each one protects the system and your workload from noisy neighbors. Equipped with this knowledge, you can better interpret any 429 throttling exceptions you may receive while scaling your applications on Lambda. For more information on getting started with Lambda, visit the Developer Guide.

For more serverless learning resources, visit Serverless Land.