AWS News Blog

EC2 Cluster Compute Instances now Available in US West (Oregon)

We’re adding a new region to our High Performance Computing service on EC2 today, and making a new template available to spin up clusters quickly and easily. But first, a quick recap.

The story of High Performance Computing on EC2

As the name suggests, High Performance Computing (HPC) users have an insatiable desire for compute, but are all too often constrained by the resources available to them, and the scientists and engineers working in this field often have to restrict the scope of their research as a result. So it was no surprise that almost as soon as Amazon EC2 arrived in 2006, we saw customers starting to remove these constraints by taking advantage of the utility computing model: spinning up large scale computing clusters to get their jobs done more quickly, and running simulations with more molecules, higher resolutions or bigger galaxies. And boy, did they fill them up, with everything from Monte Carlo simulations to R&D in the life sciences.

This area was given an extra set of tools in December 2009 with the arrival of the Spot Market, which opened up name-your-price supercomputing: customers were able to run at higher scale for the same price, or choose the cost of their compute runs. Whether you’re running batch processing or Hadoop, this is a big win, but we heard that customers wanted to augment their large scale-out systems with more tightly coupled, parallel jobs.

To that end, in July 2010 we added a new instance type to EC2 which was specifically designed for HPC clusters. The Cluster Compute instance was deployed on a high performance network to help run tightly coupled, parallel computations over non-blocking, full bisection bandwidth 10 Gigabit Ethernet. It ran on hardware virtualization and introduced the concept of a Placement Group, which helps physically locate instances close to one another for low latency communication. We added GPUs to the line up in November 2010, rolled out the second generation in November 2011 (cc2.8xlarge instances, starring the Intel Xeon E5 chip), and rolled into the Top 500 list of the world’s fastest supercomputers at number 42. We’ve seen Cluster Compute instances adopted by some of the finest research organisations in the world, and beyond, by customers with business applications that need high bandwidth networking and significant CPU cycles.

Extended availability

Today we’re expanding the availability of CC2 instances into a third region so that you can take advantage of high performance computing from the West Coast. US West (Oregon) joins US East (Virginia) and EU West (Ireland), allowing you to bring the power of cc2.8xlarge instances closer to your data and colleagues there.

Easier to use

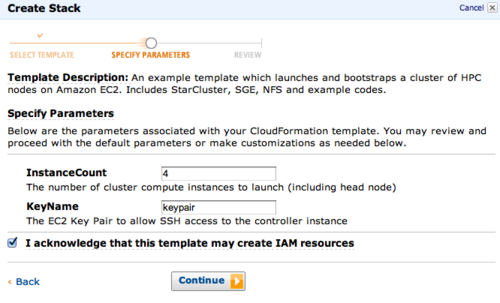

We’re also always looking for ways to make our HPC services easier to use, and to help the scientists and engineers get to their familiar tools quickly, so they can get straight to work. Today we’re adding a new script which will automatically provision, configure and install a fully functioning HPC cluster in just five clicks.

Your first click is here:

This will set up an AWS CloudFormation template in the console. From there you can choose the size of the cluster to spin up, and the name of a keypair to use to log in. Review your settings, click continue, and we’ll provision a fully operational HPC cluster, ready to rock with Open MPI, Open Grid Scheduler/Grid Engine, ATLAS libraries, NFS for data sharing, SciPy, NumPy and IPython (courtesy of the wonderful StarCluster). I’ve also pre-loaded it with some example code and instructions on how to run it.
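If you would rather script those clicks, the same choices (cluster size and keypair) can be passed to CloudFormation through its API. Here is a rough sketch using boto3. The template URL and the parameter names `ClusterSize` and `KeyName` are placeholders I made up for illustration, so check the template itself for the real ones before running anything.

```python
from typing import Any, Dict

# Hypothetical placeholder: substitute the real URL of the cluster template.
TEMPLATE_URL = "https://example.com/hpc-cluster.template"


def build_stack_request(stack_name: str, cluster_size: int, key_name: str) -> Dict[str, Any]:
    """Build the keyword arguments for CloudFormation's create_stack call.

    The parameter keys "ClusterSize" and "KeyName" are assumed names;
    the actual template may call them something else.
    """
    if cluster_size < 1:
        raise ValueError("cluster_size must be at least 1")
    return {
        "StackName": stack_name,
        "TemplateURL": TEMPLATE_URL,
        "Parameters": [
            # CloudFormation parameter values are always passed as strings.
            {"ParameterKey": "ClusterSize", "ParameterValue": str(cluster_size)},
            {"ParameterKey": "KeyName", "ParameterValue": key_name},
        ],
    }


def launch_cluster(stack_name: str, cluster_size: int, key_name: str) -> str:
    """Create the stack in US West (Oregon) and return its stack ID."""
    import boto3  # imported here so the request builder works without boto3 installed

    cfn = boto3.client("cloudformation", region_name="us-west-2")
    response = cfn.create_stack(**build_stack_request(stack_name, cluster_size, key_name))
    return response["StackId"]
```

Calling `launch_cluster("hpc-demo", 4, "my-keypair")` would then request a four node cluster, assuming your AWS credentials are configured and the placeholders above have been replaced.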

Once the stack is up, you’ll see the connection details in the ‘Outputs’ tab: you can connect directly via SSH, or jump to a web page with more details for running parallel tasks over MPI, or via the scheduler. You can also scale your cluster up and down elastically using StarCluster.
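The values shown in the ‘Outputs’ tab are also available programmatically, which is handy if you want to script the SSH step. A minimal sketch, again with boto3: the stack name is whatever you chose at launch, and the output key names depend on the template, so none are assumed here.

```python
from typing import Any, Dict


def stack_outputs(describe_stacks_response: Dict[str, Any]) -> Dict[str, str]:
    """Flatten the Outputs of the first stack in a describe_stacks response."""
    stacks = describe_stacks_response.get("Stacks", [])
    if not stacks:
        return {}
    # A stack that is still being created may not have an "Outputs" entry yet.
    return {o["OutputKey"]: o["OutputValue"] for o in stacks[0].get("Outputs", [])}


def fetch_outputs(stack_name: str) -> Dict[str, str]:
    """Look up a stack in US West (Oregon) and return its outputs as a dict."""
    import boto3  # imported here so stack_outputs can be used without boto3 installed

    cfn = boto3.client("cloudformation", region_name="us-west-2")
    return stack_outputs(cfn.describe_stacks(StackName=stack_name))
```

Printing the result of `fetch_outputs("hpc-demo")` should list the same connection details you see in the console.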

Give it a try, and let us know how you’re putting all these FLOPS to use.

~ Matt