AWS Marketplace

Autonomously manage release quality for AWS Lambdas and Amazon EKS with Sedai

Testing new code releases can be time-consuming, which makes it hard for teams to focus on innovation and the next build. Autonomous cloud platform solutions such as Sedai can help manage your code deployments so that you can focus on what’s next instead of what’s already been done.

Sedai Release Intelligence provides real-time insight into code releases so developers can quickly view an analysis of deployment performance. The platform automatically detects releases for AWS Lambda and Kubernetes clusters and identifies relative changes in errors, latency, and saturation in varying traffic scenarios. Sedai generates a scorecard that grades overall performance quality so that you can quickly validate whether the deployment succeeded or needs to be rolled back to avoid negatively impacting your customers. This lets you speed up your own continuous integration and continuous delivery (CI/CD) pipeline through autonomous analysis of code releases.

In this blog post, I’ll walk through how to set up Sedai. I’ll also show you how to review release scorecards.

Prerequisites

Sedai is an agent-free software as a service (SaaS) platform that integrates with your existing environments. To get up and running with Release Intelligence, you need the following:

- AWS account. If you are running Amazon Elastic Kubernetes Service (Amazon EKS) clusters, you also need access credentials to add these resources to Sedai.

- Monitoring or application performance management (APM) tools, such as Amazon CloudWatch.

- Subscription to Sedai. Choose the plan that best fits your fleet size. Your Sedai subscription also includes access to other Sedai features.

Solution walkthrough: Autonomously manage release quality for AWS Lambdas and Amazon EKS with Sedai

Connect your environment to Sedai

- To integrate an AWS account or AWS/Kubernetes account, follow Sedai’s support documentation. You will be prompted to add at least one account during initial setup. For each account you integrate, I recommend adding a nickname so you can easily identify resources in Sedai.

- For Lambda and EKS, you will need to first set up IAM for Sedai.

- For EKS, you will also need to enable API access, create a ClusterRole and ClusterRoleBinding, and update the aws-auth ConfigMap.

- Once IAM setup is complete, connect Sedai to your AWS Lambda functions or Amazon EKS clusters.

- Connect Sedai to your preferred monitoring data.

- Wait for the email notification indicating Sedai has finished inferring your entire topology.

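As a rough illustration of the EKS step above, the following Python sketch builds a read-only ClusterRole and a ClusterRoleBinding and prints them as JSON, which `kubectl apply -f` accepts. The role name, group name, and the list of resources and verbs are assumptions made for this example and are not taken from Sedai's documentation; follow Sedai's support documentation for the permissions it actually requires. The group name is the one you would reference for the mapped IAM role in the aws-auth ConfigMap.

```python
import json


def build_rbac_manifests(role_name: str, group_name: str) -> list[dict]:
    """Return a read-only ClusterRole and a ClusterRoleBinding for one group."""
    cluster_role = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRole",
        "metadata": {"name": role_name},
        "rules": [
            {
                # Assumed, illustrative permissions only; replace them with
                # the rules listed in Sedai's documentation.
                "apiGroups": ["", "apps"],
                "resources": ["pods", "deployments"],
                "verbs": ["get", "list", "watch"],
            }
        ],
    }
    binding = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRoleBinding",
        "metadata": {"name": f"{role_name}-binding"},
        "roleRef": {
            "apiGroup": "rbac.authorization.k8s.io",
            "kind": "ClusterRole",
            "name": role_name,
        },
        "subjects": [
            {
                "kind": "Group",
                "name": group_name,
                "apiGroup": "rbac.authorization.k8s.io",
            }
        ],
    }
    return [cluster_role, binding]


if __name__ == "__main__":
    # "sedai-viewer" and "sedai-viewers" are hypothetical names.
    manifests = build_rbac_manifests("sedai-viewer", "sedai-viewers")
    # Wrap both objects in a List so they can be applied as one document.
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": manifests}, indent=2))
```

Generating the manifests from one function keeps the role name consistent between the ClusterRole and the binding that refers to it.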

View release scorecards

Sedai automatically enables Release Intelligence for all integrated cloud accounts. This means Sedai autonomously detects your new releases for all Lambda or Kubernetes resources, analyzes their performance, and generates a scorecard.

For Sedai to generate a scorecard, you must deploy a Lambda or EKS application following your normal workflow. Sedai generates a preliminary score once it detects a release and continues to evaluate its performance in low, typical, and/or high traffic scenarios over the next 24 hours for a final score. This score is based on a scale of 1 to 10. A higher score means Sedai did not detect much change from the previous release, while a lower score indicates Sedai identified deviations in performance. View Sedai’s documentation to understand how scores are calculated.
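To make the scale concrete, here is a minimal Python sketch of how a team might triage scorecards once it has the final score. The `Scorecard` class, the `recommend_action` function, and the cutoff of 7.0 are hypothetical and are not part of Sedai's product or API. Because a low score signals a deviation from the previous release rather than a confirmed regression, the sketch flags low scores for review instead of rolling back automatically.

```python
from dataclasses import dataclass


@dataclass
class Scorecard:
    """Hypothetical container for one release score."""
    resource: str
    score: float  # 1 (large deviation) to 10 (little change)


def recommend_action(card: Scorecard, review_below: float = 7.0) -> str:
    """Return "review" for scores under the assumed cutoff, else "keep"."""
    if not 1.0 <= card.score <= 10.0:
        raise ValueError(f"score out of range for {card.resource}: {card.score}")
    return "review" if card.score < review_below else "keep"


if __name__ == "__main__":
    for card in (Scorecard("getQuote", 5.0), Scorecard("otherFunction", 9.5)):
        print(card.resource, recommend_action(card))
```

The cutoff is a team decision; a stricter value sends more releases to a human for inspection.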

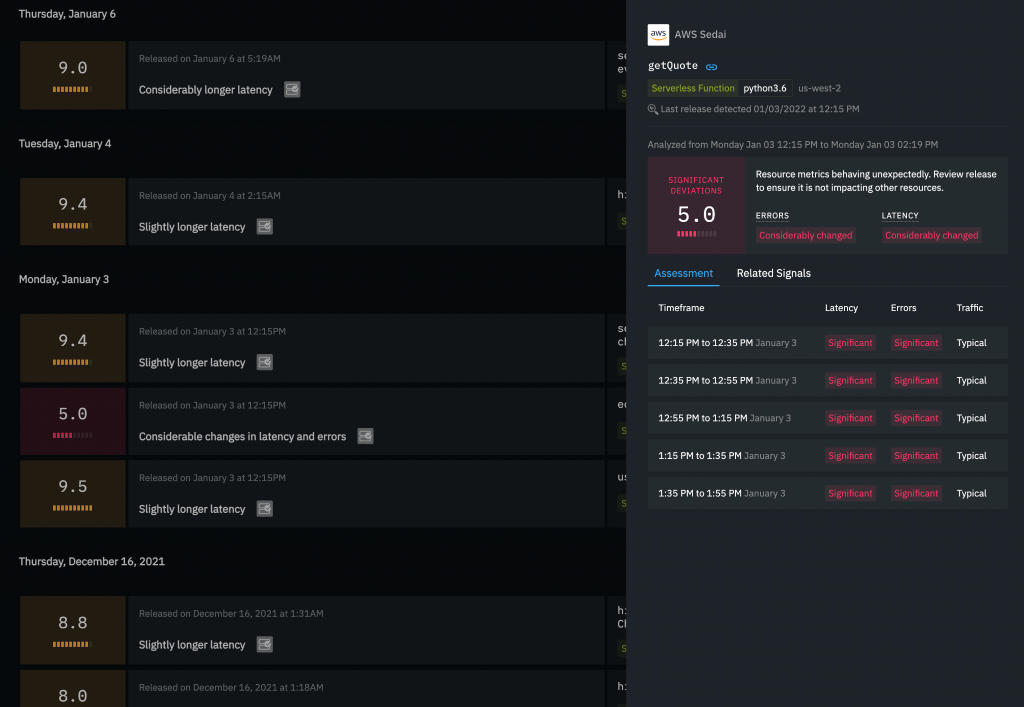

In my example below, I deployed the Lambda function getQuote. To track the release, I do the following:

- Sign in to Sedai. In the far-left vertical list, navigate to Release Intelligence. This page provides an overview of all of your accounts and a breakdown of their total release scorecards from the past 90 days.

- Choose an account and the page refreshes to display a list of all auto-generated scorecards for resources in that account, ordered by most recent. Scorecards are grouped by release date. This list features cards that are broken down into three distinct sections:

  - The far-left portion provides the overall score.

  - The middle portion includes when the release occurred, as well as details about the score.

  - The far-right portion identifies the resource.

- To better understand a score, select the scorecard. This opens the side drawer with details that will help you make informed decisions about leaving the release as-is or rolling it back to address observed issues. The following screenshot shows the score summary for my last deployment of getQuote. In the top portion, it shows an overall score of 5.0 and a notation that resource metrics are behaving unexpectedly. It indicates that errors and latency have Considerably changed.

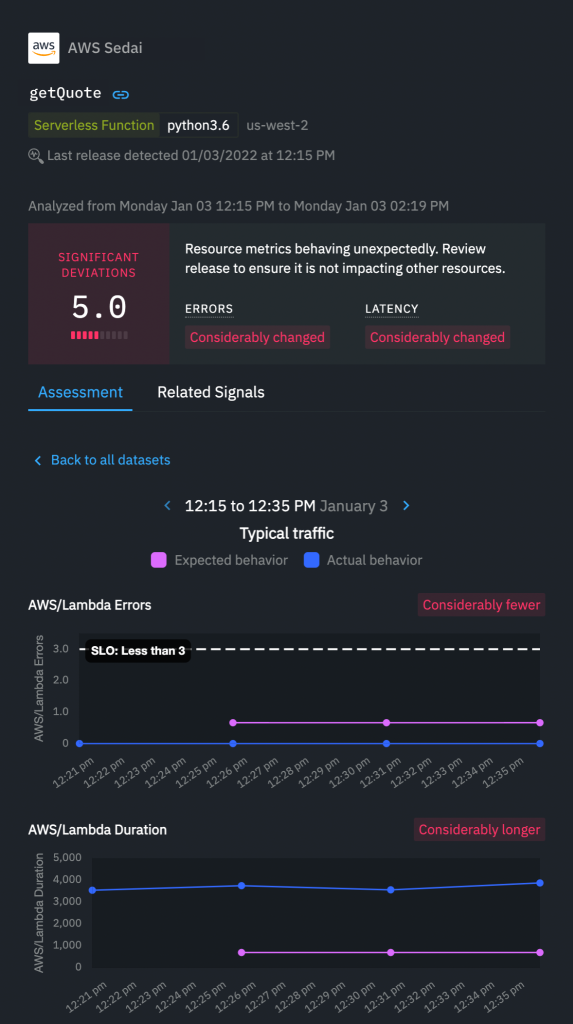

- Underneath that score is an Assessment tab, and underneath that are multiple random datasets that, combined, represent the overall score. For getQuote, I can see that each time period in the analysis yielded significant deviations in latency and errors. You can select any of these datasets for a graphical representation of how the release performed versus how Sedai expected it to behave.

- The following screenshot shows a Significant Deviations score of 5.0 with Errors and Latency notations of Considerably changed. There is also an AWS/Lambda errors chart with the evaluation of Considerably fewer and an AWS/Lambda Duration chart with the evaluation of Considerably longer. This automatic analysis validates my personal goal with my getQuote deployment, which was to reduce errors.
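The kind of comparison behind evaluations such as Considerably fewer or Considerably longer can be sketched as a relative change between a baseline window and a post-release window. This is a simplified illustration, not Sedai's scoring method: the function names, the 25% threshold, and the sample numbers are all invented for the example.

```python
from statistics import mean


def relative_change(baseline: list[float], release: list[float]) -> float:
    """Fractional change of the release mean relative to the baseline mean."""
    base = mean(baseline)
    if base == 0:
        raise ValueError("baseline mean is zero; relative change is undefined")
    return (mean(release) - base) / base


def describe(change: float, more: str, less: str, threshold: float = 0.25) -> str:
    """Label a change using an assumed threshold (25% by default)."""
    if change >= threshold:
        return f"considerably {more}"
    if change <= -threshold:
        return f"considerably {less}"
    return "about the same"


if __name__ == "__main__":
    # Made-up sample values, not data from the getQuote release.
    errors = relative_change([12, 10, 14], [3, 2, 4])
    duration_ms = relative_change([200, 220, 210], [320, 310, 330])
    print("errors:", describe(errors, "more", "fewer"))
    print("duration:", describe(duration_ms, "longer", "shorter"))
```

With these sample values, errors fall by 75% while duration rises by roughly half, which mirrors the trade-off in the scorecard: a release can deviate noticeably and still meet its goal.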

Conclusion

While a microservices-based architecture enables your team to innovate and deploy more frequently, it also adds pressure to ensure you release safely without negatively impacting your customer experience. Sedai can help you manage releases autonomously with in-depth analysis that helps you quickly understand what changed. Sedai’s smart scorecards provide fast insight so that you can validate whether the release is behaving as you expected. Get started in minutes and understand your releases’ performance immediately with a Sedai subscription available in AWS Marketplace.

The content and opinions in this post are those of the third-party author, and AWS is not responsible for the content or accuracy of this post.

About the author

Nikhil Kurup is the VP of Engineering at Sedai, where he builds autonomous management tools for Serverless platforms. His experience as an enterprise architect and data scientist fuels his passion to simplify the difficult things and then automate the simple things.
