AWS Big Data Blog

Building high-quality benchmark tests for Redshift using open-source tools: Best practices

Amazon Redshift is the most popular and fastest cloud data warehouse, offering seamless integration with your data lake, up to three times faster performance, and up to 75% lower cost than any other cloud data warehouse.

When you use Amazon Redshift to scale compute and storage independently, a need arises to profile the compute requirements of various production workloads, so that your Amazon Redshift cluster configuration reflects an appropriately balanced compute layer. Existing Amazon Redshift customers often benchmark scaled-up clusters against various production workloads to accommodate growth in their business. Whether it stems from year-over-year data growth, democratization of data leading to an ever-larger user base, or the onboarding of ever more workloads, the need to scale up with eyes wide open eventually arises as part of the normal data management and analytics lifecycle. In addition, existing customers may also use benchmark tests to proactively monitor a production cluster’s performance in real time.

For prospective Amazon Redshift customers, benchmarking Amazon Redshift is often one of the main components of their evaluation and a key source of insight into the price-to-performance ratio of different Amazon Redshift configurations.

Open-source tools, with their cost-efficiency and vendor neutrality, are often the preferred choice of customers for profiling their production workloads and benchmark tests. However, best practices for using these tools are scarce, possibly resulting in flawed compute profiles, flawed benchmark results, customer frustration, or bloated timelines. This series of posts offers a succinct guide for profiling customer workloads with commonly used open-source tools such as SQLWorkbench, psql, and Apache JMeter.

The first installment of this series discusses some general best practices for benchmarking, and the subsequent installments discuss the different strengths and challenges that may come with different open-source tools.

Although this post focuses on benchmarking best practices using open-source tools with any data/workload combination, another discussion can be had altogether on the benchmark data and workloads that can be employed. For example, benchmark tests could use industry-standard TPC data and workloads, or they could use a customer’s actual production data and workloads. For more information and helpful artifacts on the use of TPC industry-standard data and workloads in benchmark tests on Amazon Redshift, see Rapidly evaluate AWS analytics solutions with Amazon Redshift and the Redshift Gold Standard GitHub. You can also reach out to an Amazon Analytics Specialist Solutions Architect for additional guidance.

One final point before we get started: there is a lot that could be said about benchmarking, more than can be accommodated in a single post. Analytics Specialist Solutions Architects such as myself frequently and happily engage with current and prospective customers to help them evaluate their benchmarking strategy and approach at no charge. I highly recommend you take advantage of that benefit by reaching out to your AWS account SA.

Benchmarking host

Regardless of which open-source tool you use for benchmarking Amazon Redshift, two needs are constant in your benchmark environment:

- A test machine from which to initiate the benchmark tests

- A running Amazon Redshift cluster to serve as the target test cluster

It may seem intuitive to use your remote laptop or an on-premises server as your host for launching the benchmark tests, but there are a number of flaws with that approach.

Firstly, any network traffic between the remote laptop and the target Amazon Redshift cluster (or any AWS Cloud service) must traverse the internet, and internet transmission times can vary tremendously from one test run to another. Internet transmission times introduce so much noise into query runtimes that it becomes very difficult, if not impossible, to achieve high-confidence benchmark results. I recently saw a customer’s query runtimes increase by several orders of magnitude simply because they used their remote laptop as the launch point for their benchmark tests.

In addition, your remote laptop most likely must be connected to a corporate VPN in order to access any AWS Cloud service such as Amazon Redshift. VPNs achieve a secure connection through mechanisms such as encryption and traffic rerouting, which naturally result in slower network speeds. However, the reduction in network speeds can vary tremendously from one test run to another, so VPNs have the potential to introduce a lot of noise in your benchmark results.

Secondly, your remote laptop simply may not be configured with sufficient memory and CPU to efficiently run the benchmark tests. An underpowered test machine could lead to an out-of-memory error for the benchmarking tool or yield longer query runtimes.

Even if your remote laptop has sufficient CPU and memory for running benchmark tests, your laptop probably has many other applications running on it—email, web browser, development IDE, and more—that are all consuming CPU and memory resources at different intervals. If the benchmarking tool has to compete with other heavyweight applications for CPU and memory, it’s very likely that query runtimes will be impacted negatively and in varying degrees from one test run to another, thereby introducing a lot of noise into the benchmark results.

A better alternative to using your remote laptop or an on-premises server as your benchmarking host is to launch a new Amazon Elastic Compute Cloud (Amazon EC2) instance. There are many EC2 instance types to choose from; just be sure to select an instance type with sufficient memory and vCPUs to handle your benchmark tests. It’s recommended to install and launch benchmark tools and scripts from locally attached Amazon EC2 storage as opposed to network-attached storage, such as Amazon Elastic Block Store (Amazon EBS) volumes, to minimize the risk of network speeds impacting benchmark tests. I have seen customers do very well using the m5dn.24xlarge EC2 instance type for their benchmarking host, but scaling up or down is easy on the AWS Cloud, so feel free to start with something smaller or bigger.

One very important note: try as much as possible to keep the EC2 instance in the same AWS Region, VPC, and Availability Zone as the target Amazon Redshift cluster, so that the number of network hops is kept to a minimum and additional security settings are less likely to be needed to connect to the cluster. It’s also highly recommended that you keep any Amazon Simple Storage Service (Amazon S3) data for data lake tests in the same AWS Region as your Amazon Redshift cluster. The following diagram illustrates this architecture.

Number of test iterations

It’s strongly recommended that you conduct at least four iterations (one warm-up iteration and three subsequent iterations) for each test for statistical confidence in the benchmark results. The warm-up iteration is intended to prime the Amazon Redshift cluster just as a real-world cluster would be. For example, Amazon Redshift compiles all queries to machine code to achieve the fastest query performance. More than 99.6% of all real-world queries that run on Amazon Redshift, however, don’t require compilation, because their code already exists in the Amazon Redshift compile cache.

The Amazon Redshift compilation process employs many approaches for efficient and speedy compilation. For example, in the event that Amazon Redshift has never seen a query previously and it requires a compilation, the compilation is scaled to a serverless compilation service beyond the compute resources of the leader node of your Amazon Redshift cluster. The compile cache also survives cluster restarts and software upgrades. In addition, compiled queries are parameterized so that a simple change to the filter value in a where clause still uses the same machine code in the compile cache.

As mentioned, in a real-world Amazon Redshift cluster, less than 1% of queries need to be compiled. However, in a brand-new test cluster, it’s possible that the compile cache could be empty. Performing a warm-up iteration ensures that your benchmark test results reflect real-world production conditions.

The mean and standard deviation of the last three test iterations (that is, excluding the warm-up iteration) offer a statistically confident result to be reported for that given test.
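As a minimal sketch of that reporting step, the following Python snippet discards the warm-up iteration and summarizes the remaining ones. The function name, the `warmup_count` parameter, and the sample timings are illustrative assumptions, not part of any Amazon Redshift tooling.

```python
import statistics


def summarize_iterations(runtimes_sec, warmup_count=1):
    """Return (mean, sample standard deviation) of the measured iterations.

    runtimes_sec: per-iteration timings in seconds, in the order they ran.
    warmup_count: number of leading warm-up iterations to discard
                  (hypothetical parameter for this sketch).
    """
    measured = runtimes_sec[warmup_count:]
    if len(measured) < 2:
        raise ValueError("need at least two measured iterations")
    return statistics.mean(measured), statistics.stdev(measured)


# Made-up timings: one warm-up run followed by three measured runs.
mean_sec, stdev_sec = summarize_iterations([9.0, 4.0, 5.0, 6.0])
print(f"{mean_sec:.2f} s +/- {stdev_sec:.2f} s")
```

A large standard deviation relative to the mean is a hint that noise (network, host contention, caching) is leaking into the test and that more iterations or a cleaner setup may be needed.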

Result set caching

Amazon Redshift caches queries and their result sets by default, so that subsequent iterations of the identical query can use those results if the underlying data hasn’t changed. When Amazon Redshift determines a query is eligible to reuse previously cached results, it bypasses query planning, the workload manager (WLM), and the query execution engine altogether. Cached result rows are returned to the client application immediately, making a query’s runtime more a function of network transmission speeds than of Amazon Redshift processing.

There is clearly a tremendous benefit to leaving result set caching enabled in all your Amazon Redshift clusters. In fact, with the Amazon Redshift Spectrum Request Accelerator feature, even if two data lake queries aren’t identical, but rely on the same aggregated datasets, it’s possible you can use your intermediate or aggregated result sets stored in the Amazon Redshift external data cache. For more information about Spectrum Request Accelerator, see Extending Analytics Beyond the Data Warehouse.

When it comes to benchmarking, however, there may be a valid justification to temporarily disable result set caching so that the Amazon Redshift query processing engine is engaged for every benchmark test. You can disable the result set cache for a test session by running the command SET enable_result_cache_for_session TO off in that session.

For more information, see enable_result_cache_for_session.

You can also disable result set caching at the user level (for example, for all future sessions of a particular user) using the ALTER USER command. For example, to disable result set caching for the demo user, run ALTER USER demo SET enable_result_cache_for_session TO off.
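If your benchmark is driven from a script, the session-level setting can be applied before any test query runs. The following is a minimal sketch that assumes a Python DB-API style cursor (for example, from a PostgreSQL-compatible driver) and benchmark queries that return rows. The helper names are hypothetical.

```python
# Session-level statement that turns off result set caching.
DISABLE_RESULT_CACHE_SQL = "SET enable_result_cache_for_session TO off;"


def disable_result_cache(cursor):
    """Turn off result set caching for the session that owns this cursor."""
    cursor.execute(DISABLE_RESULT_CACHE_SQL)


def run_uncached(cursor, benchmark_queries):
    """Run each benchmark query after disabling the result cache.

    Assumes every entry in benchmark_queries is a SELECT-style statement,
    so that fetchall() is valid after execute().
    """
    disable_result_cache(cursor)
    row_counts = []
    for sql in benchmark_queries:
        cursor.execute(sql)
        rows = cursor.fetchall()  # drain the result set so the query completes
        row_counts.append(len(rows))
    return row_counts
```

Because the setting is scoped to the session, it has to be issued on every new connection that the benchmark tool opens.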

If you choose to disable result set caching in your session, there are two crucial points to keep top of mind:

- The query runtimes observed in your benchmarking tests are very likely to be significantly longer than your real-world production scenario, and shouldn’t be compared to the query runtimes of on-premises systems

- As always with benchmarking, be sure to compare “apples-to-apples” by disabling caching in other benchmark environments and tests

With regard to Spectrum Request Accelerator, it is transparently and automatically enabled when certain conditions are met that make data caching a worthwhile activity for a query. Unlike local result set caching, Spectrum Request Accelerator can’t be disabled.

Query execution time, query runtime, and query throughput

The basic purpose of benchmark tests on a database system is to measure performance with respect to query processing under varying conditions. Three of the most common metrics used to assess performance are query execution time, query runtime, and query throughput. To discuss these three metrics, let’s start with a brief overview of the typical query lifecycle.

The typical query lifecycle consists of many stages, such as query transmission time from the query tool (SQL application) to Amazon Redshift, query plan creation, queuing time, execution time, commit time, result set transmission time, result set processing time by the query tool, and more. The following diagram illustrates the basic query lifecycle.

One of the core principles of benchmark tests is to eliminate any factors or components that aren’t in scope (also called noise). For example, Amazon Redshift has no control over how fast the network transmission speeds are or how efficiently your chosen query tool can process result sets—not to mention that these components can also vary from one test run to another. Such artifacts should be considered out of scope and eliminated as much as possible from benchmark tests aiming to measure, for example, Amazon Redshift’s query processing performance.

Query execution time

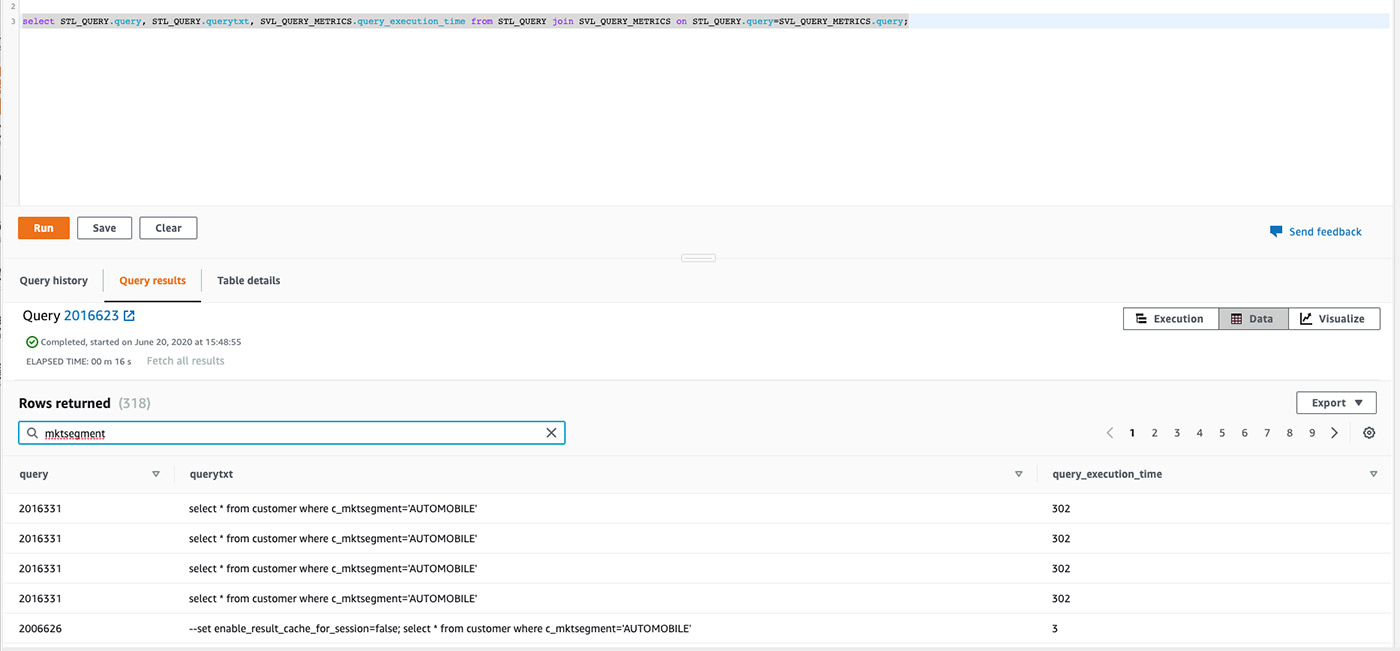

We can aim to do just that by measuring query execution time. This metric represents the amount of time that Amazon Redshift spent actually executing a query, excluding most other components of the query lifecycle such as queuing time and result set transmission time. It’s a great metric for isolating the time attributed to the Amazon Redshift engine’s query processing, and can be obtained from the total_exec_time column (reported in microseconds) of the Amazon Redshift system view STL_WLM_QUERY. You can also add a time filter when querying the view to limit the result set to a specific testing time window.
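The following Python sketch shows one way such a query might look when embedded in a test script. The selected columns exist in STL_WLM_QUERY, but the choice of columns, the `%s` parameter placeholders (which depend on your driver), and the helper name are assumptions for illustration rather than the exact query from the original post.

```python
# Sketch of a query against STL_WLM_QUERY. The two %s placeholders are the
# start and end of the testing time window; placeholder syntax varies by driver.
EXEC_TIME_SQL = """
SELECT query,
       service_class,
       total_queue_time,
       total_exec_time,
       exec_start_time,
       exec_end_time
FROM stl_wlm_query
WHERE exec_start_time BETWEEN %s AND %s
ORDER BY exec_start_time;
"""


def microseconds_to_seconds(value_us):
    """Convert a microsecond value (such as total_exec_time) to seconds."""
    return value_us / 1_000_000


# Example: a total_exec_time of 2,500,000 microseconds is 2.5 seconds.
print(microseconds_to_seconds(2_500_000))
```

Keeping total_queue_time next to total_exec_time makes it easy to confirm that queuing is not being mistaken for slow execution.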

The following screenshot shows the query results.

Query runtime

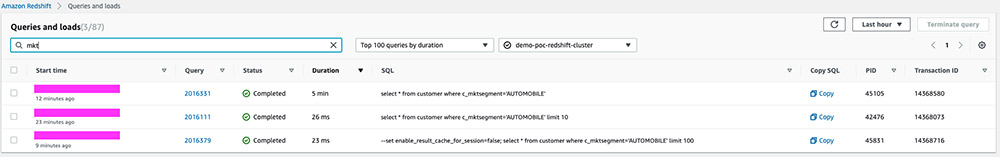

Query runtime, on the other hand, represents the total time for the query to complete its entire lifecycle. It’s an attractive option because it’s the default runtime offered by most query tools, such as the Amazon Redshift console (see the Duration column in the following screenshot).

Although using the query runtime can still yield good-quality benchmark tests, it’s a better practice to rely on query execution time when measuring the performance of individual queries across different benchmarking scenarios and environments.

Query throughput

Query throughput offers much more practical insight into the performance of a data warehouse such as Amazon Redshift than the timing of any individual query.

Query throughput measures the volume of queries that can be run in a period of time (usually an hour), and is often stated as x queries per hour. It’s often coupled with queries of varying degrees of complexity (as indicated by their average runtime), such as small, medium, and large queries. You can incorporate the query throughput into a custom testing script or capture it from a testing tool such as Apache JMeter.
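As a rough illustration of the arithmetic, a custom testing script could normalize the number of completed queries to an hourly rate as follows. The function name and the sample numbers are made up for this sketch.

```python
def queries_per_hour(completed_queries, elapsed_seconds):
    """Normalize a count of completed queries to a per-hour rate."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return completed_queries * 3600 / elapsed_seconds


# Made-up example: 450 queries completed in a 15-minute (900-second) test.
print(queries_per_hour(450, 900))  # 1800.0
```

When the workload mixes small, medium, and large queries, computing this rate separately for each class keeps a flood of small queries from hiding a slowdown in the large ones.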

Pause, resume, and snapshots

Whether your benchmark tests are simple enough to be wrapped up in a few hours, or elaborate enough to require a few days or even weeks to complete, you rarely use the Amazon Redshift clusters involved nonstop. To keep costs as lean as possible, it’s highly recommended to pause the clusters (via the Amazon Redshift console) when they’re not expected to be used for several hours in a row (such as overnight or on weekends).

It’s also recommended to keep the clusters used in benchmark testing available for an extended period of time after the benchmark testing is complete, in case additional benchmark scenarios are required. A final snapshot before deleting an Amazon Redshift cluster is one of the easiest and most cost-effective ways to preserve access to the cluster. Pausing a cluster is another way and has the added benefit of allowing the cluster to be more quickly resumed should additional benchmark scenarios arise.
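The console is the simplest route, but the same actions can be scripted. The sketch below assumes a boto3 Amazon Redshift client is passed in (created elsewhere with `boto3.client("redshift")`). The wrapper function names and the identifiers in the usage comment are hypothetical.

```python
def pause_for_idle_period(redshift_client, cluster_id):
    """Pause the cluster while no benchmark tests are scheduled."""
    redshift_client.pause_cluster(ClusterIdentifier=cluster_id)


def resume_for_testing(redshift_client, cluster_id):
    """Resume a paused cluster before the next round of tests."""
    redshift_client.resume_cluster(ClusterIdentifier=cluster_id)


def delete_with_final_snapshot(redshift_client, cluster_id, snapshot_id):
    """Delete the cluster but keep a final snapshot to restore from later."""
    redshift_client.delete_cluster(
        ClusterIdentifier=cluster_id,
        SkipFinalClusterSnapshot=False,
        FinalClusterSnapshotIdentifier=snapshot_id,
    )


# Usage (requires boto3 and AWS credentials; identifiers are placeholders):
#   import boto3
#   client = boto3.client("redshift")
#   pause_for_idle_period(client, "benchmark-cluster")
```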

Cluster resize

It’s quite common to run a series of benchmark tests on different cluster configurations by doubling the number of nodes in the baseline cluster. Amazon Redshift offers two ways to resize a cluster: elastic resize and classic resize.

Most customers find it easiest to use the elastic resize approach, which resizes the Amazon Redshift cluster within minutes by redistributing the cluster’s existing slices (logical virtual nodes) onto more cluster nodes. For example, if a cluster has 10 cluster nodes that have a default of 16 slices per node (16 slices x 10 cluster nodes = 160 slices total in the cluster), and it’s resized to 20 cluster nodes using the elastic resize approach, the resulting cluster still has 160 slices, but each cluster node only has 8 slices (8 slices x 20 cluster nodes = 160 slices total in the cluster). Because there are fewer slices on each cluster node, each slice in the resized cluster receives more memory and CPU capacity.

Alternatively, if you use the classic resize approach, the resized cluster doubles the number of slices in the cluster, but each slice has the standard memory and CPU capacity (16 slices x 20 cluster nodes = 320 slices total in the cluster). Although the slices have the standard memory and CPU allocations, the increased number of slices provides the opportunity for greater parallelization in the cluster.
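The slice arithmetic from the two examples above can be sketched in a few lines of Python. This is only a model of the numbers used in this post (16 default slices per node, 10 nodes resized to 20). The function name is hypothetical, and the per-node figure is an average.

```python
def slices_after_resize(nodes_before, slices_per_node, nodes_after, method):
    """Return (total slices, average slices per node) after a resize.

    method: "elastic" keeps the existing slices and spreads them over the
            new node count; "classic" gives every node the default slices.
    """
    if method == "elastic":
        total = nodes_before * slices_per_node
    elif method == "classic":
        total = nodes_after * slices_per_node
    else:
        raise ValueError("method must be 'elastic' or 'classic'")
    return total, total / nodes_after


print(slices_after_resize(10, 16, 20, "elastic"))  # (160, 8.0)
print(slices_after_resize(10, 16, 20, "classic"))  # (320, 16.0)
```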

In short, elastic resize results in fewer but more powerful Amazon Redshift slices, whereas classic resize results in more standard-power slices (greater parallelization). Because workloads can benefit differently from each configuration, it’s best to stay consistent with the resize approach you use across your benchmark tests.

Benchmark artifacts

Depending on the purpose of your benchmark tests, your benchmarking approach could be as simple as running five or six long-running or complex BI queries in a query editor against two or three different environments and hand-recording the resulting query runtimes. Or it could be much more elaborate, involving the methodical implementation of hundreds of benchmark tests against 10 or more benchmark environments, with results captured in multiple spreadsheet charts.

Regardless of where your approach falls on the benchmarking spectrum, it’s imperative for the fidelity of your results that you maintain consistent testing methods, data, and queries or workloads across different benchmarking setups and environments. For example, if your goal is to measure the impact of doubling your cluster’s node count, both the base test that runs against x nodes and the experimental test running against 2x nodes should use the identical test tool and configuration, test data, schema, queries, and so on. You should also be consistent with the Amazon Redshift resize approach used (elastic or classic).

Ideally, you should aim to first establish a baseline and change only one variable at a time (such as changing the number of nodes in the cluster) to observe the impact on the benchmark results from just that change. Changing multiple variables simultaneously makes it very difficult, if not impossible, to measure the impact of each individual variable change.
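One lightweight way to enforce this in a scripted test plan is to describe each environment as a set of key-value pairs and check how many of them differ from the baseline. The following sketch uses hypothetical configuration keys and values.

```python
def changed_variables(baseline, experiment):
    """Return the sorted names of settings that differ between two setups."""
    keys = baseline.keys() | experiment.keys()
    return sorted(k for k in keys if baseline.get(k) != experiment.get(k))


# Hypothetical test environments described as plain dictionaries.
baseline = {"node_count": 10, "node_type": "ra3.4xlarge", "wlm": "auto"}
experiment = {"node_count": 20, "node_type": "ra3.4xlarge", "wlm": "auto"}

diff = changed_variables(baseline, experiment)
print(diff)  # ['node_count']
if len(diff) != 1:
    print("Warning: more than one variable changed relative to the baseline")
```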

You could explore the following benchmarking ideas in a benchmark test plan:

- Baseline – This is highly recommended and typically a standard component of any benchmark test

- Linear scalability – The impact on query throughput of increasing or decreasing the Amazon Redshift node count

- Elasticity – Automatic scaling capabilities

- Concurrency – The impact on query throughput of increasing the number of concurrent users

- Different instance types – The query throughput achieved using an Amazon Redshift ra3.4xlarge instance type vs. an ra3.16xlarge instance type, for example

- Data lake – The query throughput of data lake queries

- Workload management optimizations – Switching from auto mode to a custom WLM setup in Amazon Redshift

- Data model optimizations – Using materialized views in Amazon Redshift, Parquet file format in the data lake, and so on

- Load and unload times – The amount of time needed to unload a fact table to Parquet

Conclusion

We discussed a number of recommended best practices for conducting high-quality benchmark tests. Some of these best practices represent core principles that span all the open-source tools discussed (such as consistency in testing methodology).

In the next set of installments of this series, we review the strengths and appropriateness of three popular open-source tools that you can use to conduct benchmark tests. I hope this post has been helpful, and I strongly encourage current and prospective customers to reach out to me or other AWS colleagues if you wish to delve deeper.

About the Author

Asser Moustafa is an Analytics Specialist Solutions Architect at AWS based out of Dallas, TX, USA. He advises customers in the Americas on their Amazon Redshift and data lake architectures and migrations, starting from the POC stage to actual production deployment and maintenance.
