How to rapidly scale your application with ALB on EKS (without losing traffic)

To meet user demand, dynamic HTTP-based applications require constant scaling of Kubernetes pods. For applications exposed through Kubernetes ingress objects, the AWS Application Load Balancer (ALB) automatically distributes incoming traffic across the newly scaled replicas. When Kubernetes applications scale down due to a decline in demand, however, some situations can cause brief interruptions for end users. In this post, we will show you how to create an architecture that allows the application’s resources to scale down gracefully and minimizes user impact.

Achieving graceful shutdown of an application requires a combination of application, Kubernetes, and target group configurations. The AWS Load Balancer Controller supports health check annotations that let the ALB target group health check match the pod readiness probe, so that a pod IP registered as an ALB target is reported healthy only while the pod is ready to receive traffic.

In this post, we will demonstrate how to use a separate application endpoint as an Application Load Balancer health check, along with a Kubernetes readiness probe and a PreStop hook, which together enable graceful application termination. In addition, we will simulate a large-scale load of thousands of concurrent sessions at peak and demonstrate how implementing these steps eliminates the 5xx HTTP errors experienced by end users.

Simulation architecture

The code sample deploys a web application behind an ALB and demonstrates seamless failover between pods during a scale-down event. The app uses a backend database to store the logistics orders. We will create an Amazon Elastic Kubernetes Service (Amazon EKS) cluster, use the VPC CNI for pod networking, and install the AWS Load Balancer Controller add-on. Next, we will deploy a simple Django app that accepts synthetic requests from a load simulator and modifies the ReplicaSet of a Kubernetes deployment. In addition, we will deploy the Cluster Autoscaler, which changes the Auto Scaling group size to suit the needs of the Django app pods. We will monitor the application’s health during the scale-down event.

Source code

We have provided the complete set of deployment artifacts in the aws-samples code repository. The repository also contains instructions for setting up the required infrastructure as well as logistics-db. To get started, deploy the Django Ingress application without health checks, together with the simulator app. Allow the simulator to run for roughly 30 minutes. This should create enough data to build the graphs, as detailed in the following section.

Evaluation

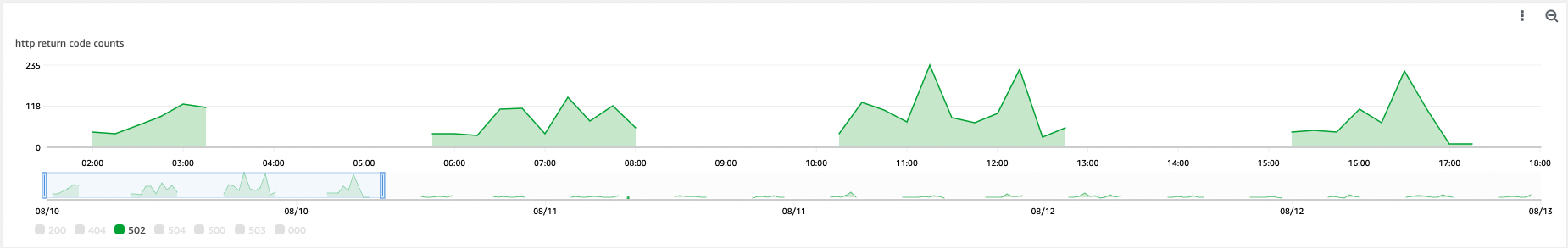

Let’s create an Amazon CloudWatch dashboard in the “appsimulator” namespace to monitor the metrics reported by the simulator. The first graph represents the total number of application pods and load simulator pods. The second and third graphs illustrate the overall number of HTTP error codes during the scale-down period. The remaining graphs depict the number of cluster nodes at a given time. At peak, a total of 623 simulation pods are running on 30 t4g.xlarge EC2 instance nodes. Observe the increasing 5xx HTTP error rate during the scale-down event, which is triggered by fluctuating user demand.

In the next section, we demonstrate how we can use the Kubernetes PreStop hook, readiness probe, and health checks supported by AWS Load Balancer Controller to handle SIGTERM signals caused by pod termination to allow a graceful exit without affecting the end user.

The steps we took to eliminate the errors:

We implemented a new application health functionality with a signal handler. Then we configured the load balancer target group and kubelet to use the new health indication.

We added a separate /logistics/health endpoint and configured the Kubernetes ingress object to use it as the health check endpoint through the alb.ingress.kubernetes.io/healthcheck-path annotation. We also configured the readiness probe with a frequency similar to alb.ingress.kubernetes.io/healthcheck-interval-seconds, so that the container is marked as not available at about the same time on both sides.
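As an illustration of the health indication described above, here is a minimal, framework-agnostic sketch in Python. It is not the sample repository's Django code: the names `HealthState`, `mark_terminating`, `install_handler`, and `health_response` are hypothetical, and the use of SIGTERM as the trigger is an assumption made for this sketch. The idea is only that a signal handler flips a flag and the health endpoint starts answering 500 once the flag is set.

```python
import signal
import threading


class HealthState:
    """Tracks whether the process should still advertise itself as healthy."""

    def __init__(self):
        self._terminating = threading.Event()

    def mark_terminating(self, signum=None, frame=None):
        # The (signum, frame) signature is what signal.signal() passes to a handler.
        self._terminating.set()

    def health_status(self):
        # 200 while serving normally, 500 once shutdown has begun.
        return 500 if self._terminating.is_set() else 200


# Hypothetical module-level state shared by the handler and the health view.
state = HealthState()


def install_handler():
    # Assumption: SIGTERM is the trigger. signal.signal() must be called
    # from the main thread of the main interpreter.
    signal.signal(signal.SIGTERM, state.mark_terminating)


def health_response():
    """What a GET /logistics/health view could return: (status, body)."""
    status = state.health_status()
    return status, "OK" if status == 200 else "terminating"
```

In a Django app, a view for /logistics/health could wrap `health_response()` in an `HttpResponse` with the returned status code. The application keeps serving in-flight requests after the flag is set; only the health endpoint changes its answer.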

Snippet from Kubernetes ingress spec

Snippet from Kubernetes deployment spec

When Kubernetes decides to stop a pod because of node termination or a scale event, the kubelet sends the SIGTERM signal to the pod's containers. The PreStop hook allows you to run a custom command before the SIGTERM is sent to the pod container. The logistics pod then starts to return 500 for GET /logistics/health, which tells the readiness probe that the pod is not ready to receive more requests, and the AWS Load Balancer Controller updates the ALB to remove the unhealthy targets.
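The sequence above only works if the timings line up: the pod has to keep serving long enough for the ALB to notice the failing health check. The following Python sketch shows that arithmetic under stated assumptions. The function names and the example numbers are hypothetical; the post does not give the actual interval, threshold, or hook duration. It relies on two general behaviors: an ALB target is marked unhealthy after a number of consecutive failed checks (the unhealthy threshold), and the Kubernetes termination grace period covers both the PreStop hook and the time the container needs after SIGTERM.

```python
def seconds_until_alb_unhealthy(interval_seconds, unhealthy_threshold):
    """Approximate time for the ALB to mark a target unhealthy.

    One check runs per interval, and the target is marked unhealthy after
    `unhealthy_threshold` consecutive failures. Check timeouts are ignored.
    """
    return interval_seconds * unhealthy_threshold


def min_grace_period(prestop_seconds, app_shutdown_seconds):
    """Lower bound for terminationGracePeriodSeconds.

    The grace period starts counting before the PreStop hook runs, so it
    has to cover the hook plus the application's own shutdown time.
    """
    return prestop_seconds + app_shutdown_seconds


# Hypothetical values, for illustration only.
drain = seconds_until_alb_unhealthy(interval_seconds=10, unhealthy_threshold=2)
grace = min_grace_period(prestop_seconds=drain, app_shutdown_seconds=5)
print(f"keep serving for at least {drain}s; grace period of at least {grace}s")
```

With these example numbers, the pod should keep answering regular requests for about 20 seconds after its health endpoint starts failing, and the grace period should be at least 25 seconds.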

Snippet from Kubernetes deployment spec:

You can now apply the updated Django ingress manifest with the above changes. Allow around thirty minutes for the simulation to produce the load before scaling down the application.

Test results

The number of 504 errors decreased from an average of 340 during the scale-down event to zero, while the number of 502 errors decreased from 200 to 30. The remaining 502 errors were observed by the kubelet, but not by end users accessing the application through the ALB.

Conclusion

This post demonstrates how Amazon EKS and the AWS Load Balancer Controller enable you to manage changing app demand without impacting the end-user experience. By combining the Kubernetes PreStop hook and the readiness probe with a health check endpoint, you can gracefully handle SIGTERM signals for your application. Check out the AWS Load Balancer Controller documentation for the full list of supported annotations. Please visit the AWS Containers Roadmap to provide feedback, suggest new features, and review our roadmaps.