OpenVINO™ AMI now available on AWS for accelerating oil & gas exploration

Guest authored by Dr. Manas Pathak, Global AI Lead for Oil and Gas at Intel & Dr. Ravi Panchumarthy, Machine Learning Engineer at Intel

—

Introduction

Convolutional Neural Networks (CNNs) offer state-of-the-art performance not only for traditional computer vision applications but also for seismic interpretation. Geoscientists can use CNNs for basin-wide, quick-look interpretation of seismic data to identify faults, salt, or facies. This saves time on tedious manual work and thereby reduces the time to first oil. Machine learning is a good tool for basin-wide quick-look interpretation and prospect generation, which cannot be done with the classic tools available: when interpretation is performed manually, only a few interpretations are feasible.

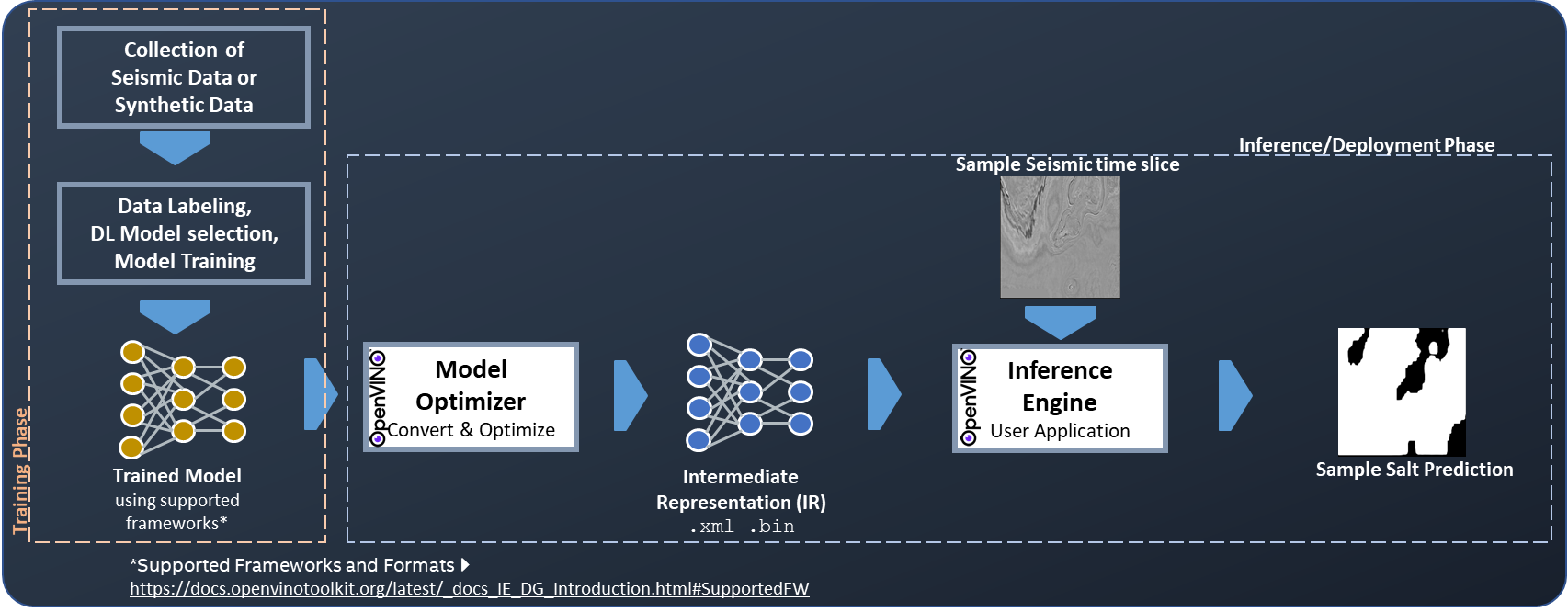

A recent development in this field shows that CNN models trained well on synthetic seismic datasets achieve acceptable accuracy in identifying faults in real datasets. Such solutions accelerate oil and gas exploration because geoscientists do not need to train models from scratch on newly acquired seismic datasets to get quick-look interpretation results, even at basin scale. The Intel® Distribution of OpenVINO™ toolkit can help accelerate the inference pipeline for seismic interpretation; technical details on training and inference can be found in a recently published work. In that work, we showed a workflow (Figure 1) that uses the OpenVINO™ toolkit on a pre-trained model to perform inference faster, without compromising accuracy, on 2nd Generation Intel® Xeon® Scalable Processors (Cascade Lake), which are available in C5 instances on AWS. The latest blog shows an improvement of over 3x with INT8 precision in a fault detection seismic workload by leveraging the OpenVINO™ toolkit and Intel® Deep Learning Boost (Intel® DL Boost). Intel® DL Boost includes new Vector Neural Network Instructions (VNNI), which enable INT8 deep learning inference. (Refer to the Optimization Notice for more information regarding performance and optimization choices in Intel software products.)

Figure 1: End-to-end workflow showing deep learning performed on a seismic dataset. The training/trained model must be in one of the OpenVINO™ toolkit’s supported Frameworks and Formats. The inference in this case is fault detection performed on F3 seismic data.
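To make the idea of INT8 inference more concrete, the following is a minimal sketch of symmetric 8-bit quantization applied to a single dot product, the basic operation inside a convolution. It is only an illustration under simplifying assumptions: the function names `quantize` and `int8_dot` and the sample values are hypothetical, both operands use signed integers with one scale per vector, and this is not how the OpenVINO™ toolkit calibrates or executes a model.

```python
def quantize(values, num_bits=8):
    """Map floats to signed integers using one shared scale (symmetric scheme)."""
    qmax = 2 ** (num_bits - 1) - 1              # 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer and clamp into [-qmax, qmax].
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def int8_dot(a, b):
    """Approximate a float dot product with integer multiply-accumulate."""
    qa, scale_a = quantize(a)
    qb, scale_b = quantize(b)
    # Products of 8-bit values are summed in a wider integer accumulator.
    accumulator = sum(x * y for x, y in zip(qa, qb))
    # A single rescale converts the integer result back to floating point.
    return accumulator * scale_a * scale_b


# Hypothetical weights and activations, for illustration only.
weights = [0.12, -0.50, 0.33, 0.90]
activations = [1.50, 0.25, -0.75, 2.00]

exact = sum(w * x for w, x in zip(weights, activations))
approx = int8_dot(weights, activations)
print(f"float32-style result: {exact:.4f}, INT8-style result: {approx:.4f}")
```

The integer result stays close to the floating-point one while each operand occupies a quarter of the memory of a 32-bit float, which is the trade-off that INT8 inference relies on.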

OpenVINO AMI in AWS Marketplace

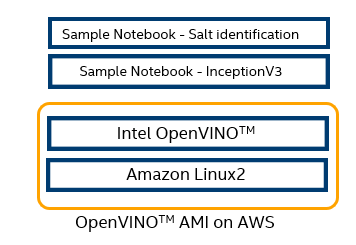

To facilitate accelerated seismic interpretation on AWS, the Intel Energy and AWS Energy teams worked together to create the OpenVINO™ AMI (Amazon Machine Image), based on the Amazon Linux 2 operating system, and published it in AWS Marketplace (Figure 2).

Figure 2: OpenVINO™ AMI offering in AWS Marketplace

Sample Jupyter notebooks are also provided to perform inference with the OpenVINO™ toolkit from a pre-trained model. To find the AMI, search for the name OpenVINO in step 4 of the AWS Quick Start Guide. After you launch an instance from the OpenVINO™ AMI, you can connect to it and use it just as you would any other server. For information about launching, connecting to, and using an instance, see Amazon EC2 instances. For more information on using this AMI, please visit AWS AMI instruction.
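Launching an instance from the AMI can also be scripted. The sketch below uses the `run_instances` call of the boto3 EC2 client and makes several assumptions: the helper names `build_launch_params` and `launch_instance` are hypothetical, the AMI ID and key pair name are placeholders you must supply yourself, `c5.xlarge` and `us-east-1` are example choices, your account is already subscribed to the AMI in AWS Marketplace, and AWS credentials are configured.

```python
def build_launch_params(image_id, key_name, instance_type="c5.xlarge"):
    """Assemble keyword arguments for the EC2 run_instances call."""
    return {
        "ImageId": image_id,            # AMI ID shown for your region in AWS Marketplace
        "InstanceType": instance_type,  # example size from the C5 family (an assumption)
        "KeyName": key_name,            # name of an existing EC2 key pair
        "MinCount": 1,
        "MaxCount": 1,
    }


def launch_instance(image_id, key_name, region="us-east-1"):
    """Start one instance and return its instance ID."""
    import boto3  # imported here so the helper above works without boto3 installed

    ec2 = boto3.client("ec2", region_name=region)
    response = ec2.run_instances(**build_launch_params(image_id, key_name))
    return response["Instances"][0]["InstanceId"]
```

Calling `launch_instance` with your own AMI ID and key pair name starts a single instance, which you can then connect to as described above.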

Figure 3: Architecture diagram showing the OpenVINO™ AMI on EC2 and its samples

The following two sample Jupyter notebooks are provided as references for using the OpenVINO™ AMI on AWS.

- InceptionV3 for general computer vision-based applications

- Salt identification in seismic data. This notebook uses a 3D CNN-based model on data from the F3 Dutch block in the North Sea to identify salt. Salt bodies are important subsurface structures with significant implications for hydrocarbon accumulation and sealing in petroleum reservoirs. On the other hand, if salt bodies are not recognized prior to drilling, they can lead to a number of complications when encountered unexpectedly while drilling the well. The ability to quickly launch OpenVINO™-enabled instances in AWS and perform automatic quick-look seismic interpretation from a pre-trained model will help geoscientists reduce the time to first oil.
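For readers who want a feel for what inference from a pre-trained model involves, here is a minimal sketch. It is not taken from the sample notebooks: it assumes the OpenVINO Python API from release 2023.1 or later (the AMI may ship a different release with a different API), a model already converted to the OpenVINO™ IR format (an `.xml` topology file with a matching `.bin` weights file), and an input batch already shaped for the model. The helper names `ir_files` and `run_inference` are hypothetical.

```python
from pathlib import Path


def ir_files(xml_path):
    """Return the (.xml, .bin) file pair that makes up an IR model."""
    xml = Path(xml_path)
    return xml, xml.with_suffix(".bin")


def run_inference(xml_path, batch, device="CPU"):
    """Load an IR model, compile it for a device, and run one batch."""
    import openvino as ov  # imported lazily; requires the openvino package

    xml, weights = ir_files(xml_path)
    core = ov.Core()
    model = core.read_model(model=str(xml), weights=str(weights))
    compiled = core.compile_model(model, device)
    results = compiled([batch])            # synchronous inference on one batch
    return results[compiled.output(0)]     # first output of the model
```

For a model such as the salt identification network, `batch` would be a NumPy array of seismic sub-volumes in the layout the model expects; that layout depends on the model and is not specified here.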

To access other pre-optimized deep learning models, visit the OpenVINO™ Open Model Zoo repository, which provides more than 100 free pre-trained models to speed up development and production deployment.

—

Notices & Disclaimers

Software and workloads used in performance tests may have been optimized for performance only on Intel microprocessors.

Performance tests, such as SYSmark and MobileMark, are measured using specific computer systems, components, software, operations and functions. Any change to any of those factors may cause the results to vary. You should consult other information and performance tests to assist you in fully evaluating your contemplated purchases, including the performance of that product when combined with other products. For more complete information visit www.intel.com/benchmarks.

Performance results are based on testing as of dates shown in configurations and may not reflect all publicly available updates. See backup for configuration details. No product or component can be absolutely secure.

Your costs and results may vary.

Intel technologies may require enabled hardware, software or service activation.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.

Optimization Notice

Intel’s compilers may or may not optimize to the same degree for non-Intel microprocessors for optimizations that are not unique to Intel microprocessors. These optimizations include SSE2, SSE3, and SSSE3 instruction sets and other optimizations. Intel does not guarantee the availability, functionality, or effectiveness of any optimization on microprocessors not manufactured by Intel. Microprocessor-dependent optimizations in this product are intended for use with Intel microprocessors. Certain optimizations not specific to Intel microarchitecture are reserved for Intel microprocessors. Please refer to the applicable product User and Reference Guides for more information regarding the specific instruction sets covered by this notice.

Configuration

—

Acknowledgment to Intel Contributors

We would like to acknowledge other contributors from Intel who contributed to this work: Flaviano Christian Reyes, Vibhu Bithar, Alexey Gruzdev, Alexey Khorkin, and Louis Desroches.

We also acknowledge Joseph Glover from AWS for his contribution to creating the AMI.

—

Dr. Manas Pathak is the Global AI Lead for Oil and Gas at Intel. He has a Ph.D. in Chemical Engineering and a Master's in Applied Geology. In his Ph.D. research, he used machine learning to optimize oil production.

Dr. Ravi Panchumarthy is a machine learning engineer at Intel. He collaborates with Intel’s customers and partners to build and optimize AI solutions. He also works with cloud service providers to enable Intel’s AI optimizations in cloud instances and services. He has a Ph.D. in computer science and engineering, holds two patents, and has authored several peer-reviewed publications in journals and conferences. In his free time, he enjoys traveling and hiking.