
How Flowplayer Improved Live Video Ingest With AWS Global Accelerator

Flowplayer is an online video platform designed for publishers and the media industry. Founded in 2007, their platform quickly became known as a powerful yet lightweight solution. Rather than concentrating on a single subset of the market, they have designed their solution to suit everything from small, specialized businesses to global-scale media houses.

Originally, Flowplayer only offered their customers a web-based video player. As more customers started to use the video player on their own websites, Flowplayer started to offer additional services that their customers needed:

- Video upload, encoding, and hosting

- Live streaming video ingest, transcoding, and distribution

While building out these additional adjacent services, Flowplayer operated their own data centers. They relied entirely on third-party CDN providers to offer the content delivery back to the end users, as well as to ensure that Flowplayer had strong peering connections into their data centers from those CDNs.

As the user base scaled, the peaks and troughs of utilization became more exaggerated. Within their own data centers this was becoming more of an operational cost than Flowplayer wanted. It quickly became evident that a shift to a cloud-based solution with AWS as the foundation would be much better able to handle the scaling of the user base, and that the scaling of this on-demand capacity could easily occur on a global basis.

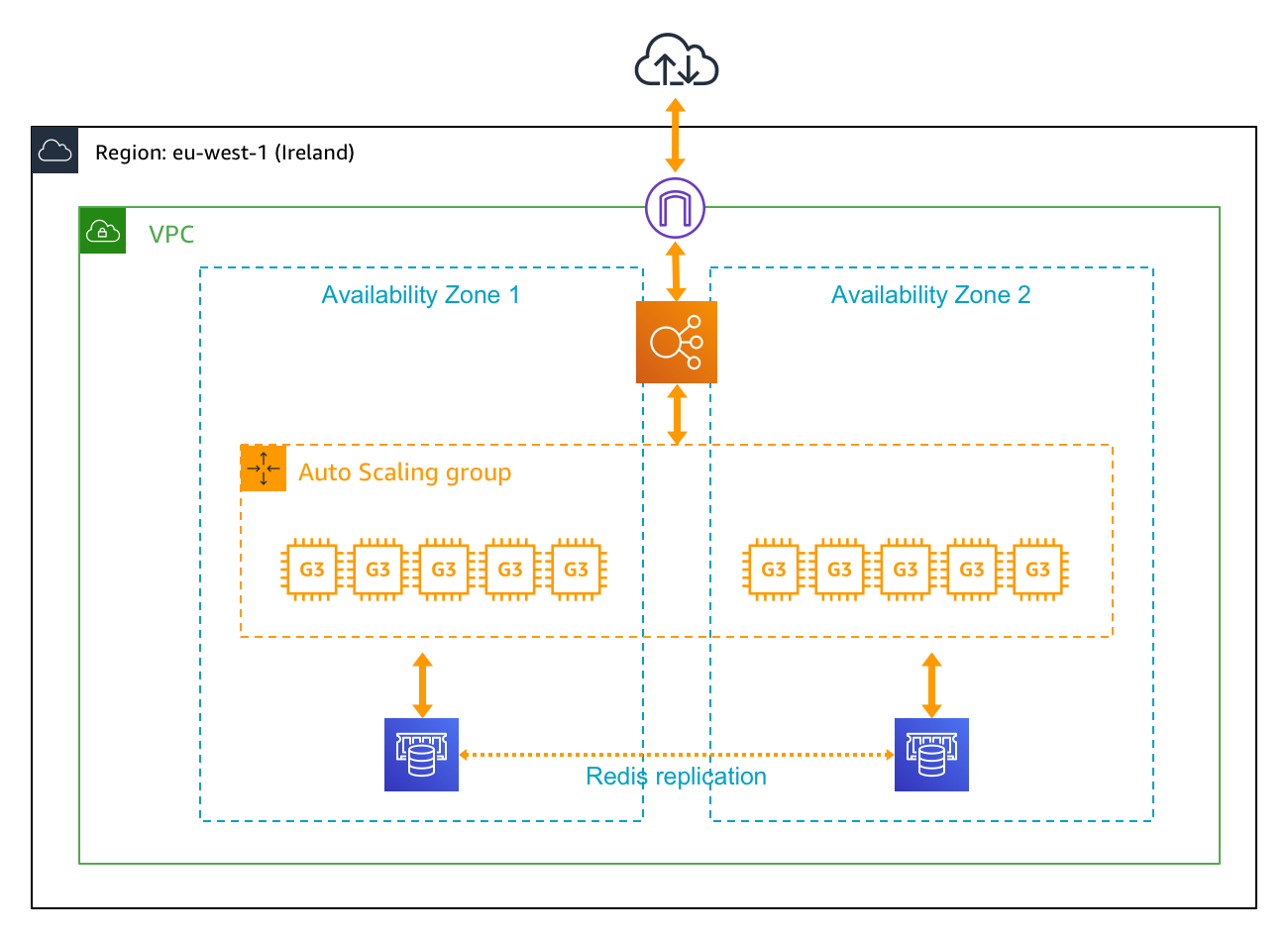

Before the adoption of AWS Global Accelerator, the Flowplayer application for video ingestion and transcoding was deployed into a single AWS Region, as shown in the following diagram:

The multi-tenant cluster scaled up to a maximum of 10 EC2 instances, which were either g3.4xlarge or g3.8xlarge instances. Amazon ElastiCache for Redis provided an internal transcoding map, using multiple nodes for redundancy. Such a cluster could handle up to 200 concurrent ingest processes.

The automatic scaling policy was set to be aggressive against CPU and GPU utilization so that additional cluster nodes would be immediately available to new customer ingest jobs. It would not be unusual for a single customer to request ingest of 20 live football matches from multiple mobile locations, so there could not be any delays due to having to scale.
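The capacity figures above suggest a simple headroom calculation. The following Python sketch is illustrative only. It assumes the 200 concurrent ingests are spread evenly across the 10 instances (the article does not state this, and g3.4xlarge and g3.8xlarge instances probably differ in capacity). The function name `instances_needed` and the default burst of 20 feeds are hypothetical and do not describe Flowplayer's actual scaling policy.

```python
import math

MAX_INSTANCES = 10            # cluster maximum stated in the article
MAX_CONCURRENT_INGESTS = 200  # cluster capacity stated in the article

# Assumption: capacity is divided evenly between instances.
INGESTS_PER_INSTANCE = MAX_CONCURRENT_INGESTS // MAX_INSTANCES


def instances_needed(active_ingests: int, burst: int = 20) -> int:
    """Return the instance count that serves the active ingests plus
    headroom for one burst request, capped at the cluster maximum."""
    if active_ingests < 0 or burst < 0:
        raise ValueError("ingest counts must be non-negative")
    required = math.ceil((active_ingests + burst) / INGESTS_PER_INSTANCE)
    # Keep at least one instance running, never exceed the maximum.
    return min(max(required, 1), MAX_INSTANCES)
```

Keeping room for a whole burst, rather than reacting after it arrives, mirrors the stated goal that a customer starting 20 feeds at once should never wait for new nodes to launch.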

Challenges With Global Live Video Ingest

Flowplayer had several challenges that made it complex to operate a truly global, high-quality, live video ingestion solution:

- Flowplayer needed a truly global solution. Due to the nature of their user base, live ingest feeds could originate from any part of the world at any time.

- Given that engineering resources are scarce, Flowplayer needed a solution that was as automated as possible. The solution had to provide them with the flexibility to update the service dynamically with more transcoding clusters without changing the endpoint to which end users connect.

- High performance was important, so ingest feeds had to be routed to the transcoding cluster closest to the end user.

- The solution had to provide high availability over long periods of time, especially given that live streams could be running for hours or even days.

- Fast failover between different transcoding clusters was important, as the end-to-end service had to provide high availability even for component failures.

- In scenarios such as mobile live broadcasting, the available bandwidth is limited. This made the quality of the underlying network connection used for the upload even more important.

- They needed high levels of capacity at short notice, as many feeds are sent from mobile production setups.

Solution

Designing an architecture for real-time applications such as live streaming can be quite complicated. This is partly due to the time sensitivity of the feed, but also because of the capacity required to provide a good service. These challenges are less pronounced in the video-on-demand workflow, where a delay or problem during the upload process can easily be fixed by restarting the upload. The live use case, however, required an always-on ingest connection to the transcoding cluster that could last for several hours.

Flowplayer had been searching for practical solutions to these problems for some time. They were excited to find out that AWS had a service that was a perfect match to their needs. Putting Global Accelerator in place allowed them to provide the following benefits:

- Higher availability for end users, because their traffic no longer needs to travel across multiple public networks.

- Ability to fail over to different transcoding clusters in either the same or a different AWS Region.

- Increased ingestion bitrates, allowing more simultaneous HD-quality ingest feeds.

- The ability to set a static IP address as the endpoint to the application and then adjust the routing behind that static IP address.

- Automatic routing to the closest healthy endpoint.

The video transcoding application was already built and deployed on AWS, but Flowplayer had no real control over the network paths that their customer traffic would take. By using Global Accelerator, they know that customer traffic now enters the Amazon global network quickly, at an edge location near the customer. They also now have more visibility and control over the ingestion network.

Even a single failure in a live stream can cause a disaster when you have a popular event with the equivalent of a full stadium of viewers (50,000+), all experiencing a black screen simultaneously. That can trigger negative reactions on social media. Using a consistent ingest service and avoiding even one outage per month can have a positive business impact. Live media is one of those services where you just cannot afford to take any chances. Global Accelerator is successfully mitigating this risk for Flowplayer.

With their previous solution, the ingest capacity for a stream often dipped below minimum quality thresholds. For instance, to handle live sports ingestion, they needed a minimum of 4 Mbps for a 30 fps stream of 720p HD-quality video. When bitrates fell below this, the resulting video quality was poor.

With the introduction of Global Accelerator, they have seen higher bitrates and can now maintain more high-quality ingest streams than before. They achieved this through a combination of using the Amazon backbone and automatically routing traffic in a balanced fashion across the whole Flowplayer estate.
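The 4 Mbps floor mentioned above can be expressed as a simple check over measured bitrates. The sketch below is a hypothetical illustration: the function name and the sample values are assumptions, and only the 4 Mbps threshold for 720p video at 30 fps comes from the article.

```python
from typing import Sequence

# Minimum ingest bitrate for a 30 fps, 720p stream, as stated in the article.
MIN_BITRATE_MBPS = 4.0


def below_threshold_fraction(
    samples_mbps: Sequence[float], minimum: float = MIN_BITRATE_MBPS
) -> float:
    """Return the fraction of bitrate samples that fall under the minimum."""
    if not samples_mbps:
        raise ValueError("at least one bitrate sample is required")
    low = sum(1 for sample in samples_mbps if sample < minimum)
    return low / len(samples_mbps)


# Hypothetical measurements, in Mbps, taken at regular intervals.
samples = [4.0, 3.5, 5.0, 2.0]
share_low = below_threshold_fraction(samples)  # half of the samples are too low
```

Tracking the share of low samples per stream, before and after a network change, is one way to quantify the kind of improvement described here.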

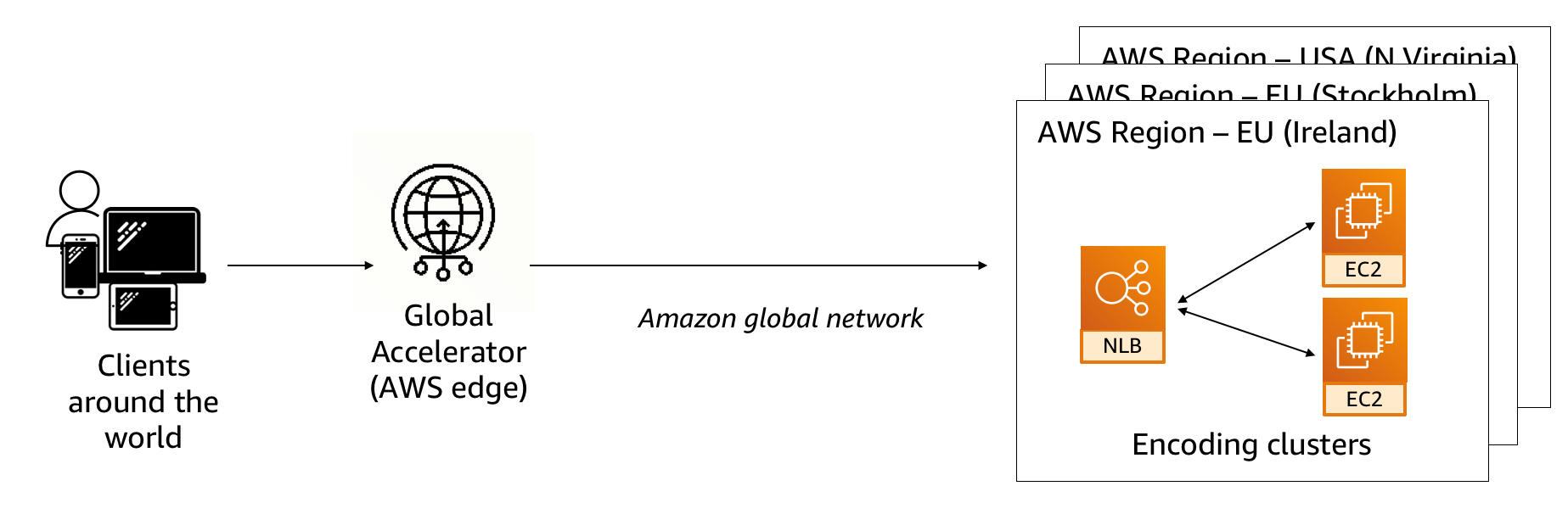

With the adoption of Global Accelerator, Flowplayer has now deployed their application stack in multiple AWS Regions around the world. Each Region contains multiple video ingestion transcoding clusters, fronted by Network Load Balancers, a simplified version of which is shown in the following diagram.

.

By taking advantage of network zones, Flowplayer can provide their customers with two redundant entry points into their application. This is because Global Accelerator provides two static IPv4 addresses per deployed accelerator. Each is linked to a different network zone at the AWS edge locations. The infrastructure deployed for each network zone is isolated from the other zones, thus providing entry point redundancy.

This powerful feature completely removes the need for Flowplayer to worry about endpoint failover. No matter how they update their application behind the scenes, they do not have to worry about updating DNS records or reconfiguring their client applications. They can spend all their time instead working on their platform, delivering additional features to their customer base and leaving the global network routing problem to AWS.

As the Flowplayer application was rolled out into additional AWS Regions, they defined each of these deployments as additional endpoint groups. This allowed them to take advantage of the health check mechanism that is built into AWS Global Accelerator.

For Flowplayer, this meant that if a deployment in one particular Region was declared unhealthy then traffic would automatically be re-routed to the closest healthy endpoint. This has helped increase the overall global availability of the application.
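The failover behavior described above can be modelled in a few lines. This is a conceptual sketch, not Global Accelerator's actual routing logic: the `EndpointGroup` class, the `route` function, and the latency figures are all hypothetical, and the sketch does not model what the service does when no endpoint is healthy.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class EndpointGroup:
    region: str
    latency_ms: float  # assumed latency from the client's edge location
    healthy: bool      # result of the most recent health check


def route(groups: Iterable[EndpointGroup]) -> Optional[EndpointGroup]:
    """Pick the lowest-latency group that passed its health check."""
    healthy = [group for group in groups if group.healthy]
    if not healthy:
        return None  # not modelled in this sketch
    return min(healthy, key=lambda group: group.latency_ms)


# Dublin is closest but unhealthy, so traffic moves to Stockholm.
groups = [
    EndpointGroup("Dublin", 12.0, healthy=False),
    EndpointGroup("Stockholm", 30.0, healthy=True),
    EndpointGroup("North America", 95.0, healthy=True),
]
chosen = route(groups)
```

Because the client keeps sending to the same static IP address throughout, the switch happens without any DNS change or client reconfiguration.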

In addition, server operations take less time within the AWS environment with Global Accelerator. The developers can now deploy code more quickly to the test and production environments, thus boosting overall productivity. Flowplayer estimates that they are saving at least 10 hours per month just by not having to build, operate, monitor, and maintain their own custom, load-balanced ingestion service.

Active Traffic Management

Now that this system is in place, Flowplayer is currently looking at the next phase of their plans. They plan to perform more active management of the levels of traffic sent to each individual AWS Region. Initially, this covers their two chosen Regions in the EU, Dublin and Stockholm, but will later be rolled out to two additional Regions in North America. Each endpoint group within a Global Accelerator deployment can have a traffic dial, which dictates the amount of traffic that a particular endpoint group can receive.

Due to the distributed nature of Flowplayer’s customer base, there are times when specific endpoint groups receive large spikes in traffic. It would be possible to simply scale up the resources within that endpoint group to handle the increased load, but Flowplayer would instead like to send some of that traffic automatically to currently underused resources in a different endpoint group in a different, geographically close AWS Region. For example, if Dublin was fully utilized and Stockholm had spare capacity, then dialing down the Dublin endpoint group to 75% would redirect 25% of the traffic that would normally be destined for Dublin to Stockholm.
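The Dublin and Stockholm example can be worked through numerically. The following is a simplified model under stated assumptions: the function `apply_traffic_dials`, the demand figures, and the single-hop fallback map are hypothetical, and the model deliberately ignores the dial of the group that receives the spilled traffic.

```python
from typing import Dict


def apply_traffic_dials(
    demand: Dict[str, float],
    dials: Dict[str, float],
    fallback: Dict[str, str],
) -> Dict[str, float]:
    """Split each group's nearest-region demand according to its dial.

    demand:   traffic units whose closest endpoint group is the key
    dials:    percentage (0-100) of that traffic the group accepts
    fallback: next-closest group that receives the remainder
    """
    routed = {region: 0.0 for region in set(demand) | set(fallback.values())}
    for region, amount in demand.items():
        kept = amount * dials.get(region, 100.0) / 100.0
        routed[region] += kept
        spilled = amount - kept
        if spilled > 0:
            routed[fallback[region]] += spilled
    return routed


# Hypothetical load: Dublin is busy, Stockholm has spare capacity.
result = apply_traffic_dials(
    demand={"Dublin": 1000.0, "Stockholm": 400.0},
    dials={"Dublin": 75.0, "Stockholm": 100.0},
    fallback={"Dublin": "Stockholm", "Stockholm": "Dublin"},
)
# Dublin keeps 750 units; Stockholm serves its own 400 plus 250 spilled units.
```

In a real deployment the dial is a property of each endpoint group (the Global Accelerator API exposes it as `TrafficDialPercentage`), so an automated controller would adjust that value rather than move traffic itself.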

Summary

Using Global Accelerator, Flowplayer improved the reliability and resilience of live video ingestion on their platform. They were able to perform many global traffic management operations that simply weren’t possible before. By taking advantage of native AWS networking features, they were also able to simplify their overall software development stack. They made efficiency gains in their DevOps processes by simultaneously moving more of their video platform to AWS.

“Our open-source video player for the web allows users to stream video in their pages, from their own video origins. Our video platform is hosted on AWS and uses Global Accelerator to improve the performance and availability of video ingest for our users all around the world.”

— Erik Viklund, Vice President, Dev Ops, Flowplayer