AWS Robotics Blog

Testing a PR2 Robot in a simulated Hospital World

Background

Today, the risk of spreading disease is a key concern in hospitals, where doctors, nurses, and other caregivers are on the front lines helping patients. Hospitals have started using robots in daily operations, such as contactless delivery and room disinfection, to reduce that risk. As the need for robots in healthcare grows, better tools are needed to build, test, and deploy robotics applications quickly and safely.

Introduction

Testing robots in a physical environment is time consuming, and testing new code in a hospital can pose a safety risk because untested code behaves unpredictably. Testing in a virtual environment, or simulated world, increases test coverage, reduces safety risk, and shortens development time. However, creating virtual worlds for simulation is costly, time consuming, and requires specialized 3D modeling skills. For that reason, Amazon Web Services (AWS) has developed a Gazebo simulated Hospital World and published it as open source so that robotics companies in the healthcare industry can more easily test their robots in a simulated hospital environment. In this post, I provide an overview of the hospital and share my experience using it to test a PR2 robot, including the software failures I encountered, which you may find helpful.

To test and demonstrate the features of the Hospital World, I wanted a robot that moves and looks the way I would expect a hospital-based robot to move and look if I were to develop one myself. I researched available options from the open-source community, searching for robots with existing simulation packages, and the one that met these needs was the PR2. The PR2 was developed by Willow Garage alongside ROS as a development and research platform. It’s great for simulation and for running navigation and SLAM, and it’s fun to play with!

PR2 navigating the Hospital World

Overview

Now that we’ve covered why this matters and which robot was chosen for testing, let’s dive into three areas:

- A ‘quick tour’ of the Hospital World

- Testing the PR2 robot in the Hospital World

- Getting started with the Hospital World

The quick tour

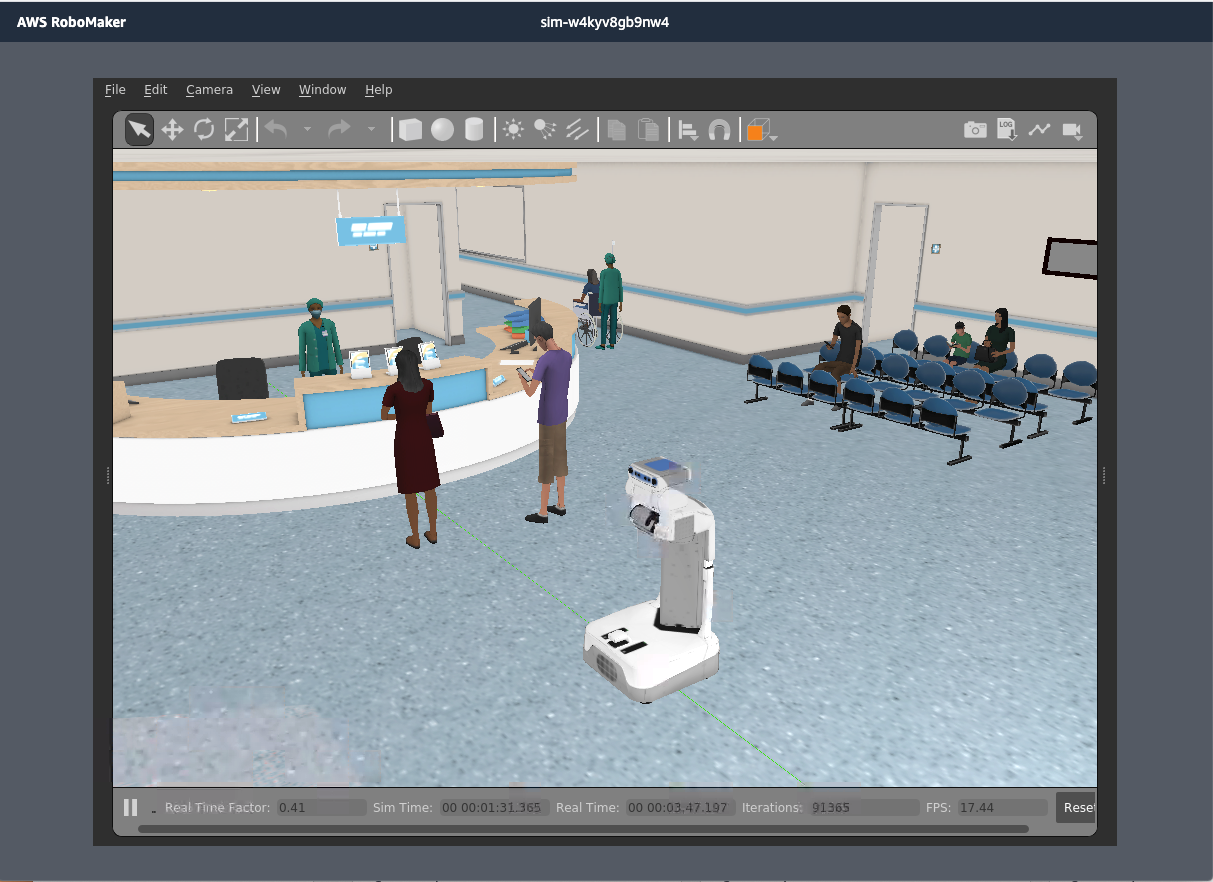

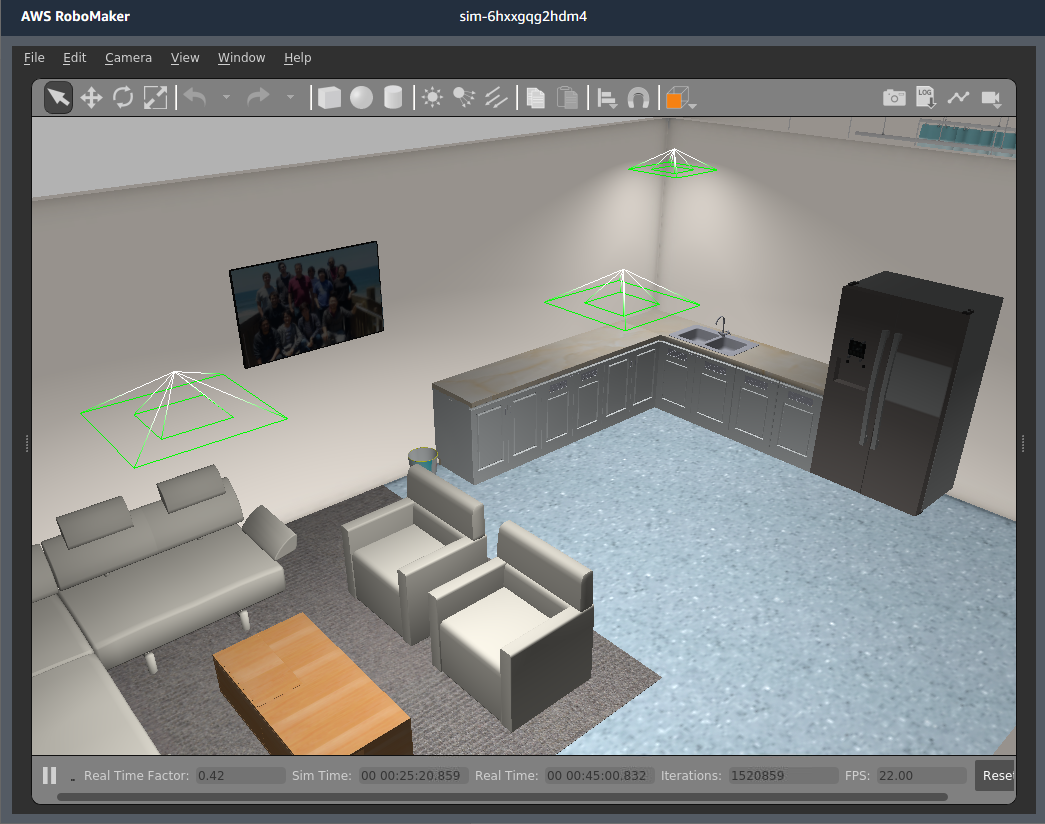

The first thing to point out is the lobby of the Hospital World, which has a front desk, a waiting area, and patients and medical staff nearby.

Figure 1: The PR2 in the lobby with front desk and waiting area

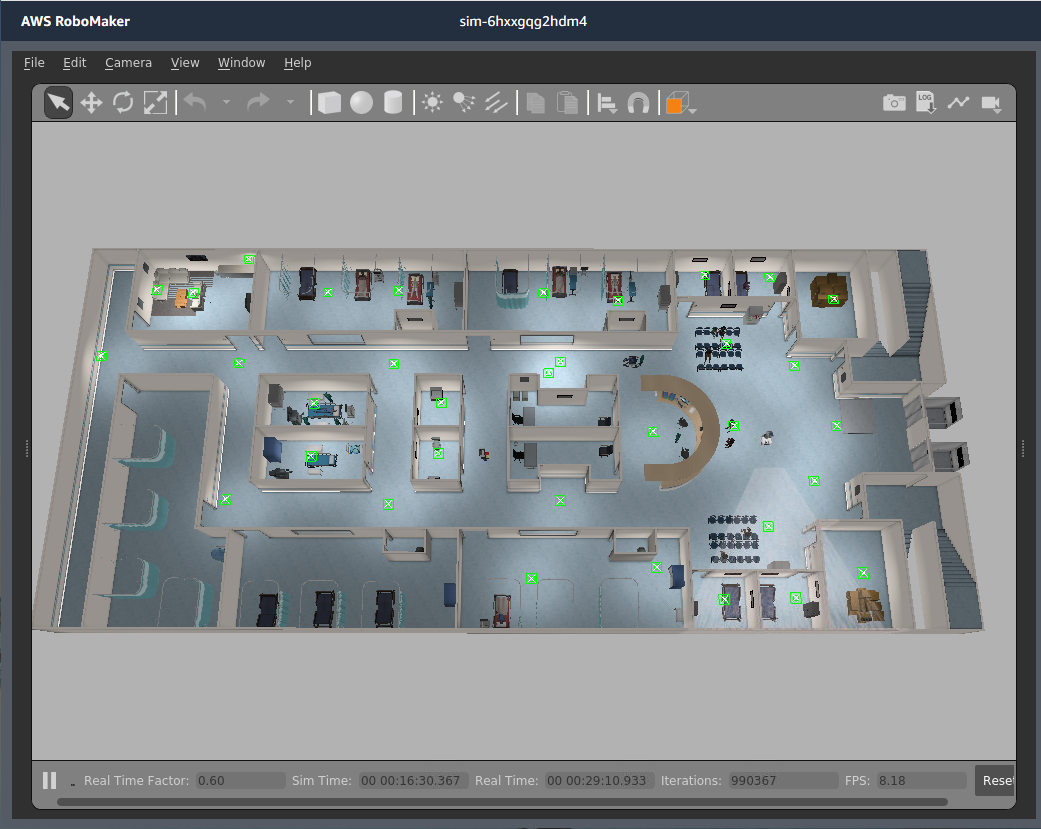

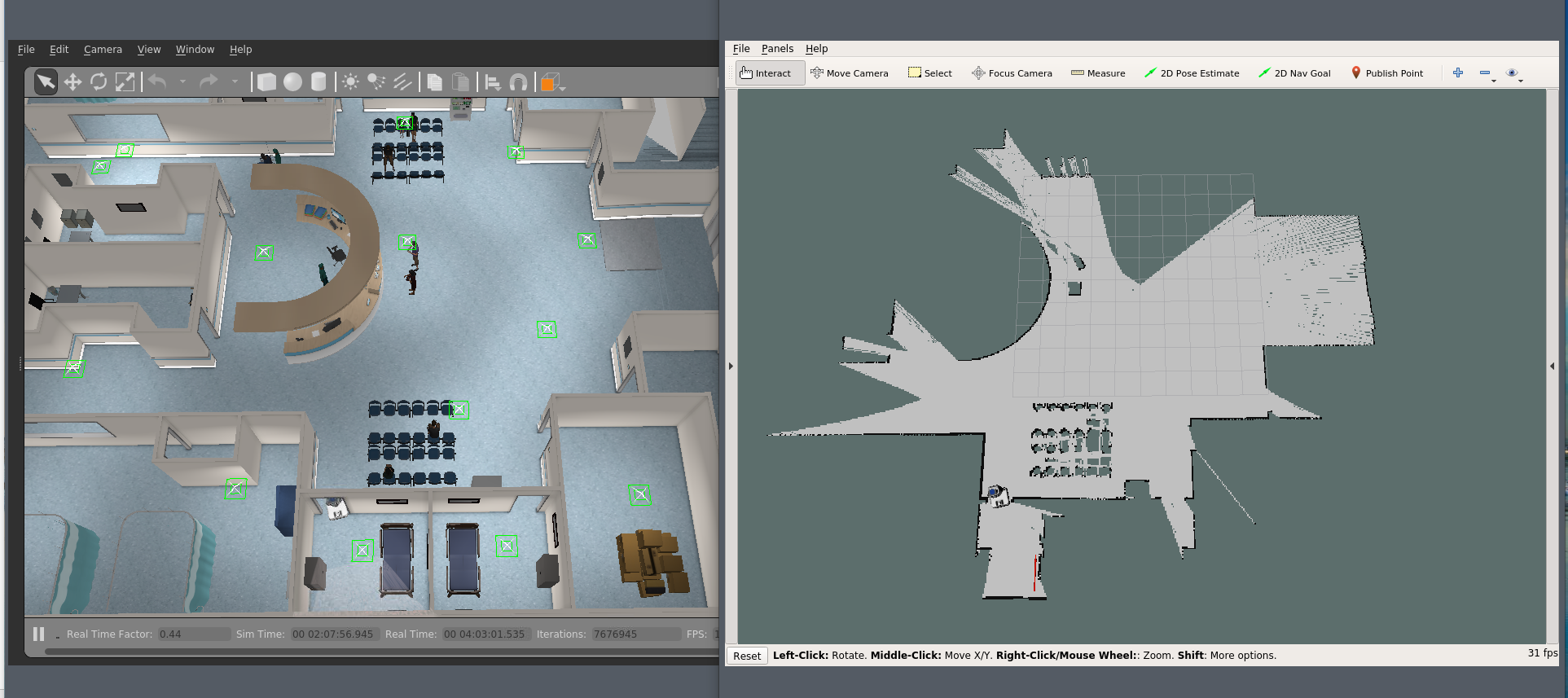

The second thing to note is the size and layout of the hospital.

Figure 2: Top down view of the Hospital World

The hospital is much larger than other AWS open-source worlds, such as the Bookstore World and the Small Warehouse World, and has more rooms, including exam rooms, patient rooms, storage, and a staff break room. Large, complex worlds are great for testing navigation and especially SLAM algorithms. The rooms contain hospital beds, chairs, and other furniture, which make obstacle avoidance tests realistic. In addition, there are stairways, a ramp, steps into the lobby, and elevators, which are all excellent for testing robot perception and navigation behaviors.

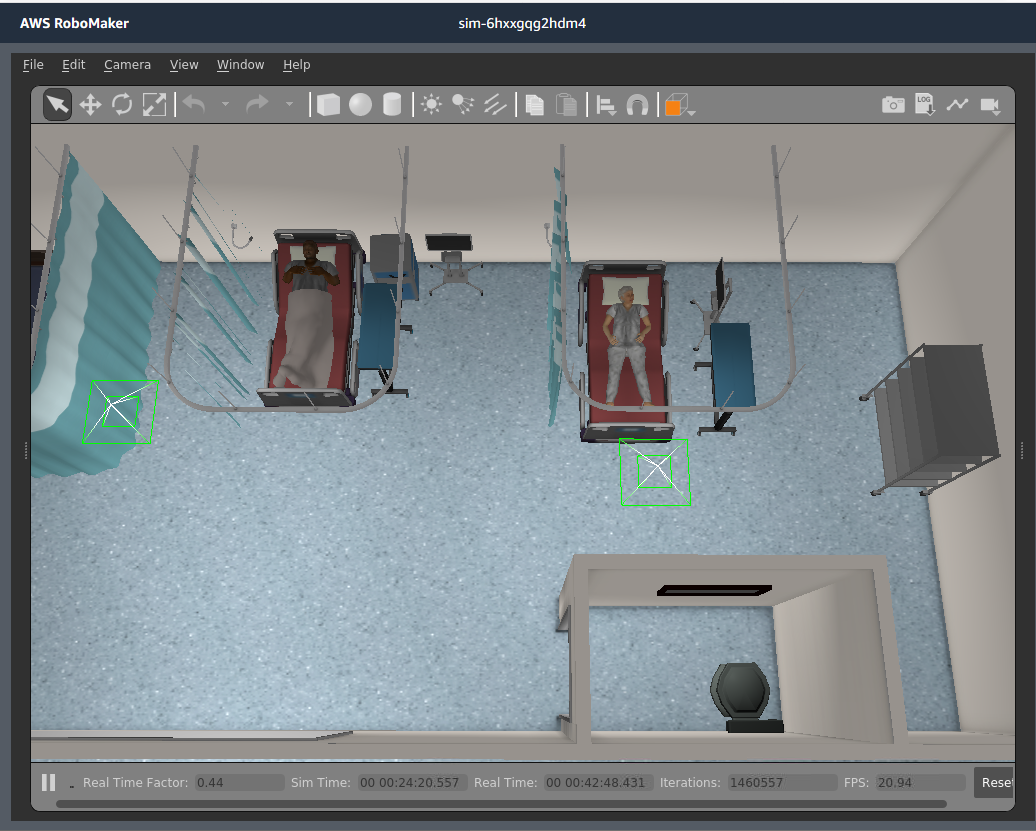

Figure 3: Patient room and hospital staff

Figure 4: Top down view of the patient room

Figure 5: Hospital staff break room

Now that we’ve had the quick tour, I’ll share my experience testing the PR2 in this Hospital World.

Testing the PR2 in the Hospital World

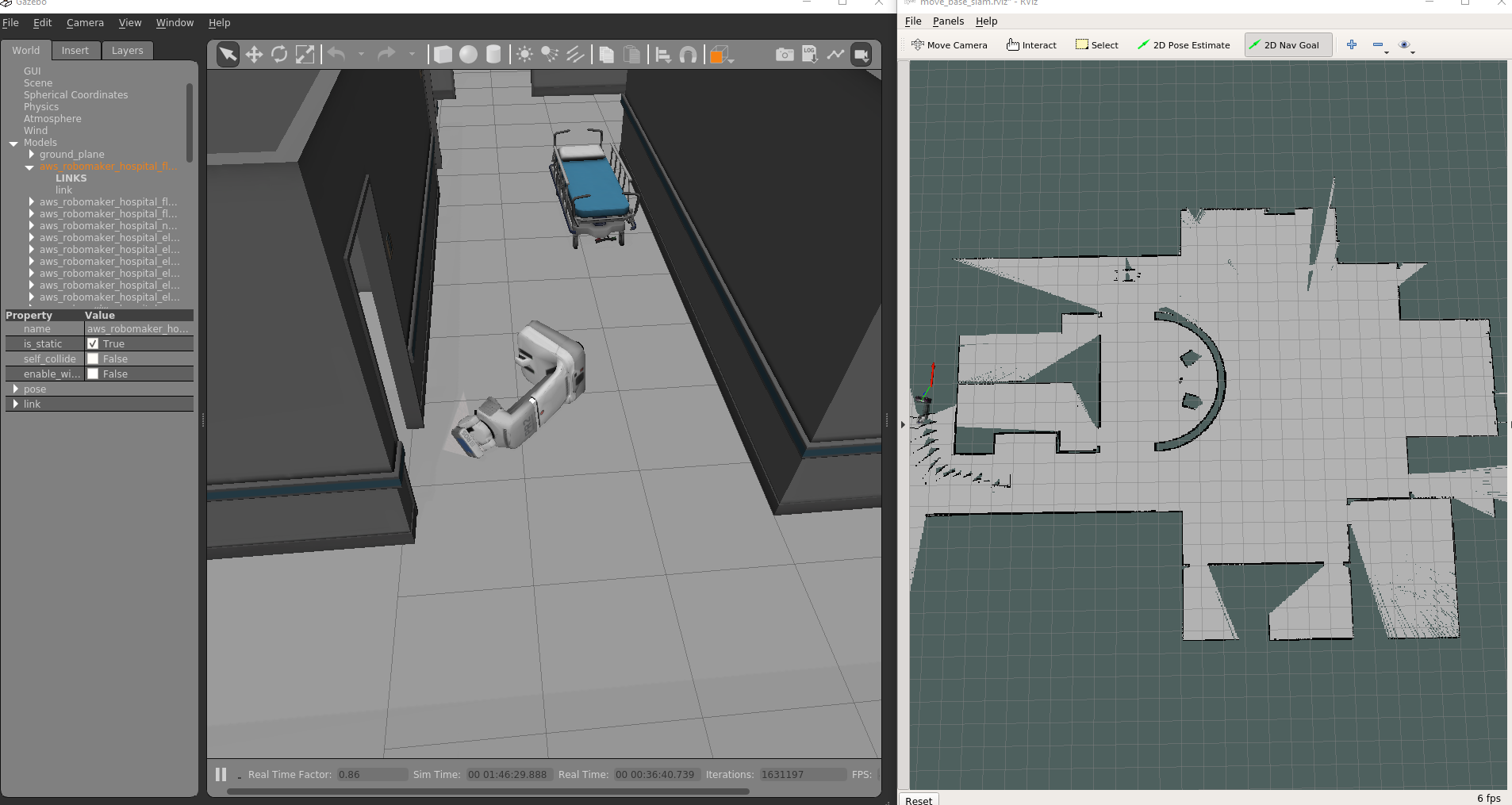

I decided to spawn my PR2 in the lobby of the hospital, which I did using the pr2_no_arms.launch file from the pr2_gazebo ROS package. After spawning the robot, I wanted it to navigate autonomously from point to point. To do that, I needed either an existing map or a map created dynamically by running SLAM. The PR2 has an existing ROS package that runs navigation and SLAM together, pr2_2dnav_slam, which made it simple to get those algorithms running.

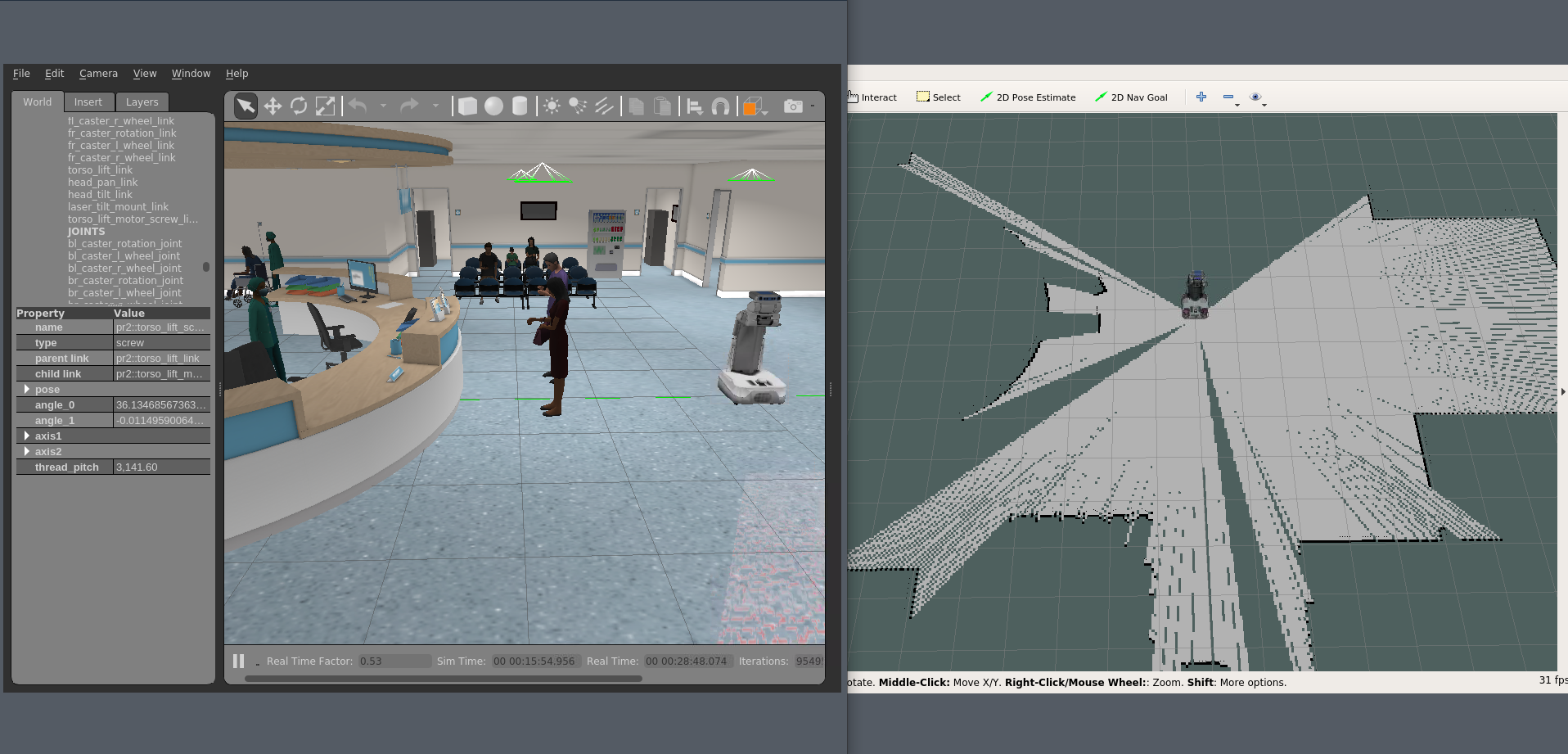

With those packages running, I opened RViz, the ROS visualization tool, to watch the map as it was being generated.

Figure 6: Gazebo and RViz views of PR2 in hospital lobby

Once I had a map showing in RViz, I could send the PR2 navigation goals with the ‘2D Nav Goal’ tool in the GUI. First, I tried to navigate the PR2 into a small exam room directly off the lobby, as a simple test to validate that the navigation and SLAM components were running correctly. The robot made it to the doorway but couldn’t get through it. Was the doorway too narrow for the robot, or was the software not working correctly? I wasn’t sure yet, so I tried navigating to a few other locations to see if those would work.

Figure 7: PR2 stuck in the doorway
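The ‘2D Nav Goal’ tool is a convenience for publishing a goal pose; in ROS 1, RViz sends it by default as a geometry_msgs/PoseStamped on the move_base_simple/goal topic. If you want to script goals instead of clicking, the one non-obvious step is turning a heading (yaw) into the quaternion the message expects. The following is a minimal, ROS-free sketch of that step: `make_goal` is a hypothetical helper that returns a plain dict mirroring the PoseStamped fields, and the `map` frame and coordinates are assumptions for illustration.

```python
import math


def yaw_to_quaternion(yaw):
    """Return (x, y, z, w) for a rotation of `yaw` radians about the z-axis."""
    half = yaw / 2.0
    return (0.0, 0.0, math.sin(half), math.cos(half))


def make_goal(x, y, yaw, frame_id="map"):
    """Hypothetical helper: a dict mirroring the fields of a PoseStamped goal.

    A real ROS node would fill in a geometry_msgs/PoseStamped and publish it;
    this plain-Python version only shows which values go where.
    """
    qx, qy, qz, qw = yaw_to_quaternion(yaw)
    return {
        "header": {"frame_id": frame_id},
        "pose": {
            "position": {"x": x, "y": y, "z": 0.0},
            "orientation": {"x": qx, "y": qy, "z": qz, "w": qw},
        },
    }


if __name__ == "__main__":
    # Made-up goal: 3 m along x, 1.5 m along y, facing +y (90 degrees).
    goal = make_goal(3.0, 1.5, math.pi / 2)
    print(goal["pose"]["orientation"])
```

A planar robot only rotates about z, so the x and y quaternion components stay zero and only z and w carry the heading.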

After navigating back to the starting point in the lobby, I checked whether the robot could navigate behind the desk to map that area. It created a plan and attempted to navigate there, but got stuck trying to turn the corner behind the desk. Now I had a second failure, which made me suspicious of the navigation software.

Figure 8: PR2 stuck on the desk corner

After teleoperating the robot with the ROS pr2_teleop package to get it unstuck, I sent it down a hallway to explore and extend the map. What I hadn’t noticed was a wheeled trolley bed in the hallway, and I watched as the PR2 tried to avoid it. Halfway around the bed, the robot hit its edge and began pushing it. It pushed the bed down the hall and around the corner before losing its balance and falling over.

Figure 9: PR2 fell over after running into a hospital bed

With all of these failures, I was glad to be testing in simulation and not with a physical robot in a real hospital. The expensive robot could have been damaged if this had happened in reality. After multiple failures, I was increasingly confident that something was wrong with the robot’s navigation software.

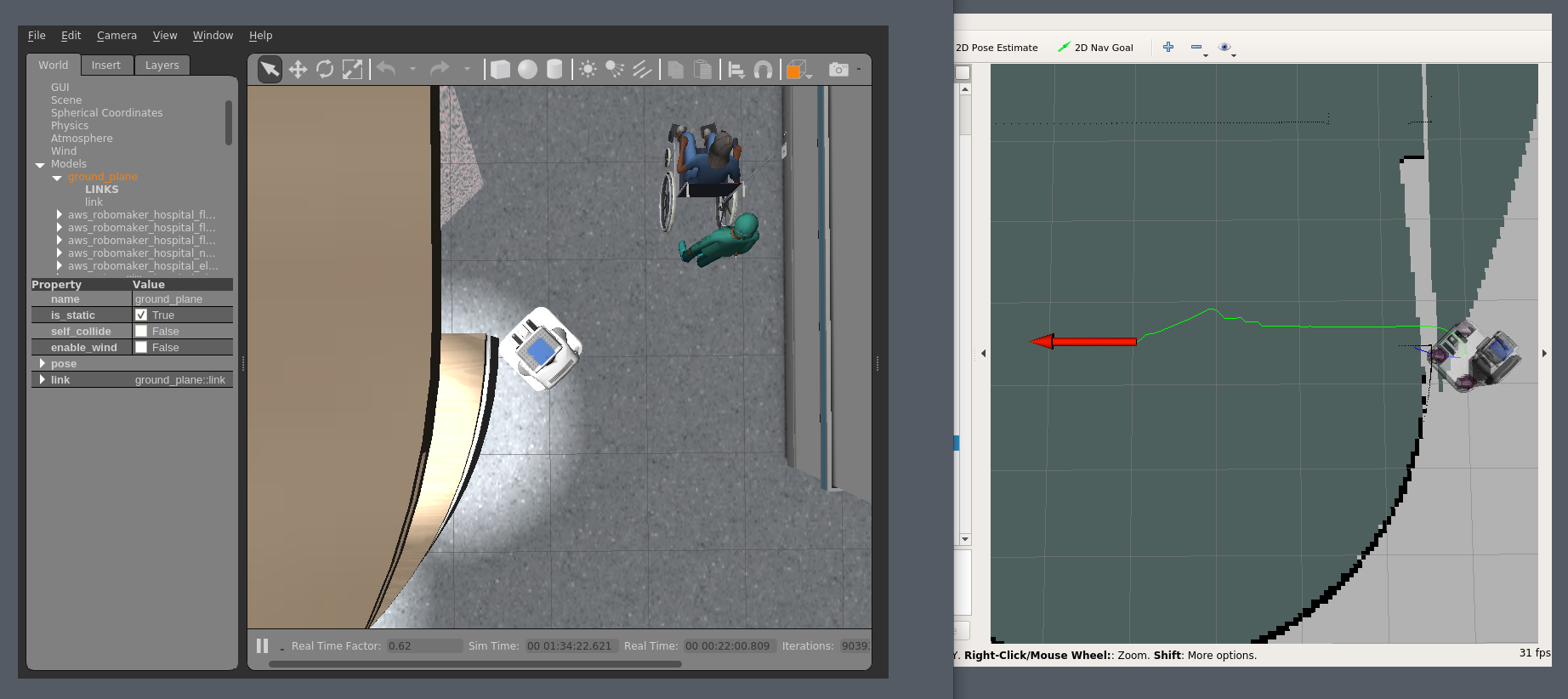

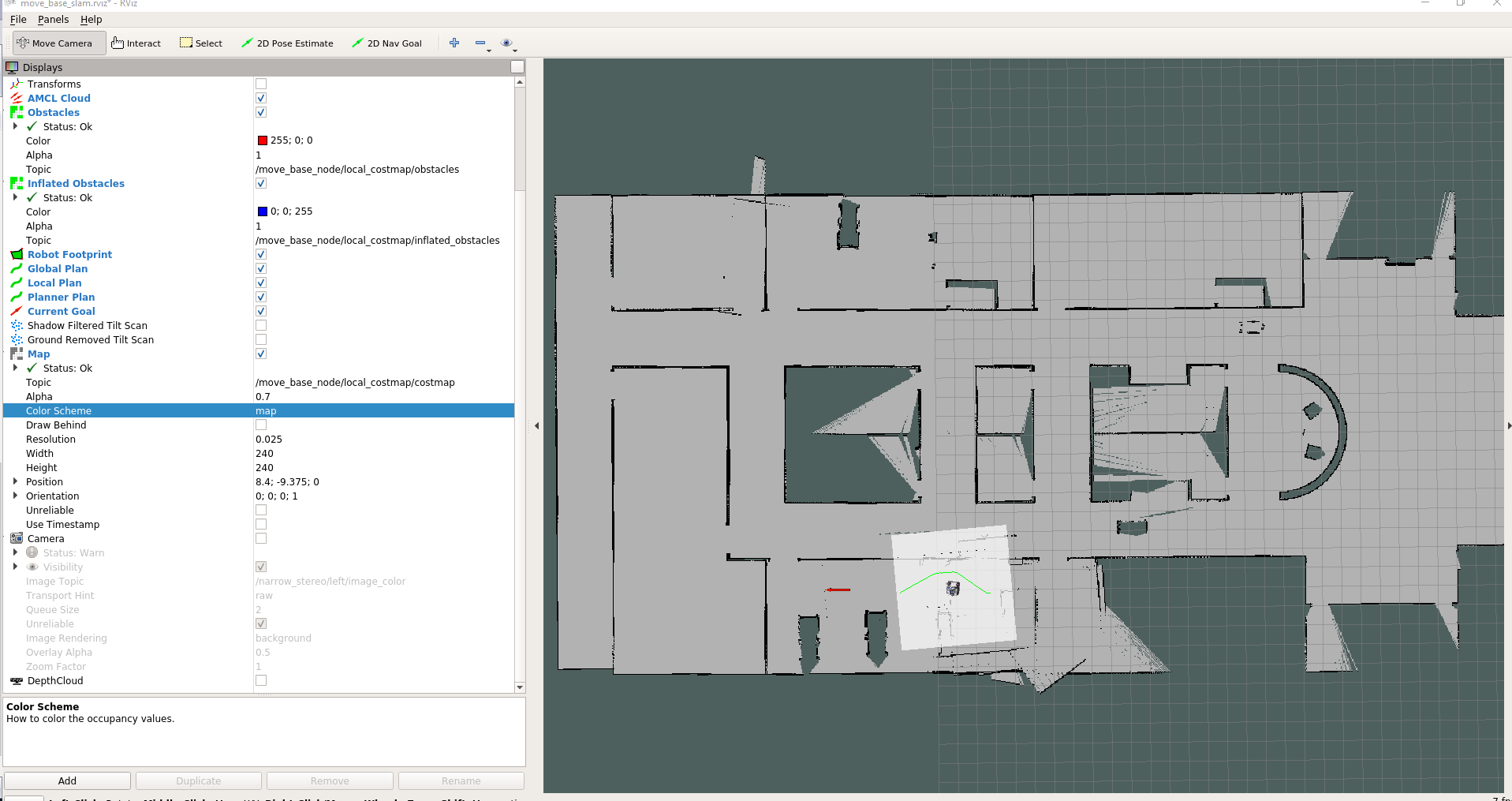

In the ROS navigation stack, obstacle avoidance depends on the local costmap, which the local planner uses to steer around nearby obstacles. I was highly suspicious that the local costmap wasn’t working, so I tried to visualize it in RViz. Sure enough, the local costmap wasn’t showing any obstacles. I kept testing to see whether visualization was the only problem or whether the robot really couldn’t navigate around local obstacles. I ended up crashing the robot again, this time in one of the patient rooms. In RViz, the robot’s local costmap region (the square highlighted on the map) wasn’t inflating the obstacles like it should: it showed up as clear instead of surrounding obstacles with color gradients.

Figure 10: RViz view of the local costmap
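The color gradient RViz draws around obstacles comes from the costmap’s inflation step. As I understand the ROS 1 costmap_2d inflation layer (check the exact behavior against its documentation), a cell on an obstacle gets the lethal cost 254, a cell within the robot’s inscribed radius gets 253, and beyond that the cost decays exponentially with distance, scaled by `cost_scaling_factor`, out to `inflation_radius`. This Python sketch reproduces that cost curve; the radii and scaling factor in the usage section are hypothetical values, not the PR2’s configuration.

```python
import math

# Cost values as used by ROS 1 costmap_2d.
LETHAL_OBSTACLE = 254
INSCRIBED_INFLATED_OBSTACLE = 253
FREE_SPACE = 0


def inflation_cost(distance_m, inscribed_radius_m, inflation_radius_m, cost_scaling_factor):
    """Cost of a cell `distance_m` meters from the nearest obstacle cell."""
    if distance_m == 0.0:
        return LETHAL_OBSTACLE  # the obstacle cell itself
    if distance_m <= inscribed_radius_m:
        # With the robot's center here, its footprint certainly overlaps the obstacle.
        return INSCRIBED_INFLATED_OBSTACLE
    if distance_m > inflation_radius_m:
        return FREE_SPACE  # beyond the inflation radius no cost is added
    factor = math.exp(-cost_scaling_factor * (distance_m - inscribed_radius_m))
    return int((INSCRIBED_INFLATED_OBSTACLE - 1) * factor)


if __name__ == "__main__":
    # Hypothetical tuning values, for illustration only.
    for d in (0.0, 0.2, 0.35, 0.5, 0.6):
        cost = inflation_cost(d, inscribed_radius_m=0.3, inflation_radius_m=0.55,
                              cost_scaling_factor=10.0)
        print(d, cost)
```

A larger `cost_scaling_factor` makes the cost fall off faster, so the planner is willing to pass closer to obstacles; when inflation isn’t happening at all, as in the figure above, every cell near an obstacle reads as free.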

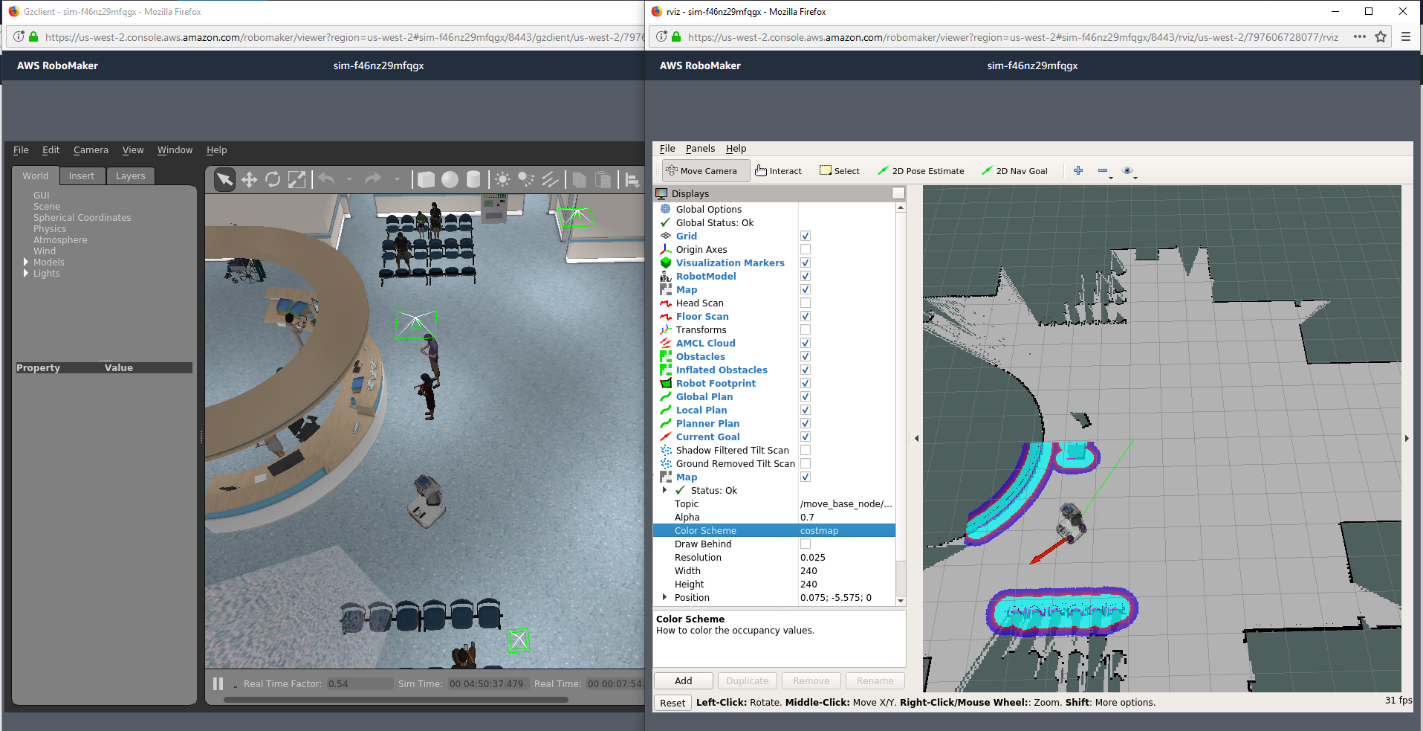

After a little more investigation of the local costmap parameters, I found the problem: one of the local costmap inputs was clearing the costmap data. After a parameter change, the local costmap worked and inflated obstacles, which now showed colorfully in RViz. The PR2 could now navigate around corners and beds without crashing.

Figure 11: Gazebo and RViz view with the local costmap inflating obstacles
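The post doesn’t name the parameter that was at fault, so the following only illustrates the general mechanism. In the costmap_2d obstacle layer, each sensor observation source can be configured to mark (write an obstacle where a ray ends) and to clear (free the cells a ray passes through), and, as I understand it, each update applies clearing before marking. The toy 1-D model below is hypothetical and not the costmap_2d API; it shows that a source configured to clear but not mark leaves the costmap empty even though the sensor sees the obstacle, which matches the blank local costmap in RViz.

```python
FREE, LETHAL = 0, 254


def raytrace_clear(grid, hit_index):
    """Free every cell the ray passes through before its endpoint (1-D toy)."""
    for i in range(min(hit_index, len(grid))):
        grid[i] = FREE


def mark(grid, hit_index):
    """Mark the cell where the ray ended as an obstacle."""
    if 0 <= hit_index < len(grid):
        grid[hit_index] = LETHAL


def update(grid, observations):
    """Apply one update: all clearing rays first, then all marking hits.

    `observations` holds hypothetical (hit_index, marking, clearing) tuples,
    one per sensor reading along the same 1-D ray.
    """
    for hit, _, clearing in observations:
        if clearing:
            raytrace_clear(grid, hit)
    for hit, marking, _ in observations:
        if marking:
            mark(grid, hit)
    return grid


if __name__ == "__main__":
    # One sensor sees a bed 5 cells away; another sees past it to cell 9.
    good = update([FREE] * 10, [(5, True, True), (9, False, True)])
    # Same readings, but the first source has marking switched off.
    bad = update([FREE] * 10, [(5, False, True), (9, False, True)])
    print(good)
    print(bad)
```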

Once everything was working correctly, I wanted to generate a map of the entire hospital and save it to a map file. Mapping the entire hospital by manually sending new goal locations to the PR2 would be time consuming, so I wanted the PR2 to map the Hospital World autonomously. To do this, I added the ROS explore_lite package alongside the navigation and SLAM stack I was already running. With explore_lite running, the PR2 drove around exploring on its own! As I watched it create the map, it was fun to guess where it would go next.
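explore_lite works by driving the robot toward frontiers: boundaries between mapped free space and unknown space in the map that SLAM is building. The sketch below is a simplified illustration of that idea, not explore_lite’s implementation (which groups frontier cells and ranks the groups before choosing a goal). It uses the nav_msgs/OccupancyGrid value convention (-1 unknown, 0 free, 100 occupied) and picks the nearest frontier cell greedily; the grid and robot position are made up.

```python
UNKNOWN, FREE, OCCUPIED = -1, 0, 100


def frontier_cells(grid):
    """Return (row, col) of free cells with at least one unknown 4-neighbor."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break  # one unknown neighbor is enough
    return frontiers


def nearest_frontier(grid, robot):
    """Greedy choice: the frontier cell closest to `robot`, or None when none remain."""
    cells = frontier_cells(grid)
    if not cells:
        return None  # no frontiers left: the reachable map is fully explored
    return min(cells, key=lambda rc: (rc[0] - robot[0]) ** 2 + (rc[1] - robot[1]) ** 2)


if __name__ == "__main__":
    grid = [
        [100, 100, 100, 100, 100],
        [100,   0,   0,   0,  -1],
        [100,   0,   0,   0,  -1],
        [100, 100, 100,  -1,  -1],
    ]
    print(frontier_cells(grid), nearest_frontier(grid, (1, 1)))
```

Exploration ends when no frontiers remain, which is also why a robot that misreads a stairwell as open floor will happily pick a frontier at the top of the stairs.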

While the robot was exploring and mapping, I encountered several more failure modes. First, it fell down the stairs! That was bad, obviously. The sensors didn’t detect the drop-off, and the PR2 mistakenly treated the stairway as a hallway. Testing robots near stairways and ramps is a great use of simulation, so I also tested it on the ramp to the elevators, and found that it got stuck on the transition from the top of the ramp to the elevator platform. I believe this failure indicates a mechanical problem, as the wheels couldn’t climb over the final transition.

Finally, and much more commonly, the PR2 would get stuck oscillating between a doorway it needed to pass through and a goal pose that lay in the opposite direction. For example, if the goal was to the east but the doorway out of its current room was on the west side, the robot oscillated indefinitely. I believe more parameter tuning could reduce this oscillation.
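In the ROS 1 navigation stack, move_base has two parameters aimed at this situation: if the robot hasn’t moved at least `oscillation_distance` meters within `oscillation_timeout` seconds, it runs its recovery behaviors, and a timeout of 0 (the default) disables the check. The plain-Python sketch below is a simplified model of that check, not move_base’s code; it shows how these two values decide when a robot shuffling back and forth is declared stuck. The specific values in the usage section are made up.

```python
import math


class OscillationMonitor:
    """Simplified model of move_base's oscillation check (not its actual code)."""

    def __init__(self, oscillation_timeout, oscillation_distance):
        self.timeout = oscillation_timeout    # seconds; 0 disables the check
        self.distance = oscillation_distance  # meters the robot must travel
        self.anchor = None                    # position at the last reset
        self.last_reset = None                # time of the last reset

    def update(self, t, x, y):
        """Feed the robot position at time t; return True if recovery should run."""
        moved = (self.anchor is None or
                 math.hypot(x - self.anchor[0], y - self.anchor[1]) >= self.distance)
        if moved:
            # Enough progress: move the anchor and restart the timer.
            self.anchor = (x, y)
            self.last_reset = t
            return False
        return self.timeout > 0 and t - self.last_reset > self.timeout


if __name__ == "__main__":
    # A robot rocking 0.3 m back and forth once per second never covers 0.5 m.
    monitor = OscillationMonitor(oscillation_timeout=5.0, oscillation_distance=0.5)
    for t in range(11):
        if monitor.update(float(t), 0.3 * (t % 2), 0.0):
            print("recovery triggered at t =", t)
            break
```

With these values the check first fires at t = 6 s; with the default timeout of 0 it never fires, which would look like the “oscillating indefinitely” behavior described above. Whether recovery actually frees the robot depends on which recovery behaviors are configured.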

Getting started with Hospital World

If you want to try testing the PR2 in the Hospital World yourself, a sample application is available on GitHub. You can get the source code here and follow the instructions in the README.md to run it. The sample application makes it easy to get started whether you’re working in AWS RoboMaker or on a local system.

Conclusion

I hope you found my tour of the Hospital World and the lessons from testing in it interesting. It shows how useful an environment like this can be for finding software problems and even physical and mechanical design issues before testing on hardware. If you want to know more about testing robots in simulation, read our blog post titled Introduction to Automatic Testing of Robotics Applications.

If you have further questions about the Hospital World, the sample application, or AWS RoboMaker, reach out to AWS for further information!