AWS Big Data Blog

Using CombineInputFormat to Combat Hadoop’s Small Files Problem

James Norvell is a Big Data Cloud Support Engineer for AWS

Many Amazon EMR customers have architectures that track events and streams and store the data in S3, which frequently produces many small files. It’s well known that Hadoop doesn’t handle small files well, and the problem can be amplified when migrating from Hadoop 1 to Hadoop 2: jobs that took an acceptable amount of time in Hadoop 1 can suddenly take much longer to complete. Hadoop 2 doesn’t reuse task JVMs as Hadoop 1 did, so each small file is sent as input to a completely new YARN container, which incurs extra overhead and prolongs the entire job. Methods such as aggregating your files with S3DistCp can alleviate the problem, but they add extra steps and time to the job flow.

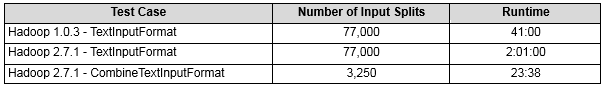

Many applications can avoid this without changing the input by using Hadoop’s built in CombineTextInputFormat. This input format class creates splits composed of multiple files to send to each mapper up to the value of mapreduce.input.fileinputformat.split.maxsize. The following table shows the results of a WordCount application run on an input set of 77,000 small files (< 64MB):
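To make the packing idea concrete, here is a minimal sketch in plain Java. It is not Hadoop’s actual implementation, which also groups blocks by node and rack locality and may size splits differently at the boundaries. The class name SplitPackingSketch, the method packIntoSplits, and the input of 1,000 files of 1 MB each are hypothetical, chosen only for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Simplified illustration only: greedy packing of file sizes into splits.
// Hadoop's CombineFileInputFormat is more involved (locality, block handling).
final class SplitPackingSketch {

    /** Greedily groups file sizes (in bytes) into splits capped at maxSplitBytes. */
    static List<List<Long>> packIntoSplits(long[] fileSizes, long maxSplitBytes) {
        List<List<Long>> splits = new ArrayList<>();
        List<Long> current = new ArrayList<>();
        long currentBytes = 0;
        for (long size : fileSizes) {
            // Close the current split if this file would push it past the cap.
            if (!current.isEmpty() && currentBytes + size > maxSplitBytes) {
                splits.add(current);
                current = new ArrayList<>();
                currentBytes = 0;
            }
            // A file larger than the cap ends up alone in its own oversized split.
            current.add(size);
            currentBytes += size;
        }
        if (!current.isEmpty()) {
            splits.add(current);
        }
        return splits;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;
        long[] fileSizes = new long[1000];   // hypothetical input: 1,000 files
        Arrays.fill(fileSizes, 1 * mb);      // each 1 MB
        long maxSplitBytes = 256 * mb;       // same cap as 268435456 bytes

        System.out.println("One split per file: " + fileSizes.length + " map tasks");
        System.out.println("Combined splits:    "
                + packIntoSplits(fileSizes, maxSplitBytes).size() + " map tasks");
    }
}
```

With these assumed inputs, the sketch packs 256 files into each of the first three splits and the remaining 232 into a fourth, so the job would need 4 map tasks rather than 1,000.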

Using CombineTextInputFormat is simple: just set the job’s input format class to CombineTextInputFormat and set mapreduce.input.fileinputformat.split.maxsize (a value in bytes) in the configuration:

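```java
import org.apache.hadoop.mapreduce.lib.input.CombineTextInputFormat;

...

// Cap each combined split at 256 MB (268435456 bytes).
// Set this before creating the Job, because the Job copies the Configuration.
conf.set("mapreduce.input.fileinputformat.split.maxsize", "268435456");

...

// Pack multiple small files into each split instead of one split per file.
job.setInputFormatClass(CombineTextInputFormat.class);
```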
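The value 268435456 above is 256 MB expressed in bytes, which is the unit this property expects. If you would rather compute the value than hard-code it, a small helper along these lines may help. This is only a sketch; the class and method names are hypothetical and not part of Hadoop.

```java
// Hypothetical helper, not part of Hadoop: converts megabytes to the
// byte-count string expected by the split.maxsize property.
final class SplitSizeSketch {

    /** Returns the given size in megabytes as a decimal byte-count string. */
    static String megabytesToBytesString(long megabytes) {
        // Use long arithmetic: an int would overflow at 2048 MB and above.
        return Long.toString(megabytes * 1024L * 1024L);
    }

    public static void main(String[] args) {
        // Prints the byte value for a 256 MB split cap.
        System.out.println(megabytesToBytesString(256));
    }
}
```

You could then pass the result to conf.set(...) in place of the literal string.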

In the latest EMR releases, you can also set configurations such as this one at launch time by providing a configuration object.

If you have questions or suggestions, please leave a comment below.
