AWS Compute Blog

Deep Dive on Amazon EC2 VT1 Instances

This post is written by: Amr Ragab, Senior Solutions Architect; Bryan Samis, Principal Elemental SSA; Leif Reinert, Senior Product Manager

Introduction

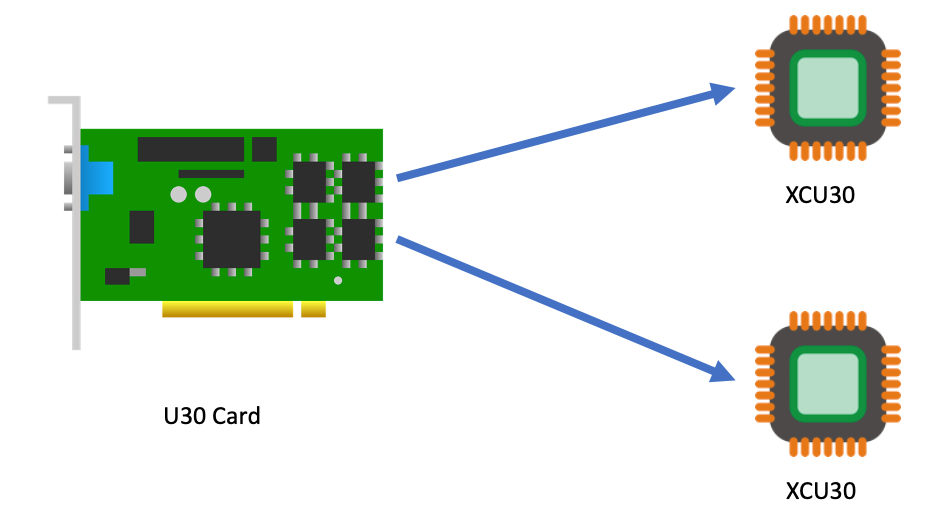

We at AWS are excited to announce that new Amazon Elastic Compute Cloud (Amazon EC2) VT1 instances are now generally available in the US East (N. Virginia), US West (Oregon), Europe (Ireland), and Asia Pacific (Tokyo) Regions. This instance family provides dedicated video transcoding hardware in Amazon EC2 and offers up to 30% lower cost per stream than G4dn GPU-based instances, or up to 60% lower cost per stream than C5 CPU-based instances. These instances are powered by Xilinx Alveo U30 media accelerators, with up to eight U30 accelerators in the vt1.24xlarge. Each U30 accelerator carries two XCU30 Zynq UltraScale+ SoCs with H.264/H.265 Video Codec Unit (VCU) cores, for a total of 16 addressable devices in the vt1.24xlarge instance.

Currently, the VT1 family consists of three sizes, as summarized in the following table:

| Instance Type | vCPUs | RAM (GiB) | U30 accelerator cards | Addressable XCU30 SoCs |
|---|---|---|---|---|
| vt1.3xlarge | 12 | 24 | 1 | 2 |
| vt1.6xlarge | 24 | 48 | 2 | 4 |
| vt1.24xlarge | 96 | 192 | 8 | 16 |

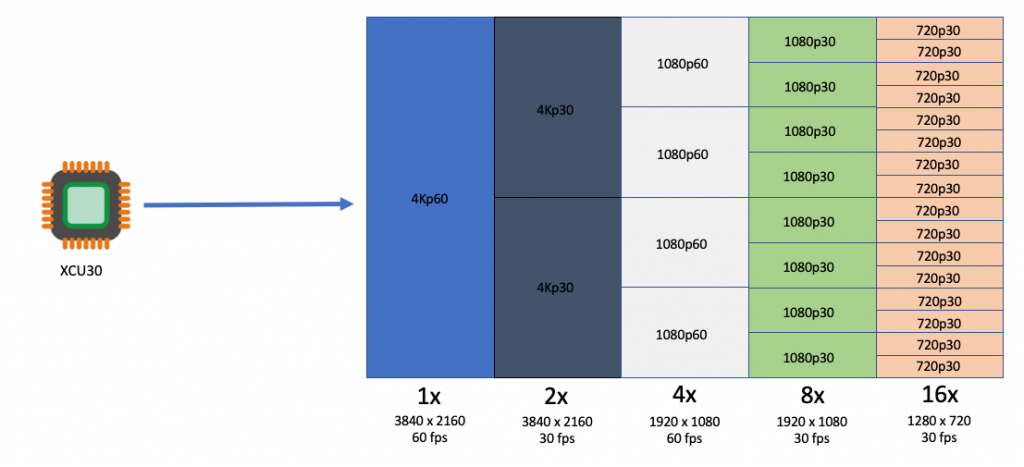

Each addressable XCU30 SoC device supports:

- Codecs: MPEG-4 Part 10 (H.264/AVC) and MPEG-H Part 2 (H.265/HEVC)

- Resolutions: 128×128 to 3840×2160

- Flexible rate control: Constant Bitrate (CBR), Variable Bitrate (VBR), and Constant Quantization Parameter (QP)

- Frame Scan Types: Progressive H.264/H.265

- Input Color Space: YCbCr 4:2:0, 8-bit per color channel.

The following table outlines the number of transcoding streams per addressable device and instance type:

| Resolution and frame rate | Per XCU30 SoC | vt1.3xlarge | vt1.6xlarge | vt1.24xlarge |
|---|---|---|---|---|
| 3840x2160p60 | 1 | 2 | 4 | 16 |
| 3840x2160p30 | 2 | 4 | 8 | 32 |
| 1920x1080p60 | 4 | 8 | 16 | 64 |
| 1920x1080p30 | 8 | 16 | 32 | 128 |
| 1280x720p30 | 16 | 32 | 64 | 256 |
| 960x540p30 | 24 | 48 | 96 | 384 |
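Each instance column in the table above is the per-SoC stream count multiplied by the number of addressable XCU30 SoCs (two per U30 card). The following Python sketch reproduces that arithmetic; it assumes capacity scales linearly across devices, which is how the table is built, and the dictionary and function names are illustrative only.

```python
# Sketch: derive per-instance stream density from per-SoC capacity.
# Assumes capacity scales linearly with the number of XCU30 SoCs,
# which is how the density table is constructed.

SOCS_PER_U30_CARD = 2  # each Alveo U30 card carries two XCU30 SoCs

# U30 cards per instance size (from the instance table)
U30_CARDS = {"vt1.3xlarge": 1, "vt1.6xlarge": 2, "vt1.24xlarge": 8}

# Simultaneous real-time streams per XCU30 SoC (from the density table)
STREAMS_PER_SOC = {
    "3840x2160p60": 1,
    "3840x2160p30": 2,
    "1920x1080p60": 4,
    "1920x1080p30": 8,
    "1280x720p30": 16,
    "960x540p30": 24,
}


def addressable_socs(instance_type: str) -> int:
    """Number of addressable XCU30 devices for an instance size."""
    return U30_CARDS[instance_type] * SOCS_PER_U30_CARD


def streams_per_instance(instance_type: str, profile: str) -> int:
    """Simultaneous streams an instance can sustain for a given profile."""
    return STREAMS_PER_SOC[profile] * addressable_socs(instance_type)


if __name__ == "__main__":
    # Print one row per profile: counts for 3xlarge, 6xlarge, 24xlarge
    for profile in STREAMS_PER_SOC:
        row = [streams_per_instance(it, profile) for it in U30_CARDS]
        print(profile, row)
```

The same two helpers can size a fleet: divide the number of streams you need by streams_per_instance for your profile and round up.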

Customers with applications such as live broadcast, video conferencing, and just-in-time transcoding can now benefit from an instance family dedicated to video encoding and decoding, with rescaling optimizations, at the lowest cost per stream. This instance family lets customers run batch, real-time, and faster-than-real-time transcoding workloads.

Deployment and Quick Start

To get started, launch a VT1 instance from one of the prebuilt VT1 Amazon Machine Images (AMIs) available on AWS Marketplace. If you have AMI hardening or other requirements that call for installing the Xilinx software stack yourself, refer to the Xilinx Video SDK documentation for VT1.

The software stack consists of a driver suite combined with management and client tools. The following terms are used with this instance family:

- XRT – Xilinx Runtime Library

- XRM – Xilinx Runtime Management Library

- XCDR – Xilinx Video Transcoding SDK

- XMA – Xilinx Media Accelerator API and Samples

- XOCL – Xilinx driver (xocl)

To run workloads directly on Amazon EC2 instances, you must load both the XRT and XRM stacks. Both are set up by sourcing the XCDR environment script. To load the devices, run the following:

```bash
source /opt/xilinx/xcdr/setup.sh
```

The following output is from a vt1.24xlarge, which exposes 16 addressable devices:

```
-----Source Xilinx U30 setup files-----
XILINX_XRT : /opt/xilinx/xrt
PATH : /opt/xilinx/xrt/bin:/usr/local/sbin:/sbin:/bin:/usr/sbin:/usr/bin:/root/bin
LD_LIBRARY_PATH : /opt/xilinx/xrt/lib:
PYTHONPATH : /opt/xilinx/xrt/python:
XILINX_XRM : /opt/xilinx/xrm
PATH : /opt/xilinx/xrm/bin:/opt/xilinx/xrt/bin:/usr/local/sbin:/sbin:/bin:/usr/sbin:/usr/bin:/root/bin
LD_LIBRARY_PATH : /opt/xilinx/xrm/lib:/opt/xilinx/xrt/lib:
Number of U30 devices found : 16
```
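In automated AMI or instance health checks, it can help to confirm that the device count reported by the setup script matches the instance size. The following Python sketch does that by parsing the "Number of U30 devices found" line; the helper names are hypothetical, not part of the Xilinx SDK, and the expected counts come from the instance table (two XCU30 devices per U30 card).

```python
# Sketch: verify the U30 device count reported by the XCDR setup script.
# Relies on the "Number of U30 devices found : N" output line; the helper
# names here are hypothetical and not part of the Xilinx tooling.
import re
import shlex
import subprocess

# Addressable XCU30 devices per instance size (two per U30 card)
EXPECTED_DEVICES = {"vt1.3xlarge": 2, "vt1.6xlarge": 4, "vt1.24xlarge": 16}

_DEVICE_LINE = re.compile(r"Number of U30 devices found\s*:\s*(\d+)")


def parse_device_count(setup_output: str) -> int:
    """Extract the device count from the setup script's output."""
    match = _DEVICE_LINE.search(setup_output)
    if match is None:
        raise ValueError("device count line not found in setup output")
    return int(match.group(1))


def run_setup(script: str = "/opt/xilinx/xcdr/setup.sh") -> str:
    """Source the setup script in a bash subshell and capture its output.

    The environment changes do not persist; this is only for checking.
    """
    result = subprocess.run(
        ["bash", "-c", f"source {shlex.quote(script)}"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout + result.stderr


def check_devices(instance_type: str, setup_output: str) -> bool:
    """True if the reported device count matches the instance size."""
    return parse_device_count(setup_output) == EXPECTED_DEVICES[instance_type]
```

On an instance, check_devices("vt1.24xlarge", run_setup()) should return True when all 16 devices are visible.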

Running Containerized Workloads on Amazon ECS and Amazon EKS

To simplify building AMIs for Amazon Linux 2, Ubuntu 18.04/20.04, Amazon ECS, and Amazon Elastic Kubernetes Service (Amazon EKS), we provide a GitHub project that uses Packer:

https://github.com/aws-samples/aws-vt-baseami-pipeline

At the time of writing, Xilinx does not provide an officially supported container runtime. However, you can pass the specific devices to the container in the docker run command. To set up this environment, download the xilinx_aws_docker_setup.sh script and source it. The following output is from a vt1.24xlarge:

```
[ec2-user@ip-10-0-254-236 ~]$ source xilinx_aws_docker_setup.sh
XILINX_XRT : /opt/xilinx/xrt
PATH : /opt/xilinx/xrt/bin:/usr/local/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/home/ec2-user/.local/bin:/home/ec2-user/bin
LD_LIBRARY_PATH : /opt/xilinx/xrt/lib:
PYTHONPATH : /opt/xilinx/xrt/python:
XILINX_AWS_DOCKER_DEVICES : --device=/dev/dri/renderD128:/dev/dri/renderD128
--device=/dev/dri/renderD129:/dev/dri/renderD129
--device=/dev/dri/renderD130:/dev/dri/renderD130
--device=/dev/dri/renderD131:/dev/dri/renderD131
--device=/dev/dri/renderD132:/dev/dri/renderD132
--device=/dev/dri/renderD133:/dev/dri/renderD133
--device=/dev/dri/renderD134:/dev/dri/renderD134
--device=/dev/dri/renderD135:/dev/dri/renderD135
--device=/dev/dri/renderD136:/dev/dri/renderD136
--device=/dev/dri/renderD137:/dev/dri/renderD137
--device=/dev/dri/renderD138:/dev/dri/renderD138
--device=/dev/dri/renderD139:/dev/dri/renderD139
--device=/dev/dri/renderD140:/dev/dri/renderD140
--device=/dev/dri/renderD141:/dev/dri/renderD141
--device=/dev/dri/renderD142:/dev/dri/renderD142
--device=/dev/dri/renderD143:/dev/dri/renderD143
--mount type=bind,source=/sys/bus/pci/devices/0000:00:1d.0,target=/sys/bus/pci/devices/0000:00:1d.0 --mount type=bind,source=/sys/bus/pci/devices/0000:00:1e.0,target=/sys/bus/pci/devices/0000:00:1e.0
```

Once the devices have been enumerated, start the workload by running:

```bash
docker run -it $XILINX_AWS_DOCKER_DEVICES <image:tag>
```
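The setup script's output shows the pattern behind XILINX_AWS_DOCKER_DEVICES: each /dev/dri/renderD* node is mapped into the container at the same path, and each U30 PCI device's sysfs directory is bind-mounted. The following Python sketch assembles an equivalent docker run argument list. It is a hypothetical illustration, not the official Xilinx script, and it assumes every render node on the host belongs to a U30 device (as on a VT1 instance with no other GPUs) and that you already know the U30 PCI addresses.

```python
# Hypothetical Python equivalent of the docker flag-building step.
# Assumes all /dev/dri/renderD* nodes belong to U30 devices and that the
# U30 PCI addresses are known. Not the official Xilinx setup script.
import glob


def device_flags(render_nodes: list[str]) -> list[str]:
    """Map each render node into the container at the same path."""
    return [f"--device={node}:{node}" for node in sorted(render_nodes)]


def pci_mount_flags(pci_addresses: list[str]) -> list[str]:
    """Bind-mount each PCI device's sysfs directory into the container."""
    flags: list[str] = []
    for addr in pci_addresses:
        path = f"/sys/bus/pci/devices/{addr}"
        flags += ["--mount", f"type=bind,source={path},target={path}"]
    return flags


def docker_run_cmd(image: str, render_nodes: list[str],
                   pci_addresses: list[str]) -> list[str]:
    """Assemble the full docker run argument list."""
    return ["docker", "run", "-it",
            *device_flags(render_nodes),
            *pci_mount_flags(pci_addresses),
            image]


if __name__ == "__main__":
    nodes = glob.glob("/dev/dri/renderD*")
    # PCI addresses copied from the vt1.24xlarge output above; replace
    # them with the addresses on your own instance.
    cmd = docker_run_cmd("<image:tag>", nodes, ["0000:00:1d.0", "0000:00:1e.0"])
    print(" ".join(cmd))
```

Building an argument list rather than a single string avoids relying on shell word splitting of the unquoted environment variable, which is what the docker run example above depends on.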

Amazon EKS Setup

To launch an EKS cluster with VT1 instances, first build the AMI using the scripts in the repository mentioned earlier:

https://github.com/aws-samples/aws-vt-baseami-pipeline

Once the AMI is created, launch an EKS cluster:

```bash
eksctl create cluster --region us-east-1 --without-nodegroup --version 1.19 \
  --zones us-east-1c,us-east-1d
```

Once the cluster is created, substitute your own values for the cluster name, VPC ID, subnet IDs, AMI ID, and SSH key name in the following template:

```yaml
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig

metadata:
  name: <cluster-name>
  region: us-east-1

vpc:
  id: vpc-285eb355
  subnets:
    public:
      endpoint-one:
        id: subnet-5163b237
      endpoint-two:
        id: subnet-baff22e5

managedNodeGroups:
  - name: vt1-ng-1d
    instanceType: vt1.3xlarge
    volumeSize: 200
    instancePrefix: vt1-ng-1d-worker
    ami: <ami-id>
    iam:
      withAddonPolicies:
        imageBuilder: true
        autoScaler: true
        ebs: true
        fsx: true
        cloudWatch: true
    ssh:
      allow: true
      publicKeyName: amrragab-aws
    subnets:
      - endpoint-one
    minSize: 1
    desiredCapacity: 1
    maxSize: 4
    overrideBootstrapCommand: |
      #!/bin/bash
      /etc/eks/bootstrap.sh <cluster-name>
```

Save this file as vt1-managed-ng.yaml, and then deploy the node group:

```bash
eksctl create nodegroup -f vt1-managed-ng.yaml
```

Once the node group is deployed, apply the Xilinx U30 FPGA device plugin. The DaemonSet container image is available in the Amazon Elastic Container Registry (Amazon ECR) Public Gallery, and you can also access the DaemonSet deployment file.

```bash
kubectl apply -f xilinx-device-plugin.yml
```

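Confirm that the Kubernetes API server sees the Xilinx U30 device(s) and that they are allocatable to your jobs. For example, running kubectl describe node <node-name> against a vt1.3xlarge worker shows output similar to the following: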

```
Capacity:
  attachable-volumes-aws-ebs: 39
  cpu: 12
  ephemeral-storage: 209702892Ki
  hugepages-1Gi: 0
  hugepages-2Mi: 0
  memory: 23079768Ki
  pods: 15
  xilinx.com/fpga-xilinx_u30_gen3x4_base_1-0: 1
Allocatable:
  attachable-volumes-aws-ebs: 39
  cpu: 11900m
  ephemeral-storage: 192188443124
  hugepages-1Gi: 0
  hugepages-2Mi: 0
  memory: 22547288Ki
  pods: 15
  xilinx.com/fpga-xilinx_u30_gen3x4_base_1-0: 1
```
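With the device plugin advertising xilinx.com/fpga-xilinx_u30_gen3x4_base_1-0, a workload claims a U30 by requesting that extended resource. Kubernetes expects extended resources as whole integers in resources.limits. The following Python sketch emits such a Pod manifest as JSON; the pod name, container name, and image are hypothetical placeholders.

```python
# Sketch: build a Pod manifest that requests U30 devices through the
# extended resource advertised by the device plugin. Pod name and image
# are hypothetical placeholders.
import json

U30_RESOURCE = "xilinx.com/fpga-xilinx_u30_gen3x4_base_1-0"


def u30_pod(name: str, image: str, cards: int = 1) -> dict:
    """Return a Pod manifest (as a dict) that requests `cards` U30 devices."""
    if cards < 1:
        raise ValueError("request at least one U30 device")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Extended resources go in limits as integers;
                    # requests default to the same value.
                    "resources": {"limits": {U30_RESOURCE: cards}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Usage: python3 u30_pod.py > u30-pod.json && kubectl apply -f u30-pod.json
    print(json.dumps(u30_pod("vt1-transcode", "<image:tag>"), indent=2))
```

The scheduler then places the pod only on a node whose allocatable count for that resource is not yet exhausted, such as the vt1.3xlarge worker above with one allocatable device.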

Video Quality Analysis

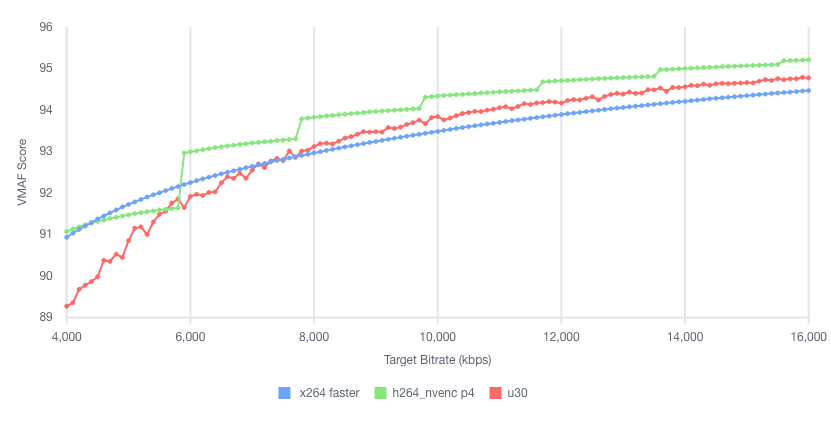

The video quality produced by the U30 is roughly equivalent to the “faster” preset of the x264 and x265 encoders, or the “p4” preset of the NVENC encoder on G4dn. For example, in the following test we encoded the same UHD (4K) video at multiple bitrates into H.264, and then compared Video Multimethod Assessment Fusion (VMAF) scores:

Stream Density and Encoding Performance

To illustrate the stream density and encoding performance of the VT1 family, let’s look at the smallest instance, the vt1.3xlarge, which can encode up to eight simultaneous 1080p60 streams into H.264. We chose a set of instances at a similar price point, and then compared how many 1080p60 H.264 streams each could encode simultaneously at equivalent quality:

| Instance | Codec | us-east-1 Hourly Price* | 1080p60 Streams / Instance | Hourly Cost / Stream |
|---|---|---|---|---|
| c5.4xlarge | x264 | $0.680 | 2 | $0.340 |
| c6g.4xlarge | x264 | $0.544 | 2 | $0.272 |
| c5a.4xlarge | x264 | $0.616 | 3 | $0.205 |
| g4dn.xlarge | nvenc | $0.526 | 4 | $0.132 |
| vt1.3xlarge | xma | $0.650 | 8 | $0.081 |

* Prices accurate as of the publishing date of this article.

As you can see, the vt1.3xlarge instance can encode four times as many streams as the c5.4xlarge at a lower hourly cost, and twice as many streams as the g4dn.xlarge. In this example, that yields a cost-per-stream reduction of about 76% compared to the c5.4xlarge and about 39% compared to the g4dn.xlarge.
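The cost-per-stream column is simply the hourly price divided by the number of streams, and the savings are one minus the ratio of two per-stream costs. The following Python sketch reproduces that arithmetic from the table's prices; it uses only the figures in the table.

```python
# Sketch: reproduce the cost-per-stream arithmetic from the pricing table.
# Prices are the us-east-1 hourly figures quoted in the table.
PRICES = {  # instance: (hourly price in USD, 1080p60 streams per instance)
    "c5.4xlarge": (0.680, 2),
    "c6g.4xlarge": (0.544, 2),
    "c5a.4xlarge": (0.616, 3),
    "g4dn.xlarge": (0.526, 4),
    "vt1.3xlarge": (0.650, 8),
}


def cost_per_stream(instance: str) -> float:
    """Hourly cost of one 1080p60 stream on the given instance."""
    price, streams = PRICES[instance]
    return price / streams


def reduction(instance: str, baseline: str) -> float:
    """Fractional cost-per-stream saving of `instance` relative to `baseline`."""
    return 1 - cost_per_stream(instance) / cost_per_stream(baseline)


if __name__ == "__main__":
    for name in PRICES:
        print(f"{name:12s} ${cost_per_stream(name):.4f} per stream-hour")
    print(f"vt1 vs c5.4xlarge:  {reduction('vt1.3xlarge', 'c5.4xlarge'):.0%}")
    print(f"vt1 vs g4dn.xlarge: {reduction('vt1.3xlarge', 'g4dn.xlarge'):.0%}")
```

Using the unrounded per-stream costs, the saving is about 76% against the c5.4xlarge and about 38% against the g4dn.xlarge; the 39% figure corresponds to the rounded table values ($0.081 versus $0.132).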

Faster than Real-time Transcoding

In addition to encoding multiple live streams in parallel, VT1 instances can encode file-based content faster than real time. To do this, over-provision resources on a single XCU30 device so that more resources are dedicated to the transcode than are needed to keep pace with real time.

For example, the following command (which specifies -cores 4) uses all resources on a single XCU30 device and yields an encode speed of approximately 177 FPS, or 2.95 times faster than real time for a 60 FPS source:

```
$ ffmpeg -c:v mpsoc_vcu_h264 -i input_1920x1080p60_H264_8Mbps_AAC_Stereo.mp4 -f mp4 -b:v 5M -c:v mpsoc_vcu_h264 -cores 4 -slices 4 -y /tmp/out.mp4
frame=43092 fps=177 q=-0.0 Lsize= 402721kB time=00:11:58.92 bitrate=4588.9kbits/s speed=2.95x
```
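The real-time factor is the encode rate divided by the source frame rate: 177 / 60 = 2.95, which matches the speed=2.95x that FFmpeg reports. The following Python sketch parses an FFmpeg progress line like the one above and computes that factor; it assumes the usual key=value progress format, where a space may follow the equals sign (as in Lsize= 402721kB).

```python
# Sketch: turn an FFmpeg progress line into a real-time speed factor.
# Assumes the usual "key=value" progress format; a space may follow "="
# (as in "Lsize= 402721kB").
import re

STATS_LINE = ("frame=43092 fps=177 q=-0.0 Lsize= 402721kB "
              "time=00:11:58.92 bitrate=4588.9kbits/s speed=2.95x")

_PAIR = re.compile(r"(\w+)=\s*(\S+)")


def parse_stats(line: str) -> dict[str, str]:
    """Split an FFmpeg progress line into its key=value fields."""
    return dict(_PAIR.findall(line))


def realtime_factor(encode_fps: float, source_fps: float) -> float:
    """How many times faster than real time the encode runs."""
    return encode_fps / source_fps


if __name__ == "__main__":
    stats = parse_stats(STATS_LINE)
    factor = realtime_factor(float(stats["fps"]), 60.0)
    print(f"{factor:.2f}x (FFmpeg reported {stats['speed']})")
    # prints: 2.95x (FFmpeg reported 2.95x)
```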

To increase throughput further, use the “split and stitch” approach: break the input file into segments, transcode the segments in parallel across multiple XCU30 chips or even multiple U30 cards in an instance, and then recombine them into a single output file. For more information, see the Xilinx Video SDK documentation on faster-than-real-time transcoding.

Running the provided example script on a vt1.3xlarge with the same 12-minute source file as in the preceding example, we can use both addressable devices on the U30 card at once, for an effective encode speed of 512 FPS, or 8.5 times faster than real time:

```
$ python3 13_ffmpeg_transcode_only_split_stitch.py -s input_1920x1080p60_H264_8Mbps_AAC_Stereo.mp4 -d /tmp/out.mp4 -i h264 -o h264 -b 5.0
There are 1 cards, 2 chips in the system
...
Time from start to completion : 84 seconds (1 minute and 24 seconds)
This clip was processed 8.5 times faster than realtime
This clip was effectively processed at 512.34 FPS
```
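The planning half of split and stitch can be sketched in a few lines: divide the clip into segments, spread them round-robin across the available XCU30 devices, and write a list file for FFmpeg's concat demuxer (ffmpeg -f concat -safe 0 -i list.txt -c copy out.mp4) to stitch the results. This Python sketch is a simplified illustration, not the Xilinx example script: segment file names and device numbering are hypothetical, and it splits at equal durations, whereas a real splitter must cut on keyframe boundaries.

```python
# Sketch of split-and-stitch planning: equal-duration segments assigned
# round-robin to XCU30 devices, plus a concat-demuxer list for stitching.
# File names and device numbers are hypothetical; real splits must land
# on keyframes, which this simplified sketch does not handle.
from dataclasses import dataclass


@dataclass
class Segment:
    index: int
    start: float     # seconds from the start of the source
    duration: float  # seconds
    device: int      # XCU30 device that transcodes this segment
    output: str      # transcoded segment file


def plan_segments(total_seconds: float, num_segments: int,
                  num_devices: int) -> list[Segment]:
    """Split [0, total_seconds) into equal segments assigned round-robin."""
    if num_segments < 1 or num_devices < 1:
        raise ValueError("need at least one segment and one device")
    length = total_seconds / num_segments
    return [
        Segment(i, i * length, length, i % num_devices, f"seg_{i:03d}.mp4")
        for i in range(num_segments)
    ]


def concat_list(segments: list[Segment]) -> str:
    """Contents of list.txt for the FFmpeg concat demuxer, in segment order."""
    ordered = sorted(segments, key=lambda s: s.index)
    return "".join(f"file '{s.output}'\n" for s in ordered)


def effective_speed(clip_seconds: float, wall_seconds: float) -> float:
    """Real-time factor of the whole split-transcode-stitch run."""
    return clip_seconds / wall_seconds


if __name__ == "__main__":
    # The 11:58.92 clip from the examples, split across the two XCU30
    # devices on a vt1.3xlarge (segment count chosen arbitrarily).
    plan = plan_segments(718.92, num_segments=4, num_devices=2)
    for seg in plan:
        print(seg)
    print(concat_list(plan), end="")
    print(f"effective speed: {effective_speed(718.92, 84):.2f}x")
```

With the 84-second wall-clock time reported by the script, effective_speed(718.92, 84) comes to about 8.56, in line with the script's reported 8.5x.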

Conclusion

We are excited to launch VT1, our first EC2 instance family with dedicated hardware acceleration for video transcoding, which provides up to 30% lower cost per stream than G4dn or up to 60% lower cost per stream than C5. With up to eight Xilinx Alveo U30 media accelerators, you can transcode up to 16 simultaneous 4K UHD (2160p60) streams for batch, real-time, and faster-than-real-time workloads. If you have any questions, reach out to your account team. Now, go power up your video transcoding workloads with Amazon EC2 VT1 instances.