AWS Compute Blog

Using an Amazon MQ network of broker topologies for distributed microservices

This post is written by Suranjan Choudhury, Senior Manager SA, and Anil Sharma, Apps Modernization SA.

This blog looks at ActiveMQ topologies that customers can evaluate when planning hybrid deployment architectures spanning AWS Regions and customer data centers, using a network of brokers. A network of brokers can have brokers on-premises and Amazon MQ brokers on AWS.

Distributing broker nodes across AWS and on-premises allows for messaging infrastructure to scale, provide higher performance, and improve reliability. This post also explains a topology spanning two Regions and demonstrates how to deploy on AWS.

A network of brokers is composed of multiple simultaneously active single-instance brokers or active/standby brokers. A network of brokers provides a large-scale messaging fabric in which multiple brokers are networked together. It allows a system to survive the failure of a broker. It also allows distributed messaging. Applications on remote, disparate networks can exchange messages with each other. A network of brokers helps to scale the overall broker throughput in your network, providing increased availability and performance.

Types of ActiveMQ topologies

A network of brokers can be configured in a variety of topologies, for example, mesh, concentrator, and hub and spoke. The choice of topology depends on requirements such as security and network policies, reliability, scaling and throughput, and management and operational overhead. You can configure individual brokers to operate as a single-instance broker or in an active/standby configuration.

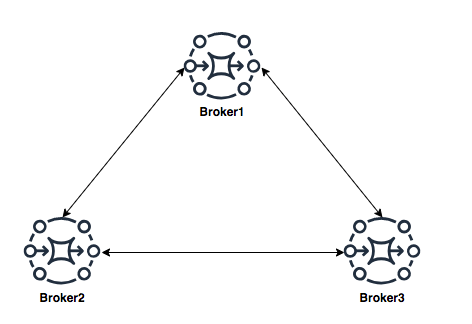

Mesh topology

A mesh topology provides multiple brokers that are all connected to each other. This example connects three single-instance brokers, but you can configure more brokers as a mesh. The mesh topology requires security group rules that allow brokers in internal subnets to communicate with brokers in external subnets.

For scaling, you can add new brokers to increase overall broker capacity. By design, the mesh topology offers higher reliability with no single point of failure. Operationally, adding or removing nodes requires reconfiguring the brokers and restarting the broker service.

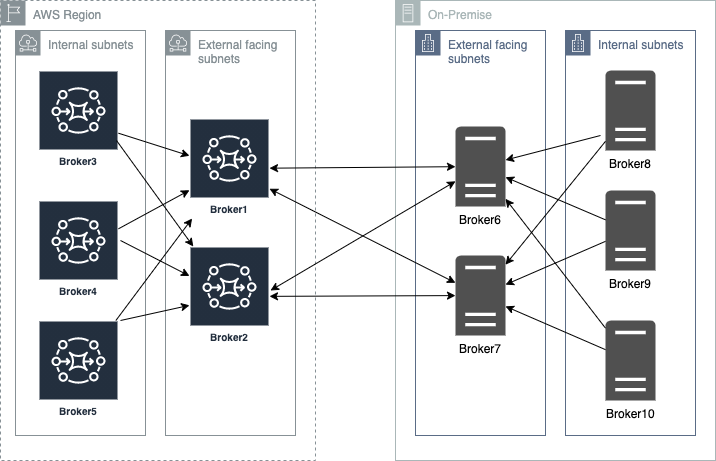

Concentrator topology

In a concentrator topology, you deploy brokers in two (or more) layers to funnel incoming connections into a smaller collection of services. This topology allows segmenting brokers into internal and external subnets without any additional security group changes. If additional capacity is needed, you can add new brokers without needing to update other brokers’ configurations. The concentrator topology provides higher reliability with alternate paths for each broker. This enables hybrid deployments with lower operational overheads.

Hub and spoke topology

A hub and spoke topology preserves messages if there is disruption to any broker on a spoke. Messages are forwarded throughout and only the central Broker1 is critical to the network’s operation. Subnet security group rules must be opened to allow brokers in internal subnets to communicate with brokers in external subnets.

Adding brokers for scalability is constrained by the hub's capacity. The hub is a single point of failure and should be configured as active/standby to increase reliability. In this topology, depending on the location of the hub, there may be increased bandwidth needs and latency challenges.
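The operational trade-offs described for the three topologies follow partly from how many broker-to-broker links each one needs. The following is a minimal sketch of that arithmetic, not part of the example solution. The class and method names are hypothetical, each link is counted once (as if it were a duplex connection), and the concentrator count assumes that every edge broker connects to every concentrating broker.

```java
final class TopologyLinks {
    private TopologyLinks() {}

    /** Full mesh: every broker is linked to every other broker. */
    static int mesh(int brokers) {
        requirePositive(brokers);
        return brokers * (brokers - 1) / 2;
    }

    /** Hub and spoke: every spoke is linked to the hub only (brokers includes the hub). */
    static int hubAndSpoke(int brokers) {
        requirePositive(brokers);
        return brokers - 1;
    }

    /** Concentrator: assumes each edge broker is linked to each concentrating broker. */
    static int concentrator(int edgeBrokers, int concentrators) {
        requirePositive(edgeBrokers);
        requirePositive(concentrators);
        return edgeBrokers * concentrators;
    }

    private static void requirePositive(int n) {
        if (n < 1) {
            throw new IllegalArgumentException("broker count must be at least 1");
        }
    }

    public static void main(String[] args) {
        // Six brokers in total for each topology.
        System.out.println("mesh: " + mesh(6));
        System.out.println("hub and spoke: " + hubAndSpoke(6));
        System.out.println("concentrator (4 edge, 2 concentrating): " + concentrator(4, 2));
    }
}
```

Under these assumptions, the mesh link count grows quadratically with the number of brokers, which is one reason that adding or removing a node touches more broker configurations in a mesh than in the other two topologies.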

Using a concentrator topology for large-scale hybrid deployments

When planning deployments spanning AWS and customer data centers, the starting point is the concentrator topology. The brokers are deployed in tiers such that brokers in each tier connect to fewer brokers at the next tier. This allows you to funnel connections and messages from a large number of producers to a smaller number of brokers. This concentrates messages at fewer subscribers:

Deploying ActiveMQ brokers across Regions and on-premises

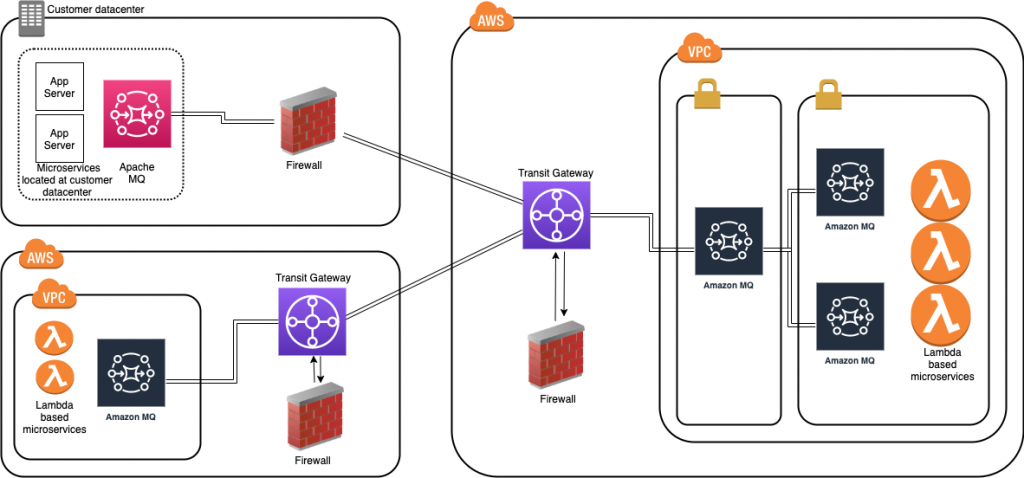

When placing brokers on-premises and in the AWS Cloud in a hybrid network of broker topologies, security and network routing are key. The following diagram shows a typical hybrid topology:

ActiveMQ brokers on premises are placed behind a firewall. They communicate with Amazon MQ brokers through an IPsec tunnel that terminates on the on-premises firewall. On the AWS side, this tunnel terminates on an AWS Transit Gateway (TGW). The TGW routes all network traffic to a firewall in a service VPC on AWS.

The firewall inspects the network traffic and routes all inspected traffic back to the transit gateway. The TGW, based on the configured routing, sends the traffic to the Amazon MQ broker in the application VPC. This broker concentrates messages from Amazon MQ brokers hosted on AWS. The on-premises brokers and the AWS brokers form a hybrid network of brokers that spans AWS and the customer data center. This allows applications and services to communicate securely. In this architecture, only the concentrating broker is exposed to send messages to and receive messages from the on-premises broker. The applications are protected from outside, non-validated network traffic.

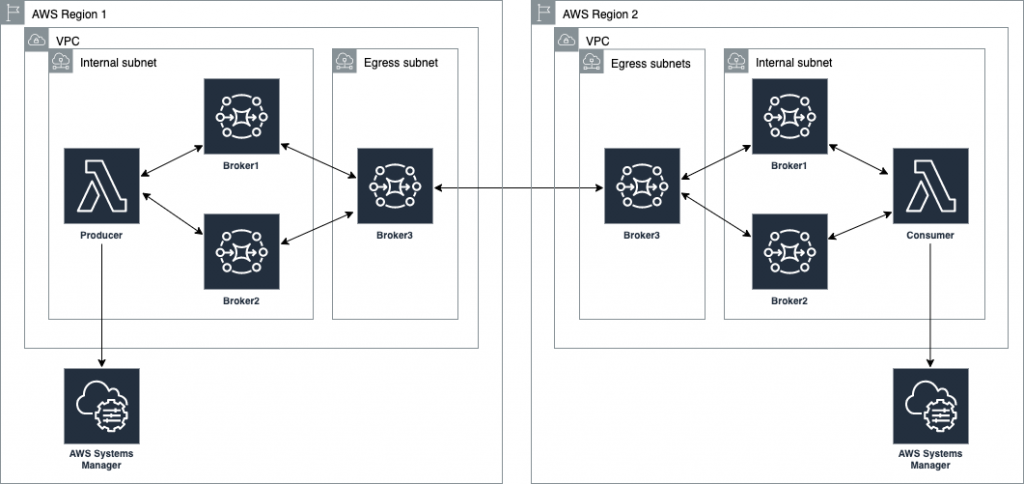

This blog shows how to create a cross-Region network of brokers. For simplicity, this topology omits the multiple brokers in the internal subnet. In a production environment, however, you have multiple brokers in internal subnets catering to multiple producers and consumers. This topology spans an AWS Region and an on-premises customer data center, which is represented by a second AWS Region:

Best practices for configuring network of brokers

Client-side failover

In a network of brokers, failover transport configures a reconnect mechanism on top of the transport protocols. The configuration allows you to specify multiple URIs to connect to. An additional configuration using the randomize transport option allows for random selection of the URI when re-establishing a connection.

The example Lambda functions provided in this blog use the following configuration:

//Failover URI

failoverURI = "failover:(" + uri1 + "," + uri2 + ")?randomize=True";
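As a minimal sketch of the same idea for more than two brokers, the helper below joins any number of broker URIs into a failover URI. The class name, method name, and endpoints are hypothetical and are not part of the example Lambda functions. Only the `failover:(...)` syntax and the `randomize` option come from the configuration above.

```java
import java.util.List;

final class FailoverUriBuilder {
    private FailoverUriBuilder() {}

    /** Joins broker URIs into a failover transport URI and sets the randomize option. */
    static String build(List<String> brokerUris, boolean randomize) {
        if (brokerUris == null || brokerUris.isEmpty()) {
            throw new IllegalArgumentException("at least one broker URI is required");
        }
        return "failover:(" + String.join(",", brokerUris) + ")?randomize=" + randomize;
    }

    public static void main(String[] args) {
        // Placeholder endpoints; substitute the OpenWire endpoints of your own brokers.
        String uri = build(
                List.of("ssl://broker-a.example.com:61617", "ssl://broker-b.example.com:61617"),
                true);
        System.out.println(uri);
    }
}
```

Building the URI from a list keeps the broker endpoints in configuration rather than in code, so a broker can be added to the failover set without changing the client logic.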

Broker side failover

Dynamic failover allows a broker to receive a list of all other brokers in the network. It can use the configuration to update producer and consumer clients with this list. The clients can update to rebalance connections to these brokers.

In the broker configuration in this blog, the following configuration is set up:

<transportConnectors> <transportConnector name="openwire" updateClusterClients="true" updateClusterClientsOnRemove="false" rebalanceClusterClients="true"/> </transportConnectors>

Network connector properties – TTL and duplex

TTL values limit how many brokers a message or subscription can pass through in the network. There are two TTL values: messageTTL, which applies to messages, and consumerTTL, which applies to subscriptions. Alternatively, you can set networkTTL, which sets both the message and consumer TTL.
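To reason about which TTL value a topology needs, it can help to count the broker-to-broker forwards on the longest path a message must travel. The following is a simplified model, not ActiveMQ code. It assumes that each forward between two brokers consumes one unit of TTL, and the class name and broker names are hypothetical labels for a path from an internal broker in one Region to an internal broker in the other.

```java
import java.util.List;

final class NetworkTtlModel {
    private NetworkTtlModel() {}

    /** Number of broker-to-broker forwards needed to traverse the whole path. */
    static int hops(List<String> brokerPath) {
        if (brokerPath == null || brokerPath.isEmpty()) {
            throw new IllegalArgumentException("path must contain at least one broker");
        }
        return brokerPath.size() - 1;
    }

    /** Simplified rule: the last broker is reached only if the hop count fits in the TTL. */
    static boolean reaches(List<String> brokerPath, int networkTtl) {
        return hops(brokerPath) <= networkTtl;
    }

    public static void main(String[] args) {
        // Illustrative path: internal broker, concentrator, remote concentrator, remote internal broker.
        List<String> path = List.of(
                "region1-broker1", "region1-broker3", "region2-broker3", "region2-broker1");
        System.out.println("hops needed: " + hops(path));
        System.out.println("reached with networkTTL=5: " + reaches(path, 5));
        System.out.println("reached with networkTTL=1: " + reaches(path, 1));
    }
}
```

Under this model, a path that crosses both concentrating brokers needs three forwards, so a network TTL of 5 leaves headroom, while a value of 1 would stop the message at the first concentrating broker.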

The duplex option allows for creating a bidirectional path between two brokers for sending and receiving messages. This blog uses the following configuration:

<networkConnector name="connector_1_to_3" networkTTL="5" uri="static:(ssl://xxxxxxxxx.mq.us-east-2.amazonaws.com:61617)" userName="MQUserName"/>

Connection pooling for producers

In the example Lambda function, a pooled connection factory object is created to optimize connections to the broker:

// Create a conn factory

final ActiveMQSslConnectionFactory connFacty = new ActiveMQSslConnectionFactory(failoverURI);

connFacty.setConnectResponseTimeout(10000);

return connFacty;

// Create a pooled conn factory

final PooledConnectionFactory pooledConnFacty = new PooledConnectionFactory();

pooledConnFacty.setMaxConnections(10);

pooledConnFacty.setConnectionFactory(connFacty);

return pooledConnFacty;

Deploying the example solution

- Create an IAM role for Lambda by following the steps at https://github.com/aws-samples/aws-mq-network-of-brokers#setup-steps.

- Create the network of brokers in the first Region. Navigate to the CloudFormation console and choose Create stack:

- Provide the parameters for the network configuration section:

- In the Amazon MQ configuration section, configure the following parameters. Ensure that these two parameter values are the same in both Regions.

- Configure the following in the Lambda configuration section. Deploy mqproducer and mqconsumer in two separate Regions:

- Create the network of brokers in the second Region. Repeat the stack creation steps above in the second Region. Ensure that the VPC CIDR in region2 is different from the one in region1. Ensure that the user name and password are the same as in the first Region.

- Complete VPC peering and update the route tables.

- Configure the network of brokers and create network connectors:

- In region1, choose Broker3. In the Connections section, copy the endpoint for the openwire protocol.

- In region2 on broker3, set up the network of brokers using the networkConnector configuration element.

- Edit the configuration revision and add a new networkConnector element within the networkConnectors section. Replace the uri value with the endpoint of broker3 in region1.

<networkConnector name="broker3inRegion2_to_broker3inRegion1" duplex="true" networkTTL="5" userName="MQUserName" uri="static:(ssl://b-123ab4c5-6d7e-8f9g-ab85-fc222b8ac102-1.mq.ap-south-1.amazonaws.com:61617)" />

- Send a test message using the mqProducer Lambda function in region1. Invoke the producer Lambda function:

aws lambda invoke --function-name mqProducer out --log-type Tail --query 'LogResult' --output text | base64 -d

- Receive the test message. In region2, invoke the consumer Lambda function:

aws lambda invoke --function-name mqConsumer out --log-type Tail --query 'LogResult' --output text | base64 -d

The message receipt confirms that the message has crossed the network of brokers from region1 to region2.

Cleaning up

To avoid incurring ongoing charges, delete all the resources by following the steps at https://github.com/aws-samples/aws-mq-network-of-brokers#clean-up.

Conclusion

This blog explains the choices when designing a cross-Region or a hybrid network of brokers architecture that spans AWS and your data centers. The example starts with a concentrator topology and enhances that with a cross-Region design to help address network routing and network security requirements.

The blog provides a template that you can modify to suit specific network and distributed application scenarios. It also covers best practices when architecting and designing failover strategies for a network of brokers or when developing producers and consumers client applications.

The Lambda functions used as producer and consumer applications demonstrate best practices in designing and developing ActiveMQ clients. This includes storing and retrieving parameters, such as passwords, from AWS Systems Manager.

For more serverless learning resources, visit Serverless Land.