
Amazon VPC CNI plugin increases pods per node limits

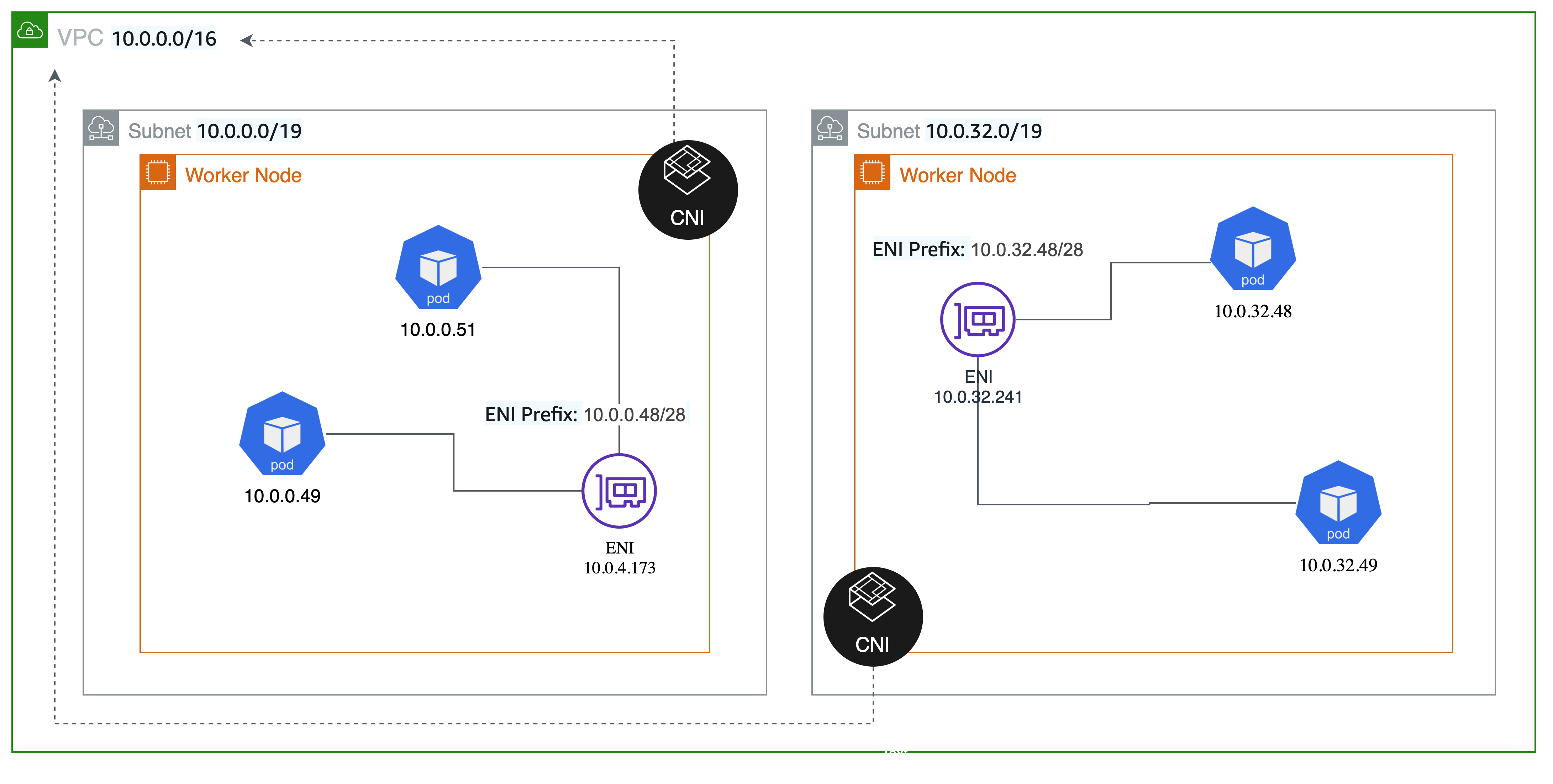

As of August 2021, the Amazon VPC Container Networking Interface (CNI) plugin supports “prefix assignment mode”, enabling you to run more pods per node on AWS Nitro-based EC2 instance types. To achieve higher pod density, the VPC CNI plugin leverages a new VPC capability that enables IP address prefixes to be associated with elastic network interfaces (ENIs) attached to EC2 instances. You can now assign /28 (16 IP addresses) IPv4 address prefixes, instead of assigning individual secondary IPv4 addresses to network interfaces. This significantly increases the number of pods that can be run per node.

In this post, we’ll look under the hood at how the feature is implemented, walk through how to configure VPC CNI with prefix assignment enabled, and discuss use cases and key considerations.

Amazon VPC CNI prefix assignment mode

Pods are the smallest deployable units of computing that can be created and managed in Kubernetes. A pod requires a unique IP address to communicate in the Kubernetes cluster (host networking pods being an exception). By default, Amazon Elastic Kubernetes Service (EKS) runs the VPC Container Networking Interface (CNI) plugin, which assigns an IP address to each pod by managing network interfaces and IP addresses on EC2 instances. The VPC CNI plugin integrates directly with EC2 networking to provide high-performance, low-latency container networking in Kubernetes clusters running on AWS. This plugin assigns an IP address from the cluster’s VPC to each pod. By default, the number of IP addresses available to assign to pods is based on the maximum number of elastic network interfaces and secondary IPs per interface that can be attached to an EC2 instance type.

With prefix assignment mode, the maximum number of elastic network interfaces per instance type remains the same, but you can now configure Amazon VPC CNI to assign /28 (16 IP addresses) IPv4 address prefixes, instead of assigning individual IPv4 addresses to network interfaces. The pods are assigned an IPv4 address from the prefix assigned to the ENI.
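The size of a prefix follows directly from its length: a /28 leaves 32 - 28 = 4 host bits, which gives 16 addresses. The arithmetic can be sketched in shell as follows; the function name `prefix_size` is ours and is used only for illustration.

```shell
#!/bin/sh
# Number of IPv4 addresses covered by a prefix of the given length.
# prefix_size is an illustrative helper, not part of the VPC CNI.
prefix_size() {
  echo $((1 << (32 - $1)))
}

prefix_size 28   # a /28 prefix covers 16 addresses
```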

How it works

Amazon VPC CNI is deployed on worker nodes as a Kubernetes DaemonSet named aws-node. The plugin consists of two primary components: the Local IP Address Management (L-IPAM) daemon and the CNI plugin.

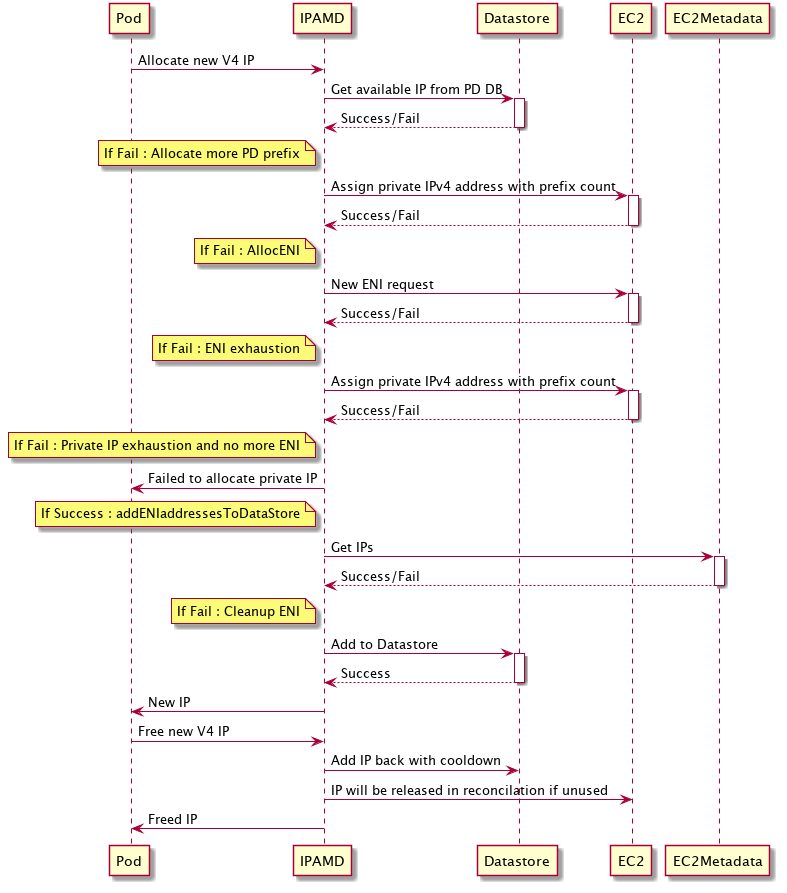

- L-IPAM daemon (IPAMD) is responsible for creating and attaching network interfaces to worker nodes, assigning prefixes to network interfaces, and maintaining a warm pool of IP prefixes on each node for assignment to pods as they are scheduled.

- The CNI plugin is responsible for wiring the host network (for example, configuring the network interfaces and virtual ethernet pairs) and adding the correct network interface to a pod’s namespace. The CNI plugin communicates with IPAMD via Remote Procedure Calls.

The following commands are examples of EC2 API calls that show how VPC CNI interacts with the EC2 control plane.

Under the hood, when a worker node initializes, IPAMD requests that EC2 assign a CIDR block prefix to the primary ENI.

aws ec2 assign-private-ipv4-addresses --network-interface-id eni-38664474 --ipv4-prefix-count 1 --secondary-private-ip-address-count 0

As IP needs increase, more prefixes will be requested for the existing ENI.

aws ec2 assign-private-ipv4-addresses --network-interface-id eni-38664474 --ipv4-prefix-count 1 --secondary-private-ip-address-count 0

When the number of prefixes for an ENI reaches the limit, secondary ENIs will be allocated.

aws ec2 create-network-interface --subnet-id subnet-9d4a7abc --ipv4-prefix-count 1

IPAMD maintains a mapping of the number of IPs consumed in each prefix, and a prefix is released when none of its IPs are in use.

aws ec2 unassign-ipv4-addresses --IPv4-prefix --network-interface-id eni-38664473

Reserving space within a subnet specifically for prefixes can also be useful if there are special requirements to avoid conflicts with other AWS services such as EC2, or to minimize fragmentation within a subnet (we’ll discuss fragmentation in detail later in the blog). The Amazon VPC CNI plugin will automatically use the reserved prefix for IP address assignment. Note that the VPC CNI plugin does not make the API call below automatically; you will need to perform it out of band.

aws ec2 create-subnet-address-reservation --subnet-id subnet-1234 --type prefix --cidr 69.89.31.0/24

When prefix mode is enabled, the following diagram illustrates the pod IP allocation process.

Getting started

In this section, we will create an EKS cluster and configure prefix assignment mode. Prefix assignment mode works with VPC CNI version 1.9.0 or later.

Create an EKS cluster

Use eksctl to create a cluster. Make sure you are using the latest version of eksctl for this example. Note we are instructing eksctl to automatically discover and install the latest version of VPC CNI through EKS add-ons. Copy the following configuration and save it to a file called cluster.yaml:

apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig

metadata:
  name: pd-cluster
  region: us-west-2

iam:
  withOIDC: true

addons:
  - name: vpc-cni
    version: latest

eksctl create cluster -f cluster.yaml

Enabling prefix assignment mode

Use the parameter ENABLE_PREFIX_DELEGATION to configure the VPC CNI plugin to assign prefixes to network interfaces.

kubectl set env daemonset aws-node -n kube-system ENABLE_PREFIX_DELEGATION=true

Confirm that the environment variable is set.

kubectl describe daemonset -n kube-system aws-node | grep ENABLE_PREFIX_DELEGATION

Scale faster, preserve IPv4 addresses

The Amazon VPC CNI supports setting either WARM_PREFIX_TARGET, or one or both of WARM_IP_TARGET and MINIMUM_IP_TARGET. The recommended configuration, which is also the default in the installation manifest, is to set WARM_PREFIX_TARGET to 1. You can set this value manually using the command below if your manifest does not already have it set.

kubectl set env ds aws-node -n kube-system WARM_PREFIX_TARGET=1

When prefix assignment mode is enabled, you cannot set WARM_PREFIX_TARGET, WARM_IP_TARGET, or MINIMUM_IP_TARGET to zero. The right values for these parameters depend on your use case. If set, WARM_IP_TARGET and/or MINIMUM_IP_TARGET will take precedence over WARM_PREFIX_TARGET.

With the default setting, WARM_PREFIX_TARGET will allocate one additional complete (/28) prefix even if the existing prefix is used by only one pod. If the ENI does not have enough space to assign a prefix, a new ENI is created. When a new ENI is created, IPAMD determines how many prefixes are required to maintain the WARM_PREFIX_TARGET, and then allocates those prefixes. New ENIs will be attached only when all prefixes assigned to existing ENIs have been exhausted.
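To make the warm prefix behavior concrete, here is a simplified model of how many prefixes a node would hold for a given pod count. It assumes pod IPs are packed densely into prefixes, which IPAMD does not guarantee, and `prefixes_with_warm_prefix` is a hypothetical helper written for this post rather than IPAMD code.

```shell
#!/bin/sh
# Simplified model of WARM_PREFIX_TARGET: enough /28 prefixes for the
# running pods, plus WARM_PREFIX_TARGET completely free prefixes.
# Assumption: pod IPs fill prefixes densely (16 pods per prefix).
prefixes_with_warm_prefix() {
  pods=$1
  warm_prefix_target=${2:-1}
  in_use=$(( (pods + 15) / 16 ))   # prefixes holding at least one pod IP
  echo $(( in_use + warm_prefix_target ))
}

prefixes_with_warm_prefix 1    # one pod still holds 2 prefixes (32 IPs)
prefixes_with_warm_prefix 17   # a second prefix in use, plus one warm: 3
```

Under this model a single pod already reserves 32 addresses, which is the trade-off the WARM_IP_TARGET settings discussed next are meant to reduce.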

In most cases, the recommended value of 1 for WARM_PREFIX_TARGET will provide a good mix of fast pod launch times while minimizing unused IP addresses assigned to the instance. This behavior is an improvement over the default WARM_ENI_TARGET of 1 with individual secondary IP address mode, where the number of IP addresses allocated to a node is dependent on instance type. For example with a c5.18xlarge, the maximum 50 IP addresses per ENI would be allocated by default. With prefix assignment, IP addresses are always allocated in /28 (16 IP address) chunks, independent of instance type.

If you have a need to further conserve IPv4 addresses per node, with only a minor performance penalty to pod launch time, you can instead use WARM_IP_TARGET and MINIMUM_IP_TARGET settings, which override WARM_PREFIX_TARGET if set. By setting WARM_IP_TARGET to a value less than 16, you can prevent IPAMD from keeping one full free prefix attached.

For a concrete example, let’s imagine a user who estimates that, at steady state, they will have 25 pods deployed per node. They are using m5.large instances, which support 3 ENIs and 9 prefixes per ENI. This user is operating in a VPC with limited IPv4 address space, and is willing to sacrifice some pod launch time performance to minimize unused IP addresses per node, so they decide to set WARM_IP_TARGET and MINIMUM_IP_TARGET instead of relying on the default recommended behavior of WARM_PREFIX_TARGET set to 1. They set MINIMUM_IP_TARGET to 25, because they expect at least 25 pods to be scheduled on every node as a baseline. They also set WARM_IP_TARGET to 5. Keep in mind that with prefix assignment, IPAMD can only allocate IPv4 addresses in chunks of 16.

When a node is started in their cluster, IPAMD will allocate 2 prefixes (32 IP addresses) to the primary ENI (this user is not using CNI custom networking, so only the primary ENI is used in this example) to satisfy the MINIMUM_IP_TARGET of 25. Now 25 pods get scheduled to the worker node, and no action is taken by IPAMD, because enough IP addresses are already allocated to meet the pod requirements, and there are 7 unused IP addresses, which satisfies the WARM_IP_TARGET requirement of 5. Next, their application experiences a spike in traffic, and an additional 12 pods are scheduled to the worker node to meet the demand. When the 3rd of these additional pods gets scheduled, IPAMD will calculate that there are only 4 free IP addresses left on the node, fewer than the WARM_IP_TARGET of 5, and will call EC2 to attach an additional prefix to the ENI. 7 of the 12 additional pods will immediately be given IP addresses from the existing pool; however, the last 5 pods may see a slight delay in starting as the additional prefix gets attached. Once this new steady state is reached, the worker node will still have only the primary ENI attached, with 3 prefixes, for a total of 48 IPs allocated, and 37 pods running on the node.
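The walkthrough above can be reproduced with a small calculation. The sketch below is a simplified model, not IPAMD's actual logic: it assumes the node needs at least MINIMUM_IP_TARGET addresses and at least WARM_IP_TARGET free addresses beyond the running pods, rounded up to whole /28 prefixes. The function name `prefixes_with_ip_targets` is hypothetical.

```shell
#!/bin/sh
# Simplified model: prefixes needed so that at least MINIMUM_IP_TARGET
# addresses are allocated and at least WARM_IP_TARGET of them stay free.
# Arguments: pods, WARM_IP_TARGET, MINIMUM_IP_TARGET (optional, default 0).
prefixes_with_ip_targets() {
  pods=$1
  warm_ip_target=$2
  minimum_ip_target=${3:-0}
  wanted=$(( pods + warm_ip_target ))
  if [ "$wanted" -lt "$minimum_ip_target" ]; then
    wanted=$minimum_ip_target
  fi
  echo $(( (wanted + 15) / 16 ))   # round up to whole /28 prefixes
}

# The m5.large example: WARM_IP_TARGET=5, MINIMUM_IP_TARGET=25.
for count in 0 25 27 28 37; do
  echo "$count pods: $(prefixes_with_ip_targets "$count" 5 25) prefixes"
done
```

The step from 2 to 3 prefixes happens at 28 pods, matching the point in the example where only 4 free addresses remain.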

For more details on the use cases and examples of combinations of these settings, see the VPC CNI documentation. Note that MINIMUM_IP_TARGET is not required when using WARM_IP_TARGET, but it is recommended if you expect a baseline number of pods per node to be scheduled in your cluster. If it were not set in the example above, IPAMD would have allocated only a single prefix on node launch, and an additional prefix would have been attached during the initial scheduling of the 25 pods. An additional network interface only needs to be attached if the maximum number of prefixes is reached on an existing ENI. In the case of this m5.large example, that’s 9 prefixes, or 144 IP addresses, so it’s highly unlikely you’ll need an additional ENI. There is no performance impact from running all pods through one ENI, because traffic still runs through a single underlying network card (only the recently launched p4d.24xlarge instance type supports more than one network card).

It’s more performant to use WARM_IP_TARGET and MINIMUM_IP_TARGET with prefix assignment mode than to set these variables in the older individual secondary IP address networking mode. In that networking mode, you get one-at-a-time control over IP address allocation, but setting WARM_IP_TARGET can drastically increase the number of EC2 API calls needed to achieve that fine-grained allocation, resulting in API throttling and delays attaching any new ENIs or IPs to worker nodes. Allocating an additional prefix to an existing ENI is a faster EC2 API operation than creating and attaching a new ENI to the instance, which gives you better performance characteristics while being frugal with IPv4 address allocation. Attaching a prefix typically completes in under a second, whereas attaching a new ENI can take up to 10 seconds. For most use cases, IPAMD will only need a single ENI per worker node when running in prefix assignment mode. If you can afford (in the worst case) up to 15 unused IPs per node, we strongly recommend using the newer prefix assignment networking mode, and realizing the performance and efficiency gains that come with it.

Calculate max pods

When using VPC CNI, the maximum number of pods that can run per node depends on VPC CNI settings. As part of this launch, we’ve updated EKS managed node groups to automatically calculate and set the recommended max pods value based on instance type and VPC CNI configuration values, as long as you are using at least VPC CNI version 1.9. The max pods value will be set on any newly created managed node groups, or node groups updated to a newer AMI version. This helps both with prefix assignment use cases and with CNI custom networking, where you previously needed to manually set a lower max pods value.

Important: Managed node groups look for the VPC CNI plugin installed in the kube-system namespace with DaemonSet name aws-node and container name aws-node. If you’ve customized your VPC CNI installation to run elsewhere, the managed node groups auto max pod calculation process will be skipped, and the default value built into the EKS optimized AMI will remain set.

If you are using self-managed node groups or a managed node group with a custom AMI ID, you must manually compute the recommended max pods value. Let us see how the max pods value is calculated in prefix assignment mode. Note that the total number of prefixes and private IP addresses is limited by the number of private IPs allowed on the instance. For example, ENIs on an m5.large instance have a limit of 10 slots, one of which is needed for the ENI’s primary IP address, which leaves 9 slots that can be used for /28 prefixes.

You can use the following formula to determine the maximum number of pods you can deploy on a node when Prefix IP mode is enabled.

Maximum pods = number of network interfaces for the instance type × (number of slots per network interface - 1) × 16

For backwards compatibility reasons, the default max pods value per instance type in the EKS optimized Amazon Linux AMI will not change. When using prefix attachments with smaller instance types like the m5.large, you’re likely to exhaust the instance’s CPU and memory resources long before you exhaust its IP addresses, and max_pods might differ from the result of the above formula.
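As a quick check of the formula above, the following shell sketch computes the address capacity for a given ENI count and slots per ENI. `max_prefix_ips` is an illustrative name of ours, and the result is the raw IP capacity, not the recommended max pods value.

```shell
#!/bin/sh
# Raw pod IPv4 capacity in prefix assignment mode:
# ENIs x (slots per ENI - 1) x 16 addresses per /28 prefix.
max_prefix_ips() {
  enis=$1
  slots_per_eni=$2
  echo $(( enis * (slots_per_eni - 1) * 16 ))
}

max_prefix_ips 3 10   # m5.large: 3 ENIs with 10 slots each gives 432
```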

To help simplify this process for users of self-managed node groups and managed node groups with custom AMIs, we’ve introduced a max-pods-calculator.sh script that finds the Amazon EKS recommended maximum number of pods based on your instance type and VPC CNI configuration settings.

Download max-pods-calculator.sh.

curl -o max-pods-calculator.sh https://raw.githubusercontent.com/awslabs/amazon-eks-ami/master/files/max-pods-calculator.sh

Mark the script as executable on your computer.

chmod +x max-pods-calculator.sh

Run the script to find the max_pods value.

./max-pods-calculator.sh --instance-type m5.large --cni-version 1.9.0 --cni-prefix-delegation-enabled

The maximum number of pods recommended by Amazon EKS for an m5.large instance is 110.

The number of IPv4 addresses that can be attached to an m5.large with prefix assignment enabled is actually much higher: 3 ENIs × 9 prefixes per ENI × 16 IPs per prefix = 432 IPs. However, the max pods calculator script limits the return value to 110 based on Kubernetes scalability thresholds and recommended settings. If your instance type has more than 30 vCPUs, this limit jumps to 250, a number based on internal EKS scalability team testing. Prefix assignment mode is especially relevant for users of CNI custom networking, where the primary ENI is not used for pods. With prefix assignment, you can still attach at least 110 IPs on nearly every Nitro instance type, even without the primary ENI used for pods. In the example above, an m5.large with CNI custom networking and prefix assignment enabled can still be allocated 288 IPv4 addresses (2 ENIs × 9 prefixes per ENI × 16 IPs per prefix).
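The capping rule described above can be sketched as follows. This is our own simplified reading of the paragraph, not the logic of max-pods-calculator.sh itself, and `cap_max_pods` is a hypothetical helper.

```shell
#!/bin/sh
# Cap the raw IP capacity at the recommended pod limit: 110 pods,
# or 250 for instance types with more than 30 vCPUs.
# Arguments: raw IP capacity, vCPU count.
cap_max_pods() {
  ips=$1
  vcpus=$2
  if [ "$vcpus" -gt 30 ]; then limit=250; else limit=110; fi
  if [ "$ips" -lt "$limit" ]; then echo "$ips"; else echo "$limit"; fi
}

all_enis_ips=$(( 3 * 9 * 16 ))            # m5.large, all 3 ENIs: 432
custom_networking_ips=$(( 2 * 9 * 16 ))   # primary ENI excluded: 288

cap_max_pods "$all_enis_ips" 2            # m5.large has 2 vCPUs: 110
cap_max_pods "$custom_networking_ips" 2   # still 110 with custom networking
```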

Create a managed node group

Prefix assignment mode is supported on AWS Nitro-based EC2 instance types. Choose one of the Amazon EC2 Nitro instance types running Amazon Linux 2. This capability is not supported on Windows.

eksctl create nodegroup \
  --cluster pd-cluster \
  --region us-west-2 \
  --name pg-nodegroup \
  --node-type m5.large \
  --nodes 1

Deploy Sample Application

Let us now deploy a sample NGINX application with replica size 80, to demonstrate Prefix IP assignment.

cat <<EOF | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  selector:
    matchLabels:
      app: nginx
  replicas: 80
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: public.ecr.aws/nginx/nginx:1.21
        ports:
        - containerPort: 80
EOF

List the pods created by the deployment:

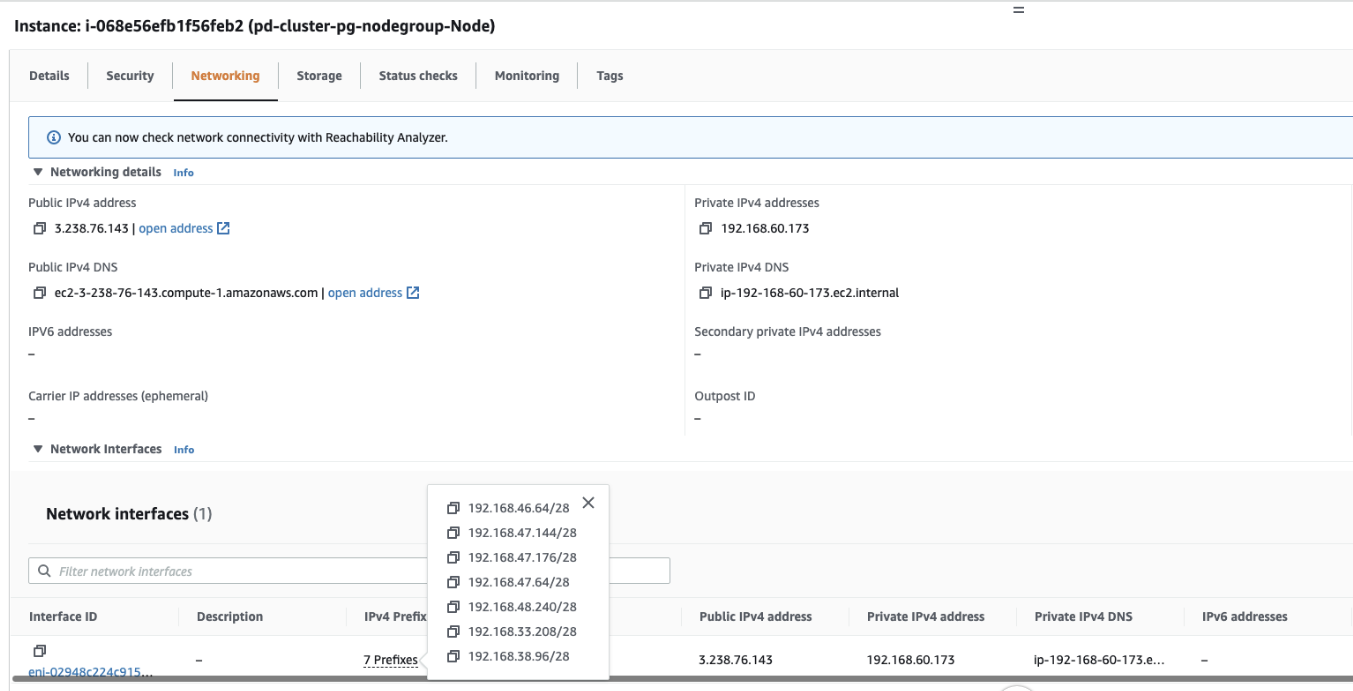

kubectl get pods -l app=nginx

You can now open Amazon EC2 in the AWS Management Console and navigate to the instance pd-cluster-pg-nodegroup-Node. Under the networking tab, you’ll notice IPv4 prefixes assigned to the ENI. Prefix and ENI counts may vary according to the number of replicas/pods. In this case, the replica count is 80, which requires a minimum of five /28 prefixes.

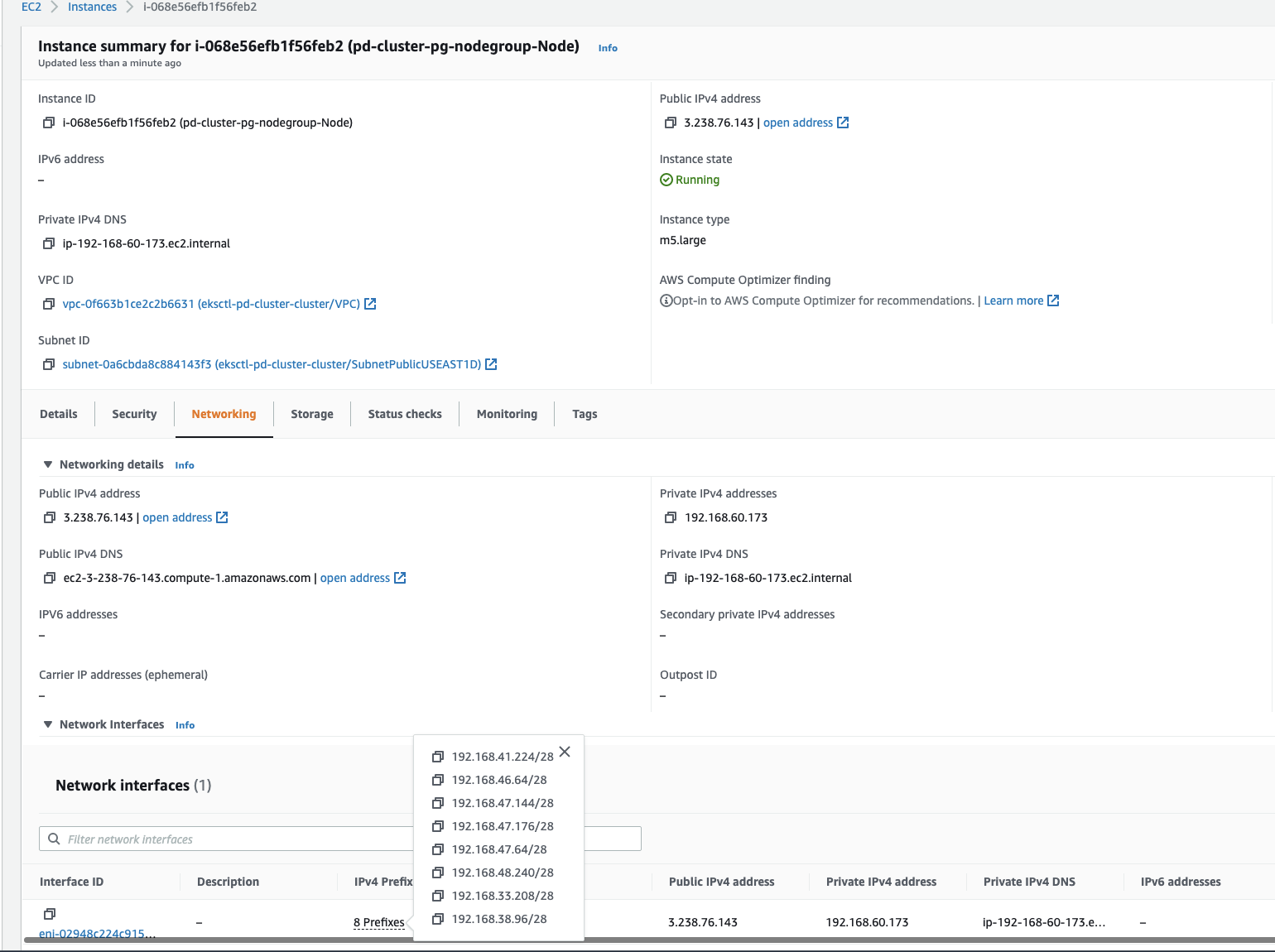

Let’s scale the deployment to observe a new IPv4 prefix being added to the ENI.

kubectl scale deployment.v1.apps/nginx-deployment --replicas=110

In the image above, you will see a new IPv4 prefix attached to the ENI.
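If you prefer the command line to the console, the attached prefixes can also be listed with the AWS CLI. The sketch below assumes the AWS CLI is installed and configured for the cluster's region; the function name `list_instance_prefixes` and the `AWS_CLI` override are our own illustrative names, and the instance ID is a placeholder you must supply.

```shell
#!/bin/sh
# List the /28 prefixes attached to the ENIs of one EC2 instance.
# AWS_CLI can be overridden (for example with "echo") to preview the
# command without calling AWS.
list_instance_prefixes() {
  instance_id=$1
  ${AWS_CLI:-aws} ec2 describe-network-interfaces \
    --filters "Name=attachment.instance-id,Values=${instance_id}" \
    --query 'NetworkInterfaces[].Ipv4Prefixes[].Ipv4Prefix' \
    --output text
}

# Usage (the instance ID is a placeholder):
#   list_instance_prefixes i-0123456789abcdef0
```

Running it before and after the scale-up should show the additional prefix appear in the list.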

Key considerations

Subnet fragmentation

When EC2 allocates a /28 IPv4 prefix to an ENI, it has to be a contiguous block of IP addresses from your subnet. If the subnet that the prefix is generated from is fragmented (highly used subnet with scattered secondary IP addresses), the prefix attachment may fail, and you will see the following error message in VPC CNI logs:

To avoid fragmentation and have sufficient contiguous space for creating prefixes, you can use VPC Subnet CIDR reservations, a feature introduced recently along with prefix assignment. With this feature, you can reserve IP space within a subnet for exclusive use by prefixes. EC2 will not use the space to assign individual IP addresses to ENIs or EC2 instances, avoiding fragmentation of this space. Once you create a reservation, the VPC CNI plugin will call EC2 APIs to assign prefixes that are automatically allocated from the reserved space.

You can create a prefix reservation even if some of the space in the reservation range is currently taken up by secondary IP addresses. Once IP addresses from that space are released, EC2 won’t reassign them. As a best practice, we recommend creating a new subnet, reserving space for prefixes, and then enabling prefix assignment with VPC CNI for worker nodes running in that subnet. If the new subnet is dedicated only to pods running in your EKS cluster with VPC CNI prefix assignment enabled, then you can skip the prefix reservation step.
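To see why scattered secondary IP addresses block prefix allocation, consider a toy model of a /24 subnet. The sketch below counts how many aligned /28 blocks remain completely free given the last octets of the addresses already in use. It ignores the addresses AWS reserves in every subnet, and `free_slash28_blocks` is a hypothetical helper written only for this illustration.

```shell
#!/bin/sh
# Count the aligned /28 blocks of a /24 subnet that are completely free.
# Arguments: last octets (0-255) of the addresses already in use.
free_slash28_blocks() {
  free=0
  block=0
  while [ "$block" -lt 16 ]; do
    start=$(( block * 16 ))
    end=$(( start + 15 ))
    in_use=0
    for octet in "$@"; do
      if [ "$octet" -ge "$start" ] && [ "$octet" -le "$end" ]; then
        in_use=1
      fi
    done
    if [ "$in_use" -eq 0 ]; then
      free=$(( free + 1 ))
    fi
    block=$(( block + 1 ))
  done
  echo "$free"
}

# 16 addresses packed into one block leave 15 free /28 blocks.
free_slash28_blocks 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15
# The same number of addresses, one per block, leave none.
free_slash28_blocks 0 16 32 48 64 80 96 112 128 144 160 176 192 208 224 240
```

Both calls use only 16 of 256 addresses, yet the second leaves no room for a single prefix, which is the situation a subnet CIDR reservation is meant to prevent.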

Upgrade/downgrade behavior

Prefix mode works with VPC CNI version 1.9.0 and later. Downgrading of Amazon VPC CNI add-on to a version lower than 1.9.0 must be avoided once the prefix mode is enabled and prefixes are assigned to ENIs. You must delete and recreate nodes if you decide to downgrade the VPC CNI.

We highly recommend creating new nodes and node groups to increase the number of available IP addresses, then cordoning and draining all existing nodes to safely evict your existing pods. Pods on the new nodes will be assigned an IP address from a prefix assigned to the ENI. After you confirm the pods are running, you can delete the old nodes and node groups.
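The cordon and drain step could look like the sketch below. It assumes kubectl 1.20 or later (for the `--delete-emptydir-data` flag) and that the new node group is already running; `drain_old_nodes` and the `KUBECTL` override are illustrative names of ours, and the node name in the usage comment is a placeholder.

```shell
#!/bin/sh
# Cordon and drain each old node so its pods are rescheduled onto the
# new nodes. KUBECTL can be overridden (for example with "echo") to
# preview the commands as a dry run.
drain_old_nodes() {
  for node in "$@"; do
    ${KUBECTL:-kubectl} cordon "$node" || return 1
    ${KUBECTL:-kubectl} drain "$node" \
      --ignore-daemonsets --delete-emptydir-data || return 1
  done
}

# Usage (the node name is a placeholder):
#   drain_old_nodes ip-192-168-1-10.us-west-2.compute.internal
```

`--ignore-daemonsets` is needed because aws-node itself runs as a DaemonSet on every node, and `--delete-emptydir-data` discards any emptyDir volumes on the drained node, so check that your workloads tolerate that before using it.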

Resource requests

Another important consideration to keep in mind is setting pod resource requests and limits. As a best practice, you should always set at least pod resource requests in your workload specifications. If you don’t set these values, you may see resource contention on nodes given the increase in pods that can be scheduled with prefix assignment enabled. With prefix assignment, IPv4 addresses are no longer the factor that limits pods per node when using the VPC CNI plugin.

Comparison to security groups for pods

Prefix assignment is a good networking mode choice if your workload requirements can be met with a node-level security group configuration. With prefix assignment, multiple pods share an ENI and the security groups associated with that ENI. If you have requirements where each pod needs distinct security groups, then you can leverage the security groups for pods feature. You need to choose your networking mode/strategy based on your use case and requirements. If pod launch time and pod density on smaller instance types are important to you, and you can work with node-level security groups, then use prefix assignment. If you have security requirements where pods need a specific set of security groups, then apply a SecurityGroupPolicy custom resource and pods will be allocated dedicated branch network interfaces with those security groups applied. A pod is wired up to the primary IP address of the branch interface. Currently, no secondary IP addresses or prefixes are allocated to branch interfaces.

The max pods calculator script is not relevant for security groups for pods, because the max number per node is instead limited through Kubernetes extended resources, where the number of branch network interfaces is advertised as an extended resource, and any pod that matches a SecurityGroupPolicy is injected by a webhook for a branch interface resource request. When the pod with a branch interface requirement is scheduled, then a separate component, the VPC resource controller (running on the EKS control plane), calls an EC2 API to create and attach a branch interface to the worker node. The max number of branch interfaces per instance type can be found in the EKS documentation, and more details on this behavior can be found in the launch blog. Note that pods allocated branch network interfaces and pods allocated an IP from an ENI prefix can co-exist on the same node.

At the moment, there is no option to get the best of both worlds – pod level security groups with high density on small instance types and fast pod launch time. One potential idea is described in this containers roadmap issue, which we are researching. However, that becomes a tricky scheduling problem.

Cleanup

To avoid future costs, delete the Amazon EKS cluster created for this exercise. This action, in addition to deleting the cluster, will also delete the node group.

eksctl delete cluster --name pd-cluster

Conclusion

In the container roadmap issues 138 and 1557, you asked us for more details on prefix assignment and the various configuration options possible with the Amazon VPC CNI plugin. In this post, we have discussed prefix assignment mode in detail, summarized VPC CNI workflows, and described installation and setup choices. The key considerations and use cases sections provide guidance on using prefix assignment mode.

We covered how to use prefix assignment mode on Amazon EKS to increase pod density. Additionally, we are constantly learning from your use of prefix assignment and planning on upgrades to VPC CNI’s graceful retry methods when dealing with fragmented subnets to address prefix assignment failures.

You can visit the EC2 user guide to learn more about assigning prefixes to Amazon EC2 network interfaces, and the VPC guide for subnet CIDR reservations. Please visit the Amazon EKS user guide for prefix assignment installation instructions and for any recent product improvements. You may provide feedback on the VPC CNI plugin, evaluate our roadmaps, and suggest new features on the AWS Containers Roadmap, hosted on GitHub.