
Deep Dive on AWS App Runner VPC Networking

AWS App Runner, introduced in 2021, is a fully managed service for running web applications and API servers. App Runner greatly simplifies building and running secure web server applications with little to no infrastructure in your account. You provide the source code or a container image, and App Runner builds and deploys your application containers in the AWS Cloud, automatically scaling and load-balancing requests across them behind the scenes. All you see is a service URL against which HTTPS requests can be made.

Yesterday, AWS announced VPC support for App Runner services. Until the launch of this feature, applications hosted on App Runner could only connect to public endpoints on the internet. With this new capability, applications can now connect to private resources in your VPC, such as an Amazon RDS database, Amazon ElastiCache cluster, or other private services hosted in your VPC. This blog post takes you behind the scenes of App Runner to detail the network connection between your App Runner hosted application and your VPC, specifically the network interfaces created in your VPC and the traffic that flows over them.

Before we dive into the details of VPC access, let us first understand the inner workings of App Runner in the default public networking mode that existed prior to the launch of this feature.

Public Networking Mode

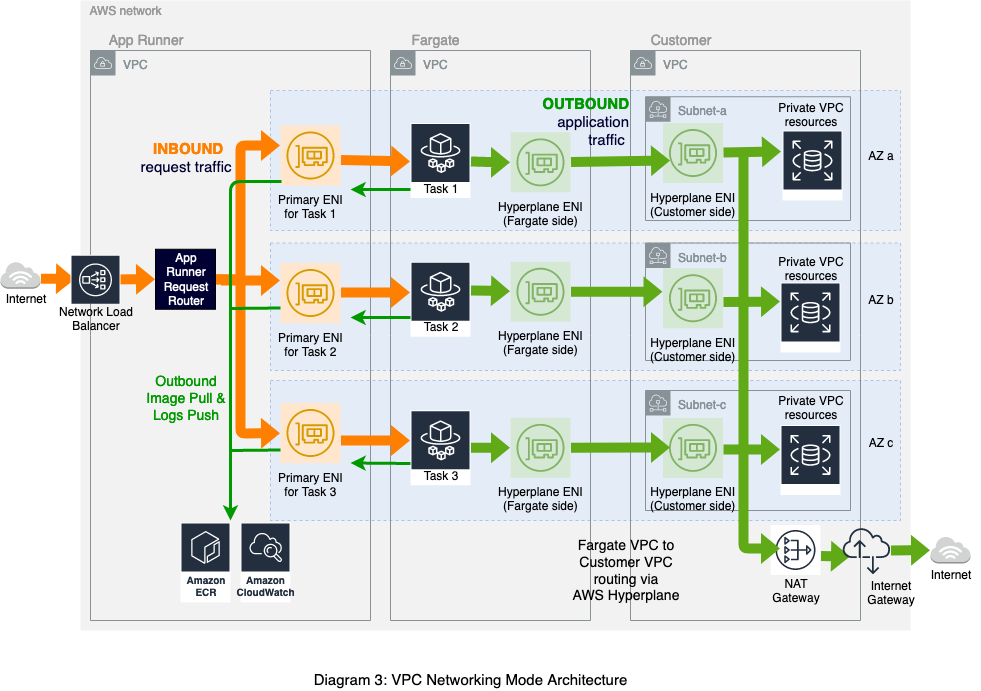

When you create a service, behind the scenes, App Runner deploys your application containers as AWS Fargate tasks orchestrated by Amazon Elastic Container Service in an App Runner-owned VPC. Within the App Runner VPC are an internet-facing Network Load Balancer (NLB) that receives incoming requests and a layer-7 request router that forwards each request to an appropriate Fargate task. The Fargate tasks themselves are hosted in Firecracker microVMs running in a Fargate-owned VPC. The tasks are launched with awsvpc networking mode and are cross-connected to the App Runner VPC. This means that each Fargate task is attached to an elastic network interface (ENI) in the App Runner VPC, which we call the primary ENI of the task. The request router addresses each task privately via its primary ENI’s private IP address. In fact, the ENI is bi-directional, and all network traffic to and from the task traverses it. There are four distinct flows of traffic:

- Request/Response traffic: Incoming requests enter the App Runner VPC through the internet-facing load balancer. They are forwarded to the request router, which further routes the request to a particular Fargate task through its primary ENI (bold orange arrows in Diagram 1). The response follows the same path back.

- Outbound application traffic: All outbound traffic initiated by the application originates from the task’s primary ENI and is routed to the internet via a NAT Gateway and an internet gateway provisioned in the App Runner VPC (bold green arrows in Diagram 1). In this mode, outbound traffic from your application cannot reach private endpoints within your VPC and must be destined for a public endpoint.

- Image pull: New Fargate tasks may be spun up during service creation, updates, deployments, or scale-up activities. During task bootstrap, the application image is pulled from Amazon ECR over its primary ENI (narrow green arrow in Diagram 1).

- Logs push: Application logs are pushed to Amazon CloudWatch via the primary ENI as well (narrow green arrow in Diagram 1).

As you can see, in the public networking mode, all traffic flows are supported entirely behind the scenes through the App Runner VPC. As a customer, you don’t need to provision a VPC or any networking resources in your account in order to deploy an application that only requires outbound internet access and no VPC access.

However, many applications operate in an ecosystem of private resources and require access to a customer-owned VPC. In the following sections, we will walk through how we enabled this capability on the existing architecture.

VPC Networking Mode Introduction

To deploy an application on App Runner that has outbound access to a VPC, you must first create a VPCConnector by specifying one or more subnets and security groups to associate with the application. You can then reference the VPCConnector in a CreateService or UpdateService request, for example via the CLI.
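As an illustration of the two steps, the following minimal Python sketch builds request bodies in the shape accepted by the App Runner CreateVpcConnector and CreateService APIs. The helper function names, the connector name, the subnet and security group IDs, the image identifier, and the ARN are all placeholder assumptions, and the sketch makes no AWS call.

```python
import json


def vpc_connector_request(name, subnet_ids, security_group_ids):
    """Build a CreateVpcConnector request body from caller-supplied IDs."""
    if not subnet_ids:
        raise ValueError("at least one subnet is required")
    return {
        "VpcConnectorName": name,
        "Subnets": list(subnet_ids),
        "SecurityGroups": list(security_group_ids),
    }


def service_request(service_name, image_identifier, vpc_connector_arn):
    """Build a CreateService request body whose egress uses a VPC connector."""
    return {
        "ServiceName": service_name,
        "SourceConfiguration": {
            "ImageRepository": {
                "ImageIdentifier": image_identifier,
                # ECR_PUBLIC is assumed here so that no access role is needed.
                "ImageRepositoryType": "ECR_PUBLIC",
            }
        },
        "NetworkConfiguration": {
            "EgressConfiguration": {
                "EgressType": "VPC",
                "VpcConnectorArn": vpc_connector_arn,
            }
        },
    }


if __name__ == "__main__":
    # All identifiers below are hypothetical placeholders.
    connector = vpc_connector_request(
        "my-connector",
        ["subnet-aaa", "subnet-bbb", "subnet-ccc"],
        ["sg-connector"],
    )
    service = service_request(
        "my-service",
        "public.ecr.aws/example/app:latest",
        "arn:aws:apprunner:region:account:vpcconnector/my-connector/1/placeholder",
    )
    print(json.dumps(connector, indent=2))
    print(json.dumps(service, indent=2))
```

With boto3, bodies like these could be passed as keyword arguments to the `apprunner` client's `create_vpc_connector` and `create_service` methods. With the CLI, the equivalent commands are `aws apprunner create-vpc-connector` and `aws apprunner create-service`.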

The Fargate tasks spun up behind the scenes are evenly spread across the zones that map to the VPCConnector subnets. We recommend selecting subnets across at least three Availability Zones (or all supported Availability Zones if the Region has fewer than three zones) for high availability.

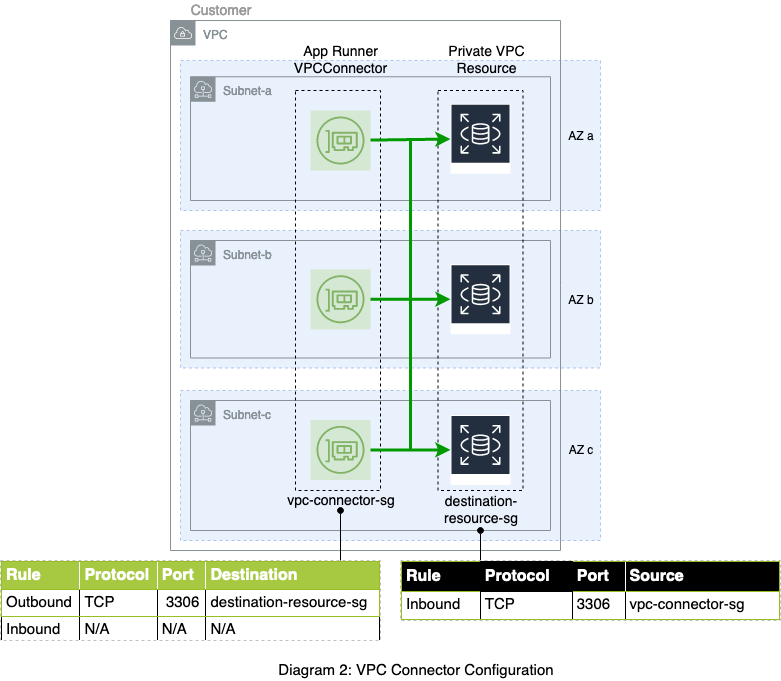

With regard to the security groups, it is important to note that the VPCConnector is only used for outbound communication from your application (more on why this is so later). Thus, the inbound rules of the security group(s) are not relevant and are effectively ignored. What matters are the outbound rules, which should allow communication to the desired destination endpoints. You must also ensure that any security group(s) associated with the destination resource have appropriate inbound rules to allow traffic from the VPCConnector security group(s), as shown in Diagram 2.
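The pairing of rules described above can be sketched as two rule entries in the `IpPermissions` shape used by the EC2 security group APIs. This is a minimal sketch: the function names, the group IDs, and the choice of TCP port 5432 (a PostgreSQL database) are illustrative assumptions, not values from App Runner.

```python
def connector_egress_rule(destination_sg_id, port):
    """Outbound rule for the VPCConnector security group:
    allow TCP on `port` to members of the destination security group."""
    return {
        "IpProtocol": "tcp",
        "FromPort": port,
        "ToPort": port,
        "UserIdGroupPairs": [{"GroupId": destination_sg_id}],
    }


def destination_ingress_rule(connector_sg_id, port):
    """Inbound rule for the destination resource's security group:
    allow TCP on `port` from members of the VPCConnector security group."""
    return {
        "IpProtocol": "tcp",
        "FromPort": port,
        "ToPort": port,
        "UserIdGroupPairs": [{"GroupId": connector_sg_id}],
    }


if __name__ == "__main__":
    # Hypothetical group IDs and port.
    print(connector_egress_rule("sg-database", 5432))
    print(destination_ingress_rule("sg-connector", 5432))
```

Referencing the peer security group rather than a CIDR range keeps the rules valid as the Hyperplane ENI addresses in your subnets change. Entries like these could be supplied to the EC2 `authorize_security_group_egress` and `authorize_security_group_ingress` calls respectively.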

VPC Networking Mode Under the Hood

Now let’s go back under the hood to understand how the connection is established between your VPC and your application containers running within the Fargate VPC. The technology we use for this is AWS Hyperplane. AWS Hyperplane is an internal service that powers many AWS networking offerings. Specifically, it has supported inter-VPC connectivity for AWS PrivateLink and AWS Lambda VPC networking, and it is a perfect fit for our use case of establishing a connection between your VPC and the Fargate VPC that hosts the application tasks. More about why we chose Hyperplane in the next section, but first, let’s understand the architecture of the VPC networking mode.

When you create a VPCConnector and associate it with a service, a special type of ENI, referred to as a Hyperplane ENI, is created in your subnet(s). A similar Hyperplane ENI exists in the Fargate subnet that hosts the task microVM. When a task is launched, a tunnel is established between the two Hyperplane ENIs, thus creating a VPC-to-VPC connection.

A Hyperplane ENI created in your VPC looks very similar to a regular ENI, in that it will be allocated a private IP address from the subnet’s CIDR range. However, there are a few key differences to note. Hyperplane ENIs are managed network resources whose lifecycle is controlled by App Runner. While you can view the ENI and access its flow logs, you cannot detach or delete the ENI. Another distinguishing factor about the Hyperplane ENI is that it is unidirectional and only supports outbound traffic initiated by the application. Thus, you cannot send any inbound requests to the Hyperplane ENI’s IP address. Incoming requests to your application will continue to come through the service URL. In fact, let us re-examine all the traffic flows in this new set-up:

- Request/Response traffic: The Fargate task continues to be configured with its primary ENI in the App Runner VPC. Requests sent to the service URL will follow the same route as before through the NLB, the request router, and to the Fargate task via its primary ENI, all within the App Runner VPC (bold orange arrows in Diagram 3). This is the reason that the inbound rules of the VPCConnector security groups are not relevant, since inbound traffic does not pass through your VPC.

- Outbound application traffic: For outbound traffic, the Fargate task is configured with a secondary network interface in addition to the primary ENI. All network traffic initiated by the application container is routed to this secondary interface, from where it is forwarded to the Hyperplane ENI on the Fargate side, through the Hyperplane dataplane, and over to the Hyperplane ENI in the customer VPC. Once in the customer VPC, the traffic is forwarded to the destination endpoint per the customer VPC routing table (bold green arrows in Diagram 3). Note that if you have traffic destined for the internet, you must enable the appropriate path via NAT and Internet Gateways in your VPC.

- Image pull: ECR image pull continues to flow over the task’s primary ENI in the App Runner VPC (narrow green arrow in Diagram 3). You do not need to enable any special paths in your VPC for image pull.

- Logs push: Similarly, CloudWatch logs push also continues to occur over the task’s primary ENI in the App Runner VPC, and no special paths are needed in your VPC (narrow green arrow in Diagram 3).
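The four flows in the two networking modes can be summarized in a small lookup. This is a minimal sketch of the description above, not App Runner code: the `Mode` enum, the flow names, and the returned labels are names chosen here for illustration.

```python
from enum import Enum


class Mode(Enum):
    PUBLIC = "public"
    VPC = "vpc"


FLOWS = ("request_response", "outbound_application", "image_pull", "logs_push")

PRIMARY_ENI = "primary ENI (App Runner VPC)"
HYPERPLANE_PATH = "secondary interface -> Hyperplane ENI (customer VPC)"


def interface_for(flow, mode):
    """Return which interface carries `flow` in the given networking mode."""
    if flow not in FLOWS:
        raise ValueError(f"unknown flow: {flow}")
    # Only application-initiated outbound traffic moves to the customer VPC;
    # every other flow stays on the task's primary ENI in both modes.
    if flow == "outbound_application" and mode is Mode.VPC:
        return HYPERPLANE_PATH
    return PRIMARY_ENI


if __name__ == "__main__":
    for mode in Mode:
        for flow in FLOWS:
            print(f"{mode.value:6} {flow:22} {interface_for(flow, mode)}")
```

The lookup makes the key point explicit: switching to VPC networking mode changes the path of exactly one of the four flows.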

Benefits of AWS Hyperplane for VPC Networking Mode

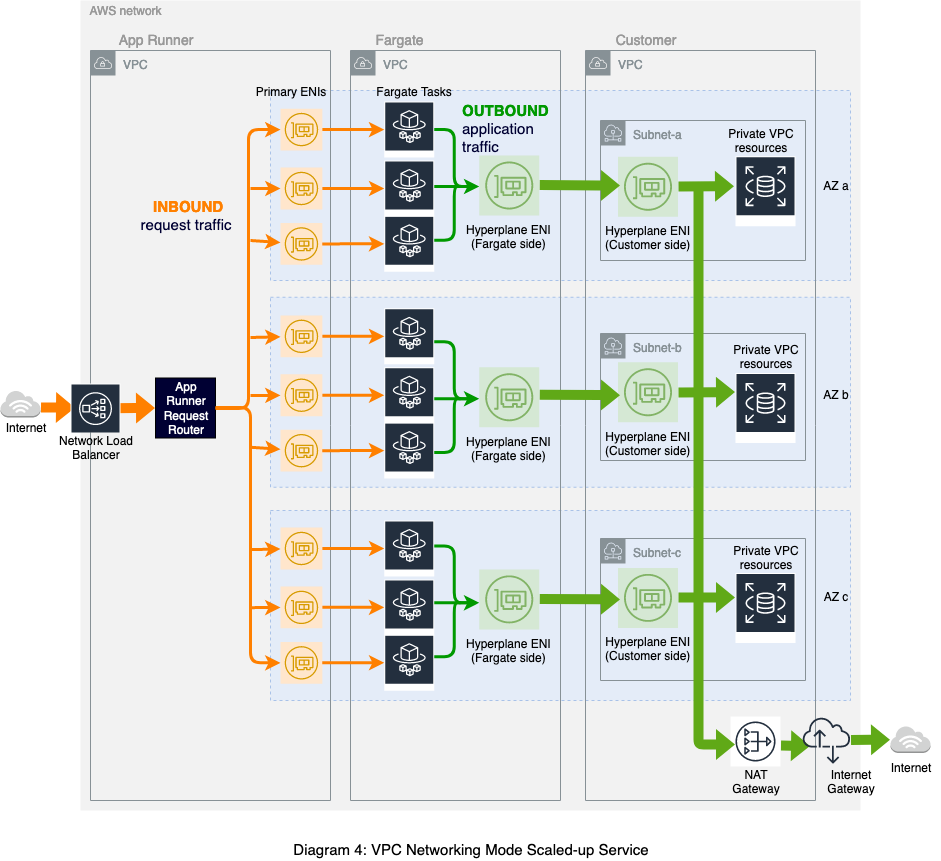

So why did we choose to use Hyperplane instead of regular ENIs to establish connectivity to your VPC? Hyperplane provides high-throughput, low-latency network function virtualization capabilities. With Hyperplane ENIs, we achieve a higher degree of sharing compared to regular ENIs, which would be created one per Fargate task. Each Hyperplane ENI is tied to the combination of a subnet and one or more security groups. Fargate tasks that share the same combination can send traffic through the same Hyperplane ENI. In our case, the security group(s) specified in the VPCConnector apply equally to all Fargate tasks belonging to an App Runner service. Since there can be multiple subnets in the VPCConnector, a Hyperplane ENI is created per subnet. Fargate tasks are spread evenly across these subnets, and all tasks within a given subnet can send traffic through the shared Hyperplane ENI.

The previous diagram (Diagram 3) shows a simplified view of only one Fargate task per subnet and Hyperplane ENI. However, the benefits of Hyperplane are most visible when your service is scaled up, as shown in Diagram 4. Although there is a one-to-one relationship between a Fargate task and its primary ENI in the App Runner VPC (which is a regular ENI), there is only one Hyperplane ENI per subnet in the customer VPC. All Fargate tasks within that Availability Zone send traffic through the same shared ENI in your VPC.

This architecture has a few benefits for you:

- Reduced IP address consumption in your VPC: Because the Hyperplane ENI is shared, typically only a handful of ENIs are required per service, even as we scale up the number of Fargate tasks required to handle request load. In fact, if you reference the same VPCConnector across multiple App Runner services, the underlying Hyperplane ENI(s) will be shared across these services, resulting in efficient use of the IP space in your VPC.

- Reduced task bootstrap time: The Hyperplane ENI is created once when the service is associated with a VPCConnector. Any subsequent tasks launched for scale-up or deployment simply establish a tunnel to the pre-created ENI, and we don’t have to pay a latency tax for provisioning the secondary ENI on the task launch path.
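To make the IP address savings concrete, the following minimal sketch compares the number of addresses consumed in your VPC under two models: a hypothetical design with one regular ENI per task, and the shared design described above. It assumes exactly one Hyperplane ENI per connector subnet and a round-robin spread of tasks. Both are simplifications for illustration, and the function names and subnet IDs are made up.

```python
def spread_tasks(task_count, subnet_ids):
    """Assign tasks to subnets round-robin and return the per-subnet counts."""
    if not subnet_ids:
        raise ValueError("at least one subnet is required")
    placement = {subnet: 0 for subnet in subnet_ids}
    for i in range(task_count):
        placement[subnet_ids[i % len(subnet_ids)]] += 1
    return placement


def ips_with_per_task_enis(task_count):
    """Hypothetical alternative: every task gets its own ENI in your VPC."""
    return task_count


def ips_with_shared_hyperplane_enis(subnet_ids):
    """Shared model: one Hyperplane ENI per subnet, however many tasks run."""
    return len(set(subnet_ids))


if __name__ == "__main__":
    subnets = ["subnet-a", "subnet-b", "subnet-c"]  # placeholder IDs
    for tasks in (3, 10, 30):
        print(
            tasks,
            spread_tasks(tasks, subnets),
            ips_with_per_task_enis(tasks),
            ips_with_shared_hyperplane_enis(subnets),
        )
```

Under these assumptions, scaling from 3 to 30 tasks grows the per-task model from 3 to 30 addresses, while the shared model stays at 3.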

Conclusion

We’re excited to bring you this capability that allows App Runner applications to connect to private resources in your VPC using the power and scalability of AWS Hyperplane. This has been one of the most heavily requested features for this service, and we look forward to hearing your feedback on the App Runner roadmap on GitHub. To get started, check out our blog post which walks you through the setup of an example web application that connects to a private RDS database using this feature.

Follow me on Twitter @ArchanaSrikanta