Containers

How to automate Amazon EKS preventative controls in CI/CD using CDK and OPA/Conftest

Amazon Elastic Kubernetes Service (Amazon EKS) is a fully managed service that helps customers run their Kubernetes clusters at scale by minimizing the effort required to operate a Kubernetes control plane. AWS customers are accelerating EKS adoption to run large-scale Kubernetes workloads. As a result, customers face challenges in enforcing security policies at scale to protect Kubernetes workloads, especially when implementing preventative controls. In this context, it’s important to automate security guardrail controls in CI/CD pipelines for Kubernetes workloads, so that any setting in the Kubernetes manifest files that is not compliant with control policies can be detected early in the development cycle, well before deployment.

In the context of Amazon EKS adoption, threats can be grouped at two levels: the infrastructure level (the Kubernetes API server and node components) and the workloads that run on top. Customers can and should implement automatic compliance checks at the early stages of the Software Development Life Cycle (SDLC), i.e. in shift-left and DevOps mode. At the infrastructure level, customers can implement automatic guardrail checks by combining EKS and other AWS services with infrastructure code analysis tools, such as CloudFormation Guard. At the Kubernetes workload level, we can also automate security compliance checks to ensure Kubernetes manifests are compliant with enterprise security control policies before they pass through the CI/CD pipelines.

In this post, we demonstrate how to automate preventative controls against Kubernetes workloads. Every component of this solution is deployed via the AWS Cloud Development Kit (CDK) as the infrastructure-as-code tool, including the EKS cluster, the Kubernetes workloads and a CI/CD pipeline. Inside the CI/CD pipeline, the solution uses Conftest, an open-source tool within the CNCF Open Policy Agent (OPA) suite, to automate preventative controls before the deployment step. Customers can reuse these CDK code packages to build a CI/CD pipeline equipped with Kubernetes preventative controls. They can also combine it with other preventative controls, e.g. controls within the CI/CD pipeline that scan the IaC (CloudFormation/Terraform) and fail the deployment when deviations are detected, and/or with run-time controls, such as Gatekeeper, to provide defence in depth and full SDLC coverage for their EKS workloads.

Conftest is a utility for testing structured configuration data, in particular Kubernetes manifest files, against enterprise security control policies. The enterprise security control policies are codified in Rego, the policy language of OPA.

|  |  |
| --- | --- |
| Time to read | 30 mins |
| Time to complete | 60 mins |
| Cost to complete | $1 (est) |
| Learning Level | Advanced (300) |
| Services used | Amazon EKS, CDK, AWS CodePipeline, AWS CodeCommit, AWS CodeBuild |

Solution overview

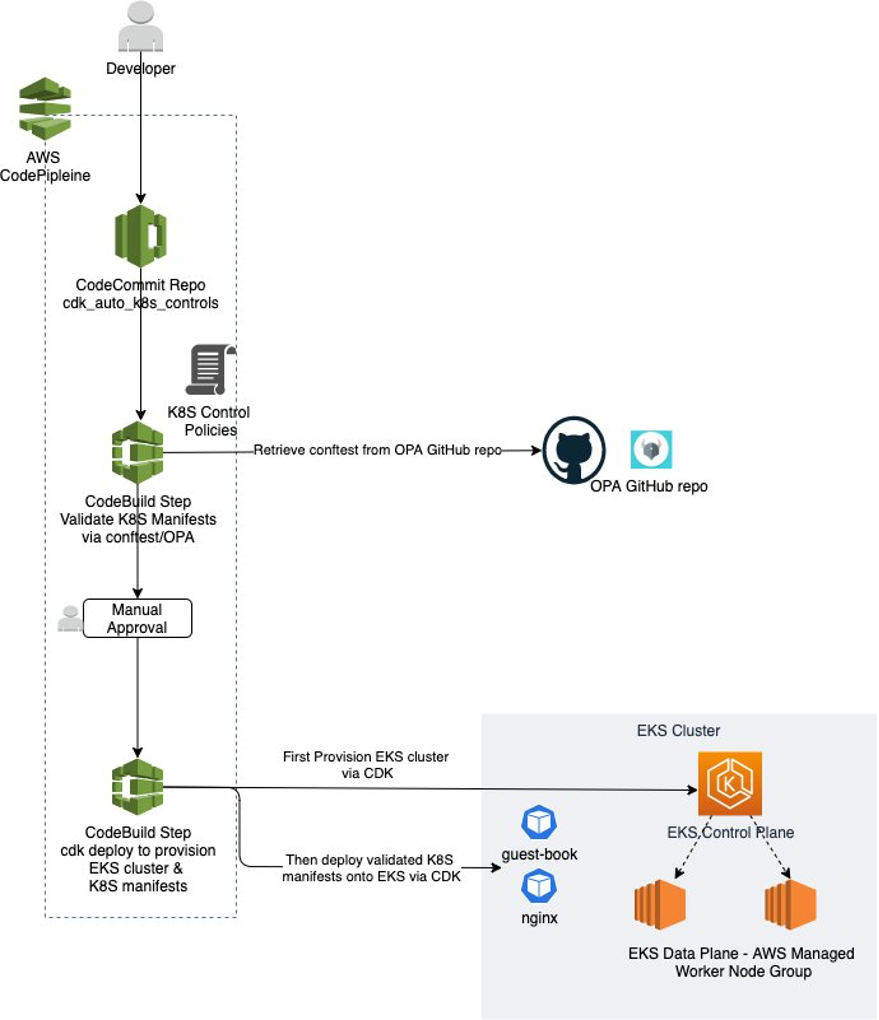

In this post, we will stand up a CI/CD pipeline using AWS CodePipeline, AWS CodeCommit, and AWS CodeBuild via AWS CDK. When a commit is made to the CodeCommit repo, the pipeline will be triggered and will automatically use Conftest to validate the Kubernetes manifest files against a set of control policies codified in OPA Rego. If any control policy is violated, the validation step will fail and stop the pipeline. Only if all control policies are satisfied by the Kubernetes manifest files, the pipeline will move onto the deployment step, where the EKS cluster and Kubernetes manifests will be deployed via CDK. The solution architecture is depicted in the diagram below:

This post provides an end-to-end solution to codify and automate Kubernetes preventative controls in a CI/CD pipeline. The whole solution is codified as AWS CDK code in TypeScript, so it can be easily reused or embedded into customers’ existing CDK library.

The pipeline in this solution starts with a commit to the CodeCommit repo that holds the Kubernetes manifest files to be deployed onto the EKS cluster. CodeBuild then runs Conftest, prepackaged with control policies, to validate the Kubernetes manifest files. If the Kubernetes manifest files breach any control policy, CodeBuild stops the process and prevents them from being deployed into the run-time environment. If the Kubernetes manifest files satisfy all control policies, the pipeline passes the validation step and moves on to deployment. The deployment step provisions an EKS cluster with an AWS managed worker node group and then deploys the Kubernetes manifests onto the EKS cluster. Customers can also start with this blueprint and add other preventative controls, such as CloudFormation validation (CloudFormation Guard or custom OPA policies), to validate all deployment artefacts against preventative control policies.

In this post, we demonstrate two policies codified in Rego as examples to check Kubernetes manifest files. Although Conftest itself does not carry any built-in Rego policies, customers can refer to various OPA Rego policy libraries provided by the OPA open-source community. For example, this library provides OPA Rego policies to check common Kubernetes security best practices and implement controls equivalent to Kubernetes Pod Security Policies (PSP). Additionally, this library provides OPA Rego policies to check Kubernetes security settings against the CIS Kubernetes Benchmark. Note that in addition to providing security suggestions on Kubernetes workload manifests, the CIS Kubernetes Benchmark also makes recommendations on Kubernetes control plane and data plane settings, which can be checked by kube-bench and are beyond the scope of this article. Customers can also develop custom Rego policies according to their own security requirements by using community-provided policies as templates.

Walkthrough

At a high level, the steps to deploy and validate the solution include:

1. Create a new empty AWS CodeCommit repo that will be used by the CI/CD pipeline as the code source.

2. Download the blog source code from the AWS public repo and check it into the empty CodeCommit repo created in step 1.

3. On the development terminal, run the CDK deploy command to provision the CI/CD pipeline.

4. Verify that the Kubernetes validation step in the CI/CD pipeline stops the pipeline because the Kubernetes manifests violate preventative control policies.

5. Fix the Kubernetes manifests and push the changes to the CodeCommit repo.

6. Verify that the CI/CD pipeline is triggered again and that this time it passes the Kubernetes validation step.

7. Approve the manual approval step in the CI/CD pipeline.

8. Verify that the pipeline successfully provisions an EKS cluster with the Kubernetes manifests via CDK deploy.

Prerequisites

To deploy this stack, you need:

- AWS CLI version 2

- Git

- NPM

- kubectl

- CDK

- An existing AWS VPC with two or more private subnets that have a route to an AWS NAT Gateway, so that they can reach the internet outbound.

Deployment steps

Now let the game begin! Execute the following steps to deploy the pipeline to your AWS account.

1. First, follow steps 1 and 2 in this article to create a new empty AWS CodeCommit repo and git clone that empty repo to your development terminal. Record the repo name, as we will need it in a later step. In our example, we named the repo “cdk_auto_k8s_controls”.

       $ git clone codecommit::ap-southeast-2://cdk_auto_k8s_controls
       Cloning into 'cdk_auto_k8s_controls'...
       warning: You appear to have cloned an empty repository.

2. On your development terminal, git clone the source code of this blog post from the AWS public repo:

       $ git clone https://github.com/aws-samples/eks-preventative-controls.git
       Cloning into 'eks-preventative-controls'...
       ...
       Resolving deltas: 100% (13/13), done.
       $ cd eks-preventative-controls/

3. On your development terminal, copy the source code of the blog post cloned in step 2 to the other local directory linked to the empty CodeCommit repo created in step 1, change two context parameters, and commit and push all the code files to the CodeCommit repo.

       $ cp -r ./* ../cdk_auto_k8s_controls
       $ cd ../cdk_auto_k8s_controls
       $ vi cdk-eks/cdk.json
       (Set the cluster name as you like)
       "cluster-name": "cdk-auto-k8s-controls",
       (Replace the following example with real VPC ID value)
       "vpc-id": "vpc-0xx12345x1230x123",
       $ git add .
       $ git commit -m "push blog post source code to codecommit repo"
       $ git push

4. On your development terminal, configure the CDK context parameters that will be used to provision the pipeline in your AWS account via “cdk deploy”.

       $ cd cdk-pipeline
       $ vi cdk.json
       (Set the pipeline name as you like)
       "pipeline-name": "EksDeployPipeline",
       (Verify the conftest-download-url as below)
       "conftest-download-url": "https://github.com/open-policy-agent/conftest/releases/download/v0.25.0/conftest_0.25.0_Linux_x86_64.tar.gz",
       (Set the repo name as the one you used in Step 1 described above)
       "codecommit-repo-name": "cdk_auto_k8s_controls"

5. Now on your development terminal, provision the CodePipeline instance in your AWS account via “cdk deploy”. It takes around five to six minutes. Note that if you have not CDK-bootstrapped your AWS account yet, “npx cdk deploy” will fail because the required SSM parameters are missing. In this case, run “export CDK_NEW_BOOTSTRAP=1; cdk bootstrap aws://ACCOUNT-NUMBER/REGION” to CDK-bootstrap your account first, then run “npx cdk deploy”.

       $ npm install
       $ npm run build
       $ npx cdk ls
       CdkPipelineStack
       $ npx cdk deploy
       ...
       Do you wish to deploy these changes (y/n)?y

6. Finish.
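The “conftest-download-url” context value configured above points at a release archive that the pipeline later downloads and unpacks. As a minimal sketch of how an archive file name could be derived from that URL, the following TypeScript uses a hypothetical helper, `archiveFileNameFromUrl`, which is an illustration and is not taken from the sample repository:

```typescript
// Hypothetical helper (not from the sample repository): derive the archive
// file name from a download URL by keeping its last path segment.
function archiveFileNameFromUrl(downloadUrl: string): string {
  // Drop any query string or fragment before looking for the last "/".
  const withoutQuery = downloadUrl.replace(/[?#].*$/, "");
  const fileName = withoutQuery.substring(withoutQuery.lastIndexOf("/") + 1);
  if (fileName.length === 0) {
    throw new Error(`No file name found in URL: ${downloadUrl}`);
  }
  return fileName;
}

// The URL is the value used for "conftest-download-url" in this post.
const conftestDownloadUrl =
  "https://github.com/open-policy-agent/conftest/releases/download/v0.25.0/conftest_0.25.0_Linux_x86_64.tar.gz";
const conftestFileName = archiveFileNameFromUrl(conftestDownloadUrl);
console.log(conftestFileName);
```

Deriving the file name from the URL means that only one context value needs to change when you move to a different Conftest release.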

By now, we have successfully provisioned a CI/CD pipeline with embedded Kubernetes preventative control policies. Let’s validate it. Log on to the AWS Management Console and go to the CodePipeline service page, where you should see a pipeline named EksDeployPipeline.

This simple pipeline has three stages:

- First, it has a source action linked to the CodeCommit repo you created in step 1. The repo name is cdk_auto_k8s_controls in our case.

- Second, it has a CodeBuild step that downloads the Conftest binary and validates the Kubernetes manifest files under the k8s-manifests folder in the code package, which are to be deployed by the pipeline.

- Lastly, the pipeline has a CodeBuild step that uses “cdk deploy” to first provision an EKS cluster with an AWS managed worker node group and then deploy the Kubernetes manifests onto the EKS cluster.

However, we can see the first pipeline run is stopped due to the failure at the second step. Let’s click the “Details” link in the ValidateK8sManifests step to check it out.

First, in the PRE_BUILD phase, the CodeBuild step downloads the Conftest binary to the conftest/bin directory.

Then in the BUILD phase, it uses Conftest to validate the Kubernetes manifest files under k8s-manifests against the Kubernetes preventative control policies and finds that two policies have been breached. One breach is a Kubernetes object defined in guestbook-all-in-one.yaml that uses hostNetwork, which violates the preventative control policy of not using hostNetwork. The other is a Kubernetes object defined in nginx.yaml that does not have the mandatory label “env” defined. Hence, the CodeBuild step sets its BUILD state to FAILED and stops the pipeline from deploying the Kubernetes workloads into the runtime environment.
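The gating behaviour described above can be sketched as a small model. This is not AWS SDK or CDK code: the types, function names and failure messages are illustrative assumptions, and the “Source” stage name is assumed, while the other stage names are the ones used in this post. The model relies on two facts: Conftest exits with a non-zero code when a policy fails, and CodeBuild marks a build as FAILED when a command exits with a non-zero code.

```typescript
// Simplified model of the pipeline's gating behaviour (illustration only).
type StageResult = "SUCCEEDED" | "FAILED";

interface Stage {
  name: string;
  run: () => StageResult;
}

// Any reported policy failure fails the validation stage.
function validationResult(policyFailures: string[]): StageResult {
  return policyFailures.length === 0 ? "SUCCEEDED" : "FAILED";
}

// Run stages in order and stop at the first failure, so later stages
// (such as deployment) never start. Returns the names of the stages that ran.
function runPipeline(stages: Stage[]): string[] {
  const executed: string[] = [];
  for (const stage of stages) {
    executed.push(stage.name);
    if (stage.run() === "FAILED") {
      break;
    }
  }
  return executed;
}

function buildStages(policyFailures: string[]): Stage[] {
  return [
    { name: "Source", run: () => "SUCCEEDED" },
    { name: "ValidateK8sManifests", run: () => validationResult(policyFailures) },
    { name: "ApproveDeployment", run: () => "SUCCEEDED" },
    { name: "DeployCDKApp", run: () => "SUCCEEDED" },
  ];
}

// First run: two breaches (messages are illustrative), so deployment never starts.
const firstRun = runPipeline(
  buildStages([
    "guestbook-all-in-one.yaml: hostNetwork is not allowed",
    "nginx.yaml: missing required label env",
  ])
);
console.log(firstRun);

// Second run: no breaches, so every stage runs.
const secondRun = runPipeline(buildStages([]));
console.log(secondRun);
```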

In order to deploy the Kubernetes manifests, we need to fix those non-compliant issues first. According to the failure messages, you should fix the issues in k8s-manifests/guestbook-all-in-one.yaml and k8s-manifests/nginx.yaml on your development terminal, then push the changes to the CodeCommit repo to trigger a rerun of the pipeline.

    $ cd ../k8s-manifests/
    $ vi guestbook-all-in-one.yaml
    (At line 41, put "#" in front to comment out the line "hostNetwork: true")
    $ vi nginx.yaml
    (Uncomment line 7, "env: prod")
    $ git add guestbook-all-in-one.yaml nginx.yaml
    $ git commit -m "fix Kubernetes manifests"
    $ git push

Now in CodePipeline in the AWS Management Console, you should see that the pipeline is triggered again. In this run, the validation step should succeed.

Click the “Review” button in the ApproveDeployment step and approve the deployment (we have purposely added this step in our solution; you can remove it from the pipeline settings if you prefer fully automatic deployment). Then the last step, “DeployCDKApp”, should start to provision the EKS cluster and deploy the Kubernetes manifest files on top of the EKS cluster. It takes around 15 to 20 minutes; the vast majority of that time is spent on EKS cluster provisioning. Enough time for a coffee, or two!

When you come back, you should see the step of “DeployCDKApp” succeeds.

Then click its “Details” link. You should see that this CodeBuild step first compiles the CDK code under the cdk-eks folder.

Next it uses “cdk deploy” to provision the EKS cluster and apply the Kubernetes manifests on top.

You can validate that the Kubernetes workloads have been deployed successfully by using kubectl commands on your development terminal. First, copy the “aws eks update-kubeconfig” command printed in the CodeBuild output and run it, then check that the example Kubernetes workloads (i.e. nginx and guest-book) are running.

    $ aws eks update-kubeconfig --name cdk-auto-k8s-controls --region ap-southeast-2 --role-arn arn:aws:iam::123456789012:role/CdkEksStack-AutoK8sContro-clusterownerroleXX111XX-XXXXXXXXX20X
    Updated context arn:aws:eks:ap-southeast-2:123456789012:cluster/cdk-auto-k8s-controls in /Users/jaswang/.kube/config
    $ kubectl get pods
    NAME                                READY   STATUS    RESTARTS   AGE
    frontend-56fc5b6b47-6m8c5           1/1     Running   0          17m
    frontend-56fc5b6b47-gs2fj           1/1     Running   0          17m
    frontend-56fc5b6b47-q8r5s           1/1     Running   0          17m
    nginx-deployment-6b474476c4-4zthr   1/1     Running   0          17m
    nginx-deployment-6b474476c4-7d8xx   1/1     Running   0          17m
    nginx-deployment-6b474476c4-dvgjl   1/1     Running   0          17m
    ...

You can also go to Amazon EKS in the AWS Management Console to validate that a new EKS cluster named cdk-auto-k8s-controls has been provisioned successfully.
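If you want to script this check rather than read the output by eye, the following is a minimal sketch that parses the default “kubectl get pods” table and reports whether every pod is Running with all containers ready. The helper names `parsePodTable` and `allPodsHealthy` are hypothetical, and the sketch assumes the default column layout shown above (NAME, READY, STATUS as the first three columns).

```typescript
// Hypothetical helpers for checking `kubectl get pods` output in a script.
interface PodRow {
  name: string;
  ready: string; // for example "1/1"
  status: string; // for example "Running"
}

function parsePodTable(output: string): PodRow[] {
  const lines = output
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line.length > 0);
  // Skip the header row (NAME READY STATUS RESTARTS AGE).
  return lines.slice(1).map((line) => {
    const [name = "", ready = "", status = ""] = line.split(/\s+/);
    return { name, ready, status };
  });
}

// A pod counts as healthy when it is Running and its ready count equals its total.
function allPodsHealthy(rows: PodRow[]): boolean {
  return (
    rows.length > 0 &&
    rows.every((row) => {
      const [readyCount, totalCount] = row.ready.split("/");
      return row.status === "Running" && readyCount !== undefined && readyCount === totalCount;
    })
  );
}

const sampleOutput = `
NAME                                READY   STATUS    RESTARTS   AGE
frontend-56fc5b6b47-6m8c5           1/1     Running   0          17m
nginx-deployment-6b474476c4-4zthr   1/1     Running   0          17m
`;
const pods = parsePodTable(sampleOutput);
console.log(allPodsHealthy(pods));
```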

Code elaboration

The code used in this post is wrapped up in four sub-folders according to their purpose.

- The cdk-pipeline folder has CDK code to provision the CI/CD pipeline based on AWS CodePipeline and CodeBuild.

- The k8s-manifests folder has Kubernetes manifest YAML files that will be deployed by the CI/CD pipeline. As examples, it has two typical Kubernetes workloads, nginx and guest-book. It can be extended by adding your own Kubernetes workload manifests.

- The conftest folder includes the Conftest tool configuration file, Kubernetes preventative control policies in Rego and data used in the policies. In the ValidateK8sManifests step, the pipeline uses Conftest to validate Kubernetes manifest files against Kubernetes preventative control policies.

- The cdk-eks folder has CDK code to provision an EKS cluster and deploy Kubernetes workloads defined by the Kubernetes manifest files under the k8s-manifests folder. In the deployment step, the pipeline compiles the CDK code in this folder and uses “cdk deploy” to provision the EKS cluster and deploy Kubernetes manifest files.

CDK code for the CI/CD pipeline

The CDK code for provisioning the CI/CD pipeline is under the cdk-pipeline sub-folder. Following the normal CDK app repo structure, the cdk-pipeline.ts file under the bin folder is the entry file, which defines the CDK app and invokes the constructor of the stack defined under the lib folder.

The cdk-pipeline-stack.ts file under the lib folder defines the CodePipeline instance and the steps in the pipeline. Those steps include a CodeCommit source action and two CodeBuild actions. The first CodeBuild action validates Kubernetes manifests against Kubernetes preventative control policies. The second CodeBuild action runs the CDK code under the cdk-eks folder to provision the EKS cluster and deploy Kubernetes manifests on top.

The validation CodeBuild step downloads Conftest and validates the Kubernetes manifests, as defined in the following code snippet:

    const k8sValidationBuildSpec = codebuild.BuildSpec.fromObject({
      version: "0.2",
      phases: {
        pre_build: {
          commands: [
            "ls -rtla $CODEBUILD_SRC_DIR/",
            "cd conftest/bin",
            "ls -rtla",
            // Download and unpack the Conftest release archive
            `wget ${this.conftestDownloadUrl} >/dev/null 2>&1`,
            `tar xzf ${conftestFileName}`,
            "ls -rtla"
          ],
        },
        build: {
          commands: [
            // Run from conftest/bin so that conftest.toml in this directory is picked up
            "./conftest test ../../k8s-manifests/ --combine"
          ]
        },
      }
    });

The deploy CodeBuild step compiles the CDK code under cdk-eks and then uses “cdk deploy” to provision an EKS cluster and deploy Kubernetes manifests, as defined in the following code snippet:

    const cdkDeployActionSpec = codebuild.BuildSpec.fromObject({
      version: "0.2",
      phases: {
        pre_build: {
          commands: [
            "ls -rtla $CODEBUILD_SRC_DIR/",
            "cd cdk-eks",
            "ls -rtla",
            // Install dependencies and compile the TypeScript CDK app
            "npm install",
            "npm run build",
          ],
        },
        build: {
          commands: [
            // Skip the interactive approval prompt, since CodeBuild runs unattended
            "npx cdk deploy --require-approval never"
          ]
        },
      }
    });

Kubernetes manifest YAML files

Under the k8s-manifests folder, two standard Kubernetes manifest YAML files (nginx.yaml and guestbook-all-in-one.yaml) are provided as examples. As described in the CDK code in the previous section, the validation step of the pipeline validates those Kubernetes manifest files against Kubernetes preventative control policies. The CDK code under cdk-eks, which is elaborated in a later section, deploys the Kubernetes manifest files under this folder onto the EKS cluster.
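The deployment code shown later in this post picks up manifests with `fileName.includes('yaml')` and names each component with `fileName.split('.')[0]`. If you add your own files to this folder, a stricter extension check may be useful. The following is a minimal sketch of one possible tightening; the helper names and the sample file list are hypothetical and are not part of the sample repository.

```typescript
// Hypothetical helpers: select manifest files by extension rather than by
// substring, so that names such as "notes.yaml.bak" are skipped and ".yml"
// files are included.
function isManifestFile(fileName: string): boolean {
  return /\.ya?ml$/i.test(fileName);
}

// Strip only the trailing extension to get a component name.
function componentNameFor(fileName: string): string {
  return fileName.replace(/\.ya?ml$/i, "");
}

// Illustrative directory listing; only the first two names come from this post.
const candidates = [
  "nginx.yaml",
  "guestbook-all-in-one.yaml",
  "notes.yaml.bak",
  "extra.yml",
  "README.md",
];
const manifests = candidates.filter(isManifestFile);
console.log(manifests.map(componentNameFor));
```

For the two example files in this post, both approaches select the same files and produce the same component names.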

Conftest configuration and OPA policies for Kubernetes preventative controls

The conftest folder holds the Conftest configuration file under the bin sub-folder, the Kubernetes preventative control policies in Rego under the policy sub-folder, and the contextual data used in the Rego policy logic under the data sub-folder. As elaborated in the previous section, the validation step in the pipeline uses the Conftest configuration and OPA policies under this folder to validate the Kubernetes manifest files.

Under the policy sub-folder, we have provided two OPA policy Rego files: host_networking.rego, which makes sure no Kubernetes workload is using the host network, and required-labels.rego, which makes sure every Kubernetes object has the mandatory labels defined in data/required-labels.yaml, i.e. app and env.

Under the bin sub-folder, the conftest.toml file is a standard Conftest configuration file, which tells Conftest to get policies from “../policy” and data from “../data”.
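To make the intent of the two policies concrete, here is a TypeScript analogue of what they check. This is only a sketch: the real policies are written in Rego and evaluated by Conftest, and the type, function names, failure messages and example objects below are assumptions made for illustration.

```typescript
// TypeScript analogue of the two example policies (illustration only).
interface K8sObject {
  kind: string;
  metadata?: { name?: string; labels?: Record<string, string> };
  spec?: {
    hostNetwork?: boolean;
    template?: { spec?: { hostNetwork?: boolean } };
  };
}

// Assumed to mirror data/required-labels.yaml (app and env).
const requiredLabels = ["app", "env"];

function describeObject(obj: K8sObject): string {
  return `${obj.kind}/${obj.metadata?.name ?? "unknown"}`;
}

// Covers bare Pods (spec.hostNetwork) and pod templates in controllers
// such as Deployments (spec.template.spec.hostNetwork).
function checkHostNetwork(obj: K8sObject): string[] {
  const usesHostNetwork =
    obj.spec?.hostNetwork === true || obj.spec?.template?.spec?.hostNetwork === true;
  return usesHostNetwork ? [`${describeObject(obj)} must not use hostNetwork`] : [];
}

function checkRequiredLabels(obj: K8sObject): string[] {
  const labels = obj.metadata?.labels ?? {};
  return requiredLabels
    .filter((label) => !(label in labels))
    .map((label) => `${describeObject(obj)} is missing required label "${label}"`);
}

// Collect every failure across all objects, as a validation run would.
function validate(objects: K8sObject[]): string[] {
  const failures: string[] = [];
  for (const obj of objects) {
    failures.push(...checkHostNetwork(obj), ...checkRequiredLabels(obj));
  }
  return failures;
}

// Illustrative objects, loosely modelled on the two breaches described in this post.
const frontend: K8sObject = {
  kind: "Deployment",
  metadata: { name: "frontend", labels: { app: "guestbook", env: "prod" } },
  spec: { template: { spec: { hostNetwork: true } } },
};
const nginx: K8sObject = {
  kind: "Deployment",
  metadata: { name: "nginx-deployment", labels: { app: "nginx" } },
  spec: { template: { spec: {} } },
};

const failures = validate([frontend, nginx]);
console.log(failures);
```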

CDK code for EKS and Kubernetes workloads

The cdk-eks folder holds the CDK code that provisions the EKS cluster and deploys the Kubernetes workloads. The CDK code here is compiled and deployed by the deployment step in the CI/CD pipeline described above. Following the normal CDK app structure, the cdk-eks.ts file under the bin folder is the entry file, which defines the CDK app and invokes the constructor of the stack defined under the lib folder.

Under the lib folder, the cdk-eks-stack.ts file first provisions an EKS cluster with an AWS managed worker node group composed of two m5.large EC2 instances. This worker node group is the default setting provided by the CDK aws-eks module.

    const cluster = new eks.Cluster(this, 'my-cluster', {
      version: eks.KubernetesVersion.V1_21,
      ...
      clusterName: props.clusterName,
      outputClusterName: true,
      endpointAccess: eks.EndpointAccess.PUBLIC,
      vpc:
        props.vpcId == undefined
          ? undefined
          : ec2.Vpc.fromLookup(this, 'vpc', { vpcId: props?.vpcId! }),
      vpcSubnets: [{ subnetType: ec2.SubnetType.PRIVATE }],
    });

Then it iterates over the Kubernetes manifest files under the ./k8s-manifests folder described above and deploys each of them onto the EKS cluster created above.

    // Get list of file names under the folder
    const fileList = fs.readdirSync(k8sManifestFileDir);
    console.log(fileList);
    console.log(k8sManifestFileDir);

    for (let fileName of fileList) {
      if (fileName.includes('yaml')) {
        let fileFullPath = `${k8sManifestFileDir}/${fileName}`;
        let k8sYaml = fs.readFileSync(fileFullPath);
        // A single file may hold several YAML documents, so load them all
        let k8sManifest = yaml.safeLoadAll(k8sYaml.toString());
        // Use the file name without its extension as the construct ID
        let componentName = fileName.split('.')[0];
        cluster.addManifest(`${componentName}`, ...k8sManifest);
      }
    }

Cleanup

Now we clean up the AWS resources deployed in the previous section. On your development terminal, go to the cdk-eks folder and destroy the EKS cluster together with its Kubernetes workloads. This step may take 15 to 20 minutes.

    $ cd ../cdk-eks/
    $ npm install
    $ npm run build
    $ npx cdk destroy

Then go to the cdk-pipeline folder and destroy the CodePipeline instance itself along with the related CodeBuild projects. Note that the CodeCommit repo will remain, since it was created manually.

    $ cd ../cdk-pipeline/
    $ npx cdk destroy

Lastly, go to the AWS console to remove the CodeCommit repo created at the beginning.

Conclusion

This post demonstrates that by utilising Conftest and OPA Rego policies you can automate Kubernetes preventative controls in CI/CD. As a result, Kubernetes manifests can be deployed into the runtime environment only if they are compliant with all Kubernetes control policies required by the enterprise. With the power of AWS services, including EKS, CodePipeline and CodeBuild, as well as AWS CDK as the infrastructure-as-code tool, we can codify and automate the whole validation and deployment process end to end. This can fast-track your adoption of EKS and your deployment of Kubernetes workloads while staying compliant with enterprise control policies.