Turbocharging EKS networking with Bottlerocket, Calico, and eBPF

This post is co-authored by Alex Pollitt, Co-founder and CTO at Tigera, Inc.

Recently, Amazon announced support for Bottlerocket on Amazon Elastic Kubernetes Service (Amazon EKS). Bottlerocket is an open source Linux distribution built by Amazon to run containers, with a focus on security, operations, and manageability at scale. You can learn more about Bottlerocket in this announcement blog.

Amazon EKS has great networking built in thanks to the Amazon VPC CNI plugin that enables pods to have VPC routable IP addresses. If network policy support is desired on top of this base networking, EKS supports Calico, which can be added to any EKS cluster with a single kubectl command as described in the Amazon EKS documentation.

So what changes with EKS support for Bottlerocket? In addition to the reduced footprint and resource requirements, Bottlerocket also provides a rapid release cycle, which means that it’s among the first EKS-optimized operating systems to support eBPF functionality in the Linux kernel. Calico can build on top of this foundation to enhance the base networking for EKS beyond just adding network policy support. In this blog, we’ll take a closer look at the benefits, including accelerating network performance, removing the need to run kube-proxy, and preserving client source IP addresses when accessing Kubernetes Services from outside the cluster (and why this is exciting!).

Accelerating network performance

Before getting into the details of how Calico makes use of eBPF, it is important to note that in the context of this blog, Calico is viewed as an additional networking layer on top of VPC CNI, providing functionality that goes beyond the traditional network policy engine. In particular, the standard instructions to install the Calico network policy engine with EKS use a version of Calico that predates the general availability (GA) of eBPF mode. For this blog, we will use a prerelease manifest to install a suitable version of Calico with eBPF support, as described in our guide, “Creating an EKS cluster for eBPF mode.”

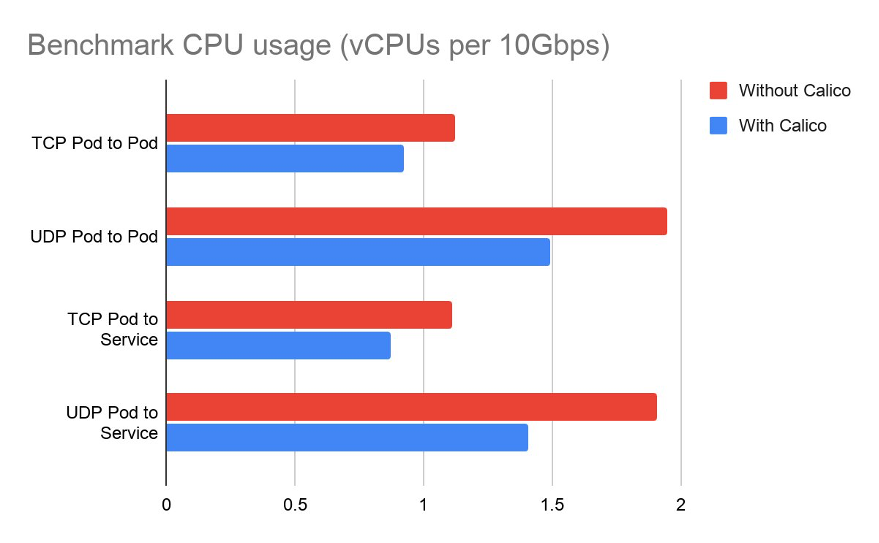

To show how Calico can accelerate the network performance of EKS using eBPF, the Calico team ran a series of network performance benchmarks based on the k8s-bench-suite. In these tests, they compared a cluster running the latest eBPF-enabled Calico release with a vanilla EKS cluster, in both cases using nodes backed by AWS-provided Bottlerocket AMIs.

Test setup

To ensure there was no risk of the underlying AWS cloud network being the bottleneck in the benchmarks, all tests were run using c5n.metal nodes, which can reach up to 100Gbps network bandwidth. Each benchmark then measured the CPU usage required to drive 10Gbps of traffic, staying well below the limits of the underlying network.

The performance benchmarks focused on the most common traffic flows between two nodes in a typical cluster: direct pod-to-pod traffic and pod-to-service-to-pod traffic, for both TCP and UDP flows.

Test results

The chart below shows the total CPU utilization consumed by the benchmarks, measured in vCPUs. This is the sum of both client and server CPU utilization. The results indicate that adding Calico reduces CPU utilization by up to 25% compared to a cluster without Calico.

Beyond the controlled benchmark environment, this means that for the same amount of CPU utilization, Calico eBPF can achieve higher network throughput. This matters for resource management, since both throughput and CPU are finite resources. Lower CPU utilization for networking means that more CPU is available to run application workloads, which in turn can translate into significant cost savings if you are running network-intensive workloads.
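To make the trade-off concrete, here is a minimal back-of-the-envelope sketch. It assumes that networking CPU cost scales linearly with throughput, which is a simplification and not something the benchmark itself claims. The 25% figure is the upper bound reported above; the baseline of 4.0 vCPUs and the function names are hypothetical, chosen only for illustration.

```python
def throughput_gain(cpu_reduction: float) -> float:
    """Relative throughput achievable with the same CPU budget.

    Assumes CPU cost per Gbps is constant (linear scaling), so cutting
    the cost per Gbps by `cpu_reduction` lets the same CPU drive
    1 / (1 - cpu_reduction) times the traffic.
    """
    if not 0.0 <= cpu_reduction < 1.0:
        raise ValueError("cpu_reduction must be in the range [0, 1)")
    return 1.0 / (1.0 - cpu_reduction)


def freed_vcpus(baseline_vcpus: float, cpu_reduction: float) -> float:
    """vCPUs returned to application workloads at unchanged throughput."""
    return baseline_vcpus * cpu_reduction


reduction = 0.25        # "up to 25%" from the benchmark results
baseline_vcpus = 4.0    # hypothetical networking CPU cost without Calico

print(f"Throughput at equal CPU: {throughput_gain(reduction):.2f}x")
print(f"vCPUs freed at equal throughput: {freed_vcpus(baseline_vcpus, reduction):.2f}")
```

Under these assumptions, a 25% reduction in CPU per unit of traffic corresponds to roughly a third more throughput for the same CPU, or one vCPU in four handed back to applications at the same throughput.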

Replacing kube-proxy

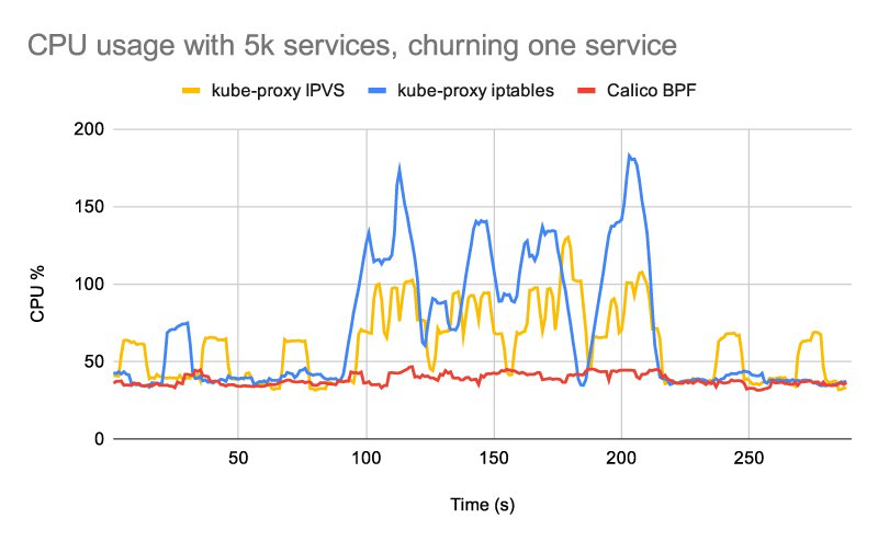

Calico’s eBPF data plane includes native service handling, so you no longer need to run kube-proxy. Calico’s native service handling outperforms kube-proxy both in terms of networking and control plane performance, and supports features such as source IP preservation.

The differences in performance are most noticeable if your workloads are particularly sensitive to network latency or if you are running large numbers of services. You can read more about these performance advantages and find a range of benchmark charts comparing kube-proxy performance with Calico’s native service handling in this blog from the Calico team.

Source IP preservation

The other big advantage of Calico’s native service handling is that it preserves client source IP addresses.

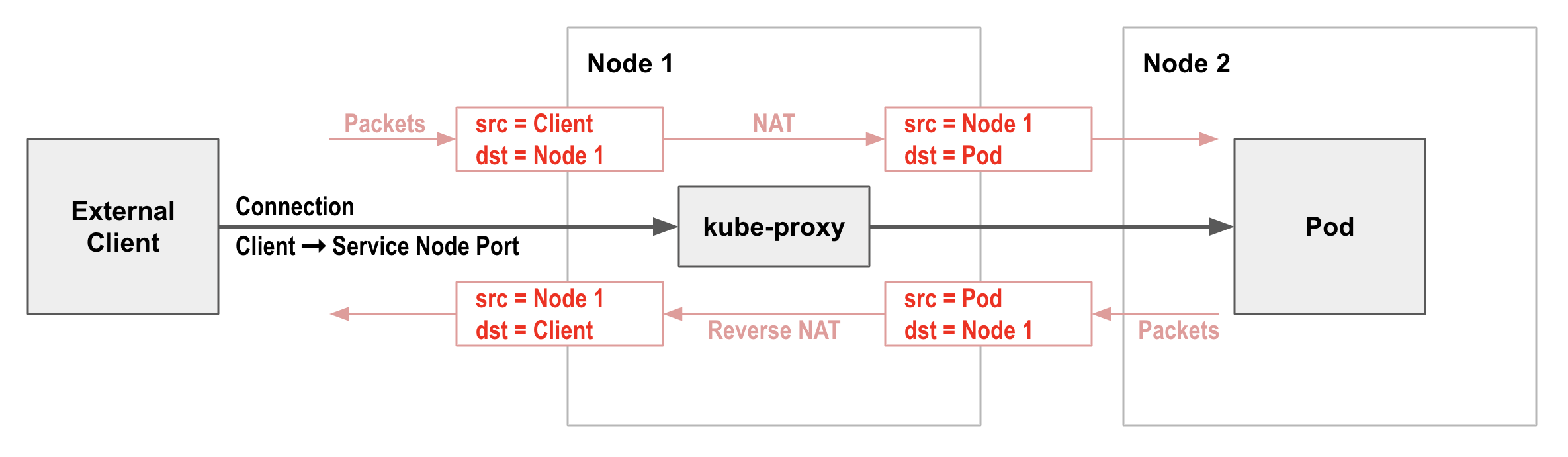

One frequently encountered friction point with Kubernetes networking is the use of Network Address Translation (NAT) by kube-proxy on incoming network connections to Kubernetes services (e.g. via a service node port). In most cases, this has the side effect of removing the original client source IP address from incoming traffic and replacing it with the node’s IP address.

This means that Kubernetes network policies cannot restrict incoming traffic to specific external clients, since by the time the traffic reaches the pod it no longer carries the original client IP address. This can also make troubleshooting applications harder, since logs don’t indicate the real client. In addition, for some applications, knowing the source IP address is desirable or required. For example, an application may need to make geolocation decisions based on the client address.
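The effect on policy can be illustrated with a toy model. This is only a sketch of the idea, not of how kube-proxy or any policy engine is implemented: the `Packet` type, the helper functions, and all addresses (taken from documentation and private ranges) are hypothetical.

```python
import ipaddress
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str


def snat_to_node(packet: Packet, node_ip: str) -> Packet:
    """Model source NAT: the source is rewritten to the node's address."""
    return replace(packet, src=node_ip)


def policy_allows(packet: Packet, allowed_cidr: str) -> bool:
    """Model a policy rule that admits traffic only from one client CIDR."""
    return ipaddress.ip_address(packet.src) in ipaddress.ip_network(allowed_cidr)


ALLOWED_CLIENTS = "203.0.113.0/24"  # hypothetical trusted client range
NODE_IP = "10.0.1.5"                # hypothetical node address
POD_IP = "10.0.2.17"                # hypothetical backing pod address

trusted = Packet(src="203.0.113.10", dst=POD_IP)
untrusted = Packet(src="198.51.100.7", dst=POD_IP)

# With the source address intact, the rule can tell the clients apart.
print(policy_allows(trusted, ALLOWED_CLIENTS))    # True
print(policy_allows(untrusted, ALLOWED_CLIENTS))  # False

# After source NAT, both packets carry the node's address, so a rule
# keyed on the client range no longer has anything to match against.
nat_trusted = snat_to_node(trusted, NODE_IP)
nat_untrusted = snat_to_node(untrusted, NODE_IP)
print(nat_trusted.src == nat_untrusted.src)            # True
print(policy_allows(nat_trusted, ALLOWED_CLIENTS))     # False
```

Once the source is rewritten, the trusted and untrusted clients are indistinguishable at the pod, which is exactly why the policy cannot be expressed.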

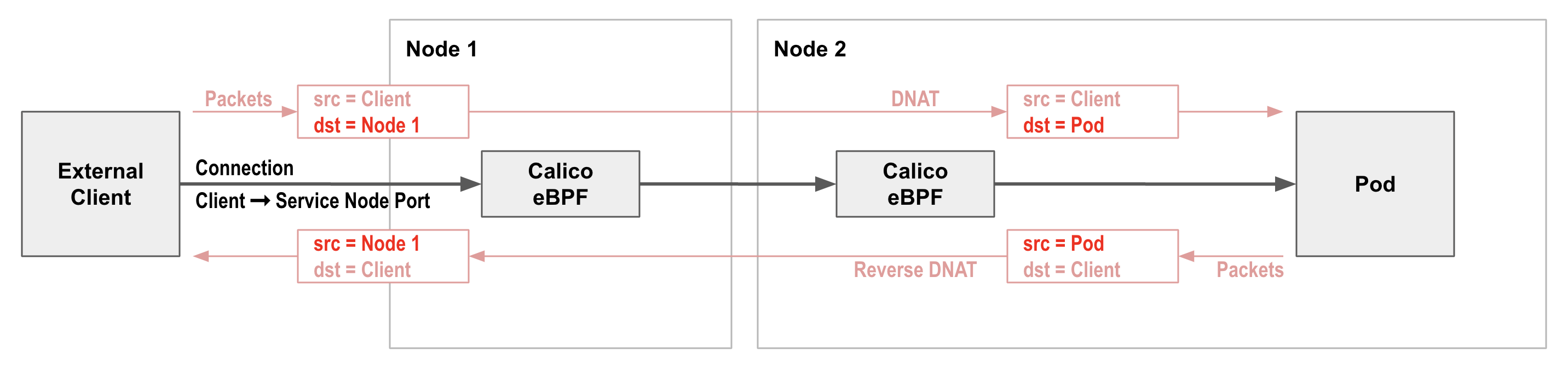

Calico’s native service handling is able to preserve client source IP addresses.

To take advantage of this in an EKS cluster, you would typically create a Kubernetes Service of type LoadBalancer and specify that the Service should use an AWS Network Load Balancer (NLB). The resulting NLB directs incoming connections to the corresponding service node port, preserving the client source IP. Calico’s native service handling then load balances the node port to the backing pods, again preserving the client source IP. So you get end-to-end client source IP address preservation all the way to the backing pods!
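As a rough sketch of what such a Service could look like, the snippet below builds a manifest and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The annotation shown is the commonly used way to request an NLB, but check the Amazon EKS documentation for the form that matches your cluster's load balancer controller. The Service name, labels, and port numbers are hypothetical.

```python
import json


def nlb_service(name: str, selector: dict, port: int, target_port: int) -> dict:
    """Build a Service of type LoadBalancer that requests an AWS NLB."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {
            "name": name,
            "annotations": {
                # Assumption: annotation-based NLB selection; verify against
                # the EKS documentation for your controller version.
                "service.beta.kubernetes.io/aws-load-balancer-type": "nlb",
            },
        },
        "spec": {
            "type": "LoadBalancer",
            "selector": selector,
            "ports": [
                {"protocol": "TCP", "port": port, "targetPort": target_port},
            ],
        },
    }


# Hypothetical application: pods labelled app=web listening on 8080.
service = nlb_service("web", {"app": "web"}, port=80, target_port=8080)
print(json.dumps(service, indent=2))
```

The output can be saved to a file and applied with `kubectl apply -f`, after which Kubernetes allocates the node port that the NLB forwards to.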

This is great for making logs and troubleshooting easier to understand, and means you can now write network policies for pods that restrict access to specific external clients if desired.
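A policy of this kind might look like the following sketch, which builds a standard Kubernetes NetworkPolicy with `ipBlock` rules and prints it as JSON for use with `kubectl apply -f`. The policy name, pod labels, client CIDR, and port are hypothetical, and a real deployment may also need rules for health checks and in-cluster traffic.

```python
import json


def allow_external_clients(name: str, pod_labels: dict, cidrs: list, port: int) -> dict:
    """Build a NetworkPolicy admitting ingress only from the given CIDRs."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": name},
        "spec": {
            # Pods the policy applies to.
            "podSelector": {"matchLabels": pod_labels},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    # One ipBlock peer per permitted external client range.
                    "from": [{"ipBlock": {"cidr": cidr}} for cidr in cidrs],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


# Hypothetical values: only clients in 203.0.113.0/24 may reach app=web pods.
policy = allow_external_clients(
    "allow-trusted-clients", {"app": "web"}, ["203.0.113.0/24"], port=8080
)
print(json.dumps(policy, indent=2))
```

A rule like this is only meaningful when the packet arriving at the pod still carries the client's address, which is what source IP preservation provides.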

Conclusion

Bottlerocket is an exciting OS, and with the latest eBPF capabilities it supports, it has everything you need to run the Calico eBPF data plane.

By adding Calico to a Bottlerocket-based EKS cluster with standard Amazon VPC CNI networking, you can significantly improve network performance, remove the need to run kube-proxy, and preserve external client source IP addresses all the way to the pods backing your services. For network-intensive workloads, the performance improvements can represent a significant cost saving when running at scale. Preservation of client source IP addresses makes logs and application troubleshooting easier, and allows Kubernetes network policy to restrict access to specific external clients if desired.

Importantly, Calico’s eBPF features run on top of, rather than replace, EKS’s standard Amazon VPC CNI networking. So all the features supported by EKS networking continue to work and behave exactly as expected.

To try this out for yourself, follow the step-by-step instructions in our guide, “Creating an EKS cluster for eBPF mode,” which shows how to create a Bottlerocket-based EKS cluster and then add Calico on top to gain all the benefits discussed in this blog.