
Under the hood: AWS Fargate data plane

Today, we launched a new platform version (1.4) for AWS Fargate, which bundles a number of new features and capabilities for our customers. You can read more about these features in this blog post. One of the changes we are introducing in platform version 1.4 is replacing Docker Engine with Containerd as Fargate’s container execution engine. In this blog post, we will go into the details of what this means for your container workloads, the motivations behind this change, and how this rearchitected container execution environment (also referred to as the Fargate data plane) looks under the hood.

Glossary of terms

Let’s review some of the terms and concepts we’re going to use in the rest of the blog.

- Container execution engine: Also commonly referred to as the “container runtime,” this is any piece of software that is used to create, start and stop containers. Examples include Docker Engine, Containerd and CRI-O.

- runC: A tool for spawning and running containers based on the Open Container Initiative (OCI) runtime specification.

- Containerd: A daemon that manages the lifecycle of containers on hosts, typically using runC. You can find more details about how runC and Containerd interact with each other in this blog post by Michael Crosby.

- Docker Engine: The container engine offered by Docker. Generally, this includes the Docker daemon binary, which needs to be installed on hosts where containers need to run. Docker Engine builds on top of Containerd which builds on top of runC.

What does the switch to Containerd mean for your containers running on Fargate?

In short, there’s no impact to your workloads because of this switch.

Even though Fargate has switched to Containerd for managing containers, your applications will see no difference in how they are executed. Containerd improves the Fargate data plane architecture by simplifying it and making it more flexible (more details in the sections below), but your applications remain agnostic to the change. There are three main reasons for this:

- OCI compatibility: Containerd includes full support for OCI images and Docker images. This means that all of your container images that are built with Dockerfiles and the Docker toolchain will continue to run seamlessly on Fargate. In fact, this was one of the prerequisites for us to adopt Containerd, as we wanted to ensure that applications built from all of our customers’ existing toolchains and CI/CD integrations continue to work with Fargate.

- Orchestrator abstractions: The workloads on Fargate are specified either using Amazon Elastic Container Service (ECS) task definitions or Amazon Elastic Kubernetes Service (EKS) pod specifications. Since these orchestrators provide a higher level of abstraction than containers themselves, container workloads will not be affected as long as Fargate supports these abstractions. For example, neither ECS nor EKS supports specifying logging options for containers the way the Docker CLI’s run command parameters do. ECS supports log driver configurations in the task definition and the FireLens configuration, whereas EKS integrates with logging agents. We have taken care to make sure that all of the features and container interfaces that are currently supported in Fargate continue to function seamlessly with this switch.

- Managed infrastructure: Since Fargate takes on the undifferentiated heavy lifting of infrastructure setup and maintenance for running containers, it is also responsible for maintaining the lifecycle of these containers. This includes bootstrapping container images, capturing and streaming container logs to appropriate destinations and making available the appropriate interfaces for storage, networking, IAM role credentials and metadata retrieval for these containers. Customer applications remain unaffected since Fargate maintains the compatibility of these integration points.
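To make the orchestrator-abstraction point concrete, here is a minimal sketch of how logging is declared in an ECS task definition rather than through Docker CLI flags. The family, container name, image, log group, region and stream prefix are placeholder values chosen for illustration; the `logConfiguration`, `logDriver` and `awslogs-*` keys are the ones used by ECS task definitions. A real Fargate task that uses `awslogs` would also need an execution role, which is left out here.

```python
import json


def container_definition(name, image, log_group, region, stream_prefix):
    """Build one ECS container definition that uses the awslogs log driver."""
    return {
        "name": name,
        "image": image,
        "essential": True,
        # Logging is declared on the task definition, not on a container CLI.
        "logConfiguration": {
            "logDriver": "awslogs",
            "options": {
                "awslogs-group": log_group,
                "awslogs-region": region,
                "awslogs-stream-prefix": stream_prefix,
            },
        },
    }


# All values below are placeholders, not taken from the post.
task_definition = {
    "family": "example-task",
    "requiresCompatibilities": ["FARGATE"],
    "networkMode": "awsvpc",
    "cpu": "256",
    "memory": "512",
    "containerDefinitions": [
        container_definition(
            "web", "example/web:latest", "/ecs/example-task", "us-east-1", "web"
        )
    ],
}

print(json.dumps(task_definition, indent=2))
```

Because the task definition names only a log driver and its options, the same document can be honored by a data plane built on Docker Engine or on Containerd.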

Why Containerd?

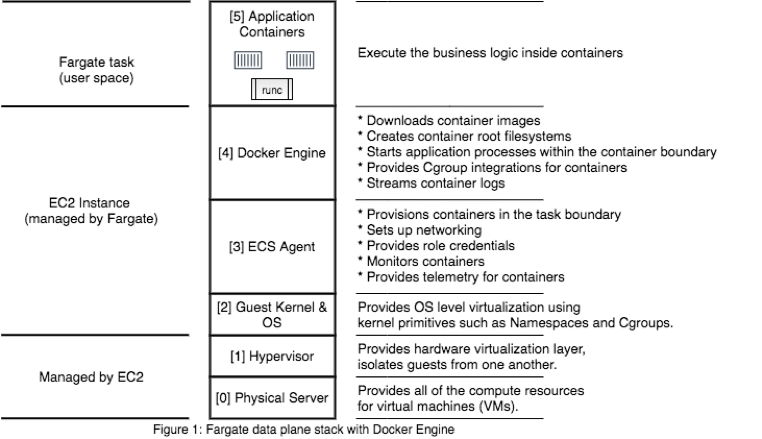

Let’s take a quick look at components of the Fargate data plane. Figure 1 shows how they stacked up prior to platform version 1.4. You can watch this talk from re:Invent 2019 for more details.

Integrating Containerd simplifies this stack, increases its reliability and minimizes the footprint of Fargate-owned components. This makes the stack more flexible and lets us offer you new features with lower overhead. Let’s explore these aspects in more detail next.

Need for a minimal container runtime

As we’ve expanded the feature set offered by Fargate, we’ve continued to customize different components of this stack. For example:

- Container-native VPC networking support: To enable support for awsvpc networking mode, we bypassed all of the networking capabilities offered by Docker Engine and instead relied on Container Networking Interface (CNI) plugins (available in the amazon-ecs-cni-plugins GitHub repo) to implement this functionality in Fargate. This was the most maintainable, customer-friendly path that we could choose. These networking plugins run outside the context of Docker Engine to set up container networking on Fargate.

- Extensible logging via FireLens: To extend the logging capabilities for ECS tasks on Fargate, we added support for FireLens. FireLens enables advanced capabilities like sending logs to multiple destinations and filtering at the source. We decided to implement the FireLens logging driver as a sidecar container running alongside the containers in your task; again, the enhancements here were outside Docker Engine.

- Metadata endpoint for ECS tasks: Some containers and applications want to query metadata and stats about other containers in the task. Outside Fargate, the most common way to do this is by accessing the container runtime directly. However, security and privilege separation of workloads is one of our top concerns with Fargate; providing access to the underlying container runtime (like Docker Engine) could weaken the security posture and make it relatively straightforward for malicious actors to mount an “elevation of privilege” attack. Instead, we decided to serve this data ourselves via a local HTTP endpoint made available to containers.
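As an illustration of the metadata endpoint described in the last item, the sketch below shows how a container might read task metadata over HTTP instead of talking to the container runtime. It assumes the version 4 task metadata endpoint, whose base URL ECS injects into containers through the `ECS_CONTAINER_METADATA_URI_V4` environment variable; which endpoint version is available depends on the platform version, and the helper names are made up for this example.

```python
import json
import os
import urllib.request


def metadata_url(path=""):
    """Return the metadata endpoint URL for `path` (for example "/task")."""
    base = os.environ.get("ECS_CONTAINER_METADATA_URI_V4")
    if not base:
        raise RuntimeError(
            "ECS_CONTAINER_METADATA_URI_V4 is not set; "
            "this code is probably not running inside an ECS task"
        )
    return base.rstrip("/") + path


def fetch_json(url, timeout=2.0):
    """GET `url` and decode the JSON body."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def task_metadata():
    """Metadata for the whole task, including its other containers."""
    return fetch_json(metadata_url("/task"))
```

Inside a running task, `task_metadata()` returns a JSON document describing the task and its containers. The application never needs a socket or credentials for the container runtime itself, which is the privilege-separation property described above.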

This has been a general trend for many of the features that we’ve built; most needed changes in layers #3 and #2 in the diagram above. We only require a minimal container runtime engine in Fargate, where we only need the basic container CRUD APIs from the container runtime. Everything else is already handled by other components of the Fargate data plane stack.

Containerd fits this model nicely as it maintains very minimal state and supports exactly what’s needed by Fargate; nothing more, nothing less. For example, Containerd supports using network namespaces created externally when starting containers. This means that Fargate no longer needs to run the pause container for ECS tasks on platform version 1.4. The pause container was needed because it was the only way in which we could create network namespaces with Docker and ensure that networking was properly configured prior to starting application containers. Since it’s no longer required, the Fargate data plane has one fewer container to manage and one fewer container image to maintain.
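One way to picture "using network namespaces created externally" is at the level of the OCI runtime specification, where a namespace entry that carries a `path` tells the runtime to join that existing namespace instead of creating a new one. The sketch below builds only that fragment of a runtime config. The namespace path is a hypothetical example, and the post does not describe how Fargate actually passes the namespace to Containerd.

```python
import json


def linux_namespaces(netns_path):
    """Build the `linux.namespaces` list of an OCI runtime config.

    Entries without a "path" ask the runtime to create a fresh namespace;
    the network entry has a "path", so the container joins a namespace
    that something else has already created and configured.
    """
    return [
        {"type": "pid"},
        {"type": "ipc"},
        {"type": "uts"},
        {"type": "mount"},
        {"type": "network", "path": netns_path},
    ]


# Hypothetical path to a namespace prepared before any container starts.
spec_fragment = {"linux": {"namespaces": linux_namespaces("/var/run/netns/task-example")}}
print(json.dumps(spec_fragment, indent=2))
```

Since every container in the task can point at the same pre-configured namespace, no placeholder container is needed just to hold the namespace open.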

Minimal management overhead

Containerd also has a minimal footprint both in terms of resources consumed when it’s running and in terms of additional packages that need to be installed. For example, with platform version 1.4, we don’t need to install any client-facing CLI for managing containers or container images on any of the VMs as Fargate never had any use for such a CLI in the first place (Fargate integrates programmatically with respective SDKs to manage containers). Similarly, Fargate can get rid of the components that are required to build container images since there’s no need for them either. Since Fargate takes care of curating the execution environment on behalf of customers, it’s always beneficial to minimize the software footprint. Fewer packages installed result in fewer packages to maintain and patch, which results in a more robust platform and a better operational posture.

Extensible architecture

While Containerd serves as a minimal container execution engine and simplifies the Fargate data plane, it also offers extensibility via its plugin-based architecture. Containerd lets clients configure almost any part of the container execution lifecycle via plugins. This is highly desirable for Fargate, as it enables Fargate to extend the feature set in meaningful ways that deliver value for containers running on Fargate.

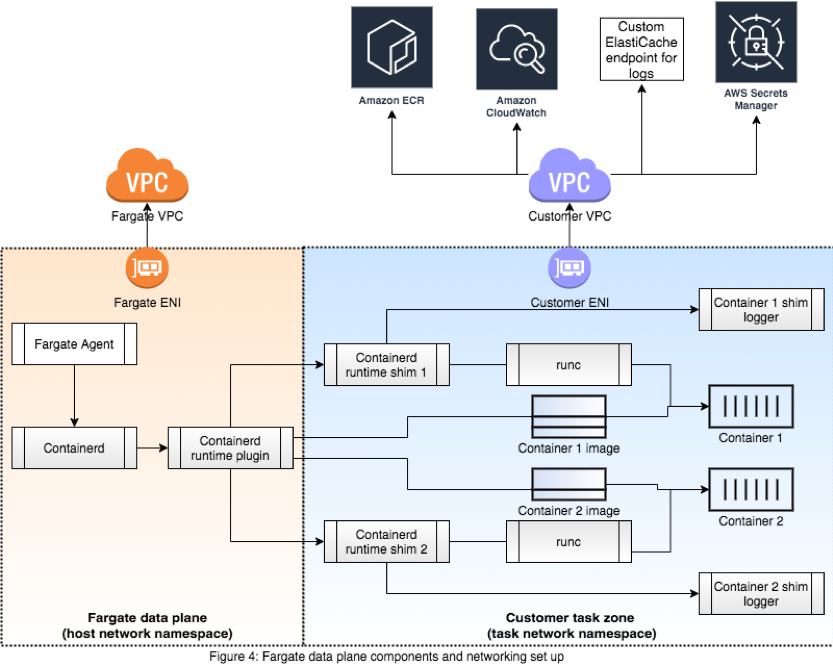

For example, we wanted to add support for streaming container logs to various destinations already supported in Fargate. It was straightforward for us to do this by extending Containerd’s shim logging plugins. We created a set of shim logger plugins for routing container logs, which we are open sourcing today. You can find these on GitHub in the amazon-ecs-shim-loggers-for-containerd repo. These replace Docker Engine’s in-process logging drivers, which Fargate used prior to platform version 1.4, and provide the same set of features. If you’re experimenting with or using Containerd and are looking for an extensible logging solution, you can start using these in your Containerd implementations. More details about using these plugins can be found here.
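Conceptually, an out-of-process logger of this kind receives a container's stdout and stderr as streams and forwards each line to a destination. The following toy model shows only that shape. It does not use Containerd's logging package or the code in the shim loggers repo, and every name in it is invented for illustration.

```python
import io
import threading


def forward(stream, source, sink):
    """Read `stream` line by line and hand each line to `sink`."""
    for line in stream:
        sink(source, line.rstrip("\n"))


def run_logger(stdout, stderr, sink):
    """Drain both container streams concurrently until they are closed."""
    workers = [
        threading.Thread(target=forward, args=(stdout, "stdout", sink)),
        threading.Thread(target=forward, args=(stderr, "stderr", sink)),
    ]
    for worker in workers:
        worker.start()
    for worker in workers:
        worker.join()


# In-memory streams stand in for the pipes attached to a real container.
collected = []
run_logger(
    io.StringIO("request served\n"),
    io.StringIO("disk almost full\n"),
    lambda source, line: collected.append((source, line)),
)
print(sorted(collected))
```

Swapping the `sink` callable is the only change needed to route the same lines elsewhere, which is the kind of flexibility a plugin-style logger is meant to provide.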

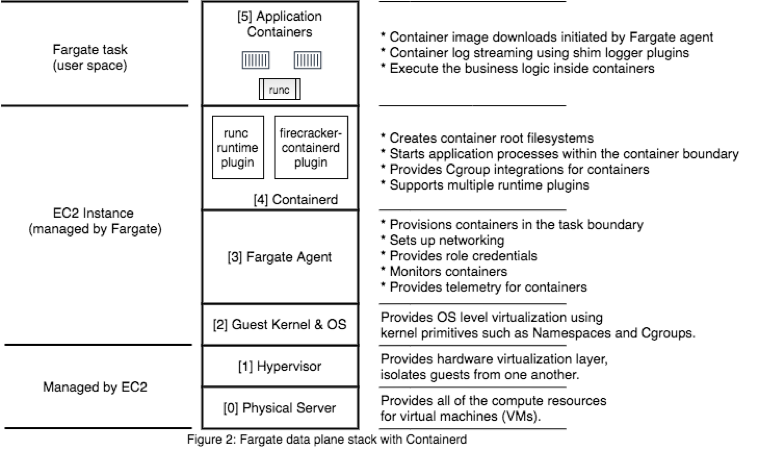

As another example, Fargate can leverage a VM-based runtime for containers such as Firecracker VMM by simply switching Containerd’s runtime plugin to firecracker-containerd instead of runC. This plugin enables Containerd to manage containers as Firecracker microVMs. The flexibility of switching container runtimes with minimal configuration change is highly desirable for satisfying different kinds of use-cases currently supported by Fargate.

Fargate data plane architecture

Once we made the decision to adopt Containerd, a natural progression was to create components specific to the Fargate data plane instead of using the ECS agent to orchestrate containers on Fargate instances. We wrote a new Fargate agent for this purpose, which replaces the ECS agent and is responsible for interacting with Containerd to orchestrate containers on a VM. Not only does this new Fargate agent integrate with Containerd, it is also optimized for Fargate. This has already enabled Fargate to deliver features such as supporting EKS pods on Fargate in a seamless manner. This new architecture also allows Fargate to utilize Firecracker microVMs to run containers via the firecracker-containerd runtime. Figure 2 illustrates the new architecture of the Fargate data plane stack.

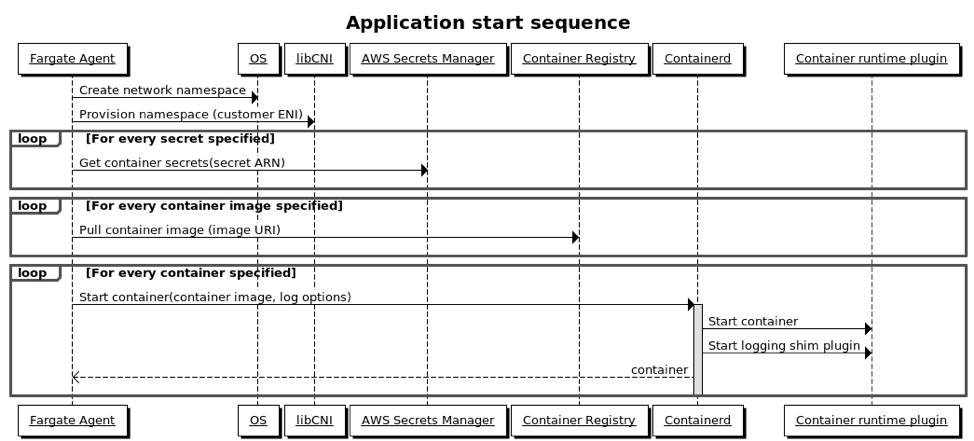

Figure 3 shows a rough sequence of events that lead to the Fargate agent running customer containers on a VM when starting an ECS task.

- The Fargate agent receives a message that it needs to start a task. This message also contains details about the elastic network interface (ENI) that has been provisioned for the task.

- It then sets up the networking for that task by creating a new network namespace and provisioning the network interface to this newly created network namespace (see Figure 3 below).

- Next, using the ENI from the customer’s account, it downloads the container images and any secrets and configuration needed to bootstrap the containers.

- Customer containers are started next using Containerd APIs.

- Containerd in turn creates shim processes that serve as parent processes for containers. These shim processes are also used to spin up containers using runC.

- The Fargate agent also specifies what kind of Containerd logger shims need to be started based on the configuration specified by customers. Containerd uses this to start logging plugins for containers.
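The ordering of the steps above can be sketched as a small simulation. Nothing here calls Containerd or any AWS API: `TaskMessage`, `Recorder`, `start_task`, the step names and the namespace path are all hypothetical, and the recorder simply logs which operation would run and in what order.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TaskMessage:
    """Hypothetical shape of the 'start task' message the agent receives."""
    task_id: str
    eni_id: str
    images: List[str]
    log_driver: str


class Recorder:
    """Stand-in for networking, downloads and Containerd; records each call."""

    def __init__(self):
        self.calls = []

    def __call__(self, step, *args):
        self.calls.append((step,) + args)


def start_task(msg, do):
    """Walk through the startup sequence in the order the list describes."""
    netns = "/var/run/netns/" + msg.task_id  # hypothetical location
    do("create_netns", netns)                # new network namespace
    do("attach_eni", msg.eni_id, netns)      # provision the ENI into it
    for image in msg.images:
        do("pull_image", image)              # download over the task ENI
    do("fetch_secrets_and_config", msg.task_id)
    for image in msg.images:
        # The runtime is asked to start the container in the prepared
        # namespace, with the logger selected by the customer's config.
        do("start_container", image, netns, msg.log_driver)
    return netns


recorder = Recorder()
start_task(TaskMessage("task-1", "eni-123", ["example/web:latest"], "awslogs"), recorder)
for call in recorder.calls:
    print(call)
```

The point of the sketch is the ordering: networking is fully set up before any image is pulled or any container starts, which is why no placeholder container is needed to hold the namespace.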

Figure 4 illustrates a high-level view of how these components are laid out:

Conclusion

We are excited about the robustness, simplicity, and flexibility this new architecture brings, as we are always looking to innovate on the Fargate platform and add capabilities that unlock new use-cases for our customers. We would love to hear your feedback on these changes and the shim loggers repo in the comments section. You can also reach out to us on the containers roadmap repo on GitHub.