AWS DevOps & Developer Productivity Blog

How FactSet automates thousands of AWS accounts at scale

This post is by FactSet’s Cloud Infrastructure team, Gaurav Jain, Nathan Goodman, Geoff Wang, Daniel Cordes, Sunu Joseph, and AWS Solution Architects Amit Borulkar and Tarik Makota. In their own words, “FactSet creates flexible, open data and software solutions for tens of thousands of investment professionals around the world, which provides instant access to financial data and analytics that investors use to make crucial decisions. At FactSet, we are always working to improve the value that our products provide.”

At FactSet, our operational goal in using the AWS Cloud is to combine high developer velocity with enterprise governance. Assigning an AWS account per project gives each project team the agility and isolation boundary it needs to innovate faster. As we migrated existing workloads and developed new ones in the cloud, we realized that the number of AWS accounts we operate was growing into the thousands. To give diverse project teams a consistent and repeatable experience, we automated the AWS account creation process and the various service control policies (SCPs) and AWS Identity and Access Management (IAM) policies and roles associated with the accounts, and we enforced policies for ongoing configuration across the accounts. This post covers our automation workflows to enable governance for thousands of AWS accounts.

AWS account creation workflow

To empower our project teams to operate in the AWS Cloud in an agile manner, we developed a platform that creates AWS accounts with a default configuration customized to meet FactSet’s governance policies. These AWS accounts are provisioned with defaults such as a virtual private cloud (VPC), subnets, routing tables, IAM roles, SCPs, add-ons for monitoring and load balancing, and FactSet-specific governance. Developers and project team members can request a micro account for their product via this platform’s website, or do so programmatically using an API or custom Terraform wrapper modules. The following screenshot shows a portion of the web interface that allows developers to request an AWS account.

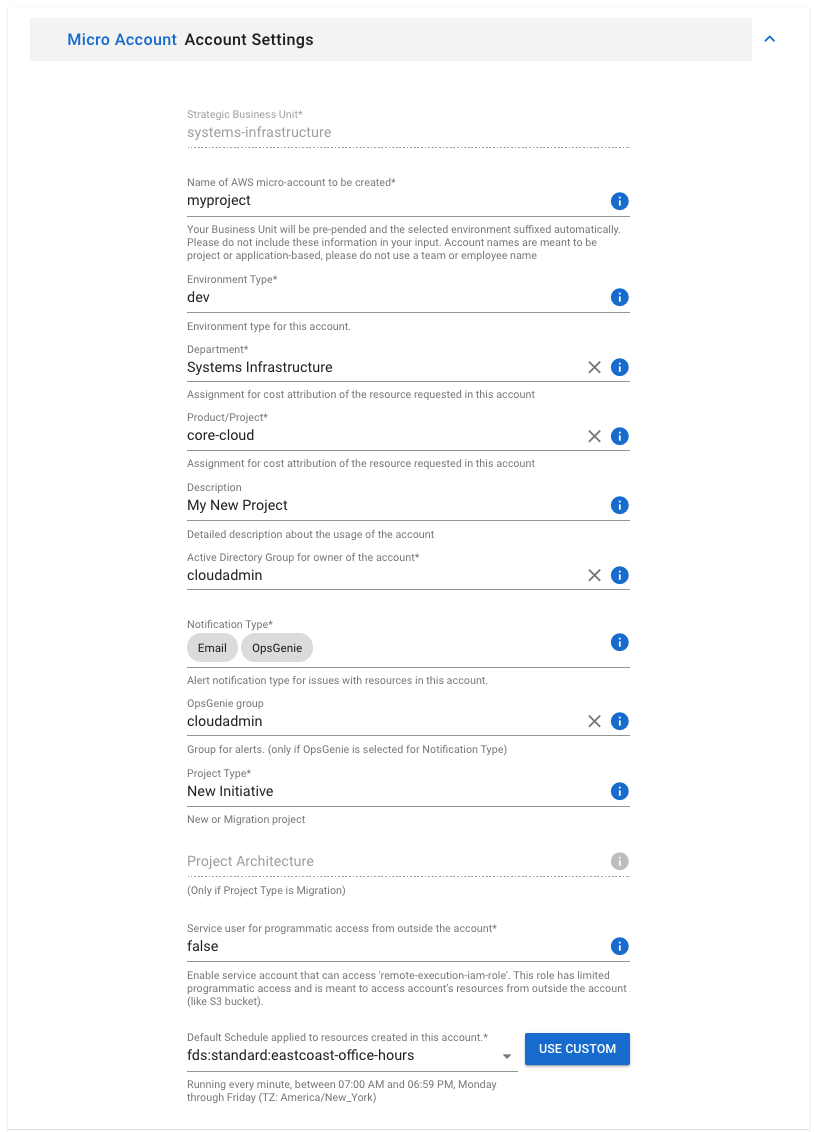

A user requests a micro account by providing relevant information, such as:

- FactSet product

- Department

- Owner

- Target group for alert notification

- Whether the account is for development or production

- The master AWS organization to which the account should be tied

The following screenshot shows the options available when creating a new account.

Assuming there are no collisions with existing accounts, and that manager approval is secured, automation then starts behind the scenes to fulfill that request.
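The request fields and the two gates described above (no collision, manager approval) can be sketched in Python. This is only an illustration: the field names, the `can_fulfill` helper, and especially the collision rule (one account per product, environment, and organization) are assumptions, because the post does not describe how the platform defines a collision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccountRequest:
    product: str
    department: str
    owner: str
    alert_target_group: str
    environment: str   # "development" or "production"
    organization: str  # AWS organization the account is tied to

    def key(self):
        # Assumed collision rule: one account per product, environment,
        # and organization. The real rule is not described in the post.
        return (self.product.lower(), self.environment, self.organization)


def can_fulfill(request, existing_requests, manager_approved):
    """Return (ok, reason) for a new micro account request."""
    if request.environment not in ("development", "production"):
        return False, "environment must be development or production"
    if any(request.key() == other.key() for other in existing_requests):
        return False, "collides with an existing account"
    if not manager_approved:
        return False, "awaiting manager approval"
    return True, "ready for automation"
```

Only a request that passes every check would be handed to the account creation automation.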

The automation for creating a micro account has the following steps:

- We create an AWS account via APIs and place it in a preexisting AWS Organizational Unit (OU) corresponding to a major FactSet corporate division, as determined by the user-selected department. This OU placement not only helps with policy enforcement, but also allows for the costs generated in the account to be charged to the correct internal budget.

- A newly created AWS account comes with a default VPC, but we immediately delete this and add a shared subnet managed by the central networking team. This helps enforce networking policies, such as allowing connectivity to the corporate network but no egress route to the internet. Similarly, we apply pre-curated SCPs that follow FactSet’s governance rules to the account.

- We also use Amazon Simple Email Service (Amazon SES) to generate an account-unique email address, with a small team of cloud administrators as recipients. Although it’s quite rare for us to have to log in to developer-created accounts as a root user, when we need to do so, we ask for the root password to be regenerated, receive it via that email address, and then log in.
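The first step above, creating the account and placing it in an OU, might look roughly like the following Python sketch. It assumes `org` behaves like a boto3 `organizations` client and uses only the `create_account`, `describe_create_account_status`, `list_roots`, and `move_account` operations. The function names, the email naming scheme, and the `example.com` domain are hypothetical. Deleting the default VPC, sharing the subnet, and attaching SCPs are left out.

```python
import time


def unique_root_email(account_name, domain="aws-accounts.example.com"):
    """Build an account-unique root email address (hypothetical scheme)."""
    return f"aws-root+{account_name}@{domain}"


def create_micro_account(org, account_name, target_ou_id, poll_seconds=5.0):
    """Create an account via AWS Organizations and move it into an OU.

    `org` is expected to behave like boto3.client("organizations").
    """
    status = org.create_account(
        Email=unique_root_email(account_name),
        AccountName=account_name,
    )["CreateAccountStatus"]

    # Account creation is asynchronous, so poll until it settles.
    while status["State"] == "IN_PROGRESS":
        time.sleep(poll_seconds)
        status = org.describe_create_account_status(
            CreateAccountRequestId=status["Id"]
        )["CreateAccountStatus"]

    if status["State"] != "SUCCEEDED":
        raise RuntimeError(
            f"account creation failed: {status.get('FailureReason')}"
        )

    # New accounts start under the organization root; move the account
    # to the OU that corresponds to the requesting division.
    root_id = org.list_roots()["Roots"][0]["Id"]
    org.move_account(
        AccountId=status["AccountId"],
        SourceParentId=root_id,
        DestinationParentId=target_ou_id,
    )
    return status["AccountId"]
```

Passing the client in as a parameter keeps the sketch testable with a stub and leaves credentials and region selection to the caller.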

Desired state management using FactSet’s automation modules

At FactSet, the desired state of every AWS account follows an infrastructure as code (IaC) paradigm via a collection of automation modules. An automation module can be written in YAML or a DSL supported by a configuration management platform, such as Terraform or Ansible, or in a programming language such as Go or Python. These modules are called at account creation time and on subsequent enforcement runs. Each module has an entry point that the enforcement process looks for. The process then runs the module logic and checks for a successful exit code. Each module in the automation pipeline is idempotent and, when run, ensures that certain global and account-specific settings are in place. These settings constitute the desired state being enforced by the module. Every module run then does one of three things: it does nothing if all objects and configurations defined in source code are already present, it creates those items if they don’t exist, or it corrects drift that may have occurred between the IaC definition and what is actually present in AWS at the moment.
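To make the three outcomes concrete, here is a minimal Python sketch of an idempotent module. It models the account as a plain dictionary rather than calling AWS, and the names `plan_changes` and `enforce` and the example settings are illustrative assumptions, not FactSet’s module interface.

```python
def plan_changes(desired, actual):
    """Compare desired settings with what exists and list needed actions."""
    actions = []
    for name, want in desired.items():
        if name not in actual:
            actions.append(("create", name, want))
        elif actual[name] != want:
            actions.append(("correct_drift", name, want))
    return actions


def enforce(desired, actual):
    """Apply the plan to `actual` in place and return an exit code."""
    for _action, name, want in plan_changes(desired, actual):
        actual[name] = want
    return 0  # 0 signals success to the calling enforcement process


desired = {
    "role/ReadOnly": {"max_session_hours": 1},
    "bucket/logs": {"versioning": True},
}
actual = {"bucket/logs": {"versioning": False}}  # drifted, and a role is missing

first_plan = plan_changes(desired, actual)   # one create, one drift correction
exit_code = enforce(desired, actual)
second_plan = plan_changes(desired, actual)  # empty: nothing left to do
```

Running `enforce` a second time changes nothing, which is the property that makes repeated enforcement runs safe.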

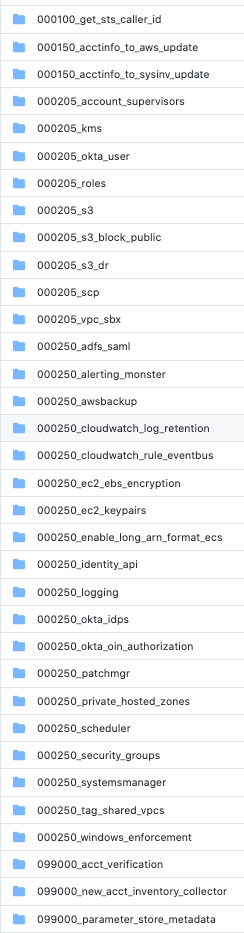

Every module is responsible for configuring an entity within the AWS account. The following screenshot shows some of the modules used to enforce account settings.

A few in particular are worth calling out, such as the roles module, which ensures the presence of all the different IAM identities as desired by FactSet’s governance process. The various s3 modules provide users with a default set of useful buckets in their accounts that can have pre-defined trust relationships. The patchmgr code configures useful policies for regular Amazon Elastic Compute Cloud (Amazon EC2) patching. The AMI sharing module shares any AMIs created by central teams with all micro accounts immediately.

The average runtime of modules across accounts, and errors thrown by the module run, are monitored using InfluxDB and Grafana charts. Module authors are notified in case of discrepancies and failures.

Separation of module logic and configuration settings

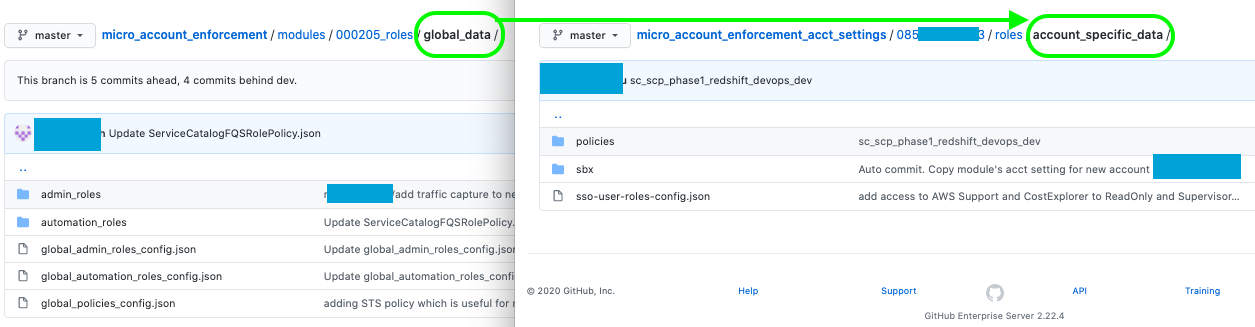

Each module also contains the default global settings that apply to a new AWS account. The module source code and the default global settings are decoupled and stored in separate source code repositories. The global settings repository is automatically updated with a new directory corresponding to the configuration of an AWS account upon its creation. The configuration files in this directory can be modified to override the global settings that are created by default. The following screenshot shows global settings in a module repo being overridden in an account-specific repository.
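The override behavior can be sketched as a recursive merge of account-specific values over the global defaults. This Python sketch assumes that settings are nested key-value data and that overrides apply key by key. The post does not specify the merge semantics, and the setting names below are made up for illustration.

```python
import copy


def merge_settings(defaults, overrides):
    """Return defaults with account-specific overrides applied recursively."""
    merged = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_settings(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical global defaults stored alongside the modules.
global_defaults = {
    "patchmgr": {"schedule": "weekly", "reboot": True},
    "s3": {"versioning": True},
}
# Hypothetical override file from one account's directory.
account_overrides = {"patchmgr": {"schedule": "daily"}}

effective = merge_settings(global_defaults, account_overrides)
```

Here `effective` keeps every default except the patch schedule, and `global_defaults` itself is left unmodified.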

All desired changes to AWS account configuration can be made using standard source version control workflows. The Git repository containing the automation module code has a development branch in addition to the primary branch. Enforcement for development accounts runs from the development branch, which tests changes before they are applied to production accounts. We also perform additional unit testing on automation modules.

Enforcing and updating account states

The modules to ensure account settings are invoked twice a day for all AWS accounts tied to development and once daily for all other account types. These enforcement runs help detect any deviations from the governance policies that were applied when the AWS account was first created.

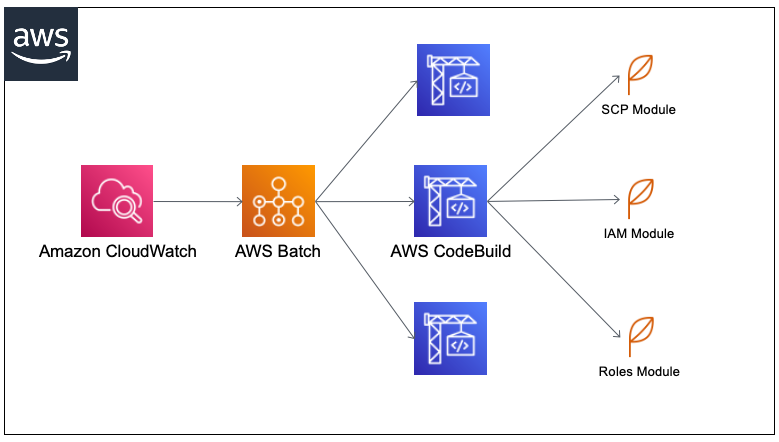

The runs are initiated by a scheduled Amazon CloudWatch trigger that starts a long-running AWS Batch job. This Batch job orchestrates all of the enforcement runs: it starts parallel enforcement runs for the AWS accounts in sets of about 20 at a time, so that we don’t exceed API limits. Each of these account-specific enforcement calls takes the form of an AWS CodeBuild job that runs the modules against the account. The modules are run in stages, and the modules in one stage must complete successfully before the modules in the next stage run. The failure of any one module stops the CodeBuild job.
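The orchestration pattern described above, batches of about 20 accounts with staged modules and stop-on-failure, can be approximated locally in Python. In this sketch threads stand in for CodeBuild jobs, `run_module` is a caller-supplied function that returns an exit code, and modules within a stage run one after another. All of these are assumptions made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def run_account(account_id, stages, run_module):
    """Run modules stage by stage; stop at the first failing module."""
    for stage in stages:
        for module in stage:
            if run_module(account_id, module) != 0:
                return False
    return True


def enforce_all(account_ids, stages, run_module, batch_size=20):
    """Enforce accounts in parallel, at most `batch_size` at a time."""
    results = {}
    with ThreadPoolExecutor(max_workers=batch_size) as pool:
        for start in range(0, len(account_ids), batch_size):
            batch = account_ids[start:start + batch_size]
            # zip() consumes the map iterator, so each batch finishes
            # before the next one is submitted.
            outcomes = pool.map(
                lambda acct: run_account(acct, stages, run_module), batch
            )
            results.update(zip(batch, outcomes))
    return results
```

A call such as `enforce_all(accounts, [["roles"], ["s3", "patchmgr"]], run_module)` returns a mapping from account ID to success or failure. Waiting for each batch to finish is the simplest way to cap concurrency, although a single slow account then delays the start of the next batch.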

Each CodeBuild job runs within a custom Alpine container with dependencies such as the programming language libraries required by the automation modules. The job assumes a trusted administrator role within the micro account, which grants it sufficient privileges to run enforcement checks. SCP enforcement, which operates via the primary organization account, is enabled via one of the IAM roles associated with the CodeBuild run that is trusted by a role in that master account. The following diagram illustrates this workflow.

Conclusion and looking forward

FactSet’s micro account strategy has enabled our product teams to provision resources and iterate quickly. As we look to enhance our project teams’ experience, our internal roadmap is focused on addressing the following areas, among others:

- Our automated testing and module runtime can always be better!

- There is sometimes a mismatch between the response times of our automation modules and the response times and availability of the various AWS APIs with which they interact. As a result, our CodeBuild enforcement jobs can fail unpredictably, and this has been hard to completely solve.

- Due to API limits and quotas, our implementation can only run a certain number of CodeBuild enforcement jobs in parallel. With thousands of AWS accounts, a complete enforcement run across all accounts can take 3–4 hours. We would like to reduce this time.

- AWS doesn’t provide APIs to delete or rename an account. In our use case, this doesn’t hinder us. We delete the AWS accounts for ad hoc workloads and canceled projects manually.

We hope you enjoyed reading about how FactSet operates in the AWS Cloud, and hope it gives you ideas on how to balance developer velocity with security and governance at scale in your cloud journey.