AWS DevOps & Developer Productivity Blog

Securing Amazon EKS workloads with Atlassian Bitbucket and Snyk

This post was contributed by James Bland, Sr. Partner Solutions Architect, AWS, Jay Yeras, Head of Cloud and Cloud Native Solution Architecture, Snyk, and Venkat Subramanian, Group Product Manager, Bitbucket

One of our goals at Atlassian is to make the software delivery and development process easier. This post explains how you can set up a software delivery pipeline using Bitbucket Pipelines and Snyk, a tool that finds and fixes vulnerabilities in open-source dependencies and container images, to deploy secured applications on Amazon Elastic Kubernetes Service (Amazon EKS). By presenting important development information directly on pull requests inside the product, you can proactively diagnose potential issues, shorten test cycles, and improve code quality.

Atlassian Bitbucket Cloud is a Git-based code hosting and collaboration tool, built for professional teams. Bitbucket Pipelines is an integrated CI/CD service that allows you to automatically build, test, and deploy your code. With its best-in-class integrations with Jira, Bitbucket Pipelines allows different personas in an organization to collaborate and get visibility into the deployments. Bitbucket Pipes are small chunks of code that you can drop into your pipeline to make it easier to build powerful, automated CI/CD workflows.

In this post, we go over the following topics:

- The importance of security as practices shift-left in DevOps

- How embedding security into pull requests helps developer workflows

- Deploying an application on Amazon EKS using Bitbucket Pipelines and Snyk

Shift-left on security

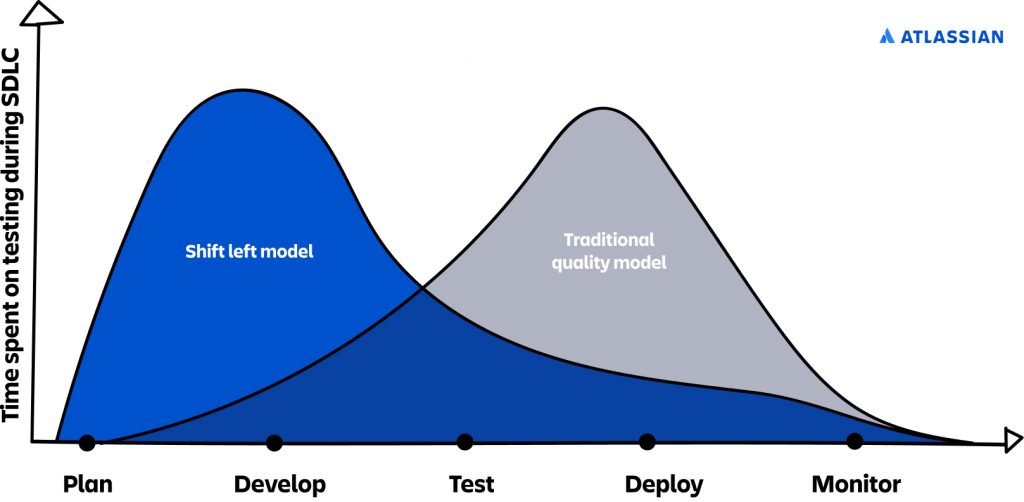

Security is usually an afterthought. Developers tend to focus on delivering software first and addressing security issues later when IT Security, Ops, or InfoSec teams discover them. However, research from the 2016 State of DevOps Report shows that you can achieve better outcomes by testing for security earlier in the process within a developer’s workflow. This concept is referred to as shift-left, where left indicates earlier in the process, as illustrated in the following diagram.

There are two main challenges in shifting security left to developers:

- Developers aren’t security experts – They develop software in the most efficient way they know how, which can mean importing libraries to take care of lower-level details. And sometimes these libraries import other libraries within them, and so on. This makes it almost impossible for a developer, who is not a security expert, to keep track of security.

- It’s time-consuming – There is no automation. Developers have to run tests to understand what’s happening and then figure out how to fix it. This slows them down and takes them away from their core job: building software.

Embedding security into a developer’s workflow

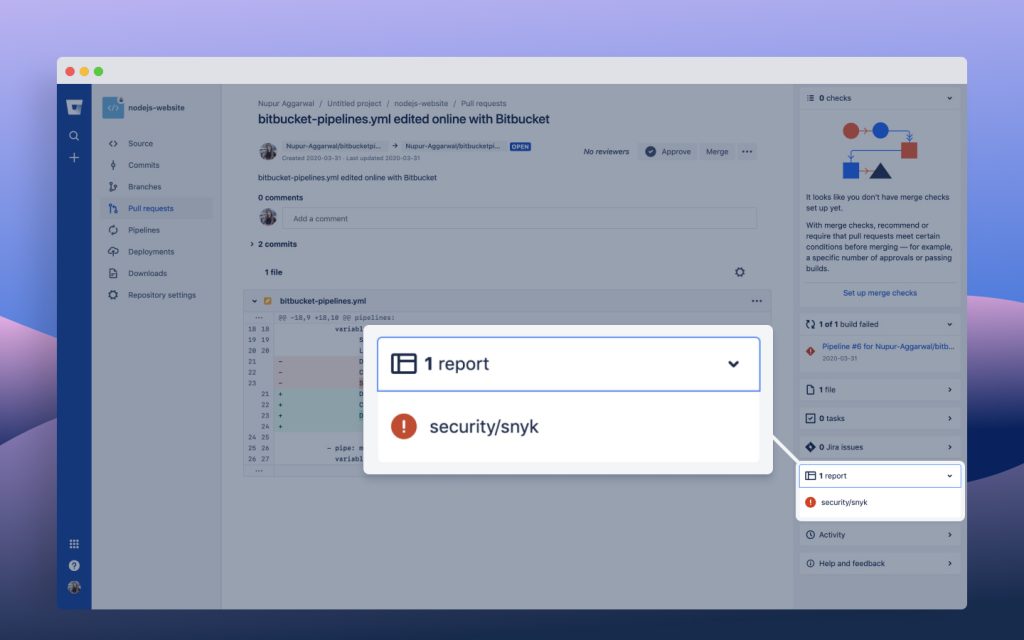

Code Insights is a new feature in Bitbucket that provides contextual information as part of the pull request interface. It surfaces information relevant to a pull request so issues related to code quality or security vulnerabilities can be viewed and acted upon during the code review process. The following screenshot shows Code Insights on the pull request sidebar.

In the security space, we’ve partnered with Snyk, McAfee, Synopsys, and Anchore. When you use any of these integrations in your Bitbucket Pipeline, security vulnerabilities are automatically surfaced within your pull request, prompting developers to address them. By bringing the vulnerability information into the pull request interface before the actual deployment, it’s much easier for code reviewers to assess the impact of the vulnerability and provide actionable feedback.

When security issues are fixed as part of a developer’s workflow instead of post-deployment, it means fewer sev1 incidents, which saves developer time and IT resources down the line, and leads to a better user experience for your customers.

Securing your Atlassian Workflow with Snyk

To demonstrate how a few added steps in your workflow can improve your security posture, we take advantage of the new Snyk integration with Atlassian’s Code Insights, along with Snyk’s integrations with Bitbucket Cloud, Amazon Elastic Container Registry (Amazon ECR; for more information, see Container security with Amazon Elastic Container Registry (ECR): integrate and test), and Amazon EKS (for more information, see Kubernetes workload and image scanning). We reference sample code in a publicly available Bitbucket repository. In this repository, you can find resources such as a multi-stage build Dockerfile for a sample Java web application, a sample bitbucket-pipelines.yml configured to perform Snyk scans and push container images to Amazon ECR, and a reference Kubernetes manifest to deploy your application.

Prerequisites

You first need to have a few resources provisioned, such as an Amazon ECR repository and an Amazon EKS cluster. You can quickly create these using the AWS Command Line Interface (AWS CLI) by invoking the create-repository command and following the Getting started with eksctl guide. Next, make sure that you have enabled the new code review experience in your Bitbucket account.
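As a rough sketch of the cluster half of this step, eksctl also accepts a declarative config file. The cluster name and Region below match the values used later in this post; the file name, node group name, instance type, and node count are illustrative assumptions and do not come from the sample repository.

```yaml
# cluster.yaml: hypothetical eksctl config; adjust names and sizes to your environment
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig

metadata:
  name: my-kube-cluster      # matches CLUSTER_NAME in the deploy step later in this post
  region: us-west-2          # same Region the pipeline pushes images to

nodeGroups:
  - name: ng-1               # assumed node group name
    instanceType: m5.large   # assumed instance type
    desiredCapacity: 2       # assumed node count
```

You could then create the cluster with `eksctl create cluster -f cluster.yaml` and the registry with `aws ecr create-repository --repository-name petstore --region us-west-2`, assuming the repository is named after the image the pipeline builds.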

To take a closer look at the bitbucket-pipelines.yml file, see the following code:

    script:
      - IMAGE_NAME="petstore"
      - docker build -t $IMAGE_NAME .
      - pipe: snyk/snyk-scan:0.4.3
        variables:
          SNYK_TOKEN: $SNYK_TOKEN
          LANGUAGE: "docker"
          IMAGE_NAME: $IMAGE_NAME
          TARGET_FILE: "Dockerfile"
          CODE_INSIGHTS_RESULTS: "true"
          SEVERITY_THRESHOLD: "high"
          DONT_BREAK_BUILD: "true"
      - pipe: atlassian/aws-ecr-push-image:1.1.2
        variables:
          AWS_ACCESS_KEY_ID: $AWS_ACCESS_KEY_ID
          AWS_SECRET_ACCESS_KEY: $AWS_SECRET_ACCESS_KEY
          AWS_DEFAULT_REGION: "us-west-2"
          IMAGE_NAME: $IMAGE_NAME

In the preceding code, we invoke two Bitbucket Pipes to complete two critical tasks in just a few lines: scanning our container image and pushing it to our private registry. Pipes save time, can be reused across repositories, and draw on an extensive catalog of integrations for automating pipelines.
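The snippet above is only the script section of a step. Here is a minimal sketch of how it might sit inside a complete bitbucket-pipelines.yml, assuming a single default pipeline. The step name is made up. DONT_BREAK_BUILD is deliberately flipped to "false" to illustrate a stricter gate, in which findings at or above SEVERITY_THRESHOLD fail the step before the image is pushed.

```yaml
# bitbucket-pipelines.yml: sketch only; the step name and layout are assumptions
pipelines:
  default:
    - step:
        name: Build, scan, and push      # hypothetical step name
        services:
          - docker                       # required to run docker commands in the step
        script:
          - IMAGE_NAME="petstore"
          - docker build -t $IMAGE_NAME .
          - pipe: snyk/snyk-scan:0.4.3
            variables:
              SNYK_TOKEN: $SNYK_TOKEN
              LANGUAGE: "docker"
              IMAGE_NAME: $IMAGE_NAME
              TARGET_FILE: "Dockerfile"
              CODE_INSIGHTS_RESULTS: "true"
              SEVERITY_THRESHOLD: "high"
              DONT_BREAK_BUILD: "false"  # changed from the post: fail on high-severity findings
          # Not reached if the scan above fails the step
          - pipe: atlassian/aws-ecr-push-image:1.1.2
            variables:
              AWS_ACCESS_KEY_ID: $AWS_ACCESS_KEY_ID
              AWS_SECRET_ACCESS_KEY: $AWS_SECRET_ACCESS_KEY
              AWS_DEFAULT_REGION: "us-west-2"
              IMAGE_NAME: $IMAGE_NAME
```

Leaving DONT_BREAK_BUILD at "true", as in the post, still surfaces the findings in Code Insights without blocking the push. Which setting fits depends on how strictly you want the pipeline to gate releases.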

Snyk pipe for Bitbucket Pipelines

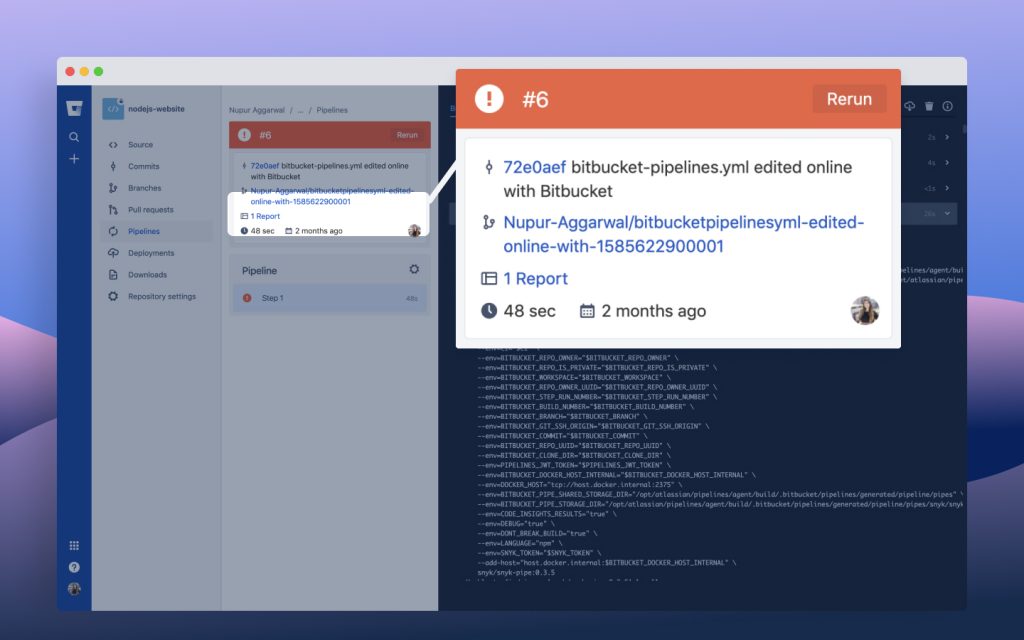

In the following use case, we build a container image from the Dockerfile included in the Bitbucket repository and scan the image using the Snyk pipe. We also invoke the aws-ecr-push-image pipe to securely store our image in a private registry on Amazon ECR. When the pipeline runs, we see results as shown in the following screenshot.

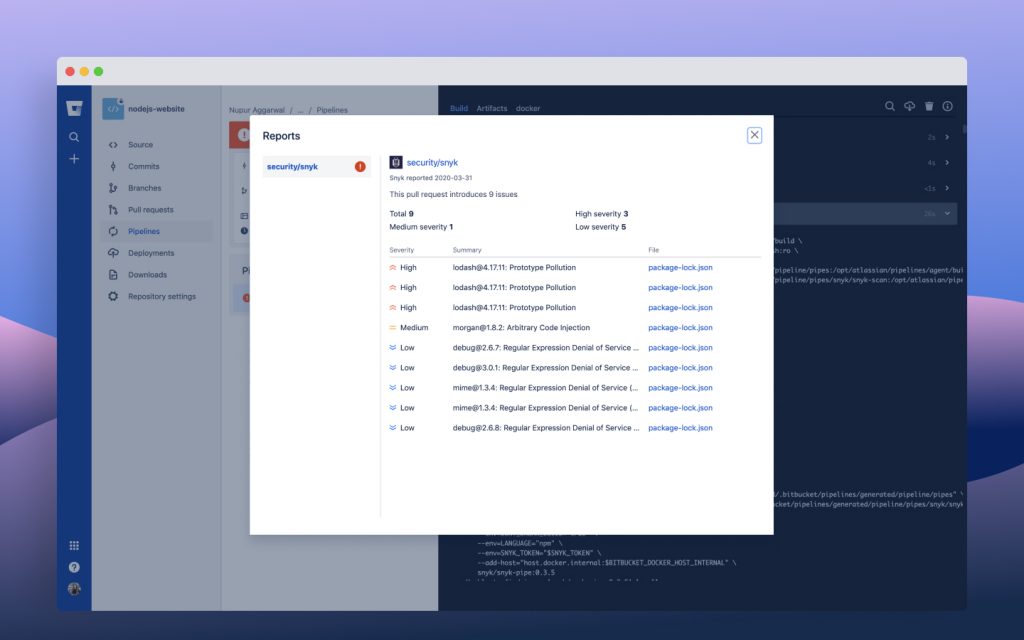

If we choose the available report, we can view the detailed results of our Snyk scan. In the following screenshot, we see detailed insights into the content of that report: three high, one medium, and five low-severity vulnerabilities were found in our container image.

Snyk scans of Bitbucket and Amazon ECR repositories

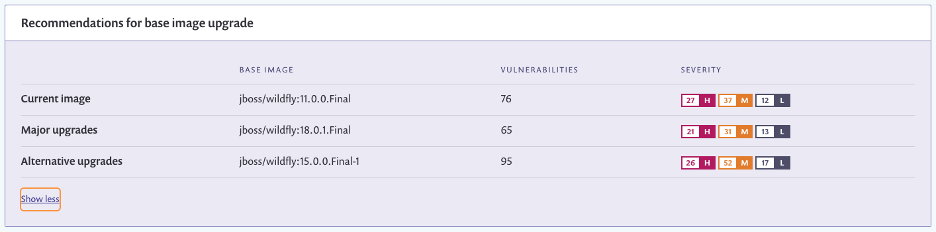

Because we use Snyk’s integration to Amazon ECR and Snyk’s Bitbucket Cloud integration to scan and monitor repositories, we can dive deeper into these results by linking our Dockerfile stored in our Bitbucket repository to the results of our last container image scan. By doing so, we can view recommendations for upgrading our base image, as in the following screenshot.

As a result, we can move past informational insights and onto actionable recommendations. In the preceding screenshot, our current image of jboss/wildfly:11.0.0.Final contains 76 vulnerabilities. We also see two recommendations: a major upgrade to jboss/wildfly:18.0.1.FINAL, which brings our total vulnerabilities down to 65, and an alternative upgrade, which is less desirable.

We can investigate further by drilling down into the report to view additional context on how a potential vulnerability was introduced, and also create a Jira issue in Atlassian Jira Software Cloud. The following screenshot shows a detailed report on the Issues tab.

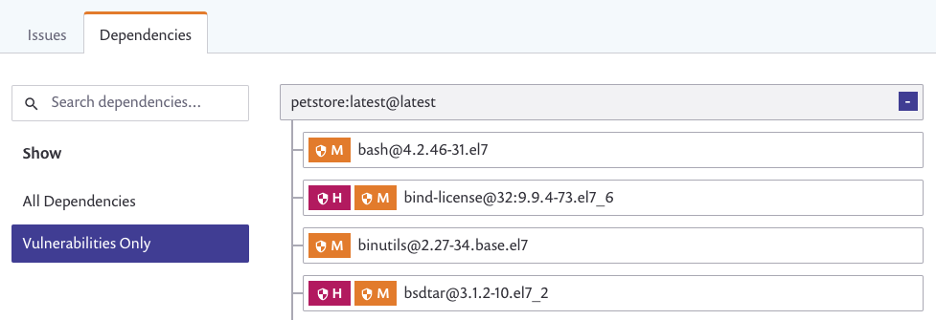

We can also explore the Dependencies tab for a list of all the direct dependencies, transitive dependencies, and the vulnerabilities those may contain. See the following screenshot.

Snyk scan Amazon EKS configuration

The final step in securing our workflow involves integrating Snyk with Kubernetes and deploying to Amazon EKS using Bitbucket Pipelines. Sample Kubernetes manifest files and a bitbucket-pipelines.yml are available for you to use in the accompanying Bitbucket repository for this post. Our bitbucket-pipelines.yml contains the following step:

    script:
      - pipe: atlassian/aws-eks-kubectl-run:1.2.3
        variables:
          AWS_ACCESS_KEY_ID: $AWS_ACCESS_KEY_ID
          AWS_SECRET_ACCESS_KEY: $AWS_SECRET_ACCESS_KEY
          AWS_DEFAULT_REGION: $AWS_DEFAULT_REGION
          CLUSTER_NAME: "my-kube-cluster"
          KUBECTL_COMMAND: "apply"
          RESOURCE_PATH: "java-app.yaml"

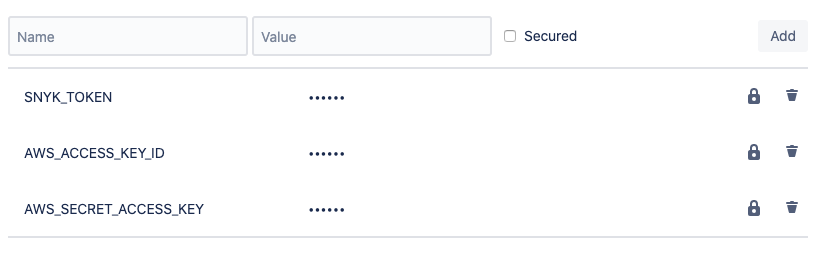

In the preceding code, we call the aws-eks-kubectl-run pipe and pass in a few repository variables we previously defined (see the following screenshot).
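To show how the deploy step might relate to the build step, here is a hedged sketch that places both in one pipeline. Bitbucket Pipelines runs steps in order and stops at the first failing step, so the deploy runs only after the build, scan, and push step succeeds. The step names and the placeholder build command are assumptions. The sample repository may organize its pipeline differently.

```yaml
# bitbucket-pipelines.yml: sketch of step ordering; step names are hypothetical
pipelines:
  default:
    - step:
        name: Build, scan, and push
        services:
          - docker
        script:
          # Placeholder: the build, Snyk scan, and ECR push commands go here
          - echo "build, scan, and push"
    - step:
        name: Deploy to Amazon EKS
        script:
          - pipe: atlassian/aws-eks-kubectl-run:1.2.3
            variables:
              AWS_ACCESS_KEY_ID: $AWS_ACCESS_KEY_ID          # repository variable
              AWS_SECRET_ACCESS_KEY: $AWS_SECRET_ACCESS_KEY  # repository variable, stored as secured
              AWS_DEFAULT_REGION: $AWS_DEFAULT_REGION        # repository variable
              CLUSTER_NAME: "my-kube-cluster"
              KUBECTL_COMMAND: "apply"
              RESOURCE_PATH: "java-app.yaml"
```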

For more information about generating the necessary access keys in AWS Identity and Access Management (IAM) to make programmatic requests to the AWS API, see Creating an IAM User in Your AWS Account.

Now that we have provisioned the supporting infrastructure and invoked kubectl apply -f java-app.yaml to deploy our pods using our container images in Amazon ECR, we can monitor our project details and view some initial results. The following screenshot shows that our initial configuration isn’t secure.

The reason for this is that we didn’t explicitly define a few parameters in our Kubernetes manifest under securityContext. For example, parameters such as readOnlyRootFilesystem, runAsNonRoot, allowPrivilegeEscalation, and capabilities either aren’t defined or are set incorrectly in our template. As a result, we see this in our findings with the FAIL flag. Hovering over these on the Snyk console provides specific insights on how to fix these, for example:

- Run as non-root – Whether any containers in the workload have securityContext.runAsNonRoot set to false or unset

- Read-only root file system – Whether any containers in the workload have securityContext.readOnlyRootFilesystem set to false or unset

- Drop capabilities – Whether all capabilities are dropped and CAP_SYS_ADMIN isn’t added

To save you the trouble of researching this, we provide another sample template, java-app-snyk.yaml, which you can apply against your running pods. The difference in this template is that we have included the following lines to the manifest, which address the three failed findings in our report:

    securityContext:
      allowPrivilegeEscalation: false
      readOnlyRootFilesystem: true
      runAsNonRoot: true
      capabilities:
        drop:
          - all
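For orientation, these settings belong at the container level of the pod spec. The following is a minimal sketch of a Deployment with the block in place. The resource name, labels, account ID, image tag, and port are placeholders chosen for illustration and are not the contents of java-app-snyk.yaml.

```yaml
# Sketch only: name, labels, image URI, and port are placeholder assumptions
apiVersion: apps/v1
kind: Deployment
metadata:
  name: java-app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: java-app
  template:
    metadata:
      labels:
        app: java-app
    spec:
      containers:
        - name: java-app
          # 123456789012 is a placeholder AWS account ID
          image: 123456789012.dkr.ecr.us-west-2.amazonaws.com/petstore:latest
          ports:
            - containerPort: 8080
          securityContext:               # set per container
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsNonRoot: true
            capabilities:
              drop:
                - all
```

Two caveats apply. With runAsNonRoot set to true, Kubernetes refuses to start a container whose image runs as root, so the image must define a non-root user. With readOnlyRootFilesystem set to true, an application that writes to disk may need a writable volume, such as an emptyDir, mounted at the paths it uses.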

After a subsequent scan, we can validate that our changes propagated successfully and that our Kubernetes configuration is secure (see the following screenshot).

Conclusion

This post demonstrated how to secure your entire flow proactively with Atlassian Bitbucket Cloud and Snyk. Seamless integrations to Bitbucket Cloud provide you with actionable insights at each step of your development process.

Get started for free with Bitbucket and Snyk and learn more about the Bitbucket-Snyk integration.

“The content and opinions in this post are those of the third-party author and AWS is not responsible for the content or accuracy of this post.”