AWS for Games Blog

Pain in the Asset Library: How Machine Learning can make your production pipeline 1000x faster

Finding related textures in a texture library can be a black hole of wasted time. Using Amazon Rekognition, Amazon’s machine learning API, you can tag your textures and do searches to find them in seconds…

In game development, we commonly have a large asset library of textures or scenes that becomes our “painter’s palette”. This palette is what we use to bring all of the 3D landscape and geometry to life. The choices we make are very important, as they can change how someone perceives our entire world. A couple of textures can make a landscape look like real life, a comic-shaded land, or a cel-shaded apocalyptic land.

With so many textures and styles available, it’s not uncommon to have thousands of them to choose from. The main issue is that they are seldom tagged correctly, and folder names and filenames can be misleading or only partially correct. To be accurate, you would need to tag every one of them, and doing that by hand is a monumental task that in most cases is unrealistic.

To accomplish accurate tagging, one or more individuals would have to open each one, look at it, give their opinion on what it is, then tag each file with that information. That would take too much time in any scenario.

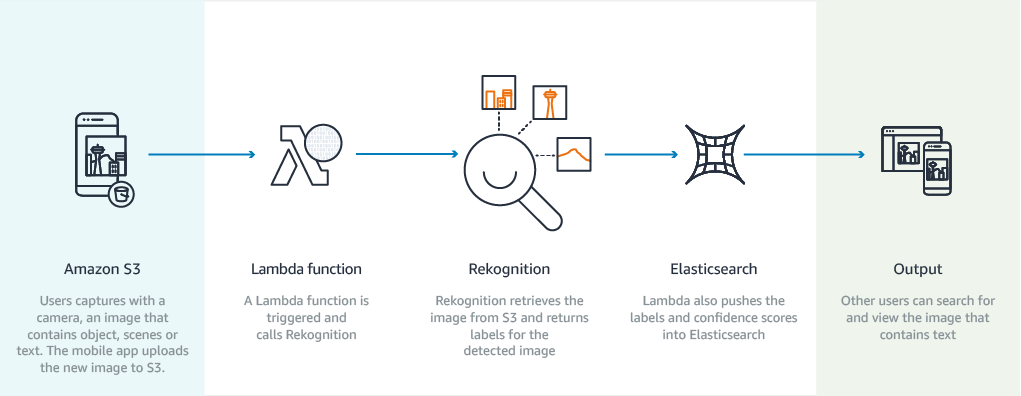

With Amazon Rekognition, Amazon’s image recognition service built on machine learning, this manual process suddenly becomes incredibly simple and dramatically faster. If an artist took 40 seconds to open a file, look at the image, form an opinion, write multiple tags for it, and store them in a database, it would take a little over 55 working hours to process 5,000 files. By comparison, with Rekognition the same job can be batched and completed in the time it takes to upload the images. In our test code, uploading at a speed of 200 megabits per second, it took a little less than 3 minutes to complete.
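To make that comparison concrete, here is the arithmetic as a few lines of Python. The 40 seconds, 5,000 files, and 3 minutes come from the paragraph above; rounding "a little less than 3 minutes" up to exactly 180 seconds is my assumption, so treat the speedup as a rough estimate.

```python
# Figures taken from the text above.
SECONDS_PER_FILE = 40   # manual time to open, inspect, and tag one texture
FILE_COUNT = 5_000      # size of the example library
BATCH_SECONDS = 3 * 60  # assumption: "a little less than 3 minutes" rounded up

manual_seconds = SECONDS_PER_FILE * FILE_COUNT
manual_hours = manual_seconds / 3600
speedup = manual_seconds / BATCH_SECONDS

print(f"Manual tagging: {manual_hours:.1f} hours")           # 55.6 hours
print(f"Speedup over the batch run: about {speedup:.0f}x")   # about 1111x
```

That is where the roughly 1000x figure in the title comes from.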

Now let’s try it

If you want to try single images, you can check it out here:

https://console.aws.amazon.com/rekognition/home?region=us-east-1#/label-detection

Just upload the image and look at the responses. Next, let’s do this using Python. We’re going to use the AWS SDK which you can install from here:

https://aws.amazon.com/tools/#sdk

Once it’s installed, we’re going to walk through a simple example where you specify a folder. When you run the code, it uploads all of the JPEGs and PNGs in that folder to Amazon Rekognition, stores the returned tags in a SQLite database alongside the filenames, and lets you run a simple search that shows the images you asked for. I’ll show you a quick and simple way to do it in Python, but if you’re not a Python fan, you can do the same thing in your favorite language. The first thing is to install the AWS SDK for Python from here:

https://boto3.readthedocs.io/en/latest/guide/quickstart.html

The quick and dirty way is to install the ‘Boto3’ package with pip: pip install boto3

Also, make sure you configure an AWS access key: https://console.aws.amazon.com

Click in the console under IAM -> Users -> Security Credentials -> Create Access key

Take the Access Key ID and Secret Access Key and set up your credentials and config files as described in the quickstart link above (on Windows, the credentials file is located at %USERPROFILE%\.aws\credentials).
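If you want to double-check that the credentials file is where the SDK expects it, here is a minimal sketch using only the Python standard library. It assumes the usual INI layout of the credentials file (a `[default]` section holding `aws_access_key_id` and `aws_secret_access_key`); the helper name `list_aws_profiles` is my own and not part of any AWS tooling.

```python
import configparser
from pathlib import Path


def list_aws_profiles(credentials_path=None):
    """Map each profile name in the credentials file to True if it has both keys.

    Hypothetical helper for illustration only; it never prints or returns
    the secret values themselves.
    """
    if credentials_path is None:
        # Path.home() resolves to %USERPROFILE% on Windows and $HOME elsewhere.
        credentials_path = Path.home() / ".aws" / "credentials"

    parser = configparser.ConfigParser()
    parser.read(credentials_path)  # a missing file is silently skipped

    return {
        name: parser.has_option(name, "aws_access_key_id")
        and parser.has_option(name, "aws_secret_access_key")
        for name in parser.sections()
    }
```

Calling `list_aws_profiles()` with no arguments checks the default location. An empty result means the file is missing or has no profiles, and a `False` value means that profile is missing one of the two keys.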

With just a few lines, you can be up and running.

1. Import boto3 library

2. Create a Rekognition client for the region you plan to use and assign it to a variable

3. Pass the image to the detect_labels call, either as bytes or as an S3 bucket and file name. You may also want to set the maximum number of labels and the minimum confidence.

For more details: http://boto3.readthedocs.io/en/latest/reference/services/rekognition.html

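(Ignore the docs on passing a base64-encoded image; just pass the bytes themselves and the SDK handles the encoding.) In code, this comes down to one check: if imagebytes is None, reference the image by S3 bucket and file name; otherwise, pass the bytes directly.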

4. Parse out the ‘Labels’ list from the response, which contains each label and its confidence percentage, and return it (return response['Labels'])
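The four steps above can be sketched roughly as follows. This is a minimal reconstruction, not the exact code from the demo repository: the function names, the default region, and the default values for `max_labels` and `min_confidence` are my assumptions. The `boto3.client("rekognition", ...)` and `detect_labels(Image=..., MaxLabels=..., MinConfidence=...)` calls are the standard Boto3 ones.

```python
def make_rekognition_client(region_name="us-east-1"):
    """Steps 1 and 2: import boto3 and create a Rekognition client."""
    # Imported here rather than at module level so detect_labels() below
    # can be tried with a stand-in client when the SDK is not installed.
    import boto3

    return boto3.client("rekognition", region_name=region_name)


def detect_labels(client, imagebytes=None, bucket=None, key=None,
                  max_labels=10, min_confidence=80.0):
    """Steps 3 and 4: send one image to Rekognition and return its labels."""
    if imagebytes is None:
        # No local bytes given, so point Rekognition at an object in S3.
        if not (bucket and key):
            raise ValueError("provide imagebytes, or both bucket and key")
        image = {"S3Object": {"Bucket": bucket, "Name": key}}
    else:
        # Raw bytes; no manual base64 encoding is needed with the SDK.
        image = {"Bytes": imagebytes}

    response = client.detect_labels(
        Image=image,
        MaxLabels=max_labels,
        MinConfidence=min_confidence,
    )
    # Each entry is a dict that includes 'Name' and 'Confidence'.
    return response["Labels"]


def label_file(path, region_name="us-east-1"):
    """Convenience wrapper (hypothetical): label a single local image file."""
    client = make_rekognition_client(region_name)
    with open(path, "rb") as f:
        return detect_labels(client, imagebytes=f.read())
```

With credentials configured, calling `label_file("brick_wall.png")` (a made-up filename) should return a list of dictionaries, each carrying a label `Name` and a `Confidence` percentage.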

That’s it!

I’ve assembled a quick demo that lets you select a folder, updates a local database with tags for each image, and lets you type in a phrase to see all of the images that match it. You can find the code here: https://github.com/AmazonGameTech/AWSTextureML and a short video of me walking through the code here:

And of course the documentation on Amazon Rekognition: https://docs.aws.amazon.com/rekognition/latest/dg/what-is.html
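To give a feel for the local side of the demo described above, here is a small sketch of the folder scan, the SQLite tag store, and the phrase search, using only the Python standard library. The table layout and every function name here are my own assumptions for illustration and may differ from what the repository actually does. The `labels` argument is expected in the shape Rekognition returns: a list of dictionaries with `Name` and `Confidence` keys.

```python
import sqlite3
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def find_images(folder):
    """Return the JPEG/PNG files directly inside `folder`, sorted by path."""
    return sorted(
        p for p in Path(folder).iterdir()
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )


def open_tag_db(path=":memory:"):
    """Open (or create) the tag database; the schema is an assumption."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS tags ("
        " filename TEXT NOT NULL,"
        " label TEXT NOT NULL,"
        " confidence REAL NOT NULL,"
        " PRIMARY KEY (filename, label))"
    )
    return conn


def store_labels(conn, filename, labels):
    """Save one image's labels, replacing any earlier rows for the same label."""
    rows = [
        (str(filename), label["Name"].lower(), label["Confidence"])
        for label in labels
    ]
    with conn:  # commits on success, rolls back on error
        conn.executemany(
            "INSERT OR REPLACE INTO tags (filename, label, confidence)"
            " VALUES (?, ?, ?)",
            rows,
        )


def search(conn, phrase):
    """Return the filenames that have at least one label containing `phrase`."""
    cursor = conn.execute(
        "SELECT DISTINCT filename FROM tags WHERE label LIKE ? ORDER BY filename",
        (f"%{phrase.lower()}%",),
    )
    return [row[0] for row in cursor]
```

A full run would loop over `find_images(folder)`, send each file's bytes to Rekognition, pass the returned labels to `store_labels`, and then call `search(conn, "brick")` to list the matching files. Storing one row per filename and label keeps the search a single query, though a larger library might want an index on the label column.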

Got some ideas or are there other things you would like to see? Hit me up at obriroya@amazon.com, or let me know in the comments below.

About Royal O’Brien: I’ve been doing software development since my first TRS-80 back in 1978. I continued learning multiple software languages and hardware hacking through my teenage years (back when computers weren’t cool at all). I learned about discipline in the US Marines, and the finer details working on their F/A-18 aircraft for 4 years. I spent a few years learning how to handle an unruly crowd by having fun as a live DJ and then went into business for myself. I raised a family as a serial entrepreneur for the last 23 years. As a businessman, I raised funds, built, and divested multiple companies. At the same time, as an engineer, I patented multiple process methods for VOIP, video correction, and video game software distribution technologies. I have worked with multiple game companies at the code, process and infrastructure levels, as well as provided guidance on the marketing, fiscal, and commercialization aspects of the business. I’ve led and played in multiple top world ranked guilds on multiple games, and am a hardcore min/max gamer with never enough time to play. Passion is my fuel, and to me, it is priceless.