TCP BBR Congestion Control with Amazon CloudFront

One of the fundamental value propositions of a content delivery network (CDN) is performance. Two of the key aspects of great performance are latency and throughput: that is, delivering a large volume of bits quickly and consistently. These attributes play a critical role in content delivery of all kinds, from video streams to API calls. We are continuously refining Amazon CloudFront technology and infrastructure to maximize these performance attributes and provide the best quality experience for the broadest set of content and workloads.

During March and April 2019, CloudFront deployed a new TCP congestion control algorithm to help improve latency and throughput: the TCP Bottleneck Bandwidth and Round-trip propagation time (BBR) congestion control algorithm. BBR is an algorithm developed by Google that aims to improve performance for internet traffic. BBR works by observing how fast a network is already delivering traffic and the latency of current round trips. It then uses that data as input to packet-pacing heuristics that can improve performance.
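To make the idea concrete, here is a minimal sketch of the two estimates that BBR is built around: a recent maximum of the observed delivery rate and a recent minimum of the observed round-trip time. It is a toy model, not CloudFront's or the Linux kernel's implementation. The class name `BbrModelSketch`, the window sizes, and the sample values are all assumptions made for illustration.

```python
from collections import deque


class BbrModelSketch:
    """Toy model of the two quantities BBR estimates; not a real TCP stack."""

    def __init__(self, bw_window=10, rtt_window=10):
        # Sliding windows of recent samples (window sizes are illustrative).
        self.bw_samples = deque(maxlen=bw_window)    # bytes per second
        self.rtt_samples = deque(maxlen=rtt_window)  # seconds

    def on_ack(self, delivered_bytes, interval_s, rtt_s):
        """Record one delivery-rate sample and one round-trip-time sample."""
        self.bw_samples.append(delivered_bytes / interval_s)
        self.rtt_samples.append(rtt_s)

    def bottleneck_bw(self):
        # The highest recent delivery rate approximates the bottleneck bandwidth.
        return max(self.bw_samples)

    def min_rtt(self):
        # The lowest recent RTT approximates the path's propagation delay.
        return min(self.rtt_samples)

    def bdp_bytes(self):
        # Bandwidth-delay product: how much data the path can hold in flight.
        return self.bottleneck_bw() * self.min_rtt()

    def pacing_rate(self, pacing_gain=1.0):
        # Packets are paced at a multiple of the estimated bottleneck bandwidth.
        return pacing_gain * self.bottleneck_bw()


# Hypothetical samples: (bytes delivered, over how many seconds, measured RTT).
model = BbrModelSketch()
model.on_ack(delivered_bytes=100_000, interval_s=0.5, rtt_s=0.050)
model.on_ack(delivered_bytes=250_000, interval_s=0.5, rtt_s=0.040)
model.on_ack(delivered_bytes=200_000, interval_s=0.5, rtt_s=0.060)

print("bottleneck bandwidth (B/s):", model.bottleneck_bw())
print("minimum RTT (s):", model.min_rtt())
print("pacing rate at gain 1.25 (B/s):", model.pacing_rate(1.25))
```

A real implementation also cycles the pacing gain over time to probe for more bandwidth and to drain queues. The sketch leaves that out to keep the core model visible.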

The BBR algorithm provides a number of advantages over other congestion control methods. All TCP congestion control algorithms (such as CUBIC, Reno, and Vegas) attempt to balance packet transmission fairness with how they detect or respond to congestion or packet loss. Older algorithms can cause latency and throughput to oscillate because they back off packet transmission more aggressively or recover more cautiously when they detect packet loss. In contrast, BBR evaluates congestion by measuring round-trip times, which provides higher, more stable throughput while also improving latency. BBR adapts more quickly to changing conditions, yet keeps trying to transmit as much data as possible even when there are transient issues.
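The oscillation described above can be illustrated with a deliberately simplified comparison. The first function follows Reno-style additive increase and multiplicative decrease (AIMD): the window grows by one segment per round and halves on loss. The second holds the window at a fixed multiple of an estimated bandwidth-delay product and does not shrink it on isolated loss, which is roughly how a model-based approach such as BBR behaves. The function names, the loss pattern, and the numeric values are assumptions for illustration, not measurements.

```python
def loss_based_window(loss_rounds, rounds, start=10.0):
    """Reno-style AIMD: add one segment per round, halve the window on loss."""
    cwnd = start
    history = []
    for r in range(rounds):
        if r in loss_rounds:
            cwnd = max(1.0, cwnd / 2)  # multiplicative decrease
        else:
            cwnd += 1.0                # additive increase
        history.append(cwnd)
    return history


def model_based_window(loss_rounds, rounds, bdp_segments=20.0, gain=2.0):
    """Model-based sketch: the window tracks gain * estimated BDP.

    loss_rounds is accepted only for symmetry with loss_based_window;
    in this toy model an isolated loss does not change the window.
    """
    return [gain * bdp_segments for _ in range(rounds)]


losses = {3, 6}  # hypothetical rounds in which a packet is lost
aimd = loss_based_window(losses, rounds=8)
model = model_based_window(losses, rounds=8)

print("loss-based :", aimd)
print("model-based:", model)
```

The loss-based window traces the familiar sawtooth, while the model-based window stays flat for as long as its bandwidth and round-trip estimates are unchanged.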

Using BBR in CloudFront has been favorable overall, with aggregate throughput improving by up to 22% across several networks and regions. These performance gains depend on a number of factors, including the resource size as well as the quality, capacity, and distance of the connectivity in a given last-mile network.

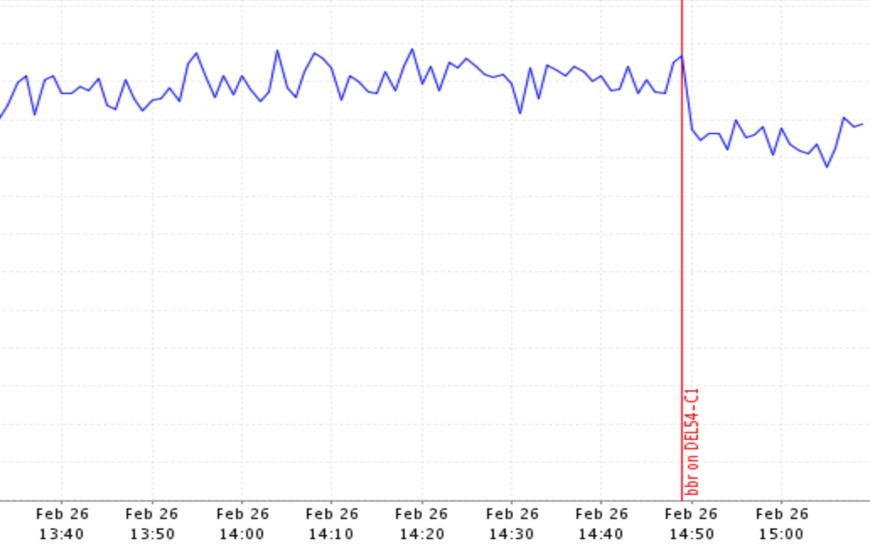

As an example of how BBR can affect performance, see the graph of latency for a single POP in India before and after we enabled BBR during our pre-deployment testing. The graph shows a measurable 14% improvement in latency with BBR.

Customers and industry observers have noticed the results as well. For example, Tutela, an independent, crowd-sourced data company, collects network performance and quality of experience data worldwide, helping organizations in the mobile industry to understand and improve the world’s networks. They gather information on mobile infrastructure and test users’ wireless experience by running millions of download tests every day against content delivery networks, including CloudFront. The test results are collected from a set of over 3,000 instrumented mobile apps used by 300 million smartphone users.

The chart below shows the change that Tutela observed in global average download throughput when a 2 MB test file was downloaded from the CloudFront CDN. The effect of BBR also shows up at the continental level, as the following graph shows, with Asia in particular experiencing a significant improvement (about 28%) almost immediately. Because Tutela’s methodology tests the end-to-end performance of real-world user devices, the measured performance improvement demonstrates a direct, tangible benefit for users.
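For readers who want to reproduce this kind of comparison with their own measurements, the arithmetic is straightforward. The sketch below converts the download time of a 2 MB file into throughput and computes the relative change between two measurements. The download times are hypothetical placeholders, not Tutela data, and the file size is assumed to use decimal megabytes.

```python
def throughput_mbps(size_bytes, seconds):
    """Average throughput in megabits per second for one download."""
    return size_bytes * 8 / seconds / 1_000_000


def percent_improvement(before, after):
    """Relative change from `before` to `after`, as a percentage."""
    return (after - before) / before * 100


FILE_SIZE_BYTES = 2 * 1_000_000  # 2 MB test file, assuming decimal megabytes

# Hypothetical download times in seconds; replace with real measurements.
before = throughput_mbps(FILE_SIZE_BYTES, seconds=2.0)
after = throughput_mbps(FILE_SIZE_BYTES, seconds=1.6)

print(f"before: {before:.2f} Mbps, after: {after:.2f} Mbps")
print(f"improvement: {percent_improvement(before, after):.1f}%")
```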

The change is even clearer when you look at a single regional area. In the US, for example, viewers in mobile networks that had previously experienced slower CloudFront download speeds improved the most with BBR. In the following graph, you can see that average download throughput for CloudFront requests improved substantially, by more than 100%, for two operators.

Our ongoing research and experimentation led to the deployment of this improvement, which is just one of the many ways in which we continue to invest in full-stack optimizations in CloudFront. Our continuing goal is to maximize performance and scale on the infrastructure that we deploy so that we can keep improving the experience for our customers and their users.

Co-authors:

Ted Middleton, Principal Product Manager, Amazon CloudFront

Adam Johnson, Principal Engineer, Amazon CloudFront
