AWS Startups Blog

Caltech’s Pietro Perona on How Deep Learning Can Help You Classify Birds and Trees

Imagine, for a moment, that you discover an irregular mole on yourself. Today you still need to schedule a dermatological appointment and then wait weeks for your test results, but Caltech Professor Pietro Perona is eagerly awaiting the day when you can snap a picture of the mole with your smartphone and learn instantly whether it’s dangerous. And he should know. As the co-creator, with Cornell Tech Professor Serge Belongie, of the AI and machine learning-based visual classification system Visipedia, Perona has spent the past seven years working on a “switchboard” that lets anyone, anywhere, ask questions and immediately obtain an answer.

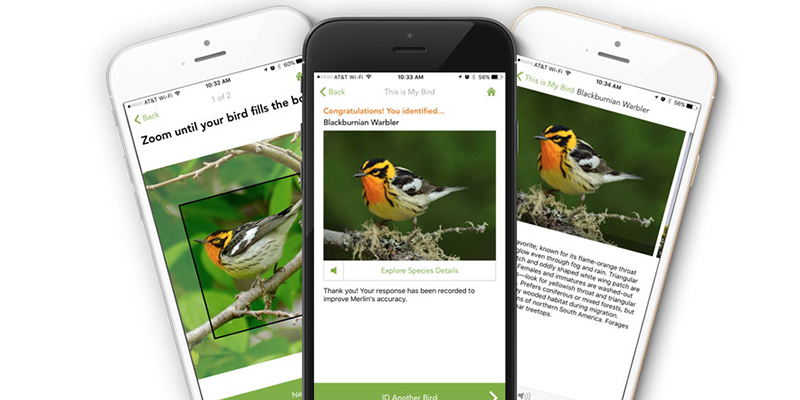

Perona notes that after years of slow progress, he and Belongie were able to make their big breakthrough roughly two to three years ago, thanks to the rapid ascendance of three things: deep learning, more powerful computers, and Internet-enabled annotated data sets. By using all three tools in tandem, Perona and his team were able to harness the power of computer vision technology and launch products like the Merlin Bird Photo ID mobile app. Though currently limited to North American species, the app can quickly recognize hundreds of bird species from a single smartphone snapshot, thanks to a deep network that has “learned” from 475 million observations.

While most of Visipedia’s work has so far centered on classifying birds and trees, Perona envisions the platform as ultimately limitless in scope. When everyone can contribute images and expertise, and communities can come together to train the system in a new domain, learning becomes far more effective and efficient.

For more on the Visipedia project, listen here.

https://soundcloud.com/user-648279757/caltechs-pietro-perona-on-how-ai