AWS Startups Blog

How Backbase Leveraged EC2 Hibernation to Reduce Compute Spending by 30%

Guest post by Yared Ayalew, Product Owner & Acacio Santos, Senior System Engineer, Backbase

Backbase is a leader in digital-first, omni-channel banking and the creator of the Backbase Digital-First Banking Platform, a state-of-the-art digital banking software solution that unifies data and functionality from traditional core systems and new fintech players into a seamless, digital customer experience. Backbase gives financials the speed and flexibility to create and manage seamless customer experiences across any device and deliver measurable business results. Backbase’s digital banking platform is based on a modern microservice architecture, with the option to run it on-premises, on a private or public cloud, or as a service.

Architecture and technology stack

The Backbase platform includes five layers, each a full-blown product in its own right: experience manager, digital banking, identity and entitlement, onboarding and origination, and finally cloud deployment.

- Experience manager provides end-to-end solutions for creating engaging, beautiful experiences for end customers on both web and mobile form factors. This layer consists of backend microservices built using Spring Boot, web widgets based on Angular and TypeScript, and native mobile widgets for both Android and iOS.

- Digital banking is the API-powered backend that complements existing core banking systems. It is a modern microservice architecture based on Spring Boot that forms the foundation for Banking-as-a-Platform capabilities for our customers, and it supports both traditional on-prem (bare metal or VM) and modern cloud-native deployments.

- Identity and entitlement is our unified identity and access management solution, which gives customers, employees, and partners secure access to your banking data. It also provides facilities for native mobile banking applications through APIs.

- Onboarding and origination allows customers to create a streamlined business process automation platform. It can be used to automate and apply business rules for customer onboarding, account opening, and self-service processes while providing direct integration with your backend systems.

- Backbase cloud is our open, standards-based approach to cloud-native deployment of our product. It gives our customers a choice of deployment scenarios: on-prem, private cloud, or public cloud. We use a combination of Ansible and Helm charts, depending on the deployment scenario.

As you can guess from the above description of our platform, we use several technologies and tools to get our job done. When you also factor in the challenge of developing and testing microservices (we have close to 100) for different developer profiles (backend, frontend, and mobile) and supported platforms, things tend to get complicated. To bridge this gap, Backbase uses a home-grown automation solution that deploys all or part of the platform on AWS for development and testing purposes, taking away the complexity. The application is heavily used by our developers and QAs in their day-to-day workflow, and on any regular working day we have more than 500 EC2 instances running in AWS to serve their needs. We built this solution mainly to address the following concerns:

- Easing the process of creating a QA environment that contains only a specific part of the platform. With close to 100 microservices spread over a number of products, and the possibility of deploying them on several combinations of operating systems, application servers, and databases, the process becomes quite complex.

- Supporting the thousands of automated test cases used for validating and verifying the fitness of the product. These tests require specific configurations both at the application level and in the underlying environment.

- Being able to quickly spin up a specific combination of applications (the Backbase platform is composed of several products and each product is composed of multiple capabilities) for demo or exploratory testing purposes.

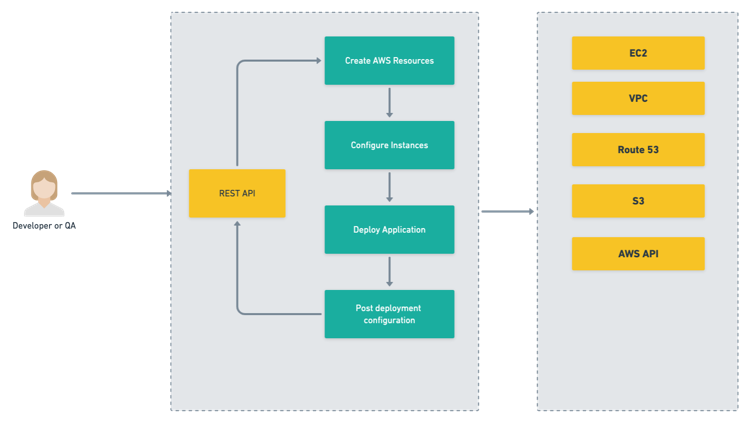

These are just a few of the major pain points that led us to build internal automation to address them. We make extensive use of the AWS cloud, so it was the first choice for the developers of this internal tool, which follows the approach shown in the diagram above.

The tool is used by developers and QAs as follows:

1. A developer/QA uses a web interface to specify which products/capabilities they need, which database to use (MySQL, MSSQL, or Oracle), and which app server to use from the available configurations. The tool also has a REST API so that it can be invoked from pipelines.

2. The service will then use the information provided to:

○ Create the necessary number of EC2 virtual machines using custom AMIs

○ Add the newly created VMs to the internal VPC where they will have access to git and artifact repositories

○ Deploy application components to the instances using Ansible

○ Run post-deployment configurations once the services are up and running

○ Create a Route 53 DNS entry under the internal VPC

3. Manage the lifecycle of the environments: create, stop, restart, delete

4. Reconfigure existing environments by passing additional configuration parameters. For example, updating one of the services in the created environment to a new version.
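The AWS-facing parts of step 2 can be sketched roughly as follows. This is only an illustration, not the actual Backbase tool: the function names, the `environment` tag, and the example IDs are assumptions made for the sketch. The two helpers just build keyword arguments in the shape that boto3's `run_instances` and `change_resource_record_sets` calls accept; the Ansible deployment and post-deployment configuration are out of scope here.

```python
def build_launch_params(env_name, ami_id, instance_type, subnet_id, count):
    """Build keyword arguments for boto3's ec2.run_instances().

    All argument values are supplied by the caller; the tag key
    "environment" is a hypothetical convention for this sketch.
    """
    return {
        "ImageId": ami_id,              # custom AMI for the requested stack
        "InstanceType": instance_type,
        "MinCount": count,              # launch exactly `count` instances
        "MaxCount": count,
        "SubnetId": subnet_id,          # subnet inside the internal VPC
        "TagSpecifications": [
            {
                "ResourceType": "instance",
                "Tags": [{"Key": "environment", "Value": env_name}],
            }
        ],
    }


def build_dns_change(hosted_zone_id, record_name, private_ip, ttl=60):
    """Build keyword arguments for route53.change_resource_record_sets()."""
    return {
        "HostedZoneId": hosted_zone_id,
        "ChangeBatch": {
            "Changes": [
                {
                    # UPSERT creates the record or updates it if it exists
                    "Action": "UPSERT",
                    "ResourceRecordSet": {
                        "Name": record_name,
                        "Type": "A",
                        "TTL": ttl,
                        "ResourceRecords": [{"Value": private_ip}],
                    },
                }
            ]
        },
    }
```

With real clients this would be used along the lines of `ec2.run_instances(**build_launch_params(...))` followed by `route53.change_resource_record_sets(**build_dns_change(...))` once the private IP of each instance is known.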

Challenges

As the company continues to grow and more people work on the product, the need for AWS resources grows with it, which in turn increased our costs significantly. Initially, when a developer created an environment, it would run for 72 hours before it was reclaimed by the tool, which meant it kept running during weekends and outside business hours even when nobody was using it.

Our initial approach to address this problem was to incorporate the following features in the tool:

● We included a custom script in the AMIs we use to create all of our EC2 instances. The script’s main purpose was to track the services we deployed on the EC2 instance and report back to the automation tool if no activity was detected for a period of 30 minutes.

● When the automation tool receives an “idle event” from the script, we use the AWS SDKs to trigger a shutdown of the instance so that we can save on compute cost. We also keep track of all the EC2 instances that make up an environment. The average environment we create with the tool has at least 4-5 EC2 instances. We made sure that all EC2 instances in an environment are reported as idle before we shut them down, to avoid the error case where a service is shut down because of inactivity while other services that depend on it are still actively in use.

● A user is able to bring an environment back up through a web interface provided by the tool.
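The "all instances must be idle" rule described above can be sketched as below. This is a minimal illustration under stated assumptions, not our actual implementation: the class name, method names, and the way idle events arrive are hypothetical. The `ec2_client` argument is expected to behave like a boto3 EC2 client, of which only `stop_instances(InstanceIds=...)` is used.

```python
class EnvironmentIdleTracker:
    """Stop an environment only when every one of its instances is idle."""

    def __init__(self, ec2_client, environments):
        # environments: mapping of environment name -> iterable of instance IDs
        self._ec2 = ec2_client
        self._members = {name: set(ids) for name, ids in environments.items()}
        self._idle = {name: set() for name in environments}

    def on_idle_event(self, env_name, instance_id):
        """Record an idle report; return True if the environment was stopped."""
        if instance_id not in self._members[env_name]:
            raise ValueError(f"{instance_id} is not part of {env_name}")
        self._idle[env_name].add(instance_id)
        if self._idle[env_name] == self._members[env_name]:
            # Every instance has reported idle, so it is safe to stop them all.
            self._ec2.stop_instances(InstanceIds=sorted(self._members[env_name]))
            self._idle[env_name].clear()
            return True
        return False

    def on_activity(self, env_name, instance_id):
        """An instance became active again: it no longer counts as idle."""
        self._idle[env_name].discard(instance_id)
```

Keeping the idle set per environment rather than per instance is what prevents a quiet service from being stopped while its dependents are still in use.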

We faced a handful of challenges with this approach:

- We were able to save money by shutting down the instances, but bringing an environment back was still slow for users because we had to redo a lot of configuration once the services were up again. Some configurations were lost when an instance was restarted.

- We still had to deal with the startup order of the services, which is no fun to manage. The environments often behaved unpredictably, which caused frustration and cost the feature the trust of its users.

- Because it depended on a lot of moving parts, this was by far the most unstable feature of our tool.

When we heard about the native EC2 Hibernation feature, we were very excited to give it a try and see whether it could help us reduce our bill and also improve the developer experience. First, we did a quick proof of concept to validate whether the feature fit our case and found that it solved most of our problems, but we had one big challenge to deal with: most of our AMIs were based on CentOS, and we had to recreate them to take advantage of native hibernation. Following the same approach, we did another proof of concept to validate that our Ansible application configuration scripts work with the Amazon Linux distribution and found no issues there. After that, we used our existing automated process for creating AMIs with Packer to recreate all of our AMIs based on Amazon Linux. Later on, we refactored our tool to hibernate instances instead of shutting them down. Finally, we tuned our idle tracking script to match these changes. In summary, the steps we followed were:

- Recreate all the AMIs we use based on Amazon Linux, which supports hibernation.

- Limit the EC2 instance types that can be used for creating Dev/QA environments to those that support Hibernation. We had reserved instances (RIs) for some types that don’t support Hibernation, so we exchanged them for a type that supports Hibernation and has a similar CPU and memory profile.

- Refactor the algorithms we use to detect whether an EC2 instance hosting the microservices is idle.
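For readers who want to try the same switch, here is a minimal sketch of what hibernating instead of stopping can look like with boto3. The helper names, the default root device name, and the volume size are assumptions for illustration. What the sketch relies on is that hibernation has to be enabled when the instance is launched (`HibernationOptions`), that the root EBS volume must be encrypted and large enough to hold the instance memory, and that `stop_instances` accepts `Hibernate=True`.

```python
def hibernation_launch_overrides(root_device_name="/dev/xvda", volume_size_gib=30):
    """Extra ec2.run_instances() arguments that make an instance hibernatable.

    The device name and size are placeholder defaults; the root volume must
    be encrypted and big enough to store the contents of the instance's RAM.
    """
    return {
        "HibernationOptions": {"Configured": True},
        "BlockDeviceMappings": [
            {
                "DeviceName": root_device_name,
                "Ebs": {"Encrypted": True, "VolumeSize": volume_size_gib},
            }
        ],
    }


def hibernate_environment(ec2_client, instance_ids):
    """Hibernate every instance of an idle environment."""
    return ec2_client.stop_instances(InstanceIds=list(instance_ids), Hibernate=True)


def resume_environment(ec2_client, instance_ids):
    """Resume hibernated instances; memory contents are restored on start."""
    return ec2_client.start_instances(InstanceIds=list(instance_ids))
```

In practice the overrides would be merged into the launch parameters, for example `ec2.run_instances(**launch_params, **hibernation_launch_overrides())`, with `ec2` created via `boto3.client("ec2")`.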

Results

● Cost management: we were able to reduce our monthly cost by 30% by utilizing the EC2 Hibernation feature. Instances are now hibernated as soon as they have been inactive for two hours.

● Provided out of the box by AWS: this means that we can throw away some of the custom implementations we have. Less code = fewer bugs = less stuff to maintain and support!

● Speed: we noticed a considerable difference in the time it takes to bring up hibernated versus stopped instances. It used to take us 5-10 minutes to bring up stopped environments with our custom implementation, while with the built-in hibernation our services are back up in 30 seconds.

● Better developer experience: developers are happy that their test environments are there whenever they need them, with their state retained, and they feel confident that they are not wasting resources when the environments are not in use. In addition, we saw significantly fewer errors when resuming instances than when restarting them, because with hibernation we don’t need all sorts of service start-up logic: the memory of the instance is retained during the process.

● Works as advertised: we were extremely surprised that the hibernation feature “just works.”

Conclusion

Hibernation has helped us optimize our EC2 usage, leading to lower compute costs since we integrated the feature into our internal tooling. It also had a direct positive impact on developer experience: it now takes less than a minute to bring up a group of hibernated instances, while with our initial solution a developer had to wait several minutes before they could start interacting with an environment. In addition, the tooling team is able to iterate on the solution faster, as we no longer need to implement and maintain our previous logic for shutting down and bringing back an instance.

About us

Backbase

We are the creators of the Backbase Digital-First Banking Platform, a state-of-the-art digital banking software solution that unifies data and functionality from traditional core systems and new fintech players into a seamless, digital customer experience. We give financials the speed and flexibility to create and manage seamless customer experiences across any device, and deliver measurable business results. We believe that superior digital experiences are essential to stay relevant, and our software enables financials to rapidly grow their digital business.

Yared Ayalew is a Product Owner in Backbase R&D, CI/CD infrastructure and tooling team, and Acacio Santos is a Senior System Engineer in Backbase R&D, CI/CD infrastructure and tooling team.