AWS News Blog

Category: Compute

AWS Week in Review – July 11, 2022

This post is part of our Week in Review series. Check back each week for a quick roundup of interesting news and announcements from AWS! In France, we know summer has started when you see the Tour de France bike race on TV or in a city nearby. This year, the tour stopped in the […]

New – Amazon EC2 M1 Mac Instances

Last year, during the re:Invent 2021 conference, I wrote a blog post to announce the preview of EC2 M1 Mac instances. I know many of you requested access to the preview, and we did our best but could not satisfy everybody. However, the wait is over. I have the pleasure of announcing the general availability […]

AWS Week in Review – July 4, 2022

This post is part of our Week in Review series. Check back each week for a quick roundup of interesting news and announcements from AWS! Summer has arrived in Finland, and these last few days have been hotter than in the Canary Islands! Today in the US it is Independence Day. I hope that if […]

AWS Week in Review – June 20, 2022

This post is part of our Week in Review series. Check back each week for a quick roundup of interesting news and announcements from AWS! Last Week’s Launches: It’s been a quiet week on the AWS News Blog; however, a glance at the What’s New page shows the various service teams have been busy as usual. […]

AWS Week in Review – June 13, 2022

This post is part of our Week in Review series. Check back each week for a quick roundup of interesting news and announcements from AWS! Last Week’s Launches: I made a short trip to Austin, Texas, last week to visit and learn from some customers. As is always the case, the days when […]

New – Amazon EC2 R6id Instances with NVMe Local Instance Storage of up to 7.6 TB

In November 2021, we launched the memory-optimized Amazon EC2 R6i instances, our sixth-generation x86-based offering powered by 3rd Generation Intel Xeon Scalable processors (code named Ice Lake). Today I am excited to announce a disk variant of the R6i instance: the Amazon EC2 R6id instances with non-volatile memory express (NVMe) SSD local instance storage. The […]

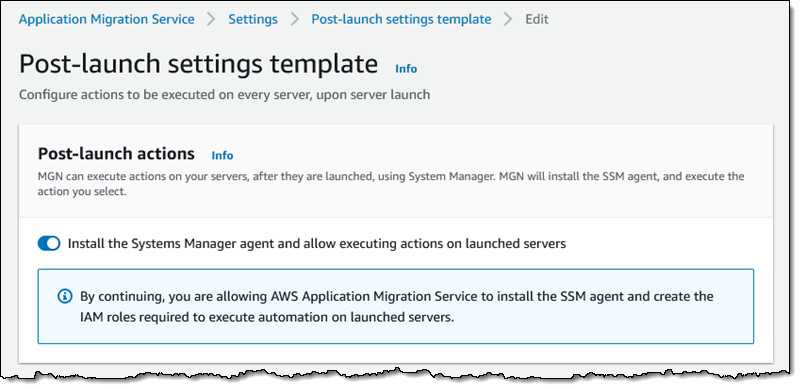

AWS MGN Update – Configure DR, Convert CentOS Linux to Rocky Linux, and Convert SUSE Linux Subscription

Just about a year ago, Channy showed you How to Use the New AWS Application Migration Service for Lift-and-Shift Migrations. In his post, he introduced AWS Application Migration Service (AWS MGN) and said: With AWS MGN, you can minimize time-intensive, error-prone manual processes by automatically replicating entire servers and converting your source servers from physical, […]

AWS Week in Review – May 30, 2022

Today, the US observes Memorial Day. South Korea also has a national Memorial Day, celebrated next week on June 6. In both countries, the day is set aside to remember those who sacrificed in service to their country. This time provides an opportunity to recognize and show our appreciation for the armed services and the important […]