AWS Big Data Blog

Continuous monitoring with Sumo Logic using Amazon Kinesis Data Firehose HTTP endpoints

February 9, 2024: Amazon Kinesis Data Firehose has been renamed to Amazon Data Firehose. Read the AWS What’s New post to learn more.

Amazon Kinesis Data Firehose streams data to AWS destinations such as Amazon Simple Storage Service (Amazon S3), Amazon Redshift, and Amazon OpenSearch Service. Kinesis Data Firehose also supports third-party partner destinations. This ability is vital for customers who already use these AWS Partner platforms, especially partners that specialize in continuous monitoring and intelligence. One such destination is Sumo Logic, a cloud machine data analytics company and AWS Advanced Technology Partner with competencies in containers, data and analytics, DevOps, and security, to which Kinesis Data Firehose can send logs and metrics. Sumo Logic gives organizations better real-time visibility into their IT infrastructure through integrations across cloud and on-premises services, which makes it simple to aggregate data and provides a full view of your operational, business, and security analytics.

In this post, we go over the Kinesis Data Firehose and Sumo Logic integration and how you can use it to stream logs and metrics from Amazon CloudWatch and other AWS services to Sumo Logic. We also cover the mechanism Sumo Logic uses to consume logs and its benefits, with CloudWatch and AWS Lambda as an example use case.

With this integration, logs and metrics that AWS services publish to CloudWatch have a dedicated and reliable delivery mechanism to Sumo Logic through Kinesis Data Firehose, along with automatic retries, performant and less intrusive log collection, and lower cost. Let’s look at these benefits in detail:

- Reliable delivery – This streaming solution doesn’t consume your AWS quota by making calls to the CloudWatch APIs, which avoids data loss caused by API throttling events.

- Automatic retry capabilities – Kinesis Data Firehose has an automatic retry mechanism and routes all failed logs and metrics to a customer-owned S3 bucket for later recovery.

- Performant and less intrusive log collection – The solution eliminates the need for separate log forwarders such as Lambda functions. Such forwarders require Sumo Logic to run proprietary code in customer AWS accounts, and they are constrained by timeout, concurrency, and memory limits.

- Cost benefits – This integration also removes overhead that adds cost when no managed, low-code ingestion pipeline is available, such as manually managing API quotas and relying on ingestion mechanisms like polling.

Solution overview

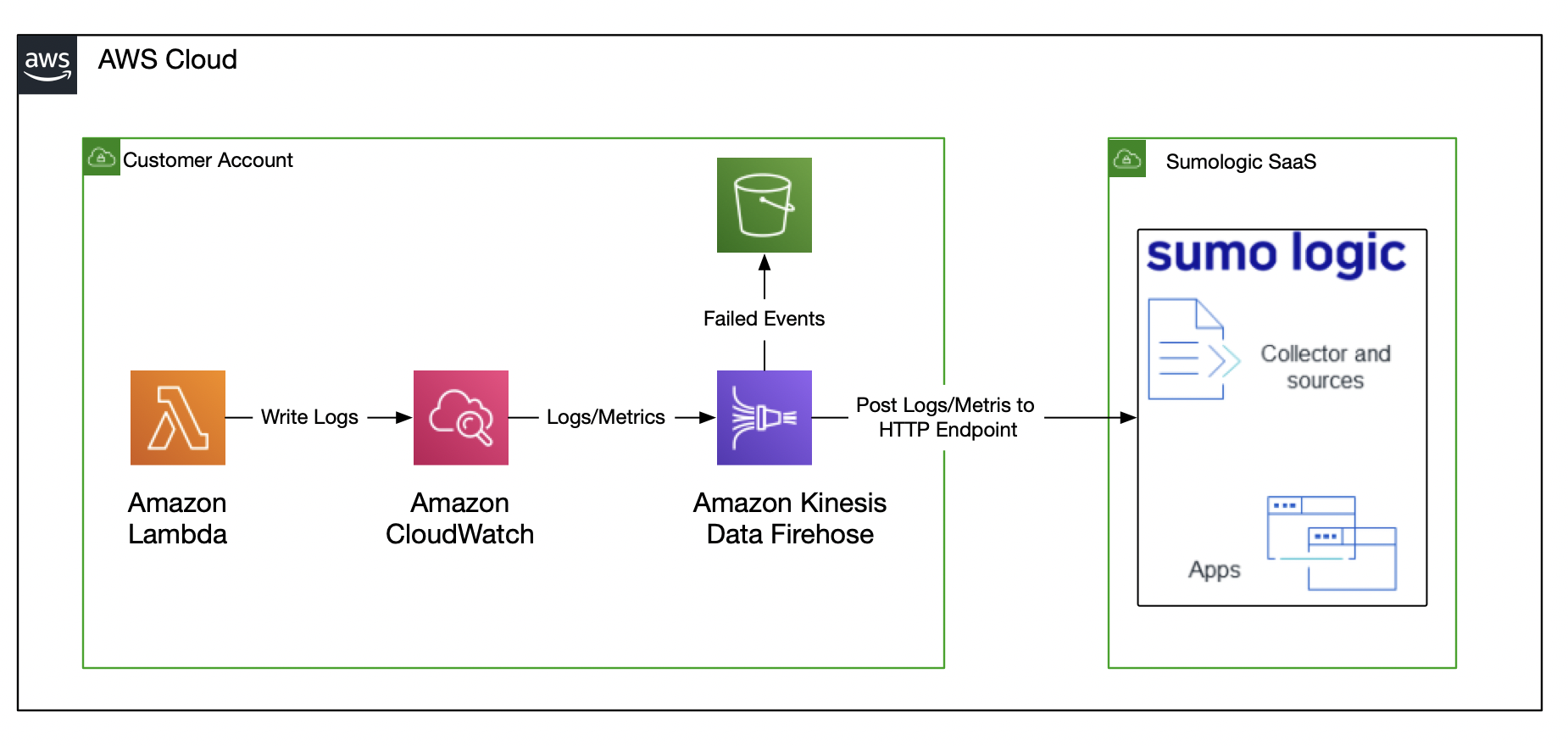

To illustrate this integration, a sample Lambda function vends metrics and logs to CloudWatch, and are consumed by Sumo Logic through this integration. The following diagram illustrates consumption of CloudWatch logs and metrics for a Lambda function through a Kinesis Data Firehose HTTP endpoint for Sumo Logic.

In the subsequent sections, we show you the Sumo Logic hosted collector and Kinesis Data Firehose setup processes that convert the architecture into meaningful insights.

Prerequisites

Make sure that you have a Lambda function with logs and metrics forwarded to CloudWatch. To get started, use the sample Hello World application.
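For readers without an existing function, the prerequisite can be as small as the sketch below. It assumes a Python runtime; the handler name `lambda_handler` and the response body are illustrative choices and are not taken from the AWS sample application.

```python
import json
import logging

# In Lambda, records written through the root logger are delivered to the
# function's CloudWatch log group.
logger = logging.getLogger()
logger.setLevel(logging.INFO)


def lambda_handler(event, context):
    """Log the incoming event and return a fixed greeting (illustrative only)."""
    logger.info("received event: %s", json.dumps(event))
    return {
        "statusCode": 200,
        "body": json.dumps({"message": "hello world"}),
    }
```

Each invocation writes one log line, which is enough to confirm later that data is flowing end to end.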

Configure CloudWatch logs and metrics to be forwarded to Kinesis Data Firehose. You can use the latest CloudWatch features such as subscription filters here as well.
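For logs, the forwarding step can be scripted with a CloudWatch Logs subscription filter. The following is a minimal sketch, assuming boto3 is installed and credentials are configured. The filter name and the helper function names are hypothetical, and the IAM role that lets CloudWatch Logs write to the delivery stream must already exist.

```python
def build_subscription_filter_args(log_group, firehose_arn, role_arn,
                                   filter_name="sumo-logic-firehose",
                                   filter_pattern=""):
    """Return keyword arguments for the CloudWatch Logs put_subscription_filter call."""
    return {
        "logGroupName": log_group,
        "filterName": filter_name,        # hypothetical default name
        "filterPattern": filter_pattern,  # an empty pattern matches every log event
        "destinationArn": firehose_arn,   # ARN of the Firehose delivery stream
        "roleArn": role_arn,              # role CloudWatch Logs assumes to write to Firehose
    }


def apply_subscription_filter(args):
    """Send the request to AWS; requires boto3 and valid credentials."""
    import boto3  # imported lazily so the builder above works without boto3
    return boto3.client("logs").put_subscription_filter(**args)
```

Keeping the argument builder separate from the API call makes it possible to review or unit test the request before anything is changed in the account.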

Also sign up for a trial version of Sumo Logic, if you don’t have an account already.

Set up the integration on Sumo Logic

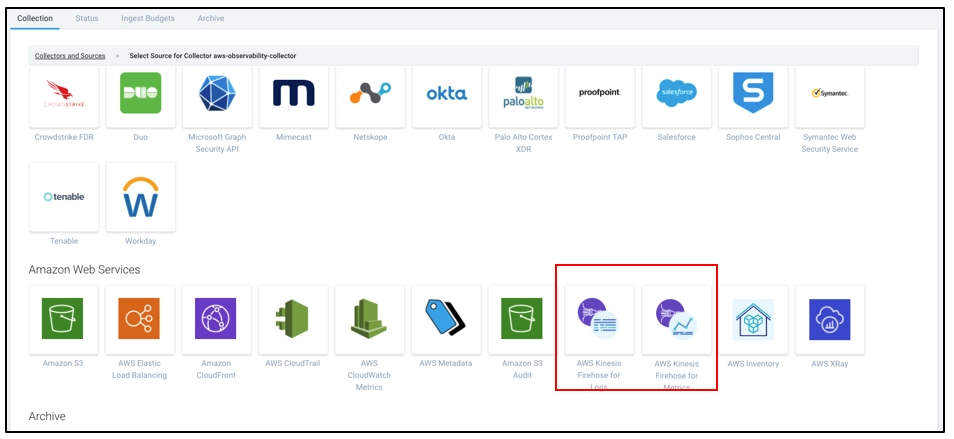

Complete the following instructions to set up a hosted collector on Sumo Logic for Kinesis Data Firehose metrics or logs:

- After logging in to Sumo Logic, choose Manage Data.

- On the Collection menu, choose Add source.

- For the collector selection source, choose Collector-Name.

- Choose AWS Kinesis Firehose for Logs or AWS Kinesis Firehose for Metrics.

- Provide a collector name and source category required for querying later.

- You also need a cross-account role (Sumo Logic provides an AWS CloudFormation template to create the role).

- Save your settings.

After you save, an HTTP source address is generated.

Sumo Logic provides different endpoints for logs and metrics, for example:

- Logs – https://endpoint4.collection.us2.sumologic.com/receiver/v1/kinesis/log/<tokenid>

- Metrics – https://endpoint4.collection.us2.sumologic.com/receiver/v1/kinesis/metric/<tokenid>

Note down the endpoints to use in the following section.
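Because the two endpoints differ only in one path segment, it is easy to paste the wrong one into a delivery stream. The helper below is a small sketch that tells them apart. The function name is hypothetical, and the expected path shape (`receiver/v1/kinesis/<kind>/<token>`) is inferred only from the two example URLs above, so other Sumo Logic deployments may differ.

```python
from urllib.parse import urlparse


def sumo_source_kind(endpoint_url):
    """Return 'log' or 'metric' based on the path of a Sumo Logic Firehose source URL."""
    parts = urlparse(endpoint_url).path.strip("/").split("/")
    # Path shape assumed from the examples: receiver/v1/kinesis/<kind>/<token>
    if len(parts) != 5 or parts[:3] != ["receiver", "v1", "kinesis"]:
        raise ValueError(f"unexpected endpoint path: {endpoint_url}")
    kind = parts[3]
    if kind not in ("log", "metric"):
        raise ValueError(f"unknown source kind: {kind}")
    return kind
```

A check like this can run before a delivery stream is created, so that a logs stream is never pointed at a metrics source or the reverse.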

Set up Kinesis Data Firehose

To create a delivery stream on Kinesis Data Firehose, complete the following steps:

- On the Kinesis Data Firehose console, choose Create delivery stream.

- For Delivery stream name, enter a name.

- For Source, select Direct PUT of other sources.

- For Destination, choose Sumo Logic.

- For HTTP endpoint URL, enter the endpoint URL of the source from Sumo Logic (logs or metrics) that you noted earlier.

- In the Backup settings section, select Failed data only.

- Keep the rest of the settings at their default values and choose Next.

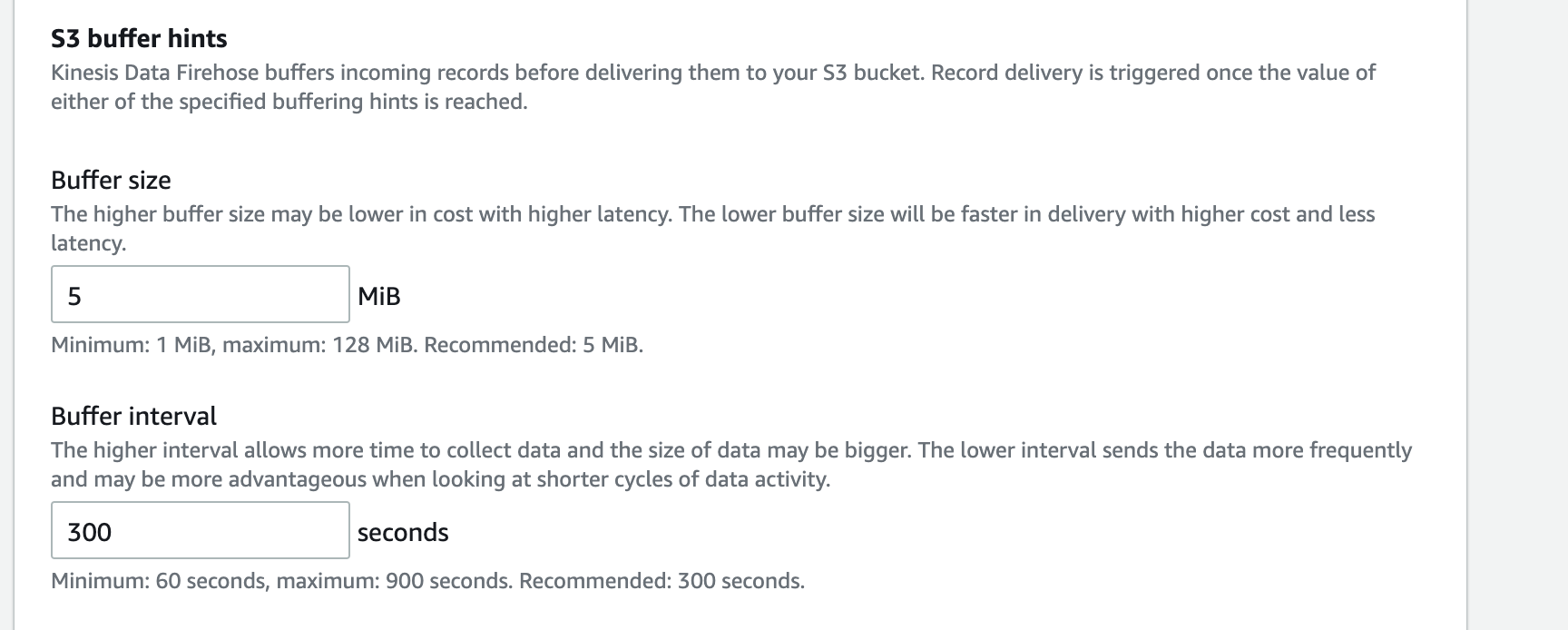

- For Sumo Logic Buffer conditions, enter a buffer size of 5 MiB for logs or 1 MiB for metrics.

Configuring the preceding values by type (logs or metrics) is required to achieve optimum latency.

- Leave the rest of the settings at their default values and choose Next.

- Review your settings and choose Create delivery stream.
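The console steps above can be approximated in code. The following sketch builds the request for the Firehose `create_delivery_stream` API, assuming boto3 and an existing IAM role and S3 bucket for failed records. The helper names, the endpoint display name, and the reuse of one role for both delivery and backup are assumptions, and the result may not match every default that the console applies when you choose Sumo Logic as the destination.

```python
# Buffer sizes from the steps above: 5 MiB for logs, 1 MiB for metrics.
BUFFER_MIB = {"log": 5, "metric": 1}


def build_delivery_stream_args(name, kind, endpoint_url, role_arn, backup_bucket_arn):
    """Return keyword arguments for the Firehose create_delivery_stream call."""
    if kind not in BUFFER_MIB:
        raise ValueError(f"kind must be 'log' or 'metric', got {kind!r}")
    return {
        "DeliveryStreamName": name,
        "DeliveryStreamType": "DirectPut",  # matches the Direct PUT source option
        "HttpEndpointDestinationConfiguration": {
            "EndpointConfiguration": {
                "Url": endpoint_url,   # HTTP source address generated by Sumo Logic
                "Name": "Sumo Logic",  # display name only (assumed value)
            },
            "BufferingHints": {"SizeInMBs": BUFFER_MIB[kind]},
            "RoleARN": role_arn,
            "S3BackupMode": "FailedDataOnly",  # back up only records that fail delivery
            "S3Configuration": {
                "RoleARN": role_arn,  # assumption: same role used for the backup bucket
                "BucketARN": backup_bucket_arn,
            },
        },
    }


def create_delivery_stream(args):
    """Send the request to AWS; requires boto3 and valid credentials."""
    import boto3  # imported lazily so the builder above works without boto3
    return boto3.client("firehose").create_delivery_stream(**args)
```

Creating one stream per source type keeps the buffer size aligned with the values recommended for logs and metrics.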

Logs and metrics sent to your delivery stream are now available in Sumo Logic. The following screenshot illustrates a Sumo Logic dashboard for Lambda, which comes out of the box and can be used for observability, analysis, and alerting.

Conclusion

With Kinesis Data Firehose HTTP endpoint delivery, AWS service logs and metrics are available for analysis quickly, so you can identify issues and challenges within your applications. Sumo Logic allows you to gain intelligence across your entire application lifecycle and stack along with analytics and insights to build, operate, and secure your modern applications and cloud infrastructure. As a fully managed service, Kinesis Data Firehose coupled with Sumo Logic compression techniques provides seamless access to logs and metrics.

About the Authors

Dilip Rajan is a Partner Solutions Architect at AWS. His role is to help partners and customers design and build solutions at scale on AWS. Before AWS, he helped Amazon Fulfillment Operations migrate their Oracle Data Warehouse to Redshift while designing the next generation big data analytics platform using AWS technologies.

Vinay Maddi is a Senior Solutions Architect for AWS, and passionate about helping customers build modern event driven applications using latest AWS services. An experienced developer, he loves keeping up to date with the latest open-source projects. His main topics are serverless, IoT, AI/ML and blockchain.
