
How to automatically subscribe to Amazon CloudWatch Logs groups

In this blog post, we discuss a way of discovering new log groups and adding them as triggers to existing Lambda functions. By adopting a continuous integration and continuous delivery (CI/CD) pipeline, you can deploy applications without manual intervention, which reduces the time to market for new applications and features for existing applications.

Many applications require a centralized, automated operation for logging and monitoring, and every application that runs on AWS generates logs for debugging purposes. The logs are maintained within different log groups inside Amazon CloudWatch. Log groups are generated for AWS applications and services when logging and monitoring are enabled, but log groups can also be created manually. The logs from Amazon CloudWatch Logs groups can be collected and used for analytics use cases that generate insights about event and system details. Collecting and aggregating logs is also required for compliance, which, together with analytics, is a basis for implementing the AWS Well-Architected Framework.

Amazon CloudWatch provides a mechanism to subscribe to logs and export them to other services, such as Amazon Kinesis Data Firehose, Amazon Kinesis Data Streams, AWS Lambda, and Amazon Simple Storage Service (Amazon S3). The logs are grouped within Amazon CloudWatch Logs groups. Through the AWS Management Console or AWS Command Line Interface (AWS CLI), you can enable subscriptions to get access to a real-time feed of log events from Amazon CloudWatch Logs. You can then deliver the feed to other services for analysis or for loading into other systems.

You can subscribe only after log groups are created. This requires manual steps to subscribe to new log groups through the Amazon CloudWatch console or AWS CLI. But if you are onboarding applications that use automatic scaling to generate new log groups, manually subscribing is either a repetitive task or requires an AWS CLI script.

In scenarios where huge numbers of log groups are generated during deployment, it can be difficult to manually enable subscriptions on them. Also, in a completely automated flow of operation, you may want to avoid manual intervention to enable subscriptions. Hence, an automation that is initiated by the creation of a new log group can subscribe that log group and deliver its logs to Lambda.

AWS Lambda is widely used for logging architectures in order to ingest, parse, and store Amazon CloudWatch Logs. The logs are stored in document-oriented storage, such as AWS managed Amazon OpenSearch Service (successor to Amazon Elasticsearch Service).

| About this blog post | |
| --- | --- |
| Time to read | ~10 min. |
| Time to complete | ~30 min. |
| Cost to complete | $0 |
| Learning level | Advanced (300) |
| AWS services | AWS Lambda, Amazon CloudWatch |

Overview

The following steps automate the subscription of newly created log groups in Amazon CloudWatch:

- Capturing events when new log groups are created.

- Processing event information through an AWS service (AWS Lambda in this case) by using a library that can interact with AWS services (the Python Boto3 library in this case; Java and other language APIs and libraries can also be used).

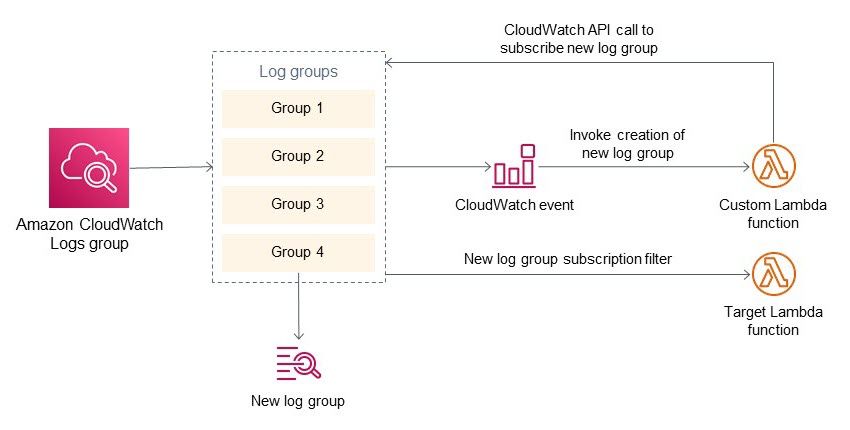

Figure 1 shows the architecture and flow of the automation.

Figure 1. Architecture diagram for automatically subscribing to Amazon CloudWatch Logs groups

- New applications and services create a new log group that Amazon CloudWatch registers to its repository.

- A custom CloudWatch event rule invokes a Lambda function when a new log group is created.

- The first Lambda function contains Python code that captures the name of the new log group from the event. This first Lambda function also has details about the target Lambda function (the one that reads log messages) and custom filters. The custom filters can be used to restrict log group subscriptions (in case you want only certain log groups to be subscribed).

- This first Lambda function calls the Amazon CloudWatch API to create a subscription filter for the log group. The target of this subscription filter is a second Lambda function.

- On completion of these steps, subscriptions are enabled on a newly created Amazon CloudWatch Logs group. The subscription points to a second Lambda (target Lambda in the diagram). This second Lambda function can have custom code to read and process Amazon CloudWatch Logs messages.

Prerequisites

This walkthrough requires the following:

- An AWS account. If you don’t have an account, sign up at https://aws.amazon.com.

- A basic understanding of Python.

Walkthrough

Amazon CloudWatch Logs groups

Amazon CloudWatch can collect, access, and correlate logs on a single platform from across all of your AWS resources, applications, and services that run on AWS and on-premises servers. This can help you break down data silos so you can gain system-wide visibility and resolve issues.

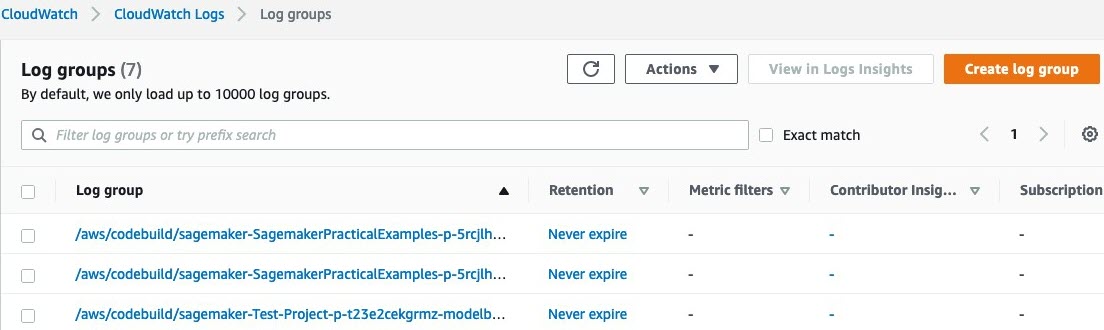

Logs are stored in multiple log groups, which define groups of log streams that share the same retention, monitoring, and access-control settings. Each log stream must belong to one log group.

Figure 2. Amazon CloudWatch event rule

Amazon CloudWatch event rules

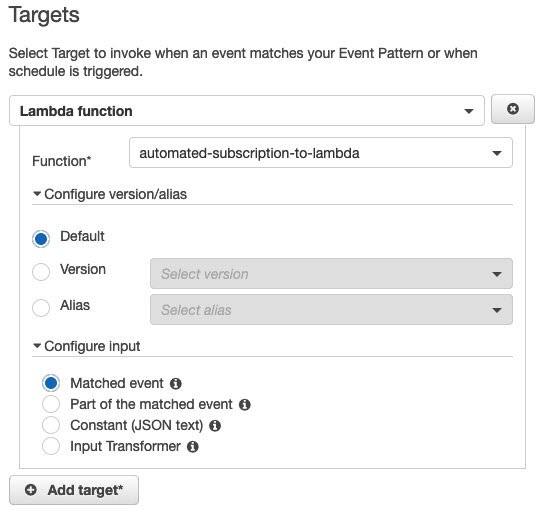

Whenever a new log group is created, an event is initiated that can be used in an Amazon CloudWatch event rule to invoke a Lambda function. This Lambda function has custom code that subscribes the new log group to the target Lambda function.

The event message that is sent to Lambda contains the name and associated metadata of the new log group.
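As a minimal sketch of what such a rule could match and what the event carries: log-group creation surfaces as a `CreateLogGroup` API call recorded by AWS CloudTrail, so the pattern below assumes that CloudTrail is enabled in the account. The function names and the trimmed sample event are illustrative and are not taken from the original post.

```python
import json


def build_event_pattern():
    """Return an event pattern that matches CreateLogGroup API calls.

    Assumption: the calls reach the rule through AWS CloudTrail.
    """
    return {
        "source": ["aws.logs"],
        "detail-type": ["AWS API Call via CloudTrail"],
        "detail": {
            "eventSource": ["logs.amazonaws.com"],
            "eventName": ["CreateLogGroup"],
        },
    }


def extract_log_group_name(event):
    """Read the new log group's name from the nested event message."""
    return event["detail"]["requestParameters"]["logGroupName"]


# Trimmed, hypothetical sample of the event that the rule delivers to Lambda.
SAMPLE_EVENT = {
    "source": "aws.logs",
    "detail-type": "AWS API Call via CloudTrail",
    "detail": {
        "eventSource": "logs.amazonaws.com",
        "eventName": "CreateLogGroup",
        "requestParameters": {"logGroupName": "/aws/codebuild/demo-project"},
    },
}

if __name__ == "__main__":
    print(json.dumps(build_event_pattern(), indent=2))
    print(extract_log_group_name(SAMPLE_EVENT))
```

The JSON printed by `build_event_pattern()` can be pasted into the rule's custom event pattern field.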

When a new log group is created, the previously defined CloudWatch event rule initiates an AWS Lambda function. The following Lambda function reads the newly created log-group name and associated metadata. This information is used in the custom code of Lambda, and, through Boto3 libraries, we subscribe new log groups to a target Amazon Resource Name (ARN). The target Lambda can read, transform, and write log messages to storage.

Figure 4. Amazon CloudWatch event rule targets

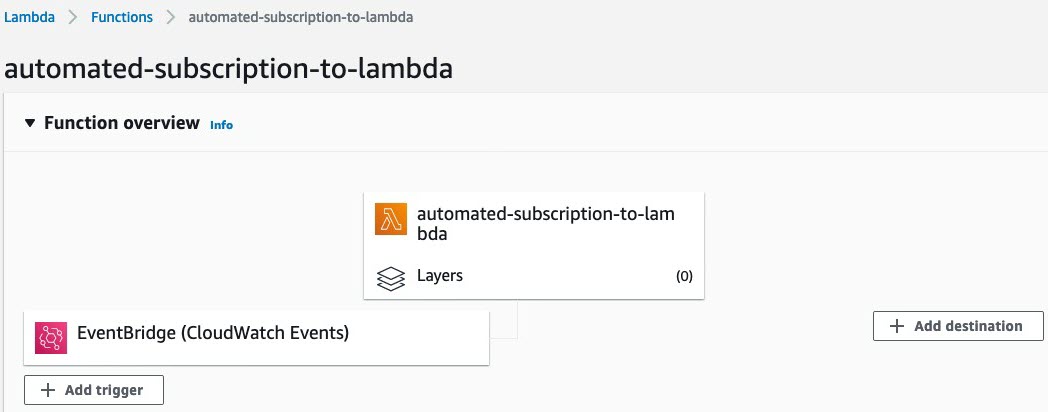

Figure 5. Adding a trigger to AWS Lambda

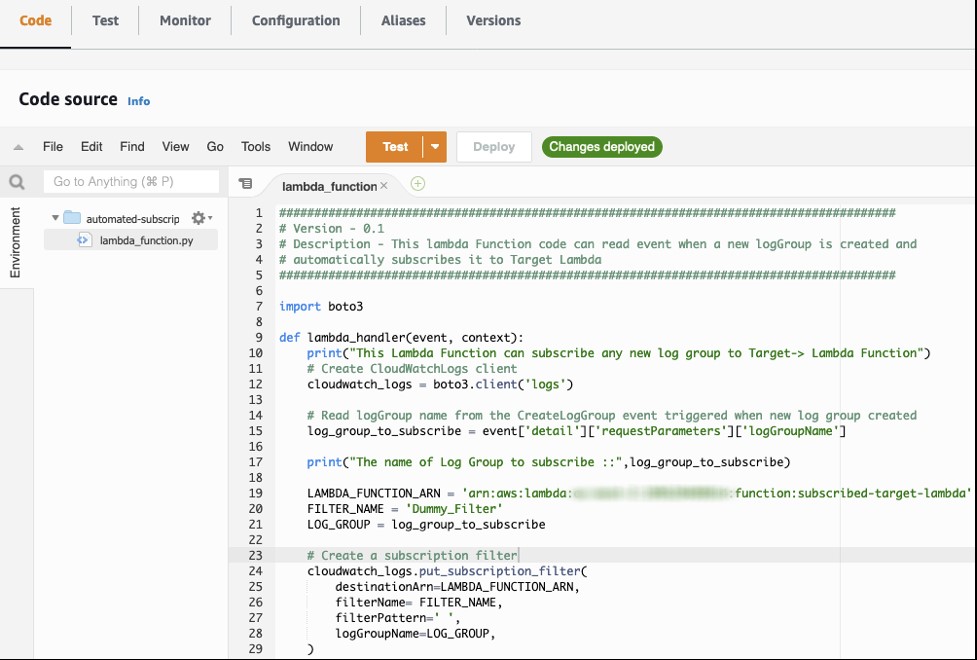

Figure 6. Lambda function code to automate the subscription of a new log group

The Lambda function code reads the log group name from the event message, which is in nested JSON format. Additional configurations, such as filter patterns, can be defined to pass only some messages (for example, messages from specific log streams or error messages) to the target Lambda function. The Python library uses the `put_subscription_filter` function with a log group name and target Lambda ARN (and additional filter parameters) to subscribe a new log group to the target Lambda function.
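The logic described above could look roughly like the following. This is a sketch under stated assumptions, not the function from the original post: the environment variable `TARGET_LAMBDA_ARN`, the filter name `auto-subscription`, and the helper name `subscribe_log_group` are hypothetical, and the event is assumed to be a CloudTrail `CreateLogGroup` event.

```python
import os


def subscribe_log_group(logs_client, log_group_name, destination_arn, filter_pattern=""):
    """Create a subscription filter that sends the log group's events to the target."""
    logs_client.put_subscription_filter(
        logGroupName=log_group_name,
        filterName="auto-subscription",  # hypothetical filter name
        filterPattern=filter_pattern,    # an empty pattern forwards every log event
        destinationArn=destination_arn,
    )
    return log_group_name


def lambda_handler(event, context):
    # Imported here so the helper above can be exercised without Boto3 installed;
    # Boto3 is available in the Lambda Python runtime.
    import boto3

    # Assumption: the rule forwards a CloudTrail CreateLogGroup event.
    log_group_name = event["detail"]["requestParameters"]["logGroupName"]
    # Hypothetical environment variable that holds the target Lambda function's ARN.
    destination_arn = os.environ["TARGET_LAMBDA_ARN"]

    subscribed = subscribe_log_group(boto3.client("logs"), log_group_name, destination_arn)
    return {"subscribed": subscribed}
```

Passing the client into `subscribe_log_group` keeps the AWS call in one place and makes the function easy to exercise with a stub client.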

In addition to the preceding configuration, add a permission to the target Lambda function that allows Amazon CloudWatch Logs to invoke it.
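One way to grant that permission is the Boto3 `add_permission` operation of the Lambda client, run once as a setup script and kept separate from the function code. The sketch below is an assumption about how this could be done; the statement ID and the helper names are hypothetical, and the Region and account ID are placeholders you supply.

```python
def build_invoke_permission(function_name, region, account_id, log_group_pattern="*"):
    """Build add_permission arguments that let CloudWatch Logs invoke the target."""
    return {
        "FunctionName": function_name,
        "StatementId": "allow-cloudwatch-logs-invoke",  # hypothetical statement ID
        "Action": "lambda:InvokeFunction",
        "Principal": "logs.amazonaws.com",
        # Restrict the source to log groups whose names match the pattern.
        "SourceArn": f"arn:aws:logs:{region}:{account_id}:log-group:{log_group_pattern}:*",
        "SourceAccount": account_id,
    }


def grant_invoke_permission(lambda_client, function_name, region, account_id,
                            log_group_pattern="*"):
    """Attach the resource-based permission to the target Lambda function."""
    return lambda_client.add_permission(
        **build_invoke_permission(function_name, region, account_id, log_group_pattern)
    )


# Example call (requires Boto3 and AWS credentials):
#   import boto3
#   grant_invoke_permission(boto3.client("lambda"), "target-function",
#                           "us-east-1", "111122223333")
```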

The Python code in the Lambda function can use libraries to add the Lambda permission for Amazon CloudWatch Logs, but we recommend keeping the application code and the AWS Identity and Access Management (IAM) permissions as separate and independent modules. Also, in the IAM permission command, you can restrict the permission to specific log groups. For example, where the source ARN contains log-group:*:*, you can provide a matching pattern in place of the first *. Because the resource-based policy has a limit of 20 kilobytes, it is recommended to use patterns rather than individual log-group names.

The Lambda function code subscribes all newly created log groups, but you can also filter on the log-group name or on the log content. We can use multiple approaches:

- To match the newly created log-group name, add a string or pattern filter to the Lambda Python code. For example, if you want to subscribe to only AWS CodeBuild logs, add a filter and match on `/aws/codebuild/*`. Also, you can match the log-group name from a collection of strings (for example, a list or array).

- If you want the log group to send only specific log patterns from a log group, add patterns to the argument `filterPattern` inside the `put_subscription_filter()` operation.
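Both approaches can be sketched in a few lines. The pattern list, the function name `should_subscribe`, and the `ERROR` term below are illustrative assumptions; the name match uses shell-style wildcards from the Python standard library.

```python
from fnmatch import fnmatchcase

# Hypothetical allow-list of log-group name patterns.
ALLOWED_PATTERNS = ["/aws/codebuild/*", "/aws/lambda/orders-*"]

# Hypothetical filterPattern value: forward only log events that contain this term.
ERROR_ONLY_PATTERN = "ERROR"


def should_subscribe(log_group_name, patterns=ALLOWED_PATTERNS):
    """Return True when the log-group name matches any allowed pattern."""
    return any(fnmatchcase(log_group_name, pattern) for pattern in patterns)
```

In the subscribing Lambda function, you could return early when `should_subscribe()` is false and pass `ERROR_ONLY_PATTERN` as the `filterPattern` argument when only error messages should reach the target.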

Cleanup

To avoid incurring future charges, complete the following steps to delete the resources created by the process:

- Navigate to the AWS Lambda console.

- Navigate to Functions.

- Delete the following Lambda function: automated-subscription-to-lambda.

- Navigate to the Amazon CloudWatch console.

- Navigate to Events > Rules.

- Delete the Amazon CloudWatch rule.
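If you prefer to script the cleanup, the same steps could be sketched with Boto3 as follows. The helper name is hypothetical, and the rule name is a placeholder because this post does not name the rule. A rule's targets must be removed before the rule itself can be deleted, and this sketch assumes the rule has few enough targets to be returned in a single response.

```python
def delete_rule_and_function(events_client, lambda_client, rule_name, function_name):
    """Remove the rule's targets, delete the rule, then delete the Lambda function."""
    targets = events_client.list_targets_by_rule(Rule=rule_name)["Targets"]
    if targets:
        events_client.remove_targets(
            Rule=rule_name, Ids=[target["Id"] for target in targets]
        )
    events_client.delete_rule(Name=rule_name)
    lambda_client.delete_function(FunctionName=function_name)


# Example call (requires Boto3 and AWS credentials; the rule name is a placeholder):
#   import boto3
#   delete_rule_and_function(boto3.client("events"), boto3.client("lambda"),
#                            "my-log-group-rule", "automated-subscription-to-lambda")
```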

Extending the example

Apart from the Lambda functions that we covered in this blog post, the same log group auto-subscribe function can be applied to other AWS services. Kinesis Data Firehose and Kinesis Data Streams can be used for autosubscription by setting the `destinationArn` parameter of the Boto3 `put_subscription_filter` operation. For example, when Amazon CloudWatch generates a new log group, its logs can be ingested into Kinesis Data Streams or Kinesis Data Firehose.

Currently, the following destinations are supported for automatic subscription:

- A Kinesis data stream that belongs to the same account as the subscription filter, for same-account delivery.

- A logical destination (specified using an ARN) that belongs to a different account, for cross-account delivery. If you set up a cross-account subscription, the destination must have an IAM policy associated with it that allows the sender to send logs to the destination. For more information, see PutDestinationPolicy.

- A Kinesis Data Firehose delivery stream that belongs to the same account as the subscription filter, for same-account delivery.

- An AWS Lambda function that belongs to the same account as the subscription filter for same-account delivery.
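To illustrate how the call differs by destination, here is a small sketch that builds the `put_subscription_filter` arguments. For Kinesis Data Streams and Kinesis Data Firehose destinations, CloudWatch Logs also needs a `roleArn` for an IAM role that allows it to write to the stream; Lambda destinations do not take one. The helper name, filter name, and ARNs are hypothetical.

```python
def build_subscription_args(log_group_name, destination_arn, role_arn=None,
                            filter_pattern=""):
    """Build keyword arguments for the Boto3 put_subscription_filter operation."""
    args = {
        "logGroupName": log_group_name,
        "filterName": "auto-subscription",  # hypothetical filter name
        "filterPattern": filter_pattern,
        "destinationArn": destination_arn,
    }
    if role_arn is not None:
        # Needed for Kinesis destinations; omitted for Lambda destinations.
        args["roleArn"] = role_arn
    return args


# Example call (requires Boto3 and AWS credentials; ARNs are placeholders):
#   import boto3
#   boto3.client("logs").put_subscription_filter(**build_subscription_args(
#       "/aws/codebuild/demo",
#       "arn:aws:kinesis:us-east-1:111122223333:stream/log-stream",
#       role_arn="arn:aws:iam::111122223333:role/CWLtoKinesisRole",
#   ))
```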

Conclusion

In this blog post, we showed you how to use an Amazon CloudWatch event rule and AWS Lambda to automatically subscribe new log groups in Amazon CloudWatch Logs to a target Lambda function.

The operation invokes an existing Lambda function for new log groups so that you can monitor all of your deployed applications on AWS. This will help you to automatically correlate, visualize, and analyze logs, so you can act quickly to resolve issues, speed up troubleshooting and debugging, and reduce overall mean-time-to-resolution (MTTR).

If you want to dig deeper into Lambda functions, see What is AWS Lambda? To extend the Lambda function to interact with multiple AWS services, see the Boto3 documentation.

Use the comments section to let us know your thoughts and how you use this feature.