AWS Compute Blog

Previewing environments using containerized AWS Lambda functions

This post is written by John Ritsema (Principal Solutions Architect)

Continuous integration and continuous delivery (CI/CD) pipelines are effective mechanisms that allow teams to turn source code into running applications. When a developer makes a code change and pushes it to a remote repository, a pipeline with a series of steps can process the change. A pipeline integrates a change with the full code base, verifies the style and formatting, runs security checks, and runs unit tests. As the final step, it builds the code into an artifact that is deployable to an environment for consumption.

When using GitHub or many other hosted Git providers, a pull request or merge request can be submitted for a particular code change. This creates a focused place for discussion and collaboration on the change before it is approved and merged into a shared code branch.

A powerful mechanism for collaboration involves deploying a pull request (PR) to a running environment. This allows stakeholders to preview the changes live and see how they would look. Spinning up a running environment quickly allows teammates to provide almost immediate feedback, expediting the entire development process.

Deploying PRs to ephemeral environments encourages teams to make many small changes that can be previewed and tested in parallel. This avoids having to first merge into a common source branch and deploy to long-lived environments that are always on and incur costs.

Creating this mechanism poses several challenges, including setup complexity, environment creation time, and environment cost. This post addresses these challenges by showing how to create a CI/CD pipeline for previewing changes to web applications in ephemeral, quick-to-provision, low-cost, and scale-to-zero environments. It then walks through the steps required to set up a sample application.

Example architecture

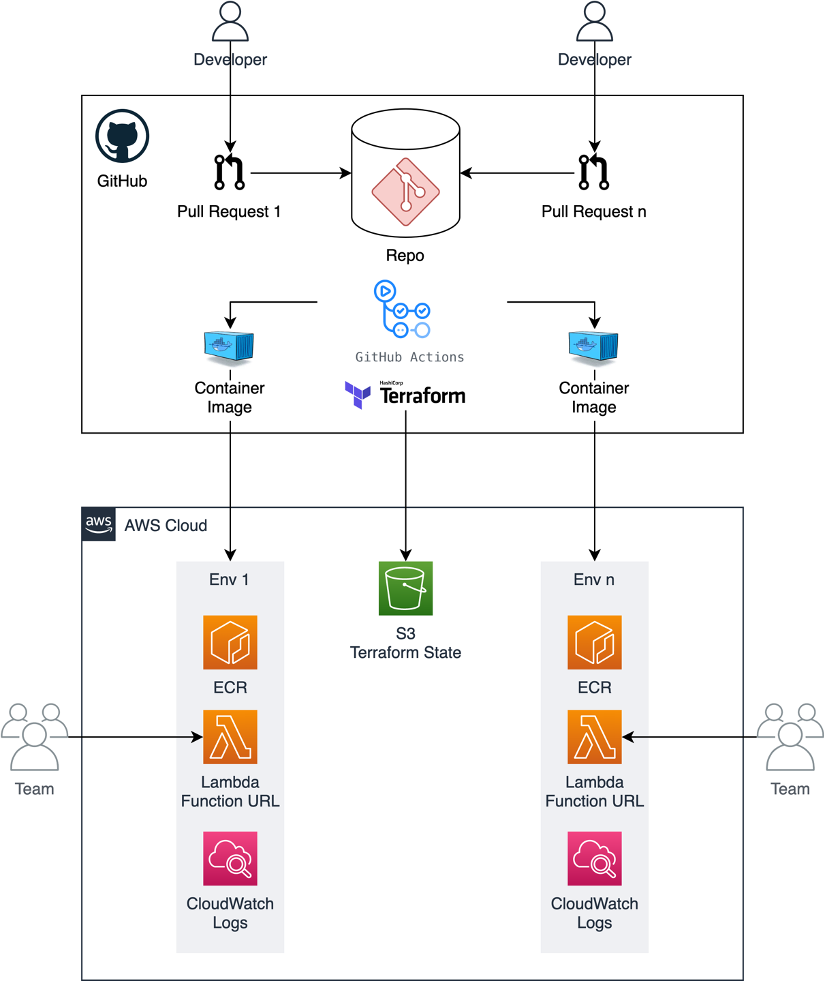

The concepts in this post can be implemented using a number of tools and hosted Git providers that connect to CI/CD pipelines. The example code shared in this post uses GitHub Actions to trigger a workflow. The workflow uses a small Terraform module with Docker to build the application source code into a container image, push it to Amazon Elastic Container Registry (ECR), and create an AWS Lambda function with the image.

The container running on Lambda is accessible from a web browser through a Lambda function URL. This provides a dedicated HTTPS endpoint for a function.

Function URLs are used here instead of AWS App Runner, Amazon ECS on AWS Fargate with an Application Load Balancer (ALB), or Amazon EKS with ALB ingress because they provision quickly and cost little. This makes them well suited to occasionally used ephemeral PR environments. Lambda’s scale-to-zero compute environment leads to lower cost, as charges are only incurred for actual HTTP requests. This is useful for PRs that may only be reviewed infrequently and then sit idle until the PR is either merged or closed.
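To make the scale-to-zero argument concrete, the following is a rough sketch of a per-request cost model, written in JavaScript to match the Node.js sample app. The function name `estimatePreviewCost`, its parameters, and the rates in the example call are all assumptions for illustration. The rates are placeholders rather than quoted prices, and the model ignores the free tier and any other charges such as image storage.

```javascript
// Rough cost model for a scale-to-zero preview environment.
// Both rates are supplied by the caller; look up current values for your Region.
function estimatePreviewCost({
  requests,
  avgDurationMs,
  memoryMb,
  pricePerMillionRequests,
  pricePerGbSecond,
}) {
  // Request charge: proportional to the number of invocations.
  const requestCost = (requests / 1_000_000) * pricePerMillionRequests;
  // Compute charge: duration multiplied by configured memory, in GB-seconds.
  const gbSeconds = requests * (avgDurationMs / 1000) * (memoryMb / 1024);
  const computeCost = gbSeconds * pricePerGbSecond;
  return { requestCost, computeCost, total: requestCost + computeCost };
}

// Hypothetical PR that nobody opens: zero requests means zero request and compute cost.
const idlePreview = estimatePreviewCost({
  requests: 0,
  avgDurationMs: 200,
  memoryMb: 512,
  pricePerMillionRequests: 0.2, // placeholder rate
  pricePerGbSecond: 0.00002, // placeholder rate
});
console.log(idlePreview.total);
```

The point of the sketch is the shape of the formula: every term is multiplied by the request count, so an idle PR environment contributes nothing to request or compute charges.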

The diagram at the start of this section shows the example architecture.

Setting up the example

The sample project shows how to implement this example. It consists of a vanilla web application written in Node.js. All of the code needed to implement the architecture is contained within the .github directory. To enable ephemeral environments for a new project, copy the .github directory into it. Because everything lives in that one directory, your project files stay uncluttered.

There are two main resources needed to run Terraform inside of GitHub Actions: an AWS IAM role and a place to store Terraform state. AWS credentials are required to give the pipeline permission to provision AWS resources.

Instead of using static IAM user credentials that must be rotated and secured, assume an IAM role to obtain temporary credentials. Terraform remote state is needed to dispose of the environment when the PR is merged or closed. The sample project uses an Amazon S3 bucket to store Terraform state.

You can use the Terraform module located under .github/setup to create these required resources.

- Provide the name of your GitHub organization and repository in the terraform.tfvars file as input parameters. You can replace aws-samples with your GitHub user name.

- Provision the resources by running Terraform against this module. Store the outputted terraform.tfstate file safely so that you can manage these resources in the future if needed.

- Place the Region, generated IAM role, and bucket name into the configuration file located under .github/workflows/config.env. This configuration file is read and used by the GitHub Actions workflow.

This IAM role has an inline policy that contains the minimum set of permissions needed to provision the AWS resources. This assumes that your application does not interact with external services like databases or caches. If your application needs this additional access, you can add the required permissions to the policy located here.


Running a web server in Lambda

The sample web (HTTP) application includes a Dockerfile that contains instructions for packaging the web app into a process-based container image. A Lambda extension called Lambda Web Adapter enables you to run this standard web server process on Lambda. The CI/CD workflow makes a copy of the Dockerfile and adds one line to it.

This line copies the Lambda Web Adapter executable binary from a public ECR image and writes it into the container in the /opt/extensions/ directory. When the container starts, Lambda starts the Lambda Web Adapter extension. The extension translates Lambda event payloads from HTTP-based triggers into actual HTTP requests and proxies them to the web app running inside the container.

By default, Lambda Web Adapter assumes that the web app is listening on port 8080. However, you can change this in the Dockerfile by setting the PORT environment variable.
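As a minimal sketch of what this means for the application, the following Node.js server reads the same PORT variable and falls back to the same 8080 default, so the app and the adapter agree on where traffic goes. This is not the sample project's code. The names `resolvePort`, `handler`, and `start` are hypothetical, and the response body is made up for illustration.

```javascript
const http = require("node:http");

// Use PORT when it holds a valid TCP port; otherwise fall back to 8080,
// the default that Lambda Web Adapter expects the app to listen on.
function resolvePort(env = process.env) {
  const port = Number.parseInt(env.PORT ?? "", 10);
  return Number.isInteger(port) && port > 0 && port < 65536 ? port : 8080;
}

// An ordinary request handler: nothing in here is Lambda-specific.
function handler(req, res) {
  res.writeHead(200, { "Content-Type": "text/plain; charset=utf-8" });
  res.end(`Hello from ${req.method} ${req.url}\n`);
}

// Create the server and start listening on the resolved port.
function start(env = process.env) {
  const port = resolvePort(env);
  const server = http.createServer(handler);
  server.listen(port, () => console.log(`listening on port ${port}`));
  return server;
}

module.exports = { resolvePort, handler, start };
```

Calling `start()` from the container's entry point runs the server. The same process works unchanged on a laptop, in a container, or behind the adapter on Lambda, which is the main appeal of this approach.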

The containerized web app experiences a cold start the first time it is invoked after sitting idle. This is unlikely to be a concern, because the app is only previewed internally by teammates.
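The translation that the adapter performs can be pictured with a short conceptual sketch. It is not the adapter's implementation, which ships as a compiled extension. It only illustrates how the fields of a function URL event map onto an ordinary HTTP request. The function name `toHttpRequest` and the sample event values are hypothetical. The event field names follow the Lambda function URL request payload.

```javascript
// Conceptual sketch only: maps a function URL event to the HTTP request
// that would be sent to the web app listening inside the container.
function toHttpRequest(event, port = 8080) {
  const query = event.rawQueryString ? `?${event.rawQueryString}` : "";
  // Bodies arrive as strings; binary payloads are base64-encoded.
  const body =
    event.body == null
      ? undefined
      : Buffer.from(event.body, event.isBase64Encoded ? "base64" : "utf8");
  return {
    method: event.requestContext.http.method,
    url: `http://127.0.0.1:${port}${event.rawPath}${query}`,
    headers: { ...event.headers },
    body,
  };
}

// Hypothetical event, trimmed to the fields used above.
const sampleEvent = {
  rawPath: "/hello",
  rawQueryString: "name=pr",
  headers: { accept: "text/html" },
  requestContext: { http: { method: "GET" } },
  isBase64Encoded: false,
};
console.log(toHttpRequest(sampleEvent));
```

The web app only ever sees the resulting HTTP request, so it needs no knowledge of the Lambda event format.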

Workflow pipeline

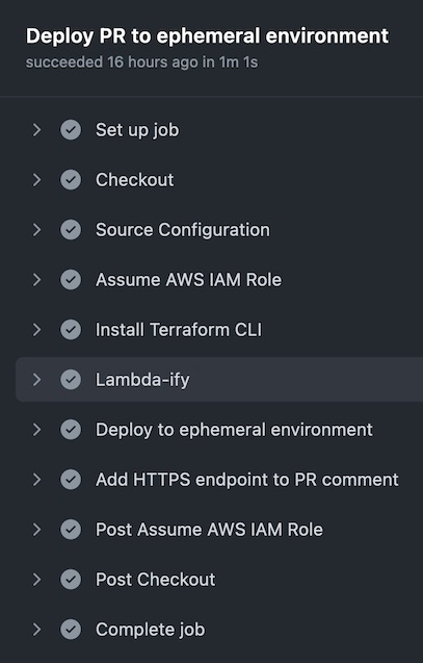

The GitHub Actions job defined in the up.yml workflow is triggered when a PR is opened or reopened against the repository’s main branch. The job performs the following steps.

1. Read the configuration from .github/workflows/config.env

2. Assume the IAM role, which has minimal permissions to deploy AWS resources

3. Install the Terraform CLI

4. Add the Lambda Web Adapter extension to the copy of the Dockerfile

5. Run terraform apply to provision the AWS resources using the S3 bucket for Terraform remote state

6. Obtain the HTTPS endpoint from Terraform and add it to the PR as a comment

The following code snippet shows the key steps (4-6) from the up.yml workflow.

The main.tf file (in the same directory) includes infrastructure as code (IaC) that is responsible for creating an ECR repository, building and pushing the container image to it, and spinning up a Lambda function based on the image. The following is a snippet from the Terraform configuration. You can see how concisely this can be configured.

Terraform outputs the generated HTTPS endpoint. The workflow writes it back to the PR as a comment so that teammates can click on the link to preview the changes.

The workflow takes about 60 seconds to spin up a new isolated containerized web application in an ephemeral environment that can be previewed.
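The comment step can be sketched outside of GitHub Actions as a plain call to the GitHub REST API, which exposes PR comments through the issue comments endpoint. This is one possible way to do it, not the step used by the sample workflow. The function names, the comment wording, and all argument values are assumptions, and the global `fetch` requires Node.js 18 or later.

```javascript
// Build the request that adds a comment containing the preview link to a PR.
// `repository` is "owner/name"; the token needs permission to write PR comments.
function buildPreviewComment({ repository, prNumber, endpoint, token }) {
  return {
    url: `https://api.github.com/repos/${repository}/issues/${prNumber}/comments`,
    options: {
      method: "POST",
      headers: {
        Accept: "application/vnd.github+json",
        Authorization: `Bearer ${token}`,
        "Content-Type": "application/json",
      },
      body: JSON.stringify({ body: `Preview environment: ${endpoint}` }),
    },
  };
}

// Send the request and fail loudly if GitHub rejects it.
async function postPreviewComment(params) {
  const { url, options } = buildPreviewComment(params);
  const response = await fetch(url, options);
  if (!response.ok) {
    throw new Error(`GitHub API returned ${response.status}`);
  }
  return response.json();
}

module.exports = { buildPreviewComment, postPreviewComment };
```

Separating request construction from sending keeps the part that is easy to get wrong (the URL and payload) testable without network access.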

Pull request collaboration

The following screenshot shows an example PR as the author collaborates with their team. After implementing this example, when a new PR arrives, the changes are deployed to a new ephemeral environment. Stakeholders can use the link to preview what the changes look like and provide feedback.

Once the changes are approved and merged into the main branch, the GitHub Actions down.yml workflow disposes of the environment. This means that the ephemeral environment is de-provisioned, including resources like the Lambda function and the ECR repository.
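The environment lifecycle described in this post can be summarized as a small decision function over GitHub's pull_request event. In the sample project this routing is declared in the workflow files, so the sketch below is only a conceptual model. The function name `workflowFor` and the returned `reason` strings are hypothetical, and applying the target-branch check to teardown as well as creation is an assumption.

```javascript
// Conceptual mapping from a pull_request event payload to the workflow
// that should run. Returns null when no environment change is needed.
function workflowFor(payload, targetBranch = "main") {
  const pr = payload.pull_request;
  if (!pr || pr.base.ref !== targetBranch) return null;

  switch (payload.action) {
    case "opened":
    case "reopened":
      return { workflow: "up.yml", reason: "create preview environment" };
    case "closed":
      // GitHub reports a merge as a "closed" action with merged set to true.
      return {
        workflow: "down.yml",
        reason: pr.merged ? "PR merged" : "PR closed without merging",
      };
    default:
      return null;
  }
}

module.exports = { workflowFor };
```

Both outcomes of a closed PR lead to the same teardown, which is why an environment never outlives the PR it belongs to.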

Conclusion

This post discusses some of the benefits of using ephemeral environments in CI/CD pipelines. It shows how to implement a pipeline using GitHub Actions and Lambda function URLs for fast, low-cost, and ephemeral environments.

With this example, you can deploy PRs quickly, and the cost is based on the HTTP requests made to the environment. No compute costs are incurred while a PR is open but no one is previewing the environment. The only charges are for Lambda invocations made while stakeholders are actively interacting with the environment. When a PR is merged or closed, the cloud infrastructure is disposed of. You can find all of the example code referenced in this post here.

For more serverless learning resources, visit Serverless Land.