Containers

Building container images on Amazon ECS on AWS Fargate

Note: The Kaniko project has been archived and is not actively maintained.

Building container images is the process of packaging an application’s code, libraries, and dependencies into reusable file systems. Developers create a Dockerfile alongside their code that contains all the commands to assemble a container image. A container image builder, such as the one built into Docker Engine, then uses this Dockerfile to produce a container image. The resulting image is used to create containers in containerized environments such as Amazon ECS and Amazon EKS. As part of the development workflow, developers build container images locally on their machines, for example by running a docker build command against a local Docker Engine.

While this practice works well when a single developer writes and builds the code, it does not scale. In a typical development team, multiple developers modify code simultaneously, so no single developer can be responsible for building container images. DevOps engineers solve this problem with continuous delivery (CD) pipelines. Developers check in their code to a central code repository, such as a Git repository, and container builds are automated with tools like Jenkins or AWS CodePipeline. Through customer feedback, we have learned that many DevOps teams that manage their own CD pipelines choose to run them on Amazon Elastic Container Service (Amazon ECS) or Amazon Elastic Kubernetes Service (Amazon EKS). Container orchestrators like ECS and EKS simplify scaling the infrastructure to match the demands on the CD system. For example, when Jenkins runs on ECS, ECS can scale out EC2 instances as Jenkins pipelines are triggered and more compute capacity is needed to run the builds. Customers have also expressed interest in running their CD workloads on AWS Fargate, because it eliminates the need to manage servers.

Building container images in containers

In contrast with building container images on your local machine, Jenkins (or a similar tool) running in an ECS cluster builds container images inside a running container. However, common container image builders, such as the one included in Docker Engine, cannot run within the security boundaries of a running container. Restricted access to Linux system calls (via seccomp) and Linux Security Modules (AppArmor or SELinux) prevent Docker Engine from running inside a container.

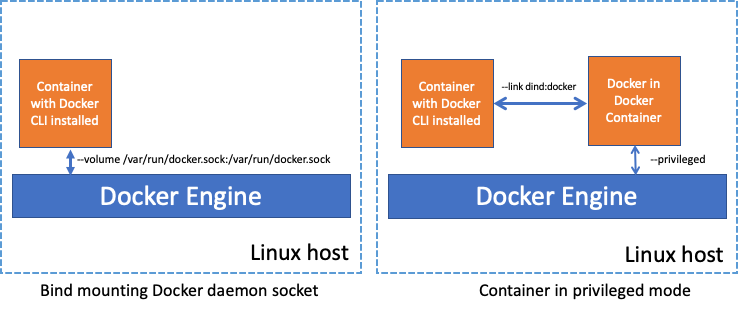

Customers who want to build container images with the traditional docker build method, while running in a container on an EC2 instance, therefore have two options:

- Bind mount the Unix socket of the Docker Engine running on the host into the running container, which gives the container full access to the underlying Docker API.

- Run the container in privileged mode. This removes the Linux system call and Linux Security Module restrictions, so a Docker Engine can run inside the container.

Both approaches carry inherent risks. Containers that can reach the host’s Docker daemon, or that run in privileged mode, can also perform other malicious actions on the host. For example, a container with access to the host’s Docker Engine through a mounted Unix socket has full access to the underlying Docker API. That access lets the container start and stop any other container running on that Docker Engine, or even docker exec into other containers. Even in single-tenant ECS clusters, this can have severe consequences, because it opens a back door for hostile actors.
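To make the two risky setups concrete, here is a minimal sketch of checks a build job could run inside its own container to detect them. It is a heuristic that rests on two assumptions. First, the host socket is assumed to be bind-mounted at the conventional /var/run/docker.sock path. Second, an effective CAP_SYS_ADMIN capability (read from the CapEff mask in /proc/self/status) is treated as a hint of privileged mode, even though that capability can also be granted on its own. The helper names are hypothetical.

```bash
#!/usr/bin/env bash
# Heuristic checks for the two risky build setups described above.
# Helper names are hypothetical; adjust the default paths for your environment.

# Succeeds if the given path (default: the conventional Docker socket path)
# is a Unix socket, i.e. the host's Docker Engine is probably bind-mounted in.
docker_socket_mounted() {
  local sock="${1:-/var/run/docker.sock}"
  [ -S "$sock" ]
}

# Succeeds if the CapEff mask in a /proc status file includes CAP_SYS_ADMIN
# (capability number 21). Docker's default capability set omits it, while
# --privileged grants it, so its presence hints at a privileged container.
has_cap_sys_admin() {
  local status_file="${1:-/proc/self/status}" hex
  [ -r "$status_file" ] || return 1
  hex=$(awk '/^CapEff:/ {print $2}' "$status_file")
  [ -n "$hex" ] || return 1
  (( (16#$hex >> 21) & 1 ))
}

if docker_socket_mounted; then
  echo "warning: a Docker socket is mounted into this container"
fi
if has_cap_sys_admin; then
  echo "warning: CAP_SYS_ADMIN is effective (possibly a privileged container)"
fi
```

On Fargate, both checks should come back negative, because the platform blocks both setups.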

AWS Fargate runs each task in a VM-isolated environment. It also enforces security best practices. Containers cannot mount directories or sockets from the underlying host, and they cannot run with additional Linux capabilities or with the --privileged flag. As a result, customers cannot build container images inside Fargate containers using the builder within Docker Engine.

kaniko

New tools have emerged in the past few years to build container images without requiring privileged mode. kaniko is one such tool: it builds container images from a Dockerfile, much like Docker does. Unlike Docker, however, it requires neither a daemon nor root privileges on the host, and it executes each command within a Dockerfile entirely in userspace. This lets you build container images without elevated privileges, for example inside a running container. kaniko is an excellent standalone image builder, purposefully designed to run within a multi-tenant container cluster.

kaniko is designed to run within the constraints of a containerized environment, such as the one Fargate provides. It does not need additional Linux capabilities, disabled Linux Security Modules, or any other access to the underlying host. This makes it an ideal utility for building images on AWS Fargate.
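Because kaniko needs no daemon, every build input is passed to the executor as a flag. The hypothetical helper below is a sketch that assembles the flags this post later passes in the ECS task definition (--context, --context-sub-path, --dockerfile, --destination, and --force). It emits them as a JSON array suitable for a container's command field. The optional branch argument assumes kaniko's git context syntax for selecting a ref (git://<repository>#refs/heads/<branch>). The helper performs no JSON escaping, so it assumes arguments contain no quotes or backslashes.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print a kaniko executor "command" array as JSON.
# Assumes no argument contains double quotes or backslashes (no JSON escaping).
kaniko_command_json() {
  local repo="$1" sub_path="$2" dockerfile="$3" destination="$4" branch="${5:-}"
  local context="git://${repo}"
  # Assumed kaniko syntax: a "#refs/heads/<branch>" suffix selects a branch.
  if [ -n "$branch" ]; then
    context="${context}#refs/heads/${branch}"
  fi
  local args=(
    --context "$context"
    --context-sub-path "$sub_path"
    --dockerfile "$dockerfile"
    --destination "$destination"
    --force
  )
  # Join the quoted arguments with ", " inside square brackets.
  local out="[" sep="" arg
  for arg in "${args[@]}"; do
    out+="${sep}\"${arg}\""
    sep=", "
  done
  printf '%s]\n' "$out"
}

# Example with the repository used later in this post and an illustrative account ID
kaniko_command_json github.com/ollypom/mysfits.git ./api Dockerfile.v3 \
  123456789012.dkr.ecr.us-east-1.amazonaws.com/kaniko-demo:latest
```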

In the next section, we will show you how to build container images in Fargate containers using kaniko.

Solution

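To build images using kaniko with Amazon ECS on AWS Fargate, you need:

- AWS CLI version 2

- Docker

- An existing AWS VPC and subnet. The ECS tasks deployed in this subnet need access to your application’s source code repository. If this is a public GitHub repository, the subnet needs internet access. The tasks also need to reach Amazon ECR, to pull the kaniko image and push the built image, and Amazon CloudWatch Logs. A NAT gateway or VPC endpoints can provide this access.

- The ecsTaskExecutionRole IAM role in your account, which the ECS task definition in this walkthrough references.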
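A quick preflight check can catch a missing tool or a mistyped ID before you create any AWS resources. The sketch below makes two assumptions. For AWS CLI version 2, aws --version is assumed to print a string beginning with aws-cli/2. VPC and subnet IDs are assumed to consist of the vpc- or subnet- prefix followed by 8 or 17 lowercase hexadecimal characters. The helper names are hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical preflight helpers for the prerequisites listed above.

# Succeeds if a version string (as printed by "aws --version") is from AWS CLI v2.
is_aws_cli_v2() {
  [[ "$1" == aws-cli/2.* ]]
}

# Succeeds if $1 looks like an EC2 resource ID with prefix $2, i.e.
# "<prefix>-" followed by 8 or 17 lowercase hexadecimal characters.
is_ec2_id() {
  local id="$1" prefix="$2"
  local re="^${prefix}-([0-9a-f]{8}|[0-9a-f]{17})\$"
  [[ $id =~ $re ]]
}

# Checks tools, CLI version, and the exported IDs; stops at the first problem.
preflight() {
  local tool
  for tool in aws docker; do
    command -v "$tool" > /dev/null || { echo "missing tool: $tool" >&2; return 1; }
  done
  is_aws_cli_v2 "$(aws --version 2>&1)" || { echo "AWS CLI v2 is required" >&2; return 1; }
  is_ec2_id "${KANIKO_DEMO_VPC:-}" vpc || { echo "unexpected VPC ID" >&2; return 1; }
  is_ec2_id "${KANIKO_DEMO_SUBNET:-}" subnet || { echo "unexpected subnet ID" >&2; return 1; }
}
```

After you export KANIKO_DEMO_VPC and KANIKO_DEMO_SUBNET in the next step, run preflight. It prints the first problem it finds and returns a non-zero status.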

Let’s start by storing the IDs of the VPC and subnet you plan on using:

```bash
export KANIKO_DEMO_VPC=<YOUR VPC ID e.g., vpc-055e876skjwshr1dca1>
export KANIKO_DEMO_SUBNET=<SUBNET ID e.g., subnet-09a9a548da32bbbbe>
```

Create an ECS cluster:

```bash
aws ecs create-cluster \
  --cluster-name kaniko-demo-cluster
```

Create an ECR repository to store the demo application:

```bash
export KANIKO_DEMO_REPO=$(aws ecr create-repository \
  --repository-name kaniko-demo \
  --query 'repository.repositoryUri' --output text)

export KANIKO_DEMO_IMAGE="${KANIKO_DEMO_REPO}:latest"
```

Create an ECR repository to store the kaniko container image:

```bash
export KANIKO_BUILDER_REPO=$(aws ecr create-repository \
  --repository-name kaniko-builder \
  --query 'repository.repositoryUri' --output text)

export KANIKO_BUILDER_IMAGE="${KANIKO_BUILDER_REPO}:executor"
```

The upstream image provided by the kaniko community may work for you, depending on your container registry. In this walkthrough, however, kaniko needs a configuration file so that it can push to Amazon ECR. To push images to an ECR repository, kaniko authenticates with the ECR Credential Helper, which uses AWS credentials. The upstream kaniko container image already includes the ECR Credential Helper binary. A configuration file is still required to instruct kaniko to use the helper for ECR authentication.
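The credsStore setting below sends credential lookups for every registry to the ECR Credential Helper. kaniko reads a Docker-style config file, and that format also supports a per-registry credHelpers map. The map may be preferable if a build also pulls base images from other private registries. The hypothetical helper below is a minimal sketch that derives the registry host from a repository URI and prints such a config. It assumes the URI has the form <registry-host>/<repository>.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print a Docker-style config.json that routes only the
# given ECR registry to the ECR Credential Helper ("ecr-login").
ecr_cred_helpers_config() {
  local repo_uri="$1"
  local registry="${repo_uri%%/*}"   # keep only the part before the first "/"
  printf '{ "credHelpers": { "%s": "ecr-login" } }\n' "$registry"
}

# Example with an illustrative repository URI
ecr_cred_helpers_config 123456789012.dkr.ecr.us-east-1.amazonaws.com/kaniko-demo
```

For example, ecr_cred_helpers_config "$KANIKO_DEMO_REPO" > config.json could replace the credsStore version. In this walkthrough, the demo and builder repositories share one registry because they are in the same account and region.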

```bash
# Create a directory to store the container image artifacts
mkdir kaniko
cd kaniko

# Create the container image Dockerfile
cat << EOF > Dockerfile
FROM gcr.io/kaniko-project/executor:latest
COPY ./config.json /kaniko/.docker/config.json
EOF

# Create the kaniko config file for registry credentials
cat << EOF > config.json
{ "credsStore": "ecr-login" }
EOF
```

Build and push the image to ECR:

```bash
docker build --tag "${KANIKO_BUILDER_REPO}:executor" .

# Log in to the ECR registry (the host part of the repository URI)
aws ecr get-login-password | docker login \
  --username AWS \
  --password-stdin \
  "${KANIKO_BUILDER_REPO%%/*}"

docker push "${KANIKO_BUILDER_REPO}:executor"
```

Create an IAM role for the ECS task that allows it to push the demo application’s container image to ECR:

```bash
# Create a trust policy
cat << EOF > ecs-trust-policy.json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Service": "ecs-tasks.amazonaws.com"
      },
      "Action": "sts:AssumeRole"
    }
  ]
}
EOF

# Create an IAM policy
cat << EOF > iam-role-policy.json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "ecr:GetAuthorizationToken",
        "ecr:InitiateLayerUpload",
        "ecr:UploadLayerPart",
        "ecr:CompleteLayerUpload",
        "ecr:PutImage",
        "ecr:BatchGetImage",
        "ecr:BatchCheckLayerAvailability"
      ],
      "Resource": "*"
    }
  ]
}
EOF

# Create an IAM role
ECS_TASK_ROLE=$(aws iam create-role \
  --role-name kaniko_ecs_role \
  --assume-role-policy-document file://ecs-trust-policy.json \
  --query 'Role.Arn' --output text)

aws iam put-role-policy \
  --role-name kaniko_ecs_role \
  --policy-name kaniko_push_policy \
  --policy-document file://iam-role-policy.json
```

Create an ECS task definition in which we define how the kaniko container will run, where the application source code repository is, and where to push the built container image:

```bash
# Create an Amazon CloudWatch log group to store log output
aws logs create-log-group \
  --log-group-name kaniko-builder

# Store the AWS account ID
AWS_ACCOUNT_ID=$(aws sts get-caller-identity \
  --query 'Account' \
  --output text)

# Use AWS_REGION if it is set, otherwise the region from the CLI configuration
AWS_REGION=${AWS_REGION:-$(aws configure get region)}

# Create the ECS task definition
cat << EOF > ecs-task-definition.json
{
  "family": "kaniko-demo",
  "taskRoleArn": "$ECS_TASK_ROLE",
  "executionRoleArn": "arn:aws:iam::${AWS_ACCOUNT_ID}:role/ecsTaskExecutionRole",
  "networkMode": "awsvpc",
  "containerDefinitions": [
    {
      "name": "kaniko",
      "image": "$KANIKO_BUILDER_IMAGE",
      "logConfiguration": {
        "logDriver": "awslogs",
        "options": {
          "awslogs-group": "kaniko-builder",
          "awslogs-region": "${AWS_REGION}",
          "awslogs-stream-prefix": "kaniko"
        }
      },
      "command": [
        "--context", "git://github.com/ollypom/mysfits.git",
        "--context-sub-path", "./api",
        "--dockerfile", "Dockerfile.v3",
        "--destination", "$KANIKO_DEMO_IMAGE",
        "--force"
      ]
    }
  ],
  "requiresCompatibilities": ["FARGATE"],
  "cpu": "512",
  "memory": "1024"
}
EOF

aws ecs register-task-definition \
  --cli-input-json file://ecs-task-definition.json
```

Run kaniko as a single task using the ECS RunTask API, which you can automate through a variety of CD and automation tools. If the subnet is a public subnet, set the “assignPublicIp” field to “ENABLED”.
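Here, public and private subnets differ only in the assignPublicIp value, so a CD tool that calls RunTask might generate the input file instead of editing it by hand. The hypothetical helper below prints the same run-task input used in the next step. It takes the subnet ID, the security group ID, and the assignPublicIp setting (ENABLED or DISABLED) as arguments, and it defaults to DISABLED.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print RunTask input JSON for the kaniko demo.
# Usage: run_task_input SUBNET_ID SECURITY_GROUP_ID [ENABLED|DISABLED]
run_task_input() {
  local subnet="$1" sg="$2" public_ip="${3:-DISABLED}"
  # RunTask accepts only these two values for assignPublicIp.
  case "$public_ip" in
    ENABLED|DISABLED) ;;
    *) echo "assignPublicIp must be ENABLED or DISABLED" >&2; return 1 ;;
  esac
  cat << EOF
{
  "cluster": "kaniko-demo-cluster",
  "count": 1,
  "launchType": "FARGATE",
  "networkConfiguration": {
    "awsvpcConfiguration": {
      "subnets": ["${subnet}"],
      "securityGroups": ["${sg}"],
      "assignPublicIp": "${public_ip}"
    }
  },
  "platformVersion": "1.4.0"
}
EOF
}
```

For a public subnet, first create the security group below, then run run_task_input "$KANIKO_DEMO_SUBNET" "$KANIKO_SECURITY_GROUP" ENABLED > ecs-run-task.json.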

Create a security group and start the kaniko task:

```bash
# Create a security group for the ECS task
KANIKO_SECURITY_GROUP=$(aws ec2 create-security-group \
  --description "SG for kaniko ECS task" \
  --group-name KANIKO_DEMO_SG \
  --vpc-id "$KANIKO_DEMO_VPC" \
  --output text \
  --query 'GroupId')

# Create the run-task input
cat << EOF > ecs-run-task.json
{
  "cluster": "kaniko-demo-cluster",
  "count": 1,
  "launchType": "FARGATE",
  "networkConfiguration": {
    "awsvpcConfiguration": {
      "subnets": ["$KANIKO_DEMO_SUBNET"],
      "securityGroups": ["$KANIKO_SECURITY_GROUP"],
      "assignPublicIp": "DISABLED"
    }
  },
  "platformVersion": "1.4.0"
}
EOF

# Run the ECS task using the "run-task" command
aws ecs run-task \
  --task-definition kaniko-demo:1 \
  --cli-input-json file://ecs-run-task.json
```

Once the task starts, you can view the kaniko logs in CloudWatch:

```bash
# Read the most recently active log stream in the kaniko-builder log group
aws logs get-log-events \
  --log-group-name kaniko-builder \
  --log-stream-name "$(aws logs describe-log-streams \
    --log-group-name kaniko-builder \
    --order-by LastEventTime --descending \
    --query 'logStreams[0].logStreamName' --output text)"
```

The task builds an image from the source code. Once it pushes the image to ECR, the task terminates.
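In an automated pipeline, you usually want to wait for the build to finish and fail the pipeline if kaniko fails. The sketch below assumes you captured the task ARN from aws ecs run-task, for example with --query 'tasks[0].taskArn' --output text. It waits with the aws ecs wait tasks-stopped waiter and reads the kaniko container's exit code with aws ecs describe-tasks. It also derives the log stream name from the awslogs naming scheme <prefix>/<container-name>/<task-id>, which for the task definition above is kaniko/kaniko/<task-id>. The helper names and the CLUSTER variable are hypothetical, and the code assumes the task ID is the last path segment of the task ARN.

```bash
#!/usr/bin/env bash
# Hypothetical helpers for waiting on a kaniko build task.
CLUSTER=kaniko-demo-cluster

# awslogs names streams "<prefix>/<container-name>/<task-id>"; the task ID is
# taken as the last path segment of the task ARN.
kaniko_log_stream() {
  local task_arn="$1"
  printf 'kaniko/kaniko/%s\n' "${task_arn##*/}"
}

# Block until the task stops, then succeed only if the kaniko container exited 0.
# A container that never started has no exit code, which text output shows as "None".
wait_for_kaniko() {
  local task_arn="$1" code
  aws ecs wait tasks-stopped --cluster "$CLUSTER" --tasks "$task_arn" || return 1
  code=$(aws ecs describe-tasks --cluster "$CLUSTER" --tasks "$task_arn" \
    --query 'tasks[0].containers[?name==`kaniko`].exitCode | [0]' --output text)
  echo "kaniko exited with code ${code}"
  [ "$code" = "0" ]
}

# Example (requires AWS credentials):
#   TASK_ARN=$(aws ecs run-task --task-definition kaniko-demo:1 \
#     --cli-input-json file://ecs-run-task.json \
#     --query 'tasks[0].taskArn' --output text)
#   wait_for_kaniko "$TASK_ARN"
#   aws logs get-log-events --log-group-name kaniko-builder \
#     --log-stream-name "$(kaniko_log_stream "$TASK_ARN")"
```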

List images in your ECR repository to verify that the built image has been pushed successfully:

```bash
# List images in the kaniko-demo repository
aws ecr list-images --repository-name kaniko-demo
```

A successful response looks like this:

```json
{
  "imageIds": [
    {
      "imageDigest": "sha256:500200c2a854a0ead82c5c4280a3ced8774965678be20c117428a0119f124e33",
      "imageTag": "latest"
    }
  ]
}
```

Cleanup
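Amazon ECS does not delete a cluster that still has active tasks. If a kaniko build is still running, stop it before running the commands below. Here is a minimal sketch with a hypothetical helper name. It lists the cluster's tasks with aws ecs list-tasks and stops each one with aws ecs stop-task. It assumes the CLI's text output, which separates ARNs with whitespace.

```bash
#!/usr/bin/env bash
# Hypothetical helper: stop every task in the given cluster before deleting it.
stop_all_tasks() {
  local cluster="$1" arns arn
  arns=$(aws ecs list-tasks --cluster "$cluster" \
    --query 'taskArns' --output text) || return 1
  for arn in $arns; do
    # Skip the placeholder the CLI prints for a null result in text output.
    if [ "$arn" = "None" ]; then continue; fi
    aws ecs stop-task --cluster "$cluster" --task "$arn" \
      --reason "kaniko demo cleanup" > /dev/null || return 1
    echo "stopped ${arn}"
  done
}

# Example (requires AWS credentials):
#   stop_all_tasks kaniko-demo-cluster
```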

```bash
# Remove the two ECR repositories
aws ecr delete-repository --repository-name kaniko-demo --force
aws ecr delete-repository --repository-name kaniko-builder --force

# Remove the ECS cluster
aws ecs delete-cluster --cluster kaniko-demo-cluster

# Deregister the ECS task definition
aws ecs deregister-task-definition --task-definition kaniko-demo:1

# Remove the CloudWatch log group
aws logs delete-log-group --log-group-name kaniko-builder

# Remove the IAM policy
aws iam delete-role-policy --role-name kaniko_ecs_role --policy-name kaniko_push_policy

# Remove the IAM role
aws iam delete-role --role-name kaniko_ecs_role

# Remove the security group
aws ec2 delete-security-group --group-id "$KANIKO_SECURITY_GROUP"
```

Conclusion

With the increased security profile of AWS Fargate, customers leveraging traditional container image builders have been unable to take advantage of serverless compute and have been left provisioning and managing servers to support CD pipelines. In this blog post, we have shown how modern container image builders, such as kaniko, can run without additional Linux privileges in an Amazon ECS task running on AWS Fargate.

- To see how kaniko can be used in a Jenkins Pipeline on Amazon EKS, see this blog post.

- To learn more about kaniko, see the documentation in its GitHub repository.