Containers

Self-service AWS native service adoption in OpenShift using ACK

AWS Controllers for Kubernetes (ACK) is an open-source project that allows you to define and create AWS resources directly from within OpenShift. Using ACK, you can take advantage of AWS-managed services to complement the application workloads running in OpenShift without needing to define resources outside of the cluster or run services that provide supporting capabilities like databases or message queues.

Customers running OpenShift on AWS can choose between deploying a self-managed Red Hat OpenShift Container Platform cluster and using managed OpenShift in the form of Red Hat OpenShift Service on AWS (ROSA).

ROSA provides an integrated experience to use OpenShift, making it easier for you to focus on deploying applications and accelerating innovation by moving the cluster lifecycle management to Red Hat and AWS. With ROSA, you can run containerized applications with your existing OpenShift workflows and reduce the complexity of management.

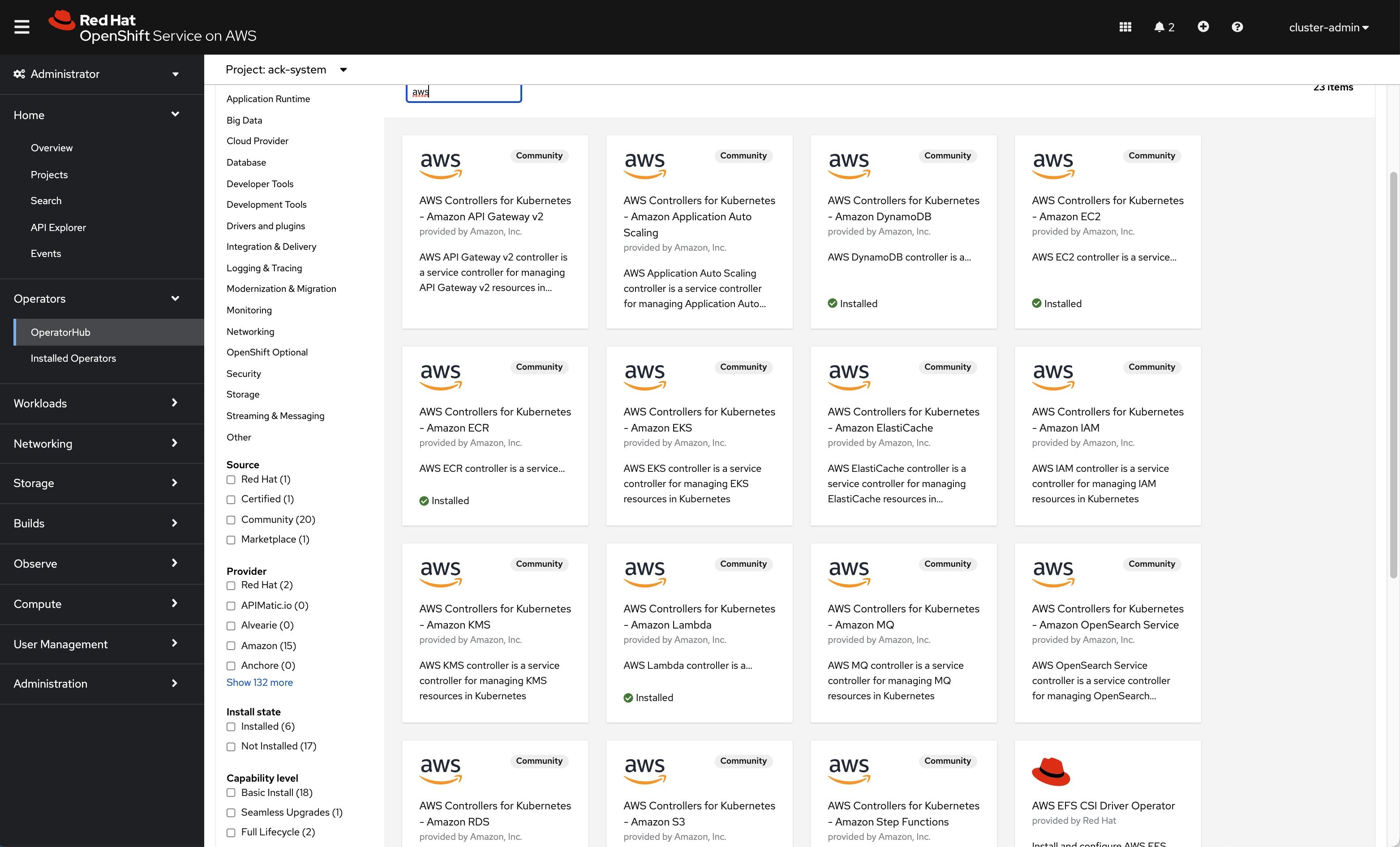

AWS Controllers for Kubernetes has now been integrated into OpenShift and provides a broad collection of AWS native services, now available on the OpenShift OperatorHub.

ACK Operators available on Red Hat OpenShift console

In this post, I will describe how to connect an application or pod running on ROSA to an Amazon Relational Database Service (Amazon RDS) for MySQL instance provisioned and configured using ACK service controllers. In this use case, ROSA and the RDS instance each run in their own dedicated VPC.

For this use case, I will use AWS Controllers for Kubernetes – Amazon EC2 (ACK EC2) and AWS Controllers for Kubernetes – Amazon RDS (ACK RDS).

Prerequisites

- AWS account

- ROSA enabled in the AWS account

- A ROSA cluster created

- Access to Red Hat OpenShift console

Tutorial

Here are the steps I followed to demonstrate connecting an Amazon RDS for MySQL database from an application running on a ROSA cluster:

- Create a ROSA cluster. The ROSA cluster installation will create the cluster's VPC as well.

- Install AWS Controllers for Kubernetes.

- Install AWS Controllers for Kubernetes – Amazon EC2 (ACK EC2) for creating the VPC, subnet, and VPC security groups for the RDS instance.

- Install AWS Controllers for Kubernetes – Amazon RDS (ACK RDS) to create the RDS instance.

- Provision Amazon RDS for MySQL.

- Connect the ROSA cluster VPC and RDS VPC using VPC peering.

- Validate the connection to RDS.

Create a ROSA cluster

The most common deployment pattern is to deploy ROSA with AWS Security Token Service (STS), using the --sts option. The official ROSA user guide describes how to provision a cluster using the STS workflow.

Get the Red Hat OpenShift console URL, then sign in to the console with the cluster-admin user name and password.

Install AWS Controllers for Kubernetes

Before installing the ACK service controllers, some preinstall steps are necessary. An AWS Identity and Access Management (IAM) user needs to be created and policies attached. Please refer to the ACK documentation for this.

Create the installation namespace

This is the namespace for installing the ACK service controllers.

❯ oc new-project ack-system

Bind an AWS IAM principal to a service user account

Using the AWS CLI, create a user named ack-service-controller. Both ACK EC2 and ACK RDS will use this user.

❯ aws iam create-user --user-name ack-service-controller

Enable programmatic access for the user you just created by generating an access key.

Save AccessKeyId and SecretAccessKey to be used later.
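One way to enable programmatic access is to create an access key with the AWS CLI. The following is a minimal sketch, assuming the AWS CLI is already configured with permission to manage IAM users. The helper name create_ack_access_key is hypothetical; the --query and --output options only trim the response to the two values you need to save.

```shell
# Hypothetical helper: create an access key for the ACK IAM user and print
# the AccessKeyId and SecretAccessKey on one tab-separated line.
create_ack_access_key() {
  aws iam create-access-key \
    --user-name "${1:-ack-service-controller}" \
    --query 'AccessKey.[AccessKeyId,SecretAccessKey]' \
    --output text
}

# Usage:
#   create_ack_access_key ack-service-controller
```

The secret access key is shown only once, at creation time, so store the output somewhere safe before moving on.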

Create ack-config and ack-user-secrets for authentication

Create a file named config.txt that holds the controller configuration variables. Leave ACK_WATCH_NAMESPACE blank so the controller can watch all namespaces, and change any other values to suit your needs.

Use the config.txt to create a ConfigMap in your ROSA cluster:
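These two steps could look roughly like the sketch below. The variable names follow the ACK documentation for OpenShift as I understand it, and may differ between controller versions. The Region, the tag value, the ConfigMap name ack-user-config, and the helper name create_ack_configmap are assumptions for illustration; use the names your operator version expects.

```shell
# Write the controller settings. ACK_WATCH_NAMESPACE is left empty on purpose
# so the controllers watch every namespace. The Region and the tag value are
# placeholders (assumptions); change them to match your environment.
cat > config.txt <<'EOF'
ACK_ENABLE_DEVELOPMENT_LOGGING=true
ACK_LOG_LEVEL=debug
ACK_WATCH_NAMESPACE=
AWS_REGION=us-east-1
AWS_ENDPOINT_URL=
ACK_RESOURCE_TAGS=rosa-ack-demo
EOF

# Hypothetical helper: load config.txt into a ConfigMap in ack-system.
# The default ConfigMap name is an assumption.
create_ack_configmap() {
  oc create configmap "${1:-ack-user-config}" \
    --namespace ack-system \
    --from-env-file=config.txt
}

# Usage:
#   create_ack_configmap
```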

Create another file called secrets.txt that holds the authentication values: the AccessKeyId and SecretAccessKey you saved earlier.

I am going to store these credentials within the OpenShift secrets store.

Delete config.txt and secrets.txt.
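The three steps above can be sketched as follows. This assumes the key pair is available in the AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY environment variables; the placeholder defaults and the helper name create_ack_secret are hypothetical.

```shell
# Read the key pair from the environment; the defaults are placeholders only.
ACCESS_KEY_ID="${AWS_ACCESS_KEY_ID:-REPLACE_WITH_ACCESS_KEY_ID}"
SECRET_ACCESS_KEY="${AWS_SECRET_ACCESS_KEY:-REPLACE_WITH_SECRET_ACCESS_KEY}"

# Write secrets.txt so that only the current user can read it.
( umask 077
  printf 'AWS_ACCESS_KEY_ID=%s\nAWS_SECRET_ACCESS_KEY=%s\n' \
    "$ACCESS_KEY_ID" "$SECRET_ACCESS_KEY" > secrets.txt )

# Hypothetical helper: store the credentials as a Secret in ack-system, then
# delete the local files, but only if the Secret was created successfully.
create_ack_secret() {
  oc create secret generic ack-user-secrets \
    --namespace ack-system \
    --from-env-file=secrets.txt \
  && rm -f config.txt secrets.txt
}

# Usage:
#   create_ack_secret
```

Deleting the files only after a successful create avoids losing the values if the oc command fails.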

Attach IAM policies to the ack-service-controller user

You need to attach the required IAM policies to the user. Because you will use ACK EC2 and ACK RDS, attach the IAM policies that each of these two controllers requires.

Note: Instead of broad managed policies, you can attach custom, more narrowly scoped policies to the user to suit your needs.
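As an illustration, the sketch below attaches two AWS managed policies to the user. I am assuming that AmazonEC2FullAccess and AmazonRDSFullAccess are the policies recommended for the two controllers; check each controller's documentation before relying on that. The helper name attach_ack_policies is hypothetical.

```shell
# Hypothetical helper: attach AWS managed policies to the ACK IAM user.
# The two policy names are assumptions (see the note above the block).
attach_ack_policies() {
  user="${1:-ack-service-controller}"
  for policy in AmazonEC2FullAccess AmazonRDSFullAccess; do
    aws iam attach-user-policy \
      --user-name "$user" \
      --policy-arn "arn:aws:iam::aws:policy/${policy}" || return 1
  done
}

# Usage:
#   attach_ack_policies ack-service-controller
```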

Once the above has been completed, the AWS native service operators can be consumed from the OpenShift OperatorHub. Sign in to the Red Hat OpenShift console with the cluster-admin user and go to the OperatorHub. Filter the items using the aws keyword. A list of all the available ACK service controllers will appear.

As I mentioned at the beginning of the post, from this list, you will install AWS Controllers for Kubernetes – Amazon EC2 (ACK EC2) and AWS Controllers for Kubernetes – Amazon RDS (ACK RDS).

Install AWS Controllers for Kubernetes – Amazon EC2 (ACK EC2)

Leave all the parameters as default and select Install.

After a short while, you should notice the operator is installed.

Do the same to install AWS Controllers for Kubernetes – Amazon RDS (ACK RDS).

Install AWS Controllers for Kubernetes – Amazon RDS (ACK RDS)

Choose the OperatorHub on the left navigation pane, filter operators list by aws keyword, and choose AWS Controllers for Kubernetes – Amazon RDS.

Select Install, leaving all the parameters as default.

After a short while, the ACK RDS operator should be ready.

If you choose Installed Operators on the left navigation pane, you should be able to confirm both operators are installed and ready.

Or you can verify this using oc CLI.
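A minimal sketch of that check, assuming both operators were installed into the ack-system namespace. The helper name check_ack_operators is hypothetical.

```shell
# Hypothetical helper: list the installed operator versions
# (ClusterServiceVersions) and the controller pods in one namespace.
check_ack_operators() {
  ns="${1:-ack-system}"
  oc get csv --namespace "$ns" &&
  oc get pods --namespace "$ns"
}

# Usage:
#   check_ack_operators ack-system
```

Both operators should be listed with a successful phase, and each controller pod should be running.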

Set up Amazon RDS for MySQL

Before starting to create AWS resources using Kubernetes manifests, create a namespace for deploying them.

❯ oc new-project ack-workspace

Create the VPC

I am going to create a separate Amazon VPC for the RDS instance with CIDR block 100.68.0.0/18.
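A manifest for this VPC could look like the sketch below. The resource name rosa-rds-vpc and the file name are hypothetical, and the field names follow the ACK EC2 v1alpha1 VPC resource as I understand it; older controller releases may use different field names, so check the CRD installed in your cluster.

```shell
# Write a VPC manifest for the ACK EC2 controller.
# The resource name rosa-rds-vpc is a hypothetical example.
cat > rds-vpc.yaml <<'EOF'
apiVersion: ec2.services.k8s.aws/v1alpha1
kind: VPC
metadata:
  name: rosa-rds-vpc
  namespace: ack-workspace
spec:
  cidrBlocks:
    - 100.68.0.0/18
  enableDNSSupport: true
  enableDNSHostnames: true
EOF

# Apply it with:
#   oc apply -f rds-vpc.yaml
```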

You can validate the VPC creation using the AWS CLI.
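One possible check is sketched below. It assumes the VPC resource is named rosa-rds-vpc (a hypothetical name) and that the controller records the AWS identifier under status.vpcID. The helper name describe_rds_vpc is also hypothetical.

```shell
# Hypothetical helper: read the VPC ID that ACK wrote to the resource status,
# then ask the EC2 API for that VPC's ID, CIDR block, and state.
describe_rds_vpc() {
  vpc_id="$(oc get vpcs.ec2.services.k8s.aws "${1:-rosa-rds-vpc}" \
    --namespace ack-workspace \
    -o jsonpath='{.status.vpcID}')" || return 1
  aws ec2 describe-vpcs \
    --vpc-ids "$vpc_id" \
    --query 'Vpcs[0].[VpcId,CidrBlock,State]' \
    --output text
}

# Usage:
#   describe_rds_vpc rosa-rds-vpc
```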

Create the subnets

Next, you will create subnets in two Availability Zones so that you can take advantage of the resilience provided by multi-AZ RDS. These subnets will be used when creating a DB subnet group. A DB subnet group is a collection of subnets (typically private) that you create in a VPC and that you then designate for your DB instances. A DB subnet group allows you to specify a particular VPC when creating DB instances using the CLI or API.
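The sketch below writes manifests for two subnets in different Availability Zones. The Availability Zone names, the two /24 ranges, and the resource names are assumptions for illustration; the ranges only need to fall inside 100.68.0.0/18. The vpcRef field points at a VPC resource named rosa-rds-vpc, which is also a hypothetical name.

```shell
# Availability Zones are placeholders (assumptions); override them through
# the AZ_A and AZ_B environment variables to match your Region.
AZ_A="${AZ_A:-us-east-1a}"
AZ_B="${AZ_B:-us-east-1b}"

# Write one Subnet manifest per Availability Zone.
cat > rds-subnets.yaml <<EOF
apiVersion: ec2.services.k8s.aws/v1alpha1
kind: Subnet
metadata:
  name: rosa-rds-subnet-a
  namespace: ack-workspace
spec:
  availabilityZone: ${AZ_A}
  cidrBlock: 100.68.0.0/24
  vpcRef:
    from:
      name: rosa-rds-vpc
---
apiVersion: ec2.services.k8s.aws/v1alpha1
kind: Subnet
metadata:
  name: rosa-rds-subnet-b
  namespace: ack-workspace
spec:
  availabilityZone: ${AZ_B}
  cidrBlock: 100.68.1.0/24
  vpcRef:
    from:
      name: rosa-rds-vpc
EOF

# Apply it with:
#   oc apply -f rds-subnets.yaml
```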

Create DB subnet group

Here, you will create the DB subnet group.
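A possible manifest is sketched below, assuming the two subnet IDs are supplied through the SUBNET_A_ID and SUBNET_B_ID environment variables. The placeholder defaults and the group name rosa-rds-subnet-group are hypothetical.

```shell
# Subnet IDs are placeholders (assumptions). For subnets created through ACK,
# the ID should be available in each Subnet resource's status.
SUBNET_A_ID="${SUBNET_A_ID:-subnet-REPLACE_A}"
SUBNET_B_ID="${SUBNET_B_ID:-subnet-REPLACE_B}"

# Write a DBSubnetGroup manifest for the ACK RDS controller.
cat > rds-db-subnet-group.yaml <<EOF
apiVersion: rds.services.k8s.aws/v1alpha1
kind: DBSubnetGroup
metadata:
  name: rosa-rds-subnet-group
  namespace: ack-workspace
spec:
  name: rosa-rds-subnet-group
  description: Subnets for the RDS instance used by ROSA workloads
  subnetIDs:
    - ${SUBNET_A_ID}
    - ${SUBNET_B_ID}
EOF

# Apply it with:
#   oc apply -f rds-db-subnet-group.yaml
```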

Create VPC security group

Before creating the DB instance, you must create a VPC security group to associate it with the DB instance.
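A minimal security group manifest might look like this. The names rosa-rds-sg and rosa-rds-vpc are hypothetical, and no ingress rule is declared yet because access from the ROSA cluster is opened in a later step.

```shell
# Write a SecurityGroup manifest that lives in the RDS VPC.
# vpcRef points at the ACK VPC resource by name (hypothetical name).
cat > rds-security-group.yaml <<'EOF'
apiVersion: ec2.services.k8s.aws/v1alpha1
kind: SecurityGroup
metadata:
  name: rosa-rds-sg
  namespace: ack-workspace
spec:
  name: rosa-rds-sg
  description: Security group for the RDS instance used by ROSA workloads
  vpcRef:
    from:
      name: rosa-rds-vpc
EOF

# Apply it with:
#   oc apply -f rds-security-group.yaml
```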

Create the RDS DB instance

Create a Secret to store the master user password for the RDS DB instance.
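The Secret can be created with oc, as in the sketch below. The Secret name rosa-rds-password, the key name password, and the helper name create_db_password_secret are hypothetical.

```shell
# Hypothetical helper: store the RDS master password in a Secret.
# The password is passed as the first argument and is required.
create_db_password_secret() {
  if [ -z "$1" ]; then
    echo "usage: create_db_password_secret <password>" >&2
    return 1
  fi
  oc create secret generic rosa-rds-password \
    --namespace ack-workspace \
    --from-literal=password="$1"
}

# Usage:
#   create_db_password_secret 'choose-a-strong-password'
```

Passing the password on the command line leaves it in your shell history, so you may prefer to read it from a prompt or a file instead.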

Create the DB instance
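A DBInstance manifest could look like the sketch below. The instance class, engine version, storage size, user name, and every resource name are assumptions chosen for illustration, not values from the original walkthrough. The security group ID is supplied through the DB_SECURITY_GROUP_ID environment variable, and masterUserPassword refers to a Secret named rosa-rds-password with a key named password (both hypothetical).

```shell
# Security group ID is a placeholder (assumption); for a group created
# through ACK, the ID should be available in the resource's status.
DB_SECURITY_GROUP_ID="${DB_SECURITY_GROUP_ID:-sg-REPLACE_ME}"

# Write a DBInstance manifest for a Multi-AZ MySQL instance.
cat > rds-mysql.yaml <<EOF
apiVersion: rds.services.k8s.aws/v1alpha1
kind: DBInstance
metadata:
  name: rosa-rds-mysql
  namespace: ack-workspace
spec:
  dbInstanceIdentifier: rosa-rds-mysql
  dbInstanceClass: db.t3.micro
  engine: mysql
  engineVersion: "8.0"
  allocatedStorage: 20
  multiAZ: true
  publiclyAccessible: false
  dbSubnetGroupName: rosa-rds-subnet-group
  vpcSecurityGroupIDs:
    - ${DB_SECURITY_GROUP_ID}
  masterUsername: admin
  masterUserPassword:
    namespace: ack-workspace
    name: rosa-rds-password
    key: password
EOF

# Apply it with:
#   oc apply -f rds-mysql.yaml
```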

You can check that the DB instance was created in the AWS Management Console or using the AWS CLI.
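With the AWS CLI, the check might look like the sketch below. It assumes the DB instance identifier is rosa-rds-mysql (a hypothetical name); the helper name wait_for_db_instance is also hypothetical.

```shell
# Hypothetical helper: block until the instance is available, then print
# its status and endpoint address on one tab-separated line.
wait_for_db_instance() {
  name="${1:-rosa-rds-mysql}"
  aws rds wait db-instance-available \
    --db-instance-identifier "$name" || return 1
  aws rds describe-db-instances \
    --db-instance-identifier "$name" \
    --query 'DBInstances[0].[DBInstanceStatus,Endpoint.Address]' \
    --output text
}

# Usage:
#   wait_for_db_instance rosa-rds-mysql
```

Creating a Multi-AZ instance can take several minutes. The endpoint address printed here is the one to alias later when validating the connection.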

Connect the ROSA cluster VPC and RDS VPC using VPC peering

A VPC peering connection is a networking connection between two VPCs that enables you to route traffic between them using private IPv4 addresses or IPv6 addresses. Instances in either VPC can communicate with each other as if they are within the same network. You can create a VPC peering connection between your own VPCs or with a VPC in another AWS account. The VPCs can be in different Regions (also known as an inter-Region VPC peering connection).

Create and accept a VPC peering connection between ROSA VPC and RDS VPC

Get the VpcId of the ROSA VPC.

Create the peering connection between ROSA VPC and RDS VPC.
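The two steps above can be sketched with the AWS CLI as follows. Looking up the ROSA VPC by its primary CIDR block is only one option and assumes that CIDR is unique in the Region; the helper names find_vpc_by_cidr and create_rosa_rds_peering are hypothetical.

```shell
# Hypothetical helper: print the ID of the VPC whose primary CIDR matches.
find_vpc_by_cidr() {
  aws ec2 describe-vpcs \
    --filters "Name=cidr,Values=$1" \
    --query 'Vpcs[0].VpcId' \
    --output text
}

# Hypothetical helper: request a peering connection from the ROSA VPC to the
# RDS VPC and print the peering connection ID.
create_rosa_rds_peering() {
  if [ -z "$1" ] || [ -z "$2" ]; then
    echo "usage: create_rosa_rds_peering <rosa-vpc-id> <rds-vpc-id>" >&2
    return 1
  fi
  aws ec2 create-vpc-peering-connection \
    --vpc-id "$1" \
    --peer-vpc-id "$2" \
    --query 'VpcPeeringConnection.VpcPeeringConnectionId' \
    --output text
}

# Usage (the CIDR is a placeholder for your ROSA machine CIDR):
#   ROSA_VPC_ID="$(find_vpc_by_cidr 10.0.0.0/16)"
#   RDS_VPC_ID="$(find_vpc_by_cidr 100.68.0.0/18)"
#   VPC_PEER_ID="$(create_rosa_rds_peering "$ROSA_VPC_ID" "$RDS_VPC_ID")"
```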

Accept the VPC peering connection.

❯ aws ec2 accept-vpc-peering-connection --vpc-peering-connection-id $VPC_PEER_ID

Update ROSA VPC route table

Get the route table associated with the three public subnets of the ROSA VPC and add a route to it so that all traffic to the RDS VPC CIDR block goes through the VPC peering connection.
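A sketch of this step with the AWS CLI is shown below. Both helper names are hypothetical. The lookup relies on an explicit subnet association, so it will not find anything for a subnet that implicitly uses the VPC's main route table.

```shell
# Hypothetical helper: print the route table explicitly associated with a subnet.
find_route_table_for_subnet() {
  aws ec2 describe-route-tables \
    --filters "Name=association.subnet-id,Values=$1" \
    --query 'RouteTables[0].RouteTableId' \
    --output text
}

# Hypothetical helper: route a destination CIDR through a peering connection.
# Arguments: route table ID, peering connection ID, destination CIDR
# (defaults to the RDS VPC CIDR used in this post).
add_peering_route() {
  if [ -z "$1" ] || [ -z "$2" ]; then
    echo "usage: add_peering_route <route-table-id> <peering-id> [cidr]" >&2
    return 1
  fi
  aws ec2 create-route \
    --route-table-id "$1" \
    --vpc-peering-connection-id "$2" \
    --destination-cidr-block "${3:-100.68.0.0/18}"
}

# Usage:
#   RTB_ID="$(find_route_table_for_subnet subnet-REPLACE_ME)"
#   add_peering_route "$RTB_ID" "$VPC_PEER_ID"
```

Traffic over a peering connection needs a route in each direction, so the route table used by the RDS subnets also needs a route back to the ROSA VPC CIDR block; the same helper can add it by passing that CIDR as the third argument.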

Update RDS instance security group

Update the security group to allow ingress traffic from the ROSA cluster to the RDS instance on port 3306.
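One way to add the rule is with the AWS CLI, as sketched below. The helper name allow_mysql_from_rosa is hypothetical, and the source CIDR is whatever range your ROSA worker nodes use. Because the security group was declared through ACK, you may prefer to declare the same rule in its manifest instead, so that the cluster remains the single source of truth.

```shell
# Hypothetical helper: allow inbound MySQL traffic (TCP 3306) from a CIDR.
# Arguments: security group ID, source CIDR block.
allow_mysql_from_rosa() {
  if [ -z "$1" ] || [ -z "$2" ]; then
    echo "usage: allow_mysql_from_rosa <security-group-id> <source-cidr>" >&2
    return 1
  fi
  aws ec2 authorize-security-group-ingress \
    --group-id "$1" \
    --protocol tcp \
    --port 3306 \
    --cidr "$2"
}

# Usage (both values are placeholders):
#   allow_mysql_from_rosa sg-REPLACE_ME 10.0.0.0/16
```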

Validate the connection to RDS

Now you are ready to validate the connection to the RDS MySQL database from a pod running on the ROSA cluster.

Create a Kubernetes service named mysql-service of type ExternalName, aliasing the RDS endpoint.
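Such a Service could be written as in the sketch below, assuming the RDS endpoint address is supplied through the RDS_ENDPOINT environment variable. The placeholder default and the choice of the ack-workspace namespace are assumptions.

```shell
# The endpoint address is a placeholder (assumption); use the address
# reported for your DB instance.
RDS_ENDPOINT="${RDS_ENDPOINT:-REPLACE_WITH_RDS_ENDPOINT_ADDRESS}"

# Write an ExternalName Service that resolves mysql-service to the RDS endpoint.
cat > mysql-service.yaml <<EOF
apiVersion: v1
kind: Service
metadata:
  name: mysql-service
  namespace: ack-workspace
spec:
  type: ExternalName
  externalName: ${RDS_ENDPOINT}
EOF

# Apply it with:
#   oc apply -f mysql-service.yaml
```

An ExternalName Service only creates a DNS alias inside the cluster, so pods can use the stable name mysql-service while the traffic still goes directly to the RDS endpoint.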

Connect to the RDS MySQL database from a pod using the mysql-57-rhel7 image.
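A temporary client pod could be started as in the sketch below. The full registry path of the image, the pod name mysql-client, the default user name admin, and the helper name mysql_client_pod are assumptions. The client is started through bash so that the image's environment setup is applied before mysql runs.

```shell
# Hypothetical helper: start a throwaway pod and open a mysql session against
# the mysql-service alias. The client prompts for the password.
mysql_client_pod() {
  user="${1:-admin}"
  oc run mysql-client \
    --namespace ack-workspace \
    --image=registry.access.redhat.com/rhscl/mysql-57-rhel7 \
    --restart=Never --rm -it \
    -- bash -c "mysql -h mysql-service -u '$user' -p"
}

# Usage:
#   mysql_client_pod admin
```

If the connection succeeds, you get a mysql prompt; the pod is removed when you exit the session.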

Conclusion

Customers looking to further modernize their application stacks by using AWS native services to complement their application workloads running in OpenShift can now use AWS service operators powered by AWS Controllers for Kubernetes. This provides a prescriptive self-service approach where application owners do not need to leave the familiar interface and context of Kubernetes and OpenShift.