AWS Database Blog

Convert the NUMBER data type from Oracle to PostgreSQL – Part 2

July 2023: This post was reviewed for accuracy.

An Oracle to PostgreSQL migration in the AWS Cloud can be a multistage process with different technologies and skills involved, starting from the assessment stage to the cutover stage. For more information about the migration process, see Database Migration—What Do You Need to Know Before You Start? and the following posts on best practices, including the migration process and infrastructure considerations, source database considerations, and target database considerations for the PostgreSQL environment.

This series is divided into two posts. In the first post, we cover two analysis methods to determine the ideal data type column in PostgreSQL (INT, BIGINT, DOUBLE PRECISION, NUMERIC) depending on how the NUMBER data type is defined in the Oracle database and what values are stored in the columns’ data. In this post, we discuss how to perform data type mapping for the Oracle NUMBER column using SQL queries and the AWS Schema Conversion Tool (AWS SCT) to change the data types in the target PostgreSQL instance.

One of the analysis methods from the previous post is metadata-based data type conversion, where Oracle catalog tables are queried to find the precision and scale for the current NUMBER data type columns. The following table summarizes how to determine the target database’s ideal data type for performance and storage gain.

| Precision(m) | Scale(n) | Oracle | PostgreSQL |

| <5 | 0 | NUMBER(m,n) | SMALLINT |

| 5 >= m <= 9 | 0 | NUMBER(m,n) | INT |

| 10 <= m <=18 | 0 | NUMBER(m,n) | BIGINT |

| m | n>0 | NUMBER(m,n) | NUMERIC(m,n) |

With the NUMBER data type without precision and scale in Oracle, we can refer to the following decision matrix based on underlying dataset stored.

| min_value | max_value | max_precision + max_scale | min_scale | max_scale | Oracle | PostgreSQL |

| NULL | NULL | NULL | NULL | NULL | NUMBER | DOUBLE PRECISION |

| -2147483648 | 2147483647 | NA | NULL | NULL | NUMBER | INT |

| -9223372036854775808 | 9223372036854775807 | NA | NULL | NULL | NUMBER | BIGINT |

| NA | NA | NA | >=38 | >=38 | NUMBER | NUMERIC |

| NA | NA | <=15 | NA | NA | NUMBER | DOUBLE PRECISION |

| NA | NA | >=15 | NA | NA | NUMBER | NUMERIC |

The first row of the preceding table is when the table is empty on Oracle. However, choosing DOUBLE PRECISION depends on the type of data you store. For example, if you use the column to store money, make sure you convert it to NUMERIC in PostgreSQL to avoid arithmetic inaccuracies.

After you determine what the target database is, the next step is to apply those changes to the target database or change the target database’s data type accordingly.

Prerequisites

Before we go through how to change data types, we create a sample table with all the NUMBER data types in Oracle.

We need an Oracle database to create a sample table and populate it with the necessary data:

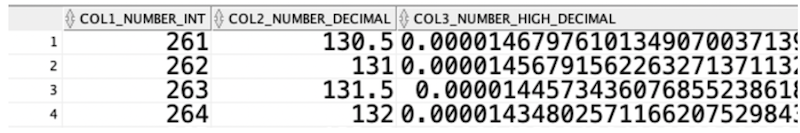

Generate some random sample data for the different characteristics of number data types using the following command:

The following screenshot shows our data.

You can deal with these conversions in different ways. For this post, we use AWS SCT to change the data types through two different methods:

- Convert only the primary or foreign key columns to BIGINT using AWS SCT

- Generate data type recommendations and convert using the AWS SCT data type mapping rules

Convert only primary or foreign key columns to BIGINT using AWS SCT

In this section, we discuss the default configuration option available in AWS SCT, which converts only primary or foreign key columns to BIGINT.

Starting from AWS SCT build 628, you can change the default data type mapping for columns with PK and FK referring to a modified PK. For example, you can convert a NUMBER data type with scale and precision to BIGINT, for performance improvement. Previously, when the NUMBER data type migrated from Oracle to PostgreSQL, AWS SCT defaulted the conversion to NUMERIC in PostgreSQL.

To set up this option, complete the following steps:

- On the AWS SCT console, on the Settings menu, choose Project settings.

The Current project settings box appears. - In the navigation pane, choose Conversion Settings.

- Select Convert NUMBER Primary\Foreign key to BIGINT ones.

- Choose OK.

By default, all NUMBER data types without precision or scale are converted to DOUBLE PRECISION, and those with some precision or scale are converted to NUMERIC. This conversion is not always correct. You will have to analyze the column data and come up with the right data type. The following screenshot shows the conversion for our sample table.

It implicitly converts NUMBER data types to BIGINT but only for the primary and foreign key column. The following screenshot shows that COL1_NUMBER_INT is converted to BIGINT because of the primary key on it.

See the following PostgreSQL code:

This approach doesn’t consider all NUMBER data type column variants in a database, only primary key or foreign key columns. If you want to convert all NUMBER data type columns to their respective data types in the target, follow the procedure in the next section.

Generate data type recommendations and convert using AWS SCT data type mapping rules

As we saw in the previous example, NUMBER can be declared with necessary precision and scale or as default (neither precision nor scale is defined). When migrating from Oracle to PostgreSQL, we can leverage metadata dictionaries as well as dynamically form a query to get actual data insights in terms of precision and scale.

We use the AWS SCT mapping rules to map data types for tables to the recommended type.

The idea is to generate data type recommendations using a SQL script and feed those recommendations into AWS SCT. These recommendations are generated based on metadata and actual data in Oracle.

The following sections walk you through the steps to map your ideal data type:

- Gather relevant information using a SQL script.

- Generate the mapping rules based on the recommended data type.

- Load the JSON mapping files into AWS SCT.

Gather relevant information

In the following SQL script, we set the values for run_actual_for_number_without_prec_scale , run_metadata_for_number_with_prec_scale, and run_only_for_indexed_column to govern how it fetches data from Oracle:

- run_actual_for_number_without_prec_scale = ‘Y’ – Forms a dynamic query on the target table and runs in parallel to get precision and scale information, but only for the NUMBER data type without any precision or scale. Based on the information it captures, it recommends the ideal PostgreSQL data type.

- run_metadata_for_number_with_prec_scale = ‘Y’ – Generates the ideal PostgreSQL data type based on Oracle metadata tables and doesn’t query only tables. However, it’s not ideal for cases when NUMBER is not defined with any precision or scale.

- run_only_for_indexed_column = ‘Y’ – Runs the script only for columns that are part of any indexes.

By default, the script is configured to run for all NUMBER data types. Running the script populates a table with all the relevant information gathered, as shown in the following screenshot.

Generate AWS SCT mapping rules

Based on the output from the SQL script we ran, we generate mapping rules that we can upload to AWS SCT. This script also generates the ALTER TABLE command, which you can use to change the target data type after you applied the AWS SCT generated scripts on the target. However, you need to be aware of dependent objects while running ALTER statements. For example, you can ALTER a foreign key column without running the ALTER for the primary key column. Also, make sure you change data type of the parameters and variables in the stored proceudres/functions where these columns are involved. Otherwise, it may result in bad query plans and cause performance issues.

We use a Python script to generate mapping rules, which is similar to the script in Automating table mappings creations in AWS DMS.

To generate transformation rules as per the new recommended data type, complete the following steps:

- Populate .csv files with the output generated from the previous step and save the file as sctdatamapping.csv.

- Copy the sctdatamapping.csv file to the

MappingFilefolder and get the complete path for the file. - Run the Python program as Python 3 compatible:

- Feed in the folder location captured as part of previous steps and run.

This process generates AWS SCT mapping files in the same location as the sctdatamapping.json file.

The following is a sample JSON file:

Load the JSON mapping files into AWS SCT

Upload the generated mapping rules into AWS SCT using the following steps:

- On the AWS SCT console, on the Settings menu, choose Mapping rules.

The Mapping Rules box appears. The top pane contains mapping (transformation) rules. - Choose Import script into SCT.

- Upload the JSON file you generated in the previous step.

You can see all the mapping rules after the file is uploaded. - Save the rules and convert the table by choosing the table (right-click) and choosing Convert.

The following screenshot shows our converted reference table.

The AWS SCT mapping rules to convert data types takes care of all the changes, so you don’t need to worry about the dependent objects as you do in ALTER statements.

Summary

This series of posts discussed how to convert the NUMBER data type from Oracle to PostgreSQL. In this post, we talked in detail about how AWS SCT helps convert the data types by using mapping rules. With mapping rules, AWS SCT converts the Oracle NUMBER data type to the respective PostgreSQL INT, BIGINT, DOUBLE PRECISION, or NUMERIC data types.

If you have any questions, comments, or other feedback, share your thoughts on the Amazon Aurora Discussion Forums.

About the authors

Baji Shaik is a Consultant with AWS ProServe, GCC India. His background spans a wide depth and breadth of expertise and experience in SQL/NoSQL database technologies. He is a Database Migration Expert and has developed many successful database solutions addressing challenging business requirements for moving databases from on-premises to Amazon RDS and Aurora PostgreSQL/MySQL. He is an eminent author, having written several books on PostgreSQL. A few of his recent works include “PostgreSQL Configuration“, “Beginning PostgreSQL on the Cloud”, and “PostgreSQL Development Essentials“. Furthermore, he has delivered several conference and workshop sessions.

Baji Shaik is a Consultant with AWS ProServe, GCC India. His background spans a wide depth and breadth of expertise and experience in SQL/NoSQL database technologies. He is a Database Migration Expert and has developed many successful database solutions addressing challenging business requirements for moving databases from on-premises to Amazon RDS and Aurora PostgreSQL/MySQL. He is an eminent author, having written several books on PostgreSQL. A few of his recent works include “PostgreSQL Configuration“, “Beginning PostgreSQL on the Cloud”, and “PostgreSQL Development Essentials“. Furthermore, he has delivered several conference and workshop sessions.

Sudip Acharya is a Sr. Consultant with the AWS ProServe team in India. He works with internal and external Amazon customers to provide guidance and technical assistance on database projects, helping them improve the value of their solutions when using AWS.

Sudip Acharya is a Sr. Consultant with the AWS ProServe team in India. He works with internal and external Amazon customers to provide guidance and technical assistance on database projects, helping them improve the value of their solutions when using AWS.

Deepak Mahto is a Consultant with the AWS Proserve Team in India. He has been working as Database Migration Lead, helping and enabling customers to migrate from commercial engines to Amazon RDS.His passion is automation and has designed and implemented multiple database or migration related tools.

Deepak Mahto is a Consultant with the AWS Proserve Team in India. He has been working as Database Migration Lead, helping and enabling customers to migrate from commercial engines to Amazon RDS.His passion is automation and has designed and implemented multiple database or migration related tools.