AWS Cloud Enterprise Strategy Blog

5 Tips for Keeping Pace with AWS’s Innovation

“It is not the strongest or the most intelligent who will survive but those who can best manage change.” -Charles Darwin

We’re constantly inventing and improving at AWS. And, while we currently offer customers more than 90 services (with many, like EC2, also providing a wide variety of sub-choices), the pace of our innovation roll-out continues to accelerate. Last year, for example, AWS released over 1,000 new features and changes, which means that developers can wake up to approximately 3 new changes on the AWS platform each and every day.

After talking with dozens of customers, I have no doubt that they truly value this enriched and turbo-charged approach to innovation as they embark and proceed on their cloud journeys. But some organizations are asking for a series of best practices and processes that will help them keep up with the rapid pace of change. Their goal, of course, is to embrace and take full advantage of what’s new and improved at AWS.

Many enterprise customers have traditionally taken a measured approach when it comes to adopting new services and features because, even though there’s so much to gain from the fresh innovation, they’ve built up value in existing data, assets, and intellectual property.

In addition, before launching a service or feature, enterprises prioritize vetting the security, operations, and maintenance aspects of the new solution. In many cases, enterprises (particularly regulated companies) are required, or choose, to adhere to 3rd-party risk assurance and compliance frameworks in order to assess the security posture of their technology platforms. In the end, the challenge for organizations boils down to this: How do they rapidly take advantage of new products and features without abandoning operations and security discipline?

Tip #1 Define your operating model and tenets

Some enterprise customers face a different challenge when word gets out that AWS has been adopted. In this case, the cloud team is often overwhelmed with requests for access to the AWS platform and associated services. It may seem like a trivial problem, but in enterprises with hundreds, or even thousands, of applications and teams, the scaling challenges make this a significant issue.

To help organizations deal with each of these issues, and get the most out of new AWS services and features, enterprise leaders need to ask themselves what their future-state operating model is going to look like. Or, put another way, what’s the new culture, or set of tenets, they want to establish?

For most customers, the spectrum of operating models and cultures extends from conservative, controlled, and centralized decision-making all the way to decentralized, DevOps-style decision-making. As I’ve said in a previous post, there’s no right or wrong approach here; and, in most cases, enterprises use multiple models because some workloads and applications require more control and others need freedom, flexibility, and agility.

If an organization wants to give developers more freedom and autonomy, for instance, it will allow them to decide which AWS services they need to build the best cloud-based experiences. If, on the other hand, an organization believes in a more centralized, controlled model, it will vet AWS services before they’re adopted. These are the extremes, and I’ve found that most organizations end up somewhere between these bookends.

Having said this, I’d like to share a few best practices and processes that will help with AWS service adoption, no matter where your organization sits on the spectrum.

Tip #2 Contain blast radius and trust but verify

One way to help introduce more services with greater control and less risk in the decentralized, or DevOps-oriented, model is by provisioning an AWS account for each team. This essentially gives the team free rein. And more freedom can be safely granted because the blast radius of any issue is contained within the individual account, assuming that workloads from other teams aren’t moved into the account. Connectivity to services in other accounts can be made available, however, through VPC peering. This approach, in which the team owns everything in the account, also makes billing and cost management relatively easy.

To help provide guard rails to these accounts, security controls can be enforced through AWS Organizations. Security policies can also be integrated at the time of account provisioning. And you can run policy checks periodically. I call this the trust-but-verify model. In addition to AWS’ different security control mechanisms, like AWS Organizations, some companies have looked to 3rd-party tools like Cloud Custodian (an open source product created by Capital One) or Turbot. We’ve also seen customers create custom policies and rules using AWS Lambda. I’m not necessarily endorsing these tools, but I’ve seen customers leverage them to deal with cross-account policy enforcement.
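As a rough illustration of the kind of guard rail described above, the sketch below builds a service control policy (SCP) document that denies any action outside an allowlist of services. The function name and the example allowlist are hypothetical, and attaching the policy to an account or organizational unit through AWS Organizations is deliberately left out; treat it as a starting point rather than a vetted policy.

```python
import json


def build_service_allowlist_scp(allowed_services):
    """Return an SCP-style policy document that denies every action
    outside the given service prefixes (for example "ec2" or "s3")."""
    if not allowed_services:
        raise ValueError("allow at least one service, or the policy denies everything")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyServicesOutsideAllowlist",
                "Effect": "Deny",
                # NotAction: the Deny applies to everything except these actions.
                "NotAction": [f"{svc}:*" for svc in sorted(set(allowed_services))],
                "Resource": "*",
            }
        ],
    }


# Hypothetical allowlist for a more tightly controlled set of accounts.
policy = build_service_allowlist_scp(["s3", "ec2", "dynamodb"])
print(json.dumps(policy, indent=2))
```

Generating the document from a list keeps the allowlist reviewable in one place, which suits the periodic "verify" half of trust-but-verify.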

Tip #3 Create an AWS service assessment framework

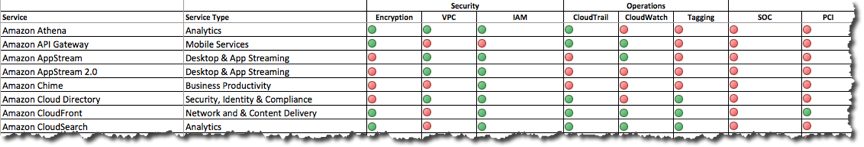

Let’s turn to a centralized model now. One process that breaks logjams and adds speed in this command-and-control culture involves creating a documented disposition of AWS services to help the cloud team with requests from the organization. I helped introduce this process at my old company, and the first step is capturing all the AWS services and relevant metadata for each service in a document (Figure 1). Then you can evaluate each service based on the security, operations, integration, and architectural standards and compatibility criteria that make sense for your company.

When we started using this process in my previous company, we were committed to a single proprietary and single open source database engine. And we didn’t want to proliferate other database engines without good reason because of the expertise we’d already built up. It was a bit tedious gathering all the data, yet it was well worth it. Once we pulled the data together, our cloud team had a scalable way to address the large volume of inbound questions and service requests. Requests for new services or exceptions were managed through our architecture governance process, which provided guidance rather than black-and-white rules. For example, before DynamoDB offered out-of-the-box encryption, using it went against our standard of having encryption on by default for cloud storage services. But since DynamoDB provided so much value in terms of speed, agility, and scale, we created an exception and allowed its usage for less critical classes of data.
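The documented disposition described above can live in a spreadsheet, but a small data structure makes the idea concrete. This is a minimal sketch: the field names, the disposition labels, and the S3 entry are illustrative assumptions, while the DynamoDB exception mirrors the example in the text.

```python
from dataclasses import dataclass
from enum import Enum


class Disposition(Enum):
    APPROVED = "approved"
    EXCEPTION = "approved by exception"
    NOT_REVIEWED = "not yet reviewed"


@dataclass(frozen=True)
class ServiceEntry:
    name: str
    category: str
    disposition: Disposition
    notes: str = ""


# Hypothetical catalog contents; a real one would cover every AWS service.
CATALOG = {
    entry.name.lower(): entry
    for entry in [
        ServiceEntry("S3", "storage", Disposition.APPROVED),
        ServiceEntry("DynamoDB", "database", Disposition.EXCEPTION,
                     "Less critical classes of data only."),
    ]
}


def lookup(service_name):
    """Return the recorded disposition, or a placeholder for unreviewed services."""
    key = service_name.lower()
    if key in CATALOG:
        return CATALOG[key]
    return ServiceEntry(service_name, "unknown", Disposition.NOT_REVIEWED,
                        "Route to architecture governance.")


print(lookup("dynamodb").disposition.value)
print(lookup("Kinesis").notes)
```

Returning a placeholder for unknown services, instead of raising an error, reflects the guidance-over-rules approach: an unreviewed service is a prompt for a governance conversation, not an automatic refusal.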

There are other ways to open things up in an organization with a centralized model.

Tip #4 Define an AWS service adoption lifecycle

Setting up a sandbox environment where teams can play with any AWS service, for example, will definitely help encourage exploration and experimentation. The sandbox environment shouldn’t be connected to any production environment, and the services can be automatically decommissioned after just a few weeks to help ensure there are no run-away costs. But if a team decides that it wants to pilot a new AWS service after exploration and experimentation, it can then work with the cloud COE to share early learnings and get started. And once a team starts a pilot, it owns the responsibility for operating and securing the new service through the production phase. (Figure 2, below.)
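The lifecycle above can be sketched as a small state machine plus an expiry check for sandbox resources. The stage names follow the text; the 21-day limit is an assumption standing in for "just a few weeks", and the function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from enum import Enum


class Stage(Enum):
    SANDBOX = 1
    PILOT = 2
    PRODUCTION = 3


# A service moves forward one stage at a time.
NEXT_STAGE = {Stage.SANDBOX: Stage.PILOT, Stage.PILOT: Stage.PRODUCTION}

# Assumption: "a few weeks" is taken to mean 21 days.
SANDBOX_MAX_AGE = timedelta(days=21)


def advance(stage):
    """Return the stage that follows the given one."""
    if stage not in NEXT_STAGE:
        raise ValueError(f"{stage.name} is the final stage")
    return NEXT_STAGE[stage]


def sandbox_expired(created_at, now=None):
    """Report whether a sandbox resource is older than the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - created_at > SANDBOX_MAX_AGE


created = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(advance(Stage.SANDBOX).name)
print(sandbox_expired(created, now=datetime(2024, 1, 30, tzinfo=timezone.utc)))
```

A scheduled job could call `sandbox_expired` for each sandbox resource and decommission the ones that return `True`; how resources are listed and deleted depends on the tooling in use and is not shown here.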

This approach may seem extremely bureaucratic to DevOps-minded enterprises, but in a conservative, centralized company that’s not quite ready to jump into a host of new AWS services it can help strike a reasonable balance.

We tried to strike that balance at my previous company, which had an operational culture where application teams often depended on centrally managed services to help them with incidents in production (e.g. performance issues). But in order to provide that type of service, we had a limited portfolio of technologies the shared service teams could support. We created a distinction between “App Managed” versus “Enterprise Managed” services, and only architectures and services that became mainstream were graduated to the “Enterprise Managed” status that allowed teams to ask for shared service help.

Tip #5 Get your Cloud Center of Excellence Agile

If you’re trying to gradually move from a centralized and controlled operating model, you’ll undoubtedly have to face several significant challenges in the process.

As I noted above, the cloud team is often inundated with requests from application teams that are eager to take advantage of AWS’s cornucopia of services and the speed with which they can be provisioned. But turning on services in an enterprise environment often requires that the security and operational controls and processes are in place, and this can take time. So, if an organization isn’t managing this well, the cloud can quickly feel more like an on-premises data center with long wait times to provision and configure infrastructure.

Another challenge is that the cloud team is often heavily staffed with infrastructure-skilled resources. This makes a lot of sense, but approximately two-thirds of AWS services are “up the stack” and sit above what legacy infrastructure teams are accustomed to managing (compute, storage, networking, and so on). As a result, the cloud team isn’t yet trained on these services, and it’s not in a position to help turn them on.

To help tackle these challenges, the cloud team and architects (both infrastructure and application) need to take several concrete steps.

First, the cloud team must change the way it manages its work. And, clearly, it’s got a lot on its plate — everything from running the migration factory to managing and engineering the cloud platform to dealing with process and cost optimization. Many cloud teams manage their work using ticketing and waterfall-based methodologies, but these approaches make it really difficult to prioritize tasks and often cause frustration within an organization. In addition, waterfall-based plans quickly become irrelevant in a rapidly changing environment.

That’s why we hired a professional Agile coach when my prior organization faced similar challenges. Initially, there were a lot of skeptics, because most team members didn’t have much experience using Agile; but after just a few sprints, the flow of work, as well as team satisfaction, changed dramatically. Indeed, everyone was now on the same page because of the backlog prioritization, daily stand-ups, and sprint retrospectives. And a chunk of work that had been agreed upon by all parties, including the requesting team, was regularly delivered every two weeks.

These mechanisms are not exclusive to any one model, and they can be mixed and matched if an organization is supporting multiple models. For example, you can give one set of accounts and teams a lot of freedom in a “trust-but-verify” model and completely lock down another set of accounts because of the workloads that exist there.

I’d love to hear what you’ve learned, or if there’s anything else you’d like to add here. But, regardless of your organization’s operating model or culture, there are many ways to take full advantage of AWS’ fast-paced re-invention efforts.

Never stop innovating,

Joe

chung@amazon.com

@chunjx

http://aws.amazon.com/enterprise/