AWS for Industries

Automating the Installation Qualification (IQ) Step to Expedite GxP Compliance

Good practice (GxP) guidelines were established by the Food and Drug Administration (FDA) to ensure that organizations working in life sciences develop, manufacture, and distribute products that are safe, meet quality guidelines, and are fit for use. GxP compliance has been part of the life sciences industry for many years and heavily influences how healthcare and life sciences (HCLS) customers deliver computer systems as part of their quality management system. One key requirement is to qualify and validate computer systems. Customers usually know how to do this on premises but may be unsure how to do it in the cloud. Creating and executing a validation plan has traditionally been manual and labor-intensive. In this post, we propose an approach that automates one of the first components of a validation plan: the Installation Qualification (IQ).

According to GAMP 5: A Risk-Based Approach to Compliant GxP Computerized Systems, Installation Qualification (IQ) is the documented verification that a system is installed according to a pre-approved specification. Verification is achieved through testing that shows that the software and hardware were installed and configured correctly. We will use this definition to form the key requirements for this automation.

First, let’s consider the “pre-approved specification.” It is an AWS best practice to define the required infrastructure through the use of an Infrastructure as Code (IaC) template. This template describes the required resources and their configuration. The configuration may change slightly between environments (QA/UAT/PRE-PROD/PROD) and regions, but the core IaC template does not. This flexibility is achieved through template parameters. This template is then used by AWS CloudFormation to provision the resources.

These templates are controlled in much the same way as source code. Storing them in a source code repository lets us version each template and keep a complete history of its evolution over time.

Another key part of that phrase is “pre-approved.” There are many ways that a customer can handle the approval, for example, a Jira workflow or a pull request approval in their source code repository. Whatever the method, the template will be vetted and approved by the customer's Quality IT or Compliance team. The net result is that a specific version of the template in the source code repository is recorded as approved.

So, we now have a pre-approved specification describing the resources we want deployed. Once approved, the automated pipeline is triggered to deploy the resources. We then need to look at the next requirement, to demonstrate the installation was correct. This can be done by comparing the resources actually deployed by AWS CloudFormation into the account against the pre-approved template we have under source control.

Approaches

Our proposed architecture can be deployed in a couple of ways:

- Automate individual account

- Centralized setup

Individual account

In this approach, every AWS CloudFormation deployment is integrated with the continuous integration and continuous delivery (CI/CD) pipeline, which produces the IQ output. This approach works well where a CI/CD pipeline is already available. Refer here for details on how to set up CI/CD pipelines. This approach also offers the flexibility to customize per account.

Centralized setup

In this approach, the shared services account will host the core of the software. Every account that needs to perform an automated IQ simply needs to install a CloudWatch rule that sends events to the centralized software hosted in the shared services account, and a role enabling the automation to reach into every account to query the deployed resources.

This approach has the added benefit that management and upgrades are centralized: any change needs to be deployed only once, into the shared services account. On the downside, if additional permissions are needed to query newer resource types, they have to be deployed into every account, although this can also be automated.

Architecture overview

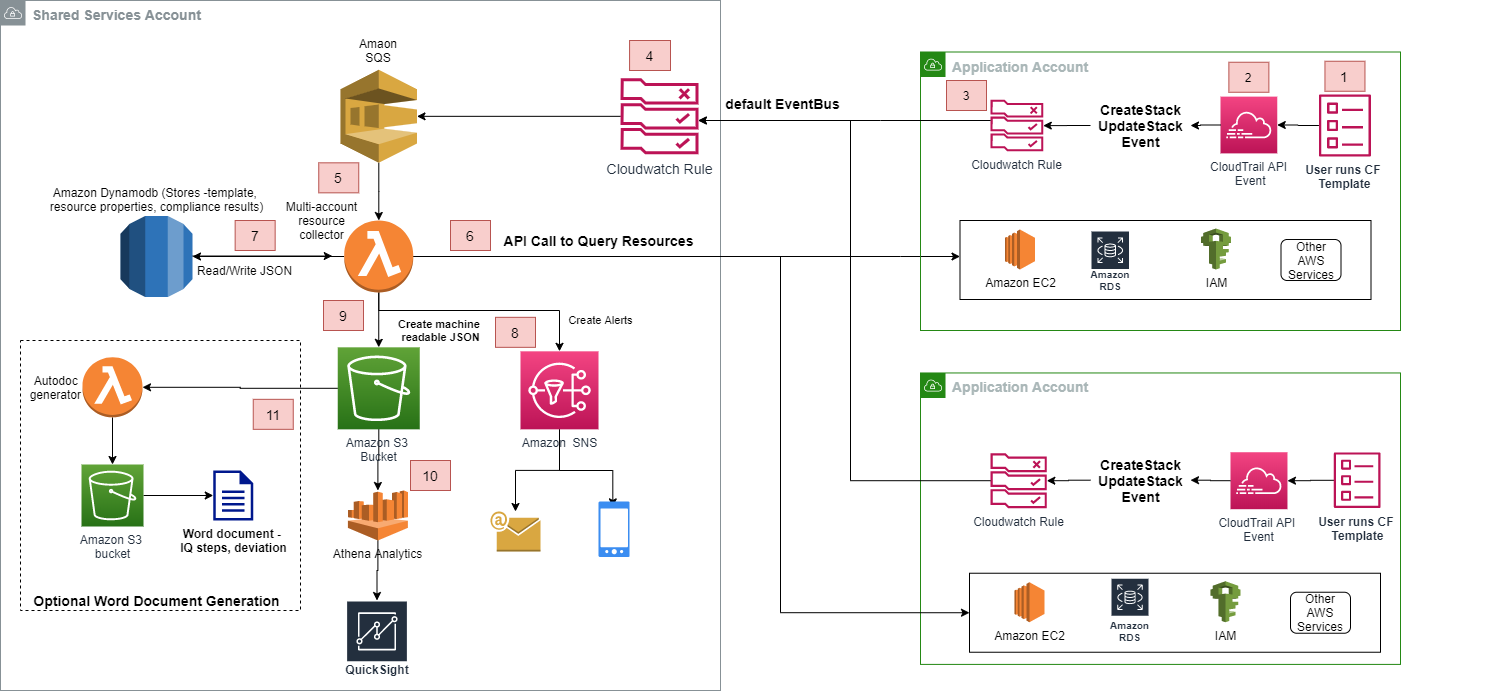

Figure 1: Architecture Overview

Application Account flow:

- User runs CloudFormation template

- This generates CloudTrail API Event

- CloudWatch rule is triggered

Shared Services Account flow:

- CloudWatch rule receives event from various application accounts and forwards it to SQS

- The SQS queue triggers the Lambda function

- The Lambda function queries the resources created by CloudFormation in the application account and compares them against the baseline

- The results are stored in DynamoDB as JSON

- The IQ step is assessed as passed or failed. If any compliance issues are found, the IQ step fails and a notification is sent via SNS

- A JSON file of resources, properties, alerts, and compliance status is created and stored in an S3 bucket

- Athena and QuickSight use the JSON from S3 to create reports and dashboards

- The Autodoc generator Lambda optionally creates a Word document and stores it in S3

Detailed Explanation

In each application account, CloudWatch Events rules are configured to trigger on the CloudTrail API calls CreateStack and UpdateStack. The target of each rule is the event bus of the shared services account.
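To make the rule concrete, the event pattern it would match can be sketched as a Python dictionary. This is an illustration under assumptions rather than the configuration used by the authors: the pattern follows the usual shape for CloudTrail-recorded API calls, and the `matches` helper is a hypothetical, simplified stand-in for the service's matching logic that handles exact values only.

```python
import json

# Event pattern for a rule that matches CloudFormation CreateStack and
# UpdateStack calls recorded by CloudTrail.
STACK_EVENT_PATTERN = {
    "source": ["aws.cloudformation"],
    "detail-type": ["AWS API Call via CloudTrail"],
    "detail": {
        "eventSource": ["cloudformation.amazonaws.com"],
        "eventName": ["CreateStack", "UpdateStack"],
    },
}


def matches(pattern: dict, event: dict) -> bool:
    """Hypothetical, simplified matcher: every key in the pattern must exist
    in the event, and the event value must be one of the listed values."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        if isinstance(expected, dict):
            # Nested pattern: recurse into the nested event object.
            if not isinstance(event[key], dict) or not matches(expected, event[key]):
                return False
        elif event[key] not in expected:
            return False
    return True


# Illustrative event with a made-up account ID.
sample_event = {
    "source": "aws.cloudformation",
    "detail-type": "AWS API Call via CloudTrail",
    "account": "111111111111",
    "detail": {
        "eventSource": "cloudformation.amazonaws.com",
        "eventName": "CreateStack",
    },
}

print(json.dumps(STACK_EVENT_PATTERN, indent=2))
print(matches(STACK_EVENT_PATTERN, sample_event))
```

Keeping the pattern narrow (two event names from one event source) limits what crosses the account boundary to the events the IQ automation actually needs.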

AWS CloudFormation Snippet for Creating Events Rule in Application account:

Every AWS account has one default event bus, which can receive events generated by AWS services, custom applications, and other services and accounts.

The event bus policy of the shared services account enables it to receive the CloudWatch events originating from the event rules set up in the application accounts.
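As a rough sketch of what such a policy expresses, the following Python builds one resource-policy statement per application account. The account IDs, Region, and the `event_bus_statement` helper are hypothetical placeholders chosen for illustration.

```python
import json


def event_bus_statement(app_account_id: str, bus_arn: str) -> dict:
    """Build one resource-policy statement that lets a single application
    account put events onto the shared services event bus."""
    return {
        "Sid": f"AllowPutEventsFrom{app_account_id}",
        "Effect": "Allow",
        "Principal": {"AWS": f"arn:aws:iam::{app_account_id}:root"},
        "Action": "events:PutEvents",
        "Resource": bus_arn,
    }


# Hypothetical identifiers, used only for this example.
SHARED_BUS_ARN = "arn:aws:events:us-east-1:999999999999:event-bus/default"
APP_ACCOUNTS = ["111111111111", "222222222222"]

policy = {
    "Version": "2012-10-17",
    "Statement": [event_bus_statement(a, SHARED_BUS_ARN) for a in APP_ACCOUNTS],
}

print(json.dumps(policy, indent=2))
```

Generating the statements from a list of accounts makes it straightforward to regenerate the policy whenever an application account is added.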

AWS CloudFormation snippet shared services account EventBus policy:

The events could trigger a Lambda function directly, but using Amazon SQS as the target provides a reliable, highly scalable hosted queue that stores the events until they are consumed.

AWS CloudFormation snippet shared services account CloudWatch event rule:

Messages on the Amazon SQS queue trigger the multi-account resource collector AWS Lambda function, which consumes the events. To keep the solution cost-effective, the resource collector Lambda filters the events by stack status: it discards an event if the stack is in a pending or incomplete status and processes only events for stacks in a complete status. The resource collector Lambda also filters out CreateStack and UpdateStack events that originate from its own shared services account. The CreateStack and UpdateStack events contain the stack ID, stack name, and other details that enable the Lambda to make API calls to the application accounts to query the CloudFormation stack and its resources.
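The filtering described above can be sketched as follows. This is a minimal illustration, not the authors' code: it assumes each SQS record body carries the forwarded event as JSON, that the stack name is available under `detail.requestParameters.stackName`, and that the caller supplies a `get_status` function (in practice this would likely wrap a CloudFormation DescribeStacks call). All function names are hypothetical.

```python
import json

COMPLETE_STATUSES = {"CREATE_COMPLETE", "UPDATE_COMPLETE"}
TRACKED_EVENTS = {"CreateStack", "UpdateStack"}


def is_candidate(event: dict, own_account_id: str) -> bool:
    """Cheap checks first: skip our own account and untracked API calls."""
    if event.get("account") == own_account_id:
        return False
    return event.get("detail", {}).get("eventName") in TRACKED_EVENTS


def select_events(sqs_event: dict, get_status, own_account_id: str) -> list:
    """Return the forwarded events whose stacks are in a complete status."""
    selected = []
    for record in sqs_event.get("Records", []):
        event = json.loads(record["body"])
        if not is_candidate(event, own_account_id):
            continue
        # Only look up the stack status for events that passed the cheap checks.
        if get_status(event) in COMPLETE_STATUSES:
            selected.append(event)
    return selected


def _body(account: str, name: str, stack: str) -> str:
    return json.dumps({
        "account": account,
        "detail": {"eventName": name, "requestParameters": {"stackName": stack}},
    })


# Illustrative data: made-up account IDs and stack names.
sqs_event = {"Records": [
    {"body": _body("111111111111", "CreateStack", "app-a")},
    {"body": _body("222222222222", "UpdateStack", "app-b")},
    {"body": _body("999999999999", "CreateStack", "shared")},
]}
statuses = {"app-a": "CREATE_COMPLETE", "app-b": "UPDATE_IN_PROGRESS",
            "shared": "CREATE_COMPLETE"}


def lookup_status(event: dict) -> str:
    return statuses[event["detail"]["requestParameters"]["stackName"]]


kept = select_events(sqs_event, lookup_status, own_account_id="999999999999")
print([e["detail"]["requestParameters"]["stackName"] for e in kept])
```

Running the account and event-name checks before the status lookup avoids an API call for events that would be discarded anyway.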

The correct permissions must be set in each application account so that Lambda functions in the shared services account can assume a role to get details about the resources created in that account. This forms the basis for a centralized setup that collects information about resources created in distributed accounts in different Regions.

The multi-account resource collector Lambda is assigned a role that enables it to assume the necessary role in the application accounts through AWS STS and get details of the resources created by AWS CloudFormation. It also creates and maintains additional records, such as audit logs and validation data.
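A minimal sketch of the cross-account step is shown below. The role name and session name are hypothetical, and the STS client is injected so the example runs without AWS access; inside a Lambda function the client would typically be `boto3.client("sts")`.

```python
def assume_collector_role(sts_client, account_id: str, role_name: str) -> dict:
    """Assume a role in an application account and return the temporary
    credentials as keyword arguments suitable for creating a boto3 client."""
    role_arn = f"arn:aws:iam::{account_id}:role/{role_name}"
    response = sts_client.assume_role(
        RoleArn=role_arn,
        RoleSessionName=f"iq-collector-{account_id}",
    )
    creds = response["Credentials"]
    return {
        "aws_access_key_id": creds["AccessKeyId"],
        "aws_secret_access_key": creds["SecretAccessKey"],
        "aws_session_token": creds["SessionToken"],
    }


class FakeSts:
    """Stand-in for an STS client, used only to make this example runnable."""

    def assume_role(self, RoleArn, RoleSessionName):
        self.last_role_arn = RoleArn
        return {"Credentials": {
            "AccessKeyId": "EXAMPLEKEYID",
            "SecretAccessKey": "example-secret",
            "SessionToken": "example-token",
        }}


fake = FakeSts()
# "IqReadOnlyCollector" is a hypothetical role name.
kwargs = assume_collector_role(fake, "111111111111", "IqReadOnlyCollector")
print(fake.last_role_arn)
print(sorted(kwargs))

# With real credentials, the collector could then create a client such as:
#   boto3.client("cloudformation", region_name="us-east-1", **kwargs)
```

Because the temporary credentials are scoped to the assumed role, the collector can do in the application account only what that role permits.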

There are a few ways the multi-account resource collector Lambda could obtain the approved infrastructure definition. If the approved version of the CloudFormation template is kept in a code repository such as AWS CodeCommit, the Lambda could pull the template and its runtime parameters from there. Another option is to store the template in an S3 bucket to which the Lambda has access. The Lambda then queries the resources and stack definition now deployed in the account and compares them with the approved template to determine whether the IQ step should pass or fail.
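The comparison in this template-based option could look roughly like the sketch below. It assumes the approved template is available as parsed JSON with a `Resources` section, and that the deployed resources are summaries carrying `LogicalResourceId` and `ResourceType` fields, as returned by the CloudFormation ListStackResources API. The `compare_resources` function and the sample data are hypothetical.

```python
def compare_resources(template: dict, deployed: list) -> dict:
    """Compare logical IDs and resource types in the approved template
    against the resources actually deployed in the stack."""
    expected = {lid: res["Type"] for lid, res in template.get("Resources", {}).items()}
    actual = {r["LogicalResourceId"]: r["ResourceType"] for r in deployed}

    missing = sorted(set(expected) - set(actual))
    unexpected = sorted(set(actual) - set(expected))
    type_mismatch = sorted(
        lid for lid in set(expected) & set(actual) if expected[lid] != actual[lid]
    )
    return {
        "missing": missing,
        "unexpected": unexpected,
        "type_mismatch": type_mismatch,
        "passed": not (missing or unexpected or type_mismatch),
    }


# Illustrative approved template and deployed resource summaries.
template = {"Resources": {
    "AppBucket": {"Type": "AWS::S3::Bucket"},
    "AppQueue": {"Type": "AWS::SQS::Queue"},
}}
deployed = [
    {"LogicalResourceId": "AppBucket", "ResourceType": "AWS::S3::Bucket"},
    {"LogicalResourceId": "AppTopic", "ResourceType": "AWS::SNS::Topic"},
]

print(compare_resources(template, deployed))
```

This checks only that the expected resources exist with the expected types; comparing individual property values would need a further step.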

Alternatively, the CloudFormation parameters, infrastructure, and resource properties can be queried via the CloudFormation API and stored in DynamoDB, as depicted in the architecture diagram. In this case, DynamoDB holds the golden, approved copy of the deployment. The advantage is that additional data, including custom and granular properties obtained through API calls on the stack and its resources, can be persisted. However, for this option the template first has to be run in some environment in a special ‘unqualified’ mode so that an approved or ‘golden’ baseline can be recorded. Later, the same template is run in ‘qualified’ mode and compared against the baseline. This architecture uses the DynamoDB option.
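The two modes can be sketched as follows. This is an illustration only: a plain dictionary stands in for the DynamoDB table, and the `run_iq` function, status labels, and sample snapshot are hypothetical.

```python
import copy


def run_iq(mode: str, stack_name: str, snapshot: dict, baseline_store: dict) -> dict:
    """Record a golden baseline in 'unqualified' mode, or compare the current
    snapshot against the stored baseline in 'qualified' mode."""
    if mode == "unqualified":
        # Store a copy so later changes to the snapshot cannot alter the baseline.
        baseline_store[stack_name] = copy.deepcopy(snapshot)
        return {"stack": stack_name, "mode": mode, "status": "BASELINE_RECORDED"}
    if mode == "qualified":
        baseline = baseline_store.get(stack_name)
        if baseline is None:
            return {"stack": stack_name, "mode": mode, "status": "FAILED",
                    "reason": "no approved baseline recorded"}
        status = "PASSED" if snapshot == baseline else "FAILED"
        return {"stack": stack_name, "mode": mode, "status": status}
    raise ValueError(f"unknown mode: {mode}")


# A dict stands in for the DynamoDB table in this example.
store = {}
golden = {"AppBucket": {"Type": "AWS::S3::Bucket", "Versioning": "Enabled"}}

print(run_iq("unqualified", "app-a", golden, store))
print(run_iq("qualified", "app-a", golden, store))

drifted = {"AppBucket": {"Type": "AWS::S3::Bucket", "Versioning": "Suspended"}}
print(run_iq("qualified", "app-a", drifted, store))
```

The whole-snapshot equality check here is deliberately strict; it does not account for properties that are expected to differ between deployments.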

The properties of the deployed infrastructure can be classified as dynamic or static. For example, the public IP address of an EC2 instance is a dynamic property because it can change between deployments, whereas the AMI ID for a Region is a static property. For dynamic properties, the check confirms only that the property exists; for static properties, the actual values are compared. For audit purposes, the results are also stored in DynamoDB.
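A minimal sketch of this rule is shown below. The property names, the set of dynamic properties, and the status labels are assumptions chosen for illustration.

```python
# Properties whose values may legitimately differ between deployments
# (hypothetical classification for this example).
DYNAMIC_PROPERTIES = {"PublicIpAddress"}


def compare_properties(baseline: dict, deployed: dict,
                       dynamic=DYNAMIC_PROPERTIES) -> dict:
    """Check dynamic properties for existence only and static properties
    for equal values. Returns a status per baseline property."""
    results = {}
    for name, expected in baseline.items():
        if name not in deployed:
            results[name] = "MISSING"
        elif name in dynamic:
            results[name] = "PRESENT"
        elif deployed[name] == expected:
            results[name] = "MATCH"
        else:
            results[name] = "MISMATCH"
    return results


# Illustrative values only.
baseline = {"ImageId": "ami-0abc", "InstanceType": "t3.micro",
            "PublicIpAddress": "203.0.113.10"}
deployed = {"ImageId": "ami-0abc", "InstanceType": "t3.small",
            "PublicIpAddress": "198.51.100.7"}

print(compare_properties(baseline, deployed))
```

In this example the differing IP address is accepted because the property is classified as dynamic, while the changed instance type is reported as a mismatch.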

The resulting comparison data is then stored in a controlled Amazon S3 bucket in JSON format. It includes the properties of each resource and the status of each comparison. This data can be used for further analysis and integrated with other services for reporting, monitoring, and analytics. For example, we could use Amazon Athena to query how far the deployed resources deviate from the approved baseline.
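One possible shape for this output is sketched below. The record fields and status labels are hypothetical, and writing one JSON object per line is an assumption made because line-delimited JSON is a layout that query engines such as Athena commonly expect.

```python
import json

# Hypothetical status labels that count as compliant.
PASSING = {"MATCH", "PRESENT"}


def iq_passed(results: dict) -> bool:
    """The IQ step passes only if every property of every resource is compliant."""
    return all(status in PASSING
               for props in results.values()
               for status in props.values())


def to_report_lines(account_id: str, stack_name: str, results: dict) -> str:
    """Flatten the per-resource results into one JSON object per line."""
    lines = []
    for resource_id, props in results.items():
        for prop, status in props.items():
            lines.append(json.dumps({
                "account": account_id,
                "stack": stack_name,
                "resource": resource_id,
                "property": prop,
                "status": status,
            }, sort_keys=True))
    return "\n".join(lines)


# Illustrative comparison results for one stack.
results = {
    "AppServer": {"ImageId": "MATCH", "InstanceType": "MISMATCH"},
    "AppBucket": {"VersioningStatus": "MATCH"},
}

print(to_report_lines("111111111111", "app-a", results))
print("IQ passed:", iq_passed(results))
```

A flat record per property keeps later queries simple, for example selecting all rows whose status is not compliant.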

(Optional) IQ Documentation Generator

A word on the use of documents. Documents have long been the default format for capturing evidence during Computer Systems Validation. However, they are just another format for the same records captured in the JSON files. These JSON files can be controlled just as well as, if not better than, documents in a document management system. If JSON is not considered sufficiently human-readable, a report that converts the JSON into another format is a better option. This conversion can be done when needed rather than as a default step, removing the document management burden entirely.

However, for customers that still have SOPs mandating some form of document, it is possible to trigger the creation of an IQ Document based on a template.

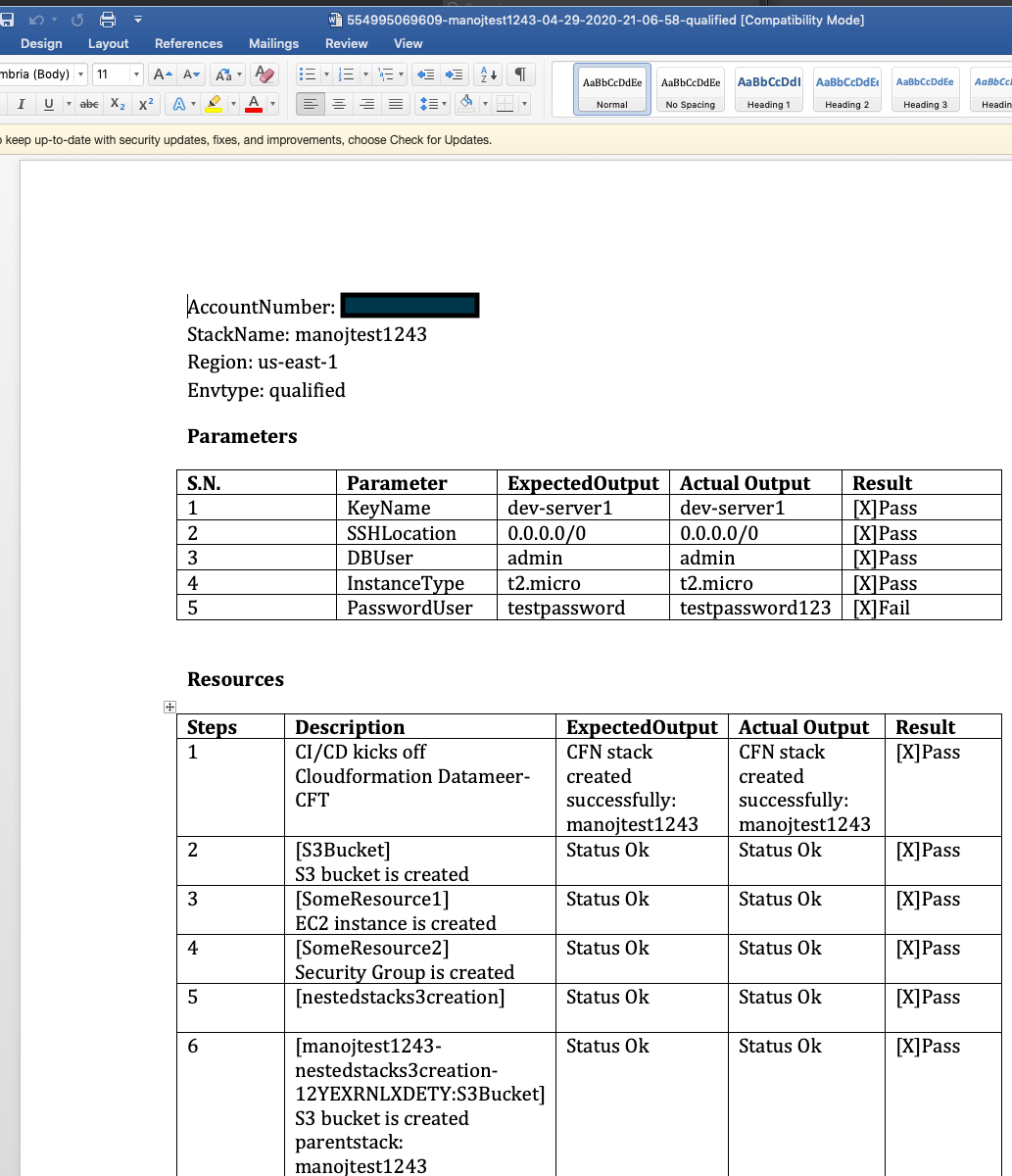

The creation of a JSON file in the Amazon S3 source bucket can be used to trigger another AWS Lambda function (the Autodoc generator) to generate a document. The Autodoc generator Lambda reads the JSON file and formats it into a more readable and presentable Word document. This Word document can be used as compliance evidence and documentation.
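The trigger handling could be sketched as follows. The example covers only the step of working out which JSON object arrived and where the generated document should go; the document rendering itself is omitted. The bucket names, key layout, and `document_jobs` function are hypothetical. Object keys in S3 event notifications are URL-encoded, so the key is decoded before use.

```python
from urllib.parse import unquote_plus


def document_jobs(s3_event: dict, output_bucket: str) -> list:
    """Turn an S3 event notification into a list of document-generation jobs,
    one per newly created JSON object."""
    jobs = []
    for record in s3_event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Keys arrive URL-encoded (for example, spaces as '+').
        key = unquote_plus(record["s3"]["object"]["key"])
        if not key.endswith(".json"):
            continue  # Ignore objects that are not IQ result files.
        jobs.append({
            "source_bucket": bucket,
            "source_key": key,
            "output_bucket": output_bucket,
            "output_key": key[: -len(".json")] + ".docx",
        })
    return jobs


# Illustrative event with made-up bucket and key names.
s3_event = {"Records": [
    {"s3": {"bucket": {"name": "iq-results"},
            "object": {"key": "iq/app+stack.json"}}},
    {"s3": {"bucket": {"name": "iq-results"},
            "object": {"key": "iq/readme.txt"}}},
]}

print(document_jobs(s3_event, output_bucket="iq-documents"))
```

Writing the output to a separate bucket avoids the generated document re-triggering the same function.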

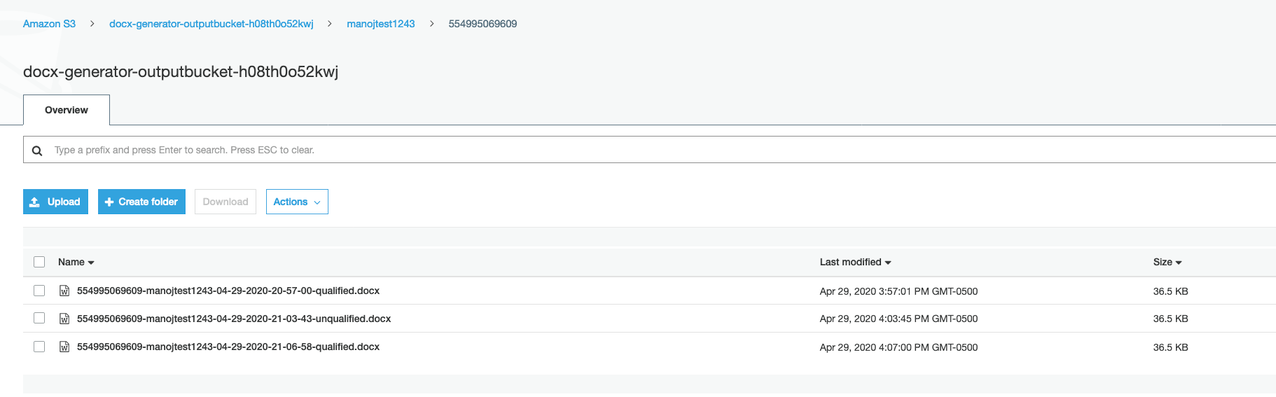

Figure 2: S3 bucket showing Word documents

The entire architecture is serverless and event-based. Any standard library, such as python-docx, can be used to generate the document, and the generated .docx file is stored in the Amazon S3 output bucket. The customer can move the documents to a document management system if an SOP mandates it.

Figure 3: Auto-generated Word document

Security considerations

Because this is a multi-account setup, take special care to grant only the necessary AWS IAM policies to the application and shared services accounts. First, the application accounts send events to the shared services account, so the shared services account must grant permission to each application account from which it will receive events. Each new application account must be added to the permissions of the default event bus in the shared services account. Consider account bootstrapping to automate this.

The shared services account reaches into the application accounts to query resources. It needs permission to query resources, but grant it only for the services that you are interested in or that are approved for use. Limit these permissions to list and get (read-only) actions so that the shared services account can never modify a resource in an application account. Also restrict these permissions to the Lambda function in the shared services account so that no other resource can query the application accounts.
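These two restrictions can be sketched as policy documents built in Python. The action list, role ARN, and helper names are assumptions for illustration. Because IAM trust policies name IAM principals, this sketch scopes access to the execution role of the collector Lambda, which is one way to realize the restriction described above.

```python
import json

READ_ONLY_PREFIXES = ("Describe", "List", "Get")


def read_only_policy(actions: list) -> dict:
    """Build a permissions policy, rejecting any action that is not read-only."""
    for action in actions:
        _service, _, name = action.partition(":")
        if not name.startswith(READ_ONLY_PREFIXES):
            raise ValueError(f"not a read-only action: {action}")
    return {
        "Version": "2012-10-17",
        "Statement": [{"Effect": "Allow", "Action": sorted(actions), "Resource": "*"}],
    }


def trust_policy(collector_role_arn: str) -> dict:
    """Allow only the collector's execution role to assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"AWS": collector_role_arn},
            "Action": "sts:AssumeRole",
        }],
    }


# Hypothetical selection of approved, read-only actions.
ACTIONS = [
    "cloudformation:DescribeStacks",
    "cloudformation:ListStackResources",
    "cloudformation:GetTemplate",
    "ec2:DescribeInstances",
]
# Hypothetical execution role of the collector Lambda.
COLLECTOR_ROLE_ARN = "arn:aws:iam::999999999999:role/IqCollectorLambdaRole"

print(json.dumps(read_only_policy(ACTIONS), indent=2))
print(json.dumps(trust_policy(COLLECTOR_ROLE_ARN), indent=2))
```

Validating the action names when the policy is generated catches an accidental write permission before it is ever deployed to an application account.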

Follow the principle of least privilege for the S3 buckets that store the JSON and the optional Word document. Object-level permissions can be granted to the owner of the CloudFormation template. Alternatively, a dashboard application can be created to access these objects, with its permissions maintained separately.

Conclusion

This blog post shows an architecture that can be used to automate the Installation Qualification step of Computer System Validation. This architecture should be used in compliance with the company’s SOPs to create appropriate evidence that can demonstrate a deployment was done according to specification.

The whitepaper “Considerations for Using AWS Products in GxP Systems” is a good read to understand what you need to consider for GxP compliance. There is also a blog post that shows a per-account alternative architecture, which is better suited to software developed in-house with a defined CI/CD pipeline.

To learn more about GxP compliance on AWS, visit the GxP Compliance Page. You can also download GxP-related, access-controlled documents from AWS Artifact after logging in to the AWS Management Console.