Using multiple content delivery networks for video streaming – part 1

Introduction

Today, viewing video content is a prevalent form of online activity whether in entertainment, education, marketing, or information. For example, as a Solutions Architect at AWS, I tend to watch hours of video a week to learn about technologies, and I also leverage video content to convey ideas and best practices in a scalable way. According to Sandvine’s Global Internet Phenomena Report in 2018, video accounts for almost 58% of the total downstream traffic on the internet.

Research shows that the quality of streaming video has a considerable impact on user engagement, which determines how much value is generated from video content. For example, a 2018 study by Nice People At Work found that when a viewer experiences a buffer ratio higher than 2%, the chance of user churn increases by 30%. In general, video streaming quality depends on multiple components like encoding, player heuristics, and video delivery.

In this two-part series, I will focus on video delivery. In part 1, I will explain how a content delivery network (CDN) like Amazon CloudFront can improve your video delivery quality, and whether using multiple CDNs is beneficial for your company to improve quality further. I’ll conclude part 1 with recommendations on how to measure the performance of a CDN. Part 2 is intended for companies who have made the decision to use multiple CDNs. In part 2, I will provide prescriptive guidance and also some questions you should consider when pursuing a multi-CDN strategy.

Performance and availability of Amazon CloudFront

As a mature and robust CDN, CloudFront has implemented systems and optimizations that benefit our customers with the levels of availability and performance that they expect from AWS.

Every time a user establishes a TCP connection to a CloudFront point of presence (PoP), we measure the round-trip time during the three-way handshake, and then use these data points to compile, in real time, a table of PoP-to-user network latencies. Additionally, we enrich this table with latency measurements initiated from various experiment systems (such as Amazon web properties) that provide us with representative latencies for viewers on thousands of global networks. Whenever a user makes a DNS query to resolve a hostname accelerated by CloudFront, it resolves to IP addresses of the best PoP in terms of latency. This way, viewers receive the best experience by being served from the best location for them at that time, based on continually updated performance data. By combining this latency data with other performance and load conditions, CloudFront selects the best available connection characteristics for video delivery and responds quickly to changing network conditions.
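To make the idea of a PoP-to-user latency table more concrete, here is a minimal Python sketch. It is not CloudFront's implementation: the `LatencyTable` class, the exponential moving average, the `alpha` value, and the network and PoP labels are all assumptions I chose for illustration.

```python
from collections import defaultdict


class LatencyTable:
    """Tracks a smoothed round-trip time per (client network, PoP) pair.

    Hypothetical illustration only; names and smoothing method are assumed.
    """

    def __init__(self, alpha=0.2):
        self.alpha = alpha  # weight given to the newest sample (assumed value)
        self.rtt_ms = defaultdict(dict)  # network -> {pop: smoothed RTT in ms}

    def record(self, network, pop, sample_ms):
        """Fold one handshake RTT sample into the moving average."""
        previous = self.rtt_ms[network].get(pop)
        if previous is None:
            self.rtt_ms[network][pop] = sample_ms
        else:
            self.rtt_ms[network][pop] = (
                self.alpha * sample_ms + (1 - self.alpha) * previous
            )

    def best_pop(self, network, healthy_pops):
        """Return the healthy PoP with the lowest smoothed RTT, or None."""
        candidates = {
            pop: rtt
            for pop, rtt in self.rtt_ms.get(network, {}).items()
            if pop in healthy_pops
        }
        if not candidates:
            return None
        return min(candidates, key=candidates.get)


table = LatencyTable(alpha=0.5)
table.record("isp-paris", "CDG", 20.0)
table.record("isp-paris", "FRA", 30.0)
table.record("isp-paris", "CDG", 60.0)  # CDG degrades; its average rises
print(table.best_pop("isp-paris", healthy_pops={"CDG", "FRA"}))
```

In this sketch, the `healthy_pops` argument stands in for the "other performance and load conditions" mentioned above: a PoP that is offline or saturated is simply excluded before the latency comparison.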

CloudFront is designed around the principles of systemic redundancy and component expendability. Any given component in CloudFront is designed to minimize the impact to the overall system if the component fails. For CloudFront, component failures can be due to a variety of causes such as hardware or physical failures, network failures, single host failures, capacity overload or DDoS attacks, or even planned maintenance activities that take components offline. Below is a sample of the availability detection mechanisms CloudFront has put in place to detect different kinds of failures:

- CloudFront measures availability and performance at each host and PoP level through internal monitoring systems measuring server errors and connection failures. We have set up extensive alarms that trigger automated mitigation measures to route around sick hosts, take individual hosts offline, and/or alert operators as appropriate to minimize impact to our customers.

- In addition to third-party availability monitoring, CloudFront runs an external availability canary on several external hosts globally that probes CloudFront as if it were a customer. A PoP going offline is typically detected within seconds by the CloudFront health checker, and this data is used to route requests to the nearest or next best PoP instead. CloudFront has implemented capacity management systems that measure bandwidth and server capacity in each PoP in real time, as well as metrics like link-level congestion, and feed this data into the request routing system to adjust the traffic being directed to a PoP. With a DNS TTL of 60 seconds, we see the traffic being routed away from the bad PoP within minutes.

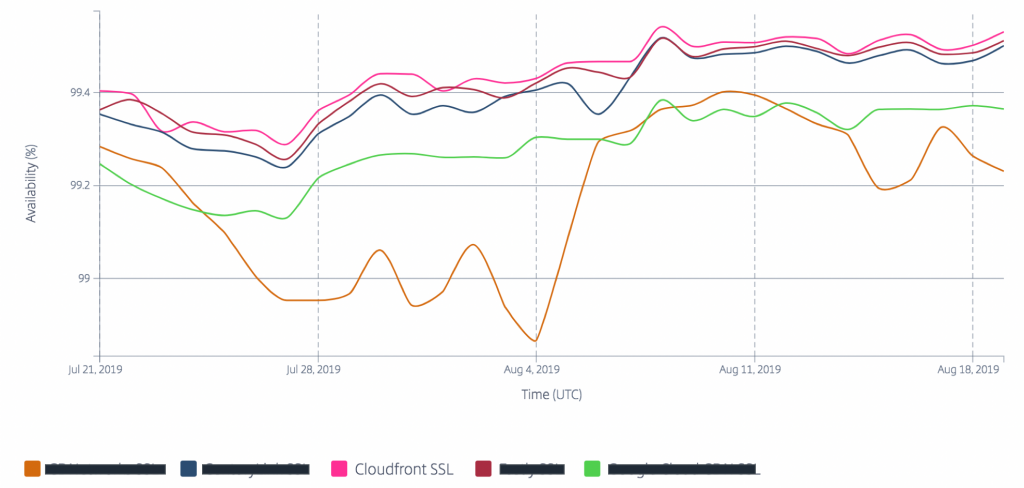

The resiliency of CloudFront due to its design is demonstrated in the following availability report from Radar Community measurements by Cedexis (Now Citrix ITM) spanning over 30 days in Europe. Cedexis collects CDN performance data from real user’s browser using a JavaScript script running on some popular websites.

For more details about how CloudFront optimizes routing for availability and performance, you can watch the following talk from re:Invent 2017.

Finally, CloudFront has multiple built-in features that improve performance for video delivery:

- Regional edge caches (RECs) are an additional layer of caching that sits between CloudFront PoPs and your origin. This layer of caching reduces the load on your origin, and keeps your content in the CloudFront cache for a longer time (more cache width).

- CloudFront streams content to users as soon as it is received from your origin, which reduces the time to the first byte on cache misses. This feature reduces the time to play for pseudo-streaming long tail VoD, and enables low latency live streaming.

- Request collapsing at different caching layers reduces the number of requests sent back to your origin during popular live streaming events, where all users are asking for the same video segments at the same time. Instead of forwarding all of these initial requests to your origin, we collapse them into fewer ones.

- When CloudFront receives a byte-range request for an object, it might fetch a larger range from the origin to speed up subsequent byte-range requests from the user. After multiple byte-range requests for the same object, CloudFront fetches the full object from the origin in the background to increase the cache hit ratio. This feature boosts the performance of progressive downloads of media files.
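The request collapsing behavior in the list above can be sketched in a few lines of Python. This is a simplified illustration of the general technique under my own assumptions, not CloudFront's code: `CollapsingCache` and `fetch_from_origin` are hypothetical names, the cache never expires entries, and everything runs in one process.

```python
import threading
from concurrent.futures import Future


class CollapsingCache:
    """Collapses concurrent requests for the same key into one origin fetch."""

    def __init__(self, fetch_from_origin):
        self._fetch = fetch_from_origin  # hypothetical callable: key -> bytes
        self._lock = threading.Lock()
        self._cache = {}      # key -> cached object (no expiry in this sketch)
        self._in_flight = {}  # key -> Future for a fetch already under way

    def get(self, key):
        with self._lock:
            if key in self._cache:
                return self._cache[key]
            future = self._in_flight.get(key)
            leader = future is None
            if leader:
                # First request for this key: it will go to the origin.
                future = Future()
                self._in_flight[key] = future
        if not leader:
            # Later requests wait for the leader's result instead of
            # sending their own request to the origin.
            return future.result()
        try:
            value = self._fetch(key)
        except Exception as exc:
            with self._lock:
                del self._in_flight[key]
            future.set_exception(exc)
            raise
        with self._lock:
            self._cache[key] = value
            del self._in_flight[key]
        future.set_result(value)
        return value
```

If 20 viewers ask for the same live segment at the same moment, the first request triggers the origin fetch and the other 19 wait on the same `Future`, so the origin sees one request instead of 20.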

Adopting a multi-CDN strategy

For many content publishers, using a single CDN satisfies their video delivery requirements. However, some customers use multiple CDNs for a variety of reasons such as redundancy, performance, capacity needs, and commercial considerations.

For example, when you have high capacity requirements on the order of petabytes per month, perhaps concentrated in a specific region, you might need to source capacity from multiple CDNs in certain networks or geographies to meet your overall need. Another example is when your audience is spread across many geographies, and in some of them only certain CDNs have a local presence, so you need to use those CDNs there. In these situations, load-balancing between CDNs is fairly simple and static.

When I talk about multi-CDN, I specifically mean load-balancing traffic dynamically across CDNs based on their instantaneous performance to maximize delivery quality. If streaming quality is critical to your business, then serving your content from a CDN’s second best PoP in the event of PoP failure or saturation might not be satisfactory for you.

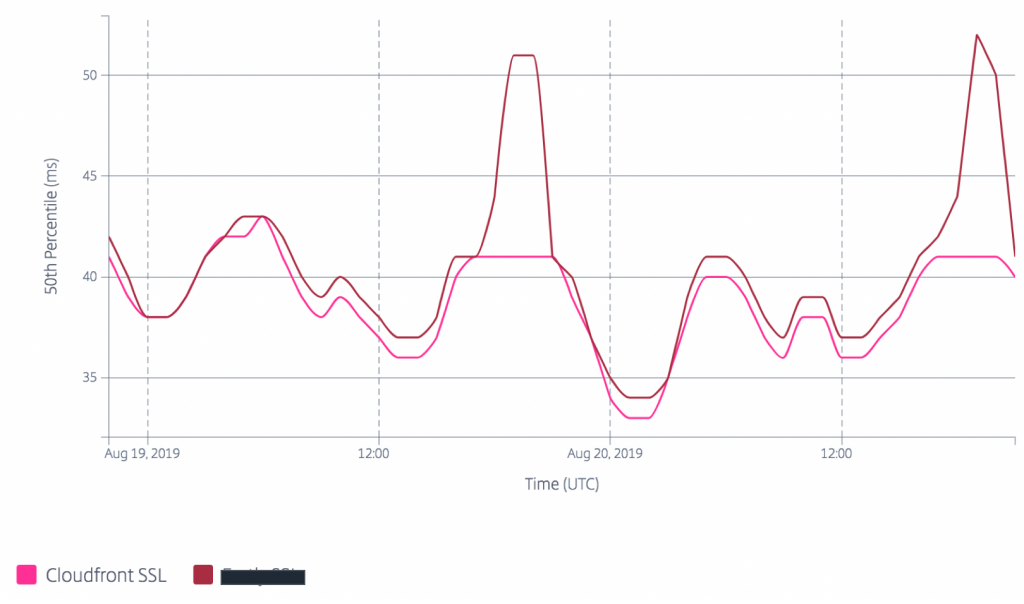

Unfortunately, these kind of events happen frequently and CDNs are constantly competing over performance. As an illustration of this, the following median latency report from Radar Community shows the performance of two CDNs spanning a recent two day period in France. You can see how the latency of one CDN increased by 10ms during peak hours each day.

If you have this level of requirements for video delivery quality, then you might want to consider a multi-CDN strategy. However, before taking this approach, you need to balance its benefits against its drawbacks:

- Managing multiple CDNs is not without overhead. You need to manage multiple vendors, and constantly test and configure all CDNs whenever you need to roll out a new change like adding token protection.

- Even though basic video delivery is not always demanding in terms of CDN features, you might have CDNs without feature parity. As a result, your feature possibilities will be limited to the lowest common denominator of the CDNs. On rare occasions, this may work against your initial goal if the missing feature is one that helps optimize performance.

- Load balancing traffic between multiple CDNs requires additional resources and cost, whether you build it yourself or use a commercial solution. It might also introduce an additional point of failure to your video delivery.

- Sometimes, the performance gain is minimal. For example, in certain geographies where CDNs and networks are mature and have massive presence, your content will most likely be served with good performance.

- Spreading traffic across multiple CDNs reduces the global cache hit ratio. Your traffic now needs to populate the caches of multiple CDNs instead of a single one, which might be a concern depending on your CDNs’ “cache warming” thresholds, your content size and popularity, and your traffic volume and geographical distribution. For example, cache hit ratio is more of a concern for VoD or linear TV than for popular live events. As I mentioned earlier, you might need petabyte scale to make a multi-CDN strategy relevant for you.
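A back-of-the-envelope model shows why the cache hit ratio concern in the last point matters more for long-tail content than for popular live events. The sketch below makes several loud assumptions that do not hold in a real CDN: each CDN behaves as one single cache, nothing is ever evicted, traffic is split evenly, and each cache misses exactly once per object. The function name and the request counts are made up for illustration.

```python
def steady_hit_ratio(requests_per_object, cdn_count):
    """Hit ratio if every CDN cache misses once per object, then always hits.

    Simplified model (assumptions): one cache per CDN, no eviction, and
    requests split evenly across CDNs.
    """
    requests_per_cdn = requests_per_object / cdn_count
    if requests_per_cdn <= 1:
        return 0.0  # every request reaching a CDN is a first request
    return 1 - 1 / requests_per_cdn


# Hypothetical workloads: a popular live segment and a long-tail VoD segment.
for label, requests in [("live segment", 10_000), ("long-tail VoD", 6)]:
    for cdns in (1, 3):
        ratio = steady_hit_ratio(requests, cdns)
        print(f"{label}, {cdns} CDN(s): hit ratio {ratio:.4f}")
```

Under these assumptions, splitting a heavily requested live segment across three CDNs barely moves the hit ratio, while a VoD object requested only six times drops from roughly 83% to 50%.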

Measuring the performance of CDNs

Regardless of your CDN strategy, you should measure a CDN in a relevant way. This is an important step, because relying on inaccurate data leads to suboptimal decisions whether it is for benchmarking CDNs during procurement or for dynamic routing across multiple CDNs. Today, there are two common approaches for this.

The first one is consuming third-party real user measurements (RUM) data like the Radar Community measurements provided by Cedexis. This approach has the benefit of being simple to implement, and it is quite reliable for monitoring the performance trend of a single CDN. However, it has some undesirable biases when used to compare CDNs:

- In methodology: It measures the performance of a CDN for hot objects of 100 KB in size, while your video content is a mix of hot, warm, and cold objects with larger object sizes.

- In configuration: Your CDN setup might not be the same as the one used by Cedexis for measurement, for example in terms of CDN maps or optimizations.

You can mitigate some of these biases by using Cedexis’ private measurements instead. In private measurements, you measure quality of service (QoS) metrics like throughput, latency, and availability of your own CDN setup. If you go this path, I recommend that you use a larger object size like 500 KB on the same CDN hostname as your video delivery, and that you weight throughput and availability heavily in your CDN scoring and switching algorithm.
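As a rough illustration of a scoring and switching algorithm that weights throughput and availability more heavily than latency, consider the Python sketch below. Everything in it is an assumption for the sake of the example: the `CdnSample` fields, the weight values, the normalization against the best-performing CDN, and the `switch_margin` that avoids flapping between CDNs with near-identical scores. A production algorithm would need tuning against your own data.

```python
from dataclasses import dataclass


@dataclass
class CdnSample:
    name: str
    throughput_kbps: float  # assumed to be > 0
    latency_ms: float       # assumed to be > 0
    availability: float     # fraction of successful probes, 0.0 to 1.0


# Assumed weights: throughput and availability dominate, latency matters less.
WEIGHTS = {"throughput": 0.5, "availability": 0.4, "latency": 0.1}


def score(sample, best_throughput, best_latency):
    """Score a CDN between 0 and 1 relative to the best CDN per metric."""
    throughput_part = sample.throughput_kbps / best_throughput
    latency_part = best_latency / sample.latency_ms
    return (
        WEIGHTS["throughput"] * throughput_part
        + WEIGHTS["availability"] * sample.availability
        + WEIGHTS["latency"] * latency_part
    )


def pick_cdn(samples, current=None, switch_margin=0.05):
    """Pick the best-scoring CDN, keeping the current one on a near tie."""
    best_throughput = max(s.throughput_kbps for s in samples)
    best_latency = min(s.latency_ms for s in samples)
    scores = {s.name: score(s, best_throughput, best_latency) for s in samples}
    winner = max(scores, key=scores.get)
    if current in scores and scores[winner] - scores[current] < switch_margin:
        return current  # not enough gain to justify a switch
    return winner
```

The margin is a design choice: switching CDNs mid-session has a cost (new connections, colder caches), so a small score difference is usually not worth acting on.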

Because of these limitations, I recommend the second approach, which is about measuring the video performance as experienced by your own users when consuming your own content. You can achieve it by implementing in your player an agent that measures the metrics you care about most. You can do it yourself using AWS services like Amazon Kinesis Data Firehose to ingest player events and Amazon Athena to query data. See this lab from re:Invent 2018 to learn how to build an AWS analytics solution to monitor the video streaming experience. If you don’t have the resources to do it yourself, you can use commercial solutions like Nice People At Work, Conviva, or Mux, where you measure the quality of experience (QoE) as perceived by your audience, such as buffer ratio, bitrate, startup times, and so on.
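To give a sense of what such a player agent's data can yield, here is a small Python sketch that derives startup time and buffer ratio from one session's events. The event names (`play_requested`, `first_frame`, `buffer_start`, `buffer_end`, `ended`) and the tuple format are hypothetical; real players and commercial tools define their own schemas, and they may define buffer ratio slightly differently.

```python
def session_qoe(events):
    """Compute startup time and buffer ratio for one playback session.

    `events` is a list of (timestamp_seconds, event_name) tuples sorted by
    time. Event names are assumptions made for this sketch.
    """
    play_requested = first_frame = session_end = None
    buffer_started = None
    buffering_s = 0.0
    for timestamp, name in events:
        if name == "play_requested":
            play_requested = timestamp
        elif name == "first_frame":
            first_frame = timestamp
        elif name == "buffer_start":
            buffer_started = timestamp
        elif name == "buffer_end" and buffer_started is not None:
            buffering_s += timestamp - buffer_started
            buffer_started = None
        elif name == "ended":
            session_end = timestamp
    if None in (play_requested, first_frame, session_end):
        return None  # incomplete session, nothing reliable to report
    if buffer_started is not None:
        # A stall was still in progress when the session ended.
        buffering_s += session_end - buffer_started
    viewing_s = session_end - first_frame  # playing time plus stalled time
    return {
        "startup_time_s": first_frame - play_requested,
        "buffer_ratio": buffering_s / viewing_s if viewing_s > 0 else 0.0,
    }


session = [
    (0.0, "play_requested"),
    (1.5, "first_frame"),
    (31.5, "buffer_start"),
    (33.5, "buffer_end"),
    (101.5, "ended"),
]
print(session_qoe(session))
```

In this example the viewer stalls for 2 seconds during 100 seconds of viewing, which is a buffer ratio of 2%.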

However, in certain situations, you might not have enough data points to measure performance reliably using this approach, for example when you are operating in countries with a low number of users. In such situations, you can compensate with community RUM measurements.

Conclusion

In general, CDNs like CloudFront have mechanisms to serve users from the best PoP locations with the aim of optimizing availability and performance within the possibilities of their infrastructure. Some companies that operate video businesses at the scale of petabytes per month go beyond using a single CDN and invest in a multi-CDN strategy to further optimize video delivery. In part 2, I will discuss how to implement a multi-CDN strategy.
