AWS Startups Blog

Expanding Kloudless’s Global Reach by Bridging Regions with Amazon Route 53

Guest post by Vinod Chandru, Co-founder, Kloudless

Kloudless is a developer tool that enables applications to connect to any storage service through a single, unified API. In addition to basic operations such as traversing directories and uploading or downloading files, developers can use advanced features like search, access controls, event notifications, and more across any storage platform.

As Kloudless has grown to support developers from around the world, a big challenge is to ensure that a developer located thousands of miles away is able to use the API without a drastic drop-off in performance.

A Bit of History: B2C to B2D

At Kloudless, our mission has always been to help people deal with the growing number of storage platforms, but “people” has not always meant developers.

When we started Kloudless three years ago, consumerization of the enterprise was just starting to reshape the landscape of enterprise IT. People were putting personal and work data all over the place. To help users manage all this data, we started out building tools for consumers.

While working on the B2C business, we encountered a huge developer pain: storage APIs are extremely fragmented. The problem extends to documentation, feature sets, and even execution of seemingly the same feature. Having to work across all of them sucked up valuable time and resources.

Providing a service for developers gives us a much bigger reach than a B2C business ever could. But it also presents us with a new set of performance challenges.

A Reliable Developer Platform

As the transaction layer that connects apps to storage platforms, it is very important for us to maintain high performance standards. We needed to consider two major infrastructure optimizations:

- Be just as reliable as the apps and storage platforms that we’re connecting together. High availability, reliability and global accessibility are key here.

- Minimize latency. Proximity to our customers and storage platforms shortens network round trips, which lowers latency and improves throughput.

These two factors played a large role in our choice to host our infrastructure on AWS. This blog post explains how we used AWS to optimize for minimal latency.

Amazon VPC and Amazon Route 53 have been invaluable in helping us expand globally. Even from the very beginning, we needed to be conscious of where our customers were geographically located in order to offer the best possible experience to them.

Consider this case: A user located in Brazil is using a messaging app to save a file to Amazon Cloud Drive. If the Kloudless servers are located only in a North American region, all data transfer between the messaging app’s users in South America and Amazon Cloud Drive would have to travel north first. The data would then have to travel back to the location of the storage service’s servers, which could potentially be adjacent to the user. This means we just added an entirely unnecessary intercontinental round trip!

This issue is exacerbated when server-to-server communication is involved and our API servers are located far away from our customers. With VPC, we can set up private networks in each region that are connected to one another, facilitating data transfer and eventual consistency.

That said, replicating infrastructure in every possible region would be prohibitively expensive and unnecessary.

The Kloudless server-side architecture has two major components: API servers and data-transfer servers. API servers and their associated processing clusters handle normal API requests, but offload bandwidth-intensive workloads to the data-transfer servers. By building a network of lightweight servers that are distributed across regions, we solve the round-trip problem described previously. Regional ‘hubs’ contain a replica of our infrastructure, while ‘spokes’ in nearby AWS regions and other cloud hosts minimize data transfer latency.

Bridging Regions

Communication between the data transfer and API servers, as well as eventual consistency of database information, requires interconnected VPCs in each region. But a quick check of the documentation shows that VPC peering can’t be performed between VPCs in different regions. Fortunately, we learned how to connect two AWS regions, as the rest of this section explains.

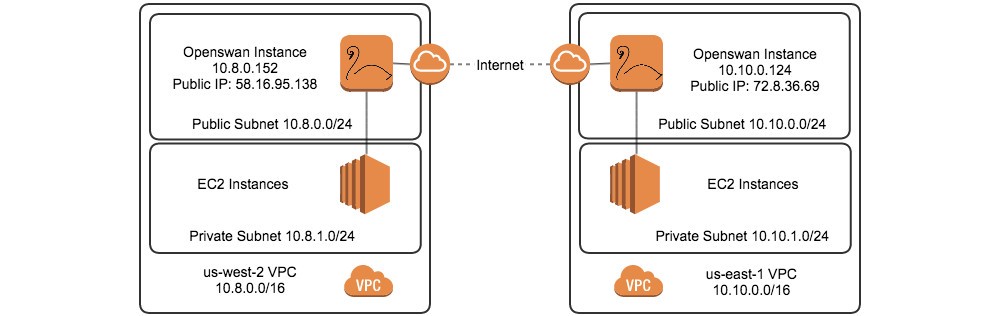

The solution is to run an Openswan server in each VPC’s public subnet and establish an IPSec connection between them. We can then route traffic from a subnet in one region to a subnet in another region through the Openswan servers. This enables instances in each VPC’s subnets to connect to each other using private addresses.

Launching the Instances

Let’s walk through an example. Launch one server in each VPC; these two servers will be the endpoints of the IPsec tunnel. Make sure the instance sizes for these tunnel servers are adequate for the throughput you expect. Smaller instance sizes have less bandwidth available to them, which will cause a bottleneck in your network. For this example, we will be using an m3.medium running Ubuntu 12.04 LTS.

First, configure both servers to forward traffic:

$ sudo sysctl -p /etc/sysctl.d/99-openswan.conf
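The command above loads kernel settings from /etc/sysctl.d/99-openswan.conf. As a minimal sketch of what such a file might contain, assuming the goal is to enable IPv4 forwarding and to switch off ICMP redirects as Openswan gateways commonly do, the hypothetical helper `render_sysctl_conf` below prints one possible version. The exact set of keys is an assumption, not the original file.

```shell
#!/bin/sh
# Hypothetical helper: print sysctl settings for a tunnel server.
# Assumption: these keys are the ones commonly needed on an Openswan
# gateway; they are not copied from the original post.
render_sysctl_conf() {
    cat <<'EOF'
# Forward IPv4 packets between interfaces (required to route traffic).
net.ipv4.ip_forward = 1
# Do not accept or send ICMP redirects on the tunnel host.
net.ipv4.conf.all.accept_redirects = 0
net.ipv4.conf.all.send_redirects = 0
net.ipv4.conf.default.accept_redirects = 0
net.ipv4.conf.default.send_redirects = 0
EOF
}

render_sysctl_conf
```

One way to use it would be `render_sysctl_conf | sudo tee /etc/sysctl.d/99-openswan.conf` on each tunnel server, followed by the `sysctl -p` command shown above.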

Disable the Source/Dest. Check on each instance by right-clicking it in the EC2 console (Network Interface page). Also assign an Elastic IP to each instance, and configure the instances’ security groups to allow UDP ports 500 and 4500 and IP protocols 50 (ESP) and 51 (AH) from each other’s Elastic IPs.
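The same settings can also be applied with the AWS CLI instead of the console. The sketch below only prints the commands so they can be reviewed first; the helper name `print_tunnel_setup`, the instance and security group IDs, and the peer address 203.0.113.10 are all placeholders. Allocating and associating the Elastic IP is left out.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) the AWS CLI calls for one tunnel server.
# Arguments: instance ID, security group ID, the peer server's Elastic IP.
print_tunnel_setup() {
    instance_id=$1
    group_id=$2
    peer_ip=$3

    # Let the instance forward packets that are not addressed to itself.
    echo "aws ec2 modify-instance-attribute --instance-id $instance_id --no-source-dest-check"

    # IKE (UDP 500) and NAT traversal (UDP 4500), from the peer only.
    for port in 500 4500; do
        echo "aws ec2 authorize-security-group-ingress --group-id $group_id --protocol udp --port $port --cidr $peer_ip/32"
    done

    # ESP (IP protocol 50) and AH (IP protocol 51), from the peer only.
    for proto in 50 51; do
        echo "aws ec2 authorize-security-group-ingress --group-id $group_id --ip-permissions 'IpProtocol=$proto,IpRanges=[{CidrIp=$peer_ip/32}]'"
    done
}

# Placeholder IDs and address; substitute your own values.
print_tunnel_setup i-0123456789abcdef0 sg-0123456789abcdef0 203.0.113.10
```

Run the helper once per tunnel server, each time passing the other server’s Elastic IP, so that each security group only admits IPsec traffic from its peer.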

Openswan

The next step is to install and configure Openswan.

$ sudo apt-get install openswan

Make sure that the IPsec configuration file has the last line below:
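Since the per-region connection files are created separately in the next step, one line that fits this description is an include directive that pulls them into the main configuration. The sketch below assumes the main file is /etc/ipsec.conf and that the per-region files live in /etc/ipsec.d/; both paths, and the helper name `ensure_include`, are assumptions. It appends the line only if it is missing, and demonstrates this on a scratch file rather than the real configuration.

```shell
#!/bin/sh
# Hypothetical helper: append an include line to an ipsec.conf-style file
# unless it is already there. The include path is an assumption.
INCLUDE_LINE='include /etc/ipsec.d/*.conf'

ensure_include() {
    conf_file=$1
    # -F: fixed string, -x: match the whole line, -q: no output.
    if ! grep -Fxq "$INCLUDE_LINE" "$conf_file"; then
        printf '%s\n' "$INCLUDE_LINE" >> "$conf_file"
    fi
}

# Demonstrate on a scratch file instead of the real /etc/ipsec.conf.
demo=$(mktemp)
printf 'version 2.0\nconfig setup\n' > "$demo"
ensure_include "$demo"
ensure_include "$demo"   # the second call changes nothing
cat "$demo"
rm -f "$demo"
```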

Let’s assume the instance’s VPC is us-east-1 and the other VPC is us-west-2. Create an IPsec configuration file named us-west-2.conf with contents of the form specified below.

Replace LOCAL_PRIVATE_SUBNET with the CIDR address of the subnet containing the instance. For example, it would be 10.10.0.0/16 for the VPC shown in the previous infrastructure diagram. The REMOTE_ELASTIC_IP should be the Elastic IP of the other tunnel server (e.g., 58.16.95.138), and REMOTE_PRIVATE_SUBNET is its subnet’s address (e.g., 10.8.0.0/16).
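To make the placeholders concrete, here is a sketch of a connection definition that uses them. The option names (`type`, `authby`, `left`, `leftid`, `leftsubnet`, `right`, `rightsubnet`, `pfs`, `auto`) are standard Openswan settings, but this particular combination is an assumption rather than the original listing. LOCAL_ELASTIC_IP (this tunnel server’s own Elastic IP) and the helper name `render_conn` are also introduced here for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print an Openswan connection definition.
# Arguments: connection name, local Elastic IP, local subnet CIDR,
#            remote Elastic IP, remote subnet CIDR.
# left=%defaultroute lets Openswan pick the instance's private address,
# while leftid announces the Elastic IP that the peer actually sees.
render_conn() {
    cat <<EOF
conn $1
    type=tunnel
    authby=secret
    left=%defaultroute
    leftid=$2
    leftnexthop=%defaultroute
    leftsubnet=$3
    right=$4
    rightsubnet=$5
    pfs=yes
    auto=start
EOF
}

# Values from the example above, as seen from the us-east-1 tunnel server.
render_conn us-west-2 LOCAL_ELASTIC_IP 10.10.0.0/16 58.16.95.138 10.8.0.0/16
```

The output could be saved as us-west-2.conf in the directory that the main IPsec configuration includes.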

IPsec can use pre-shared keys for authentication. Continuing from the example above, create files for the secrets:

REMOTE_PRIVATE_SUBNET contains the same value as previously, and RANDOM_KEY should be replaced with a long random string.
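One way to produce a suitable RANDOM_KEY is to read bytes from the kernel’s random source and base64-encode them. This is a sketch, not the method used in the original post; the helper name `generate_psk` and the key length are arbitrary choices.

```shell
#!/bin/sh
# Hypothetical helper: print a random pre-shared key on a single line.
# 48 random bytes encode to exactly 64 base64 characters, with no padding.
generate_psk() {
    head -c 48 /dev/urandom | base64 | tr -d '\n'
    echo
}

generate_psk
```

Generate the key once and use the same value on both tunnel servers, since a pre-shared key only works if both ends hold the identical string.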

Perform the same steps above for the instance in us-west-2, switching the local and remote values, and using the name “us-east-1” instead wherever us-west-2 was specified.

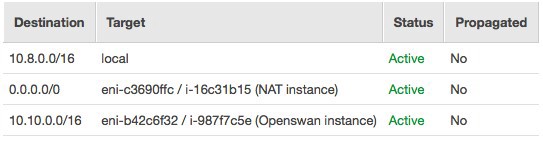

AWS needs to be aware of this routing as well. In the VPC console, navigate to the Route Tables for each VPC and enter the CIDR address for the REMOTE_PRIVATE_SUBNET (the subnet in the other VPC) as a Destination, with the target set to the tunnel instance. For example, the main route table for us-west-2 might look like this:
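The same routes can be added with the AWS CLI rather than the console. The sketch below prints one `aws ec2 create-route` command per region for review instead of running it; the helper name `print_tunnel_route` and the route table and instance IDs are placeholders, while the CIDR blocks follow the example values used earlier (10.10.0.0/16 in us-east-1, 10.8.0.0/16 in us-west-2).

```shell
#!/bin/sh
# Hypothetical helper: print (not run) the AWS CLI call that routes a remote
# subnet through the local tunnel instance.
# Arguments: region, route table ID, remote subnet CIDR, tunnel instance ID.
print_tunnel_route() {
    echo "aws ec2 create-route --region $1 --route-table-id $2 --destination-cidr-block $3 --instance-id $4"
}

# us-west-2 sends traffic for the us-east-1 subnet to its tunnel server...
print_tunnel_route us-west-2 rtb-0aaaaaaaaaaaaaaaa 10.10.0.0/16 i-0aaaaaaaaaaaaaaaa
# ...and us-east-1 does the reverse.
print_tunnel_route us-east-1 rtb-0bbbbbbbbbbbbbbbb 10.8.0.0/16 i-0bbbbbbbbbbbbbbbb
```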

Turn on IPSec and reload your network settings on both instances:

$ sudo service ipsec start
$ sudo service network restart
$ sudo service ipsec status # Check

You should now be able to connect from an instance in one VPC to an instance in the other! When attempting this, make sure your security group configuration allows access from the CIDR address of the instance in the other VPC to the appropriate port.

Directing Traffic

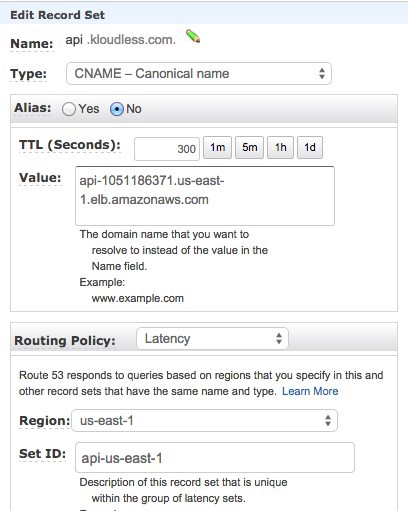

Route 53 provides an easy way to direct requests to servers in the correct region. I’m going to use api.kloudless.com as an example. Let’s assume we deploy API servers behind an Elastic Load Balancing load balancer in us-east-1 and duplicate this setup in us-west-2. We would prefer users with less latency to us-east-1 hit the load balancer in that region, while those with faster access to us-west-2 hit the load balancer there instead.

Latency-based routing solves this problem. We can create a CNAME from api.kloudless.com to each load balancer, assigning a different ID for each record set. Here is an example of one:

Create one more for us-west-2 with the corresponding changes to the Routing Policy fields, and you’re set!
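For readers who prefer the command line, a latency-based record set can also be described as a Route 53 change batch. The sketch below prints the JSON for one record; the helper name `render_latency_record`, the load balancer hostname, the 60-second TTL, and the choice to reuse the region name as the set ID are all assumptions made for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print a Route 53 change batch for one latency record.
# Arguments: record name, AWS region, load balancer DNS name.
# The region name doubles as the SetIdentifier, which must be unique per record.
render_latency_record() {
    cat <<EOF
{
  "Changes": [{
    "Action": "CREATE",
    "ResourceRecordSet": {
      "Name": "$1",
      "Type": "CNAME",
      "SetIdentifier": "$2",
      "Region": "$2",
      "TTL": 60,
      "ResourceRecords": [{"Value": "$3"}]
    }
  }]
}
EOF
}

# Placeholder load balancer hostname; substitute your own.
render_latency_record api.kloudless.com us-east-1 example-elb.us-east-1.elb.amazonaws.com
```

Saving the output to a file such as record.json and passing it to `aws route53 change-resource-record-sets --hosted-zone-id ZONE_ID --change-batch file://record.json` would create the record, where ZONE_ID stands for your hosted zone. Repeating the call with us-west-2 and that region’s load balancer gives the second record.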

This method works for routing traffic to internal servers such as databases as well. We run PostgreSQL on EC2 instances ourselves; for MySQL, Amazon RDS offers cross-region read replicas.

Onwards

Building on top of AWS has been tremendously beneficial. With the frequent releases of new features, we can provide increasingly better service to our customers.

One recently announced feature that is useful for the latency-based routing mentioned previously is the ability to use Route 53 for private DNS. For example, you can use this to ensure servers in each region only contact the database server closest to them and not be concerned about this data leaking to the outside world.

In addition to features that assist our own architecture, we plan on making the recently announced Amazon S3 event notifications available for developers on our platform. Many workflows built using Kloudless use event notifications to trigger further processing.

AWS has been instrumental in helping Kloudless scale to support developers from around the world without having to compromise performance. We’re excited that AWS continues to innovate and offer services that are key to our business.