AWS Startups Blog

HackerEarth Scales Up Continuous Integration for Future Needs with AWS

Guest post by Payal Moondra, Staff SDET at HackerEarth

HackerEarth provides enterprise software that helps organizations with their technical hiring needs. HackerEarth is used by organizations for technical skill assessment and remote video interviewing. Since its inception in 2012, HackerEarth has built a base of 5.5M+ developers, along with a community around it.

One of the goals of every fast-paced organization is to have a Continuous Integration (CI) pipeline that ensures every check-in is thoroughly verified before it can be pushed to production. HackerEarth wanted a CI model that has enough safety nets for every check-in that goes into each Pull Request (PR), while keeping the process scalable and cost effective. The safety nets in the CI pipeline include unit tests, integration tests, functional tests, security tests, and static code analysis. These safety nets provide constructive feedback for the PR, and the necessary steps are then taken to close the gaps. For integration tests in this pipeline, HackerEarth used AWS CodeBuild along with Amazon S3 and Amazon Elastic Container Registry (Amazon ECR).

What does Continuous Integration mean?

For a robust CI pipeline, each line of code written should be well tested before code changes are merged back to trunk in the code repository. For every check-in, HackerEarth wanted a pipeline that runs a series of tests (static code analysis, unit, integration, and security tests, among others) to ensure its mergeability, that is, that the change passes a set of checks before it can be merged. This helps identify major issues early in the deployment cycle and prevents them from reaching production. While HackerEarth had this setup in place for some time, it had a few disadvantages, including high cost, high maintenance, and scalability limitations. The team was looking to solve these problems and prepare the pipeline for future scalability needs. In the process, HackerEarth discovered AWS CodeBuild, a fully managed continuous integration service that compiles source code, runs tests, and produces software packages that are ready to deploy. Before we jump into how AWS CodeBuild has simplified the process, let us walk through the problems associated with our former architecture.

Former Architecture:

Here is what would happen in the former architecture when a PR was created or updated by a developer:

1. Jenkins webhook would trigger Integration tests.

2. The pipeline would divide Integration tests into two parts and send them to two Amazon EC2 instances. The tests would run in parallel on these EC2 instances to reduce the total execution time.

EC2 Instance 1 : NODE_TOTAL=2 NODE_INDEX=1 nosetests --with-parallel

EC2 Instance 2 : NODE_TOTAL=2 NODE_INDEX=2 nosetests --with-parallel

Note: These two EC2 instances ran all the time and were already configured with required services like Redis, MongoDB, RabbitMQ, and a MySQL database.

3. After the tests, Jenkins would collect the test reports from both machines and merge them into the final HTML report.
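The split across two instances in step 2 can be pictured with a small sketch. This is only an illustration of one possible partitioning scheme (sorted round-robin), not necessarily how the nosetests parallel plugin assigns tests; the function name `select_shard` and the test names are hypothetical.

```python
import os


def select_shard(test_names, node_total, node_index):
    """Return the tests assigned to one node (node_index is 1-based)."""
    if not 1 <= node_index <= node_total:
        raise ValueError("node_index must be between 1 and node_total")
    # Sort first so every node sees the same order, then deal tests out
    # round-robin: node 1 takes positions 0, 2, 4, ...; node 2 takes 1, 3, 5, ...
    ordered = sorted(test_names)
    return ordered[node_index - 1::node_total]


if __name__ == "__main__":
    # NODE_TOTAL and NODE_INDEX mirror the variables in the commands above.
    total = int(os.environ.get("NODE_TOTAL", "2"))
    index = int(os.environ.get("NODE_INDEX", "1"))
    # Hypothetical test names, used only for illustration.
    tests = ["test_auth", "test_billing", "test_contest", "test_editor", "test_search"]
    print(select_shard(tests, total, index))
```

Because every node sorts the same list before slicing, the shards are disjoint and together cover every test, which is the property any such scheme needs.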

Problems with the former architecture:

- High Maintenance:

EC2 instances required constant maintenance. This included making sure required services are always up and running, stopping EC2 instances when they aren’t being used, periodic volume cleanup for maximum space utilization, keeping the directory counts in check, etc.

- Cost for unused resources:

The need to keep the EC2 instances up and running 24×7 incurred costs even on days when there weren’t many PRs to make use of them all the time.

- Scaling:

In order to reduce queue time for PRs, they need to run in parallel. The issue with the existing setup is that every PR needs to have its own code directory. Hence, only a limited number of PRs could run at a time, making the pipeline less scalable.

- Multiple concurrent builds running on common set of instances:

If something went wrong with either of the two instances, it affected all the tests on them.

- One database shared across multiple builds:

Since we had our instances set up in advance, every build would use the same database, and that created conflicts when the nature of PRs was different.

- Longer wait time:

Every PR needs to check out its own codebase directory. Even with a larger EC2 machine, tests for not more than two PRs could run at a time (without bumping up the size of attached EBS volume). So every PR triggered after the first two would be in the queue and that delayed the feedback for them. This led to a situation where continuous integration wasn’t happening quickly enough, and the feedback was delayed.

HackerEarth’s goal was to fix all the problems mentioned above to simplify the CI pipeline and make it as efficient as possible. The team realized AWS CodeBuild was the single answer to all the problems. It is a fully managed continuous integration service that compiles source code, runs tests, and produces software packages that are ready to deploy. With CodeBuild, you don’t need to provision, manage, and scale your own build servers. It scales based on your build needs (observing service limits), and you pay for only the time you use it!
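To make the difference between always-on and pay-per-use billing concrete, here is a minimal sketch of the two cost models. All rates and volumes below are placeholder values chosen for illustration; they are not AWS prices or HackerEarth figures, and the function names are hypothetical.

```python
def always_on_cost_per_build(hourly_rate, instances, hours_per_month, builds_per_month):
    """Fixed-cost model: instances are billed whether or not builds run."""
    return hourly_rate * instances * hours_per_month / builds_per_month


def per_minute_cost_per_build(rate_per_minute, minutes_per_build, parallel_builds):
    """Usage-based model: each parallel build is billed only while it runs."""
    return rate_per_minute * minutes_per_build * parallel_builds


if __name__ == "__main__":
    # Placeholder inputs (assumptions, not real prices).
    busy = always_on_cost_per_build(0.10, 2, 730, 400)
    quiet = always_on_cost_per_build(0.10, 2, 730, 100)
    on_demand = per_minute_cost_per_build(0.005, 15, 2)
    print(f"always-on, busy month:  {busy:.3f} per build")
    print(f"always-on, quiet month: {quiet:.3f} per build")
    print(f"per-minute billing:     {on_demand:.3f} per build")
```

The point of the sketch is the shape of the two formulas: in the fixed-cost model the cost per build rises as the number of builds falls, while in the per-minute model it does not depend on how many builds run in a month.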

The improved, simplified, and cost optimized CI architecture:

Here is what happens in the new architecture when a PR is created or updated by a developer:

- Jenkins webhook triggers AWS CodeBuild to read the buildspec.yml file stored in the project directory.

- The buildspec.yml (from the project files) has details of the Docker images stored in Amazon ECR. AWS CodeBuild pulls the image and launches a container from it.

- The AWS CodeBuild project can run a number of parallel builds corresponding to the scenarios defined in the Jenkins pipeline.

- Once the execution within the container completes, including persisting the output XML file into S3, CodeBuild destroys the container.

- Jenkins creates the final HTML report by combining the XML files in S3 (using the Jenkins JUnit plugin).
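In this pipeline the Jenkins JUnit plugin does the combining. Purely to illustrate what combining per-build XML reports involves, the sketch below merges JUnit-style XML documents with Python's standard library. It assumes each report carries `tests`, `failures`, and `errors` attributes on its `<testsuite>` elements; the function name `merge_junit_reports` is hypothetical, and downloading the files from S3 is left out.

```python
import xml.etree.ElementTree as ET


def merge_junit_reports(xml_documents):
    """Combine several JUnit-style XML strings into one <testsuites> element."""
    merged = ET.Element("testsuites")
    totals = {"tests": 0, "failures": 0, "errors": 0}
    for document in xml_documents:
        root = ET.fromstring(document)
        # A report may have <testsuite> as its root or wrap suites in <testsuites>.
        suites = [root] if root.tag == "testsuite" else root.findall("testsuite")
        for suite in suites:
            for key in totals:
                totals[key] += int(suite.get(key, "0"))
            merged.append(suite)
    # Record the summed counters on the combined root element.
    for key, value in totals.items():
        merged.set(key, str(value))
    return merged


if __name__ == "__main__":
    # Two tiny hand-written reports standing in for per-build output files.
    report_a = '<testsuite name="build-1" tests="2" failures="1" errors="0"/>'
    report_b = '<testsuite name="build-2" tests="3" failures="0" errors="0"/>'
    combined = merge_junit_reports([report_a, report_b])
    print(ET.tostring(combined, encoding="unicode"))
```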

Since tight integration with Jenkins already existed, the team used the AWS CodeBuild Jenkins plugin (instead of AWS CodeBuild support for webhooks with Bitbucket).
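The team started builds through the Jenkins plugin, so the following is not their setup. As a rough illustration of what starting several parallel builds for one PR could look like at the API level, the sketch below prepares one request per build using parameter names from the CodeBuild `StartBuild` API. The project name and branch are placeholders, and reusing `NODE_TOTAL` and `NODE_INDEX` as environment variables in the new architecture is an assumption.

```python
def build_requests(project_name, branch, node_total):
    """Return one StartBuild keyword-argument dict per parallel build."""
    requests = []
    for node_index in range(1, node_total + 1):
        requests.append({
            "projectName": project_name,
            # The branch to build, overriding the project's default source version.
            "sourceVersion": branch,
            # Assumed variables telling each build which slice of tests to run.
            "environmentVariablesOverride": [
                {"name": "NODE_TOTAL", "value": str(node_total), "type": "PLAINTEXT"},
                {"name": "NODE_INDEX", "value": str(node_index), "type": "PLAINTEXT"},
            ],
        })
    return requests


if __name__ == "__main__":
    # "integration-tests" and "feature/my-branch" are hypothetical names.
    for request in build_requests("integration-tests", "feature/my-branch", 3):
        print(request)
```

With boto3 installed and AWS credentials configured, each dictionary could be passed along as `boto3.client("codebuild").start_build(**request)`. The sketch keeps that call out of the code so it runs without an AWS account.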

Creating an AWS CodeBuild Project:

1. Source:

Source provider: Bitbucket

Repository URL: https://bitbucket.org/<YOUR_USER>/<YOUR_REPO>.git

Source: Leave it blank because it will be managed by the branch name environment variable from Jenkins.

2. Environment:

Environment image: Custom image

Environment type: Linux

Privilege: Yes

3. Buildspec:

You can create a file called buildspec.yml in your project directory. A sample buildspec.yml pulls an Amazon ECR image and runs its own container, sets it up with required services and DB (setup.sh), runs the tests, and uploads the results to S3 for each build.

4. Artifacts:

Amazon S3 (Create a bucket in S3 and provide the bucket name in the configuration. Also, provide a namespace as BUILD ID if you want the artifacts for each build separately.)

Sample Jenkins CodeBuild plugin configuration:

Sample Jenkins pipeline configuration:

Sample buildspec.yml file:

Refer to the build specification reference for CodeBuild for additional details.

Conclusion

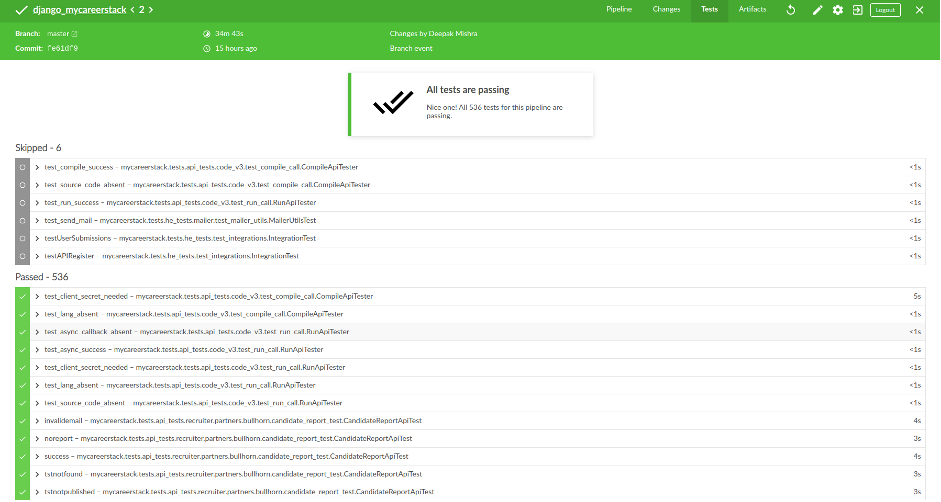

HackerEarth solved all the issues in their former architecture and reduced the cost per PR build by 50% using AWS CodeBuild, Amazon S3, and Amazon ECR. The benefits included:

- Zero Maintenance:

There is absolutely zero maintenance with AWS CodeBuild. We do not need to maintain any machines at all.

- Cost Efficiency:

The pricing structure for AWS CodeBuild is per minute. Hence, unlike the old architecture where there was a fixed cost for keeping EC2 machines up even when not used, we only pay for the duration the service is in use. We have achieved a 50% reduction in cost per PR build with AWS CodeBuild.

- Continuous Scaling:

As checking out source code became part of the AWS CodeBuild build, we didn’t need storage space on the Jenkins node, so we could increase the number of executors to 15, which meant 15 PRs would run in parallel with zero wait time.

- Independent runtime environment:

AWS CodeBuild provides a separate container for each build, with source code specific to that build. Each build runs in a completely isolated environment (Docker container). This means if something goes wrong in one build, it doesn’t affect any other builds.

- Zero Interdependency:

We could now set up a DB for each PR individually in AWS CodeBuild. This meant that multiple builds do not share database resources. Each PR runs on its own database, which is decommissioned once the build is done.

- Zero downtime:

We don’t have to worry about something going wrong in pre-setup build environments. AWS CodeBuild takes care of that undifferentiated operational effort.

- Faster Build times:

With increased parallelism within each PR, the overall build time decreases as the number of builds running in parallel increases. For example, a PR that takes around 30 minutes on 2 EC2 instances would take 15 minutes when run as 10 parallel AWS CodeBuild builds (while keeping the cost in check!).
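The build-time example (about 30 minutes with 2 parallel runs, 15 minutes with 10) can be explored with a simple model. The sketch below assumes, purely for illustration, that total time is a fixed per-build overhead (for instance, pulling the image and setting up services) plus test work that divides evenly across parallel builds. The article does not break build time down this way, so both the model and the function names are assumptions.

```python
def fit_overhead_and_work(n1, t1, n2, t2):
    """Fit t = overhead + work / n to two (parallel builds, minutes) observations."""
    work = (t1 - t2) / (1 / n1 - 1 / n2)
    overhead = t1 - work / n1
    return overhead, work


def estimated_minutes(overhead, work, parallel_builds):
    """Predicted wall-clock minutes for a given number of parallel builds."""
    return overhead + work / parallel_builds


if __name__ == "__main__":
    # The two data points quoted in the text: 2 runs -> 30 min, 10 runs -> 15 min.
    overhead, work = fit_overhead_and_work(2, 30, 10, 15)
    for n in (2, 5, 10, 20):
        print(n, round(estimated_minutes(overhead, work, n), 1))
```

Under this assumed model the two quoted data points correspond to roughly 11 minutes of fixed overhead and about 37.5 minutes of divisible test work, which would explain why going from 2 to 10 parallel builds halves the time rather than cutting it fivefold.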

All in all, AWS CodeBuild helped HackerEarth reduce cost and build time, all while taking care of the undifferentiated heavy lifting of maintenance.