AWS Startups Blog

How WIREWAX Built And Scaled A Media Services Offering With Amazon Web Services

Guest post by WIREWAX

As an interactive video company, WIREWAX processes thousands of videos every single day. Each video uploaded to our platform goes through our computer vision algorithms to automatically detect faces, objects, and languages, generate subtitles, and much more. Our industry-leading computer vision applications have been trained and vastly improved through years of digital video asset analysis, thanks in part to the flexibility and scalability of AWS.

For years, large media companies have battled with vast, unwieldy archives of often poorly labelled digital video assets. Most video archives are full of badly structured data that takes a great deal of human effort to navigate. Combine that with the unprecedented growth of on-demand streaming services requesting large amounts of these assets for broadcast, and you can be left with a hefty bill and a long turnaround to get your assets ready for distribution.

Drawing on WIREWAX’s decade of experience using computer vision to power the creation of interactive video, we’ve turned our technology to helping media companies solve the problem of organizing large archives of digital video assets. WIREWAX Media Services (WMS) can process these video assets to find duplicates, organize programs, detect subtitles, suggest ad insertion points, and that’s just for starters.

We can even transform assets so that clients can quickly and accurately compare similar assets and check for potential issues before sending them for distribution. Once we had proven that our systems could process video assets in the form media companies wanted, we had to scale it.

The digital video assets coming in were huge, much larger than we had been used to handling. Generally, each asset ranged in duration from 20 minutes to 3 hours and was completely uncompressed, resulting in huge file sizes. This meant that we were looking at a 100x increase in demand on our computer vision instances. A 100x increase sounded magical to our business development team, but we naturally didn’t want to get hit by a similar increase in our AWS bill.

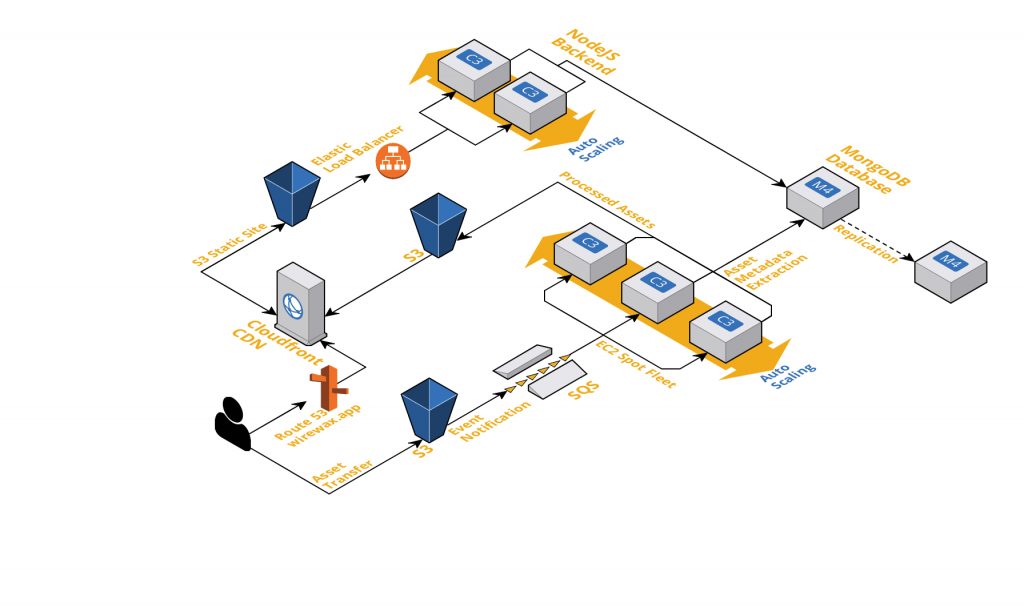

To ingest thousands of assets into our systems, we couldn’t ask our clients to upload using our Studio interface. We decided on an S3 upload as the ideal solution, as it allows clients of WMS to build an upload mechanism that works for their bespoke systems. For every asset that is uploaded to S3 for WMS, we configured a bucket event notification to send a message to SQS, giving us a handy SQS queue that serves as our pending asset queue. From here, our computer vision instances can get to work on processing these assets.
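To make the pending asset queue concrete, here is a minimal sketch of how a worker might turn one S3 event notification, as delivered in an SQS message body, into a list of assets to process. It is an illustration rather than our production code: the function name `pending_assets`, the bucket name, and the object key are hypothetical, and fetching the message from SQS (for example with a `receive_message` call) is left out so the snippet runs on its own.

```python
import json
from urllib.parse import unquote_plus


def pending_assets(message_body: str) -> list:
    """Extract newly uploaded objects from one S3 event notification body."""
    event = json.loads(message_body)
    assets = []
    # S3 sends a test event with no "Records" key when notifications are
    # first configured, so default to an empty list.
    for record in event.get("Records", []):
        # Only react to uploads, not deletions or other event types.
        if not record.get("eventName", "").startswith("ObjectCreated:"):
            continue
        s3 = record["s3"]
        assets.append({
            "bucket": s3["bucket"]["name"],
            # Object keys arrive URL-encoded in the notification.
            "key": unquote_plus(s3["object"]["key"]),
            "size_bytes": s3["object"].get("size"),
        })
    return assets


# Hypothetical notification body, trimmed to the fields used above.
sample_body = json.dumps({
    "Records": [{
        "eventName": "ObjectCreated:Put",
        "s3": {
            "bucket": {"name": "example-wms-uploads"},
            "object": {"key": "client-a/episode+01.mxf", "size": 1234},
        },
    }]
})

print(pending_assets(sample_body))
```

Keeping the parsing separate from the SQS polling means the same function can be exercised with saved notification bodies before any instance is launched.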

Now we just needed to scale up our computer vision application to process all these gigantic assets. Rather than rely on our old system, which, although stable, remained untested at this new scale, we wanted to offload as much of the complexity as possible onto AWS and reduce the amount of custom code required. This meant taking a new approach.

We opted to use an EC2 Spot Fleet request to allow us to scale up to meet demand in a cost-effective way. Our vision application can run on a variety of hardware, and EC2 Spot Fleets gave us the flexibility to use the best value instances in the best value Availability Zone automatically, ensuring we got the best value possible when scaling. EC2 Spot Fleets also allow us to auto-scale the fleet size, letting us save even more during quiet periods.
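As a rough illustration of what "a variety of hardware" across Availability Zones looks like in a Spot Fleet request, the sketch below builds a request configuration with one launch specification per instance type and zone pair. Everything specific in it is an assumption made for the example: the helper name `build_spot_fleet_config`, the instance types, zones, AMI ID, role ARN, target capacity, and the choice of the `lowestPrice` allocation strategy are placeholders, not the values we run in production.

```python
from itertools import product


def build_spot_fleet_config(instance_types, availability_zones,
                            target_capacity, image_id, fleet_role_arn):
    """Build a Spot Fleet request config with one launch spec per type/zone pair."""
    launch_specs = [
        {
            "ImageId": image_id,
            "InstanceType": instance_type,
            "Placement": {"AvailabilityZone": zone},
        }
        for instance_type, zone in product(instance_types, availability_zones)
    ]
    return {
        "IamFleetRole": fleet_role_arn,
        # Assumed strategy: prefer the cheapest of the pools listed below.
        "AllocationStrategy": "lowestPrice",
        "TargetCapacity": target_capacity,
        "LaunchSpecifications": launch_specs,
    }


# All values here are placeholders for illustration only.
config = build_spot_fleet_config(
    instance_types=["c5.4xlarge", "m5.4xlarge"],
    availability_zones=["us-east-1a", "us-east-1b"],
    target_capacity=10,
    image_id="ami-EXAMPLE",
    fleet_role_arn="arn:aws:iam::123456789012:role/example-spot-fleet-role",
)

print(len(config["LaunchSpecifications"]), "launch specifications")
```

A dictionary of this shape is what boto3's EC2 `request_spot_fleet` call accepts as its `SpotFleetRequestConfig` argument. The more type and zone combinations the fleet is offered, the more room it has to pick the best value capacity at any moment.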

With this setup, and as an Amazon Technology Partner, we are able to scale up our processing power by 100x without doing the same to our AWS bill.

Now that’s music to everyone’s ears.