AWS Startups Blog

Postman: Building an API Collaboration Platform for Two Million Developers

Guest post by Ankit Sobti, Co-founder and CTO, Postman


Postman is an API testing and development suite that helps developers, teams, and companies become more efficient throughout the entire development lifecycle of an API. We are based in Bangalore, with an office opening soon in San Francisco. With two million users, Postman is the world’s most popular API testing and development platform.

Humble Beginnings

Postman started off as a simple side project. In 2010, my cofounder, Abhinav, and I were working together at Yahoo, where we developed the front end for Yahoo’s content management platform. To interact with the platform’s REST APIs, we were using cURL, which was both tedious and error-prone. After Yahoo, Abhinav moved on to establish his first startup, where he faced similar frustrations with API development. He solved the problem by writing the first version of Postman and publishing it on the Chrome Web Store. Postman grew rapidly through word of mouth, reflecting the global need for a well-designed developer tool for APIs. We soon had more than one million users.

Postman Sync: Initial Architecture

In March 2015, we built on the success of Postman by launching our Postman Sync service. Here are just a few of the tasks you can perform with Sync:

- Back up and synchronize your data in our cloud

- Collaboratively develop and share APIs

- Use collections to group HTTP requests and organize them into folders to accurately mirror your API

We developed Sync around the three core tenets of security, reliability, and availability. Because the Sync service would be available for our entire user base, we knew we would hit scalability concerns hard and fast, so we architected the system for it in the following ways:

- Node.js — We chose Node.js for its efficiency with data-intensive, real-time applications. Node.js also complemented our existing front-end JavaScript stack. Fortuitously, Node 0.12 (stable) was released with support for clustering right before we launched Sync. Clustering allowed us to scale vertically by load balancing across multiple cores.

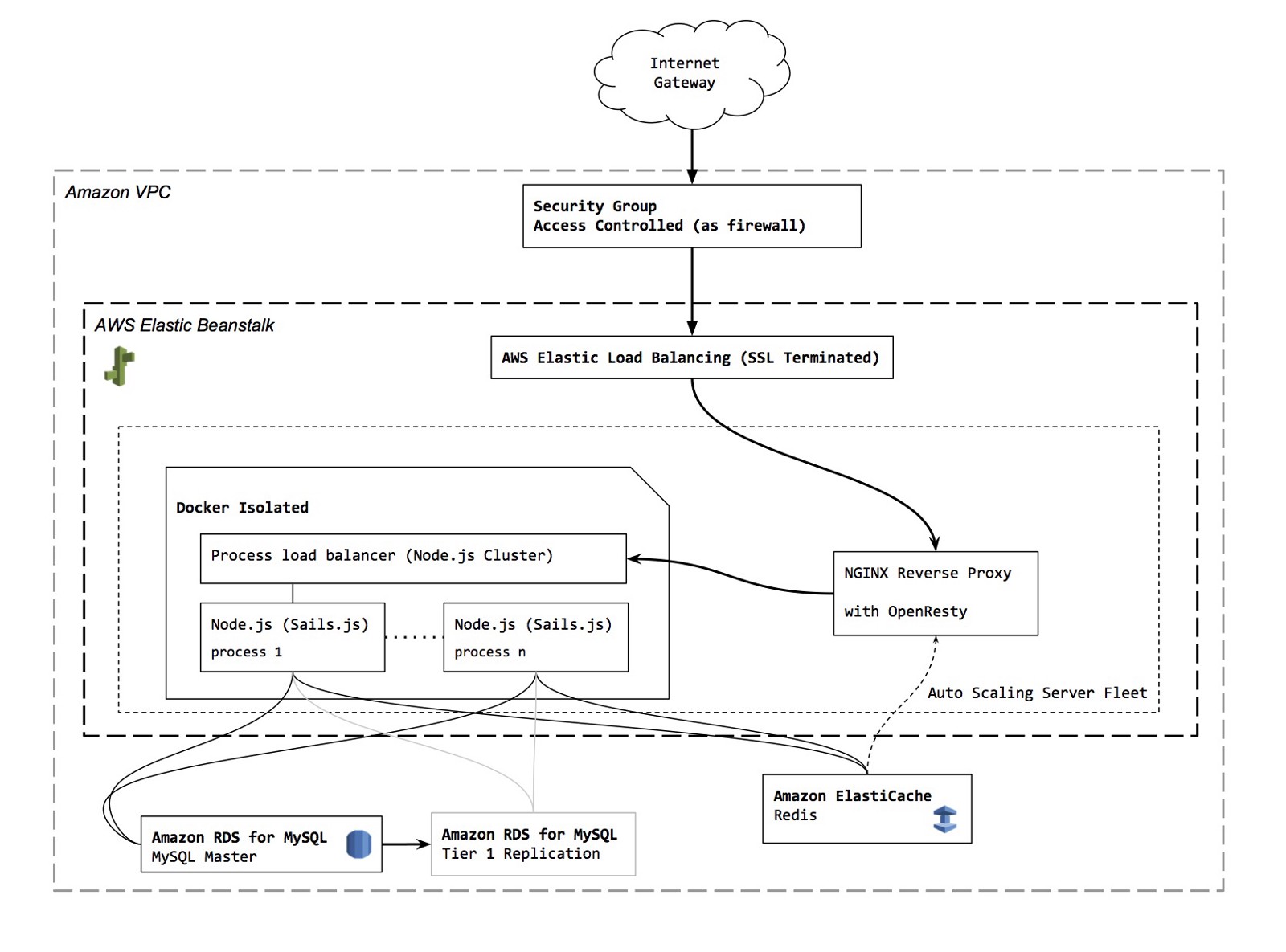

- Session and Socket Management — We abstracted out the management of sessions and sockets through shared Redis stores, while ensuring that no other dependencies of the app relied on shared memory. This allowed us to scale horizontally, load balancing across multiple servers without the need for sticky sessions.

- MySQL — For our database, we chose MySQL, which offers time-tested scalability through clustering and sharding.
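
The clustering approach from the Node.js item above can be sketched roughly as follows. This is a minimal illustration using Node's built-in `cluster` module, not Postman's actual server code; the `workerCount` and `start` helpers, the response body, and the restart-on-exit policy are all assumptions made for the example.

```javascript
const cluster = require("node:cluster");
const http = require("node:http");
const os = require("node:os");

// Older Node.js releases expose isMaster instead of isPrimary.
const isPrimary = cluster.isPrimary ?? cluster.isMaster;

// Hypothetical helper: one worker per CPU core, never fewer than one.
function workerCount(cpuCount = os.cpus().length) {
  return Math.max(1, cpuCount);
}

// Hypothetical entry point: the primary process forks the workers,
// and each worker runs its own HTTP server on the same shared port.
function start(port) {
  if (isPrimary) {
    for (let i = 0; i < workerCount(); i += 1) {
      cluster.fork();
    }
    // Assumed policy: replace any worker that exits.
    cluster.on("exit", () => cluster.fork());
    return;
  }
  http
    .createServer((req, res) => {
      res.end(`handled by worker ${process.pid}\n`);
    })
    .listen(port);
}
```

Calling `start(3000)` would fork one worker per core and share port 3000 among them. Because workers do not share memory, any session or socket state has to live in an external store, which is the role the shared Redis stores play in the design described above.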

Architecturally, we seemed at the time to have made most of the right choices, and we began to serve thousands of incoming requests per hour. On the infrastructure side, however, we began to struggle with peak-hour load. We had leased servers from a major provider that did not offer elastic scalability, so we had to carefully observe a very limited set of load parameters, then manually clone machines and add them to the load balancer. This was not the most reliable approach, and with our infrastructure increasingly tuned for the upper echelons of traffic load, it wasn’t feasible going forward from either a cost or an operational perspective. Managing MySQL and Redis was also proving to be a largely manual effort.

Transitioning to AWS

At this point, we began researching other options and finally settled on AWS for its immense flexibility. Here are some of our favorite AWS services and features that have helped us achieve our goal of high availability:

- AWS Elastic Beanstalk — Elastic Beanstalk gives us the arsenal to scale smartly. Through a single click, we can deploy our Dockerized app servers across multiple Amazon EC2 instances. We have tuned these servers to scale dynamically in response to increasing CPU load and active socket connections. Our peak hour traffic often quadruples compared with base volumes, and we use scheduled scaling to provide a consistent experience. Using environment variables, we have abstracted out all of our system configurations, making transitions between development, stage, and production environments a breeze. All of this is made possible by a well-stocked and configurable monitoring and analysis interface provided by Elastic Beanstalk.

- Optimized Instance Types — Our app servers are more compute intensive than memory intensive. With the flexibility offered by AWS to choose high performance EC2 instances optimized for computation, we can scale up to two to three million requests per hour using a surprisingly small number of C4 instances.

- MySQL on Amazon RDS — The comfort of a managed MySQL service cannot be overstated, and Amazon RDS has significantly improved our operational productivity. Through automated backups, replication, and enhanced security via VPC subnets, we now sleep a lot more peacefully. Controls to tweak DB settings using parameter groups, coupled with Amazon CloudWatch analytics and alarms, have proven to be a great value add.

- Support — Postman is a member of the AWS Activate program. It has been exceptionally helpful, both in terms of the credits offered and the business support. The response time and the quality of response for all our queries to AWS Support have been exceptional.
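
To make the scaling behavior in the Elastic Beanstalk item more concrete, here is a rough sketch of a scale-out decision driven by CPU load and active socket connections, plus the arithmetic behind the hourly request figures. Every threshold and name below (`POLICY`, `desiredInstances`, `requestsPerSecond`) is hypothetical; the post does not state the real values, and Elastic Beanstalk evaluates its own scaling triggers rather than running code like this.

```javascript
// Hypothetical policy values; the real thresholds are not given in the post.
const POLICY = {
  minInstances: 2,
  maxInstances: 8,
  cpuScaleOutPercent: 70,
  socketsPerInstanceLimit: 5000,
};

// Add one instance when either average CPU or sockets per instance
// exceeds its threshold, then clamp to the allowed range.
function desiredInstances(current, metrics, policy = POLICY) {
  const overCpu = metrics.avgCpuPercent > policy.cpuScaleOutPercent;
  const overSockets =
    metrics.activeSockets / current > policy.socketsPerInstanceLimit;
  const target = overCpu || overSockets ? current + 1 : current;
  return Math.min(policy.maxInstances, Math.max(policy.minInstances, target));
}

// Convert an hourly request volume into an average per-second rate.
function requestsPerSecond(requestsPerHour) {
  return requestsPerHour / 3600;
}

console.log(desiredInstances(2, { avgCpuPercent: 85, activeSockets: 1000 }));
console.log(Math.round(requestsPerSecond(2000000)));
console.log(Math.round(requestsPerSecond(3000000)));
```

With these assumed numbers, the first call asks for a third instance because CPU is over the threshold. The last two lines show that two to three million requests per hour averages out to roughly 556 to 833 requests per second across the fleet.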

Above is a peek into our AWS infrastructure. Dockerized instances run our Node.js app, load balanced across multiple CPU cores. Each app uses shared session and socket stores powered by Amazon ElastiCache for Redis and a database powered by Amazon RDS for MySQL. The Dockerized instances scale automatically and are load balanced by AWS Elastic Beanstalk. We also use an NGINX reverse proxy, with OpenResty protection, to rate-limit incoming traffic.
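
For readers unfamiliar with rate limiting, the idea behind the proxy layer can be illustrated with a token bucket. This is a generic sketch of one common technique and not the NGINX or OpenResty configuration described above; the `TokenBucket` class and its parameters are assumptions for illustration only.

```javascript
// Generic token bucket: each request spends one token, and tokens
// refill at a fixed rate up to a maximum capacity.
class TokenBucket {
  constructor(capacity, refillPerSecond, nowSeconds = 0) {
    this.capacity = capacity;
    this.refillPerSecond = refillPerSecond;
    this.tokens = capacity;
    this.lastRefill = nowSeconds;
  }

  // Returns true if the request is allowed at the given time.
  tryRemove(nowSeconds) {
    const elapsed = Math.max(0, nowSeconds - this.lastRefill);
    this.tokens = Math.min(
      this.capacity,
      this.tokens + elapsed * this.refillPerSecond
    );
    this.lastRefill = Math.max(this.lastRefill, nowSeconds);
    if (this.tokens >= 1) {
      this.tokens -= 1;
      return true;
    }
    return false;
  }
}

// A bucket that allows bursts of 2 requests and refills 1 token per second.
const bucket = new TokenBucket(2, 1, 0);
console.log(bucket.tryRemove(0), bucket.tryRemove(0), bucket.tryRemove(0));
```

The third call at time 0 is rejected because the burst capacity is spent; one second later a single request would be allowed again. Passing the clock in as an argument keeps the sketch deterministic and easy to test.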

Security

Even before our migration to AWS, we had a number of security practices in place, including communication encryption, sensitive data encryption, and systems access protection. However, AWS provided us with a hardware firewall to extend blanket protection across all servers. With AWS, we get security benefits like the following:

- Amazon Virtual Private Cloud — Amazon VPC gives us complete control over our cloud network, with private networking that shields us from the Internet. We use the simple-to-use web console to provide access control based on IP ranges, subnets, and ports. This has erased months of effort in configuring (and maintaining) server configurations, while allowing us to experiment with TCP wrappers or iptables. It has also saved us hours that we would have spent setting up new services.

- EC2 with Security Groups and Managed Key Pairs — We tweak and secure our EC2 instances by using common best practices and assigning them to strict security groups. The security groups allow access only to the specific ports that our servers use. Internal access to the servers is well protected by IP-restricted, passwordless SSH access using security keys generated by Amazon EC2 key pairs. The base OS images provided by AWS are already configured for the best security practices, and we have had to spend very little time tweaking them.

- Encryption — We are exploring Amazon Elastic Block Store (Amazon EBS) encryption to encrypt communication between the OS and the disk, along with encrypted data storage. Amazon RDS encryption has also managed to tickle our fancy, thanks to its easy manageability.
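
The access model described in the VPC and security group items, where traffic is allowed only from given IP ranges to given ports, can be sketched as a small allow-list check. This is a simplified IPv4-only model for illustration, not how AWS evaluates security groups internally; the rules, addresses (taken from documentation-reserved ranges), and function names are all hypothetical.

```javascript
// Convert a dotted IPv4 address to an unsigned 32-bit integer.
function ipv4ToInt(ip) {
  return ip
    .split(".")
    .reduce((acc, octet) => ((acc << 8) | Number(octet)) >>> 0, 0);
}

// True if the address falls inside the CIDR block, e.g. "203.0.113.0/24".
function inCidr(ip, cidr) {
  const [base, bitsText] = cidr.split("/");
  const bits = Number(bitsText);
  const mask = bits === 0 ? 0 : (0xffffffff << (32 - bits)) >>> 0;
  return ((ipv4ToInt(ip) & mask) >>> 0) === ((ipv4ToInt(base) & mask) >>> 0);
}

// Hypothetical inbound rules: SSH from one office range, HTTPS from anywhere.
const RULES = [
  { cidr: "203.0.113.0/24", port: 22 },
  { cidr: "0.0.0.0/0", port: 443 },
];

// Default deny: a connection is allowed only if some rule matches it.
function isAllowed(sourceIp, port, rules = RULES) {
  return rules.some((rule) => rule.port === port && inCidr(sourceIp, rule.cidr));
}

console.log(isAllowed("203.0.113.7", 22));
console.log(isAllowed("198.51.100.9", 22));
```

Under these example rules, SSH is accepted from the office range and rejected from anywhere else, while any port without a matching rule, such as the database port, stays closed.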

Going Forward

We have had a great experience with AWS so far. Two offerings that we are particularly excited about using in the near future are Amazon RDS for Aurora and AWS Lambda. Aurora promises to be significantly faster and cheaper, while maintaining MySQL compatibility. Lambda has the potential to replace a whole host of our housekeeping services, particularly around data analytics and archival. We already use Lambda functions to push our CloudWatch metrics to Slack.
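
As an illustration of the kind of housekeeping function mentioned above, here is a rough sketch of a Lambda-style handler that posts a metric reading to a Slack incoming webhook. It is not Postman's actual function: the event shape (`name`, `value`, `unit`), the `SLACK_WEBHOOK_URL` environment variable, and both function names are assumptions, and the sketch relies on the global `fetch` available in Node.js 18 and later.

```javascript
// Build a Slack incoming-webhook payload from a hypothetical datapoint
// of the form { name, value, unit }.
function formatSlackMessage(datapoint) {
  const value = Number(datapoint.value).toFixed(2);
  return { text: `${datapoint.name}: ${value} ${datapoint.unit}` };
}

// Lambda-style async handler. SLACK_WEBHOOK_URL is an assumed
// environment variable holding the webhook address.
async function handler(event) {
  const response = await fetch(process.env.SLACK_WEBHOOK_URL, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(formatSlackMessage(event)),
  });
  if (!response.ok) {
    throw new Error(`Slack webhook returned ${response.status}`);
  }
  return { statusCode: response.status };
}

console.log(
  formatSlackMessage({ name: "CPUUtilization", value: 72.456, unit: "Percent" })
);
```

Keeping the message formatting separate from the network call makes the formatting easy to test without a real webhook. In a deployed function, `handler` would be exported as the Lambda entry point.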

APIs are becoming ubiquitous in both the consumer and the enterprise landscapes, driven by the growth of IoT and an increased focus on microservices. AWS has helped us scale reliably while maintaining performance. We are signing up thousands of users for Postman Sync daily, without breaking a sweat. We will launch some amazing services based on Sync in the coming weeks, furthering our core goal of improving developer productivity.