AWS Startups Blog

SafeDK: Giving Control Back to App Developers in an SDK-Fueled World

SafeDK offers an In-App Protection solution and SDK Marketplace, putting mobile app security and quality back in the hands of app developers. How do we do that?

We’ve all heard of mobile Software Development Kits (SDKs). App developers integrate these off-the-shelf mobile services into their apps for many purposes: advertising and payment, analytics and social, and many more. No doubt these SDKs are a great help in the development process, but they might cause various issues ranging from app slowdown and crashes to excessive battery consumption and malicious behavior.

SafeDK monitors the real-time behavior of mobile SDKs and reports privacy, performance, and stability issues. SafeDK also provides developers with remote control over the SDKs. With a simple click of a button, app developers can turn off an entire SDK or a specific SDK permission in real time, preventing a security breach or crucial bug, with no need to release a new version or wait for users to update.

In this post, I’ll share the story about how SafeDK came to be, discuss lessons learned, and explain how AWS has helped to make it all possible.

Experiencing SDK Issues Firsthand

When I was working for a global location-based services company, I experienced firsthand the difficulty of finding the right SDK for our app. When searching for the SDK that best suited my needs, the only tool I had at my disposal was Google. And googling the SDK only led me to the official SDK page. I couldn’t find any unbiased information or hear about the experience others had with this SDK. Thus, I had no idea if the integration would be easy or painful, or how the SDK actually performs outside of the sandbox.

And soon enough, once our app was deployed and released to users, we started experiencing hiccups.

The problem was we didn’t have just one SDK in our app. Even when we narrowed it down and came to the conclusion that the SDKs were causing the issues, we still didn’t know which one of them was responsible. We felt exposed and vulnerable. We were losing control over our own product.

Before long, I started thinking, “There’s got to be a better way.” A better way of choosing the right SDK. A better way of knowing which SDKs are troublemakers and which ones will give me exactly what I want. A better way to avoid compromising my app’s stability or tarnishing its reputation. But most of all, a better way of saying, “I’m sorry, this isn’t working. It’s not me, it’s you,” and then simply stopping using that SDK. No muss, no fuss.

From Idea to Execution

I joined forces with Ronnie Sternberg and together we founded SafeDK in September 2014. Our vision was clear: give app developers back control over their own apps, even if they use dozens of SDKs.

To provide developers with the best, most comprehensive SDK information, we developed SafeDK Marketplace, a developers’ hub for SDKs. The marketplace is the one-stop-shop to find the right SDK for you because it brings the mobile development community together to research, rate, review, and discuss the latest SDKs. On one hand, app developers can comment and review SDKs, and on the other, SDK developers can use it as a promotional platform as well as engage in technical discussions with developers using their product.

To safeguard their app and their users after SDK integration, we also offer app developers a proactive solution: SafeDK In-App Protection. We provide a plug-in for the development environment that automatically identifies the SDKs in the app, and we analyze their real-time behavior on our dashboard. We also provide the ability to turn SDKs off entirely or just revoke specific permissions for specific SDKs. The real magic is that the effect on users is immediate, so there’s no need to release an update for the app or wait for users to update to a new version.

So Much Data, So Little Time

We wanted to give app developers as much information as possible about how their SDKs really behave out in the wild. We also wanted to tell them about SDK network consumption, SDK contributions to the app’s start time, log and categorize crashes, report on the frequency of location accesses, and so on. That’s plenty of information.

An app has 15 SDKs on average, and popular apps leave that number in the rearview mirror. Apps can have hundreds of thousands of active users each day, with dozens or more active at any given moment. That generates an enormous amount of data.

Sure, we could have attempted to handle all that data by ourselves. And given enough resources, we just might be able to pull it off. But hey, we’re a startup. Our goal is to get our product stable, reliable, and out there making friends ASAP. So we took advantage of some of the many services of AWS:

- First, we deployed our backend Rails server and set up several instances of it running behind Elastic Load Balancing (ELB). We wrote our server code as an API that can be used by both our internal services and websites, and as a result external customers can connect to these APIs as well.

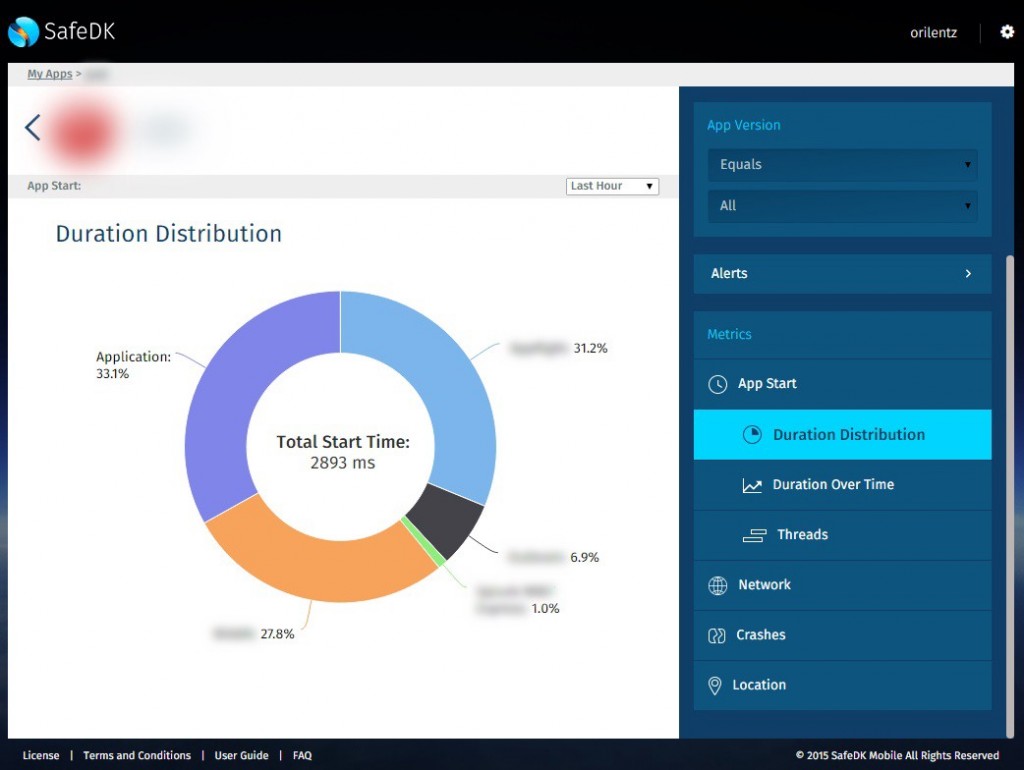

- Second, we store the live data we collect from the apps in real time in an Elasticsearch database, using the AWS hosted solution. Elasticsearch has rich APIs and offers super-fast performance when querying millions of records. The following is an example of an app protected and analyzed on our dashboard, depicting the app’s start time. Here app developers can see that the SDKs in their app account for almost two-thirds of the average start time, with the app itself responsible for only the remaining fraction.

- Third, we store our smaller-sized data on a PostgreSQL database on Amazon RDS. This allows us to easily scale and back up our DB at any time, without affecting user experience whatsoever.

- Fourth, we’re hosting our dashboard website resources on Amazon S3. Our dashboard is implemented with AngularJS, which allows us to create a rich and smooth user experience. Hosting the resources on S3 means we don’t have to handle the traffic for static assets on our own; that burden shifts to AWS.
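
To make the Elasticsearch bullet more concrete, here is a minimal sketch of the kind of aggregation that could sit behind a start-time chart like the one described. The field names (`app_id`, `component`, `start_time_ms`) and the idea that every app launch logs one record per component are assumptions for illustration, not our actual schema. The request body uses the standard Elasticsearch `terms` and `avg` aggregations, and the helper reads a response shaped like the one Elasticsearch returns for that request.

```python
def start_time_query(app_id):
    """Build a search body: average start-time contribution per component."""
    return {
        "size": 0,  # only the aggregations are needed, not the raw hits
        "query": {"term": {"app_id": app_id}},  # hypothetical field name
        "aggs": {
            "by_component": {
                "terms": {"field": "component"},  # hypothetical field name
                "aggs": {"avg_ms": {"avg": {"field": "start_time_ms"}}},
            }
        },
    }


def sdk_share(response, app_component="app"):
    """Fraction of the average start time spent outside the app's own code."""
    buckets = response["aggregations"]["by_component"]["buckets"]
    total = sum(b["avg_ms"]["value"] for b in buckets)
    if total == 0:
        return 0.0
    sdk_total = sum(
        b["avg_ms"]["value"] for b in buckets if b["key"] != app_component
    )
    return sdk_total / total


# Made-up response, only to show the shape the helper expects.
example_response = {
    "aggregations": {
        "by_component": {
            "buckets": [
                {"key": "app", "avg_ms": {"value": 400.0}},
                {"key": "ads_sdk", "avg_ms": {"value": 500.0}},
                {"key": "analytics_sdk", "avg_ms": {"value": 300.0}},
            ]
        }
    }
}
print(round(sdk_share(example_response), 2))  # 0.67
```

With these invented numbers the SDKs account for two-thirds of the start time; the values are chosen only to exercise the helper and are not measurements.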

Propagation and Preparation

If you’ve ever taken a course in physics, you know the universe doesn’t have an instant workflow. Things take time.

At SafeDK, our immediate priority was to enable an app to know which SDKs are on and which are off. That data is subject to change from time to time, and somehow we needed to propagate that information to mobile devices without harming user experience or compromising the apps we set out to protect.
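
As a rough illustration of what “which SDKs are on and which are off” can look like as data, here is a minimal sketch. The JSON layout, the `SdkPolicy` type, and the `is_allowed` helper are all hypothetical names invented for this example; they are not the SafeDK SDK’s real format or API. The one design choice worth noting is that an SDK missing from the data is treated as allowed, so incomplete data never breaks the app.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SdkPolicy:
    """Hypothetical per-SDK switch: fully on/off plus revoked permissions."""
    enabled: bool = True
    revoked_permissions: frozenset = frozenset()


def parse_policies(raw):
    """Turn a decoded JSON object into a dict of SDK name -> SdkPolicy."""
    return {
        name: SdkPolicy(
            enabled=entry.get("enabled", True),
            revoked_permissions=frozenset(entry.get("revoked_permissions", [])),
        )
        for name, entry in raw.items()
    }


def is_allowed(policies, sdk, permission=None):
    """Return True if the SDK (and optionally one permission) may be used."""
    policy = policies.get(sdk)
    if policy is None:
        return True  # unknown SDK: fail open rather than break the app
    if not policy.enabled:
        return False
    return permission is None or permission not in policy.revoked_permissions


policies = parse_policies({
    "ads_sdk": {"enabled": False},
    "analytics_sdk": {"revoked_permissions": ["location"]},
})
print(is_allowed(policies, "ads_sdk"))                    # False
print(is_allowed(policies, "analytics_sdk", "location"))  # False
print(is_allowed(policies, "analytics_sdk", "network"))   # True
```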

The real challenge presented itself when it became apparent that we’re living in global times. Our servers could be residing on one side of the world while our users could be all the way on the other side. Or possibly even scattered around the planet.

So what’s a startup to do? The solution came in the form of the Amazon CloudFront service. I recommend using it as much as you can. Trust me, it’ll save you a lot of time, effort, and headaches. Why? Because CloudFront edge servers will always be physically close to your users, thus improving performance. It’ll save you the trouble of dealing with scale early on and help you get off the ground and running much more quickly.
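
On the device side, one way to consume a small settings file served through a CDN is to cache it for a short time and keep the last good copy when a refresh fails. The sketch below shows that pattern only; the `CachedConfig` class, the five-minute TTL, and the JSON payload are assumptions for illustration, not a description of our actual client. The `fetch` argument is any function that returns the raw JSON text, which keeps the example independent of a specific HTTP library.

```python
import json
import time


class CachedConfig:
    """Hypothetical client-side cache for a small JSON settings file."""

    def __init__(self, fetch, ttl_seconds=300, clock=time.monotonic):
        self._fetch = fetch        # callable returning the raw JSON text
        self._ttl = ttl_seconds    # how long a fetched copy counts as fresh
        self._clock = clock        # injectable so the cache is easy to test
        self._value = {}           # last good copy (empty until first fetch)
        self._fetched_at = None

    def get(self):
        now = self._clock()
        fresh = (
            self._fetched_at is not None
            and now - self._fetched_at < self._ttl
        )
        if not fresh:
            try:
                self._value = json.loads(self._fetch())
                self._fetched_at = now
            except (OSError, ValueError):
                # Network or parse failure: keep serving the last good copy
                # and try again on the next call.
                pass
        return self._value
```

In a real client, `fetch` could be something like `lambda: urllib.request.urlopen(url, timeout=2).read()`, where `url` points at a file behind your CloudFront distribution. Because a failed refresh falls back to the previous copy, a slow or unreachable network does not block the app.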

To get insights on your traffic from CloudFront, I recommend using CloudFront logs on S3 together with Amazon Redshift (see here to get started).

Preparing for Spikes

Most of the time, our traffic’s requests per minute (RPM) are steady. However, these are mobile apps we’re dealing with. One notification sent to thousands or more devices could trigger sudden spikes in app usage and subsequent spikes in our traffic over a long period of time.

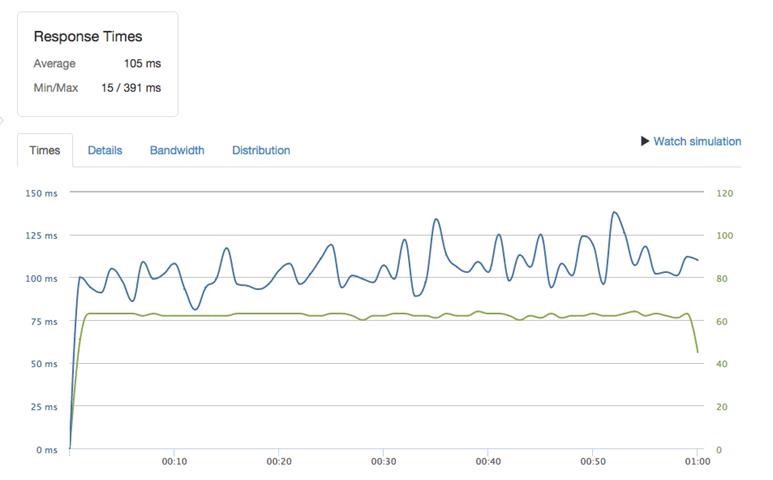

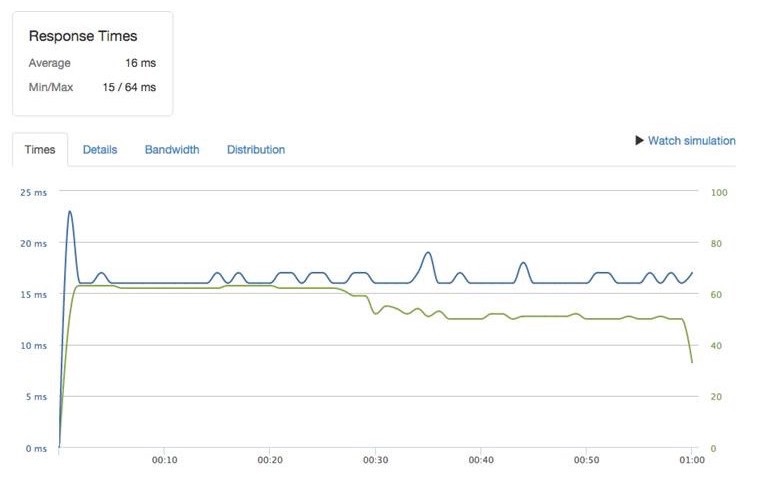

So it’s important to have web servers that can best handle these sudden spikes. AWS offers a variety of instances, and you should choose the best one for you. For instance (no pun intended), we found that T2 instances work considerably better for us than M3 instances of the same model. Switching improved our average response time during those spikes from 106 ms to 16 ms. That’s quite the improvement, wouldn’t you say?

You can easily benchmark your servers using https://loader.io/, and then choose the instance type that handles your load best.

The following screenshot shows our test results. On the left side, with M3 instances, we got an average response time of 106 ms, while on the right side, with T2 instances, we got an average response time of 16 ms. So choosing the right instance for us was a no-brainer.
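
To put those two averages in perspective, here is the arithmetic as a small sketch. The helper names are made up for this example; the only inputs are the two averages quoted above.

```python
def speedup(before_ms, after_ms):
    """How many times faster the second measurement is."""
    return before_ms / after_ms


def reduction_percent(before_ms, after_ms):
    """Percentage drop in response time between the two measurements."""
    return (before_ms - after_ms) / before_ms * 100


m3_avg_ms, t2_avg_ms = 106, 16  # averages from our test runs
print(speedup(m3_avg_ms, t2_avg_ms))                      # 6.625
print(round(reduction_percent(m3_avg_ms, t2_avg_ms), 1))  # 84.9
```

In other words, the T2 run answered about 6.6 times faster, an 85% drop in average response time for our workload.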

Preparing for Spikes 2: Auto Scaling

Currently we’re in the process of integrating Auto Scaling into our production environment, so that when we experience sudden spikes we can simply have new instances created automatically (and temporarily) to help carry the load.

At first we thought about implementing Auto Scaling by creating a new Amazon Machine Image (AMI) on every deployment, ready for use. That way when Auto Scaling kicks in, it’ll simply create new instances of the latest AMI (i.e., the latest deployed web server version). However, creating an AMI is a relatively slow process, and we preferred not to depend on it for every deployment.

Some other options we’re exploring are creating an Amazon Elastic Block Store (Amazon EBS) snapshot on each deployment and using it when creating the new instances, or uploading our latest code to S3 on each deployment and having each new Auto Scaling instance pull it from S3 when it starts.
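
To illustrate the S3 option, here is a minimal sketch of a boot-time step that a freshly launched instance could run before starting the web server. It is not our deployment code: the function name, the zip packaging, and the example bucket, key, and path in the usage note are hypothetical. The `s3_client` argument is assumed to be a boto3 S3 client, whose `download_file(Bucket, Key, Filename)` method is the only AWS call used; it is passed in so the sketch itself does not need boto3 installed.

```python
import zipfile
from pathlib import Path


def pull_release(s3_client, bucket, key, dest_dir):
    """Download a zipped release from S3 and unpack it into dest_dir."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    archive = dest / "release.zip"

    # boto3's S3 client writes the object straight to a local file.
    s3_client.download_file(Bucket=bucket, Key=key, Filename=str(archive))

    with zipfile.ZipFile(archive) as zf:
        zf.extractall(dest)
    archive.unlink()  # the unpacked files are all the server needs
    return dest
```

On an instance this might be called as `pull_release(boto3.client("s3"), "my-deploy-bucket", "releases/latest.zip", "/srv/app")`, with all three values being placeholders. The trade-off compared with baking an AMI per deployment is that each new instance spends a little extra time downloading code at launch, in exchange for deployments that no longer wait on AMI creation.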

The Road Ahead

Today we have a product we’re very proud of. We continuously get feedback about how we’ve achieved so much in so little time. None of that would have been possible without a handful of AWS capabilities. As those capabilities continue to grow, we continue to explore what else we can use and benefit from. Along with our work on integrating Auto Scaling, we plan to improve our automation process by using AWS Device Farm, a service that lets you test apps on real devices in the AWS cloud.

We’ve hit the ground running and are excited to keep on moving forward. We’re expanding and evolving our product, giving app developers the control and information they deserve about their app. As we grow our customer base we’re hearing great feedback, both from our current customers and other interested parties.