AWS Storage Blog

Maximizing Microsoft SQL Server Performance with Amazon EBS

AWS customers have been running mission-critical Windows workloads, like SQL Server, on AWS for more than 10 years. In this post, I discuss how to maximize SQL Server performance with Amazon EBS storage.

Persistent block storage is a critical component of a relational database management system (RDBMS) and is ultimately responsible for the speed, security, and durability of your critical data. AWS provides high performance, easy-to-use block storage to suit SQL Server’s needs through EBS.

This post is relevant for SQL Server database architects, administrators, and developers. It provides an overview of optimal storage configuration on the AWS platform. Whether you are currently using AWS or looking to migrate your on-premises workloads to the cloud, you can gain some insight and a basic understanding of EBS.

Amazon EBS overview

EBS is a highly performant block storage service available in all AWS Regions. With EBS, you can create and attach volumes to your SQL Server instances with just a few clicks. This removes the need to configure host bus adaptors (HBAs), switches, network bandwidth, disk cache, controllers, storage area networks, and more.

EBS volumes connect to your instance using a dedicated storage network, providing the flexibility to provision fast and reliable volumes for servicing critical workloads on SQL Server.

EBS volumes are elastic. You can make the following modifications without any disruption to your workload:

- Increase a volume size up to 16 TiB.

- Modify a volume performance up to 64,000 IOPS.

- Switch between SSD and HDD volumes.

EBS volumes persist independently, meaning they can be detached and attached to different instances within the same Availability Zone. One instance can have many volumes attached, but a volume can only attach to one instance at a time.

Some other features that make EBS the logical choice for SQL Server:

- 99.999 percent availability

- Crash-consistent point-in-time snapshot support

- Encryption support

SQL Server and gp2

While you can use a single Provisioned IOPS (io1) volume to meet your IOPS and throughput requirements, General Purpose SSD (gp2) volumes offer a leading balance of price and performance for SQL Server workloads when configured appropriately.

Gp2 volumes deliver single-digit millisecond latencies and the ability to burst to 3,000 IOPS for extended periods. This property is well suited to SQL Server. The IOPS load generated by a relational database like SQL Server tends to spike frequently. For example, table scan operations require a burst of throughput, while other transactional operations require consistent low latency.

Gp2 volumes satisfy the preceding requirements in a cost-effective manner. EBS analyzed a wide variety of application workloads and carefully engineered gp2 to take advantage of this spiking, knowing that you rarely exhaust your accumulated burst of IOPS.

Burst credits accumulate at a rate of three per configured GB per second, and each one pays for one read or one write. Each volume starts with and can accumulate up to 5.4 million credits, and you can spend up to 3,000 per second per volume.

For example, if you have a 200-GiB gp2 volume, you get a baseline of 600 IOPS and a burst up to 3,000 IOPS until you use your 5.4 million credits. The 200-GiB volumes burst for 37.5 minutes (burst rate less accumulation rate).

You can monitor the burst-bucket level for gp2 volumes using the EBS BurstBalance metric available in Amazon CloudWatch. Different-sized gp2 volumes deplete and replenish at different rates depending on the size of the volume. The larger a volume, the higher the baseline performance, and the faster it replenishes the credit balance. For more information about how IOPS are measured, see I/O Characteristics and Monitoring.

CloudWatch also allows you to set an alarm that notifies you when the BurstBalance value falls to a certain level.

For SQL Server benchmarking, instead of provisioning a single 1,000-GiB gp2 volume, I created five 200-GiB volumes and used RAID 0 disk striping in Windows to provide the extra performance needed.

| General Purpose SSD (gp2) | ||||

| Capacity | 1 x 1,000 GiB Volume | 5 x 200 GiB Volume (RAID 0) | ||

| Price | $100/month ($0.10/GB-month) | |||

| Burst | Baseline | Burst | Baseline | |

| Max. Throughput | 250 MiB/s | 250 MiB/s | 1,250 MiB/s | 750 MiB/s |

| Max. IOPS** | 3,000 | 3,000 | 15,000 | 3,000 |

** based on 16 KiB I/O size

While rare, if your gp2 volume does happen to use all of its I/O credit balance, the maximum IOPS performance of the volume remains at the baseline IOPS performance level (the rate at which your volume earns credits). The volume’s maximum throughput reduces to the baseline IOPS multiplied by the maximum I/O size. In this case, the 200-GiB gp2 volume with an empty credit balance has a baseline performance of 600 IOPS and a throughput limit of 150 MiB/s (600 I/O operations per second * 256 KiB per I/O operation = 150 MiB/s).

If you frequently hit the volume performance baseline level (due to an empty I/O credit balance), then you could consider using a larger gp2 volume with a higher baseline performance level. Or you could switch to an io1 volume to get sustained IOPS performance for a higher cost per GB-month.

Best practices for SQL Server configurations

There are several best practices you should consider when configuring your SQL Server instance.

Selecting the right volume

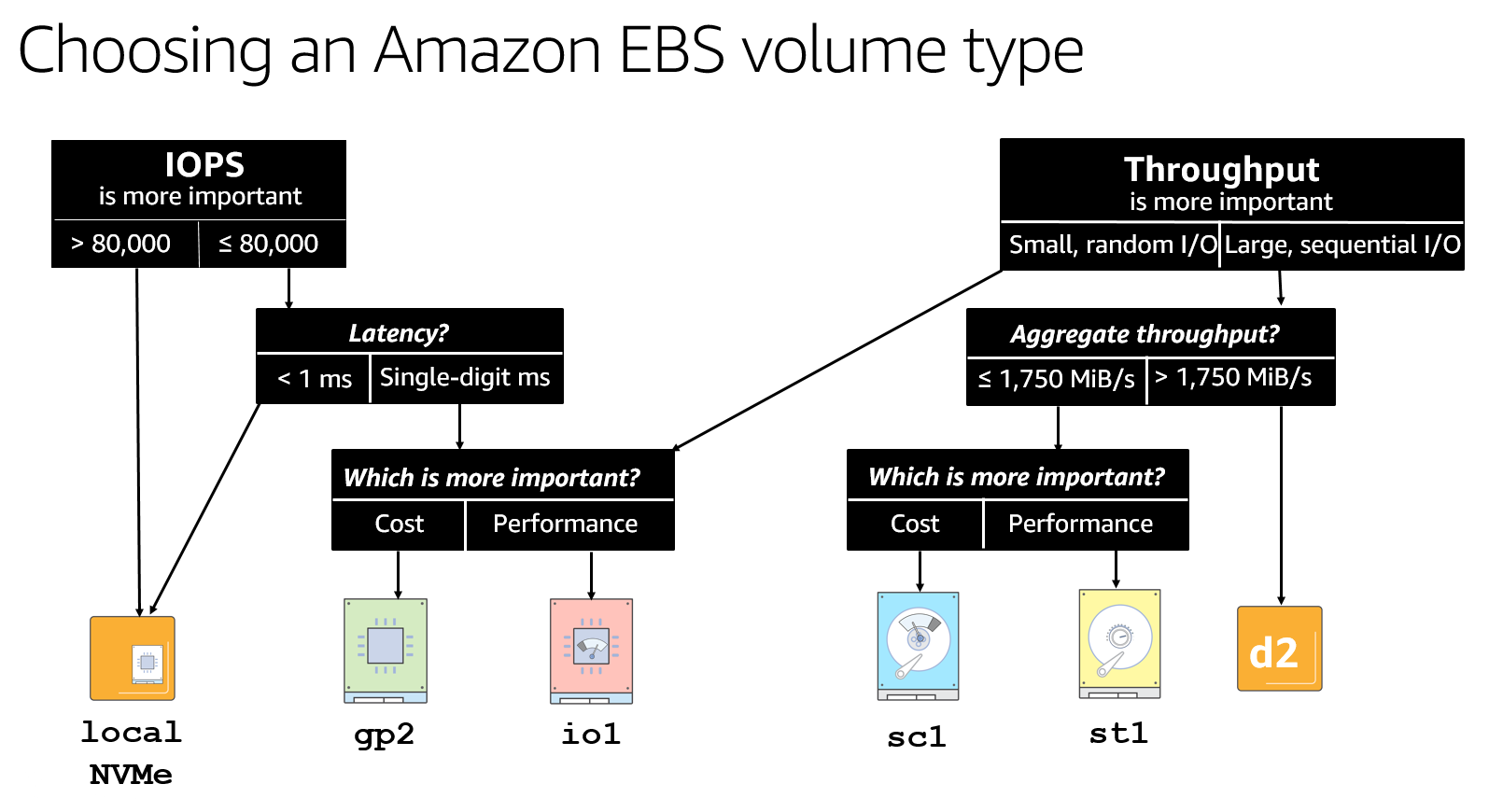

Selecting the right volume for your workload involves closely examining your performance, latency, and cost requirements. If you are unsure, start with gp2 volumes, which offer burst capability and a strong balance of price and performance for most workloads. Consult the following flowchart when deciding on an EBS volume type.

Using EBS-optimized instance types

An EBS-optimized instance uses an optimized configuration stack and provides additional dedicated capacity for EBS I/O. This optimization provides the best performance for your EBS volumes by minimizing contention between EBS I/O and other traffic from your instance. All current-generation instance types enable it by default.

Selecting the correct instance size

After you have selected the most suitable family for your instance, make sure that your instance size supports the storage bandwidth that you need for your EBS volumes.

The key is to make sure that your instance has the required throughput for the EBS volumes to attach. Pay particular attention to the published EBS max throughput/instance and max throughput/volume, as specified by the EBS volume type and EBS-optimized instances, to make sure that you obtain the correct balance.

For example, if you attach a single 20,000-IOPS volume to an r5.4xlarge instance, you reach the instance limit of 18,750 IOPS before you reach the volume limit of 20,000 IOPS.

Striping EBS volumes

Stripe your EBS volumes to optimize performance and exceed the limits of a single volume’s performance.

There is no need to implement any RAID redundancy levels because EBS data is already replicated with 99.999 percent availability and designed for an annual failure rate (AFR) of between 0.1% – 0.2%. For example, implementing RAID1 would give you an unnecessary 50 percent reduction in both EBS bandwidth and capacity.

Using locally attached NVMe storage for tempdb

Many of the new Nitro instance types include local NVMe SSD physically attached to the host. These ultra-low latency disks are perfect for those queries using tempdb. However, local SSD data only persists for the life of the instance.

Using Throughput Optimized HDD (st1)

You may still prefer the tried and trusted method of backing up to an attached EBS volume. The high-throughput performance of the st1 volumes allows for fast local backups and restores for half the cost of SSD storage.

Taking EBS snapshots

Snapshotting your backup volumes to Amazon S3 is an effective strategy for long-term backup. Use Amazon Data Lifecycle Manager (Amazon DLM) to automatically create and (optionally) delete snapshots. Also, EBS integrates with AWS Backup, a fully managed backup service.

You can take Microsoft Volume Shadow Copy Service (VSS)–enabled snapshots through Amazon EC2 Systems Manager Run Command.

Innovation in EBS

With EBS, you have the flexibility to select the storage solution that matches and scales with your workload. EBS is the foundation for some of the world’s most critical SQL Server workloads and evolves to meet your requirements. As EBS innovates, these benefits pass directly on to you through performance, reliability, and security. Here’s a brief timeline of some significant launches over the years:

2006 – Amazon EC2 launches with instance storage.

2008 – EBS launches on magnetic storage.

2012 – AWS introduces Provisioned IOPS and EBS-optimized instances.

2014 – SSD-Backed general-purpose storage enhances EBS.

2014 – EBS data volume encryption replaces third-party security tools.

2014 – Gp2 and io1 volume performance double.

2015 – Encrypted EBS boot volumes launches.

2016 – EBS adds Throughput Optimized HDD (st1) and Cold HDD (sc1) volume types.

2017 – Amazon EBS elastic volumes enable live volume modification.

2017 – Provision IOPS SSD (io1) performance increases by 60 percent.

2017 – EC2 offers Application consistent snapshots with Microsoft VSS.

2018 – General Purpose SSD (gp2) performance increases by 60 percent.

2018 – Provision IOPS SSD (io1) performance doubles.

2019 – EBS integrates with AWS Backup.

2019 – Encryption by default available for new EBS volumes.

2019 – EBS adds ability to take point-in-time crash-consistent snapshot across multiple EBS volumes.

Conclusion

AWS continues to be the best place to run SQL Server, thanks to services like EBS and the innovation on the Windows engineering team. For more information, see the EBS page or SQL Server page.