AWS Partner Network (APN) Blog

Understanding the Data Science Life Cycle to Drive Competitive Advantage

|

|

|

|

By Josh Poduska, Chief Data Scientist at Domino Data Lab

A recent study from MIT confirmed what people in data science already know—while almost all companies expect artificial intelligence (AI) to be the source of a sustained competitive advantage, very few have been able to incorporate it into anything they do. Only 20 percent have any real AI work happening, and just a fraction of those have it deployed extensively.

Across industries, people recognize the need for data science, but they don’t see the path to get there. Many have incorrect assumptions about what data science is and limited understanding how to support it. This results in false starts, wasted resources, and building models that never go into production.

At Domino Data Lab, an AWS Partner Network (APN) Advanced Technology Partner with the AWS Machine Learning Competency, we work with companies in all stages of their data science journey and have seen how the best companies approach data science.

One important thing we’ve seen is that the best companies understand that data science has life cycle: a defined process for choosing projects, managing them through deployment, and maintaining models post-deployment.

Check out Domino on AWS Marketplace >>

The Model Myth

Companies struggling with data science don’t understand the data science life cycle. As a result, they fall into the trap of the model myth. This is the mistake of thinking that because data scientists work in code (usually R or Python), the same processes that works for building software will work for building models. Models are different, and the wrong approach leads to trouble.

For example, software engineers store and version code in Git. For data scientists, the unit of work isn’t the code, but the experiment. To understand how a model was built, you need everything in one place: code, data, parameters, compute environments, libraries, and results. Having code versioned in Git isn’t nearly enough to understand or explain past work.

With data science, insights about the business are important byproducts of model development. Even a failed project creates information that should be shared. It’s these insights that create competitive advantage. Data science programs need ways to preserve these insights, and make them discoverable. Knowledge management is far more important in data science than in traditional software engineering.

Finally, data science teams have constantly changing tools and compute requirements. They may need access to a large number of graphics processing units (GPUs) for a few days, and then not again for months. They may try several different machine learning (ML) libraries before deciding on a path. People may be working in several languages at once. Having a “locked down” workbench and limited compute limits how good the work can be.

Experienced data science leaders know that software engineering processes don’t work for data science. They envision a different life cycle for quantitative research. While the specifics of the life cycle change, there are common high-level phases. Understanding what takes place in each phase is critical to success.

Ideation and Exploration

Quantitative research starts with selecting the right project. Teams should look for projects that have a large positive impact on the business. To find them, people should start by looking at potential business outcomes they might be able to deliver. A best practice is to maintain a backlog of potential projects and engage with stakeholders to prioritize them.

Before committing to delivery, people should look at prior work, available data, and delivery requirements. By the end of this phase, people should have a solid understanding of what is being built, how long it might take, and how success will be measured.

Important considerations for this phase:

- Maintain a central repository of past work. People need to be able to find and understand past work, so they don’t start from scratch when asking new questions.

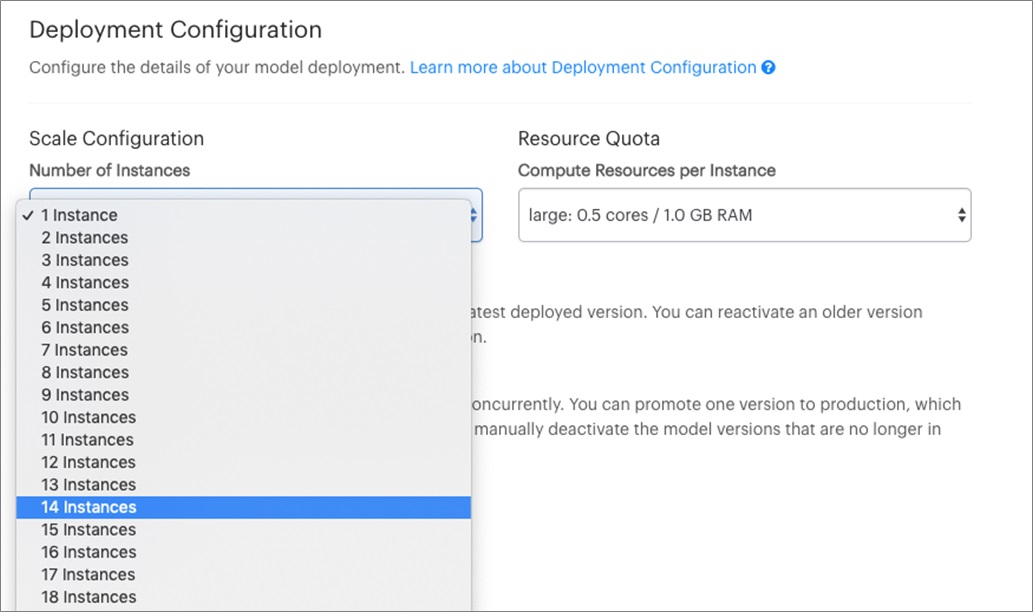

- Provide the right hardware. Researchers need access to large, high-memory machines so they can work with data sets and get through them quickly.

- Use new packages and tools safely. People need isolated environments so they can try new libraries, packages, and approaches without breaking past work or disrupting environments for colleagues.

- Share results back to the team. Whether people hit a dead end or make a new discovery, they need to share this with the team so others can learn from it. While not all projects pan out, all projects should deliver some insight.

Figure 1 – Domino provides easy access to the right Amazon EC2 instance for the job.

Experimentation and Validation

At some point, the process changes from exploration to building models. The work often shifts from exploration in notebooks to batch scripts written in code. People run experiments, review results, and make changes based on what they’ve learned.

This process should involve validation, where others confirm that the approach makes sense. This isn’t like a code review, because the code might be just a short script. Instead, reviewers need to look at everything from the assumptions and data to the model’s sensitivity to changes. They should look at the impact of the model going bad in production and make sure this risk is acceptable.

This phase can be slow when experiments are computationally intensive (e.g. model training tasks). This is also where the “science” part of data science can be especially important: tracking variations in your experiments, ensuring past results are reproducible, getting feedback through a peer review process.

Important considerations for this phase:

- Remove bottlenecks. Scale out compute resources to run many computationally intensive experiments at once.

- Collaborate. Data science teams need to be able to share ideas and build on one another’s insights. Managers need to be able to see the status of in-flight projects to know if they’re on track or in the weeds.

- Track work (i.e. experiments). All work needs to be reproducible so people doing validation can see all the experimental results and not just the favorable ones.

- Engage with downstream stakeholders. Those who will be working with the results of the project should be involved, if only to understand what’s coming, when it’s coming, and how they will need to support it or use it.

Coatue is a leading investment management firm in a highly-competitive market where small incremental gains can lead to large returns. They keenly understands the importance of accelerating experimentation faster than the competition, while also insisting on rigor in their quantitative research methods.

Coatue uses Domino on AWS to accelerate iterative experimentation, resulting in a quicker path to production and highly accurate models while reducing operating costs. Domino is deployed on AWS as a central platform for both developing and deploying models. It tracks what models ran, when, and by whom. As a system of record, tracking the generation of new models, Domino provides clear evidence of custody throughout the model management process.

See how Coatue is using Domino Data Lab and AWS >>

Operationalization and Deployment

Data science work is valuable when it creates a positive business outcome. To do this, it has to be operationalized. This may be in the form of an API, web application, or daily report in people’s inboxes.

Deploying models can be complex. Projects frequently fail because people don’t understand what it takes to actually deliver the model. Perhaps they built a model that didn’t work with the available infrastructure, or required data that wasn’t available in a timely manner. Perhaps DevOps didn’t have engineers who could take the model from the workbench to production. In the end, no matter how good the model was in the lab, it has no value until it gets deployed.

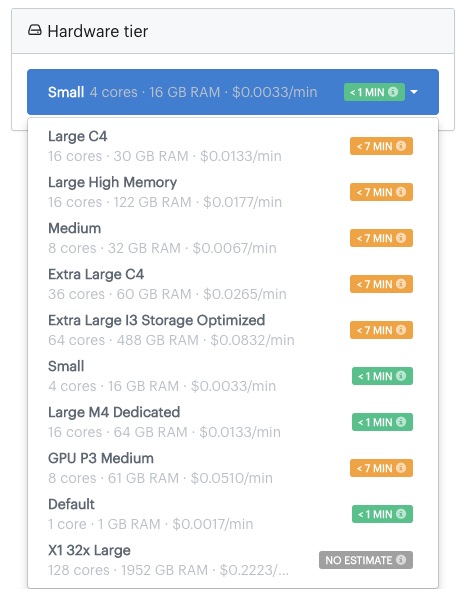

Operationalizing data science also involves additional engineering work and the provisioning of additional servers, storage, and networking. A challenge in this phase is that you may not know what resources you need until you’re ready for or in production. Likewise, you may not know when you’ll need to push an update or roll back a production model. You may not even have a good understanding of the number of servers needed to manage the load on end points.

The uncertainty around resource requirements means projects get stalled and starved for resources frequently.

Important considerations for this phase:

- Deliver models in the way people will use them. This may be dashboards, apps, batch jobs, APIs, or self-service tools. A key requirement is that they can scale on reliable infrastructure.

- Have ready access to infrastructure. Production models require scalable, high-availability hardware, storage, and other infrastructure. Using AWS will accelerate deployment, make it easier to “right size” the hardware, and provide high availability and security.

- Lower the cost of iteration. Organizations that have high overhead to put a model into production deploy fewer of them, meaning lower quality models and higher operating costs.

Moody’s Analytics, a long-time leader in financial modeling, has sought to optimize their ability to develop and deploy models faster. Over time, their models have been widely adopted and become vital to many firms.

To stay at the forefront of an industry that runs on models, Moody’s Analytics saw an opportunity to customize offerings to satisfy unique client requirements. The company had already been successful with a services-based model whereby Moody’s Analytics consultants build custom models for clients in the field, but this business model is expensive, both for Moody’s Analytics and customers, especially at a scale. Moody’s Analytics sought a more scalable approach for building custom models.

Moody’s Analytics has deployed Domino running on AWS to build new models and to monitor, manage, and enhance existing models once they’ve gone into production.

“With Moody’s Analytics know-how and workflow, coupled with Domino and AWS infrastructure, we have been able to accelerate model development, which means information gets into the hands of our clients who need it faster,” says Jacob Grotta, managing director of risk and finance analytics at Moody’s Analytics. “Our customers are excited because their needs are going to be answered in new ways that would previously have been impossible.”

See how Moody’s Analytics is using Domino and AWS >>

Figure 2 – On-demand scaling of API endpoints means production models aren’t starved for resources.

Monitoring and Iteration

Monitoring production models is different from monitoring other infrastructure. Because models automate decision-making at high volume, they introduce new risks that organizations may not understand. Models going bad in production have cost some companies hundreds of millions of dollars or put them out of business. Yet often, data science leaders can’t see what production models are doing.

There are many reasons models might degrade over time. A product recommendation model won’t adapt to changing tastes. A loan risk model won’t adapt to changing economic conditions. With fraud detection, criminals adapt as models evolve. Monitoring tools can tell if a model is running, but they can’t tell what it’s doing for the business.

Data science teams need to be able to detect and react quickly when models drift. A common problem is that companies lose the context of how a model was built, so they can’t update it. It’s also a problem when there are no resources to deploy the new model. In both cases, the damage can be tremendous.

The ability to monitor and iterate on production models is a critical capability.

Important considerations for this phase:

- Be able to see all models. The data science team needs to have visibility and access to production models. This includes access to logs for performance monitoring.

- Development linked to production. Models in production need to have a direct link to the development environment that built them so people can understand how the model operates.

- Retrain and/or roll back. People need to be able to update models quickly and efficiently. This means being able retrain existing models with new data or rolling back to previous versions as a stopgap measure.

Conclusion

Data science represents a new organizational capability with new processes and skills, and tremendous potential for the business. But many companies are trying to develop their capabilities by taking the wrong approach. They treat data science as an extension of software engineering or a side project, disconnected from the business.

Our experience at Domino Data Lab has been that organizations that excel at data science are those that understand it as a unique endeavor, requiring a new approach. Successful data scientists describe a life cycle for selecting projects, building and deploying models, and monitoring them to ensure they’re delivering value.

Want to learn more about organizations that are doing data science right with Domino and AWS? Read about Mashable and DBRS and discover more customer stories.

The content and opinions in this blog are those of the third party author and AWS is not responsible for the content or accuracy of this post.

.

|

|

Domino Data Lab – APN Partner Spotlight

Domino Data Lab is an AWS ML Competency Partner. They add cloud capabilities specific to data science workflows to help data scientists develop and deploy models faster.

Contact Domino | Solution Overview | Customer Success | Buy on Marketplace

*Already worked with Domino? Rate this Partner

*To review an APN Partner, you must be an AWS customer that has worked with them directly on a project.