AWS Architecture Blog

Intelligently Search Media Assets with Amazon Rekognition and Amazon ES

Media assets have become increasingly important to industries like media and entertainment, manufacturing, education, social media applications, and retail. This is largely due to innovations in digital marketing, mobile, and ecommerce.

Successfully locating a digital asset like a video, graphic, or image reduces costs related to reproducing or re-shooting. An efficient search engine is critical to quickly delivering something like the latest fashion trends. This in turn increases customer satisfaction, builds brand loyalty, and helps increase businesses’ online footprints, ultimately contributing towards revenue.

This blog post shows you how to build automated indexing and search functions using AWS serverless managed artificial intelligence (AI)/machine learning (ML) services. This architecture provides high scalability, reduces operational overhead, and scales out and in automatically based on demand, with a flexible pay-as-you-go pricing model.

Automatic tagging and rich metadata with Amazon ES

Asset libraries for images and videos are growing exponentially. With Amazon Elasticsearch Service (Amazon ES), this media is indexed and organized, which is important for efficient search and quick retrieval.

Adding correct metadata to digital assets based on an enterprise-standard taxonomy helps you narrow down search results. This includes information like media formats, but also richer metadata like location, event details, and so forth. With Amazon Rekognition, an advanced ML service, you do not need to manually tag and index these media assets. This automatic tagging and organization frees you up to gain insights like sentiment analysis from social media.

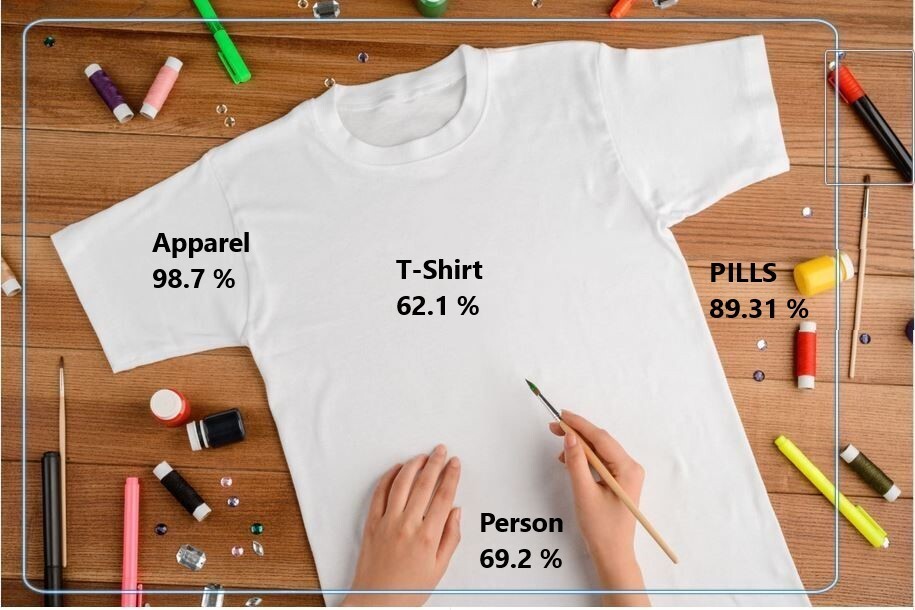

Figure 1 is tagged using Amazon Rekognition. You can see how rich metadata (Apparel, T-Shirt, Person, and Pills) is extracted automatically. Without Amazon Rekognition, you would have to manually add tags and categorize the image. This means you could only do a keyword search on what’s manually tagged. If the image was not tagged, then you likely wouldn’t be able to find it in a search.

Data ingestion, organization, and storage with Amazon S3

As shown in Figure 2, use Amazon Simple Storage Service (Amazon S3) to store your static assets. It provides high availability and scalability, along with unlimited storage. When you choose Amazon S3 as your content repository, multiple data providers can be configured to ingest data for future consumption by downstream applications. In addition to providing storage, Amazon S3 lets you organize data into prefixes based on the event type and captures S3 object mutations through S3 event notifications.

S3 event notifications are invoked for a specific prefix, suffix, or combination of both. They integrate with Amazon Simple Queue Service (Amazon SQS), Amazon Simple Notification Service (Amazon SNS), and AWS Lambda as targets. (Refer to the Amazon S3 Event Notifications user guide for best practices). S3 event notification targets vary across use cases. For media assets, Amazon SQS is used to decouple the new data objects ingested into S3 buckets and downstream services. Amazon SQS provides flexibility over the data processing based on resource availability.
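The prefix and suffix filtering described above can be sketched as a notification configuration built in Python. This is a minimal sketch, not the configuration used in this post: the queue ARN, prefix, and suffix are hypothetical placeholders, and `apply_notification` assumes you pass in a `boto3.client("s3")` object.

```python
def build_queue_notification(queue_arn, prefix="", suffix=""):
    """Return an S3 NotificationConfiguration that sends object-created events to SQS."""
    rules = []
    if prefix:
        rules.append({"Name": "prefix", "Value": prefix})
    if suffix:
        rules.append({"Name": "suffix", "Value": suffix})

    queue_config = {"QueueArn": queue_arn, "Events": ["s3:ObjectCreated:*"]}
    if rules:
        # Only attach a filter when a prefix and/or suffix was requested.
        queue_config["Filter"] = {"Key": {"FilterRules": rules}}
    return {"QueueConfigurations": [queue_config]}


def apply_notification(s3_client, bucket, config):
    """Apply the configuration; s3_client is assumed to be boto3.client("s3")."""
    s3_client.put_bucket_notification_configuration(
        Bucket=bucket, NotificationConfiguration=config
    )


# Hypothetical values for illustration only.
config = build_queue_notification(
    "arn:aws:sqs:us-east-1:123456789012:media-assets-queue",
    prefix="events/fashion-show/",
    suffix=".jpg",
)
```

The SQS queue also needs an access policy that allows Amazon S3 to send messages to it, which is not shown here.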

Data processing with Amazon Rekognition

Once media assets are ingested into Amazon S3, they are ready to be processed. Amazon Rekognition determines the entities within each asset. Amazon Rekognition then extracts the entities in JSON format and assigns a confidence score.

If the confidence score is below the defined threshold, use Amazon Augmented AI (A2I) for further review. A2I is an ML service that helps you build the workflows required for human review of ML predictions.
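As an illustration of the threshold check, the following sketch splits labels into those that are accepted and those that would be sent for human review. The threshold of 80 is an assumption chosen for the example, the confidence values are rounded from the sample detect_labels output in this post, and the actual hand-off to Amazon A2I is left out.

```python
def split_by_confidence(labels, threshold=80.0):
    """Partition label names into (accepted, needs_review) by confidence score."""
    accepted, needs_review = [], []
    for label in labels:
        if label["Confidence"] >= threshold:
            accepted.append(label["Name"])
        else:
            needs_review.append(label["Name"])
    return accepted, needs_review


labels = [
    {"Name": "Clothing", "Confidence": 99.98},
    {"Name": "Shirt", "Confidence": 97.01},
    {"Name": "T-Shirt", "Confidence": 76.37},
]

accepted, needs_review = split_by_confidence(labels, threshold=80.0)
print(accepted)      # ['Clothing', 'Shirt']
print(needs_review)  # ['T-Shirt']
```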

Amazon Rekognition also supports custom modeling to help identify entities within the images for specific business needs. For instance, a campaign may need images of products worn by a brand ambassador at a marketing event. Then they may need to further narrow their search down by the individual’s name or age demographic.

Using our solution, a Lambda function invokes Amazon Rekognition to extract the entities from the ingested assets. Lambda continuously polls the SQS queue for any new messages. Once a message is available, the Lambda function invokes the Amazon Rekognition endpoint to extract the relevant entities.
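A Lambda function along these lines might look like the sketch below. It assumes the SQS message body carries a standard S3 event notification and that results are simply returned rather than indexed. The helper names `extract_s3_objects` and `handle` and the default `min_confidence` value are illustrative, not part of the original solution.

```python
import json
from urllib.parse import unquote_plus


def extract_s3_objects(sqs_event):
    """Yield (bucket, key) pairs from S3 notifications wrapped in SQS messages."""
    for message in sqs_event.get("Records", []):
        body = json.loads(message["body"])
        # S3 test events carry no "Records" key, so default to an empty list.
        for record in body.get("Records", []):
            bucket = record["s3"]["bucket"]["name"]
            # Object keys arrive URL-encoded in S3 event notifications.
            key = unquote_plus(record["s3"]["object"]["key"])
            yield bucket, key


def handle(sqs_event, rekognition, min_confidence=50.0):
    """Call detect_labels for each new object and return labels keyed by object key."""
    results = {}
    for bucket, key in extract_s3_objects(sqs_event):
        response = rekognition.detect_labels(
            Image={"S3Object": {"Bucket": bucket, "Name": key}},
            MinConfidence=min_confidence,
        )
        results[key] = response["Labels"]
    return results


def lambda_handler(event, context):
    # Imported here so the helpers above can be exercised without AWS access.
    import boto3

    return handle(event, boto3.client("rekognition"))
```

Passing the Rekognition client into `handle` keeps the parsing logic testable with a stub client.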

The following is a sample output from the detect_labels API call in Amazon Rekognition. Its labels are transformed and then written to the downstream search engine:

{'Labels': [{'Name': 'Clothing', 'Confidence': 99.98137664794922, 'Instances': [], 'Parents': []}, {'Name': 'Apparel', 'Confidence': 99.98137664794922, 'Instances': [], 'Parents': []}, {'Name': 'Shirt', 'Confidence': 97.00833129882812, 'Instances': [], 'Parents': [{'Name': 'Clothing'}]}, {'Name': 'T-Shirt', 'Confidence': 76.36670684814453, 'Instances': [{'BoundingBox': {'Width': 0.7963646650314331, 'Height': 0.6813027262687683, 'Left': 0.09593021124601364, 'Top': 0.1719706505537033}, 'Confidence': 53.39663314819336}], 'Parents': [{'Name': 'Clothing'}]}], 'LabelModelVersion': '2.0', 'ResponseMetadata': {'RequestId': '3a561e82-badc-4ba0-aa77-39a13f1bb3a6', 'HTTPStatusCode': 200, 'HTTPHeaders': {'content-type': 'application/x-amz-json-1.1', 'date': 'Mon, 17 May 2021 18:32:27 GMT', 'x-amzn-requestid': '3a561e82-badc-4ba0-aa77-39a13f1bb3a6', 'content-length': '542', 'connection': 'keep-alive'}, 'RetryAttempts': 0}}

As shown, the Lambda function submits an API call to Amazon Rekognition with a T-shirt image in .jpeg format as the input. Based on your confidence score threshold, you can route low-confidence results to a human review using Amazon A2I. You can also use Amazon Rekognition Custom Labels to train custom models. Lambda then identifies and arranges the labels and updates the specified index.
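The step where Lambda arranges the labels could be sketched as follows. The document shape (`fileName`, `objectTags`) follows the sample search output in this post. The function name `to_search_document` and the file name `tshirt.jpg` are hypothetical, and the response is abbreviated to the fields the transformation reads.

```python
def to_search_document(file_name, response):
    """Flatten a detect_labels response into a document with a list of tag names."""
    tags = [label["Name"] for label in response.get("Labels", [])]
    return {"fileName": file_name, "objectTags": tags}


# Abbreviated from the sample detect_labels output.
response = {
    "Labels": [
        {"Name": "Clothing", "Confidence": 99.98137664794922},
        {"Name": "Apparel", "Confidence": 99.98137664794922},
        {"Name": "Shirt", "Confidence": 97.00833129882812},
        {"Name": "T-Shirt", "Confidence": 76.36670684814453},
    ]
}

doc = to_search_document("tshirt.jpg", response)
print(doc)
# {'fileName': 'tshirt.jpg', 'objectTags': ['Clothing', 'Apparel', 'Shirt', 'T-Shirt']}
```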

Indexing with Amazon ES

Amazon ES is a managed search engine service that provides enterprise-grade search engine capability for applications. In our solution, assets are searched based on entities that are used as metadata to update the index. Amazon ES is hosted as a public endpoint or a VPC endpoint for secure access within the specified AWS account.

Labels are identified and marked as tags, which are assigned to .jpeg formatted images. The following sample output shows a query for one of the tags, issued against an Amazon ES cluster.

Query:

curl -XGET https://<ElasticSearch Endpoint>/<_IndexName>/_search?q=T-Shirt

Output:

{"took":140,"timed_out":false,"_shards":{"total":5,"successful":5,"skipped":0,"failed":0},"hits":{"total":{"value":1,"relation":"eq"},"max_score":0.05460011,"hits":[{"_index":"movies","_type":"_doc","_id":"15","_score":0.05460011,"_source":{"fileName":"s7-1370766_lifestyle.jpg","objectTags":["Clothing","Apparel","Sailor Suit","Sleeve","T-Shirt","Shirt","Jersey"]}}]}}
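For applications that issue the same query from code, a small sketch of building the search URL and reading file names out of the response is shown below. The endpoint and index name are hypothetical placeholders, the response is trimmed from the sample output above, and sending the request (including any request signing your domain requires) is left out.

```python
from urllib.parse import quote, urlencode


def build_search_url(endpoint, index, term):
    """Build a URI search request URL for a single query term."""
    return f"https://{endpoint}/{quote(index)}/_search?{urlencode({'q': term})}"


def matching_files(search_response):
    """Return the fileName of every hit in a search response."""
    return [hit["_source"]["fileName"] for hit in search_response["hits"]["hits"]]


# Hypothetical endpoint and index name.
url = build_search_url("search-example.us-east-1.es.amazonaws.com", "media-assets", "T-Shirt")
print(url)
# https://search-example.us-east-1.es.amazonaws.com/media-assets/_search?q=T-Shirt

# Trimmed from the sample output.
sample_response = {
    "hits": {
        "hits": [
            {
                "_id": "15",
                "_source": {
                    "fileName": "s7-1370766_lifestyle.jpg",
                    "objectTags": ["Clothing", "Apparel", "T-Shirt"],
                },
            }
        ]
    }
}
print(matching_files(sample_response))  # ['s7-1370766_lifestyle.jpg']
```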

In addition to photos, Amazon Rekognition also detects labels in videos. It can recognize labels and identify characters and entities. These are then added to Amazon ES to enhance search capability. This allows users to skip to the specific parts of a video where a label appears. For instance, a marketer may need images of cashmere sweaters from a fashion show that was streamed and recorded.
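To illustrate how video labels can support skipping to a specific moment, the sketch below groups label timestamps into a per-label timeline. It assumes the `Labels` list shape returned by the Amazon Rekognition video label detection results (a `Timestamp` in milliseconds and a nested `Label` with `Name` and `Confidence`). The sample data and the threshold of 80 are made up for the example.

```python
from collections import defaultdict


def build_label_timeline(video_labels, min_confidence=80.0):
    """Map each label name to the sorted list of seconds at which it was detected."""
    timeline = defaultdict(list)
    for item in video_labels:
        label = item["Label"]
        if label["Confidence"] >= min_confidence:
            # Timestamps are in milliseconds from the start of the video.
            timeline[label["Name"]].append(item["Timestamp"] / 1000.0)
    return {name: sorted(times) for name, times in timeline.items()}


# Hypothetical detections for illustration only.
sample = [
    {"Timestamp": 0, "Label": {"Name": "Person", "Confidence": 98.2}},
    {"Timestamp": 12500, "Label": {"Name": "Sweater", "Confidence": 91.4}},
    {"Timestamp": 13000, "Label": {"Name": "Sweater", "Confidence": 62.0}},
    {"Timestamp": 47000, "Label": {"Name": "Sweater", "Confidence": 88.9}},
]

timeline = build_label_timeline(sample)
print(timeline["Sweater"])  # [12.5, 47.0]
```

Storing these offsets alongside the tags in the search index would let a player seek directly to the matching segment.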

Once the raw video clip is identified, it is converted using Amazon Elastic Transcoder for playback on mobile devices, tablets, web browsers, and connected televisions. Elastic Transcoder is a highly scalable and cost-effective media transcoding service in the cloud. Segmented output renditions are created for delivery to compatible devices using multiple protocols.

Conclusion

This blog post describes AWS services that can be applied to a diverse set of use cases for tagging and efficient search of images and videos. You can build automated indexing and search using AWS serverless managed AI/ML services. They provide high scalability, reduce operational overhead, and scale out and in automatically based on demand, with a flexible pay-as-you-go pricing model.

To get started, use these references to create your own sample architectures: