AWS Architecture Blog

Seamlessly migrate on-premises legacy workloads using a strangler pattern

Replacing a complex workload can be a huge job. Sometimes you need to gradually migrate complex workloads but still keep parts of the on-premises system to handle features that haven’t been migrated yet. Gradually replacing specific functions with new applications and services is known as a “strangler pattern.”

When you use a strangler pattern, monolithic workloads are broken down and individual services are scheduled for rehosting, replatforming, and even retirement. As you do this, there is value in having a uniform point of access for the various services, as well as a uniform level of security and a way to manage workloads in the cloud and on-premises.

This blog post covers how to implement a strangler architecture pattern for on-premises legacy workloads to create uniform access and security across your workloads. We walk you through how to implement this pattern, which uses an API facade to ensure your customers continue to see and use the same interface while you “strangle” the monolith by incrementally creating and deploying new microservices in the cloud.

Solution overview

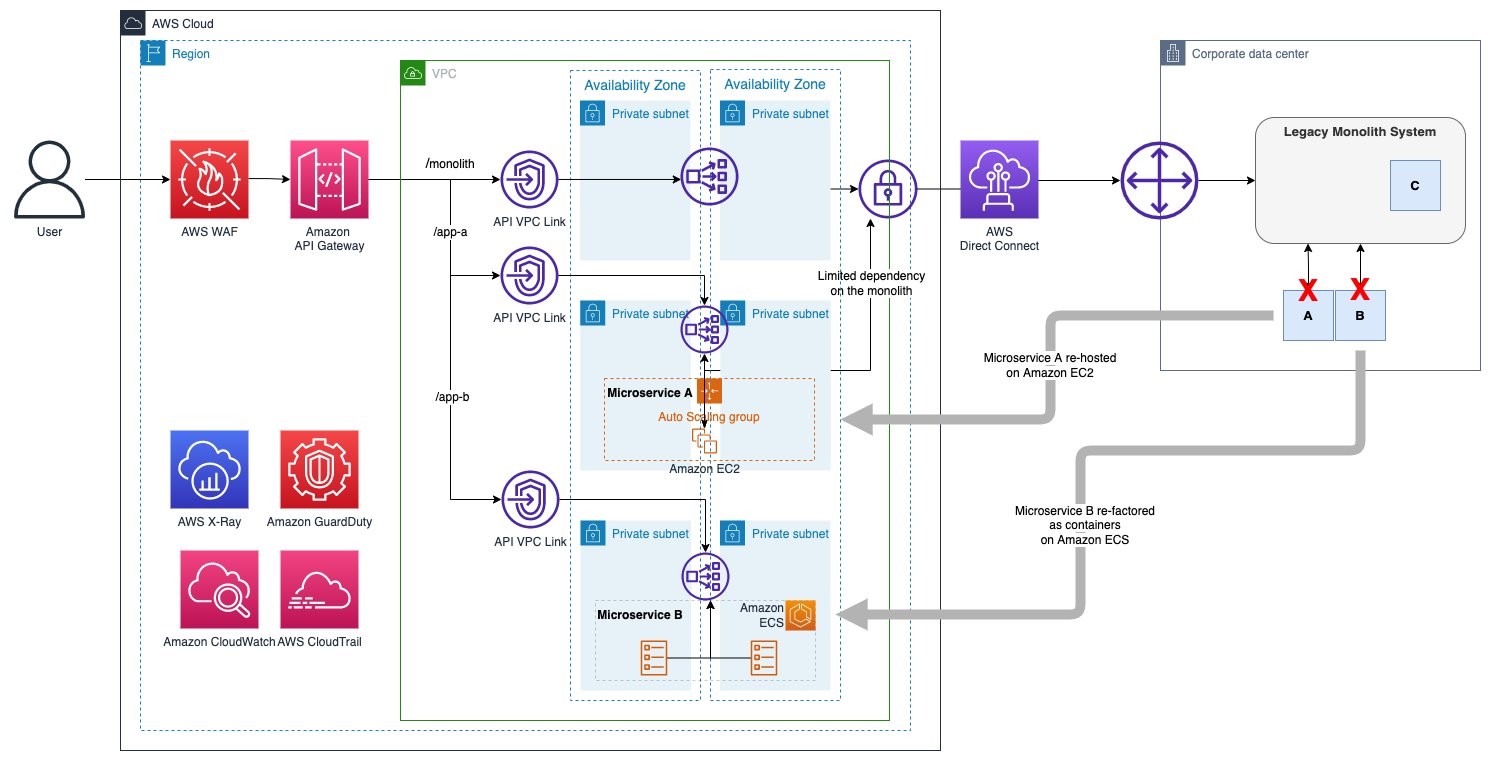

This solution uses Amazon API Gateway to create an API facade for your on-premises monolith application. As you deploy new microservices on AWS, you can create new API resources/methods under the same API Gateway endpoint (to learn more about creating REST APIs, see Creating a REST API in Amazon API Gateway).
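To make the facade idea concrete, here is a minimal conceptual sketch of one endpoint serving several backends. It is a model for illustration only, not how API Gateway is implemented; the `ROUTES` table, the `/orders` path, and the `resolve_backend` helper are all hypothetical names.

```python
from typing import Dict, Optional

# Hypothetical route table: path prefix -> backend label.
# "/" is the catch-all that still points at the on-premises monolith.
ROUTES: Dict[str, str] = {
    "/": "on-premises monolith",
    "/orders": "microservice A (AWS)",
}


def resolve_backend(routes: Dict[str, str], path: str) -> Optional[str]:
    """Return the backend whose route prefix is the longest match for path."""
    best = None
    for prefix in routes:
        matches = (
            prefix == "/"
            or path == prefix
            or path.startswith(prefix + "/")
        )
        if matches and (best is None or len(prefix) > len(best)):
            best = prefix
    return routes[best] if best is not None else None


if __name__ == "__main__":
    for request_path in ("/orders/42", "/invoices/7"):
        print(request_path, "->", resolve_backend(ROUTES, request_path))
```

Callers keep using the same endpoint throughout; only the route table behind it changes as services are migrated.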

AWS Direct Connect, along with API Gateway private integrations that use virtual private cloud (VPC) links, provides secure network connectivity to your on-premises services.

The following sections provide more detail on these services and their functions.

On-premises connectivity

Direct Connect provides a dedicated connection between the on-premises services and AWS. This allows you to implement a hybrid workload by securely connecting the API Gateway and the application deployed on your on-premises environment.

You can use an AWS Site-to-Site VPN to connect to on-premises environments, but Direct Connect is preferred for its reduced latency and dedicated bandwidth.

API facade

API Gateway creates the facade for customer APIs/services (the monolith and the new microservices) deployed in the on-premises environment as well as the ones migrated to AWS.

API Gateway uses private integrations to securely connect to on-premises services and to resources launched into Amazon Virtual Private Cloud (Amazon VPC), such as rehosted microservices running on Amazon Elastic Compute Cloud (Amazon EC2) or modernized applications running on container services like Amazon Elastic Container Service (Amazon ECS).

The Network Load Balancer is part of the private integration for API Gateway. It acts as a high throughput, high availability resource that fronts the API backends deployed either in the on-premises environment or Amazon VPC. Network Load Balancers support different target types. Use the IP target type to target on-premises servers hosting legacy workloads and use the instance and Application Load Balancer target types for applications hosted within AWS environments.
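The choice of target type can be sketched as a small helper that builds the keyword arguments you might pass to the Elastic Load Balancing `create_target_group` and `register_targets` calls in boto3. The helper names, IP address, VPC ID, and port below are hypothetical, and the sketch only builds dictionaries rather than calling AWS. The `"all"` Availability Zone value for targets outside the VPC reflects our understanding of the API; verify it against the current Elastic Load Balancing documentation.

```python
from typing import Any, Dict


def target_group_params(
    name: str, vpc_id: str, port: int, hosted_on_premises: bool
) -> Dict[str, Any]:
    """Build create_target_group arguments for a Network Load Balancer.

    On-premises backends are reached by IP address; this sketch uses the
    instance target type for backends running on Amazon EC2.
    """
    return {
        "Name": name,
        "Protocol": "TCP",
        "Port": port,
        "VpcId": vpc_id,
        "TargetType": "ip" if hosted_on_premises else "instance",
    }


def on_premises_target(ip_address: str, port: int) -> Dict[str, Any]:
    """Describe one on-premises server as a register_targets entry.

    Assumption: IP targets outside the VPC are registered with the
    AvailabilityZone value "all".
    """
    return {"Id": ip_address, "Port": port, "AvailabilityZone": "all"}


if __name__ == "__main__":
    # Hypothetical identifiers, for illustration only.
    print(target_group_params("legacy-monolith", "vpc-0123456789abcdef0", 8080, True))
    print(on_premises_target("10.20.30.40", 8080))
```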

Security

Use AWS WAF for API Gateway REST API endpoints. It lets you monitor and block HTTP and HTTPS requests according to the rules and rule groups that you define in a web access control list (web ACL).
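As a rough sketch of protecting a facade stage, the helper below builds the arguments for the AWS WAF `associate_web_acl` call in boto3 (the `wafv2` client). The function names, the web ACL ARN, the API ID, and the stage name are hypothetical, and the sketch assumes a Regional web ACL and the stage ARN format described in the AWS WAF documentation.

```python
from typing import Dict


def api_stage_arn(
    region: str, rest_api_id: str, stage_name: str, partition: str = "aws"
) -> str:
    """Build the ARN of an API Gateway REST API stage."""
    return (
        f"arn:{partition}:apigateway:{region}::"
        f"/restapis/{rest_api_id}/stages/{stage_name}"
    )


def web_acl_association_params(
    web_acl_arn: str, region: str, rest_api_id: str, stage_name: str
) -> Dict[str, str]:
    """Build associate_web_acl arguments for one API Gateway stage."""
    return {
        "WebACLArn": web_acl_arn,
        "ResourceArn": api_stage_arn(region, rest_api_id, stage_name),
    }


if __name__ == "__main__":
    # Hypothetical identifiers, for illustration only.
    print(
        web_acl_association_params(
            "arn:aws:wafv2:us-east-1:111122223333:regional/webacl/facade/example-id",
            "us-east-1",
            "a1b2c3d4e5",
            "prod",
        )
    )
```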

Amazon GuardDuty provides threat detection across your microservices.

(Optional) Enable AWS Shield Advanced for Amazon CloudFront distributions that are configured for regional API Gateway endpoints. This provides added distributed denial of service (DDoS) protection beyond AWS Shield Standard, which is automatically included.

Logging and monitoring

AWS X-Ray and Amazon CloudWatch give you visibility into your requests and assorted service metrics.

AWS CloudTrail allows you to track interactions with your infrastructure through the AWS control plane APIs.

Strangler process

The strangler pattern allows you to smoothly migrate resources from on-premises environments by placing a cloud-based API facade in front of them. The next sections show an example scenario of what a strangler pattern-based migration process could look like for a given workload.

Putting a facade in front of the monolith

First, we add our API Gateway facade in front of our on-premises monolith. API Gateway acts as a facade to the customer APIs/services (the monolith and the new microservices) deployed in the on-premises environment as well as the ones migrated to AWS. This means that as the on-premises monolith application is strangled and new microservices are created, the new services are added to API Gateway so that they can be consumed along with the monolith services, as shown in Figure 2.
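One way to wire the facade to the monolith is a proxy integration over a VPC link. The sketch below builds the keyword arguments you might pass to the API Gateway `put_integration` call in boto3. It assumes a greedy `{proxy+}` resource whose method declares the `proxy` path parameter, and every identifier (the helper name, API and resource IDs, VPC link ID, and load balancer DNS name) is hypothetical.

```python
from typing import Any, Dict


def private_integration_params(
    rest_api_id: str, resource_id: str, vpc_link_id: str, nlb_dns_name: str
) -> Dict[str, Any]:
    """Build put_integration arguments for an HTTP proxy over a VPC link."""
    return {
        "restApiId": rest_api_id,
        "resourceId": resource_id,
        "httpMethod": "ANY",
        "type": "HTTP_PROXY",
        "integrationHttpMethod": "ANY",
        "connectionType": "VPC_LINK",
        "connectionId": vpc_link_id,
        # Forward the matched path to the Network Load Balancer unchanged.
        "uri": f"http://{nlb_dns_name}/{{proxy}}",
        "requestParameters": {
            "integration.request.path.proxy": "method.request.path.proxy",
        },
    }


if __name__ == "__main__":
    # Hypothetical identifiers, for illustration only.
    print(
        private_integration_params(
            "a1b2c3d4e5", "res123", "vlnk01", "legacy-nlb.example.internal"
        )
    )
```

Because the integration is a pass-through proxy, existing clients see the same paths and payloads they used against the monolith directly.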

Breaking up the monolith behind the facade

Next, let’s break up our monolith into component microservices, as shown in Figure 3. This allows us more flexibility in deciding how best to migrate individual services. With the strangler pattern, we can incrementally update sections of the monolith's code and functionality, extracting each as a microservice with minimal dependency on the monolith application, without needing to refactor the entire application at once. Eventually, all the monolith’s services and components will be migrated, and the legacy system can be retired. Monoliths can be decomposed by business capability, subdomain, transactions, or based on the teams that maintain them.

Figure 3. Microservices A and B being decomposed from a legacy monolith; component C, which is scheduled for retirement, is not broken out into a microservice

Migrating microservices into the cloud

With our monolith broken up into its component microservices, we can begin moving the microservices into the cloud.

In our example, we rehost microservice A and refactor microservice B.

- Rehosting a microservice: Here, we take microservice A and rehost it from on-premises virtual machines onto EC2 instances in AWS. We have deployed the microservice across multiple Availability Zones with an Amazon EC2 Auto Scaling group. As you can see in Figure 4, even after deployment to AWS, microservice A continues to have a limited dependency on the monolith application. This dependency will eventually be removed as the strangling process is completed and the monolith is completely decomposed.

- Refactoring a microservice: With functionality broken out across microservices, we can opt to refactor certain services using containerization and orchestration platforms like Amazon ECS. Here, we take microservice B and containerize it using Docker and then use Amazon ECS to deploy it.

Figure 5. Microservice B after being refactored, containerized, and moved onto Amazon ECS

Retire the monolith

Finally, once all application users have been migrated to the new microservice endpoints, you can retire the legacy monolith application. Figure 6 shows the end state where the monolith application is retired along with hybrid connectivity. The API facade now serves the new migrated microservices. At this point, you can decide to retire application components.

Figure 6. Microservices A and B after the legacy monolith is retired and on-premises connectivity has ceased
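The readiness check before retirement can be sketched as a small bookkeeping model: track where each facade route currently points, repoint routes as services move, and list whatever still depends on the on-premises environment. The `Route` class, the helper functions, and the example paths are hypothetical and exist only to illustrate the process.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Route:
    path: str
    location: str  # "on-premises" or "aws"


def repoint(routes: List[Route], path: str, new_location: str) -> None:
    """Record that the facade route for path now targets new_location."""
    for route in routes:
        if route.path == path:
            route.location = new_location
            return
    raise KeyError(f"no route registered for {path}")


def blocking_routes(routes: List[Route]) -> List[str]:
    """List the paths that still depend on the on-premises environment."""
    return [r.path for r in routes if r.location == "on-premises"]


if __name__ == "__main__":
    routes = [Route("/service-a", "on-premises"), Route("/service-b", "on-premises")]
    repoint(routes, "/service-a", "aws")  # microservice A rehosted on Amazon EC2
    print(blocking_routes(routes))
    repoint(routes, "/service-b", "aws")  # microservice B refactored onto Amazon ECS
    print(blocking_routes(routes))
```

When the list of blocking routes is empty, nothing behind the facade relies on the monolith or the hybrid connection, which is the point at which both can be retired.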

Conclusion

In this blog post, we showed you how to use a strangler pattern to smoothly transition on-premises workloads through a hybrid migration process with a uniform entry point in AWS. We walked you through the process of strangling a legacy monolith by decomposing it into microservices and bringing microservices into the cloud one by one with migration approaches that best fit each service.

Ready to get started? Learn how to implement private integration for API Gateway. See how to further integrate mediation layers to support legacy XML and other non-JSON-based API responses. Get hands-on with the Break a Monolith Application into Microservices project.