AWS News Blog

Amazon EBS Update – New Elastic Volumes Change Everything

It is always interesting to speak with our customers and to learn how the dynamic nature of their business and their applications drives their block storage requirements. These needs change over time, creating the need to modify existing volumes to add capacity or to change performance characteristics. Today’s 24×7 operating models leaves no room for downtime; as a result, customers want to make changes without going offline or otherwise impacting operations.

Over the years, we have introduced new EBS offerings that support an ever-widening set of use cases. For example, we introduced two new volume types in 2016 – Throughput Optimized HDD (st1) and Cold HDD (sc1). Our customers want to use these volume types as storage tiers, modifying the volume type to save money or to change the performance characteristics, without impacting operations.

In other words, our customers want their EBS volumes to be even more elastic!

New Elastic Volumes

Today we are launching a new EBS feature we call Elastic Volumes and making it available for all current-generation EBS volumes attached to current-generation EC2 instances. You can now increase volume size, adjust performance, or change the volume type while the volume is in use. You can continue to use your application while the change takes effect.

This new feature will greatly simplify (or even eliminate) many of your planning, tuning, and space management chores. Instead of a traditional provisioning cycle that can take weeks or months, you can make changes to your storage infrastructure instantaneously, with a simple API call.

You can address the following scenarios (and many more that you can come up with on your own) using Elastic Volumes:

Changing Workloads – You set up your infrastructure in a rush and used the General Purpose SSD volumes for your block storage. After gaining some experience you figure out that the Throughput Optimized volumes are a better fit, and simply change the type of the volume.

Spiking Demand – You are running a relational database on a Provisioned IOPS volume that is set to handle a moderate amount of traffic during the month, with a 10x spike in traffic during the final three days of each month due to month-end processing. You can use Elastic Volumes to dial up the provisioning in order to handle the spike, and then dial it down afterward.

Increasing Storage – You provisioned a volume for 100 GiB and an alarm goes off indicating that it is now at 90% of capacity. You increase the size of the volume and expand the file system to match, with no downtime, and in a fully automated fashion.

Using Elastic Volumes

You can manage all of this from the AWS Management Console, via API calls, or from the AWS Command Line Interface (AWS CLI).

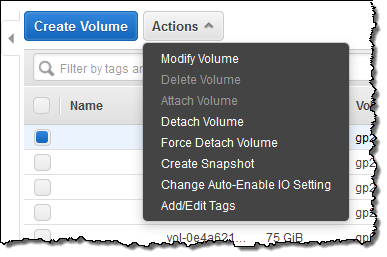

To make a change from the Console, simply select the volume and choose Modify Volume from the Action menu:

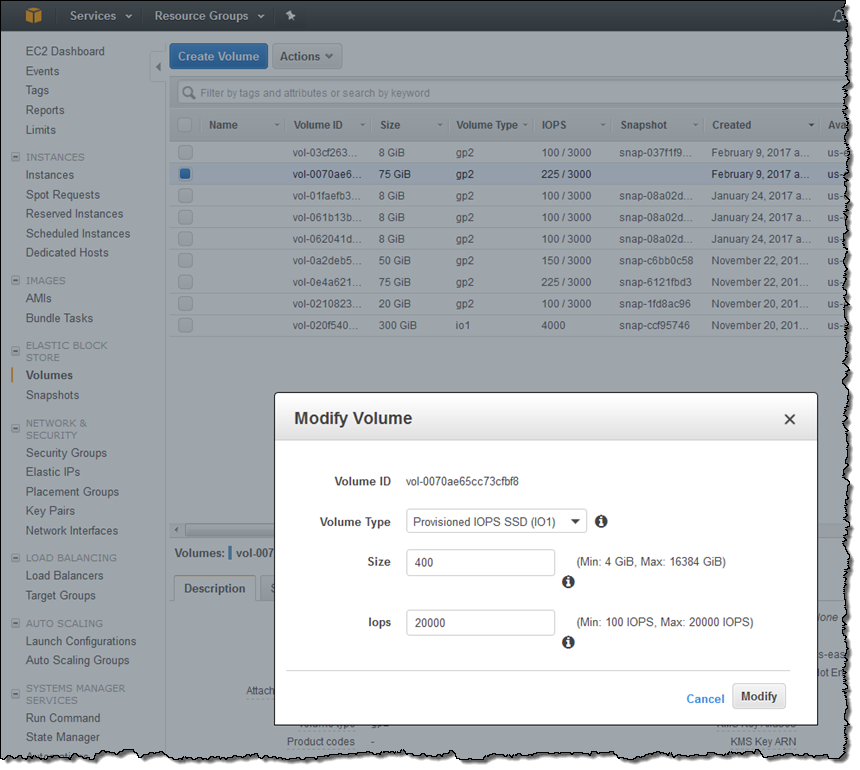

Then make any desired changes to the volume type, size, and Provisioned IOPS (if appropriate). Here I am changing my 75 GiB General Purpose (gp2) volume into a 400 GiB Provisioned IOPS volume, with 20,000 IOPS:

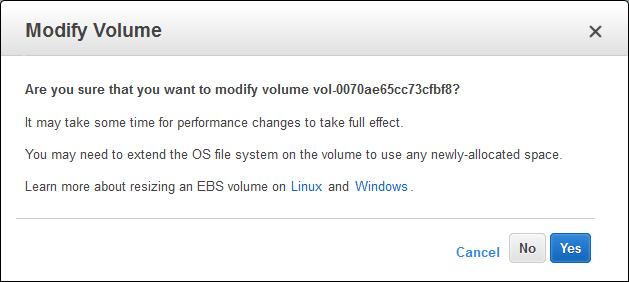

When I click on Modify I confirm my intent, and click on Yes:

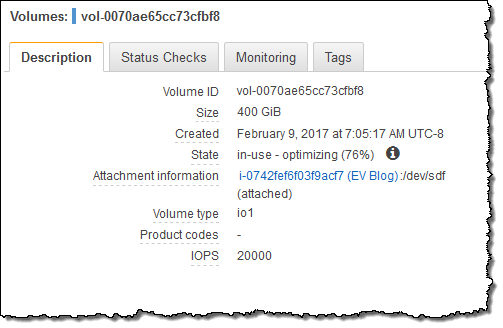

The volume’s state reflects the progress of the operation (modifying, optimizing, or complete):

The next step is to expand the file system so that it can take advantage of the additional storage space. To learn how to do that, read Expanding the Storage Space of an EBS Volume on Linux or Expanding the Storage Space of an EBS Volume on Windows. You can expand the file system as soon as the state transitions to optimizing (typically a few seconds after you start the operation). The new configuration is in effect at this point, although optimization may continue for up to 24 hours. Billing for the new configuration begins as soon as the state turns to optimizing (there’s no charge for the modification itself).

Automatic Elastic Volume Operations

While manual changes are fine, there’s plenty of potential for automation. Here are a couple of ideas:

Right-Sizing – Use a CloudWatch alarm to watch for a volume that is running at or near its IOPS limit. Initiate a workflow and approval process that could provision additional IOPS or change the type of the volume. Or, publish a “free space” metric to CloudWatch and use a similar approval process to resize the volume and the filesystem.

Cost Reduction – Use metrics or schedules to reduce IOPS or to change the type of a volume. Last week I spoke with a security auditor at a university. He collects tens of gigabytes of log files from all over campus each day and retains them for 60 days. Most of the files are never read, and those that are can be scanned at a leisurely pace. They could address this use case by creating a fresh General Purpose volume each day, writing the logs to it at high speed, and then changing the type to Throughput Optimized.

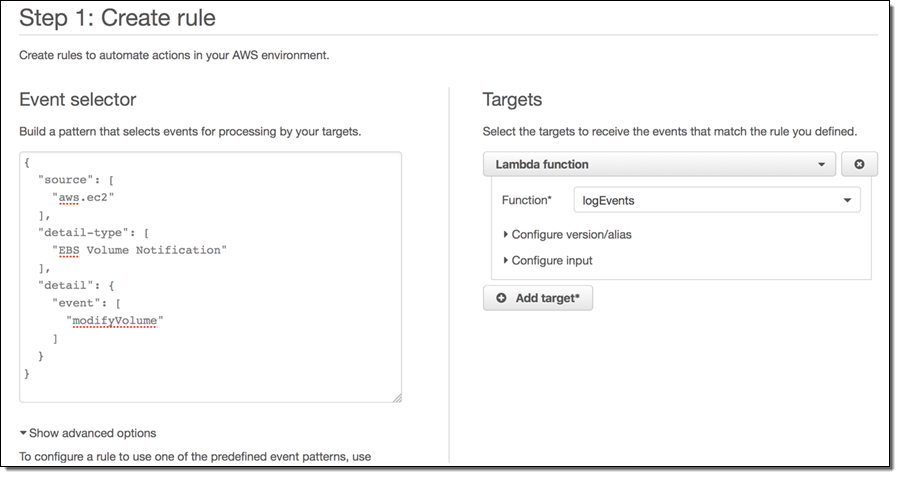

As I mentioned earlier, you need to resize the file system in order to be able to access the newly provisioned space on the volume. In order to show you how to automate this process, my colleagues built a sample that makes use of CloudWatch Events, AWS Lambda, EC2 Systems Manager, and some PowerShell scripting. The rule matches the modifyVolume event emitted by EBS and invokes the logEvents Lambda function:

The function locates the volume, confirms that it is attached to an instance that is managed by EC2 Systems Manager, and then adds a “maintenance tag” to the instance:

Available Today

The Elastic Volumes feature is available today and you can start using it right now!

To learn about some important special cases and a few limitations on instance types, read Considerations When Modifying EBS Volumes.

— Jeff;

PS – If you would like to design and build cool, game-changing storage services like EBS, take a look at our EBS Jobs page!